Essence

Market Volatility Modeling functions as the mathematical apparatus for quantifying the dispersion of asset returns within decentralized finance. It serves as the bridge between stochastic processes and the tangible pricing of risk in permissionless environments. By translating observed price fluctuations into probabilistic expectations, this modeling framework enables participants to price options, manage collateralized positions, and hedge against systemic liquidity shocks.

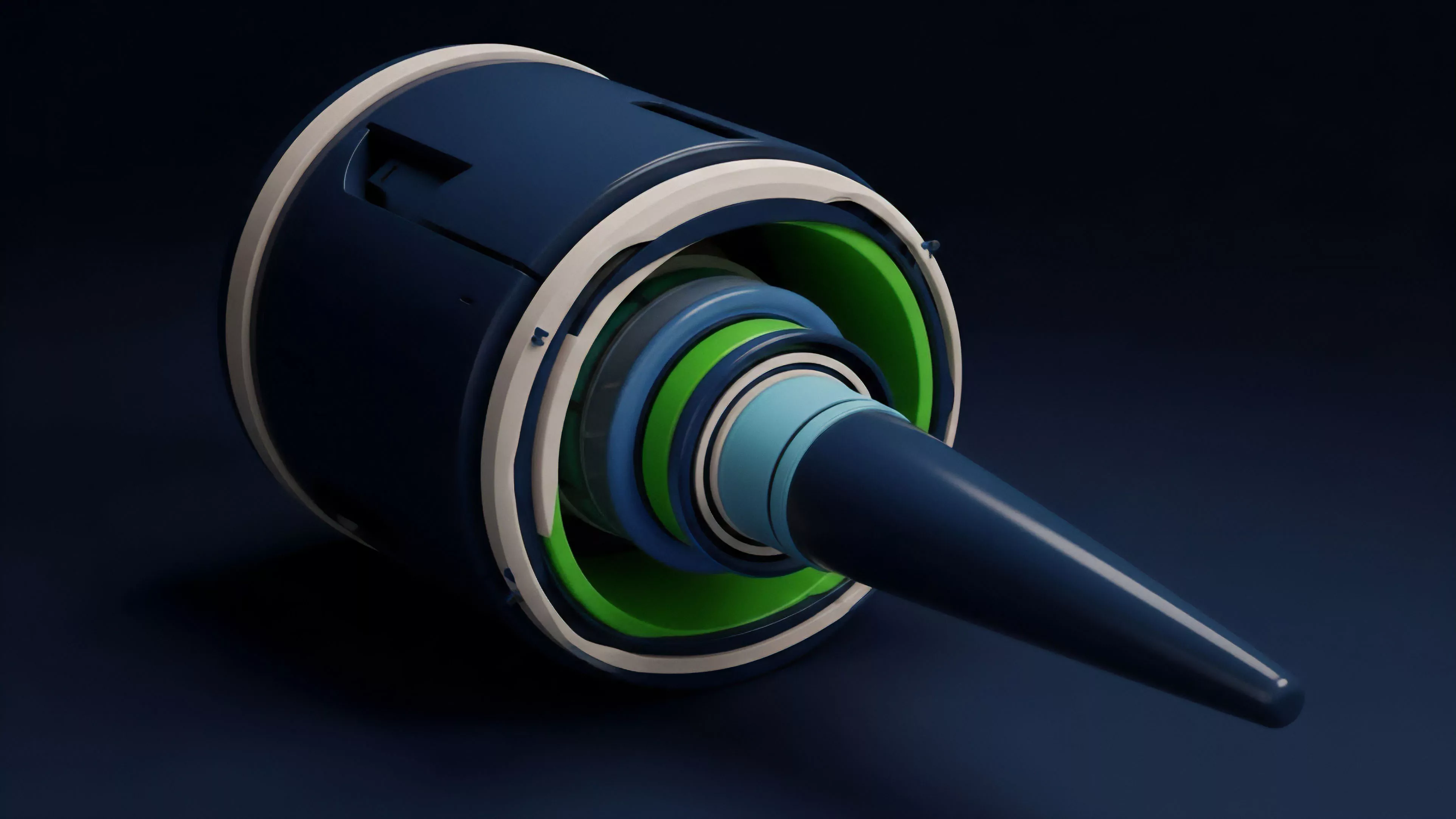

Market Volatility Modeling transforms raw historical price dispersion into actionable probability distributions for derivative pricing.

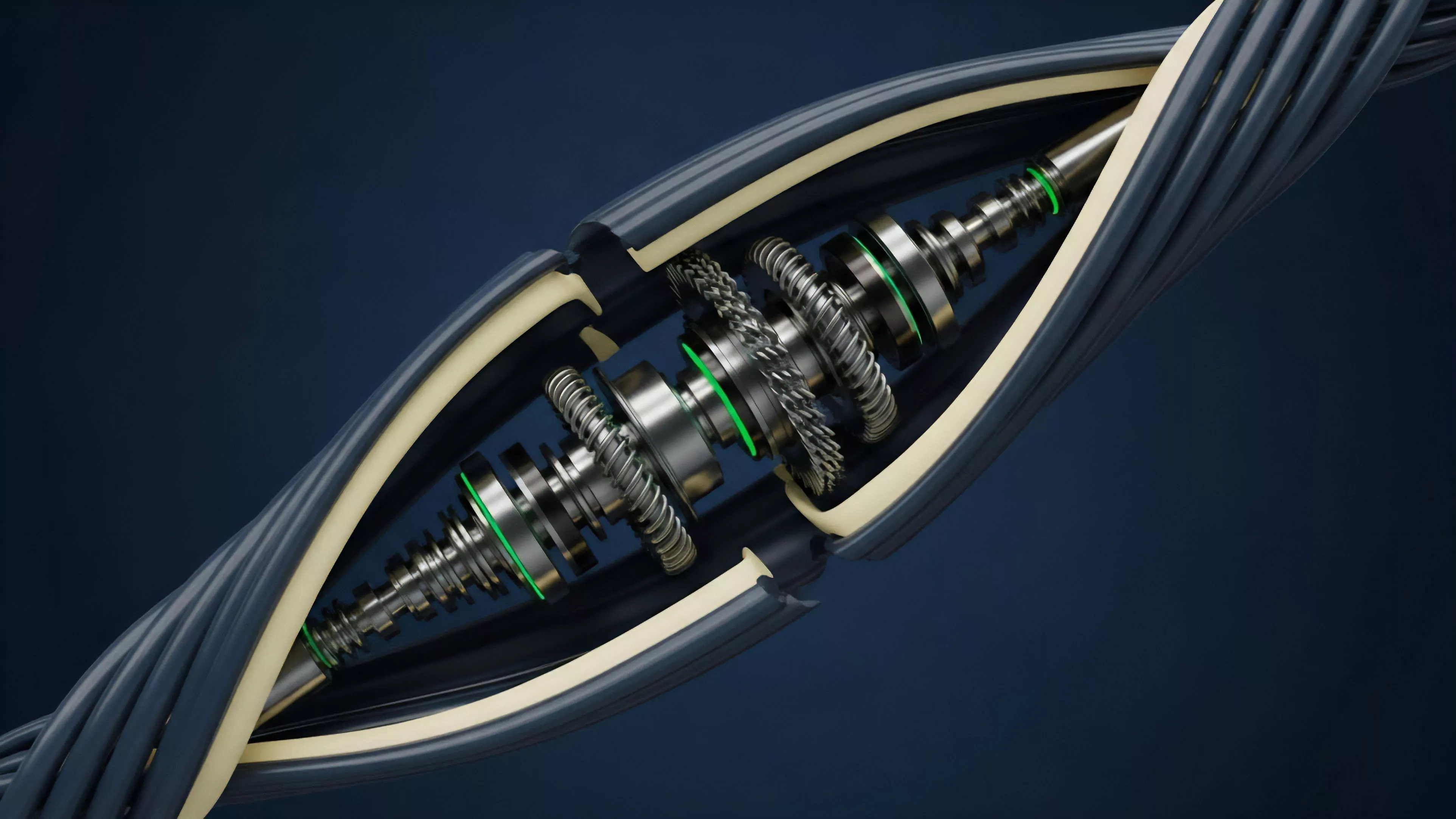

At its core, this discipline relies on the premise that volatility is not constant but exhibits distinct clustering patterns and structural dependencies. In digital asset markets, where order flow is transparent and settlement is near-instant, these models must account for high-frequency feedback loops and the reflexive nature of token-based incentives.

Origin

The lineage of Market Volatility Modeling traces back to the Black-Scholes-Merton framework, which treats volatility as a constant input to option valuation. However, the subsequent observation of the volatility smile, where implied volatility varies across strike prices, necessitated a departure from the assumption of geometric Brownian motion with constant volatility.

- ARCH and GARCH models provided an early, robust mechanism to capture the tendency of volatility to cluster in time-series data.

- Stochastic volatility models shifted the paradigm by treating volatility itself as a random variable, allowing for more realistic price dynamics.

- Local volatility surfaces offered a deterministic approach to reconcile theoretical pricing with observed market prices.

These foundations were later adapted for crypto-native environments, where the lack of traditional market hours and the prevalence of leverage-induced liquidations created a distinct volatility signature. The transition from legacy finance models to crypto-specific frameworks was driven by the requirement to handle 24/7 continuous trading and the rapid propagation of contagion across interconnected protocols.

Theory

Market Volatility Modeling applies quantitative finance to the tail risks inherent in digital assets. The primary challenge is calibrating models to the heavy-tailed nature of return distributions, in which extreme moves occur far more often than a normal distribution would predict.
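One simple way to check for heavy tails is to compute the excess kurtosis of a return series, which is zero for a normal distribution and positive when extreme moves are over-represented. The sketch below is illustrative only; the function name `excess_kurtosis` and the sample data are assumptions, not part of any particular library.

```python
def excess_kurtosis(returns):
    """Population excess kurtosis of a return series.

    Returns 0 for normally distributed data; positive values
    indicate heavier tails than the normal distribution.
    """
    n = len(returns)
    if n < 2:
        raise ValueError("need at least two returns")
    mean = sum(returns) / n
    # Second and fourth central moments.
    m2 = sum((r - mean) ** 2 for r in returns) / n
    m4 = sum((r - mean) ** 4 for r in returns) / n
    if m2 == 0:
        raise ValueError("returns have zero variance")
    return m4 / (m2 ** 2) - 3.0


# Hypothetical series: many small moves plus one large shock.
calm_with_shock = [0.01, -0.01] * 50 + [0.20]
print(excess_kurtosis(calm_with_shock))  # strongly positive
```

A single large shock among otherwise small returns is enough to push the statistic far above zero, which is the pattern a normal-distribution model would understate.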

Structural Components

Implied Volatility Dynamics

Implied volatility serves as the market-derived forecast of future price dispersion. Analysts evaluate the volatility surface to discern shifts in demand for protection, often identifying structural imbalances before they manifest as spot price movements.

Realized Volatility Analysis

Realized volatility measures the actual historical dispersion of returns over specific windows. The ratio between implied and realized volatility provides a measure of the risk premium demanded by liquidity providers within decentralized pools.

The divergence between implied and realized volatility signals systemic mispricing or shifting participant sentiment regarding tail risk.
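As a minimal sketch of the comparison described above, realized volatility can be estimated as the annualized standard deviation of log returns and then set against a quoted implied volatility. The function names are hypothetical, and the choice of 365 periods per year is an assumption reflecting round-the-clock trading with daily observations.

```python
import math


def realized_volatility(prices, periods_per_year=365):
    """Annualized realized volatility from a list of closing prices."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    # Log returns between consecutive observations.
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    n = len(log_returns)
    mean = sum(log_returns) / n
    # Sample variance (n - 1 denominator), scaled to one year.
    variance = sum((r - mean) ** 2 for r in log_returns) / (n - 1)
    return math.sqrt(variance * periods_per_year)


def implied_to_realized_ratio(implied_vol, realized_vol):
    """Ratio above 1 suggests option sellers are charging a premium."""
    if realized_vol <= 0:
        raise ValueError("realized volatility must be positive")
    return implied_vol / realized_vol


# Hypothetical daily closes and a hypothetical quoted implied volatility.
closes = [100.0, 102.0, 99.0, 101.0, 104.0]
rv = realized_volatility(closes)
print(rv, implied_to_realized_ratio(0.80, rv))
```

The window length and sampling frequency materially change the estimate, so both should be fixed before comparing the ratio across assets or dates.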

| Model Type | Core Mechanism | Crypto Application |
| --- | --- | --- |
| GARCH | Autoregressive variance | Short-term risk forecasting |
| SABR | Stochastic alpha beta rho | Volatility smile modeling |
| Jump Diffusion | Continuous and discrete shocks | Liquidation risk estimation |
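To make the "autoregressive variance" entry concrete, the following sketch runs the standard GARCH(1,1) recursion, in which each period's conditional variance is a weighted combination of a constant, the previous squared return, and the previous variance. The parameter values are illustrative assumptions rather than calibrated estimates, and the function name is hypothetical.

```python
def garch11_variance_path(returns, omega, alpha, beta):
    """Conditional variances under GARCH(1,1).

    sigma2[t] = omega + alpha * r[t-1]**2 + beta * sigma2[t-1]

    The path starts at the long-run variance omega / (1 - alpha - beta).
    The result has len(returns) + 1 entries; the last entry is the
    one-step-ahead variance forecast.
    """
    if omega <= 0 or alpha < 0 or beta < 0 or alpha + beta >= 1:
        raise ValueError("parameters must satisfy omega > 0 and alpha + beta < 1")
    long_run = omega / (1.0 - alpha - beta)
    sigma2 = [long_run]
    for r in returns:
        sigma2.append(omega + alpha * r * r + beta * sigma2[-1])
    return sigma2


# Illustrative (uncalibrated) parameters and a hypothetical return series
# containing one large shock on the third day.
path = garch11_variance_path(
    [0.00, 0.01, -0.10, 0.02, 0.00],
    omega=1e-5, alpha=0.10, beta=0.85,
)
print(path)
```

After the large return, the variance jumps and then decays gradually toward its long-run level, which is the clustering behavior the model is designed to capture.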

The mathematical architecture of these models must remain adaptive. Occasionally, one finds that the most sophisticated model fails to account for the sheer force of a flash-loan-driven liquidation cascade, a reminder that mathematical precision is always subject to the underlying protocol’s physical constraints.

Approach

Current practices in Market Volatility Modeling prioritize the integration of on-chain order flow data with off-chain derivative pricing. Market participants utilize advanced statistical techniques to identify patterns in liquidity fragmentation and cross-protocol arbitrage.

- Order flow toxicity analysis evaluates the impact of informed trading on volatility.

- Liquidation threshold monitoring provides real-time data on the fragility of leveraged positions.

- Cross-asset correlation mapping identifies systemic risk transmission between base assets and derivative tokens.

Strategists focus on the gamma exposure (GEX) of the market, recognizing that the hedging activities of large market makers often drive spot price volatility. By analyzing the gamma profiles of major decentralized option vaults, analysts can anticipate periods of heightened instability.
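A rough sketch of a gamma exposure calculation follows. It uses the Black-Scholes gamma formula and sums signed exposure across positions, expressed as the change in delta-hedge notional for a 1% move in spot. The position fields, the `dealer_sign` convention (+1 when market makers are assumed long gamma, -1 when short), and the sample book are all assumptions for illustration; in practice dealer positioning is not directly observable and must be inferred.

```python
import math


def bs_gamma(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes gamma (identical for calls and puts)."""
    if min(spot, strike, vol, t_years) <= 0:
        raise ValueError("spot, strike, vol and t_years must be positive")
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    pdf = math.exp(-0.5 * d1 ** 2) / math.sqrt(2.0 * math.pi)
    return pdf / (spot * vol * sqrt_t)


def gamma_exposure(positions, spot, rate=0.0):
    """Net dealer gamma exposure in quote currency per 1% spot move."""
    total = 0.0
    for p in positions:
        gamma = bs_gamma(spot, p["strike"], p["vol"], p["t_years"], rate)
        # gamma * S^2 * 1% converts per-unit gamma into notional terms.
        total += p["dealer_sign"] * gamma * p["open_interest"] * spot ** 2 * 0.01
    return total


# Hypothetical book: dealers assumed long one strike and short another.
book = [
    {"strike": 100.0, "vol": 0.8, "t_years": 0.05, "open_interest": 500, "dealer_sign": +1},
    {"strike": 90.0, "vol": 0.9, "t_years": 0.05, "open_interest": 800, "dealer_sign": -1},
]
print(gamma_exposure(book, spot=100.0))
```

Under this convention, a negative total suggests hedging flows that trade in the same direction as spot moves and may amplify them, while a positive total suggests flows that dampen them.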

Evolution

The trajectory of Market Volatility Modeling has shifted from reactive analysis to proactive systemic design. Early iterations merely applied legacy models to crypto assets, ignoring the specific constraints of automated market makers and decentralized margin engines.

Modern protocols now embed volatility modeling directly into their incentive structures. Governance models often adjust borrowing rates or collateral requirements based on real-time volatility inputs, creating a self-correcting mechanism for system health. This evolution reflects a broader transition toward financial systems that are inherently aware of their own risk parameters.
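The idea of a volatility-aware collateral requirement can be sketched with a simple rule: keep a base ratio in calm conditions and raise it linearly once volatility exceeds a reference level, up to a cap. This is a hypothetical rule with made-up parameter values, not the mechanism of any specific protocol.

```python
def adjusted_collateral_ratio(
    realized_vol,
    base_ratio=1.5,      # assumed minimum collateral ratio in calm markets
    reference_vol=0.6,   # assumed annualized volatility treated as normal
    sensitivity=1.0,     # assumed ratio increase per unit of excess volatility
    max_ratio=3.0,       # assumed hard cap on the requirement
):
    """Minimum collateral ratio as a function of annualized volatility."""
    if realized_vol < 0:
        raise ValueError("volatility cannot be negative")
    excess = max(0.0, realized_vol - reference_vol)
    return min(max_ratio, base_ratio + sensitivity * excess)


for vol in (0.4, 0.8, 1.1, 5.0):
    print(vol, adjusted_collateral_ratio(vol))
```

The cap matters in practice: without it, a volatility spike could raise requirements so sharply that the adjustment itself triggers the liquidations it is meant to prevent.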

Volatility modeling has moved from an external observer’s tool to an internal, automated component of decentralized protocol architecture.

| Era | Focus | Primary Tool |
| --- | --- | --- |
| Legacy Import | Model replication | Black-Scholes |
| Market Maturation | Volatility clustering | GARCH variants |
| Protocol Integration | Risk-aware governance | On-chain oracle feedback |

Horizon

The future of Market Volatility Modeling lies in the development of high-fidelity, privacy-preserving models that can incorporate fragmented data without compromising participant anonymity. We anticipate the rise of decentralized volatility oracles that aggregate cross-venue data to provide a unified, tamper-resistant volatility surface. Further integration with machine learning techniques will allow for the detection of non-linear dependencies that traditional models overlook. These advancements will facilitate the creation of more resilient derivatives, capable of maintaining stability even during extreme market stress. The ultimate objective is a financial architecture where risk is transparent, quantifiable, and dynamically priced by the protocol itself.