Essence

Market Data Verification functions as the technical bridge between raw off-chain price discovery and on-chain derivative execution. It provides the cryptographic proof required to validate that an asset price used for settlement or liquidation is accurate, timely, and untampered. Without this mechanism, decentralized derivative protocols operate on blind trust, exposing capital to the manipulation of localized liquidity pools.

Market Data Verification ensures the integrity of decentralized financial settlement by providing cryptographically signed price feeds for derivative contracts.

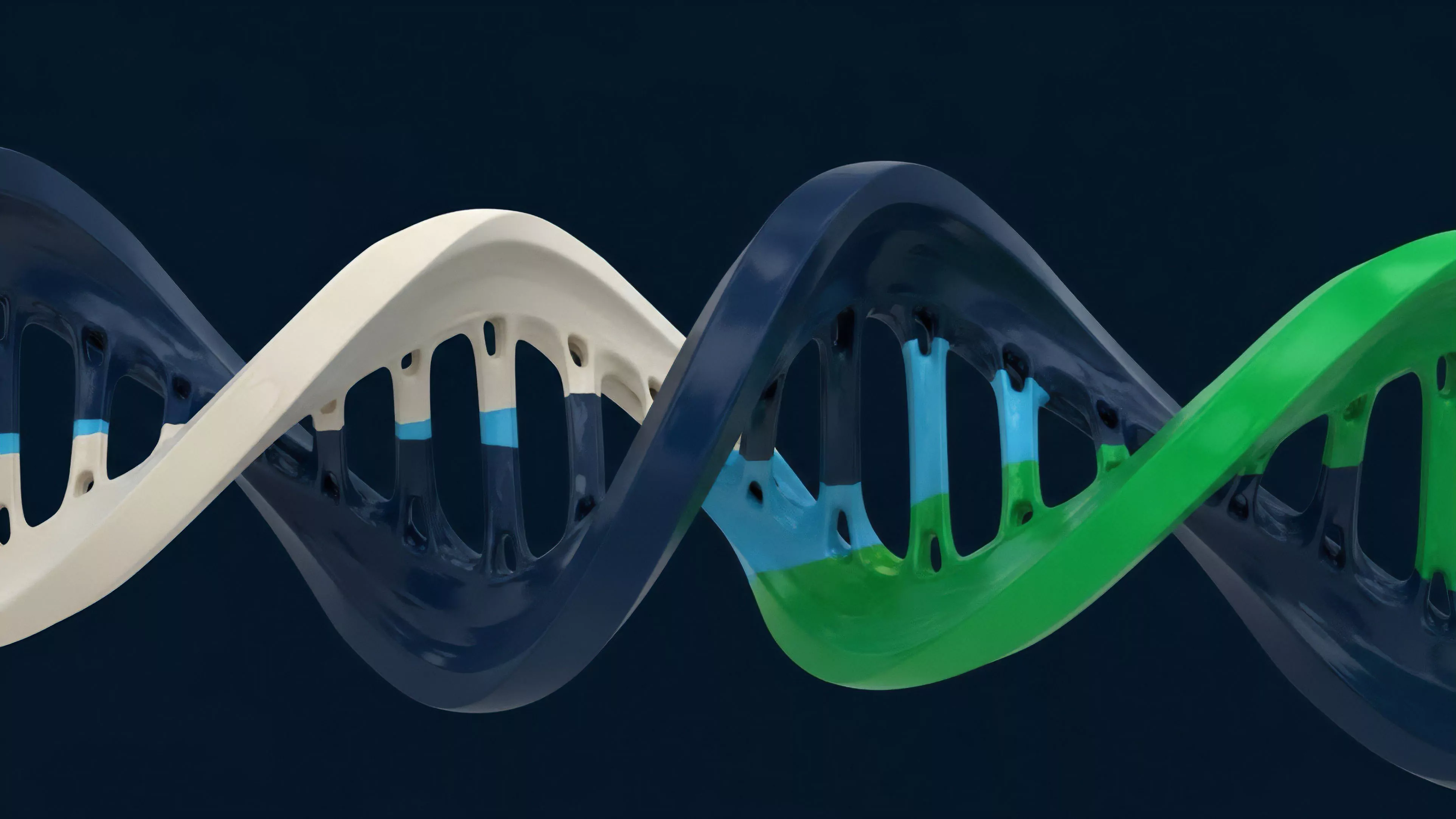

The core utility lies in transforming external information into state that smart contracts can verify. This mitigates the oracle problem, in which malicious actors distort price inputs to trigger artificial liquidations or extract value from under-collateralized positions. The architecture relies on consensus mechanisms that aggregate multiple data sources and filter outliers, so that the final price tracks the broader market rather than any single venue.

Origin

The genesis of Market Data Verification traces back to the inherent limitations of blockchain isolation.

Early decentralized exchange models struggled with price manipulation because they relied on internal, thin-liquidity order books. This structural flaw allowed attackers to swing asset prices locally to execute profitable liquidations, necessitating the development of decentralized oracle networks. Early implementations focused on simple median-based aggregation, which proved insufficient against sophisticated adversarial agents.

As derivative volume shifted toward complex instruments, the requirement for higher-frequency and more resilient data verification became apparent. The evolution moved from centralized feed providers toward distributed, incentive-aligned networks where node operators stake capital to guarantee the accuracy of their reporting.

Theory

The architecture of Market Data Verification rests on three pillars: data source aggregation, cryptographic validation, and incentive-based reporting. The system must account for the latency between the occurrence of a trade on a centralized exchange and the inclusion of that price on a blockchain.

- Data Aggregation involves polling diverse liquidity venues to compute a robust aggregate, such as a median or weighted average, that resists single-source bias.

- Cryptographic Proofs allow smart contracts to verify that a data point originated from an authorized set of nodes without needing to trust any single participant.

- Slashing Mechanisms impose economic penalties on nodes that submit price data deviating significantly from the established consensus, ensuring participants remain honest.
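The aggregation and slashing pillars can be illustrated with a minimal sketch. It is not any specific protocol's implementation: the function name, the 2% deviation threshold, and the 10% slash fraction are assumptions chosen for illustration, the median stands in for whatever aggregate a real network uses, and signature verification is omitted (reports are assumed to be already authenticated).

```python
from statistics import median


def aggregate_and_slash(reports, stakes, max_deviation=0.02, slash_fraction=0.10):
    """Aggregate node reports and penalize outliers.

    reports: {node_id: reported_price}
    stakes:  {node_id: staked_amount}
    Thresholds are illustrative assumptions, not protocol constants.
    Returns (consensus_price, updated_stakes, slashed_node_ids).
    """
    if not reports:
        raise ValueError("no reports submitted")

    # The median is insensitive to a minority of extreme values.
    consensus = median(reports.values())

    updated_stakes = dict(stakes)
    slashed = []
    for node, price in reports.items():
        # Relative deviation from consensus decides who is penalized.
        if abs(price - consensus) / consensus > max_deviation:
            updated_stakes[node] = stakes[node] * (1 - slash_fraction)
            slashed.append(node)
    return consensus, updated_stakes, slashed


reports = {"a": 100.0, "b": 100.5, "c": 99.8, "d": 120.0}
stakes = {"a": 1000.0, "b": 1000.0, "c": 1000.0, "d": 1000.0}
price, stakes_after, slashed = aggregate_and_slash(reports, stakes)
print(price, slashed)
```

In this example node `d` reports a price far from the other three, so it is the only one whose stake is reduced, while the consensus price stays close to the honest reports.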

Verification mechanisms utilize multi-source aggregation and economic slashing to ensure price inputs remain robust against adversarial manipulation.

These systems are often modeled with Byzantine Fault Tolerance (BFT), which guarantees that the network reaches consensus as long as the malicious subset stays below a threshold (fewer than one third of nodes in classical BFT protocols). Quantitative analysts treat this as a signal-to-noise optimization problem: minimize the deviation between the reported price and the true market price, subject to the constraints of block time and gas costs.
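A small sketch can make the fault-tolerance idea concrete. It assumes the classical bound that a network of n nodes tolerates f Byzantine nodes when n ≥ 3f + 1, and it uses a plain median as a stand-in for the consensus rule; the helper name and the sample prices are hypothetical.

```python
from statistics import median


def max_faulty(n_nodes):
    """Largest f satisfying n >= 3f + 1 (classical BFT bound)."""
    if n_nodes < 1:
        raise ValueError("need at least one node")
    return (n_nodes - 1) // 3


# Seven reporters: five honest, two adversarial (the maximum tolerated for n = 7).
honest = [100.0, 100.2, 99.9, 100.1, 100.3]
malicious = [0.0, 1_000_000.0]

n = len(honest) + len(malicious)
assert len(malicious) <= max_faulty(n)

# Extreme adversarial values land at the ends of the sorted list,
# so the median stays inside the range of honest reports.
reported = median(honest + malicious)
print(max_faulty(n), reported)
```

The adversarial reports can shift which honest value is selected, but they cannot drag the result outside the honest range as long as they remain a minority.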

| Mechanism | Function | Risk Mitigation |
|---|---|---|
| Median Aggregation | Calculates central price point | Reduces outlier impact |
| Staked Consensus | Requires collateral from reporters | Prevents Sybil attacks |
| Latency Thresholds | Rejects stale data inputs | Prevents front-running |
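The latency threshold in the table can be sketched as a simple freshness filter. The 60-second window and the `(price, timestamp)` tuple layout are assumptions for illustration; real feeds define their own limits and report formats.

```python
def filter_fresh(reports, now, max_age_seconds=60):
    """Keep only reports whose age is within the allowed window.

    reports: list of (price, unix_timestamp) tuples.
    Reports with timestamps in the future are rejected as well,
    since a negative age indicates a faulty or dishonest clock.
    """
    return [
        (price, ts)
        for price, ts in reports
        if 0 <= now - ts <= max_age_seconds
    ]


reports = [(100.0, 1000), (101.0, 930), (99.0, 1010)]
fresh = filter_fresh(reports, now=1000)
print(fresh)
```

Here the second report is 70 seconds old and the third is timestamped ahead of the current time, so only the first one survives the filter.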

Approach

Modern systems adopt a modular approach to Market Data Verification, separating the data acquisition layer from the settlement layer. This modularity allows protocols to plug into various oracle providers depending on their specific risk appetite and latency requirements. The current landscape emphasizes transparency, with every price update stored on-chain for post-trade auditability.

Verifying high-frequency data across fragmented liquidity venues can introduce lag that traders must incorporate into their execution strategies. In practice, successful market participants treat the oracle delay as an additional cost of trading, often adjusting their hedge ratios to compensate for potential price deviations during volatile periods.
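One rough way to size the cost of oracle delay is to estimate how far the price can be expected to move while an update is in flight. The sketch below assumes a driftless random walk, under which a one-standard-deviation move scales with the square root of elapsed time; the function name and inputs are hypothetical, and real desks may use quite different models.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def expected_move_during_delay(price, annual_volatility, delay_seconds):
    """One-standard-deviation price move over the oracle delay.

    Assumes a driftless random walk, so volatility scales with
    the square root of time. This is a simplification.
    """
    if delay_seconds < 0:
        raise ValueError("delay must be non-negative")
    return price * annual_volatility * math.sqrt(delay_seconds / SECONDS_PER_YEAR)


# Hypothetical inputs: a 2000-unit asset, 80% annualized volatility, 12 s delay.
buffer = expected_move_during_delay(2000.0, 0.80, 12)
print(f"suggested price buffer: {buffer:.4f}")
```

Under this assumption, quadrupling the delay only doubles the expected move, which is why even modest latency reductions matter most when volatility is high.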

Evolution

The trajectory of Market Data Verification has shifted from static, low-frequency updates to real-time, event-driven feeds. Early designs relied on periodic pushes, which were prone to obsolescence during rapid market moves.

The current generation utilizes pull-based models where data is retrieved only when needed, reducing network congestion and increasing the accuracy of settlement at the exact moment of contract expiry.

Evolution in verification technology favors pull-based data retrieval to increase precision and reduce network congestion during high volatility.
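The pull-based pattern can be sketched as a settlement routine that requests a price only at expiry and rejects updates that are not close enough to that moment. The class names, the `fetch_update` callback, and the 5-second skew tolerance are all assumptions for illustration; signature checks on the fetched update are omitted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: int  # unix seconds when the price was observed


class PullSettlement:
    """Fetches a price on demand instead of relying on periodic pushes."""

    def __init__(self, fetch_update, max_skew_seconds=5):
        self._fetch_update = fetch_update  # hypothetical callback: expiry_ts -> PriceUpdate
        self._max_skew = max_skew_seconds

    def settle(self, expiry_ts):
        update = self._fetch_update(expiry_ts)
        # Reject updates observed too far from the contract's expiry time.
        if abs(update.timestamp - expiry_ts) > self._max_skew:
            raise ValueError("price update is too far from expiry")
        return update.price


def fake_feed(expiry_ts):
    # Stand-in for an off-chain data service; always returns the same update.
    return PriceUpdate(price=100.0, timestamp=1000)


settlement = PullSettlement(fake_feed)
print(settlement.settle(expiry_ts=1002))
```

Because the update is requested at the moment it is needed, no data is written during quiet periods, and the freshness check ties the settlement price to the expiry time rather than to the last scheduled push.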

This shift reflects the maturation of decentralized derivatives from experimental toys to professional-grade infrastructure. Protocols now integrate ZK-proofs so that data validity is established off-chain and only a succinct proof is checked on-chain, which substantially reduces the computational load on the main chain while maintaining rigorous security standards. The move toward ZK-based verification represents a fundamental shift in how decentralized systems handle external information, allowing more complex derivative products to function without sacrificing performance.

Horizon

The future of Market Data Verification points toward decentralized, permissionless infrastructure that can handle cross-chain liquidity aggregation without centralized intermediaries.

Future protocols will likely incorporate predictive modeling to anticipate potential oracle failures, allowing smart contracts to switch between data sources autonomously during periods of extreme network stress.

- Predictive Oracle Switching will enable protocols to dynamically select the most reliable data provider based on real-time health metrics.

- Cross-Chain Aggregation will provide a unified global price for assets across disparate blockchain environments, reducing arbitrage opportunities.

- Self-Healing Networks will allow nodes to identify and isolate compromised reporters without manual intervention, maintaining system uptime.
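As a rough illustration of source switching, the sketch below scores each provider from a few health metrics and picks the best one. It is reactive rather than truly predictive, and the metric names, the scoring formula, and the limits are assumptions made up for this example rather than an existing standard.

```python
def health_score(uptime, staleness_seconds, deviation,
                 max_staleness=60.0, max_deviation=0.01):
    """Combine health metrics into a score between 0 and 1.

    uptime:            fraction of time the provider responded (0..1)
    staleness_seconds: age of the provider's latest update
    deviation:         relative distance from the cross-provider consensus
    The formula is an illustrative assumption.
    """
    staleness_penalty = min(staleness_seconds / max_staleness, 1.0)
    deviation_penalty = min(deviation / max_deviation, 1.0)
    return uptime * (1 - staleness_penalty) * (1 - deviation_penalty)


def select_provider(metrics):
    """Return the name of the provider with the highest health score."""
    if not metrics:
        raise ValueError("no providers available")
    scores = {name: health_score(**m) for name, m in metrics.items()}
    return max(scores, key=scores.get)


metrics = {
    "feed_a": {"uptime": 0.999, "staleness_seconds": 45.0, "deviation": 0.002},
    "feed_b": {"uptime": 0.980, "staleness_seconds": 3.0, "deviation": 0.001},
}
print(select_provider(metrics))
```

In this example `feed_a` has better uptime but its latest update is much older, so the selector prefers `feed_b`. A predictive variant would replace the current metrics with forecasts of them.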

| Future Development | Systemic Benefit |
|---|---|
| ZK-Rollup Oracles | Lower gas, higher throughput |
| Cross-Chain Messaging | Unified global price discovery |
| Automated Risk Adjustment | Dynamic margin requirement updates |

The ultimate goal remains the total elimination of trusted third parties in the price discovery loop. As protocols continue to refine these verification mechanisms, the gap between decentralized derivative performance and legacy exchange efficiency will close, facilitating the migration of global capital into open financial systems.