Essence

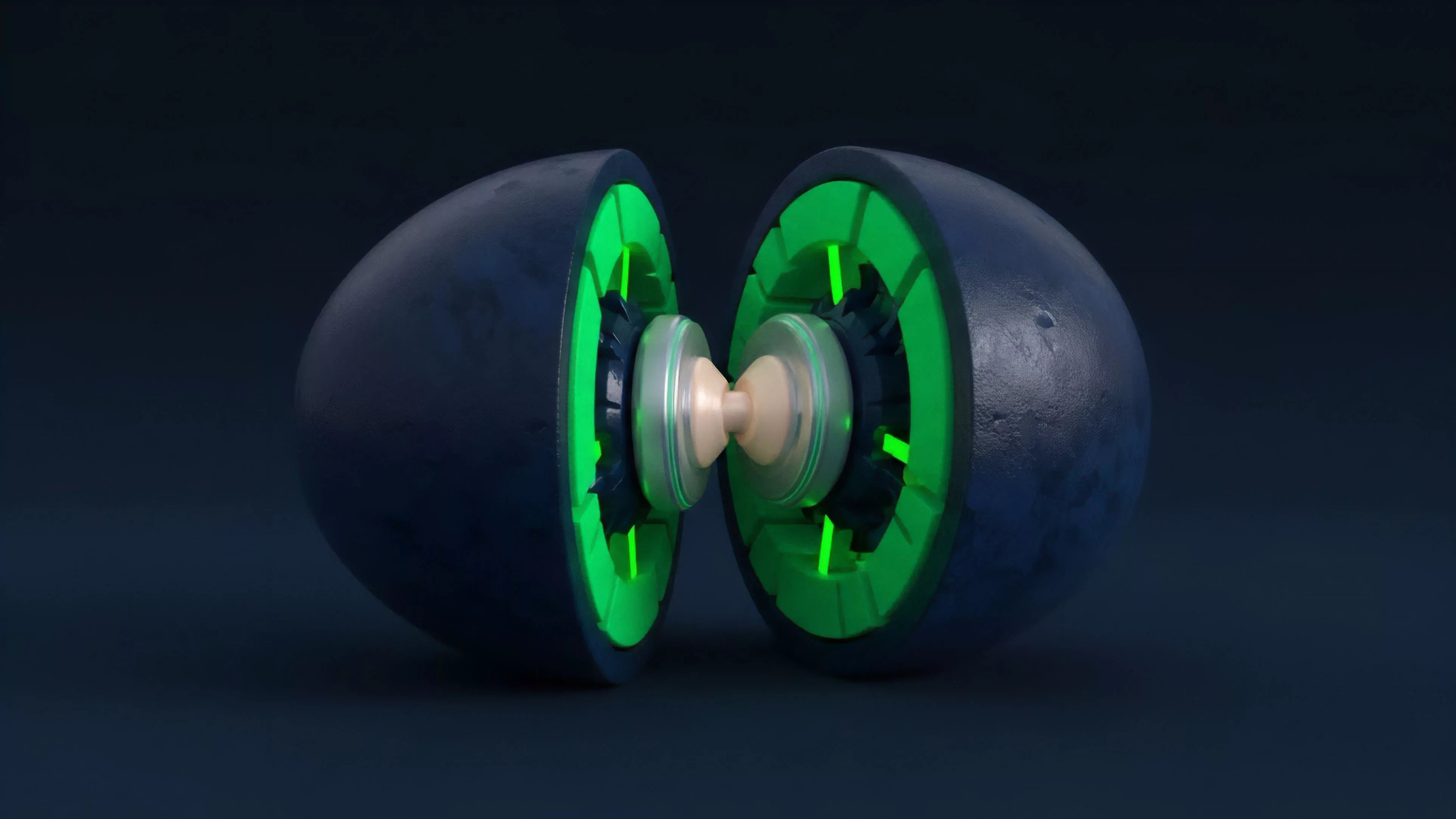

Market Data Validation serves as the algorithmic bedrock for decentralized derivative protocols. It comprises the procedures that verify the integrity of price feeds, volume metrics, and order book states before those inputs trigger automated execution logic. Without rigorous verification, smart contracts operate on poisoned inputs, leading to systematic liquidation errors and protocol insolvency.

Market Data Validation acts as the primary defense mechanism against malicious price manipulation and oracle failure within decentralized financial architectures.

This process necessitates the continuous reconciliation of off-chain liquidity snapshots with on-chain settlement states. It functions as a filter that strips noise from signal, ensuring that only authenticated, time-stamped, and cryptographically signed data points influence margin requirements and derivative settlement.
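The filter below is a minimal Python sketch. It accepts a data point only if its signature verifies over a canonical encoding of the source, price, and timestamp. It uses a shared-secret HMAC-SHA256 as a stand-in for the asymmetric signature schemes (such as ECDSA or Ed25519) that oracle networks generally rely on. The `PricePacket` structure, its field names, and the demo keys are hypothetical.

```python
import hashlib
import hmac
import json
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class PricePacket:
    source: str     # hypothetical source identifier
    price: float
    timestamp: int  # Unix seconds assigned by the source
    signature: str  # hex HMAC-SHA256 over (source, price, timestamp)

def _payload(source: str, price: float, timestamp: int) -> bytes:
    # Canonical JSON so signer and verifier hash identical bytes.
    return json.dumps({"source": source, "price": price, "timestamp": timestamp},
                      sort_keys=True, separators=(",", ":")).encode()

def sign(key: bytes, source: str, price: float, timestamp: int) -> PricePacket:
    mac = hmac.new(key, _payload(source, price, timestamp), hashlib.sha256).hexdigest()
    return PricePacket(source, price, timestamp, mac)

def is_authentic(packet: PricePacket, keys: dict[str, bytes]) -> bool:
    key = keys.get(packet.source)
    if key is None or not math.isfinite(packet.price) or packet.timestamp <= 0:
        return False  # unknown source, malformed price, or missing timestamp
    expected = hmac.new(key, _payload(packet.source, packet.price, packet.timestamp),
                        hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, packet.signature)  # constant-time comparison

keys = {"feed-a": b"demo-secret-a"}  # hypothetical key registry
good = sign(keys["feed-a"], "feed-a", 101.5, 1_700_000_000)
tampered = PricePacket("feed-a", 150.0, good.timestamp, good.signature)  # price altered
print(is_authentic(good, keys), is_authentic(tampered, keys))  # True False
```

The timestamp is part of the signed payload, so an attacker cannot attach a fresh timestamp to an old price without invalidating the signature. Freshness itself still needs a separate check, because a replayed packet with its original timestamp would still verify.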

Origin

The genesis of Market Data Validation traces back to the inherent limitations of blockchain oracles during the early development of decentralized exchanges. Initial implementations relied on single-source price feeds, which exposed protocols to rapid flash-loan-driven price manipulation.

The requirement for a robust validation layer became undeniable following major liquidity crunches where price divergence between centralized exchanges and decentralized liquidity pools triggered premature liquidations.

- Oracle Decentralization: The shift toward multi-node consensus to prevent single-point-of-failure risks.

- Latency Mitigation: The development of high-frequency data ingestion techniques to minimize slippage during volatile periods.

- Statistical Filtering: The integration of outlier detection algorithms to discard anomalous trades that do not reflect genuine market equilibrium.

This evolution was driven by the realization that decentralized finance required a native, trustless mechanism to verify market state parity, independent of traditional financial intermediaries.

Theory

The theoretical framework for Market Data Validation rests upon the principle of multi-source verification and probabilistic consistency. To maintain protocol solvency, the system must ingest data from heterogeneous sources, apply weighting based on liquidity depth, and discard inputs whose deviation exceeds established volatility thresholds.

Mathematical Framework

The core validation engine uses a weighted average, often augmented by median-based filtering to limit the impact of extreme outliers. A validated price point, $V_p$, is typically computed from the following parameters:

| Parameter | Definition |
| --- | --- |
| $S_i$ | Price input from source $i$ |
| $W_i$ | Weight assigned to source $i$ based on liquidity |
| $T$ | Time-weighted decay factor |
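The parameters above do not fix how $T$ enters the calculation. The form below is one plausible version, offered as an assumption and not as the definitive formula. It applies the decay per source as $T^{\Delta t_i}$, where $\Delta t_i$ is the age of quote $i$ and $0 < T \le 1$, and it restricts the average to the set $A$ of sources within a relative tolerance $\delta$ of the cross-source median $\tilde{S}$. The symbols $\Delta t_i$, $\delta$, $\tilde{S}$, and $A$ are introduced here for illustration.

$$
V_p = \frac{\sum_{i \in A} W_i \, T^{\Delta t_i} \, S_i}{\sum_{i \in A} W_i \, T^{\Delta t_i}},
\qquad
A = \left\{ i : \frac{\lvert S_i - \tilde{S} \rvert}{\tilde{S}} \le \delta \right\}
$$

The denominator normalizes the effective weights. As a result, $V_p$ always lies between the smallest and largest accepted inputs, and the median screen prevents a single extreme source from pulling the average.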

The robustness of a derivative protocol depends on the statistical distance between ingested price data and the true underlying market value.
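The Python sketch below implements the weighted-average-with-median-filtering approach described in this section. It assumes that each quote carries a liquidity weight ($W_i$) and an age in seconds, and that time decay is applied as `decay ** age_s`. The `Quote` class, `validated_price`, and the default `decay` and `max_dev` values are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from statistics import median

@dataclass(frozen=True)
class Quote:
    price: float   # S_i: price reported by source i
    weight: float  # W_i: liquidity weight, e.g. proportional to order-book depth
    age_s: float   # seconds since the quote was observed

def validated_price(quotes: list[Quote], decay: float = 0.9, max_dev: float = 0.02) -> float:
    """Median-screened, liquidity- and time-weighted average price."""
    if not quotes:
        raise ValueError("no quotes supplied")
    ref = median(q.price for q in quotes)
    if ref <= 0:
        raise ValueError("median price must be positive")
    # Drop sources whose relative distance from the median exceeds max_dev.
    accepted = [q for q in quotes if abs(q.price - ref) / ref <= max_dev]
    if not accepted:
        raise ValueError("no quotes within tolerance of the median")
    # Effective weight: liquidity weight discounted by quote age.
    weights = [q.weight * decay ** q.age_s for q in accepted]
    total = sum(weights)
    if total <= 0:
        raise ValueError("accepted weights sum to zero")
    return sum(w * q.price for w, q in zip(weights, accepted)) / total

quotes = [
    Quote(100.0, 5.0, 0.0),
    Quote(100.4, 3.0, 1.0),
    Quote(130.0, 0.5, 0.0),  # outlier: about 29% above the median, discarded
]
print(round(validated_price(quotes), 4))  # 100.1403
```

Screening against the median before averaging is the key design choice. A weighted mean alone can be dragged by one manipulated venue, whereas the median cannot be pushed arbitrarily far unless at least half of the sources are compromised.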

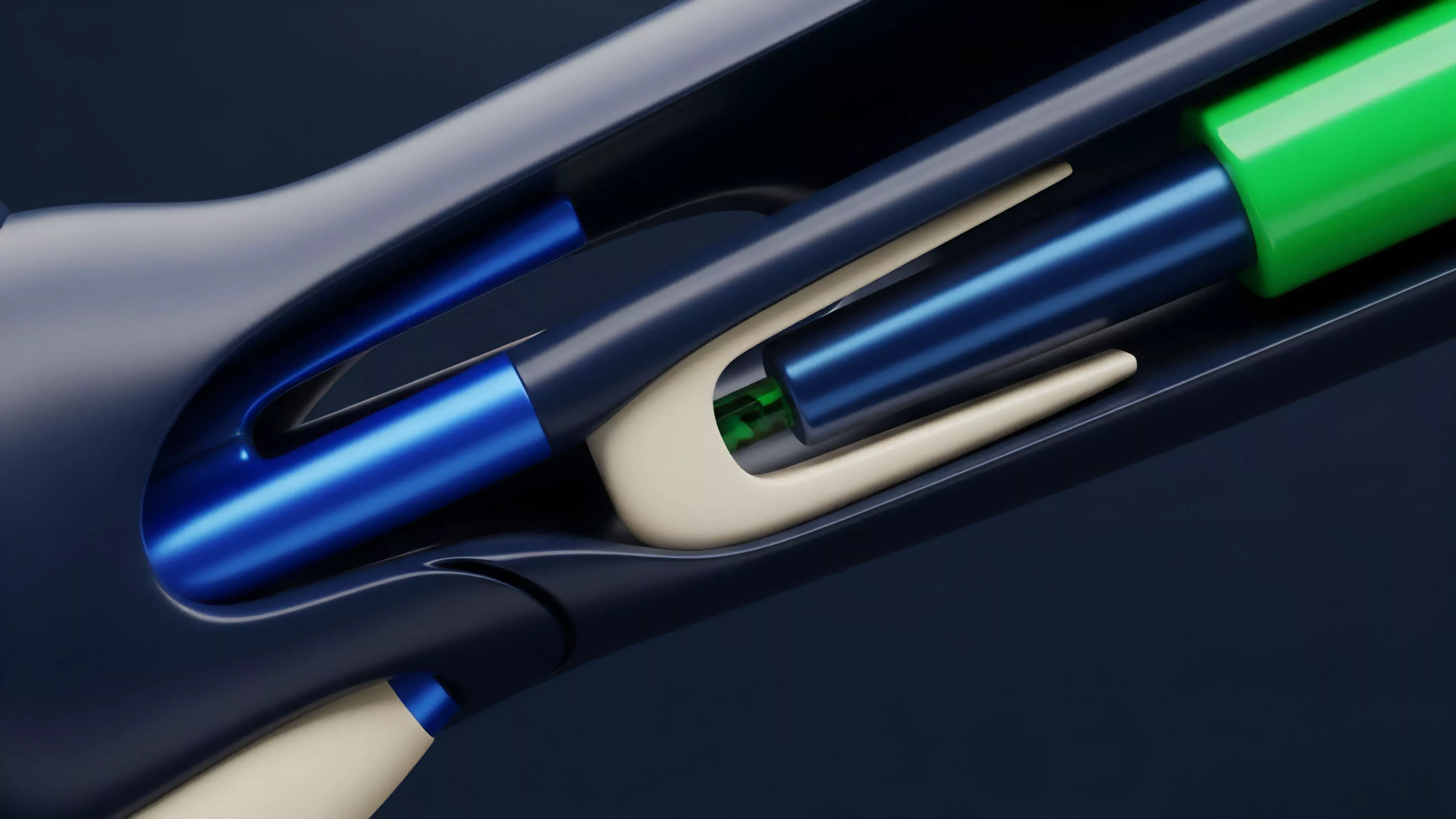

The system must account for the Protocol Physics of blockchain settlement, where block time latency can create an arbitrage window between the market event and the contract execution. The validation layer therefore incorporates time-delta constraints, rejecting data packets whose age exceeds a maximum latency threshold.
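A time-delta constraint can be sketched as a window check against the block timestamp. The `Observation` type and the defaults of 30 seconds for `max_latency_s` and 2 seconds for `max_skew_s` are hypothetical. A real protocol would tune these values to its chain's block time.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Observation:
    price: float
    observed_at: float  # Unix seconds when the source recorded the price

def within_latency(obs: Observation, block_timestamp: float,
                   max_latency_s: float = 30.0, max_skew_s: float = 2.0) -> bool:
    """Accept only observations no older than max_latency_s relative to the block,
    allowing at most max_skew_s of clock skew into the future."""
    delta = block_timestamp - obs.observed_at
    return -max_skew_s <= delta <= max_latency_s

block_ts = 1_700_000_100.0
observations = [
    Observation(100.0, 1_700_000_090.0),  # 10 s old: accepted
    Observation(99.0, 1_700_000_000.0),   # 100 s old: stale, rejected
    Observation(101.0, 1_700_000_110.0),  # 10 s in the future: rejected
]
fresh = [o for o in observations if within_latency(o, block_ts)]
print([o.price for o in fresh])  # [100.0]
```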

Approach

Current implementation strategies prioritize the modularity of data ingestion pipelines. Developers now employ cross-chain aggregation services that combine centralized exchange APIs with decentralized volume data to construct a comprehensive view of the market.

- Anomaly Detection: The system scans for rapid price deviations that occur outside of standard volatility bands.

- Cryptographic Proofs: Every data packet must carry a digital signature verifying its origin and integrity.

- Liquidity Depth Analysis: Validation algorithms assign higher weight to exchanges with higher order book density to prevent manipulation on thin markets.
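The anomaly-detection check can be sketched as a volatility band on log returns. A new price is flagged when its move from the last accepted price exceeds `k` standard deviations of recent returns. The function name, the minimum history length, and `k = 4.0` are assumptions for illustration, not parameters taken from any specific protocol.

```python
import math
from statistics import stdev

def is_anomalous(history: list[float], new_price: float, k: float = 4.0) -> bool:
    """Flag new_price if its log return from the last accepted price lies
    outside a band of k standard deviations of recent log returns."""
    if len(history) < 3:
        return False  # too little data to estimate a band; defer to other checks
    returns = [math.log(b / a) for a, b in zip(history, history[1:])]
    band = k * stdev(returns)
    move = abs(math.log(new_price / history[-1]))
    return move > band

history = [100.0, 100.2, 99.9, 100.1, 100.0]
print(is_anomalous(history, 100.5))  # False: within the band
print(is_anomalous(history, 110.0))  # True: far outside the band
```

With a perfectly flat history, the estimated band has zero width, so any nonzero move is flagged. A production rule would likely need a minimum band width.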

This approach shifts the burden of security from individual smart contracts to a specialized, hardened data layer. It ensures that margin engines receive a smoothed, representative price that reflects actual executable liquidity rather than transient spikes.
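An exponential moving average is one common way to produce such a smoothed price, and it is sketched below as an illustrative choice. A protocol might instead use a time-weighted average price (TWAP). The `alpha` smoothing factor is a hypothetical parameter: smaller values damp transient spikes more but react more slowly to genuine moves.

```python
def ema(prices: list[float], alpha: float = 0.2) -> list[float]:
    """Exponential moving average: s_t = alpha * p_t + (1 - alpha) * s_{t-1}."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    if not prices:
        raise ValueError("prices must be non-empty")
    smoothed = [prices[0]]  # seed with the first observation
    for p in prices[1:]:
        smoothed.append(alpha * p + (1.0 - alpha) * smoothed[-1])
    return smoothed

# A one-tick spike to 120 moves the smoothed series only to about 104.
print(ema([100.0, 100.0, 120.0, 100.0]))
```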

Evolution

The transition from simple median-price feeds to complex, heuristic-based validation represents a major shift in derivative infrastructure. Early systems were static, relying on hard-coded parameters that failed under extreme market stress.

Modern iterations utilize dynamic thresholds that adjust automatically based on real-time volatility metrics, effectively tightening validation strictness during periods of high market turbulence.

Adaptive validation logic allows protocols to maintain stability by dynamically adjusting sensitivity to market noise during high-volatility events.
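The sketch below shows one way a threshold could tighten as volatility rises. It scales two hypothetical limits by a stress ratio: the maximum accepted data age shrinks, and the number of agreeing sources required grows. The input `current_vol` could be, for example, the realized volatility of recent log returns. The scaling rule, `reference_vol`, and all default values are assumptions for illustration, not a documented protocol mechanism.

```python
import math

def dynamic_limits(current_vol: float, reference_vol: float,
                   base_max_age_s: float = 60.0, base_min_sources: int = 3,
                   cap_min_sources: int = 7) -> tuple[float, int]:
    """Return (max_age_s, min_sources), tightened when volatility exceeds reference_vol."""
    stress = max(1.0, current_vol / reference_vol)  # never loosen below the base limits
    max_age_s = base_max_age_s / stress             # fresher data required under stress
    min_sources = min(cap_min_sources, math.ceil(base_min_sources * stress))
    return max_age_s, min_sources

print(dynamic_limits(0.005, 0.01))  # calm market, base limits: (60.0, 3)
print(dynamic_limits(0.02, 0.01))   # volatility doubled, stricter: (30.0, 6)
```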

The field has moved toward incorporating Behavioral Game Theory into the validation process. By introducing economic penalties for oracle providers who submit data inconsistent with the broader market consensus, protocols now incentivize accurate reporting through cryptographic and economic alignment.
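The penalty mechanism can be sketched as a stake-slashing rule. Each provider's submission is compared with the median of all submissions, and providers outside a relative tolerance lose a fixed fraction of their stake. The function, the 1% tolerance, and the 10% slash fraction are hypothetical, and actual protocols differ in how they define consensus and size penalties.

```python
from statistics import median

def apply_penalties(submissions: dict[str, float], stakes: dict[str, float],
                    tolerance: float = 0.01, slash_fraction: float = 0.1) -> dict[str, float]:
    """Return updated stakes: providers whose submission deviates from the
    consensus median by more than `tolerance` lose `slash_fraction` of their stake."""
    consensus = median(submissions.values())
    if consensus <= 0:
        raise ValueError("consensus price must be positive")
    updated = dict(stakes)
    for provider, price in submissions.items():
        if abs(price - consensus) / consensus > tolerance:
            updated[provider] = stakes[provider] * (1 - slash_fraction)
    return updated

submissions = {"a": 100.0, "b": 100.3, "c": 99.8, "d": 92.0}  # "d" is out of line
stakes = {p: 1000.0 for p in submissions}
print(apply_penalties(submissions, stakes))
# {'a': 1000.0, 'b': 1000.0, 'c': 1000.0, 'd': 900.0}
```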

Horizon

The next phase of Market Data Validation involves the integration of zero-knowledge proofs to verify the provenance of data without exposing sensitive trade details. This would enable high-frequency validation that keeps trade data confidential while still ensuring its accuracy.

Future architectures will likely leverage decentralized, intent-based routing to ensure that the validation process occurs as close to the execution point as possible, minimizing the risk of front-running and MEV-related exploitation.

| Trend | Implication |
| --- | --- |
| Zero-Knowledge Proofs | Enhanced privacy and data integrity |
| Intent-Based Execution | Reduction in latency and front-running |
| Automated Margin Adjustments | Proactive risk management based on validated data |

The ultimate goal remains the total elimination of reliance on external, opaque data providers, replacing them with a fully transparent, verifiable, and resilient data consensus mechanism that mirrors the decentralized nature of the underlying assets.