Essence

Market Data Standardization is the systematic harmonization of disparate price feeds, order book snapshots, and trade execution telemetry across decentralized venues. It provides the technical substrate required for consistent valuation of complex financial instruments. Without this alignment, fragmented liquidity pools remain opaque, exposing traders to significant basis risk and leaving automated margin engines with inaccurate risk assessments.

Standardized market data provides the singular, verifiable truth necessary for accurate derivative pricing and systemic risk management.

The primary function involves transforming raw, protocol-specific event logs into a uniform schema suitable for quantitative analysis. This process ensures that volatility surfaces, delta-neutral hedging strategies, and liquidation triggers operate on identical inputs regardless of the underlying exchange architecture.
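As a minimal sketch of this transformation, the Python below maps trade messages from two hypothetical venues into one canonical record. The venue names, message field names (`sym`, `px`, `price_e8`, and so on), and the `Trade` schema are illustrative assumptions, not the format of any real exchange.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Trade:
    """Canonical trade record shared by every venue adapter (hypothetical schema)."""
    venue: str
    symbol: str    # canonical form, e.g. "BTC-USD"
    price: Decimal
    size: Decimal
    side: str      # "buy" or "sell", from the taker's perspective
    ts_ms: int     # event time, milliseconds since the Unix epoch


def from_venue_a(msg: dict) -> Trade:
    # Assumed venue A format: string prices, millisecond timestamps,
    # and concatenated symbols with a three-letter base such as "BTCUSD".
    return Trade(
        venue="venue_a",
        symbol=f"{msg['sym'][:3]}-{msg['sym'][3:]}",
        price=Decimal(msg["px"]),
        size=Decimal(msg["qty"]),
        side=msg["side"].lower(),
        ts_ms=int(msg["t"]),
    )


def from_venue_b(msg: dict) -> Trade:
    # Assumed venue B format: integer fixed-point amounts (8 decimals),
    # second-resolution timestamps, and a boolean for the taker side.
    scale = Decimal(10) ** 8
    return Trade(
        venue="venue_b",
        symbol=msg["pair"].replace("/", "-"),
        price=Decimal(msg["price_e8"]) / scale,
        size=Decimal(msg["amount_e8"]) / scale,
        side="buy" if msg["is_buy"] else "sell",
        ts_ms=int(msg["timestamp"]) * 1000,
    )
```

Using `Decimal` rather than binary floats is a design choice here: it keeps prices parsed from strings and from fixed-point integers exactly comparable once they share a schema.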

Origin

Early crypto derivatives markets relied upon bespoke, exchange-specific data formats, forcing participants to maintain complex middleware to aggregate fragmented information. This environment favored entities with the technical capacity to build proprietary normalization pipelines.

The necessity for a unified approach became clear during periods of extreme volatility, when discrepancies between exchange feeds caused catastrophic failures in cross-margin systems.

- Liquidity fragmentation necessitated a common language for order book depth.

- Latency arbitrage drove the demand for synchronized timestamping across venues.

- Margin engine failures highlighted the danger of relying on unverified or non-standardized price indices.

These early technical hurdles established the requirement for robust data ingestion layers. The industry shifted toward consensus-based oracle designs and standardized websocket protocols to mitigate the risks inherent in isolated, proprietary data silos.

Theory

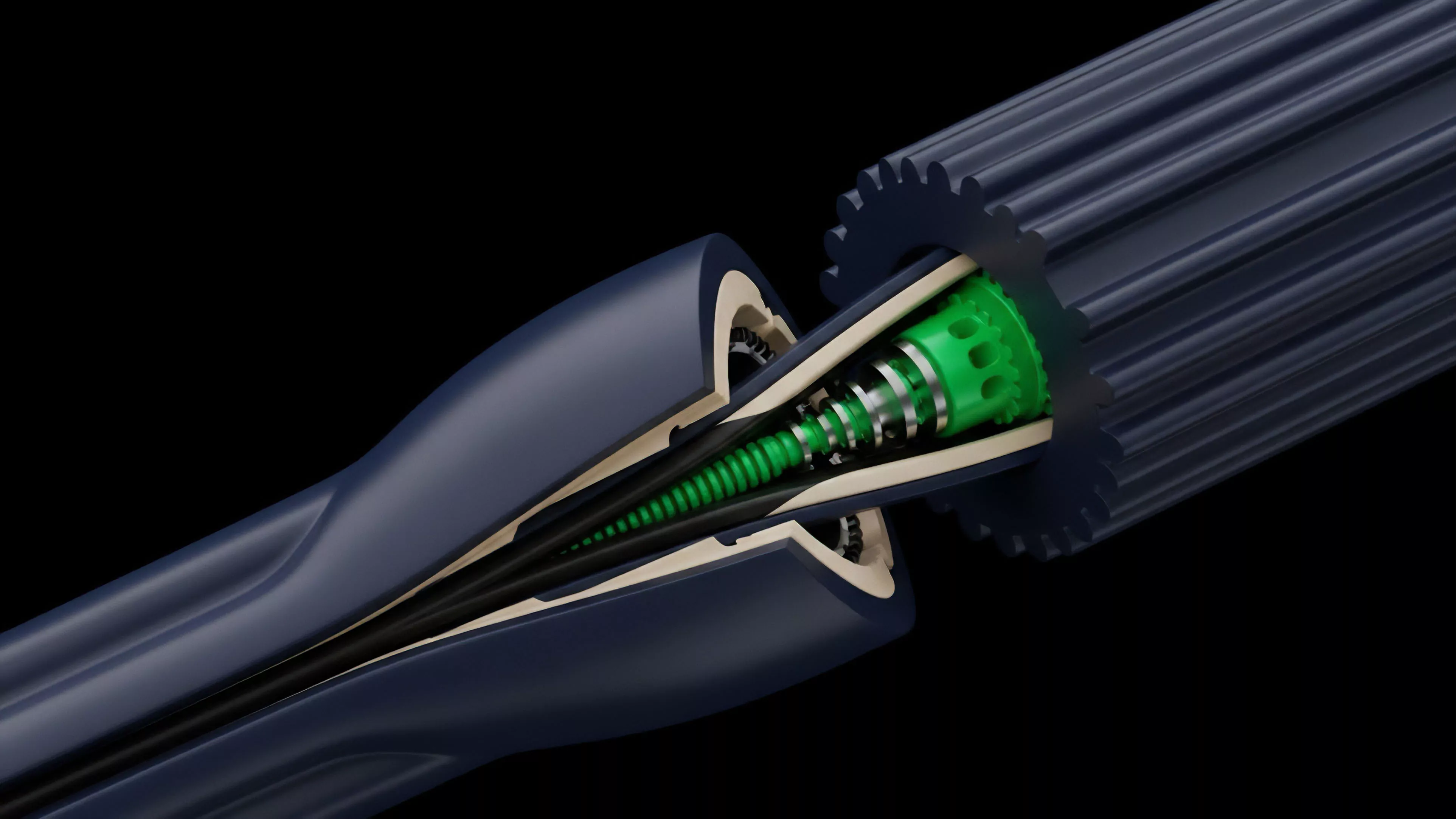

The architecture of Market Data Standardization relies on the precise mapping of event-driven data structures into a canonical form. This means converting heterogeneous data formats into a time-series model that respects the timing constraints of blockchain settlement, in particular block time and finality delays.

Protocol Physics and Settlement

The interaction between Market Data Standardization and consensus mechanisms dictates the speed and accuracy of price discovery. Because blockchain finality introduces non-deterministic delays, data feeds must account for the difference between block time and execution time.
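One way to make the gap between block time and observation time explicit is to carry both timestamps on every on-chain price and gate usage on confirmation depth and freshness. The sketch below is an illustration under assumptions: the `OnChainPrice` record, the field names, and the thresholds are hypothetical and would need tuning per chain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OnChainPrice:
    price: float
    block_number: int
    block_ts: int     # block timestamp in seconds, as reported by the chain
    observed_ts: int  # local wall-clock time in seconds when the feed handler saw it


def settlement_lag(p: OnChainPrice) -> int:
    """Seconds between the block timestamp and local observation, floored at zero."""
    return max(0, p.observed_ts - p.block_ts)


def is_usable(p: OnChainPrice, head_block: int, min_confirmations: int, max_lag_s: int) -> bool:
    """Accept a price only if it is both sufficiently confirmed and sufficiently fresh."""
    # The block containing the price counts as the first confirmation.
    confirmations = head_block - p.block_number + 1
    return confirmations >= min_confirmations and settlement_lag(p) <= max_lag_s
```

A margin engine could call `is_usable` before acting on a price, trading a little latency for protection against reorganized or stale blocks.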

| Metric | Standardized Feed | Raw Feed |
| --- | --- | --- |
| Latency | Deterministic | Variable |
| Accuracy | Verified | Unverified |
| Risk Exposure | Calculable | Probabilistic |

Rigorous standardization enables the application of complex mathematical models to decentralized order flow without loss of fidelity.

Quantitative models, such as the Black-Scholes framework, require continuous, accurate inputs to maintain validity. When standardization protocols fail to capture order flow toxicity or sudden liquidity withdrawal, the resulting pricing errors create immediate, exploitable opportunities for adversarial agents. This is where the pricing model becomes elegant, and dangerous if ignored.
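To illustrate how sensitive such a model is to its inputs, the sketch below prices a European call with the standard Black-Scholes formula and compares the result for two slightly different spot inputs, as might arise from two unreconciled feeds. The parameter values are arbitrary assumptions chosen for illustration.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call; vol and rate are annualized, t is in years."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)


def feed_mispricing(spot_a: float, spot_b: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Difference in call value caused purely by two feeds disagreeing on spot."""
    return bs_call(spot_b, strike, rate, vol, t) - bs_call(spot_a, strike, rate, vol, t)


if __name__ == "__main__":
    # Two hypothetical feeds that disagree by 0.5% on the same underlying.
    gap = feed_mispricing(100.0, 100.5, strike=100.0, rate=0.0, vol=0.2, t=1.0)
    print(f"option value gap from a 0.5% spot discrepancy: {gap:.4f}")
```

For an at-the-money option the gap is roughly delta times the spot discrepancy, which is why even small feed disagreements matter for margin and hedging calculations.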

Approach

Current strategies for achieving Market Data Standardization focus on decentralized oracle networks and standardized API layers.

These systems aggregate data from multiple exchanges, apply filtering algorithms to remove outliers, and deliver a consensus-based price feed to smart contracts.
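The aggregate-filter-deliver loop can be sketched with a median and a simple deviation filter. This is one illustrative filtering rule among many, not the algorithm of any particular oracle network; the function name, the 2% threshold, and the minimum source count are assumptions.

```python
from statistics import median


def consensus_price(quotes: dict[str, float], max_dev: float = 0.02, min_sources: int = 3) -> float:
    """Median of venue quotes after dropping quotes too far from the initial median.

    max_dev is a fractional deviation (0.02 means 2%).
    """
    if len(quotes) < min_sources:
        raise ValueError("not enough sources")
    mid = median(quotes.values())
    # Keep only quotes within max_dev of the first-pass median.
    kept = [p for p in quotes.values() if abs(p - mid) / mid <= max_dev]
    if len(kept) < min_sources:
        raise ValueError("too many outliers")
    return median(kept)
```

Using the median twice means a single manipulated venue cannot move the output much: it is discarded in the filter step and would carry little weight even if it were kept.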

- Normalization pipelines convert varying exchange message formats into a unified JSON or binary structure.

- Consensus-based oracles aggregate cross-venue data to prevent manipulation of individual price points.

- Historical replay engines allow for backtesting strategies against standardized, high-fidelity datasets.
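A historical replay engine, the last item above, depends on interleaving per-venue streams in time order once they share a schema. The sketch below assumes each stream is already sorted by timestamp and uses the standard library's `heapq.merge`; the `Event` type and its fields are hypothetical.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Event(NamedTuple):
    ts_ms: int    # event time, milliseconds since the Unix epoch
    venue: str
    price: float


def replay(*streams: Iterable[Event]) -> Iterator[Event]:
    """Lazily merge per-venue streams, each sorted by ts_ms, into one time-ordered stream."""
    return heapq.merge(*streams, key=lambda e: e.ts_ms)


if __name__ == "__main__":
    venue_a = [Event(1, "a", 100.0), Event(4, "a", 101.0)]
    venue_b = [Event(2, "b", 100.1), Event(3, "b", 100.2)]
    for event in replay(venue_a, venue_b):
        print(event.ts_ms, event.venue, event.price)
```

Because the merge is lazy, the same function works for in-memory lists and for generators that read large archives from disk one record at a time.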

These approaches ensure that derivative protocols can execute complex risk management functions, such as automated liquidation, with a high degree of confidence. The shift toward standardized data also facilitates better interoperability between disparate DeFi protocols, allowing for more efficient capital deployment across the broader landscape.

Evolution

The transition from proprietary, siloed data to standardized, open-access protocols reflects the maturing of decentralized financial systems. Early iterations prioritized basic price updates, whereas current architectures incorporate comprehensive order flow metrics, including top-of-book depth and trade volume distributions.

Standardized data architectures reduce information asymmetry, fostering more resilient and efficient decentralized markets.

This evolution mirrors the development of traditional financial market data providers but adapts to the unique constraints of decentralized environments. The move toward on-chain, verifiable data streams addresses the inherent distrust in centralized exchange reporting. The industry is currently shifting toward high-frequency, low-latency data aggregation, which is essential for competing with traditional electronic market makers.

Horizon

Future developments in Market Data Standardization will likely involve the integration of zero-knowledge proofs to verify the integrity of data feeds without exposing the underlying private trading activity.

This advancement will enable institutional-grade data privacy while maintaining the public transparency required for trustless financial operations.

| Trend | Impact |
| --- | --- |
| ZK-Proofs | Verifiable privacy |
| Cross-Chain Oracles | Unified liquidity |
| Real-Time Aggregation | Institutional efficiency |

The trajectory points toward a more transparent, high-fidelity data environment in which standardized inputs drive automated derivative settlement. As these systems scale, reliance on fragmented, unverified data is expected to decline, replaced by robust, standardized protocols that underpin the next generation of decentralized financial infrastructure.