Essence

Long-Range Attacks represent a fundamental challenge to the security model of proof-of-stake blockchain systems. These attacks occur when an attacker acquires private keys that once controlled significant stake in a network but whose stake has since been sold or withdrawn. Using these obsolete credentials, the attacker constructs an alternative chain history that branches from a point in the distant past.

Long-Range Attacks exploit the absence of physical constraints in stake-based consensus to rewrite transaction history using discarded historical stake.

This capability renders the traditional longest-chain rule insufficient for identifying the canonical chain. Without external anchoring or checkpointing, a node syncing from scratch is presented with multiple chains that all appear valid, and it has no way to distinguish the legitimate network history from a malicious, retrospectively generated fork.
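A toy Python model illustrates the problem. Chains are represented as tuples of block hashes (a simplification: signature and stake checks are omitted), and the attacker's fork is assumed to branch early and to be longer, since producing past blocks costs almost nothing. A naive longest-chain rule selects the forged chain, while a rule that first discards chains missing a trusted checkpoint selects the honest one. The `Chain` type, the function names, and the `(height, hash)` checkpoint format are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chain:
    """Toy candidate chain: block hashes ordered from genesis (index 0) to head."""
    name: str
    blocks: tuple[str, ...]


def longest_chain(candidates: list[Chain]) -> Chain:
    """Naive fork choice: pick the chain with the most blocks."""
    return max(candidates, key=lambda c: len(c.blocks))


def checkpointed_longest_chain(candidates: list[Chain], checkpoint: tuple[int, str]) -> Chain:
    """Drop chains that lack the trusted checkpoint at its height, then pick the longest."""
    height, block_hash = checkpoint
    valid = [c for c in candidates
             if len(c.blocks) > height and c.blocks[height] == block_hash]
    if not valid:
        raise ValueError("no candidate chain contains the trusted checkpoint")
    return longest_chain(valid)


honest = Chain("honest", ("g", "b1", "b2", "b3"))
# Hypothetical attacker fork: branches after b1 and is cheap to extend with old keys.
forged = Chain("forged", ("g", "b1", "x2", "x3", "x4", "x5"))

print(longest_chain([honest, forged]).name)                         # forged
print(checkpointed_longest_chain([honest, forged], (2, "b2")).name)  # honest
```

The checkpoint does the work here: the forged chain is longer, but it cannot contain `b2` at height 2 without agreeing with the honest history.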

Origin

The vulnerability emerged with the transition from proof-of-work to proof-of-stake. In proof-of-work, every block requires real expenditure of electricity and hardware, which ties the chain to physical resources in the present: rewriting past blocks means redoing all of the accumulated work, which is prohibitively expensive.

Proof-of-stake removes this energy barrier, so stake that was valid at some past height can be reused to sign blocks at that height at almost no cost. Early theoretical research recognized that anyone holding historical private keys could manufacture a new, conflicting history from the genesis block or any later point. This insight shifted the architectural requirements for consensus protocols from purely local validation toward mechanisms that depend on global coordination and trusted synchronization points.

Theory

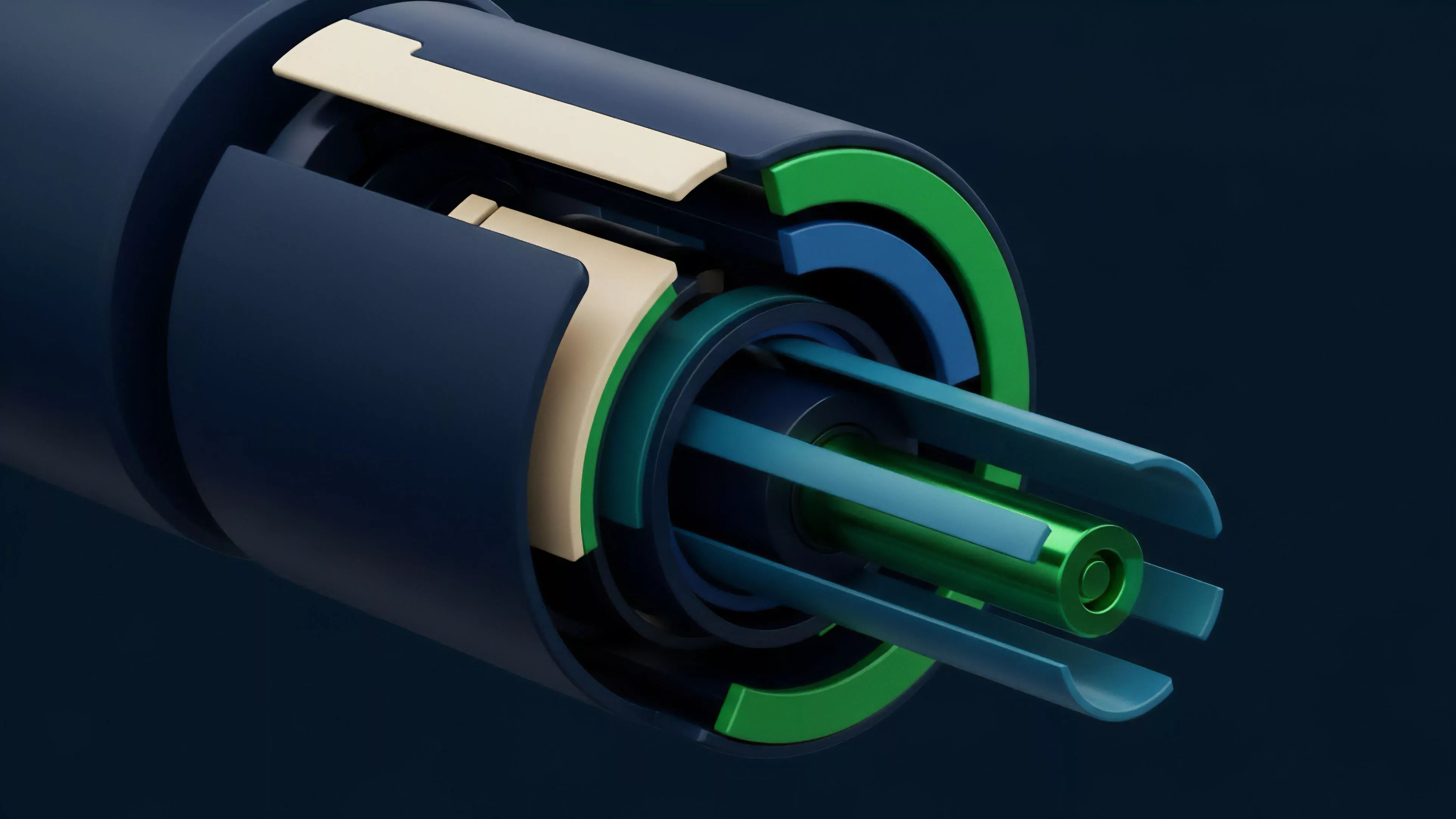

The mechanics of these attacks rely on the separation of stake ownership from the temporal context of its validity.

Consensus Finality mechanisms attempt to mitigate this by introducing irreversible checkpoints.

| Attack Vector | Mechanism | Mitigation |
| --- | --- | --- |
| History Rewrite | Reusing old keys | Weak Subjectivity |
| Fork Proliferation | Creating alternative chains | Checkpointing |
| Validator Collusion | Historical stake control | Slashing conditions |

The risk is governed by the probability that an attacker controls a supermajority (in BFT-style protocols, more than two-thirds) of the stake that was active at a specific past block height. If the protocol lacks Weak Subjectivity, a node can be tricked by an adversary presenting a chain that appears valid but contradicts the established network state.
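To make the supermajority condition concrete, the following Python sketch computes what fraction of the stake active at a past height an attacker controls through acquired keys, and whether that fraction exceeds two-thirds. The validator snapshot, the function names, and the strict 2/3 threshold (typical of BFT-style finality) are illustrative assumptions, not any specific protocol's rules.

```python
from fractions import Fraction

# Assumed BFT-style threshold: forging finality needs strictly more than 2/3 of stake.
SUPERMAJORITY = Fraction(2, 3)


def controlled_fraction(stake_at_height: dict[str, int], compromised: set[str]) -> Fraction:
    """Fraction of the stake bonded at a past height whose keys the attacker holds."""
    total = sum(stake_at_height.values())
    if total == 0:
        raise ValueError("empty validator set")
    held = sum(s for v, s in stake_at_height.items() if v in compromised)
    return Fraction(held, total)


def can_forge_finality(stake_at_height: dict[str, int], compromised: set[str]) -> bool:
    """True if the acquired historical keys exceed the supermajority threshold."""
    return controlled_fraction(stake_at_height, compromised) > SUPERMAJORITY


# Hypothetical snapshot of validator stake at some past height.
snapshot = {"a": 40, "b": 30, "c": 20, "d": 10}
# Suppose the keys of "a" and "b" were sold after their stake was withdrawn.
print(controlled_fraction(snapshot, {"a", "b"}))  # 7/10
print(can_forge_finality(snapshot, {"a", "b"}))   # True
print(can_forge_finality(snapshot, {"a", "c"}))   # False (6/10 is below 2/3)
```

Exact fractions avoid floating-point rounding at the 2/3 boundary, where a set holding exactly two-thirds of stake must not qualify.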

Weak Subjectivity is the security assumption that nodes, particularly those syncing for the first time or returning after a long time offline, rely on recent, trusted state information to validate incoming blocks.
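The following Python sketch shows how a node might apply this assumption when deciding where to start syncing. It assumes a simplified rule, not any client's actual logic: if the node's last trusted checkpoint falls within a weak subjectivity period of `ws_period_epochs` epochs, it may sync from local state; otherwise, or when it has no local state at all, it must use a checkpoint obtained out of band. The `Checkpoint` type, the function names, and the period value in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Checkpoint:
    """A block the node treats as trusted, identified by epoch and hash."""
    epoch: int
    block_hash: str


def needs_fresh_checkpoint(last_trusted: Optional[Checkpoint], current_epoch: int,
                           ws_period_epochs: int) -> bool:
    """True if local state is missing or older than the (assumed) weak subjectivity period."""
    if last_trusted is None:
        return True  # syncing from scratch: nothing local to anchor on
    if current_epoch < last_trusted.epoch:
        raise ValueError("current epoch precedes last trusted checkpoint")
    return current_epoch - last_trusted.epoch > ws_period_epochs


def choose_sync_anchor(last_trusted: Optional[Checkpoint], external: Checkpoint,
                       current_epoch: int, ws_period_epochs: int) -> Checkpoint:
    """Sync from local state inside the window; otherwise use the out-of-band checkpoint."""
    if needs_fresh_checkpoint(last_trusted, current_epoch, ws_period_epochs):
        return external
    return last_trusted


local = Checkpoint(1000, "0xabc")
external = Checkpoint(1290, "0xdef")  # hypothetical checkpoint from a trusted source
print(choose_sync_anchor(local, external, 1200, 256).block_hash)  # 0xabc
print(choose_sync_anchor(local, external, 1300, 256).block_hash)  # 0xdef
```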

Game theory suggests that if an attacker can remain undetected while building this shadow chain, the cost of the attack becomes purely the opportunity cost of the initial stake acquisition, rather than the ongoing capital expenditure required for proof-of-work dominance.

Approach

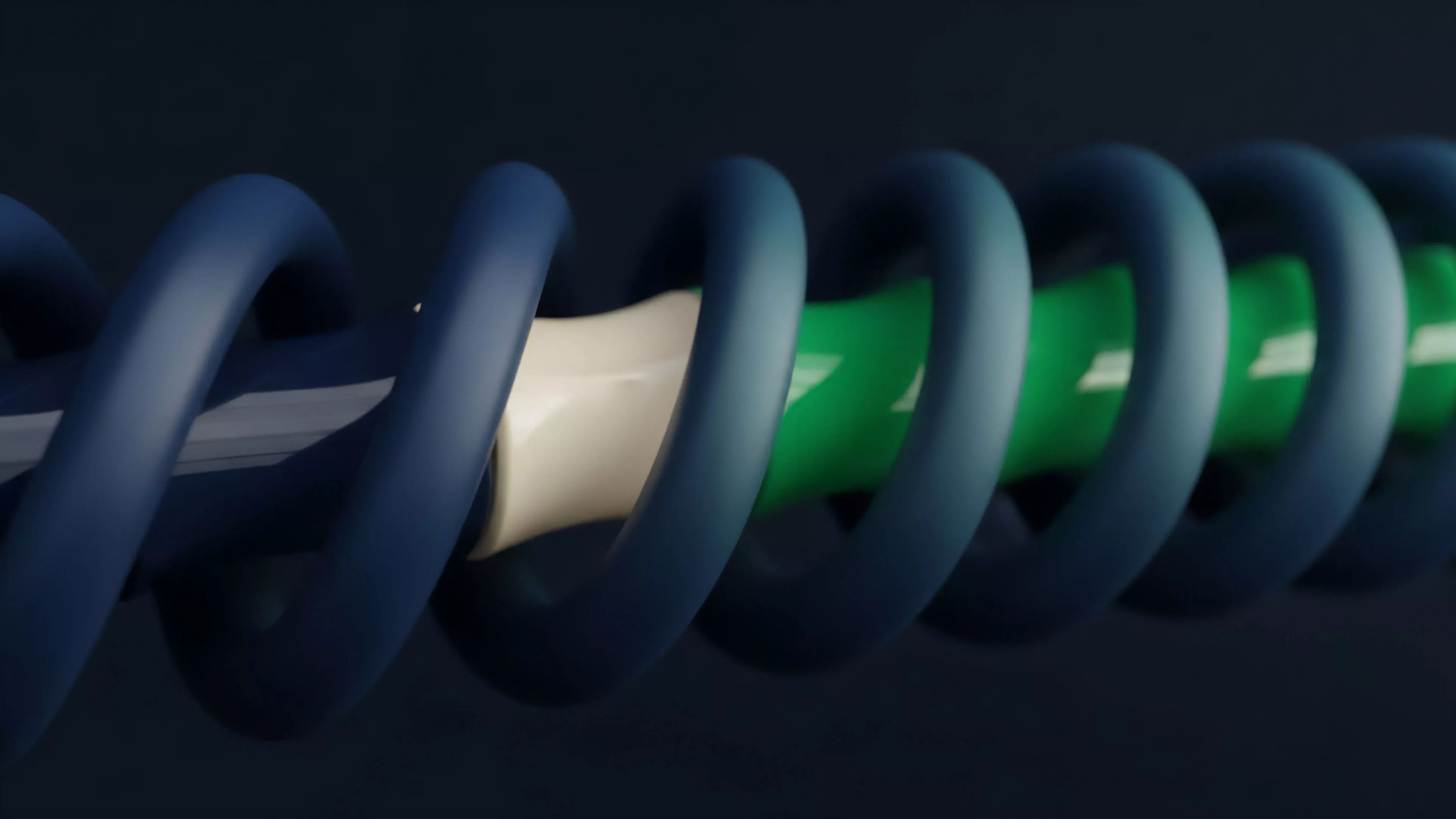

Modern protocol design manages this risk through a combination of social and technical constraints. Developers now implement Checkpointing, in which the network periodically commits to a specific state, ruling out reorganizations deeper than the latest checkpoint.

- Validator Rotation limits the duration that any single key set can influence the consensus state.

- Social Consensus relies on node operators manually verifying the correct chain head through community-verified sources during initial synchronization.

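- Slashing Mechanisms impose permanent economic penalties on validators who sign conflicting blocks, even if those blocks are historical, provided the offending stake is still bonded; stake that has already been withdrawn is beyond reach, which is why protocols enforce a withdrawal delay.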
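A simplified Python sketch of the slashing side: it scans signed headers for equivocation (one validator signing two different blocks at the same height), then keeps only offenders whose stake is still bonded, since withdrawn stake cannot be penalized. Signature verification is omitted, and `SignedHeader`, `find_equivocations`, and `slashable` are hypothetical names rather than any protocol's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignedHeader:
    """A block header attributed to a validator (signature checks omitted in this toy)."""
    validator: str
    height: int
    block_hash: str


def find_equivocations(headers: list[SignedHeader]) -> list[tuple[SignedHeader, SignedHeader]]:
    """Return (first, conflicting) pairs: same validator, same height, different block."""
    seen: dict[tuple[str, int], SignedHeader] = {}
    evidence: list[tuple[SignedHeader, SignedHeader]] = []
    for h in headers:
        key = (h.validator, h.height)
        prev = seen.get(key)
        if prev is None:
            seen[key] = h
        elif prev.block_hash != h.block_hash:
            evidence.append((prev, h))
    return evidence


def slashable(evidence: list[tuple[SignedHeader, SignedHeader]], bonded: set[str]) -> list[str]:
    """Offenders that can actually be penalized: only those whose stake is still bonded."""
    return sorted({first.validator for first, _ in evidence if first.validator in bonded})


headers = [
    SignedHeader("alice", 10, "0xaa"),
    SignedHeader("alice", 10, "0xbb"),  # conflicting signature at height 10
    SignedHeader("bob", 10, "0xaa"),
    SignedHeader("carol", 5, "0x11"),
    SignedHeader("carol", 5, "0x22"),   # carol's stake is assumed already withdrawn
]
evidence = find_equivocations(headers)
print(len(evidence))                          # 2
print(slashable(evidence, {"alice", "bob"}))  # ['alice']
```

Carol's double-signing is detected but goes unpunished, which is exactly the gap a long-range attacker exploits when using keys whose stake has left the system.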

This structural evolution acknowledges that decentralization requires a trade-off between absolute permissionless synchronization and the pragmatic necessity of a trusted entry point.

Evolution

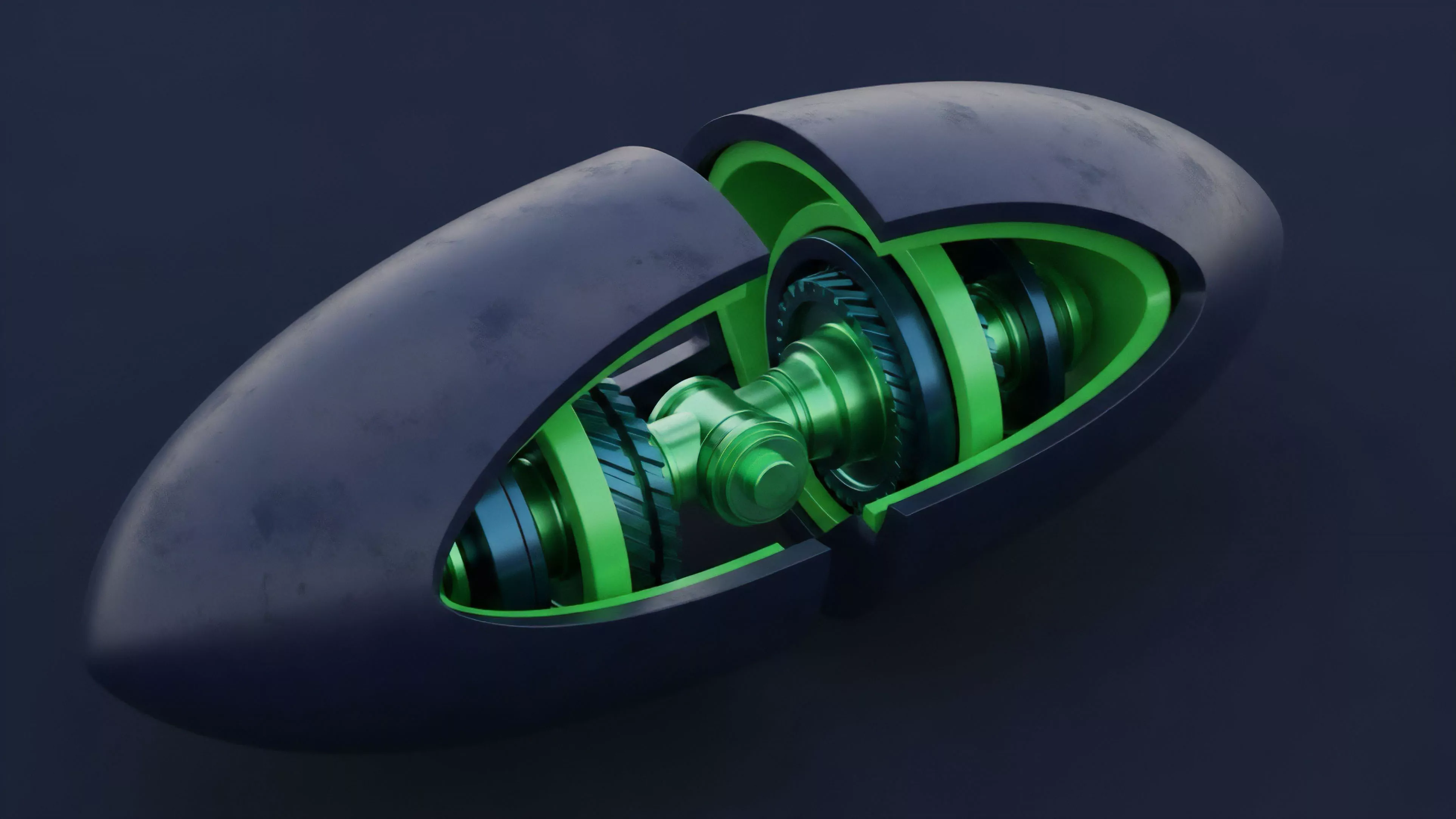

The transition from raw, pure-stake models to sophisticated hybrid architectures reflects the maturation of decentralized finance. Initially, the community viewed these attacks as a catastrophic failure mode, which led to overly rigid designs. Current systems instead prioritize Finality Gadgets, which provide explicit, deterministic proof that a block has been finalized and can no longer be reverted.

Finality Gadgets provide the guarantee required to render historical forks irrelevant to current network participants, provided that validators controlling more than one-third of stake do not act maliciously.
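As a rough illustration, the Python sketch below treats a checkpoint as final once validators holding strictly more than two-thirds of stake have voted for it, and has an online node reject any chain that omits its latest final checkpoint. This is a deliberate simplification: production gadgets such as Casper FFG use a two-step justification and finalization rule plus slashing conditions, none of which are modeled here. All names and stake values are hypothetical.

```python
from fractions import Fraction
from typing import Optional

SUPERMAJORITY = Fraction(2, 3)  # assumed BFT-style threshold


def is_final(stake: dict[str, int], voters: set[str]) -> bool:
    """Final once the voting validators hold strictly more than 2/3 of total stake."""
    total = sum(stake.values())
    if total == 0:
        raise ValueError("empty validator set")
    voted = sum(stake[v] for v in voters if v in stake)
    return Fraction(voted, total) > SUPERMAJORITY


def latest_final(checkpoints: list[tuple[str, set[str]]],
                 stake: dict[str, int]) -> Optional[str]:
    """Scan checkpoints in height order and return the most recent final one, if any."""
    result = None
    for block_hash, voters in checkpoints:
        if is_final(stake, voters):
            result = block_hash
    return result


def rejects_fork(candidate_blocks: list[str], final_hash: str) -> bool:
    """An online node refuses any chain that does not contain its latest final checkpoint."""
    return final_hash not in candidate_blocks


stake = {"a": 40, "b": 30, "c": 20, "d": 10}
checkpoints = [("cp100", {"a", "b", "c"}),  # 90/100 of stake voted
               ("cp200", {"a", "c"})]       # 60/100, not enough
final = latest_final(checkpoints, stake)
print(final)                                             # cp100
print(rejects_fork(["genesis", "cp50", "x101"], final))  # True
```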

This shift has moved the problem from a core cryptographic vulnerability to a manageable operational parameter. By forcing the cost of attack to be significantly higher than the potential gain, protocols stabilize against the threat of historical revisionism.

Horizon

The future of securing these networks lies in integrating zero-knowledge proofs to compress and verify historical states without requiring full chain re-synchronization. As we optimize for capital efficiency, pressure grows to shorten unstaking periods; because a shorter withdrawal delay shrinks the window in which misbehaving stake can still be slashed, this in turn necessitates more robust, automated checkpointing mechanisms. The ultimate goal remains eliminating manual, social-layer verification during the onboarding process. Achieving this would let the network autonomously prove its own validity to any new entrant, regardless of how long the node has been offline.