Essence of Screening

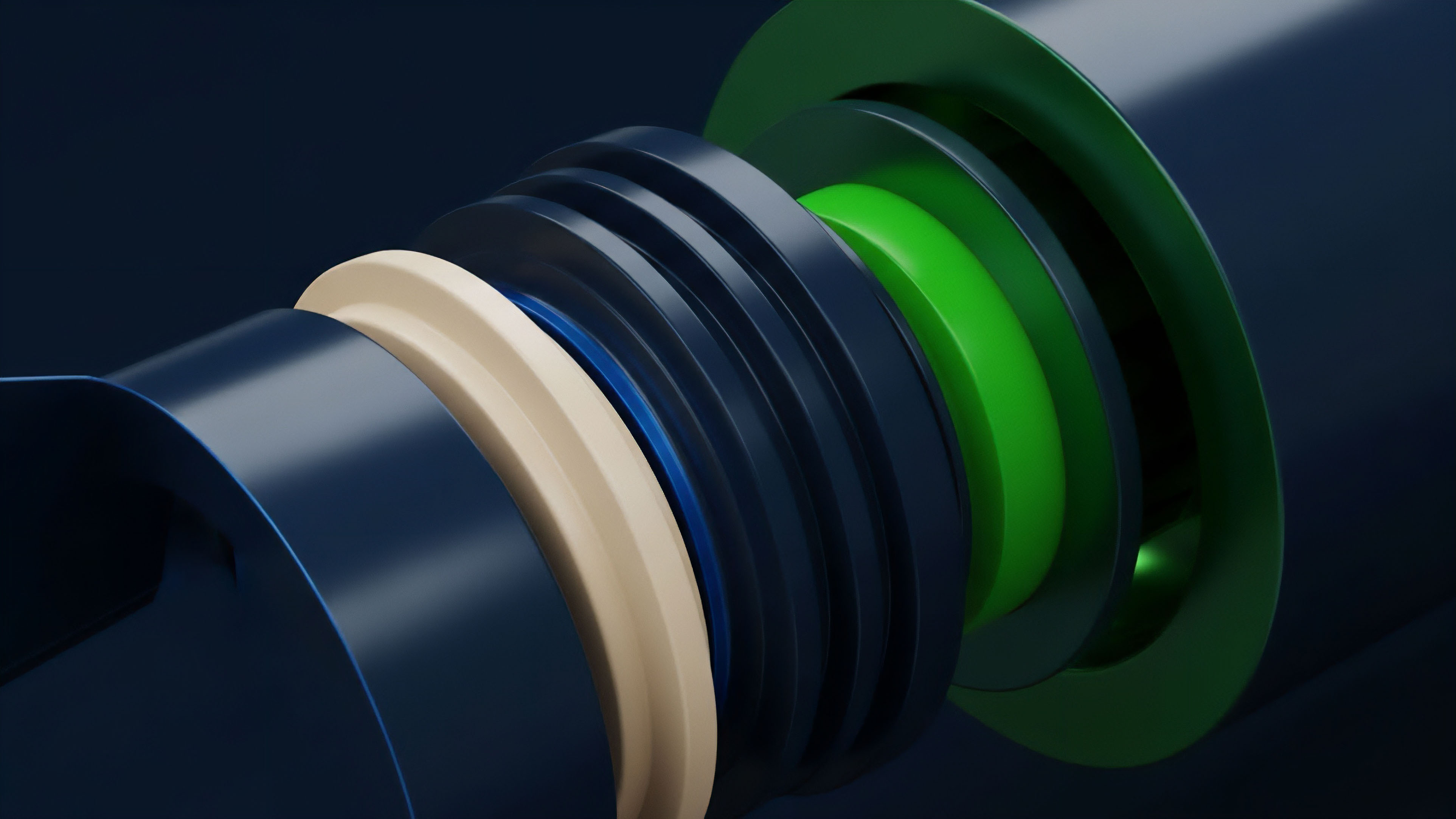

Liquidity Provider Screening (LPS) is the mandatory, multi-dimensional risk assessment that a derivatives protocol or institutional participant must apply to any entity providing two-sided quotes. This is not a clerical task; it is a systemic necessity for maintaining the integrity of the options market’s clearing and settlement layer. Its functional significance lies in Counterparty Solvency Cartography ⎊ mapping and bounding a provider’s capacity to absorb unexpected volatility and execute its delta-hedging strategies without initiating a cascade failure.

The screening process must verify the economic reality of the liquidity being offered, distinguishing genuine capital commitment from thinly capitalized, high-leverage speculation that could vanish at the first volatility spike.

Our focus on the screening process reveals a deeper truth about decentralized finance: trust is replaced by auditable economic constraints. The goal is to move beyond superficial metrics like total value locked (TVL) and assess the underlying Value-at-Risk (VaR) and Expected Shortfall (ES) of the LP’s portfolio structure. A robust screening process acts as a systemic firebreak, ensuring that the liquidity layer can withstand tail-risk events.
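To make the two metrics concrete, here is a minimal sketch of historical-simulation VaR and Expected Shortfall over a series of portfolio P&L observations. The function name, the sample data, and the choice of plain historical simulation are illustrative assumptions; a production screen would more likely revalue the LP’s full options book under each scenario.

```python
import math

def historical_var_es(pnl, confidence=0.95):
    """Return (VaR, ES) as positive loss numbers from P&L samples.

    Assumption: plain historical simulation, equal weight per observation.
    """
    if not pnl:
        raise ValueError("pnl must be non-empty")
    losses = sorted((-x for x in pnl), reverse=True)  # largest loss first
    # Number of observations in the tail beyond the confidence level.
    tail_n = max(1, int(math.floor(len(losses) * (1.0 - confidence) + 1e-9)))
    tail = losses[:tail_n]
    var = tail[-1]              # smallest loss that still falls in the tail
    es = sum(tail) / len(tail)  # average loss across the tail
    return var, es

# Hypothetical daily P&L for an LP portfolio (illustrative numbers only).
daily_pnl = [1.0, -2.0, 0.5, -10.0, 3.0, -0.5, 2.0, -4.0, 1.5, -1.0,
             0.0, 2.5, -3.0, 1.0, -0.2, 0.8, -6.0, 1.2, 0.3, -1.5]
var_90, es_90 = historical_var_es(daily_pnl, confidence=0.90)
print(f"90% VaR: {var_90:.2f}, 90% ES: {es_90:.2f}")
```

ES is never smaller than VaR at the same confidence level, because it averages the losses beyond the VaR cutoff. That is why it is the more informative of the two for tail-risk screening.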

Liquidity Provider Screening is the foundational risk-modeling exercise used to quantify a provider’s capacity to absorb systemic volatility and maintain two-sided quoting across the options surface.

Origin in Market Microstructure

The origin of formalized LP screening is rooted in the traditional, exchange-mandated Market Maker Capital Requirements and Default Fund Contributions established in centralized options markets like the CBOE. These mechanisms recognized that the provision of immediacy ⎊ the core product of liquidity ⎊ generates a necessary, unhedgeable inventory risk for the market maker. To mitigate this risk to the clearing house, exchanges demanded collateralization and rigorous financial audits.

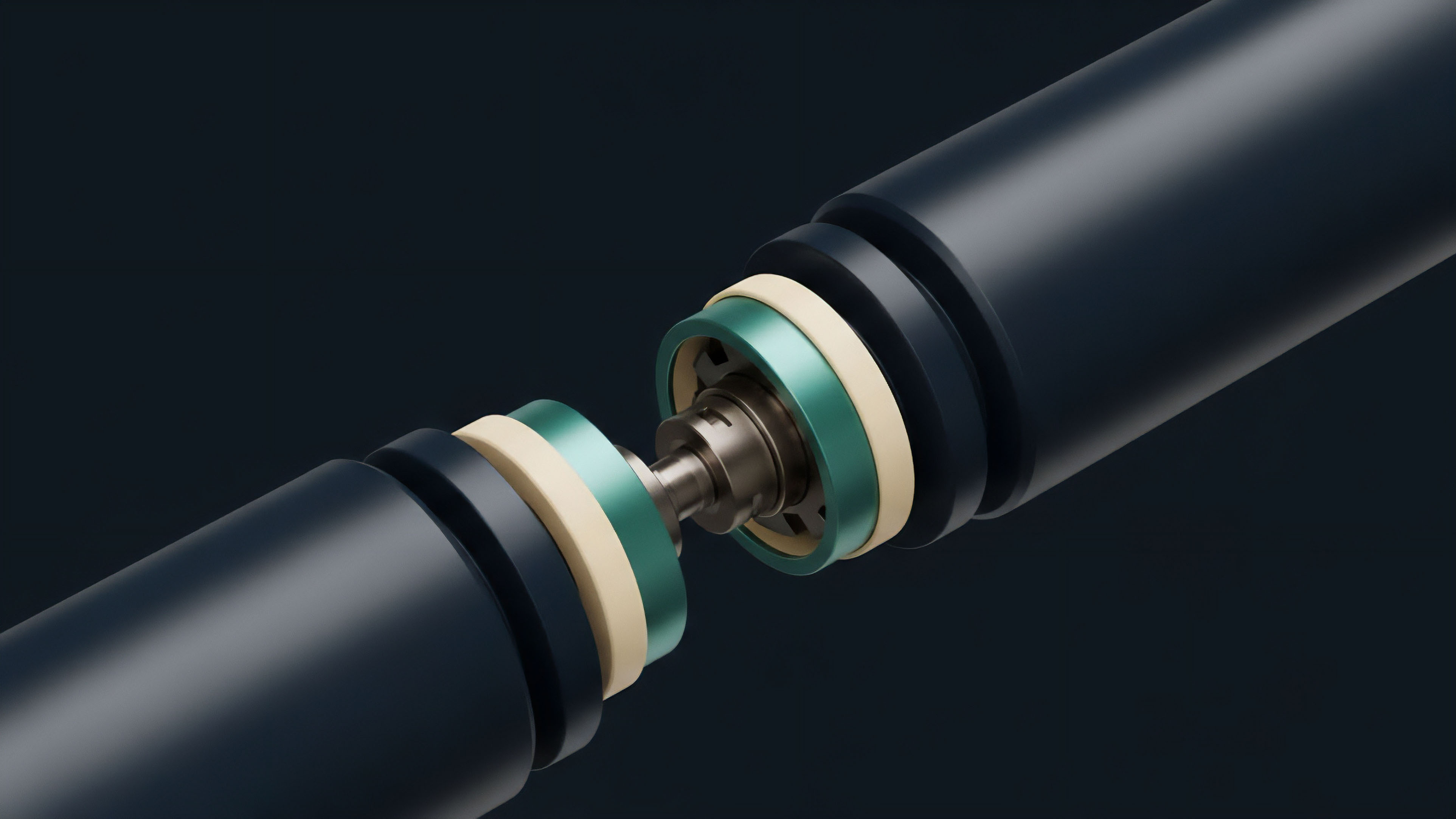

When derivatives protocols transitioned to decentralized automated market makers (AMMs), the problem mutated. The original Constant Product Market Maker (CPMM) models, while elegant, exposed LPs to Impermanent Loss (IL) that grows with the size of the price move in either direction, effectively a short volatility position that arbitrageurs exploited. This systemic vulnerability necessitated a shift from screening the counterparty to screening the protocol’s mechanism itself.
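The short-volatility character of a CPMM position can be illustrated with the standard impermanent-loss expression for a 50/50 constant-product pool, IL(r) = 2√r / (1 + r) − 1, where r is the ratio of the new price to the entry price. The sketch below assumes a fee-free pool and compares the LP position against simply holding the two assets; the function name is illustrative.

```python
import math

def impermanent_loss(price_ratio):
    """Relative value of a 50/50 constant-product LP position vs. holding.

    Assumption: no trading fees. Returns a number <= 0 (e.g. -0.2 = 20% worse).
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return 2.0 * math.sqrt(price_ratio) / (1.0 + price_ratio) - 1.0

for r in (0.25, 0.5, 1.0, 2.0, 4.0):
    print(f"price x{r:<4}: IL = {impermanent_loss(r):.4f}")
```

The loss is zero only when the price is unchanged and is identical for a 4x rise and a 4x fall. This is the payoff profile of a position that is short realized volatility.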

The screening question became: Does the protocol’s physics protect the LP, or must the LP’s external capital cushion protect the protocol? This intellectual pivot led to the development of concentrated liquidity and options-specific AMMs designed to structurally reduce the IL and capital-at-risk, making the liquidity provision itself a more screenable, bounded risk.

Theory of Quantitative Risk

The theoretical foundation for LPS in options rests on Quantitative Finance and the rigorous application of the Option Greeks. The screening process must ascertain the LP’s ability to manage a multi-dimensional risk vector, not simply a directional bet. A failure to accurately model and dynamically hedge the portfolio’s Greek exposure suggests a high probability of collapse during market stress.

Greeks Sensitivity Analysis

Screening requires an LP to demonstrate competency across the entire risk surface, not only in the first-order sensitivity (Delta).

- Delta Hedging Efficacy: The ability to maintain a near-zero Delta exposure by dynamically trading the underlying asset. Screening verifies the latency and execution quality of the LP’s underlying spot market infrastructure.

- Gamma Risk Absorption: Gamma, the second derivative of the option’s value with respect to the underlying price (the rate at which Delta changes), is the cost of being a liquidity provider. Screening examines the LP’s capital allocation relative to their Gamma exposure, ensuring sufficient capital to cover the transaction costs and slippage incurred while re-hedging during rapid price movements.

- Vega and Volatility Skew Management: Vega measures sensitivity to implied volatility. Screening must evaluate the LP’s model for pricing the Volatility Skew ⎊ the phenomenon where implied volatility varies across strikes, with out-of-the-money options (typically downside puts) often trading at higher implied volatility than at-the-money options. An LP that underprices the tail risk inherent in the skew is fundamentally unstable.

- Vanna and Volga: These second-order Greeks ⎊ Vanna (Delta’s sensitivity to Volatility) and Volga (Vega’s sensitivity to Volatility) ⎊ test the LP’s sophistication. An LP’s capacity to model these factors determines its survival in a market where volatility itself is stochastic.
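The Greeks listed above can be written down in closed form under the Black-Scholes model. The sketch below computes them for a single European call with no dividends. It is an assumption-laden illustration (constant volatility, hypothetical function names), not a description of any particular LP’s or protocol’s pricing model, and a real screen would aggregate these values across the whole quoted surface.

```python
import math

def norm_pdf(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def norm_cdf(x):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call_greeks(spot, strike, vol, t, rate=0.0):
    """Black-Scholes Greeks for one European call (no dividends).

    vol is annualized (0.5 = 50%); t is time to expiry in years.
    Vega, Vanna and Volga are per 1.00 change in vol, not per 1%.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    pdf = norm_pdf(d1)
    vega = spot * pdf * sqrt_t
    return {
        "delta": norm_cdf(d1),                # dV/dS
        "gamma": pdf / (spot * vol * sqrt_t),  # d2V/dS2
        "vega": vega,                          # dV/dsigma
        "vanna": -pdf * d2 / vol,              # dDelta/dsigma
        "volga": vega * d1 * d2 / vol,         # dVega/dsigma
    }

greeks = bs_call_greeks(spot=100.0, strike=100.0, vol=0.5, t=1.0)
for name, value in greeks.items():
    print(f"{name:>6}: {value: .6f}")
```

Delta and Gamma describe exposure to the underlying, while Vanna and Volga describe how Delta and Vega themselves shift when implied volatility moves. A screen limited to the first two has no view on that second effect.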

A screening process that ignores second-order Greeks fails to quantify the true tail risk of an options LP, treating a non-linear problem with a linear approximation.

Stress Testing and Protocol Physics

Beyond traditional VaR, the screening process must incorporate Protocol Physics ⎊ the technical constraints of the smart contract environment. This involves stress-testing the LP’s strategy against on-chain mechanics.

| Risk Vector | Quant Metric | Protocol Constraint |
|---|---|---|
| Liquidation Risk | Value-at-Risk (VaR) | Collateralization Ratio, Oracle Latency |
| Hedging Execution | Gamma/Delta Costs | Gas Fees, Block Time, Transaction Latency |
| Solvency/Contagion | Expected Shortfall (ES) | Smart Contract Audit Score, Governance Failure Potential |

The core challenge is that a DeFi LP’s liquidation is a programmatic event, not a discretionary one. The screening must model the precise market conditions under which the LP’s on-chain collateral is insufficient to cover the automated debt to the pool, triggering a cascade that impacts other participants.
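One way to model those conditions is to scan price shocks and find the first at which the LP’s collateral no longer covers the position’s loss. The sketch below uses a delta-gamma approximation of P&L and treats equity below zero as the insolvency trigger. Both simplifications are assumptions made for illustration; an actual protocol would apply its own margin formula and oracle prices.

```python
def first_insolvent_shock_pct(collateral, delta, gamma, spot, max_shock_pct=50):
    """Smallest price shock (in whole percent, signed) that wipes out collateral.

    Assumptions: P&L ~ delta*dS + 0.5*gamma*dS^2 (delta-gamma approximation),
    insolvency means collateral + P&L < 0. Returns None if the LP survives
    every shock up to max_shock_pct in both directions.
    """
    for shock_pct in range(1, max_shock_pct + 1):
        for sign in (-1, 1):  # test the down move first, then the up move
            move = sign * spot * shock_pct / 100.0
            pnl = delta * move + 0.5 * gamma * move * move
            if collateral + pnl < 0.0:
                return sign * shock_pct
    return None

# Hypothetical short-gamma LP with residual long delta and thin collateral.
thin = first_insolvent_shock_pct(collateral=100.0, delta=1.0, gamma=-0.5, spot=100.0)
# The same book backed by far more collateral.
thick = first_insolvent_shock_pct(collateral=10_000.0, delta=1.0, gamma=-0.5, spot=100.0)
print(thin, thick)
```

Because the liquidation is programmatic, the output of a scan like this is a threshold rather than a probability: past that price move, the on-chain event fires.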

Approach to Operational Due Diligence

The practical approach to Liquidity Provider Screening requires an integrated analysis of financial capacity, technical infrastructure, and behavioral stability. It transcends a simple balance sheet review to assess the velocity of capital and information flow.

Technical & Execution Audit

The LP’s trading engine must be scrutinized for its Market Microstructure advantages. Low-latency infrastructure is a competitive necessity, but in screening, it is a risk mitigation tool.

- Latency Profile: Verification of the LP’s ability to receive price updates, reprice their quotes, and execute their delta-hedges within a single block time, particularly during high-volatility events. Slow LPs are stale LPs, and stale LPs are systemic liabilities.

- Order Routing and Execution Quality: Auditing the LP’s smart order routing algorithms for optimal execution on the underlying asset’s spot markets. Poor execution quality in the hedge leg directly increases the effective cost of Gamma, shrinking the profit margin and accelerating insolvency.

- Smart Contract Security Score: For DeFi LPs, a mandatory audit of the contracts they use to interact with the options protocol. This assesses Smart Contract Security risk, where a single bug can render the LP’s position unhedgeable or allow the capital to be drained.
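The link between execution quality and the effective cost of Gamma can be made explicit with a rough per-re-hedge cost estimate. The sketch below assumes the hedge size is approximately Gamma times the price move, that slippage is a flat number of basis points on the traded notional, and that gas is a fixed fee per transaction. All parameter names and values are hypothetical.

```python
def rehedge_cost(gamma, price_move, spot, slippage_bps, gas_fee):
    """Approximate cost of one delta re-hedge after a price move.

    Assumptions: hedge size ~ |gamma * price_move| units of the underlying,
    notional valued at the pre-move spot, flat slippage in basis points,
    fixed gas fee per hedge transaction (same currency as spot).
    """
    hedge_units = abs(gamma * price_move)
    notional = hedge_units * spot
    return notional * slippage_bps / 10_000 + gas_fee

def rehedges_affordable(capital, cost_per_rehedge):
    """How many re-hedges of this size the LP's capital buffer can absorb."""
    return int(capital // cost_per_rehedge)

good_exec = rehedge_cost(gamma=2.0, price_move=5.0, spot=100.0, slippage_bps=10, gas_fee=3.0)
poor_exec = rehedge_cost(gamma=2.0, price_move=5.0, spot=100.0, slippage_bps=50, gas_fee=3.0)
print(good_exec, poor_exec, rehedges_affordable(400.0, poor_exec))
```

Holding Gamma and the price path fixed, the only difference between the two cases is slippage in the hedge leg. The same capital buffer therefore funds fewer re-hedges for the LP with worse routing.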

Behavioral Game Theory and Incentive Alignment

The screening process must also account for Behavioral Game Theory. The LP is an economic agent operating within an adversarial environment.

The protocol must screen for incentive alignment. Does the protocol’s Tokenomics structure reward sustainable, long-term liquidity provision, or does it incentivize mercenary capital seeking short-term yield farming gains? LPs primarily driven by volatile token incentives are more likely to pull their liquidity at the first sign of adverse market movement, prioritizing incentive harvesting over market stability.

The screening should favor LPs with a demonstrable history of maintaining quotes during stress, a proxy for strategic commitment over opportunistic behavior.
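A simple proxy for that history is the share of stressed intervals during which the LP was still quoting. The sketch below assumes the screener has interval-level records of realized volatility and a quoting flag. The record format, the threshold, and the function name are all hypothetical.

```python
def stress_quote_uptime(samples, vol_threshold):
    """Fraction of stressed intervals in which the LP kept two-sided quotes.

    samples: iterable of (realized_vol, was_quoting) pairs, one per interval.
    An interval counts as stressed when realized_vol >= vol_threshold.
    Returns None when no stressed interval was observed.
    """
    stressed = [was_quoting for vol, was_quoting in samples if vol >= vol_threshold]
    if not stressed:
        return None
    return sum(stressed) / len(stressed)

# Hypothetical observation history: (annualized realized vol, quoting flag).
history = [(0.3, True), (0.9, True), (1.2, False),
           (0.4, True), (1.0, True), (1.5, False)]
print(stress_quote_uptime(history, vol_threshold=0.8))
```

Measuring uptime only over stressed intervals matters because an LP that quotes continuously in calm markets and vanishes in turbulent ones would still score well on an unconditional uptime metric.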

The most dangerous liquidity is that which is incentivized to disappear precisely when it is needed most, a failure of tokenomic design masked as a screening problem.

Evolution to Algorithmic Solvency

The evolution of LPS has moved from a static, post-trade financial audit to a dynamic, algorithmic, and on-chain solvency check. Traditional screening focused on the LP’s balance sheet; modern screening focuses on the LP’s real-time risk position.

The Shift to Real-Time Margin Engines

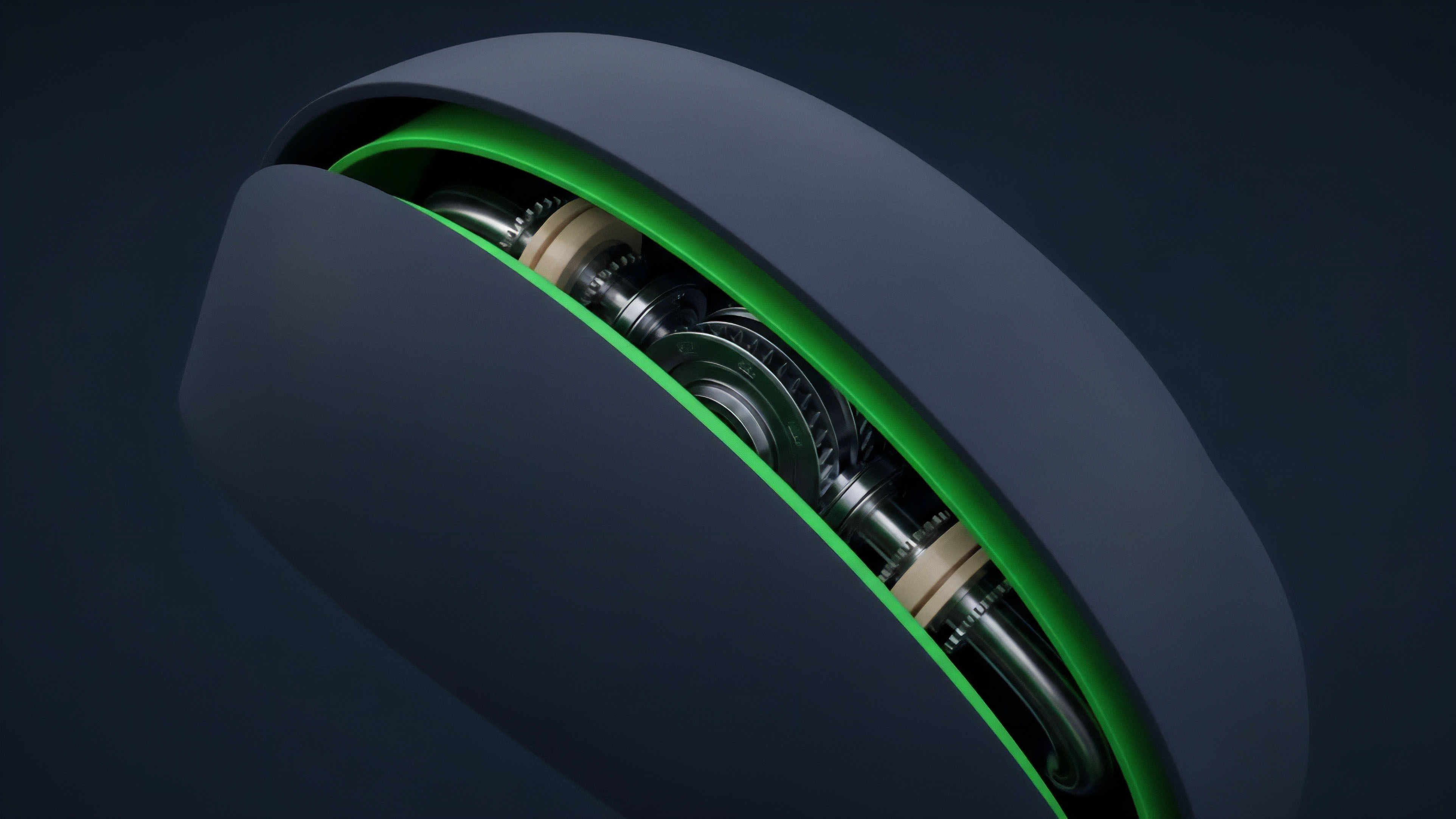

The initial DeFi options protocols relied on simple over-collateralization. The problem with this model is that it is capital-inefficient and does not account for the non-linear risk of options. The current state is characterized by Portfolio Margin Systems, which screen the LP’s risk position continuously.

The core innovation is the development of a Cross-Margining System that calculates the total risk across all of an LP’s positions ⎊ long and short calls, puts, and their underlying spot hedges ⎊ and determines a single, consolidated margin requirement. This system effectively screens the LP’s strategy by enforcing risk limits programmatically. If the combined Greeks exceed the protocol’s defined Liquidity Thresholds, the system either forces a partial liquidation or prevents new quotes from being posted, effectively auto-screening the LP out of the market before they become a contagion risk.
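The enforcement logic described here can be sketched as a netting step followed by a limit check. In the example below, the `Position` fields, the limit values, and especially the rule for choosing between blocking new quotes and partial liquidation (a hypothetical `hard_multiple` of the limit) are assumptions for illustration; the text does not specify how a protocol picks between the two actions.

```python
from dataclasses import dataclass

@dataclass
class Position:
    label: str
    delta: float
    gamma: float
    vega: float

def net_greeks(positions):
    """Sum each Greek across options and spot hedges (cross-margining)."""
    return {
        "delta": sum(p.delta for p in positions),
        "gamma": sum(p.gamma for p in positions),
        "vega": sum(p.vega for p in positions),
    }

def screen_lp(positions, limits, hard_multiple=1.5):
    """Return (action, breached_greeks) for an LP's consolidated book.

    Assumed rule: any breach blocks new quotes; a breach larger than
    hard_multiple times the limit escalates to partial liquidation.
    """
    net = net_greeks(positions)
    breaches = [name for name, limit in limits.items() if abs(net[name]) > limit]
    if not breaches:
        return "ok", breaches
    worst = max(abs(net[name]) / limits[name] for name in breaches)
    action = "partial_liquidation" if worst > hard_multiple else "block_new_quotes"
    return action, breaches

book = [
    Position("short calls", delta=-6.0, gamma=-0.2, vega=-30.0),
    Position("spot hedge", delta=6.0, gamma=0.0, vega=0.0),
    Position("short puts", delta=4.0, gamma=-0.3, vega=-40.0),
]
limits = {"delta": 5.0, "gamma": 1.0, "vega": 50.0}
action, breaches = screen_lp(book, limits)
print(action, breaches)
```

Netting before checking is the point of cross-margining: the spot hedge offsets the short calls’ Delta, so the book is flagged only on the exposure that actually remains, in this case Vega.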

Regulatory Arbitrage and Global Screening

The screening process is complicated by Regulatory Arbitrage. Centralized LPs are screened by jurisdictional law (e.g. KYC/AML requirements, capital adequacy rules), but decentralized LPs are pseudonymous and operate outside these frameworks.

The market is witnessing a divergence:

| LP Type | Primary Screening Focus | Systemic Risk Source |
|---|---|---|
| Centralized (CEX) | Capital Adequacy, Regulatory Compliance | Operational Failure, Centralized Custody Risk |
| Decentralized (DEX) | Smart Contract Risk, Protocol Risk | Impermanent Loss, Governance Failure |

Protocols operating in this gray space must apply a form of Geo-fencing and Access Control ⎊ a screening layer based on IP address and wallet behavior ⎊ to mitigate regulatory exposure, even as they attempt to screen for financial solvency. The choice of which LPs to accept becomes a simultaneous financial and legal decision.

Horizon Autonomous Risk Engines

The future of Liquidity Provider Screening lies in autonomous, AI-Driven Risk Engines that move beyond simple historical VaR and engage in predictive, adversarial modeling. The system will not simply react to an LP’s capital; it will anticipate the LP’s strategic failure point.

The next generation of screening will rely on Macro-Crypto Correlation data and Trend Forecasting models. An AI-based screening engine will analyze an LP’s quoted volatility surface against global macroeconomic indicators, identifying LPs whose pricing models are systematically underestimating the risk of a correlated market crash. For example, if a provider’s Vega exposure is high and the macro-liquidity cycle is tightening, the system can automatically increase the required collateral margin, screening the provider’s capital for resilience against an anticipated rather than a historical event.
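The Vega-and-tightening example can be reduced to a simple scaling rule, shown below as a sketch. The rule is purely hypothetical: the macro signal is assumed to arrive as a score between 0 (loose) and 1 (tight), and the multiplicative form and the `max_addon` cap are illustrative choices rather than any existing engine’s method.

```python
def adjusted_margin(base_margin, vega_exposure, vega_limit,
                    macro_tightening, max_addon=0.5):
    """Scale required margin up when Vega is high and liquidity is tightening.

    Assumptions: macro_tightening is a score in [0, 1] supplied by some
    external forecasting model; the add-on is capped at max_addon (50%).
    """
    utilization = min(1.0, abs(vega_exposure) / vega_limit)
    tightening = min(1.0, max(0.0, macro_tightening))
    return base_margin * (1.0 + max_addon * utilization * tightening)

calm = adjusted_margin(1_000.0, vega_exposure=-80.0, vega_limit=100.0, macro_tightening=0.0)
tight = adjusted_margin(1_000.0, vega_exposure=-80.0, vega_limit=100.0, macro_tightening=0.5)
print(calm, tight)
```

The add-on is a product of the two factors, so it vanishes when either the LP carries little Vega or the macro signal is benign. Margin rises only when both conditions coincide.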

This moves the system from a passive auditor to an active, risk-averse counterparty. The ultimate goal is to architect a Decentralized Clearing Mechanism that uses Zero-Knowledge Proofs to verify an LP’s solvency and Greek exposure, computed off-chain, without revealing the proprietary details of their strategy. This preserves the LP’s competitive edge while providing the protocol with a cryptographic proof of solvency.

This is the necessary bridge between market efficiency and systemic security. The screening process becomes a continuous, cryptographically enforced equilibrium state.

The final evolution of LP screening is its complete disappearance as a manual process, replaced by a real-time, autonomous, and cryptographically verifiable solvency proof.
