Essence

Liquidity Mining Optimization represents the programmatic refinement of capital allocation strategies within decentralized automated market makers. Participants seek to maximize yield while minimizing exposure to impermanent loss and protocol-specific risks. This practice shifts the focus from passive asset provision to active portfolio management, where yield is a function of price volatility, trading volume, and incentive structures.

Liquidity mining optimization involves the precise calibration of capital deployment to maximize fee revenue and token rewards while hedging the risks specific to liquidity provision, chiefly impermanent loss and periods when a position sits out of range.

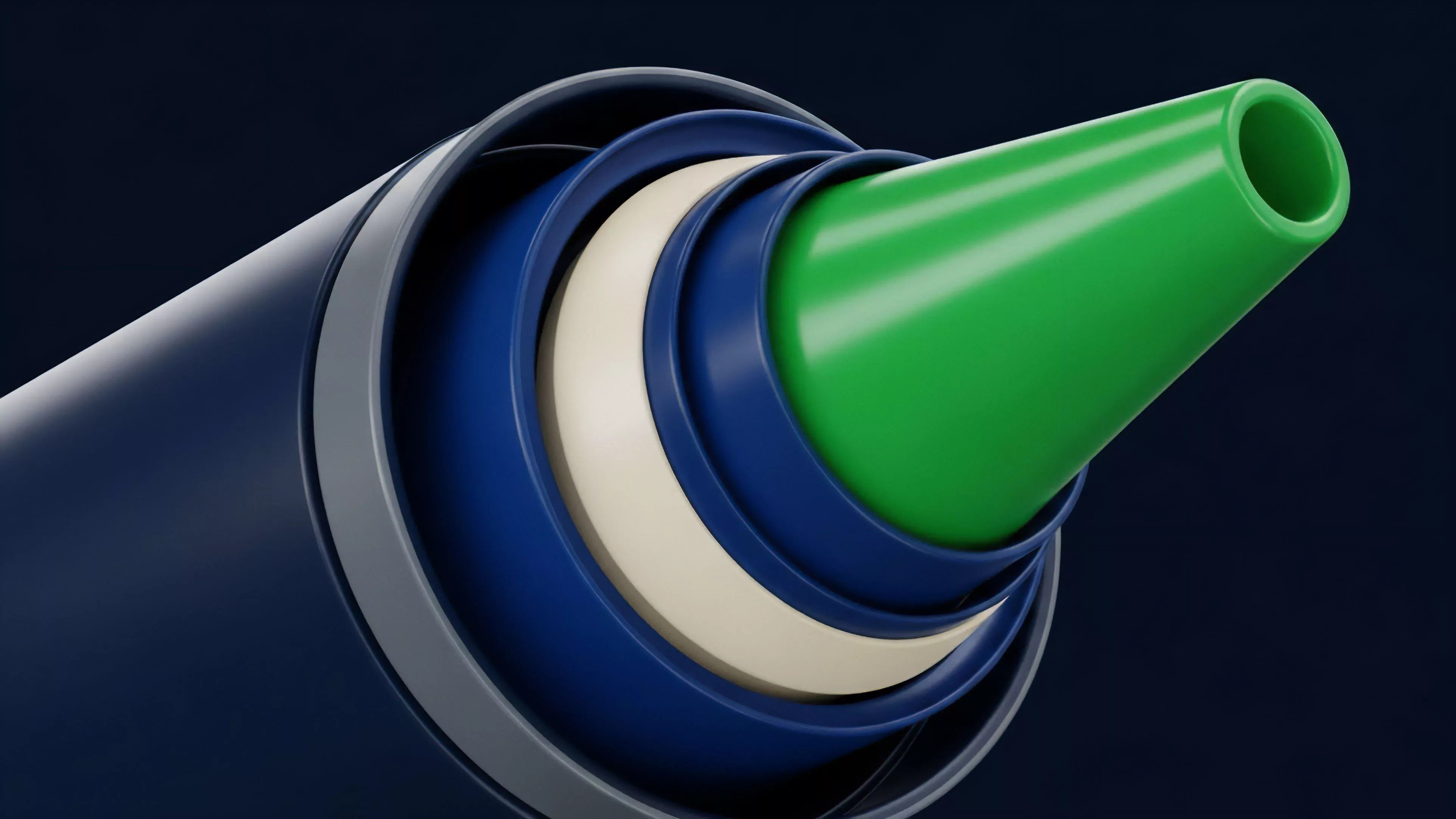

The core objective is to align liquidity depth with the statistical distribution of asset prices. By narrowing the range of active liquidity provision, providers increase capital efficiency and fee capture. This requires constant monitoring of market microstructure and the underlying volatility of the pooled assets to avoid periods of inactivity where the position sits outside the active trading band.
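To make the capital-efficiency claim concrete, the sketch below computes how much less capital a concentrated range needs than a full-range constant product position to provide the same liquidity. It assumes Uniswap v3-style reserve math, a current price at the geometric midpoint of the range, and no fees or price movement. The function name `concentration_factor` is illustrative.

```python
def concentration_factor(p_lower: float, p_upper: float) -> float:
    """Capital-efficiency multiple of a concentrated range relative to a
    full-range (constant product) position with the same liquidity.

    Assumes the current price sits at the geometric midpoint
    sqrt(p_lower * p_upper) of the range; fees and price moves are ignored.
    """
    if not 0 < p_lower < p_upper:
        raise ValueError("require 0 < p_lower < p_upper")
    return 1.0 / (1.0 - (p_lower / p_upper) ** 0.25)


if __name__ == "__main__":
    # Bands around a price of 1.0, symmetric in ratio terms, from wide to narrow.
    for width in (3.0, 1.5, 1.1, 1.01):
        lower, upper = 1.0 / width, width
        factor = concentration_factor(lower, upper)
        print(f"[{lower:.4f}, {upper:.4f}] -> {factor:.1f}x capital efficiency")
```

The multiple grows rapidly as the band narrows, which is exactly why narrow bands also go inactive more often.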

Origin

The genesis of this practice resides in the transition from traditional order books to constant product market makers.

Initial decentralized finance models spread liquidity uniformly across the entire price range, from zero to infinity, which left most deposited capital idle at prices that were rarely traded. As protocols introduced concentrated liquidity, the necessity for automated management tools became apparent. Early liquidity providers faced substantial challenges in maintaining optimal positions as market conditions shifted.

The volatility of digital assets often rendered manual adjustments reactive rather than proactive. Developers and quantitative traders responded by creating algorithmic frameworks capable of rebalancing positions based on real-time on-chain data, effectively moving the industry toward systematic liquidity management.

Theory

The theoretical foundation rests on the interplay between concentrated liquidity, impermanent loss, and fee revenue. Models adapted from option pricing, notably the lognormal price dynamics underlying the Black-Scholes framework, are used to estimate the probability that prices remain within specific ranges.
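A minimal sketch of such a range-probability estimate, assuming Black-Scholes-style geometric Brownian motion with constant volatility `sigma` and drift `mu` (both hypothetical inputs here). It returns the probability that the price ends inside the range at the horizon; the probability of never leaving the range is lower, so this figure overstates how long a position stays active. Function names are illustrative.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal CDF expressed through math.erf."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_in_range(spot: float, p_lower: float, p_upper: float,
                  sigma: float, t_years: float, mu: float = 0.0) -> float:
    """P(p_lower <= S_T <= p_upper) for a price following geometric Brownian
    motion: ln(S_T / spot) ~ Normal((mu - sigma**2 / 2) * T, sigma**2 * T).

    This is the probability of finishing inside the range at the horizon,
    not of staying inside it the whole time (which is always lower).
    """
    mean = (mu - 0.5 * sigma ** 2) * t_years
    std = sigma * math.sqrt(t_years)

    def cdf_at(price: float) -> float:
        if price <= 0.0:
            return 0.0          # prices cannot fall to or below zero under GBM
        if math.isinf(price):
            return 1.0          # open-ended upper bound
        return normal_cdf((math.log(price / spot) - mean) / std)

    return cdf_at(p_upper) - cdf_at(p_lower)


if __name__ == "__main__":
    # Hypothetical inputs: spot 2000, 80% annualized volatility, one-week horizon.
    for lower, upper in ((1900, 2100), (1800, 2200), (1600, 2400)):
        p = prob_in_range(2000, lower, upper, sigma=0.8, t_years=7 / 365)
        print(f"[{lower}, {upper}]: {p:.1%} chance of ending in range")
```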

- Gamma Exposure: Liquidity providers effectively hold short gamma, or short volatility, positions. Trading fees play the role of the theta an option seller collects, so ranges must be chosen so that fee income outweighs the losses caused by price movement.

- Capital Efficiency: The ratio of trading volume to total value locked, multiplied by the fee tier, sets a pool's gross fee yield; net profitability also depends on impermanent loss and operating costs.

- Rebalancing Costs: Frequent adjustments incur gas expenses and potential slippage, which must be factored into the expected net yield.
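The capital-efficiency and rebalancing-cost points can be combined into a rough net-yield estimate. The sketch below is illustrative arithmetic, not a protocol formula: fee income is pool volume times fee tier times the position's share of in-range liquidity, from which rebalancing costs and an externally supplied impermanent-loss estimate are subtracted. All names and fields are hypothetical.

```python
from dataclasses import dataclass


def pool_fee_yield(daily_volume: float, tvl: float, fee_tier: float) -> float:
    """Daily fees paid per unit of pool liquidity, assuming all TVL is active."""
    return daily_volume / tvl * fee_tier


@dataclass
class PositionInputs:
    daily_volume: float        # pool trading volume per day, in quote units
    fee_tier: float            # e.g. 0.003 for a 0.30% pool
    liquidity_share: float     # position's share of in-range liquidity (0..1)
    position_value: float      # capital deployed, in quote units
    rebalances_per_day: float  # expected range adjustments per day
    cost_per_rebalance: float  # gas plus slippage per adjustment, in quote units
    daily_il: float = 0.0      # expected impermanent loss per day, in quote units


def net_daily_yield(p: PositionInputs) -> float:
    """Expected net return per day as a fraction of the capital deployed."""
    fees = p.daily_volume * p.fee_tier * p.liquidity_share
    costs = p.rebalances_per_day * p.cost_per_rebalance
    return (fees - costs - p.daily_il) / p.position_value
```

With the hypothetical inputs `PositionInputs(1_000_000, 0.003, 0.01, 10_000, 2, 5.0)`, the position earns 30 in fees against 10 in rebalancing costs, a net 0.2% per day; at ten rebalances per day the same position loses 0.2% per day.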

Successful optimization relies on the rigorous application of quantitative models to predict price ranges and manage the delta exposure inherent in liquidity provision.

This domain draws heavily from market microstructure theory, where the liquidity provider acts as a market maker capturing the spread. The strategy balances the desire for high fee accumulation against the risk of the price moving outside the chosen range, at which point the position stops earning fees and is converted entirely into the underperforming asset.
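The range-exit behavior follows directly from concentrated-liquidity reserve math. The sketch below assumes Uniswap v3-style formulas with price quoted as token1 per token0: clamping the square-root price to the range means the position holds only token0 below the lower bound and only token1 above the upper bound. `loss_vs_hold` compares the position with simply holding the entry amounts, i.e. impermanent loss. Function names are illustrative.

```python
import math
from typing import Tuple


def amounts(liquidity: float, price: float,
            p_lower: float, p_upper: float) -> Tuple[float, float]:
    """(token0, token1) held by a concentrated position.

    Price is token0 quoted in token1. Clamping sqrt(price) to the range
    reproduces the three cases: all token0 below the range, all token1
    above it, and a mix in between.
    """
    sa, sb = math.sqrt(p_lower), math.sqrt(p_upper)
    s = min(max(math.sqrt(price), sa), sb)
    return liquidity * (1.0 / s - 1.0 / sb), liquidity * (s - sa)


def value(liquidity: float, price: float, p_lower: float, p_upper: float) -> float:
    """Position value in token1 units."""
    x, y = amounts(liquidity, price, p_lower, p_upper)
    return x * price + y


def loss_vs_hold(liquidity: float, entry: float, price: float,
                 p_lower: float, p_upper: float) -> float:
    """Position value relative to holding the entry amounts, minus 1."""
    x0, y0 = amounts(liquidity, entry, p_lower, p_upper)
    hold = x0 * price + y0
    return value(liquidity, price, p_lower, p_upper) / hold - 1.0


if __name__ == "__main__":
    # Hypothetical position: liquidity 1, range [1, 4], entered at price 2.
    for p in (0.5, 1.5, 2.0, 3.0, 5.0):
        x, y = amounts(1.0, p, 1.0, 4.0)
        print(f"price {p}: token0={x:.4f} token1={y:.4f} "
              f"vs hold {loss_vs_hold(1.0, 2.0, p, 1.0, 4.0):+.2%}")
```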

| Metric | Implication |
| --- | --- |
| Range Width | Narrower ranges raise fee potential but also the risk of inactivity; wider ranges lower both |
| Rebalancing Frequency | Trade-off between accuracy and transaction overhead |
| Volatility Sensitivity | Higher volatility requires wider ranges or faster adjustment cycles |
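The volatility-sensitivity row can be illustrated by inverting a lognormal price model: given a volatility and horizon, find the band, symmetric in log price around the median, that contains the horizon price with a target probability. This is a sketch under Black-Scholes-style GBM assumptions; `symmetric_range` and its parameters are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Tuple


def symmetric_range(spot: float, sigma: float, t_years: float,
                    target_prob: float, mu: float = 0.0) -> Tuple[float, float]:
    """Price band [lower, upper] containing the horizon price with
    probability target_prob, assuming ln(S_T) is normally distributed."""
    if not 0.0 < target_prob < 1.0:
        raise ValueError("target_prob must be in (0, 1)")
    z = NormalDist().inv_cdf(0.5 + target_prob / 2.0)            # two-sided quantile
    center = math.log(spot) + (mu - 0.5 * sigma ** 2) * t_years  # log of the median price
    half_width = z * sigma * math.sqrt(t_years)
    return math.exp(center - half_width), math.exp(center + half_width)


if __name__ == "__main__":
    # Hypothetical: spot 2000, one-week horizon, 80% target probability.
    for sigma in (0.4, 0.8, 1.2):
        lower, upper = symmetric_range(2000.0, sigma, 7 / 365, 0.8)
        print(f"sigma={sigma}: [{lower:.0f}, {upper:.0f}]")
```

Holding the target probability fixed, the band widens as `sigma` grows, which is the trade-off the table describes; the alternative is to keep the band narrow and rebalance more often.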

Approach

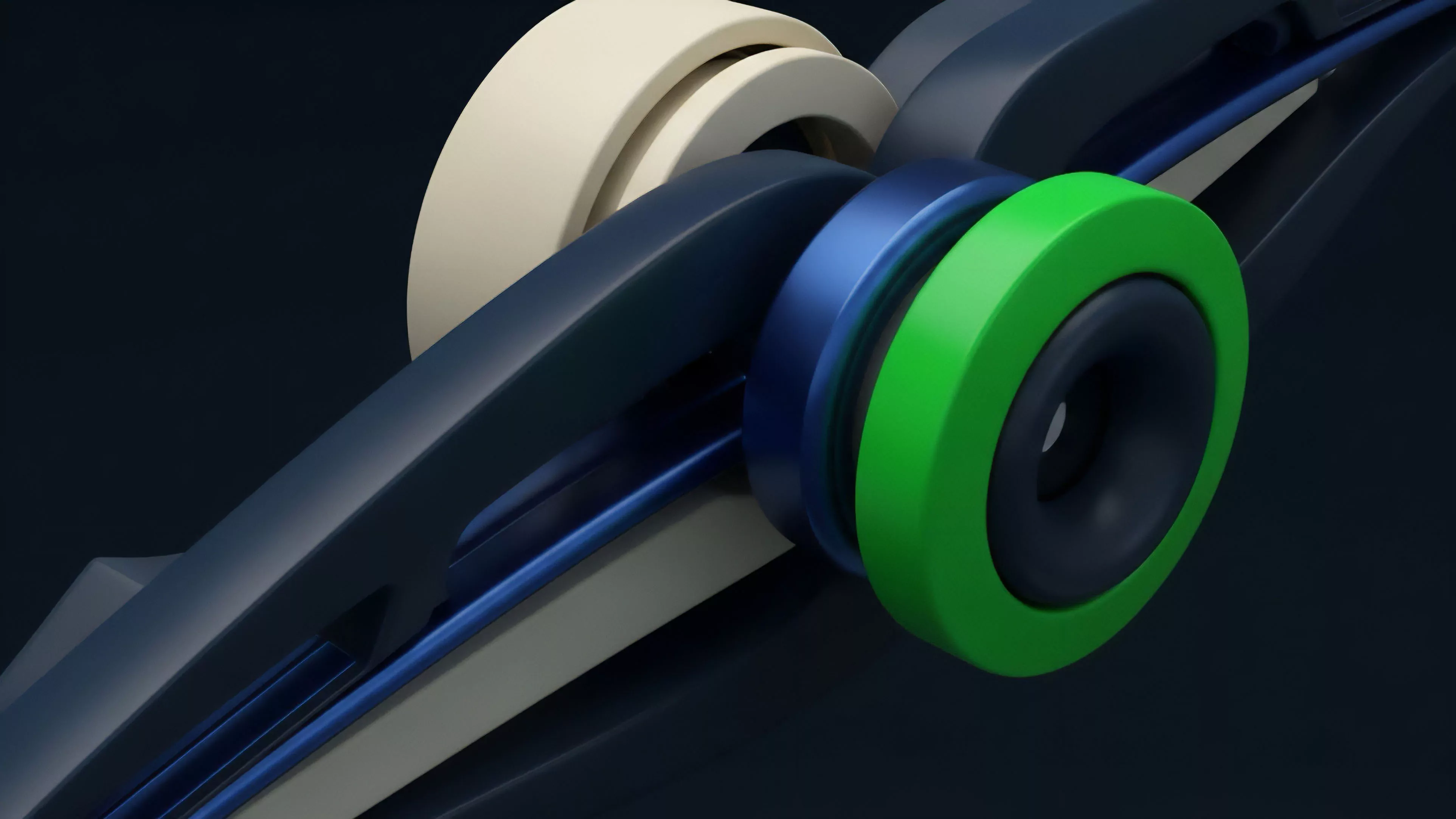

Current strategies employ automated agents to dynamically shift liquidity bands. These agents ingest real-time price feeds and order flow data to execute rebalancing transactions when specific thresholds are breached. This approach mitigates the need for constant manual oversight and allows for sophisticated hedging using external derivative markets.
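A threshold-triggered rebalancer can be sketched as a small state machine. This toy version, with hypothetical names and parameters, re-centers the band whenever the price leaves an inner trigger zone, so the position is moved before it drifts completely out of range. A real agent would also weigh gas and slippage before acting.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class RangeManager:
    """Toy threshold-based range manager (hypothetical, not a protocol API).

    The liquidity band is [center / width, center * width]. A rebalance fires
    once the price leaves the narrower trigger band
    [center / trigger, center * trigger].
    """
    center: float
    width: float = 1.10
    trigger: float = 1.05
    rebalances: List[float] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not 1.0 < self.trigger <= self.width:
            raise ValueError("require 1 < trigger <= width")

    def band(self) -> Tuple[float, float]:
        return self.center / self.width, self.center * self.width

    def on_price(self, price: float) -> bool:
        """Process one price observation; return True if a rebalance fired."""
        if self.center / self.trigger <= price <= self.center * self.trigger:
            return False
        # In a live system, the withdraw / swap / re-deposit transactions
        # would be built and submitted here.
        self.center = price
        self.rebalances.append(price)
        return True
```

Feeding the prices 101, 104, 106, 107, 111, 99 to `RangeManager(center=100.0)` fires two rebalances, at 106 and 99. Moving `trigger` toward 1.0 raises the rebalance count and therefore the cost, which is the rebalancing-frequency trade-off.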

Advanced participants utilize off-chain computation to run simulations before committing capital to on-chain vaults. These vaults aggregate assets and execute pre-defined strategies, distributing the cost of rebalancing across multiple participants. The shift toward modular, vault-based management allows for more resilient strategies that can withstand sudden market shocks.
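The cost-sharing property of vaults comes from pro-rata share accounting. The sketch below uses a hypothetical `Vault` class, not any specific protocol's contract: it mints shares at the current net asset value (NAV) per share, and booking a rebalancing cost lowers NAV, so each depositor bears the cost in proportion to their shares.

```python
from typing import Dict


class Vault:
    """Minimal pro-rata vault accounting (hypothetical sketch).

    Deposits mint shares at the current NAV per share. Fees earned raise
    NAV and strategy costs such as rebalancing gas lower it, so every
    holder gains or pays in proportion to their shares.
    """

    def __init__(self) -> None:
        self.nav = 0.0                      # total vault value, quote units
        self.total_shares = 0.0
        self.shares: Dict[str, float] = {}

    def deposit(self, user: str, amount: float) -> float:
        if self.total_shares == 0:
            minted = amount                 # first depositor: one share per unit
        else:
            if self.nav <= 0:
                raise ValueError("vault has no value; cannot price shares")
            minted = amount * self.total_shares / self.nav
        self.shares[user] = self.shares.get(user, 0.0) + minted
        self.total_shares += minted
        self.nav += amount
        return minted

    def accrue(self, pnl: float) -> None:
        """Book strategy P&L: positive for fees, negative for gas or losses."""
        self.nav += pnl

    def value_of(self, user: str) -> float:
        if self.total_shares == 0:
            return 0.0
        return self.shares.get(user, 0.0) * self.nav / self.total_shares
```

For example, after deposits of 600 and 400, booking a 10-unit gas charge with `accrue(-10.0)` leaves the two holders with 594 and 396.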

- Predictive Modeling: Utilizing historical volatility and order book depth to forecast optimal price bands.

- Cross-Protocol Arbitrage: Identifying discrepancies in fee structures across different decentralized exchanges to maximize returns.

- Hedging Mechanics: Purchasing put options or using perpetual futures to offset the directional risk of the underlying assets.
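For the hedging bullet, a useful property of concentrated-liquidity math is that the position's delta with respect to price equals its token0 balance. The sketch below assumes Uniswap v3-style reserves and a static short in token0 (for example, via a perpetual future) sized at entry; funding payments, fees, and re-hedging are ignored, and all function names are hypothetical.

```python
import math


def _clamped_sqrt(price: float, p_lower: float, p_upper: float) -> float:
    """sqrt(price) clamped to the range's square-root bounds."""
    return min(max(math.sqrt(price), math.sqrt(p_lower)), math.sqrt(p_upper))


def position_value(liquidity: float, price: float, p_lower: float, p_upper: float) -> float:
    """Position value in token1 (price = token0 quoted in token1)."""
    s = _clamped_sqrt(price, p_lower, p_upper)
    x = liquidity * (1.0 / s - 1.0 / math.sqrt(p_upper))  # token0 balance
    y = liquidity * (s - math.sqrt(p_lower))              # token1 balance
    return x * price + y


def hedge_size(liquidity: float, price: float, p_lower: float, p_upper: float) -> float:
    """Short size in token0 that offsets the position's delta.

    dV/dP equals the token0 balance, so shorting that many units of token0
    neutralizes first-order price exposure.
    """
    s = _clamped_sqrt(price, p_lower, p_upper)
    return liquidity * (1.0 / s - 1.0 / math.sqrt(p_upper))


def hedged_pnl(liquidity: float, p0: float, p1: float,
               p_lower: float, p_upper: float) -> float:
    """P&L from p0 to p1 with a static short sized at p0 (funding ignored)."""
    h = hedge_size(liquidity, p0, p_lower, p_upper)
    lp_change = (position_value(liquidity, p1, p_lower, p_upper)
                 - position_value(liquidity, p0, p_lower, p_upper))
    return lp_change - h * (p1 - p0)
```

Because the position is short gamma, `hedged_pnl` is negative for in-range moves in either direction: the hedge removes first-order exposure but not the convexity cost, which fee income must cover.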

Liquidity mining optimization transforms passive asset holding into an active, risk-adjusted trading strategy that profits from trading volume while managing the cost that large price movements impose.

Evolution

The transition from simple yield farming to sophisticated liquidity management marks a maturation of decentralized markets. Initially, protocols rewarded users merely for providing capital, regardless of the utility or efficiency of that liquidity. This led to inflationary token models that often lacked long-term sustainability.

Market participants recognized that liquidity without efficiency is a cost, not an asset. The evolution toward protocol-owned liquidity and user-managed vaults signifies a move toward market-driven incentives. We are now witnessing the integration of complex derivatives, such as interest rate swaps and volatility-indexed products, directly into the liquidity management stack, allowing for more granular risk control.

Horizon

The future points toward fully autonomous, AI-driven liquidity managers that adapt to regime changes without human intervention.

These systems will incorporate macroeconomic indicators and global liquidity cycles into their decision-making process. The goal is to move beyond localized pool optimization toward cross-chain, multi-asset portfolio management that treats liquidity provision as a component of global risk management.

| Development Stage | Focus |
| --- | --- |
| Next Generation | Cross-chain liquidity aggregation |
| Advanced Integration | Embedded derivative hedging within pools |
| Systemic Evolution | Macro-sensitive autonomous rebalancing |

The critical pivot point lies in the ability to bridge the gap between fragmented liquidity pools and institutional-grade risk management tools. As these systems scale, the distinction between professional market making and retail liquidity provision will blur, creating a more robust and efficient decentralized financial infrastructure. What remains the fundamental limit of liquidity provision in an environment where automated agents possess near-perfect information about market depth?