Definition and Systemic Function

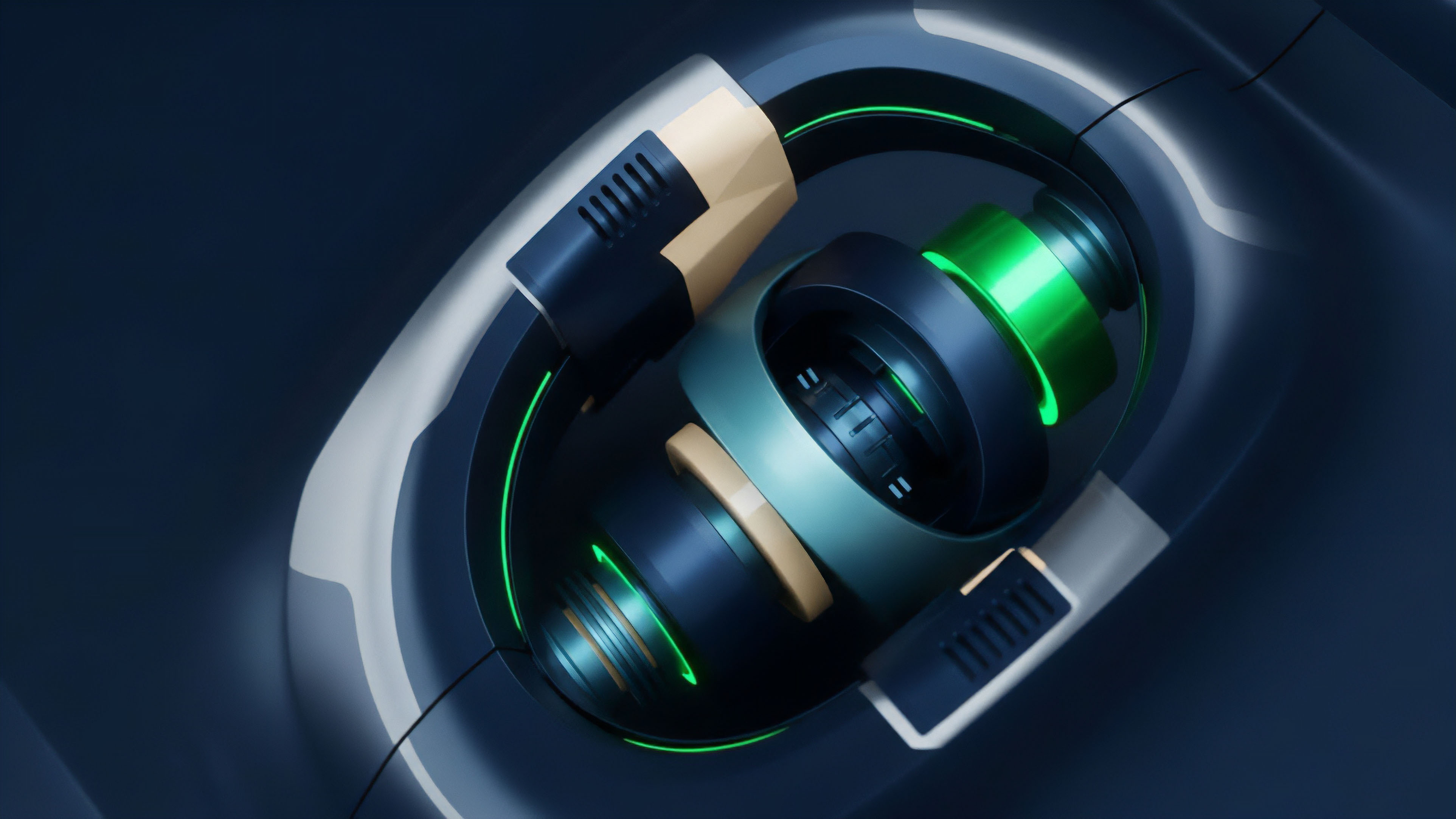

Mathematical precision in the calibration of collateral requirements determines the boundary between sustainable leverage and protocol insolvency. Liquidation Threshold Optimization serves as the structural governor of decentralized margin engines, defining the exact point where a position’s value relative to its debt triggers automated liquidation. This parameter is the primary defense against bad debt accumulation, ensuring that third-party liquidators have sufficient price spread to close underwater positions before they threaten the solvency of the entire lending pool.

The liquidation threshold defines the debt-to-collateral ratio at which a position becomes eligible for automated seizure; it sits above the maximum borrowable ratio set by the loan-to-value limit.

The systemic relevance of Liquidation Threshold Optimization resides in its role as a stabilizer of market microstructure. When thresholds are set too aggressively, leaving little buffer between a position's debt and the value of its collateral, they invite cascading liquidations during periods of high volatility, leading to reflexive selling pressure and price suppression. Conversely, overly conservative thresholds reduce capital efficiency, forcing participants to over-collateralize and limiting the throughput of the credit market.

The objective is to identify a mathematical equilibrium that maximizes credit availability while maintaining a robust safety margin against tail-risk events.

Historical Development of Margin Engines

Margin requirements in traditional finance evolved through centralized regulatory mandates, such as Regulation T in the United States, which established fixed collateral ratios for securities. These systems relied on human intervention and clearinghouses to manage defaults. The transition to digital asset markets necessitated a shift toward algorithmic enforcement, where smart contracts replaced the role of the broker.

Early iterations of decentralized lending used static, high-margin requirements to compensate for the extreme variance of nascent assets.

Early margin systems relied on static ratios that failed to account for real-time liquidity depth.

As the market matured, the need for more responsive Liquidation Threshold Optimization became apparent. The March 2020 market contraction demonstrated that fixed thresholds could not account for oracle latency or sudden liquidity evaporation. This realization prompted a move toward tiered collateral systems and risk-parity models, where the threshold is adjusted based on the specific asset’s volatility profile and the total volume of debt within the system.

This development reflects a broader trend toward the automation of risk management, reducing the reliance on manual governance votes for parameter adjustments.

Mathematical Modeling of Risk Thresholds

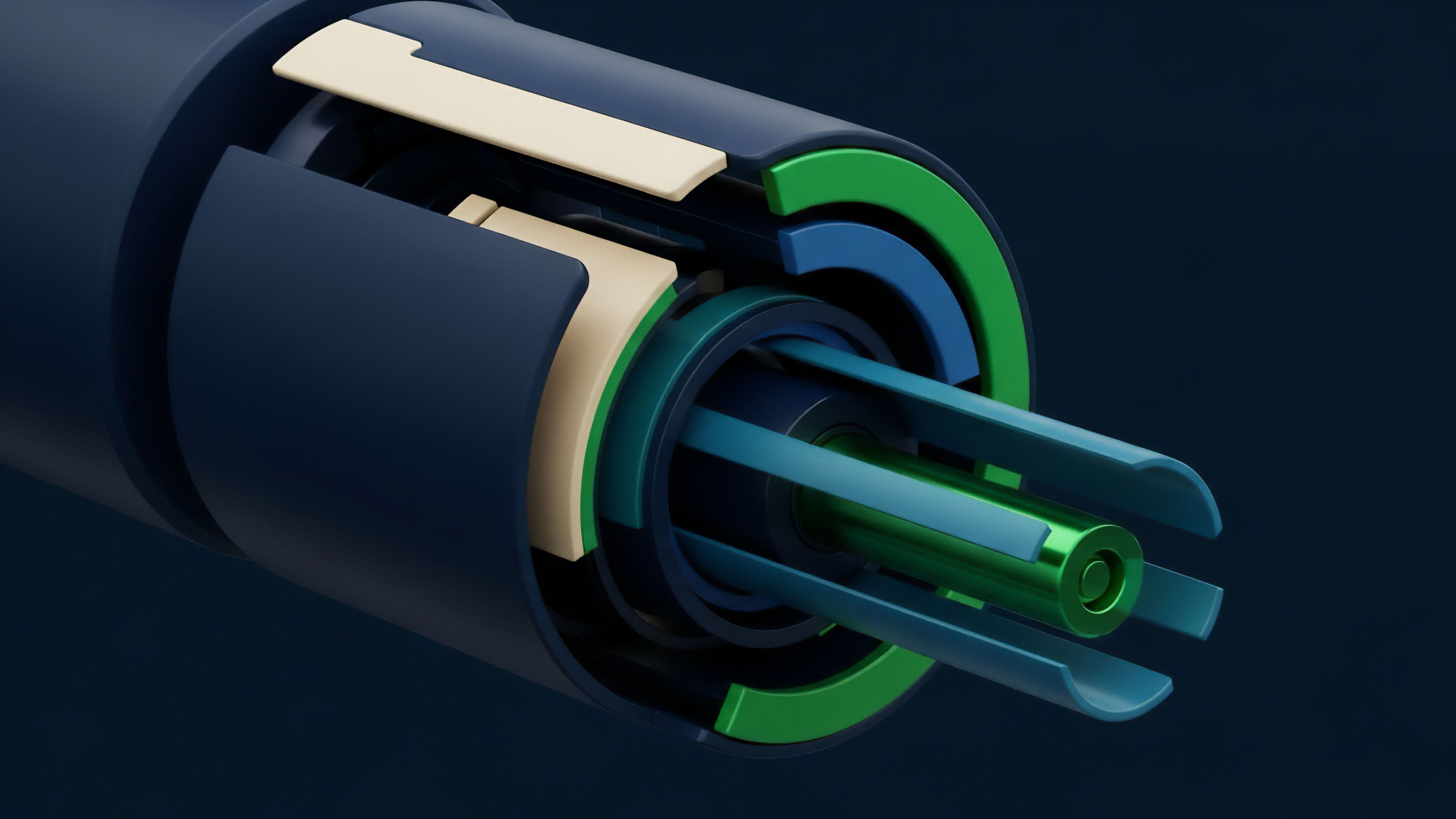

Quantitative analysis of Liquidation Threshold Optimization requires a rigorous application of Value-at-Risk (VaR) and Expected Shortfall (ES) methodologies. The central challenge is modeling the probability that an asset’s price will drop below the liquidation price faster than the system can execute a trade. This involves analyzing the interaction between oracle update frequency, the Liquidation Bonus offered to bots, and the available liquidity on decentralized exchanges.

A rigorous model must account for the slippage incurred during large liquidations, which effectively lowers the realized value of the collateral. By simulating thousands of market scenarios, including fat-tail events and correlated asset crashes, architects can determine the optimal threshold that minimizes the probability of a protocol deficit. This process is not a one-time calculation but a continuous feedback loop that monitors the health of the lending pool.
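The scenario simulation described above can be sketched as a small Monte Carlo estimate. This is a minimal illustration, not a production risk model: the function name `shortfall_probability` and all parameter values are hypothetical, and returns are assumed to be driftless and Gaussian, which understates the fat-tail behaviour a real stress test would need to include.

```python
import math
import random


def shortfall_probability(threshold, daily_vol, delay_hours, bonus, slippage,
                          n_paths=20_000, seed=0):
    """Estimate the chance that liquidation proceeds fail to cover debt plus bonus.

    Assumes the position sits exactly at the liquidation threshold when the
    delay window (oracle lag plus execution time) begins.
    """
    rng = random.Random(seed)
    # Scale daily volatility to the length of the delay window.
    sigma = daily_vol * math.sqrt(delay_hours / 24.0)
    shortfalls = 0
    for _ in range(n_paths):
        # Log-return over the delay window (Gaussian assumption).
        log_ret = rng.gauss(-0.5 * sigma ** 2, sigma)
        # Proceeds per unit of initial collateral value, after slippage.
        proceeds = math.exp(log_ret) * (1.0 - slippage)
        # Debt per unit of initial collateral value, plus the liquidator's bonus.
        owed = threshold * (1.0 + bonus)
        if proceeds < owed:
            shortfalls += 1
    return shortfalls / n_paths


# Compare candidate thresholds under the same simulated scenarios.
for candidate in (0.80, 0.86, 0.92):
    p = shortfall_probability(candidate, daily_vol=0.05, delay_hours=1.0,
                              bonus=0.05, slippage=0.01)
    print(f"threshold {candidate:.2f}: estimated shortfall probability {p:.4f}")
```

Because every candidate is evaluated against the same seeded paths, the estimate never decreases as the threshold rises, which makes the trade-off between capital efficiency and deficit risk easy to read off.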

The relationship between the Loan-to-Value (LTV) ratio and the liquidation threshold is the most sensitive lever in this architecture. A narrow gap between these two values increases capital efficiency but leaves the system vulnerable to oracle manipulation or sudden price gaps. The architecture must also consider the Health Factor of individual positions, which serves as a leading indicator of systemic stress.
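The interplay of LTV, liquidation threshold, and Health Factor can be made concrete with a few lines of arithmetic. The sketch below assumes the commonly used definition of the Health Factor as threshold-weighted collateral value divided by debt, with values below 1.0 eligible for liquidation; the function names and figures are illustrative and not taken from any specific protocol.

```python
def health_factor(collateral_value, debt_value, liquidation_threshold):
    """Threshold-weighted collateral divided by debt; below 1.0 is liquidatable."""
    if debt_value <= 0:
        return float("inf")  # no debt, nothing to liquidate
    return collateral_value * liquidation_threshold / debt_value


def liquidation_price(collateral_amount, debt_value, liquidation_threshold):
    """Collateral price at which the health factor reaches exactly 1.0."""
    return debt_value / (collateral_amount * liquidation_threshold)


def price_drop_buffer(max_ltv, liquidation_threshold):
    """Fractional price fall that a position opened at the maximum LTV can absorb."""
    return 1.0 - max_ltv / liquidation_threshold


# Hypothetical position: 10 units of collateral priced at 2,000, with 12,000 of debt.
hf = health_factor(collateral_value=20_000, debt_value=12_000, liquidation_threshold=0.80)
liq_px = liquidation_price(collateral_amount=10, debt_value=12_000, liquidation_threshold=0.80)
buffer = price_drop_buffer(max_ltv=0.75, liquidation_threshold=0.80)
print(f"health factor {hf:.3f}, liquidation price {liq_px:.0f}, buffer {buffer:.2%}")
```

In this example the health factor is about 1.333 and the position becomes liquidatable at a collateral price of 1,500. With a maximum LTV of 75% and a threshold of 80%, a borrower who draws the full limit is liquidated after a price fall of only 6.25%, which illustrates how sensitive the gap between the two parameters is.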

| Variable | Impact on Threshold | Systemic Significance |
|---|---|---|
| Asset Volatility | Inverse Correlation | Higher variance requires lower thresholds to maintain safety. |
| Liquidity Depth | Direct Correlation | Deeper markets allow for higher thresholds due to lower slippage. |
| Oracle Latency | Inverse Correlation | Slow price updates necessitate larger safety margins. |

The mathematical gap between the borrow limit and the liquidation point determines the protocol safety margin.

Contemporary Execution in Decentralized Finance

Current methodologies for Liquidation Threshold Optimization utilize sophisticated off-chain simulations and on-chain risk controllers. Protocols like Aave and Compound employ risk managers who use agent-based modeling to stress-test parameters under various market conditions. These simulations analyze how different thresholds perform when faced with liquidity crunches or malicious price manipulation.

The execution involves a combination of governance-led adjustments and automated risk controllers that can adjust parameters in real-time based on market data.

- Oracle Heartbeat frequency determines how quickly the system reacts to price changes, directly influencing the required threshold.

- Liquidation Bonus structures must be calibrated to ensure that liquidators remain profitable even during periods of high gas prices.

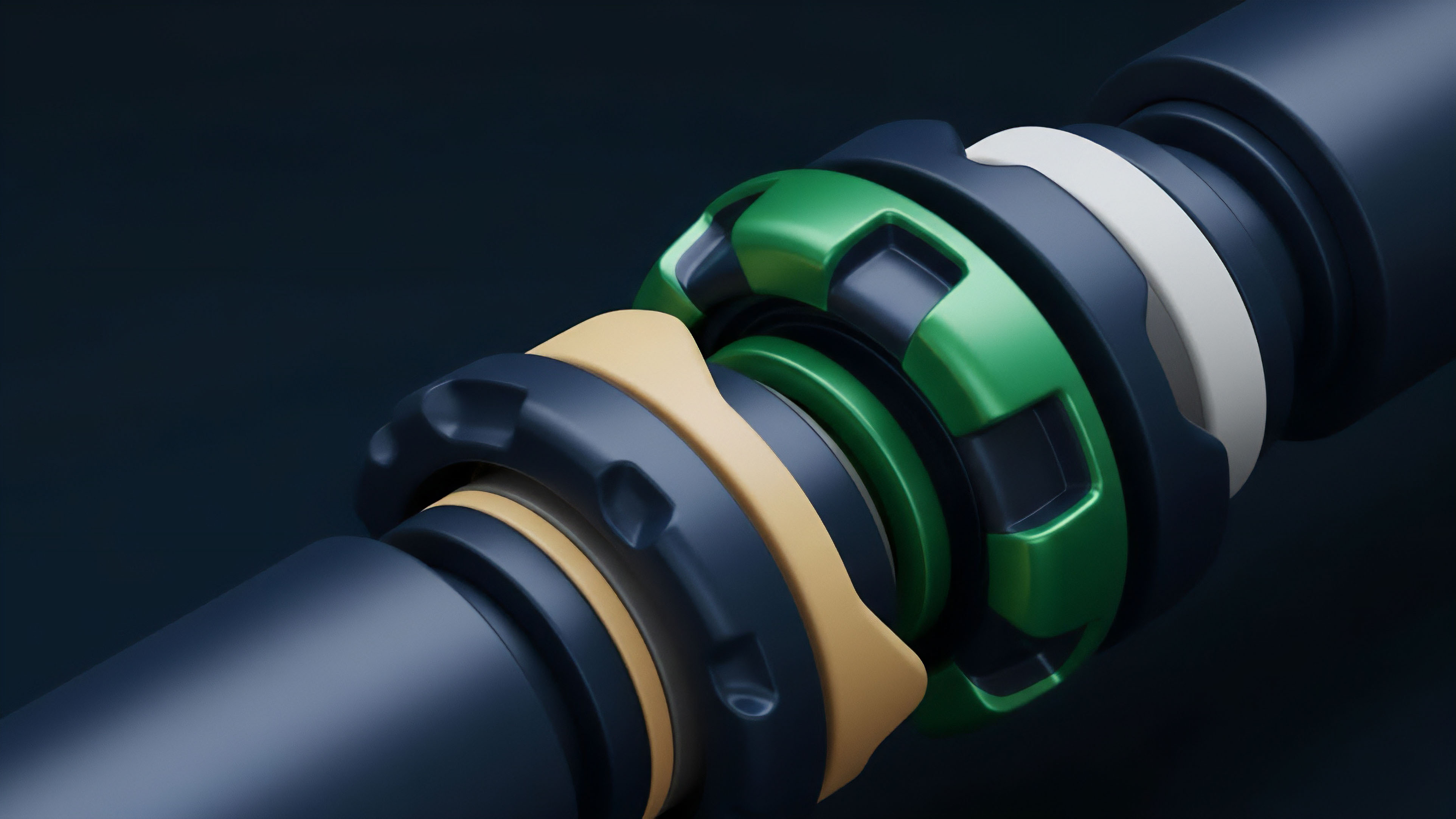

- Cross-Tier Collateralization allows for different thresholds based on the risk profile of the asset being used as backing.

- Bad Debt Socialization mechanisms act as a final backstop if the optimized thresholds fail to prevent insolvency.

The adversarial nature of the crypto market means that Liquidation Threshold Optimization must also account for MEV (Maximal Extractable Value). Liquidators often compete in priority gas auctions to be the first to close a position, and the collateral buffer implied by the threshold, together with the liquidation bonus, must be large enough to absorb these costs. If that buffer is too thin, the profit margin for liquidators disappears, leading to a failure of the liquidation mechanism and the accumulation of toxic debt within the protocol.
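The liquidator's profit condition can be sketched as simple arithmetic. The model below is an assumption for illustration: the liquidator repays debt, receives collateral worth the repaid amount plus the bonus, sells it with proportional slippage, and pays a flat gas cost (which would include any priority-auction bid). The function names and numbers are hypothetical.

```python
import math


def liquidator_profit(debt_repaid, bonus, gas_cost, slippage):
    """Net profit from one liquidation, in the same currency as the debt."""
    collateral_received = debt_repaid * (1.0 + bonus)
    sale_proceeds = collateral_received * (1.0 - slippage)
    return sale_proceeds - debt_repaid - gas_cost


def min_profitable_debt(bonus, gas_cost, slippage):
    """Smallest repayment size at which the liquidation breaks even."""
    edge = (1.0 + bonus) * (1.0 - slippage) - 1.0  # net margin per unit repaid
    if edge <= 0:
        return math.inf  # slippage consumes the whole bonus; never profitable
    return gas_cost / edge


profit = liquidator_profit(debt_repaid=10_000, bonus=0.05, gas_cost=50, slippage=0.01)
break_even = min_profitable_debt(bonus=0.05, gas_cost=50, slippage=0.01)
print(f"profit {profit:.2f}, break-even repayment {break_even:.2f}")
```

Under these assumptions, positions smaller than the break-even size are unattractive to liquidators when gas is expensive, which is one route by which small amounts of bad debt can accumulate.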

Structural Transformations in Liquidation Logic

The logic governing liquidations has shifted from simple binary triggers to complex, multi-stage auctions.

This evolution addresses the problem of “liquidation cascades,” where a single large liquidation triggers a price drop that causes further liquidations. Modern Liquidation Threshold Optimization often includes Dutch Auctions, where the asking price for the seized collateral starts high and decreases over time, so the liquidator's discount starts small and grows until a bidder accepts. This lets the market find the most efficient price for the collateral, reduces the impact on the underlying asset price, and provides a more stable environment for borrowers.
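A descending-price auction of this kind can be sketched as follows. This is a minimal illustration that assumes a linear decay from a starting price above the oracle price down to a floor; real protocols use their own decay curves and parameters, and the names `dutch_auction_price` and `implied_discount` are hypothetical.

```python
def dutch_auction_price(start_price, floor_price, elapsed, duration):
    """Asking price for the collateral after `elapsed` seconds of the auction."""
    if elapsed <= 0:
        return start_price
    if elapsed >= duration:
        return floor_price
    fraction_elapsed = elapsed / duration
    return start_price - (start_price - floor_price) * fraction_elapsed


def implied_discount(ask_price, oracle_price):
    """Discount to the oracle price that a bidder receives at the current ask."""
    return 1.0 - ask_price / oracle_price


# Hypothetical auction: oracle price 2,000, start 10% above it, floor at 1,600, 10 minutes.
for t in (0, 150, 300, 450, 600):
    ask = dutch_auction_price(start_price=2_200, floor_price=1_600, elapsed=t, duration=600)
    print(f"t={t:>3}s ask={ask:.0f} discount={implied_discount(ask, 2_000):+.1%}")
```

The first liquidator whose required discount is met takes the collateral, so the realized discount is set by competition rather than fixed in advance.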

| Mechanism Phase | Description | Risk Mitigation Level |
|---|---|---|
| Fixed Penalty | Static percentage fee paid to the liquidator. | Low – Leads to price dumping. |
| Tiered Thresholds | Thresholds vary by asset and position size. | Medium – Better capital allocation. |
| Variable Auctions | Collateral price is discovered through an auction process. | High – Minimizes market impact. |

Variable auction mechanisms allow the market to price liquidation risk more accurately than fixed penalties.

Another significant shift is the move toward Isolation Mode for new or high-risk assets. In this model, Liquidation Threshold Optimization is applied to specific asset pairs rather than the entire pool, preventing a single volatile asset from contaminating the rest of the protocol. This compartmentalization of risk is a major advancement in the architecture of decentralized lending, allowing for the inclusion of a wider range of assets without compromising the safety of the central liquidity reserves.

Future Architectures for Capital Efficiency

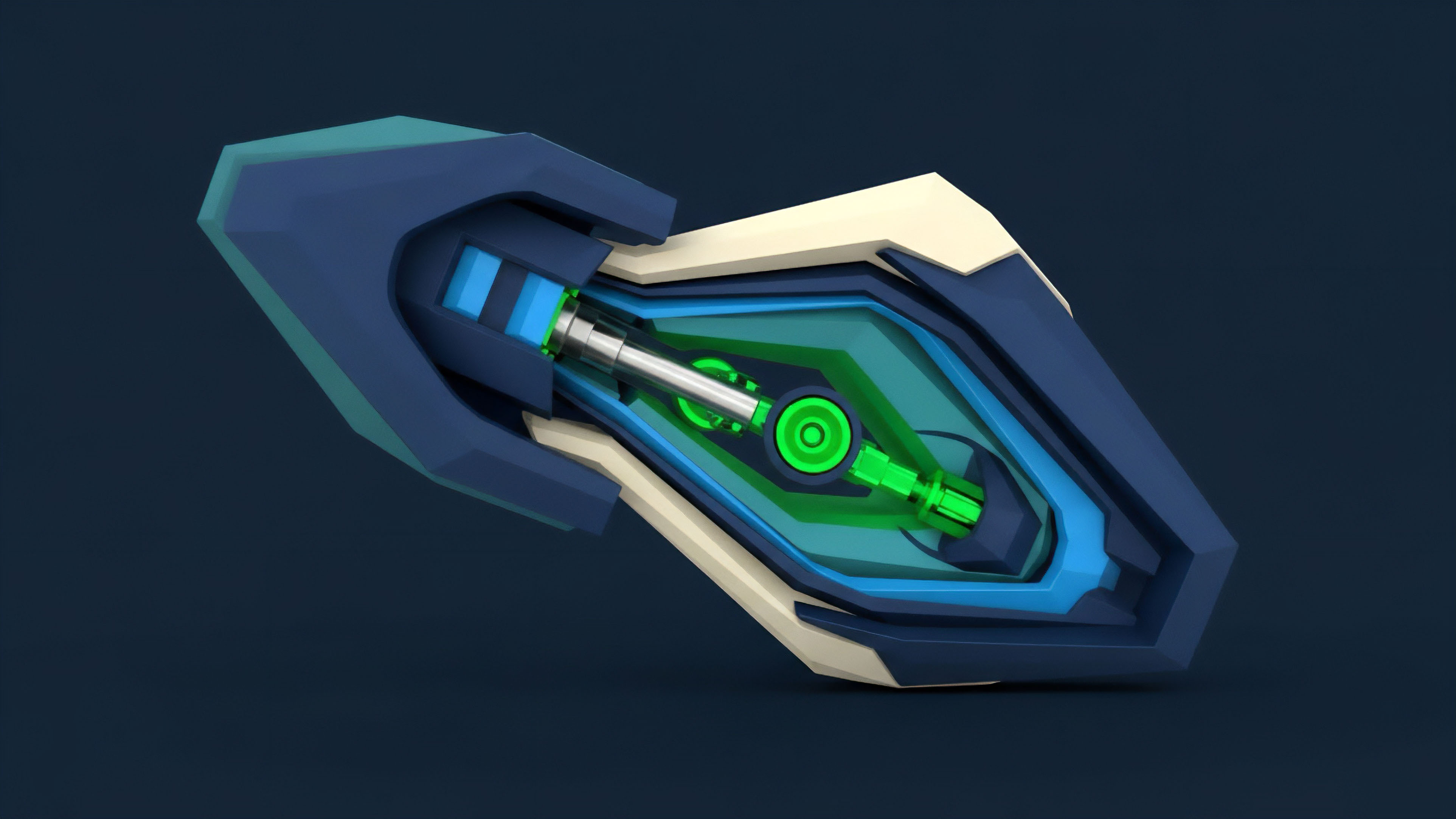

The future of Liquidation Threshold Optimization lies in the integration of zero-knowledge proofs and machine learning.

These technologies will allow for more granular and private risk assessments, potentially enabling under-collateralized lending in a decentralized context. By using ZK-proofs, borrowers could prove their creditworthiness or the health of their broader portfolio without revealing their specific holdings, allowing for more aggressive thresholds for trusted participants.

- Predictive Risk Engines will use machine learning to anticipate market volatility and adjust thresholds before a crash occurs.

- Zero Knowledge Solvency proofs will allow protocols to verify their total backing without exposing sensitive trade data.

- Cross-Chain Margin will enable the optimization of thresholds across multiple networks, pooling liquidity and risk.

- Dynamic Insurance Funds will automatically adjust their fee structures based on the real-time risk profile of the lending pool.

The ultimate goal is a self-healing financial system where Liquidation Threshold Optimization happens autonomously, without the need for human governance. In this terminal state, the protocol acts as a living organism, constantly sensing market conditions and adjusting its internal parameters to maintain a perfect balance between capital efficiency and systemic resilience. This will lead to a more robust and efficient global financial operating system, capable of withstanding extreme shocks while providing maximum utility to its participants.
