Essence

Liquidation Threshold Calibration is the quantitative process of setting the loan-to-value ratio at which a collateralized position becomes subject to forced closure. This parameter serves as the primary defense mechanism for decentralized lending protocols and derivative engines, protecting the solvency of the system against rapid declines in collateral value.

Liquidation threshold calibration defines the critical boundary where collateral value fails to secure outstanding debt obligations.

The process involves balancing capital efficiency against systemic risk. Setting the threshold too high encourages leverage but invites insolvency risk during market volatility. Setting it too low restricts utility and prevents optimal capital deployment.
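As a minimal sketch of this tradeoff, assume a single-collateral position whose loan-to-value ratio is outstanding debt divided by the collateral's market value, and which becomes liquidatable once that ratio reaches the threshold. The names `Position`, `loan_to_value`, `is_liquidatable`, `price_drop_to_liquidation`, and `insolvency_buffer` are hypothetical. They are not taken from any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_amount: float  # units of the collateral asset
    debt: float               # outstanding debt in the quote currency


def loan_to_value(position: Position, price: float) -> float:
    """Outstanding debt divided by the current market value of the collateral."""
    return position.debt / (position.collateral_amount * price)


def is_liquidatable(position: Position, price: float, threshold: float) -> bool:
    """True once the loan-to-value ratio has reached the liquidation threshold."""
    return loan_to_value(position, price) >= threshold


def price_drop_to_liquidation(position: Position, price: float, threshold: float) -> float:
    """Fractional price decline the position can absorb before reaching the threshold."""
    return max(0.0, 1.0 - loan_to_value(position, price) / threshold)


def insolvency_buffer(threshold: float) -> float:
    """Further fractional decline, measured from the liquidation price,
    before the debt exceeds the collateral value."""
    return 1.0 - threshold
```

Consider 10 units of collateral priced at 100 against 600 of debt, which gives a ratio of 0.6. With a 0.75 threshold, the position can absorb a 20% price drop before liquidation. After that point, the collateral can fall a further 25% before the debt exceeds its value. Raising the threshold to 0.9 extends the first cushion to about 33% but shrinks the second to 10%. That second cushion is the window within which liquidation must complete.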

This calibration acts as a synthetic circuit breaker, preventing the accumulation of bad debt that would otherwise destabilize the protocol.

Origin

The necessity for Liquidation Threshold Calibration emerged from the limitations of early decentralized lending experiments. Initial protocols relied on static, overly conservative ratios that failed to account for the unique volatility profiles of crypto assets. Developers observed that standard financial models, designed for traditional assets with predictable settlement times, were insufficient for the twenty-four-hour, high-frequency nature of digital asset markets.

- Collateral Volatility: Early systems struggled with rapid price swings that rendered static thresholds obsolete within minutes.

- Latency Risks: Blockchain finality times created windows of vulnerability where positions could theoretically become undercollateralized before a liquidation event could execute.

- Incentive Alignment: The need to attract liquidators required a system where the threshold was predictable enough to allow for profitable, automated execution.

These early challenges forced a shift toward dynamic modeling. Protocols began incorporating data feeds from decentralized oracles to adjust thresholds based on realized and implied volatility, moving away from rigid, manual updates.

Theory

The architecture of Liquidation Threshold Calibration relies on the interaction between risk sensitivity and market liquidity. Quantitative models must estimate the probability that a position becomes undercollateralized within a specific time horizon, typically tied to the protocol’s liquidation delay or oracle update frequency.
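One way to make this probability concrete uses the reflection-principle formula P = 2 * Phi(ln(1 - d) / (sigma * sqrt(T))). Here d is the fractional price drop that would push the position to its threshold and T is the horizon in years. The formula assumes that log-prices follow a driftless Brownian motion with constant annualized volatility and that the price is monitored continuously. The function name and the model are assumptions for illustration. Crypto returns have fatter tails than this Gaussian model, so it likely understates tail risk.

```python
import math
from statistics import NormalDist


def prob_hit_threshold(distance: float, sigma_annual: float, horizon_years: float) -> float:
    """Probability that the collateral price falls by at least `distance`
    (a fraction, e.g. 0.05 for 5%) at any point within `horizon_years`.

    Assumes a driftless Brownian motion in log-price with annualized volatility
    `sigma_annual`, monitored continuously; by the reflection principle,
    P = 2 * Phi(ln(1 - distance) / (sigma * sqrt(T)))."""
    if distance <= 0.0:
        return 1.0  # the position is already at or past its threshold
    if distance >= 1.0:
        return 0.0  # a log-normal price never reaches zero
    scale = sigma_annual * math.sqrt(horizon_years)
    z = math.log(1.0 - distance) / scale  # negative, so the result is <= 1
    return 2.0 * NormalDist().cdf(z)
```

Setting `horizon_years` to the liquidation delay or the oracle update interval shows how latency enters the calibration. At 80% annualized volatility, a position 5% away from its threshold has roughly a one-in-five chance of crossing it within a day. Within an hour, the chance is vanishingly small.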

Quantitative Framework

The calibration is often modeled using a variation of the Value at Risk framework, adjusted for the non-linear dynamics of crypto derivatives.
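The following is a simplified, hypothetical sketch of the Value at Risk step. It first estimates the historical VaR of the collateral price over the liquidation horizon. It then caps the threshold so that a position liquidated exactly at the threshold still covers its debt after a VaR-sized decline, that is, threshold / (1 - VaR) <= 1. This plain historical VaR omits the non-linear adjustments mentioned above, and the function names are illustrative.

```python
import math
from typing import Sequence


def historical_var(prices: Sequence[float], horizon: int, confidence: float) -> float:
    """Fractional price decline over `horizon` observations that is exceeded
    in at most (1 - confidence) of the historical sample."""
    if horizon < 1 or len(prices) <= horizon:
        raise ValueError("need more price observations than the horizon length")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must lie strictly between 0 and 1")
    # Overlapping horizon returns expressed as losses (positive = price fell).
    losses = sorted(
        1.0 - prices[i + horizon] / prices[i] for i in range(len(prices) - horizon)
    )
    # Empirical quantile at the requested confidence level.
    idx = min(len(losses) - 1, math.ceil(confidence * len(losses)) - 1)
    return max(0.0, losses[idx])


def threshold_ceiling(var_loss: float) -> float:
    """Highest threshold for which threshold / (1 - var_loss) <= 1, i.e. a position
    liquidated exactly at the threshold still covers its debt after the VaR decline."""
    return 1.0 - var_loss
```

For example, daily prices with `horizon=1` and `confidence=0.99` give a one-day 99% VaR. A VaR of 20% would cap the threshold at 0.8.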

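| Parameter | Effect on Threshold Calibration |
|---|---|
| Asset Volatility | Inversely related to threshold safety: higher volatility makes a given threshold less safe |
| Market Depth | Directly related to liquidation capacity: deeper markets absorb larger liquidations |
| Oracle Latency | Inversely related to threshold responsiveness: slower price updates make the threshold react later to price moves |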

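In effect, the threshold is a cutoff on the distribution of possible collateral price outcomes. It is set so that positions are closed while the probability of insolvency is still small, keeping losses on individual positions from draining protocol liquidity.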

Because prices evolve stochastically, this protection is probabilistic rather than guaranteed. When a position’s loan-to-value ratio rises above the threshold, typically because the collateral price has fallen, the Liquidation Engine triggers an auction or a direct sale of the collateral. The efficacy of this mechanism depends on the slippage experienced during the sale. If slippage pushes the sale proceeds below the outstanding debt, the protocol incurs bad debt.
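The slippage effect can be sketched with a deliberately simple, hypothetical price-impact model. In this model, average slippage grows linearly with the size of the sale relative to available market depth. The function `liquidation_shortfall` and its `impact_coefficient` parameter are illustrative. So is the assumption that all collateral is sold at once. None of this describes how any particular Liquidation Engine works.

```python
def liquidation_shortfall(
    collateral_amount: float,
    price: float,
    debt: float,
    depth_notional: float,
    impact_coefficient: float = 0.5,
) -> tuple[float, float]:
    """Return (sale proceeds, bad debt) when all collateral is sold at once.

    Hypothetical impact model: average slippage is proportional to the sale size
    relative to the quote-currency depth available, capped at 100%."""
    sale_notional = collateral_amount * price
    slippage = min(1.0, impact_coefficient * sale_notional / depth_notional)
    proceeds = sale_notional * (1.0 - slippage)
    # Any debt the proceeds cannot repay becomes bad debt for the protocol.
    bad_debt = max(0.0, debt - proceeds)
    return proceeds, bad_debt
```

Take 800 of collateral against 600 of debt. Selling into 10,000 of depth costs about 4% in this model and clears the debt. Selling into only 1,000 of depth costs 40% and leaves 120 of bad debt.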

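This necessitates a buffer between the threshold and the point of insolvency, large enough to fund the Liquidation Penalty (sometimes called a liquidation bonus). The penalty is a discount on the seized collateral that incentivizes third-party liquidators to act swiftly even during periods of extreme market stress.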
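The penalty's role can be sketched as follows. Assume a liquidator repays part of the debt and receives collateral worth that amount plus the penalty at the oracle price. The sketch also shows why the penalty constrains calibration. For collateral to cover both the debt and the penalty after a decline of `var_loss`, the threshold must satisfy threshold <= (1 - var_loss) / (1 + penalty). All names are hypothetical.

```python
def seize_collateral(repay: float, price: float, penalty: float) -> float:
    """Units of collateral a liquidator receives for repaying `repay` of debt,
    valued at the oracle `price` and marked up by the liquidation `penalty`."""
    return repay * (1.0 + penalty) / price


def liquidator_profit(repay: float, price: float, penalty: float, exit_slippage: float) -> float:
    """Liquidator's profit after selling the seized collateral with fractional `exit_slippage`."""
    seized_value = seize_collateral(repay, price, penalty) * price
    return seized_value * (1.0 - exit_slippage) - repay


def max_threshold(penalty: float, var_loss: float = 0.0) -> float:
    """Largest threshold for which collateral still covers debt plus penalty
    after a fractional decline of `var_loss`: t <= (1 - var_loss) / (1 + penalty)."""
    return (1.0 - var_loss) / (1.0 + penalty)
```

With a 5% penalty, a liquidator earns 5 on a 100 repayment if the seized collateral can be sold without slippage. The liquidator loses money once exit slippage exceeds roughly 4.8% (1 - 1/1.05). This is why adequate penalties and deep markets go together.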

Approach

Modern implementations of Liquidation Threshold Calibration use multi-factor risk engines that ingest real-time data. Protocols no longer rely on single static values. Instead, they deploy algorithmic adjustments that respond to shifting market regimes.

- Realized Volatility Analysis: Measuring historical price movements over specific look-back periods to determine current threshold buffers.

- Correlation Stress Testing: Assessing how collateral assets behave in relation to the broader market during systemic liquidations.

- Liquidity Depth Monitoring: Evaluating order book density on major exchanges to ensure that a large-scale liquidation can be absorbed without causing a price cascade.

This approach acknowledges that market conditions are rarely static. By automating the adjustment process, protocols reduce their reliance on governance intervention, which is often too slow to react to flash crashes or liquidity voids. The calibration is a continuous, feedback-driven process that aims to minimize the Systemic Exposure of the protocol while maximizing the user’s ability to maintain a position.
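A minimal sketch of such a feedback loop rests on two assumptions. First, the target threshold is derived from a parametric VaR, with log returns assumed normal with zero mean, combined with the liquidation penalty. Second, the live threshold moves toward that target by at most a fixed step per update. The step limit is a design choice for this sketch. Lowering a threshold abruptly can push existing positions straight into liquidation, and bounded steps also limit the damage from a single manipulated data point. All names and parameter values are hypothetical.

```python
import math
from statistics import NormalDist


def target_threshold(
    sigma_annual: float, horizon_years: float, confidence: float, penalty: float
) -> float:
    """Threshold at which collateral still covers debt plus penalty after a
    parametric-VaR decline (zero-mean normal log returns assumed)."""
    z = NormalDist().inv_cdf(confidence)
    var_loss = 1.0 - math.exp(-z * sigma_annual * math.sqrt(horizon_years))
    return (1.0 - var_loss) / (1.0 + penalty)


def next_threshold(
    current: float, target: float, max_step: float, floor: float, cap: float
) -> float:
    """Move the live threshold toward the target by at most `max_step`,
    then clamp it to the governance-approved range [floor, cap]."""
    step = max(-max_step, min(max_step, target - current))
    return max(floor, min(cap, current + step))


if __name__ == "__main__":
    live = 0.85
    for sigma in (0.6, 0.9, 1.5):  # hypothetical volatility regimes
        target = target_threshold(sigma, 1 / 365, 0.99, 0.05)
        live = next_threshold(live, target, max_step=0.02, floor=0.5, cap=0.9)
        print(f"sigma={sigma:.1f} target={target:.3f} live={live:.3f}")
```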

Evolution

The path from simple, fixed-ratio models to sophisticated, automated systems reflects the maturation of decentralized finance.

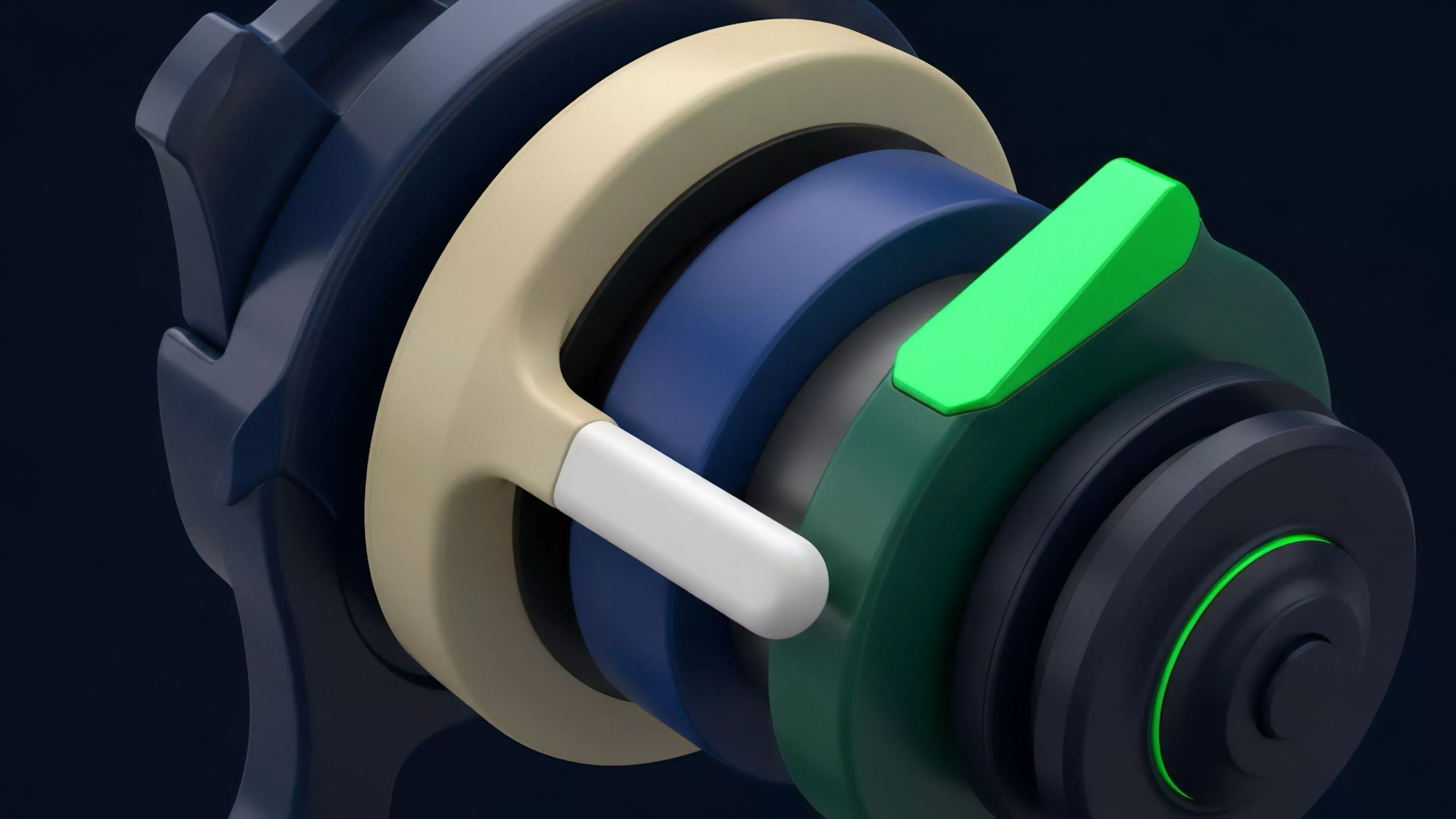

Early iterations were manual, requiring a governance vote for every parameter change, which proved too slow for modern trading. Current designs use Modular Risk Engines that separate the threshold calculation from the core lending logic. This allows specialized risk parameters for different asset classes, recognizing that a stablecoin requires a different calibration than a volatile governance token.
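A minimal sketch of that separation uses a registry that stores per-asset risk parameters, while the lending code only reads from it. The health factor here is collateral value times threshold divided by debt. A value below 1 is therefore equivalent to the loan-to-value ratio exceeding the threshold. The class names, asset labels, and parameter values are purely illustrative, not recommendations.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class RiskParams:
    liquidation_threshold: float  # maximum loan-to-value ratio before liquidation
    liquidation_penalty: float    # markup paid to liquidators on repaid debt


class RiskRegistry:
    """Stores per-asset risk parameters; written by a risk module, read by lending logic."""

    def __init__(self) -> None:
        self._params: Dict[str, RiskParams] = {}

    def set_params(self, asset: str, params: RiskParams) -> None:
        # Reject settings where seized collateral could not cover debt plus penalty
        # even with no further price decline: threshold * (1 + penalty) must be <= 1.
        if not 0.0 < params.liquidation_threshold * (1.0 + params.liquidation_penalty) <= 1.0:
            raise ValueError(f"inconsistent risk parameters for {asset}")
        self._params[asset] = params

    def get(self, asset: str) -> RiskParams:
        return self._params[asset]


def health_factor(registry: RiskRegistry, asset: str, collateral_value: float, debt: float) -> float:
    """Core lending logic: reads the threshold from the registry but never computes it."""
    if debt == 0:
        return float("inf")
    return collateral_value * registry.get(asset).liquidation_threshold / debt


# Illustrative parameters only: a stablecoin tolerates a higher threshold than a volatile token.
registry = RiskRegistry()
registry.set_params("STABLE", RiskParams(liquidation_threshold=0.90, liquidation_penalty=0.04))
registry.set_params("GOV", RiskParams(liquidation_threshold=0.65, liquidation_penalty=0.10))
```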

Sometimes I consider the parallel to military logistics: the supply chain of collateral must be as resilient as the front line of the order book. The shift toward decentralized, trustless oracles has also changed the game, providing the high-fidelity data needed to justify tighter, more efficient thresholds. We are moving toward a future where thresholds are not just calculated but predictive, anticipating volatility before it reaches the order books.

Horizon

The future of Liquidation Threshold Calibration lies in the integration of machine learning models that can predict liquidity exhaustion events.

Protocols will likely transition to Predictive Thresholds, which adjust in real time based on market sentiment, on-chain volume, and derivative skew.

Predictive calibration will allow protocols to preemptively tighten thresholds during periods of rising systemic risk.

Future architectures will emphasize Cross-Protocol Liquidity Sharing, where the liquidation threshold of one protocol is informed by the risk state of others. This interconnectedness will enhance overall system stability, preventing isolated failures from propagating. The focus will shift from simple collateral maintenance to a holistic management of Systemic Contagion Risk, ensuring that the liquidation of one position strengthens rather than weakens the broader financial architecture.