Essence

Liquidation Protocol Verification functions as the cryptographic and mathematical audit layer that keeps decentralized lending and derivatives platforms solvent during periods of extreme volatility. It comprises the procedures, oracle interactions, and smart contract logic that trigger the orderly disposal of under-collateralized positions to prevent systemic collapse. For protocols that issue synthetic assets, this process also underpins the peg between those assets and their underlying collateral, making it the heartbeat of decentralized risk management.

Liquidation Protocol Verification is the automated enforcement mechanism ensuring collateral sufficiency within decentralized financial architectures.

The integrity of these systems depends on the precise enforcement of liquidation thresholds: a position becomes eligible for liquidation when the value of a user’s locked assets falls below the required maintenance margin. Without robust verification, protocols face insolvency risk, because bad debt accumulates when collateral cannot be liquidated fast enough to cover outstanding liabilities. The architecture therefore requires high-frequency data validation so that liquidators act on accurate market prices rather than stale or manipulated price feeds.
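The threshold check and the stale-price guard described above can be sketched as follows. This is a minimal illustration rather than any particular protocol's implementation: the `Position` and `PriceReport` types, the 1.5 maintenance ratio, and the 60-second freshness window are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    collateral_amount: float  # units of the collateral asset (hypothetical field)
    debt_value: float         # outstanding debt, in the quote currency


@dataclass(frozen=True)
class PriceReport:
    price: float      # quote-currency price of one collateral unit
    timestamp: float  # Unix time at which the price was observed


class StalePriceError(Exception):
    """Raised when the price report is too old to act on."""


def is_liquidatable(
    position: Position,
    report: PriceReport,
    now: float,
    maintenance_ratio: float = 1.5,  # assumed value, not a protocol constant
    max_age_seconds: float = 60.0,   # assumed freshness window
) -> bool:
    """Return True if collateral value / debt is below the maintenance ratio."""
    if now - report.timestamp > max_age_seconds:
        raise StalePriceError("price report is older than the freshness window")
    if position.debt_value <= 0:
        return False  # nothing borrowed, nothing to liquidate
    collateral_value = position.collateral_amount * report.price
    return collateral_value / position.debt_value < maintenance_ratio
```

Production contracts typically use fixed-point integer arithmetic rather than floats; floats are used here only to keep the sketch short.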

Origin

Liquidation Protocol Verification originated in the early development of collateralized debt positions within decentralized finance, driven by the need to replicate traditional margin call systems without centralized intermediaries.

Initial iterations relied on simple, on-chain price feeds, which proved insufficient during sudden market downturns. The industry shifted toward more sophisticated, multi-source oracle aggregators to mitigate the risk of price manipulation, which had historically led to massive, unintended liquidations.

- Collateralization Ratios established the foundational mathematical requirement for debt issuance.

- Oracle Decentralization addressed the single point of failure inherent in early price feed architectures.

- Liquidation Auctions provided a competitive mechanism for disposing of distressed assets while minimizing slippage.

Early protocols faced significant challenges regarding latency arbitrage, where participants exploited the time delay between off-chain price movements and on-chain liquidation execution. This led to the development of more complex verification logic that incorporates gas price optimization and multi-step validation to ensure that liquidators are properly incentivized to act even during network congestion. The evolution of this field reflects a continuous struggle to balance capital efficiency with extreme safety parameters.

Theory

The theoretical framework governing Liquidation Protocol Verification is rooted in quantitative finance, specifically the modeling of stochastic volatility and jump-diffusion processes.

Protocols must define a precise Liquidation Penalty that balances the need to incentivize liquidators with the desire to minimize the impact on the borrower. The mathematical model assumes that asset prices can move faster than the network can process transactions, necessitating a conservative buffer in the form of over-collateralization.

Mathematical modeling of liquidation thresholds requires balancing incentive compatibility for liquidators against the protection of borrower equity.
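A short worked example may help make this trade-off concrete. The sketch below assumes a simple model in which the liquidator repays part of the debt and seizes collateral worth the repaid amount plus a proportional penalty; the function names and the sample numbers are illustrative assumptions, not values from any specific protocol.

```python
def max_price_drop(collateral_ratio: float, liquidation_ratio: float) -> float:
    """Fraction the collateral price can fall before the position
    reaches the liquidation ratio (0.0 if it is already there)."""
    if collateral_ratio <= liquidation_ratio:
        return 0.0
    return 1.0 - liquidation_ratio / collateral_ratio


def collateral_seized(debt_repaid: float, penalty: float) -> float:
    """Collateral value handed to the liquidator for repaying debt_repaid."""
    return debt_repaid * (1.0 + penalty)


def borrower_equity_after(
    collateral_value: float,
    debt_value: float,
    debt_repaid: float,
    penalty: float,
) -> float:
    """Borrower equity (collateral minus debt) once the liquidation settles."""
    remaining_collateral = collateral_value - collateral_seized(debt_repaid, penalty)
    remaining_debt = debt_value - debt_repaid
    return remaining_collateral - remaining_debt


# A position opened at 200% collateralization with a 150% liquidation ratio
# tolerates a 25% price drop before becoming liquidatable.
buffer = max_price_drop(collateral_ratio=2.0, liquidation_ratio=1.5)

# Repaying 500 of debt at a 10% penalty costs the borrower 50 of equity:
# equity goes from 1400 - 1000 = 400 to roughly 350.
equity = borrower_equity_after(1400.0, 1000.0, debt_repaid=500.0, penalty=0.10)
```

Under this model the borrower's loss equals the penalty times the repaid debt, which is exactly the amount the liquidator earns before costs. That is why the penalty is a direct transfer from borrower equity to liquidator incentive.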

Risk Sensitivity Parameters

| Parameter | Functional Role |
| --- | --- |
| Liquidation Threshold | The collateral-to-debt ratio triggering the liquidation event |
| Maintenance Margin | The minimum buffer required to keep a position active |
| Liquidation Bonus | The incentive premium provided to liquidators for covering bad debt |

The game theory underlying these systems involves self-interested participants, the liquidators, who monitor the chain for under-collateralized accounts. The protocol design must ensure that rational, profit-seeking behavior by these actors also serves the system, so that they effectively act as its janitors. If the liquidation incentive is too low, liquidators have no reason to act and the system risks insolvency; if it is too high, the borrower bears an excessive loss on every liquidation.

The verification logic must therefore be dynamic, adjusting to market conditions to ensure that the cost of liquidation does not exceed the value of the recovered assets.
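The incentive condition can be written as a simple profitability check. The sketch below is an assumption-laden model, not a protocol formula: it treats gas as a fixed cost in the quote currency and applies a proportional slippage haircut when the liquidator sells the seized collateral.

```python
def liquidator_profit(
    debt_repaid: float,
    bonus: float,
    gas_cost: float,
    slippage: float = 0.0,
) -> float:
    """Net profit from repaying debt_repaid and selling the seized collateral."""
    proceeds = debt_repaid * (1.0 + bonus) * (1.0 - slippage)
    return proceeds - debt_repaid - gas_cost


def min_viable_bonus(
    debt_repaid: float,
    gas_cost: float,
    slippage: float = 0.0,
) -> float:
    """Smallest bonus at which the liquidator breaks even.

    Solves (1 + bonus) * (1 - slippage) = 1 + gas_cost / debt_repaid.
    """
    if debt_repaid <= 0:
        raise ValueError("debt_repaid must be positive")
    if not 0.0 <= slippage < 1.0:
        raise ValueError("slippage must be in [0, 1)")
    return (1.0 + gas_cost / debt_repaid) / (1.0 - slippage) - 1.0


# With a 5% bonus, a 1000-unit liquidation clears a 20-unit gas bill,
# but a 100-unit liquidation does not: it would need a 20% bonus.
large = liquidator_profit(1000.0, bonus=0.05, gas_cost=20.0)
small = liquidator_profit(100.0, bonus=0.05, gas_cost=20.0)
needed = min_viable_bonus(100.0, gas_cost=20.0)
```

The example shows why a fixed bonus can leave small positions unliquidated when gas prices spike, which is one motivation for adjusting the incentive to market conditions.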

Approach

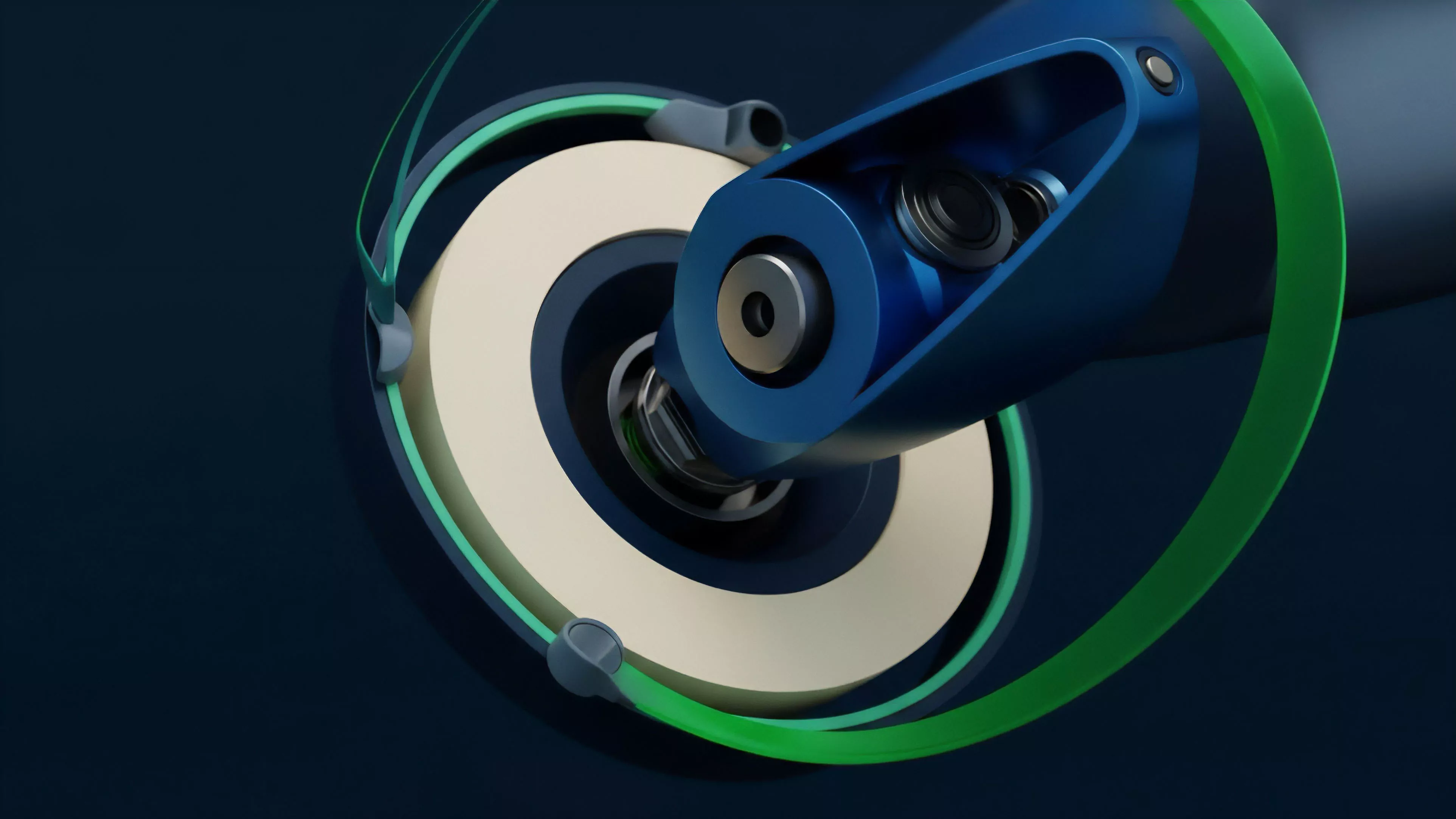

Modern approaches to Liquidation Protocol Verification prioritize asynchronous validation and modular oracle integration. Rather than relying on a single, monolithic check, protocols now employ multi-layered verification that confirms price data across multiple decentralized exchanges before authorizing a liquidation. This reduces the risk of flash loan attacks where malicious actors manipulate a local price feed to trigger unfair liquidations.

- Validator Sets confirm the authenticity of oracle reports before they impact the margin engine.

- Circuit Breakers pause liquidation processes if anomalous market activity is detected across connected chains.

- Risk Parameters are dynamically updated via governance to reflect changes in asset volatility and liquidity depth.

Current liquidation architectures utilize multi-source oracle verification to prevent price manipulation and ensure market integrity.
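One common way to combine several price sources is to take a median and refuse to act when the sources disagree. The sketch below assumes that approach; the `aggregate_price` function, the source names, the minimum of three sources, and the 2% deviation band are hypothetical choices for illustration, and raising an error stands in for a circuit breaker.

```python
from statistics import median
from typing import Mapping


class OracleError(Exception):
    """Raised when no trustworthy aggregate price can be produced."""


def aggregate_price(
    quotes: Mapping[str, float],
    min_sources: int = 3,        # assumed quorum
    max_deviation: float = 0.02  # assumed tolerance around the median
) -> float:
    """Median of the sources that agree with the overall median.

    Raises OracleError (acting as a circuit breaker) when too few
    sources report or too few agree with one another.
    """
    valid = [price for price in quotes.values() if price > 0]
    if len(valid) < min_sources:
        raise OracleError("not enough price sources")

    mid = median(valid)
    agreeing = [p for p in valid if abs(p - mid) / mid <= max_deviation]
    if len(agreeing) < min_sources:
        raise OracleError("price sources disagree; liquidations paused")
    return median(agreeing)


# One manipulated venue (150.0) is discarded; the other three agree.
price = aggregate_price({"dex_a": 100.0, "dex_b": 101.0, "dex_c": 99.0, "dex_d": 150.0})
```

A single manipulated venue is filtered out here, whereas a manipulation that moves most venues at once trips the breaker instead of producing a price.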

The execution environment must be highly optimized, as liquidation efficiency is directly tied to the ability of the smart contract to process transactions during high-traffic periods. Sophisticated protocols now utilize off-chain computation to calculate the optimal liquidation path, which is then verified on-chain to ensure adherence to protocol rules. This hybrid approach significantly reduces the gas overhead of maintaining complex liquidation engines, allowing for more frequent and precise checks.
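The split between off-chain computation and on-chain verification can be illustrated with two small functions. This is a sketch under stated assumptions: the "optimal path" is reduced to a single repay amount that restores a target collateral ratio, the on-chain side is simulated in Python, and the `close_factor` cap (the maximum share of debt repayable in one liquidation) is an assumed rule borrowed from common lending designs.

```python
def propose_repay_amount(
    collateral_value: float,
    debt_value: float,
    bonus: float,
    target_ratio: float,
) -> float:
    """Off-chain step: debt to repay so the remaining position sits at target_ratio.

    Solves (C - x * (1 + bonus)) / (D - x) = target_ratio for x.
    """
    if target_ratio <= 1.0 + bonus:
        raise ValueError("target_ratio must exceed 1 + bonus")
    shortfall = target_ratio * debt_value - collateral_value
    if shortfall <= 0:
        return 0.0  # position already at or above the target
    return min(debt_value, shortfall / (target_ratio - 1.0 - bonus))


def verify_liquidation(
    collateral_value: float,
    debt_value: float,
    repay_amount: float,
    bonus: float,
    maintenance_ratio: float,
    close_factor: float = 0.5,  # assumed cap on debt repaid per liquidation
) -> bool:
    """On-chain step (simulated): cheap rule checks on the proposed amount."""
    if debt_value <= 0 or repay_amount <= 0:
        return False
    if collateral_value / debt_value >= maintenance_ratio:
        return False  # position is healthy, liquidation not allowed
    if repay_amount > close_factor * debt_value:
        return False  # exceeds the per-liquidation cap
    if repay_amount * (1.0 + bonus) > collateral_value:
        return False  # not enough collateral to pay the liquidator
    return True


# Collateral 1400, debt 1000, 5% bonus, restore to a 1.5 ratio.
repay = propose_repay_amount(1400.0, 1000.0, bonus=0.05, target_ratio=1.5)
accepted = verify_liquidation(1400.0, 1000.0, repay, bonus=0.05, maintenance_ratio=1.5)
```

The verifier performs only a handful of comparisons and never re-derives the repay amount, which is the property that keeps the on-chain cost low in this style of design.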

Evolution

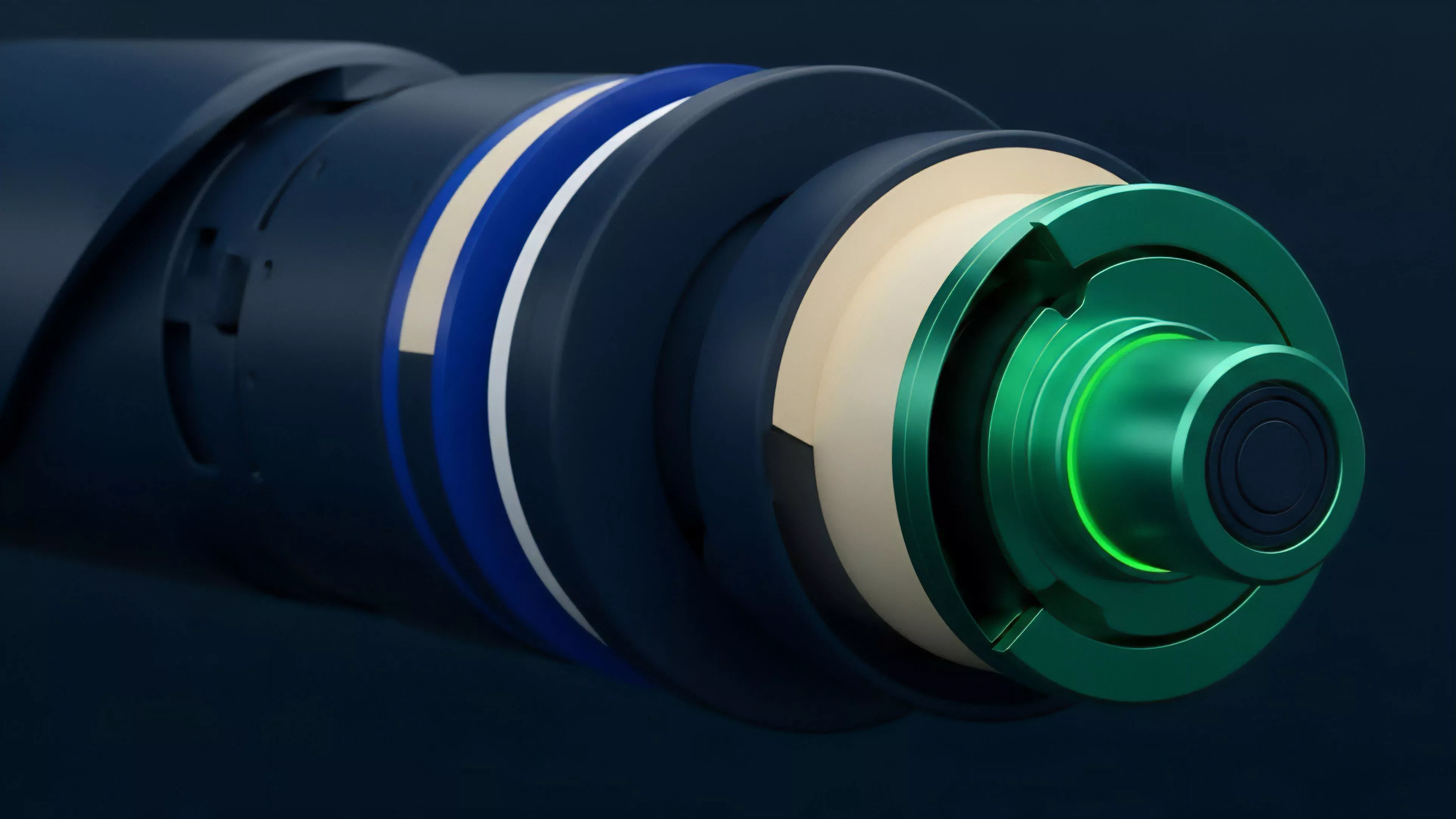

The trajectory of Liquidation Protocol Verification has moved from simplistic, binary triggers to adaptive, predictive models.

Early systems treated every asset with the same volatility profile, leading to either excessive liquidations or insufficient protection. Current systems employ volatility-adjusted thresholds, where the liquidation requirement expands or contracts based on real-time realized volatility data. One might consider the parallel between this development and the history of flight control systems, where manual pilot input evolved into fly-by-wire automation to handle stresses beyond human reaction speeds.

This shift allows the protocol to remain stable even when the underlying market undergoes extreme structural changes. The move toward cross-chain liquidation represents the next frontier, where collateral locked on one network can be liquidated against debt on another, requiring a unified, global verification layer.
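A volatility-adjusted threshold of the kind described in this section might look like the sketch below. The scaling rule is an assumption made for illustration: the buffer above 100% collateralization grows in proportion to realized volatility relative to a reference level, and the result is clamped to a floor and a cap.

```python
import math
from statistics import stdev
from typing import Sequence


def realized_volatility(prices: Sequence[float]) -> float:
    """Sample standard deviation of log returns (per period, not annualized)."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return stdev(log_returns)


def adjusted_liquidation_ratio(
    base_ratio: float,
    volatility: float,
    reference_volatility: float,
    floor: float = 1.1,  # assumed lower bound
    cap: float = 3.0,    # assumed upper bound
) -> float:
    """Scale the buffer above 1.0 by volatility / reference_volatility."""
    if reference_volatility <= 0:
        raise ValueError("reference_volatility must be positive")
    buffer = (base_ratio - 1.0) * (volatility / reference_volatility)
    return min(cap, max(floor, 1.0 + buffer))


calm = realized_volatility([100.0, 100.5, 100.2, 100.6, 100.4])
stressed = realized_volatility([100.0, 92.0, 101.0, 88.0, 97.0])

# Doubling volatility relative to the reference widens a 1.5 ratio to 2.0;
# halving it narrows the ratio to 1.25.
wider = adjusted_liquidation_ratio(1.5, volatility=0.04, reference_volatility=0.02)
tighter = adjusted_liquidation_ratio(1.5, volatility=0.01, reference_volatility=0.02)
```

The clamp keeps a burst of extreme volatility from pushing the requirement without bound, and keeps a quiet market from eroding the buffer entirely.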

| Generation | Verification Mechanism | Risk Profile |
| --- | --- | --- |
| Gen 1 | Static Thresholds | High Systemic Risk |
| Gen 2 | Oracle Aggregation | Moderate Systemic Risk |
| Gen 3 | Dynamic Volatility-Adjusted | Low Systemic Risk |

Horizon

The future of Liquidation Protocol Verification lies in the integration of zero-knowledge proofs to verify the solvency of positions without exposing sensitive user data. This allows for private, yet fully auditable, margin accounts. Additionally, the adoption of machine learning-based risk assessment will enable protocols to predict potential liquidations before they occur, allowing for proactive, graceful deleveraging rather than abrupt, disruptive liquidations.

The ultimate objective is to achieve liquidation-less solvency, where automated rebalancing strategies maintain collateral health continuously. This requires a profound integration between decentralized derivatives and automated market maker liquidity pools. As these systems mature, the distinction between lending and trading will blur, creating a unified financial architecture where Liquidation Protocol Verification serves as the invisible, robust foundation for all risk-adjusted capital allocation.

What happens to systemic stability when liquidation verification becomes perfectly predictive, and does this eliminate the role of the liquidator as a necessary adversarial actor?