Essence

Latency-Sensitive Applications in digital asset derivatives are high-speed execution systems in which the interval between ingesting market data and transmitting an order (tick-to-trade latency) determines competitive viability. They operate at the intersection of network topology and order book mechanics, and often target sub-millisecond internal processing so that their quotes keep pace with shifting liquidity. Their core objectives are to minimize slippage and maximize fill probability in decentralized environments where block times vary.

Latency-sensitive applications prioritize the reduction of execution delay to ensure consistent interaction with rapidly evolving order books in decentralized markets.

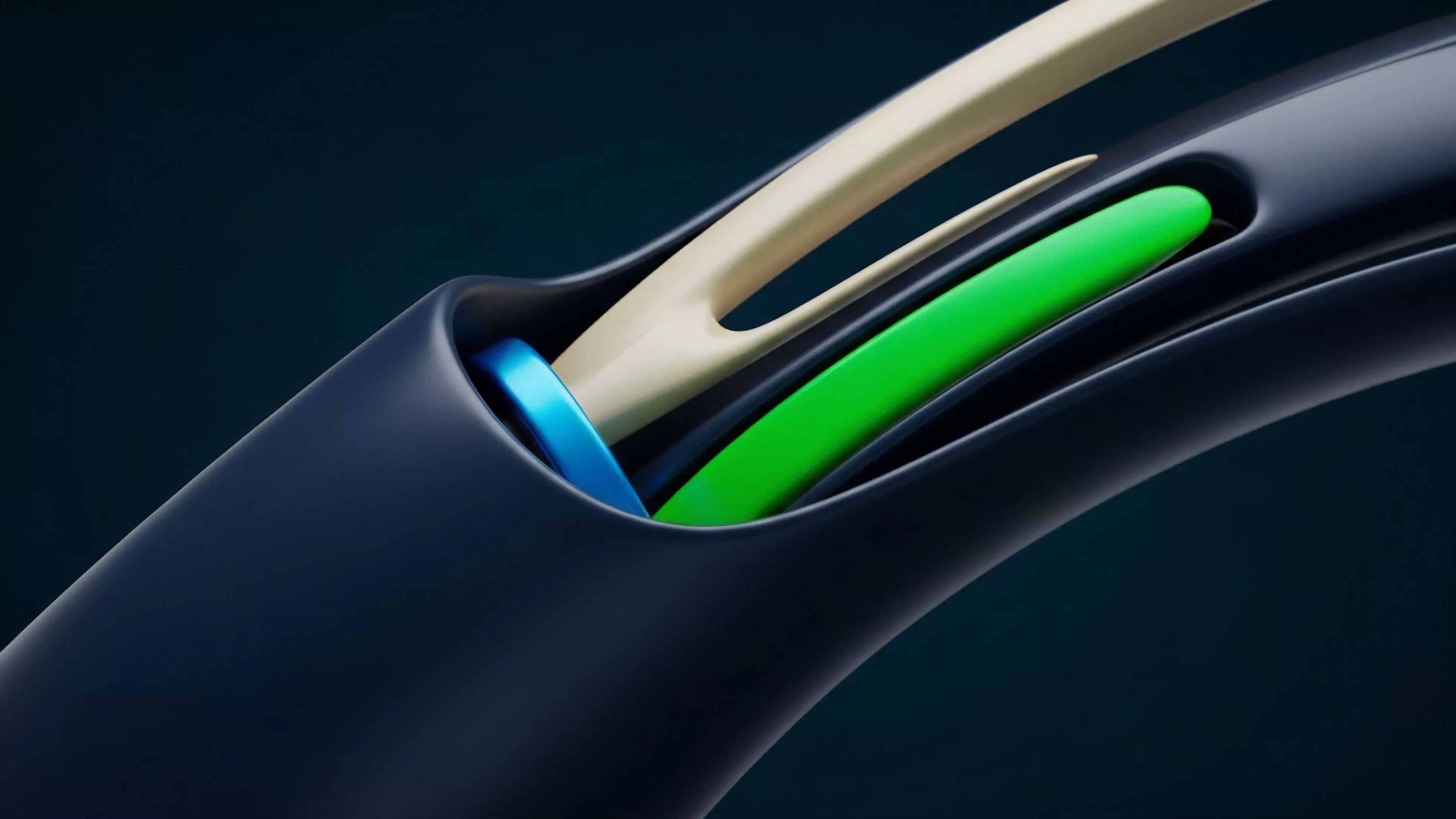

These architectures prioritize deterministic execution paths, often bypassing standard routing layers to interact directly with liquidity sources or with the public mempools through which decentralized exchange orders pass. Participants using these tools frequently manage complex options portfolios whose Greeks, delta and gamma in particular, shift quickly, so positions must be rebalanced promptly when volatility in the underlying spot market accelerates. Their systemic relevance lies in price discovery: by quickly aligning prices across venues, they counteract the fragmented liquidity that otherwise plagues nascent derivative venues.
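
The rebalancing pressure described above can be sketched as a simple delta-band hedger. This is a minimal illustration with stated assumptions:

- Deltas come from Black-Scholes for a European call, with zero interest rate and no dividends.
- `HedgeState`, `rebalance_order`, and the `band` tolerance are hypothetical names. They are not part of any venue's API.

```python
import math
from dataclasses import dataclass


def norm_cdf(x: float) -> float:
    """Standard normal CDF expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float, t: float, r: float = 0.0) -> float:
    """Black-Scholes delta of a European call; vol and t are annualised."""
    d1 = (math.log(spot / strike) + (r + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return norm_cdf(d1)


@dataclass
class HedgeState:
    contracts: float    # calls held; negative means the book is short the calls
    hedge_units: float  # units of the underlying currently held as a hedge


def rebalance_order(state: HedgeState, spot: float, strike: float, vol: float,
                    t: float, band: float) -> float:
    """Underlying quantity to trade (positive = buy), or 0.0 inside the band.

    The target hedge offsets the option delta: -contracts * call_delta.
    """
    target = -state.contracts * bs_call_delta(spot, strike, vol, t)
    gap = target - state.hedge_units
    return gap if abs(gap) > band else 0.0
```

Consider a book short 10 at-the-money calls (spot = strike = 100, 80% volatility, three months). The call delta is about 0.58, so a 5-unit hedge sits roughly 0.79 units below target. A band of 0.5 triggers a buy, while a band of 1.0 leaves the position alone. The band trades hedge accuracy against the number of latency-sensitive transactions sent.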

Origin

The genesis of Latency-Sensitive Applications traces back to the integration of high-frequency trading principles into the nascent landscape of automated market making on decentralized protocols.

Early iterations focused on arbitrage between centralized exchanges and on-chain liquidity pools, where failing to act on a price discrepancy within a single block meant ceding the profit to faster competitors. Developers recognized that the transparency of public mempools created a race condition: any pending transaction that revealed an opportunity could be observed and outbid. This drove the design of custom infrastructure to secure priority in transaction inclusion.

| Historical Phase | Primary Driver | Technological Constraint |
| --- | --- | --- |
| Early Arbitrage | Price Discrepancy | Layer 1 Block Time |
| MEV Extraction | Mempool Transparency | Transaction Sequencing |
| Derivative Scaling | Portfolio Greeks | Cross-Protocol Latency |

The shift from simple arbitrage to sophisticated Latency-Sensitive Applications reflects the professionalization of decentralized finance. As options markets matured, the need to manage non-linear risk exposures drove the development of specialized execution agents that monitor on-chain state changes across protocols. This evolution moved beyond raw speed to incorporate predictive modeling: applications anticipate network congestion and adjust gas parameters dynamically to improve their chances of settlement priority during periods of high market stress.
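
Anticipating congestion can be made concrete with Ethereum's EIP-1559 base-fee rule. Under this rule the base fee moves by at most 1/8 (12.5%) per block, depending on how far the parent block's gas use is from its target. The update function below mirrors the integer arithmetic in the EIP-1559 specification. Three elements are illustrative choices rather than a description of any particular system:

- the `worst_case_max_fee` helper;
- the assumption that every upcoming block is completely full;
- the 15M gas target used in the example.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # EIP-1559: at most 12.5% change per block
ELASTICITY_MULTIPLIER = 2            # EIP-1559: gas limit = 2 x gas target


def next_base_fee(base_fee: int, gas_used: int, gas_target: int) -> int:
    """Base fee (wei) of the next block, following the EIP-1559 update rule."""
    if gas_used == gas_target:
        return base_fee
    if gas_used > gas_target:
        delta = base_fee * (gas_used - gas_target) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
        return base_fee + max(delta, 1)
    delta = base_fee * (gas_target - gas_used) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return base_fee - delta


def worst_case_max_fee(base_fee: int, gas_target: int, blocks_ahead: int, priority_fee: int) -> int:
    """maxFeePerGas that stays valid even if the next `blocks_ahead` blocks are all full.

    Hypothetical policy: assume maximal congestion so the transaction is not
    priced out while it waits for inclusion.
    """
    fee = base_fee
    for _ in range(blocks_ahead):
        fee = next_base_fee(fee, gas_target * ELASTICITY_MULTIPLIER, gas_target)
    return fee + priority_fee
```

Starting from a 100 gwei base fee with a 15M gas target, two consecutive full blocks raise the base fee to 126.5625 gwei. With a 2 gwei tip, that implies a `maxFeePerGas` of about 128.56 gwei. Under EIP-1559 the sender pays the actual base fee plus the effective tip, not the full `maxFeePerGas`. The headroom therefore only matters if the base fee really does rise.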

Theory

The theoretical framework governing Latency-Sensitive Applications rests on the principle of information asymmetry within a public, adversarial environment.

In decentralized derivatives, the cost of delay grows with the volatility of the underlying asset and the gamma exposure of the option position. The faster the spot price moves and the more convex the position, the further its delta drifts while an order waits. Mathematical models for these applications use stochastic calculus to capture the probabilistic nature of block inclusion. They treat the expected value of an order as a function of when it reaches the network relative to the validators' block-proposal schedule.
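
The link between delay, volatility, and gamma can be approximated to first order. Assume the spot S follows geometric Brownian motion with annualized volatility σ. Then:

- the spot move over a delay of τ years has a standard deviation of roughly S·σ·√τ;
- the position's delta drifts by Γ times that move;
- the expected second-order P&L is ½·Γ·S²·σ²·τ.

The sketch below is a back-of-the-envelope estimate under those assumptions. It does not model block-inclusion probabilities. The function name and the 24/7 year length are illustrative choices.

```python
import math
from typing import Tuple

SECONDS_PER_YEAR = 365 * 24 * 3600  # assumption: crypto markets trade 24/7


def delay_exposure(gamma: float, spot: float, vol: float, delay_s: float) -> Tuple[float, float]:
    """Approximate exposure accumulated while an order waits `delay_s` seconds.

    Assumes geometric Brownian motion with annualised volatility `vol`, so the
    spot move over tau years has standard deviation ~ spot * vol * sqrt(tau).

    Returns:
        delta_drift_sd: std. dev. of the change in position delta,
            |gamma| * spot * vol * sqrt(tau).
        expected_gamma_pnl: magnitude of the expected second-order P&L,
            0.5 * |gamma| * spot**2 * vol**2 * tau (a cost for short-gamma books).
    """
    tau = delay_s / SECONDS_PER_YEAR
    move_sd = spot * vol * math.sqrt(tau)
    delta_drift_sd = abs(gamma) * move_sd
    expected_gamma_pnl = 0.5 * abs(gamma) * move_sd ** 2
    return delta_drift_sd, expected_gamma_pnl
```

Doubling the delay doubles the expected gamma P&L and raises the delta drift by √2. Doubling volatility quadruples the expected gamma P&L. This is why short-gamma books are most exposed to latency in fast markets.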

Theoretical models for latency-sensitive systems quantify the cost of delay by linking execution speed directly to gamma-adjusted risk exposure.

- Order Flow Mechanics dictate that competitive advantage resides in the ability to front-run or back-run liquidity shifts, requiring local node infrastructure that maintains real-time synchronization with network peers.

- Protocol Physics impose strict limits on throughput, necessitating that applications optimize for the smallest possible transaction footprint to minimize propagation time across global validator sets.

- Adversarial Game Theory models the behavior of competing agents who utilize priority fees and private relay networks to capture alpha, turning the mempool into a zero-sum competition for temporal advantage.
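
The priority-fee competition in the last point can be illustrated with the EIP-1559 effective tip, min(maxPriorityFeePerGas, maxFeePerGas − baseFee). The sketch assumes a deliberately simplified builder. It drops transactions that cannot pay the base fee and orders the rest by effective tip. Real block builders also weigh bundles, private order flow, and MEV, so this illustrates the fee arithmetic only.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    max_fee: int           # maxFeePerGas (wei)
    max_priority_fee: int  # maxPriorityFeePerGas (wei)


def effective_tip(tx: PendingTx, base_fee: int) -> Optional[int]:
    """EIP-1559 effective priority fee, or None if max_fee cannot cover the base fee."""
    if tx.max_fee < base_fee:
        return None
    return min(tx.max_priority_fee, tx.max_fee - base_fee)


def order_by_tip(pending: List[PendingTx], base_fee: int) -> List[str]:
    """Simplified builder ordering: payable transactions, highest effective tip first."""
    scored = [(effective_tip(tx, base_fee), tx.tx_id) for tx in pending]
    payable = [(tip, tx_id) for tip, tx_id in scored if tip is not None]
    payable.sort(key=lambda item: item[0], reverse=True)  # sort stays stable for equal tips
    return [tx_id for _, tx_id in payable]
```

Take a base fee of 30. A transaction offering a tip of 10 with a fee cap of 33 pays an effective tip of only 3. It ranks below one offering a tip of 5 with ample headroom. Raising the tip without raising the fee cap does not buy priority.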

This domain functions as a digital arms race in which the hardware-software stack must be finely tuned to mitigate jitter and packet loss. Traditional finance relies on colocation next to exchange matching engines. Decentralized participants instead face geographically dispersed validators, so they deploy distributed infrastructure that positions nodes to minimize network distance (hop count and round-trip time) to major validator clusters. The efficiency of this deployment often determines whether a strategy survives high-volatility regimes.
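
Geographic dispersion is often handled by submitting the same signed transaction through several nodes or relays at once and acting on the first acknowledgement. The sketch below shows that pattern with `asyncio`. The endpoints are simulated with `asyncio.sleep`, and their names, latencies, and failure behaviour are hypothetical. Broadcasting an identical signed transaction several times is safe because it can be included at most once. Real relays, however, differ in how they report acceptance.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional

# A submitter sends raw transaction bytes to one endpoint and returns its label on ack.
Submitter = Callable[[bytes], Awaitable[str]]


async def broadcast_first_ack(raw_tx: bytes, submitters: List[Submitter],
                              timeout_s: float) -> Optional[str]:
    """Send the same signed transaction through every endpoint concurrently and
    return the label of the first endpoint that acknowledges it."""
    tasks = [asyncio.ensure_future(submit(raw_tx)) for submit in submitters]
    try:
        for next_done in asyncio.as_completed(tasks, timeout=timeout_s):
            try:
                return await next_done
            except ConnectionError:
                continue  # this endpoint failed; keep waiting for the others
        return None  # every endpoint failed
    except asyncio.TimeoutError:
        return None  # no endpoint acknowledged in time
    finally:
        for task in tasks:
            task.cancel()  # stop waiting on slower endpoints
        await asyncio.gather(*tasks, return_exceptions=True)


def simulated_endpoint(name: str, latency_s: float, fail: bool = False) -> Submitter:
    """Hypothetical endpoint: sleeps for its latency, then acks or raises."""
    async def submit(raw_tx: bytes) -> str:
        await asyncio.sleep(latency_s)  # stand-in for the network round trip
        if fail:
            raise ConnectionError(f"{name} rejected the transaction")
        return name
    return submit
```

Suppose the endpoints are `relay-eu` (50 ms), a failing `relay-us` (10 ms), and `node-asia` (30 ms). Then `asyncio.run(broadcast_first_ack(b"signed-tx-bytes", endpoints, timeout_s=1.0))` returns `"node-asia"`: the fastest endpoint fails, so the next acknowledgement wins.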

Approach

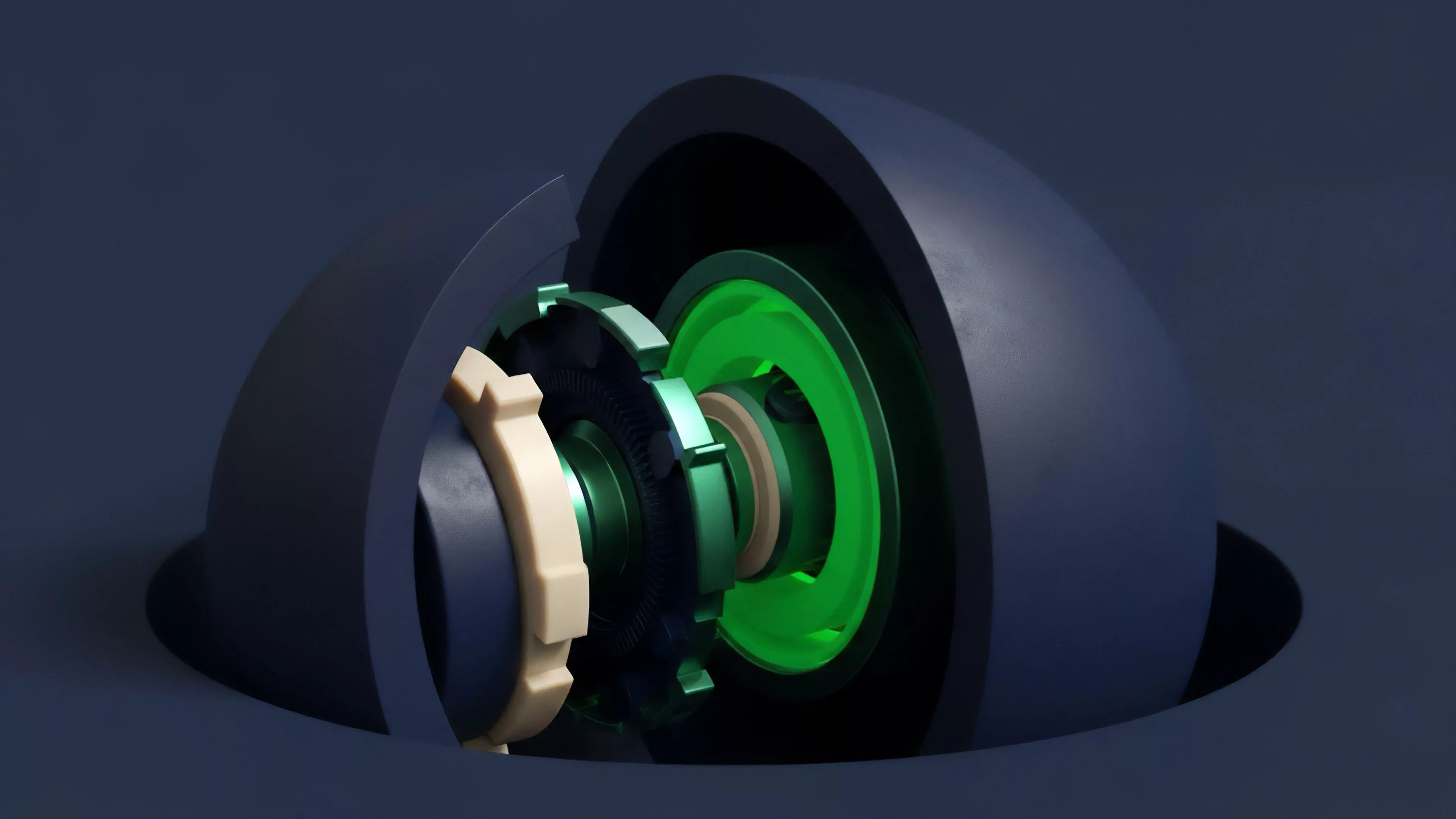

Current approaches to Latency-Sensitive Applications emphasize the vertical integration of the entire trading stack, from custom node implementations to optimized smart contract interactions.

Developers now deploy specialized middleware that pre-computes derivative payoffs off-chain, so signed transactions can be submitted immediately once a trigger condition is met. This bypasses the overhead of standard client-side libraries and provides a lean execution path that trims latency at each stage of the order lifecycle.
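
The pre-calculation pattern can be sketched as a ladder of orders that are serialised and signed off the hot path, so the trigger handler only performs a lookup. HMAC-SHA256 from the standard library stands in for a real transaction signature; chains typically use schemes such as ECDSA over secp256k1 or Ed25519. The order fields are hypothetical. A real system would also have to re-sign whenever fields fixed at signing time, such as nonce or fee, go stale.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PreparedOrder:
    trigger_price: float
    payload: bytes
    signature: bytes


def sign(payload: bytes, key: bytes) -> bytes:
    """Stand-in signer (HMAC-SHA256), not a blockchain signature scheme."""
    return hmac.new(key, payload, hashlib.sha256).digest()


def prepare_ladder(trigger_prices: List[float], size: float, key: bytes) -> List[PreparedOrder]:
    """Cold path: serialise and sign one protective sell order per trigger level."""
    ladder = []
    for price in sorted(trigger_prices, reverse=True):
        order = {"side": "sell", "size": size, "limit": price}  # hypothetical fields
        payload = json.dumps(order, sort_keys=True).encode()
        ladder.append(PreparedOrder(price, payload, sign(payload, key)))
    return ladder


def triggered(spot: float, ladder: List[PreparedOrder]) -> List[PreparedOrder]:
    """Hot path: no serialisation or signing, only comparisons."""
    return [order for order in ladder if spot <= order.trigger_price]
```

In a real deployment the triggered payloads would go straight to the submission layer. The work saved on the hot path is the serialisation and signing that `prepare_ladder` already performed.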

| Execution Component | Optimization Target | Functional Impact |
| --- | --- | --- |
| Node Infrastructure | Peer Synchronization | Data Freshness |
| Transaction Relays | Propagation Speed | Inclusion Probability |
| Smart Contract Logic | Gas Consumption | Execution Throughput |
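
The gas side of transaction footprint is easy to quantify on Ethereum. Since EIP-2028, calldata costs 16 gas per non-zero byte and 4 gas per zero byte, on top of the 21,000-gas base cost of a transaction. The helpers below compute that intrinsic cost for a plain call. They are an approximation: they ignore contract-creation and access-list costs, execution gas, and later calldata repricing such as the EIP-7623 floor.

```python
TX_BASE_GAS = 21_000       # base cost of any transaction
GAS_PER_ZERO_BYTE = 4      # EIP-2028 calldata pricing
GAS_PER_NONZERO_BYTE = 16  # EIP-2028 calldata pricing


def calldata_gas(data: bytes) -> int:
    """Calldata component of intrinsic gas under EIP-2028 pricing."""
    zeros = data.count(0)
    return zeros * GAS_PER_ZERO_BYTE + (len(data) - zeros) * GAS_PER_NONZERO_BYTE


def intrinsic_gas(data: bytes) -> int:
    """Intrinsic gas of a plain call (no contract creation, no access list)."""
    return TX_BASE_GAS + calldata_gas(data)


# An amount of 1000 ABI-encoded as a 32-byte word vs. packed into 2 bytes.
abi_word = (1000).to_bytes(32, "big")  # 30 zero bytes + 0x03, 0xE8
packed = (1000).to_bytes(2, "big")     # 0x03, 0xE8
print(calldata_gas(abi_word), calldata_gas(packed))  # 152 32
```

Packing only saves gas if the contract can decode the compact format, and that decoding usually costs some execution gas of its own.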

Current strategies utilize vertically integrated stacks to bypass traditional bottlenecks and ensure rapid transaction submission in volatile markets.

Strategists often route through private mempools or MEV-aware relays to shield their order flow from predatory actors while still seeking rapid settlement. This tactical deployment requires continuous monitoring of network health and validator behavior, as the competitive landscape is fluid and responds quickly to protocol upgrades. The objective is to maintain a robust feedback loop between market data analysis and automated position adjustment, keeping risk parameters within defined thresholds even when the network is congested.
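
The risk check inside such a feedback loop can be sketched as a comparison of portfolio Greeks against absolute limits. The congestion scaling is a hypothetical policy that the text does not prescribe. It tightens limits as the network becomes congested, on the assumption that corrective orders will take longer to land. `Limits`, `effective_limits`, and `breaches` are illustrative names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Limits:
    max_abs_delta: float
    max_abs_gamma: float
    max_abs_vega: float


def effective_limits(limits: Limits, congestion: float) -> Limits:
    """Hypothetical policy: shrink limits by up to 50% as congestion goes from 0 to 1."""
    scale = 1.0 - 0.5 * min(max(congestion, 0.0), 1.0)
    return Limits(limits.max_abs_delta * scale,
                  limits.max_abs_gamma * scale,
                  limits.max_abs_vega * scale)


def breaches(greeks: Dict[str, float], limits: Limits) -> Dict[str, float]:
    """Amount by which each Greek exceeds its absolute limit (breached Greeks only)."""
    caps = {"delta": limits.max_abs_delta,
            "gamma": limits.max_abs_gamma,
            "vega": limits.max_abs_vega}
    excess = {name: abs(greeks.get(name, 0.0)) - cap for name, cap in caps.items()}
    return {name: amount for name, amount in excess.items() if amount > 0}
```

Each breach would feed the automated adjustment step, for example by sizing a hedge order. The loop then repeats on the next market-data update.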

Evolution

The trajectory of Latency-Sensitive Applications moves toward the abstraction of network complexity, where decentralized infrastructure increasingly mimics the performance characteristics of institutional-grade trading environments.

Initial efforts focused on manual tuning of gas limits and basic node connectivity, but the paradigm is now shifting toward intent-based execution architectures. These frameworks let users express desired outcomes, such as a minimum acceptable output for a trade, which specialized solvers then fulfill. This removes the burden of low-level network management from the end participant.
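
A minimal sketch of the user-side view of intent-based execution: the user signs constraints rather than a route, and whichever solver offers the best compliant fill wins. The `Intent` and `SolverQuote` fields and the selection rule are hypothetical. Production intent protocols, such as those built on batch auctions, apply their own and more involved scoring.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Intent:
    sell_amount: int     # input the user is willing to give up
    min_buy_amount: int  # worst acceptable output (the user's limit)
    deadline: int        # expiry, e.g. a block number


@dataclass(frozen=True)
class SolverQuote:
    solver: str
    buy_amount: int  # output the solver commits to deliver for the intent's sell_amount
    fill_time: int   # expected settlement, in the same unit as the deadline


def select_quote(intent: Intent, quotes: List[SolverQuote]) -> Optional[SolverQuote]:
    """Best compliant quote: most output first, then earliest fill."""
    valid = [q for q in quotes
             if q.buy_amount >= intent.min_buy_amount and q.fill_time <= intent.deadline]
    if not valid:
        return None
    return max(valid, key=lambda q: (q.buy_amount, -q.fill_time))
```

Because the user signs only constraints, the solver bears the latency race. A quote that cannot settle before `deadline` is simply ineligible, however generous it is.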

Evolutionary trends point toward intent-based execution models that offload network complexity to specialized solver networks.

- Protocol-Level Enhancements such as EIP-1559 and subsequent gas-market improvements have made transaction fees more predictable, allowing latency-sensitive tasks to be scheduled with greater confidence.

- Cross-Chain Interoperability has expanded the scope of these applications, requiring sophisticated routing algorithms that manage latency across disparate consensus mechanisms and bridge architectures.

- Institutional Adoption drives the development of hardware-accelerated signing and signature verification, reducing the compute time required to authorize and validate trades on high-throughput derivative protocols.
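
Latency-aware routing across chains can be framed as a shortest-path problem. Each edge is a bridge or messaging hop, weighted by its expected latency including any finality wait. The sketch applies Dijkstra's algorithm with `heapq`. The chain names and latencies in the example are hypothetical placeholders.

```python
import heapq
from typing import Dict, List, Optional, Tuple

# Edge weights: hypothetical expected latency in seconds (transfer + finality wait).
Graph = Dict[str, Dict[str, float]]


def fastest_route(graph: Graph, src: str, dst: str) -> Optional[Tuple[float, List[str]]]:
    """Dijkstra's algorithm: minimum total expected latency from src to dst."""
    best: Dict[str, float] = {src: 0.0}
    prev: Dict[str, str] = {}
    heap: List[Tuple[float, str]] = [(0.0, src)]
    while heap:
        dist, node = heapq.heappop(heap)
        if node == dst:
            path = [dst]
            while path[-1] != src:
                path.append(prev[path[-1]])
            return dist, path[::-1]
        if dist > best.get(node, float("inf")):
            continue  # stale heap entry; a shorter path was already found
        for nxt, latency in graph.get(node, {}).items():
            candidate = dist + latency
            if candidate < best.get(nxt, float("inf")):
                best[nxt] = candidate
                prev[nxt] = node
                heapq.heappush(heap, (candidate, nxt))
    return None
```

Take a graph where the direct hop `L1 -> rollup-B` costs 900 s but `L1 -> rollup-A -> rollup-B` costs 600 s + 120 s. Here the function returns `(720.0, ["L1", "rollup-A", "rollup-B"])`. Minimizing expected latency ignores its variance. Given the temporal uncertainty of cross-domain communication, a more conservative router might weight edges by a high percentile of observed latency instead.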

The shift toward modular blockchain stacks introduces new variables, as applications must now contend with data availability layers and settlement finality times. This architectural transition necessitates a more nuanced approach to risk management, where the temporal uncertainty of cross-domain communication becomes a primary factor in strategy design. Agents operating in this environment must possess a deep understanding of the underlying protocol physics to effectively navigate the trade-offs between speed, security, and capital efficiency.

Horizon

The future of Latency-Sensitive Applications lies in the convergence of edge computing and decentralized consensus, where localized execution agents provide near-instantaneous response times to market events.

Predictive analytics integrated directly into the transaction signing layer could allow applications to adjust preemptively to volatility spikes before those spikes are fully reflected in the public state. This capability would likely transform market microstructure, reducing the efficacy of traditional arbitrage while increasing the importance of efficient liquidity provision.
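
One simple form of such a predictive signal is a volatility-spike detector. It compares a fast and a slow exponentially weighted moving average (EWMA) of squared log returns, in the spirit of the RiskMetrics-style update σ²ₜ = λ·σ²ₜ₋₁ + (1 − λ)·rₜ². The decay factors and the spike ratio below are hypothetical tuning choices. The sketch only shows how such a signal could be computed before being used to adjust quotes or fees.

```python
import math
from typing import Optional


class VolSpikeDetector:
    """Flags when fast EWMA volatility outruns slow EWMA volatility by `ratio`."""

    def __init__(self, fast_lambda: float = 0.90, slow_lambda: float = 0.99,
                 ratio: float = 2.0) -> None:
        self.fast_lambda = fast_lambda  # lower lambda reacts faster to new returns
        self.slow_lambda = slow_lambda
        self.ratio = ratio
        self.fast_var: Optional[float] = None
        self.slow_var: Optional[float] = None
        self.last_price: Optional[float] = None

    def update(self, price: float) -> bool:
        """Feed one price; return True if a volatility spike is detected."""
        if self.last_price is None:
            self.last_price = price
            return False
        r = math.log(price / self.last_price)  # log return since the last tick
        self.last_price = price
        r2 = r * r
        if self.fast_var is None or self.slow_var is None:
            self.fast_var = self.slow_var = r2  # seed both estimates
            return False
        self.fast_var = self.fast_lambda * self.fast_var + (1 - self.fast_lambda) * r2
        self.slow_var = self.slow_lambda * self.slow_var + (1 - self.slow_lambda) * r2
        return self.slow_var > 0 and math.sqrt(self.fast_var / self.slow_var) > self.ratio
```

After a run of alternating ±0.1% moves, a single 3% move pushes the fast/slow volatility ratio to roughly 3, which trips the default threshold of 2. In practice the thresholds would need calibration against the venue's tick frequency.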

Future developments will likely leverage edge computing to achieve near-instantaneous response times, fundamentally altering decentralized market dynamics.

As decentralized derivatives continue to capture market share, the demand for deterministic performance will force protocol designers to implement native support for high-frequency interaction. This may include dedicated transaction channels or priority lanes for verified market makers, effectively formalizing the role of latency in the decentralized ecosystem. The ultimate success of these applications depends on their ability to balance this performance with the core principles of decentralization, ensuring that the benefits of speed do not come at the cost of systemic integrity.