Essence

Latency Arbitrage Prevention functions as the structural safeguard against the exploitation of time-asymmetric information in decentralized order books. At its core, this mechanism addresses the gap between the moment a transaction propagates across distributed nodes and the moment its settlement becomes final. When market participants use superior infrastructure to observe price movements before others, they can capture near risk-free profit by executing trades against stale quotes.

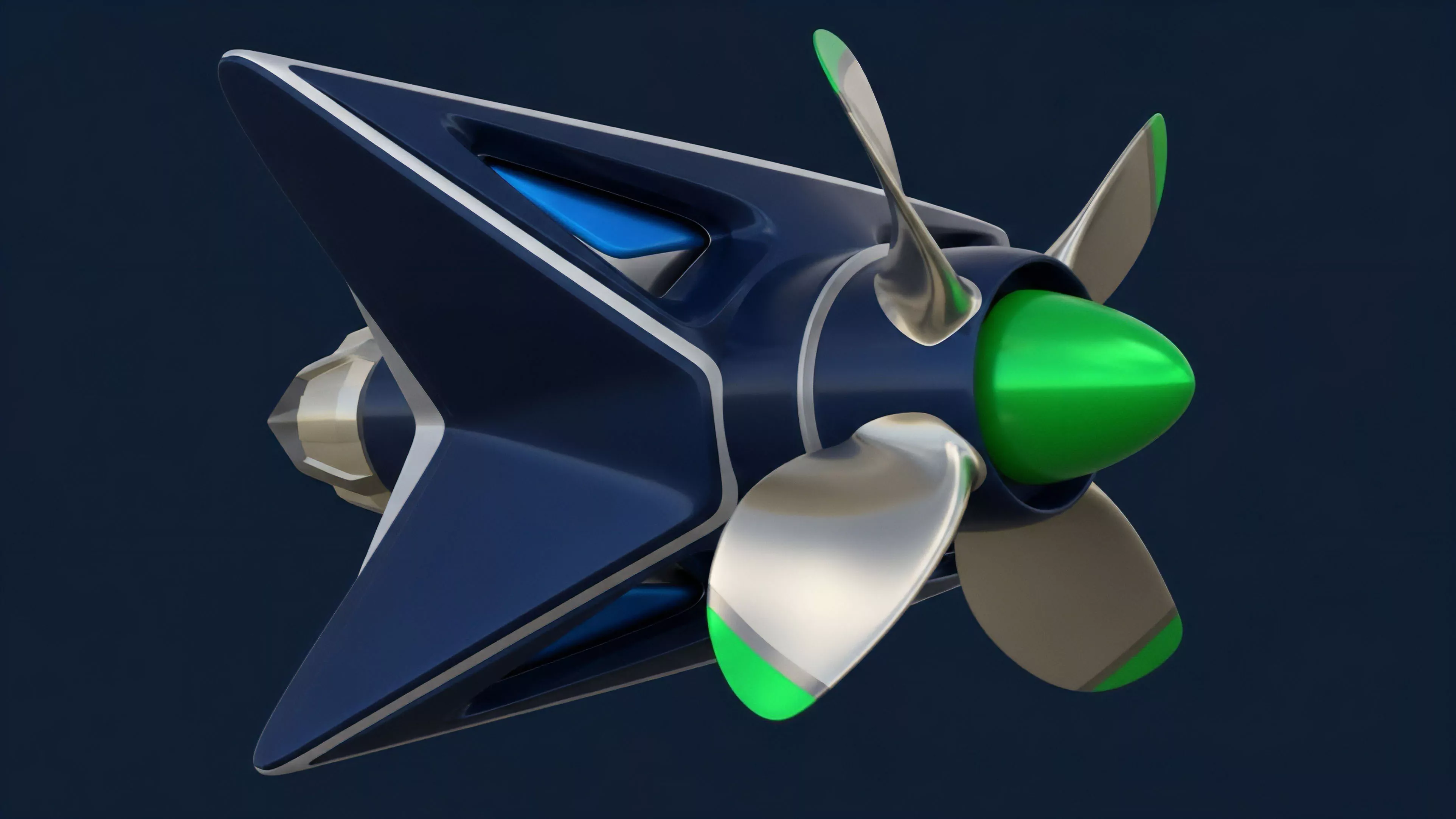

Latency arbitrage prevention acts as a systemic filter that nullifies the information advantage gained from superior physical proximity to validator nodes.

Latency arbitrage undermines market integrity by forcing liquidity providers to widen spreads, which raises trading costs for all participants. Protocols mitigate this by implementing architectural constraints that decouple the moment of trade submission from the moment of matching or execution. By imposing artificial delays or using batch-auction models, systems neutralize the value of sub-millisecond execution speeds, shifting the competitive focus from physical infrastructure to strategic risk management.

Origin

The necessity for Latency Arbitrage Prevention emerged directly from the rapid maturation of high-frequency trading techniques within traditional electronic markets, which were subsequently imported into the nascent decentralized finance landscape.

Early decentralized exchanges relied on continuous order matching models that prioritized first-come, first-served processing, inadvertently incentivizing a race for validator priority.

Technical Roots

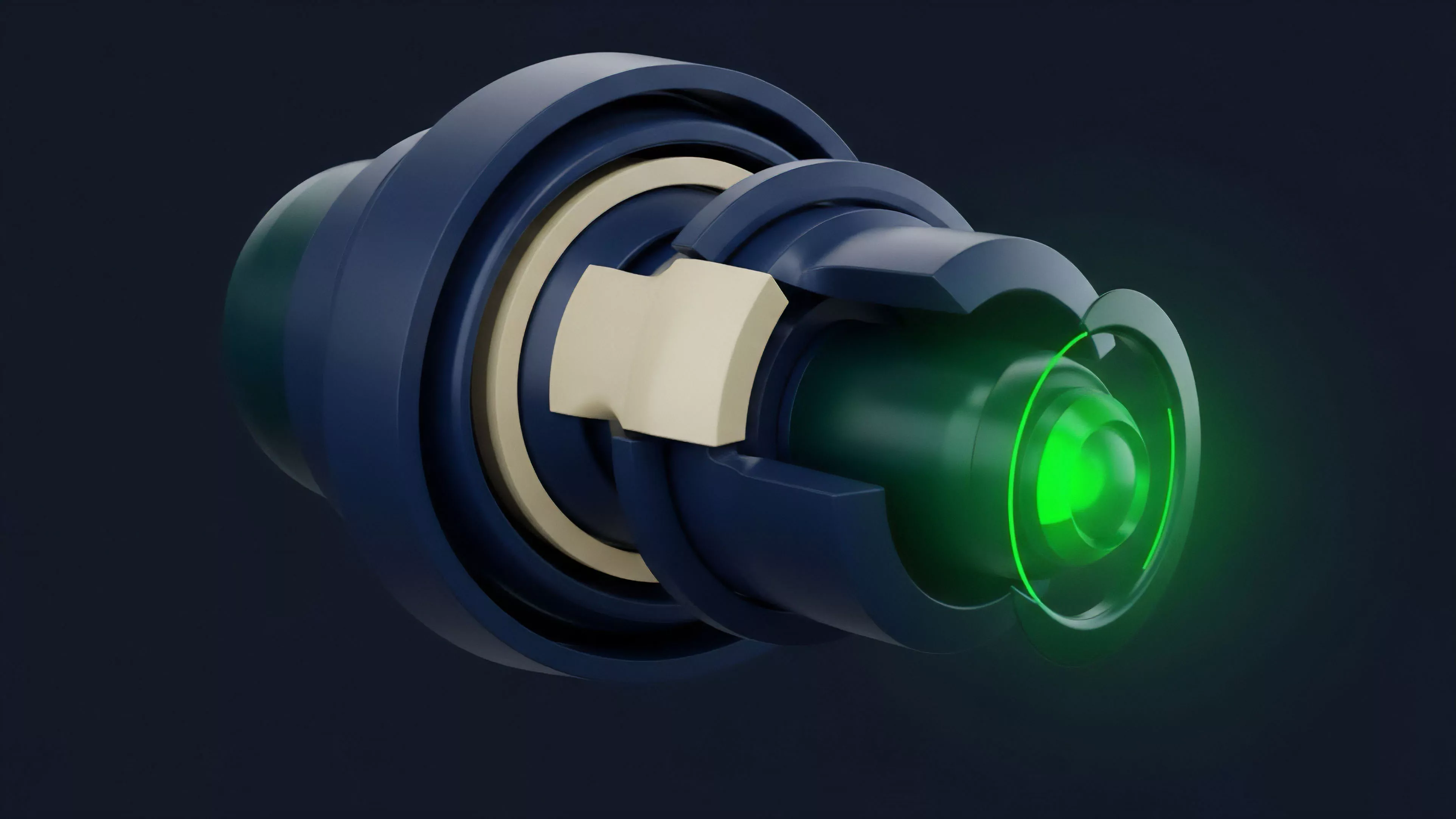

Because blockchain mempools behave as public, observable queues, pending transactions are exposed to front-running and sandwich attacks. Developers observed that actors could pay higher gas fees or operate private relay networks to ensure their orders were processed before those of retail users. This vulnerability prompted a departure from legacy matching engines, leading to the creation of protocols that prioritize fairness over raw speed.

The genesis of latency mitigation lies in the transition from sequential transaction processing to batched order settlement models.

Historical Context

Market participants observed that the lack of institutional-grade matching engines in early decentralized protocols allowed arbitrageurs to extract significant value from retail order flow. This systemic leakage necessitated a redesign of protocol physics, where the goal shifted from minimizing block times to ensuring that all transactions within a specific temporal window receive identical treatment by the matching engine.

Theory

The theoretical framework of Latency Arbitrage Prevention relies on the transformation of continuous time into discrete intervals. By aggregating orders over a fixed duration, protocols create a level playing field where individual submission timing within that interval becomes irrelevant to the final execution price.
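The discretization described above can be sketched in a few lines. This is a minimal illustration, not any protocol's actual matching engine: the `Order` fields, the `group_into_batches` and `clearing_price` helpers, and the 100 ms window are all assumptions introduced here. Orders are bucketed by integer division of their timestamp, and each bucket clears at a single price chosen to maximize matched volume (ties go to the lowest candidate price, which is an arbitrary choice for the sketch).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    side: str    # "buy" or "sell"
    price: int   # limit price in ticks (hypothetical unit)
    qty: int     # order quantity
    ts_ms: int   # submission time in milliseconds


def group_into_batches(orders, window_ms):
    """Map each order to a discrete interval: batch id = ts_ms // window_ms."""
    batches = defaultdict(list)
    for order in orders:
        batches[order.ts_ms // window_ms].append(order)
    return dict(batches)


def clearing_price(batch):
    """Return (price, volume) maximizing matched volume, or None if no cross.

    Timestamps are never consulted here, so arrival order inside the
    window cannot affect the result.
    """
    best = None
    for price in sorted({o.price for o in batch}):
        demand = sum(o.qty for o in batch if o.side == "buy" and o.price >= price)
        supply = sum(o.qty for o in batch if o.side == "sell" and o.price <= price)
        volume = min(demand, supply)
        if volume > 0 and (best is None or volume > best[1]):
            best = (price, volume)
    return best


orders = [
    Order("buy", 101, 5, ts_ms=10),
    Order("sell", 100, 4, ts_ms=20),
    Order("sell", 99, 4, ts_ms=50),
    Order("buy", 100, 5, ts_ms=90),
    Order("buy", 102, 1, ts_ms=150),  # falls into the next window
]
batches = group_into_batches(orders, window_ms=100)
result = clearing_price(batches[0])
```

Because `clearing_price` ignores `ts_ms`, reordering submissions within the same window leaves the clearing price and volume unchanged, which is the property the paragraph above relies on.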

Order Flow Dynamics

The following components define the mechanics of current prevention strategies:

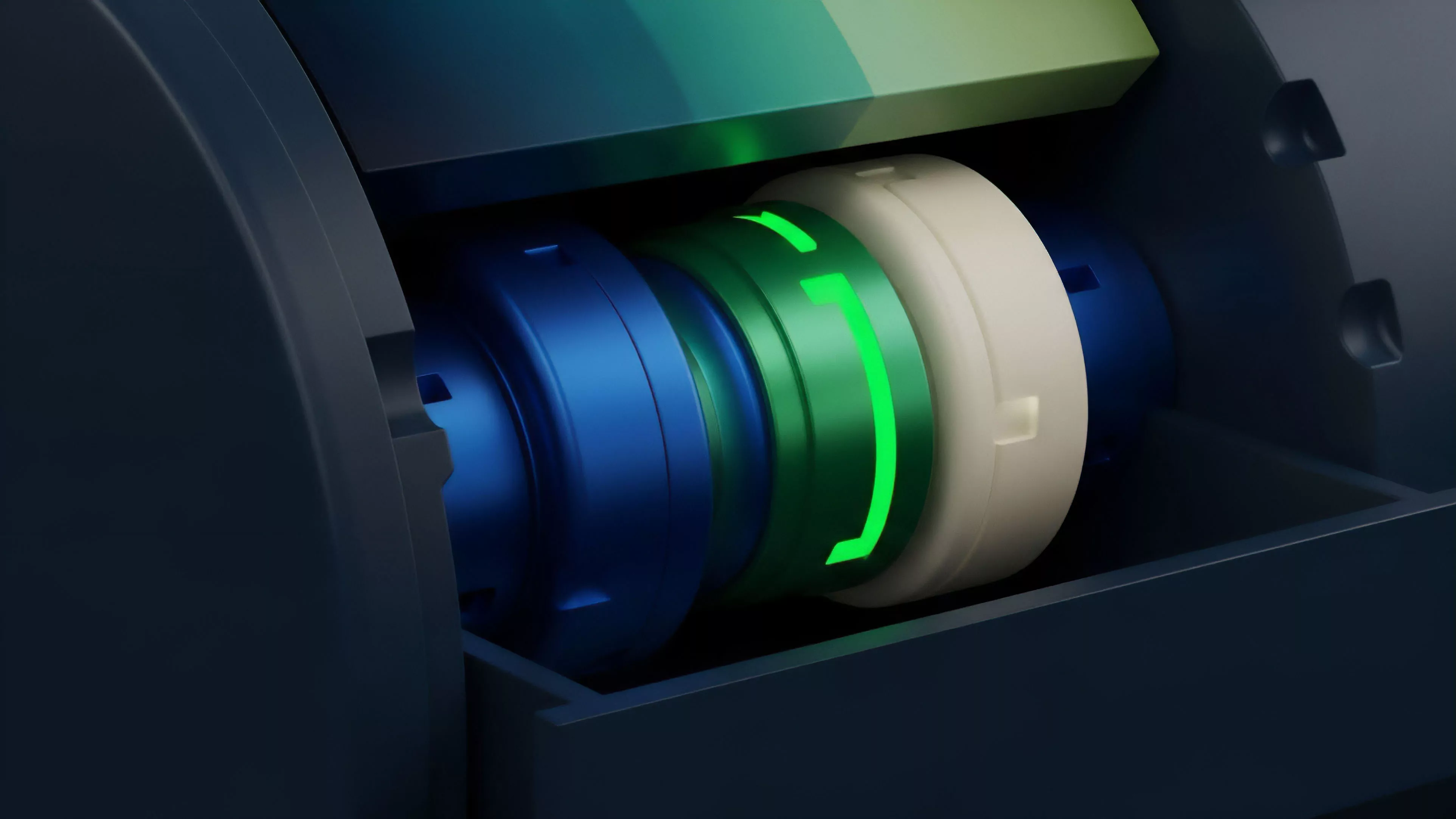

- Batch Auctions aggregate all buy and sell orders submitted within a defined timeframe, calculating a uniform clearing price that maximizes volume.

- Commit-Reveal Schemes require participants to submit a hashed or encrypted commitment to each order, which is revealed and matched only after a specific block height, preventing pre-trade visibility.

- Frequent Batch Auctions operate by clearing the market at regular intervals, such as every hundred milliseconds, effectively removing the advantage of microsecond-level speed.
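To make the commit-reveal idea from the list above concrete, here is a minimal sketch. It uses a salted SHA-256 hash commitment rather than encryption, which is a simpler stand-in for illustration; the `CommitRevealBook` class, its method names, and the string order format are hypothetical and not taken from any specific protocol.

```python
import hashlib
import secrets


def make_commitment(order: str, salt: bytes) -> str:
    """Salted hash commitment: hides the order until the salt is disclosed."""
    return hashlib.sha256(salt + order.encode("utf-8")).hexdigest()


class CommitRevealBook:
    """Accepts commitments before reveal_height and reveals at or after it."""

    def __init__(self, reveal_height: int):
        self.reveal_height = reveal_height
        self.commitments = {}  # trader -> commitment hex digest
        self.revealed = {}     # trader -> plaintext order

    def commit(self, trader: str, commitment: str, height: int) -> None:
        if height >= self.reveal_height:
            raise ValueError("commit phase is closed")
        self.commitments[trader] = commitment

    def reveal(self, trader: str, order: str, salt: bytes, height: int) -> None:
        if height < self.reveal_height:
            raise ValueError("reveal phase has not started")
        expected = self.commitments.get(trader)
        actual = make_commitment(order, salt)
        if expected is None or not secrets.compare_digest(expected, actual):
            raise ValueError("reveal does not match commitment")
        self.revealed[trader] = order


book = CommitRevealBook(reveal_height=100)
salt = secrets.token_bytes(16)
book.commit("alice", make_commitment("buy 5 @ 101", salt), height=98)
book.reveal("alice", "buy 5 @ 101", salt, height=100)
```

During the commit phase observers see only a digest, so there is nothing to front-run; a trader who tries to reveal a different order than the one committed is rejected. A production design would also need to handle traders who never reveal, which this sketch omits.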

Quantitative Sensitivity

The efficacy of these mechanisms is measured by the reduction in Adverse Selection experienced by liquidity providers. When arbitrage is effectively suppressed, the variance in execution prices across different nodes decreases, and the Bid-Ask Spread narrows as providers no longer need to price in the cost of being picked off by faster agents.

| Strategy | Mechanism | Impact on Arbitrage |
| --- | --- | --- |
| Batching | Time-window aggregation | High |
| Encryption | Commit-reveal protocol | Moderate |
| Randomization | Sequence shuffling | Low |

The mathematical model assumes that if the time window is sufficiently small to prevent significant price drift but large enough to include multiple participants, the market reaches a state of Information Equilibrium. This is where the physics of the protocol forces participants to compete on price rather than speed. Occasionally, one considers the sociological dimension: our desire for speed is perhaps a relic of a pre-distributed reality, yet here we are, fighting for nanoseconds in a system designed for global synchronization.

Approach

Current implementation strategies for Latency Arbitrage Prevention focus on embedding fairness directly into the smart contract layer.

Instead of relying on off-chain relayers, modern protocols utilize Programmable Liquidity to manage execution.

Systemic Implementation

Protocols currently deploy the following techniques to maintain market integrity:

- Time-Weighted Average Price models are used to determine settlement values, smoothing out volatility spikes that attract arbitrageurs.

- Dynamic Fee Structures increase the cost of high-frequency order cancellation, discouraging participants from spamming the network to probe for liquidity.

- Virtual Automated Market Makers allow for the synthetic representation of liquidity, providing a buffer that absorbs small-scale arbitrage attempts without impacting the broader price discovery process.
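As an illustration of the first technique in the list above, the following sketch computes a time-weighted average price over a window. The `twap` function and the sample observations are assumptions made for this example; it treats each observed price as holding until the next observation, which is one common convention rather than a universal definition.

```python
def twap(observations, start, end):
    """Time-weighted average price over [start, end].

    observations: iterable of (timestamp, price); each price is assumed
    to hold until the next observation (or until `end` for the last one).
    """
    if end <= start:
        raise ValueError("window must have positive length")
    obs = sorted(observations)
    if not obs or obs[0][0] > start:
        raise ValueError("no price known at window start")

    weighted_sum = 0.0
    for i, (t, price) in enumerate(obs):
        next_t = obs[i + 1][0] if i + 1 < len(obs) else end
        seg_start = max(t, start)
        seg_end = min(next_t, end)
        if seg_end > seg_start:  # skip segments outside the window
            weighted_sum += price * (seg_end - seg_start)
    return weighted_sum / (end - start)


# A brief spike to 130 lasts 10 of the 60 time units in the window.
prices = [(0, 100), (10, 100), (50, 130), (60, 100)]
settlement = twap(prices, start=0, end=60)
spot_peak = max(p for _, p in prices)
```

In this sample the spike to 130 moves the settlement value only to 105, so an arbitrageur reacting to the momentary spot price gains little from being first.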

The current state of market architecture prioritizes protocol-enforced fairness over the unfettered speed of legacy electronic trading venues.

Operational Trade-Offs

Architects must balance the need for low latency with the requirement for order fairness. Implementing long batch windows improves protection against arbitrage but introduces Execution Risk for traders, as prices may shift significantly before the batch is cleared. Successful protocols optimize this duration to align with the underlying volatility of the asset class, ensuring that the cost of protection does not outweigh the benefits of reduced slippage.
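One way to reason about this trade-off is a back-of-the-envelope volatility calculation. The sketch below assumes prices follow a driftless random walk, so the typical price move over a window scales with the square root of its length; that model, the function names, and the sample numbers are assumptions for illustration, not parameters from any deployed protocol.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def expected_drift(annual_vol: float, window_s: float) -> float:
    """Typical fractional price move over one batch window (random-walk assumption)."""
    return annual_vol * math.sqrt(window_s / SECONDS_PER_YEAR)


def max_window_s(annual_vol: float, drift_tolerance: float) -> float:
    """Longest window whose expected drift stays within drift_tolerance."""
    return (drift_tolerance / annual_vol) ** 2 * SECONDS_PER_YEAR


# Hypothetical inputs: tolerate 1 basis point of drift per batch.
volatile_asset = max_window_s(annual_vol=0.80, drift_tolerance=0.0001)
calm_asset = max_window_s(annual_vol=0.20, drift_tolerance=0.0001)
```

Under these assumptions, quadrupling volatility shrinks the tolerable window by a factor of sixteen, which is why a single fixed batch duration is unlikely to suit every asset class.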

Evolution

The progression of Latency Arbitrage Prevention has moved from simple, reactive measures to proactive, consensus-level protocols.

Initially, developers attempted to mitigate arbitrage through off-chain matching, but this introduced central points of failure and trust requirements.

Structural Shifts

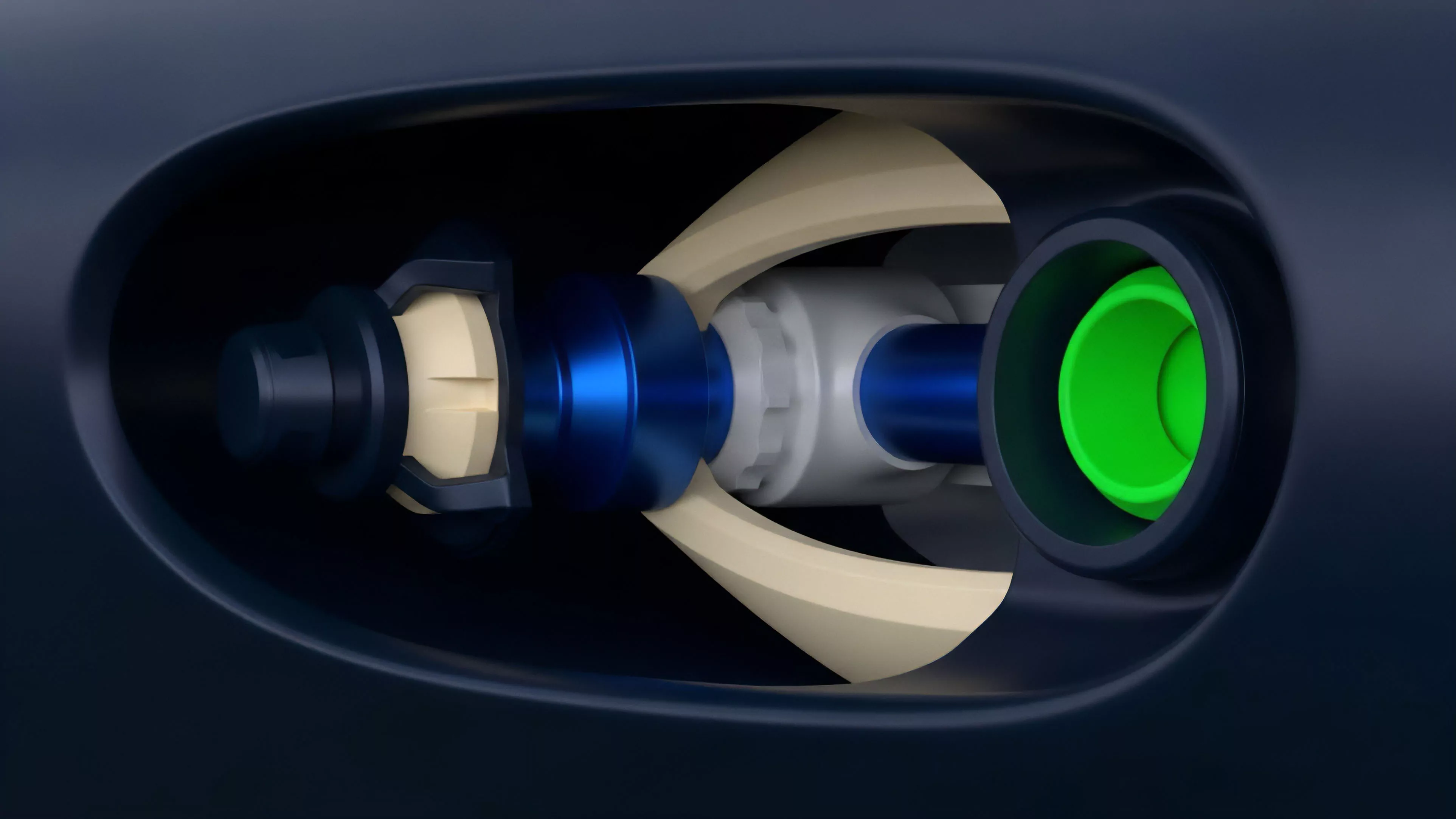

The industry has transitioned through three distinct phases:

- Phase One involved basic gas auction mechanisms where users competed purely on transaction fees to gain priority.

- Phase Two introduced private mempools and relayers, attempting to hide order flow from public observation.

- Phase Three represents the current standard, where protocol-level batching and consensus-driven ordering provide deterministic fairness for all participants.

This evolution demonstrates a clear trend toward decentralizing the order matching process itself. By moving the matching logic into the Consensus Layer, protocols ensure that no single validator can manipulate the sequence of trades for personal gain. This shift is essential for the scaling of decentralized derivatives, as it allows for deeper liquidity without the constant threat of predatory extraction.

Horizon

The future of Latency Arbitrage Prevention lies in applying Zero-Knowledge Proofs to private, verifiable order matching.

By enabling participants to prove their orders are valid without revealing the contents until the point of execution, protocols could eliminate the visibility that currently fuels arbitrage.

Systemic Trajectory

Future research focuses on:

- Cross-Chain Atomic Settlement, which would synchronize liquidity across multiple networks and prevent the arbitrage that inter-chain latency currently enables.

- Hardware-Accelerated Cryptography that will enable complex matching algorithms to run at speeds previously thought impossible without sacrificing security.

- Automated Market Governance where parameters like batch window duration adjust in real-time based on network congestion and market volatility metrics.

The ultimate goal is the creation of a global, unified liquidity layer where the concept of physical location is entirely abstracted away. As these technologies mature, the distinction between decentralized and traditional finance will blur, with the former providing superior transparency and fairness through cryptographic, rather than legal, enforcement.