Essence

The Internal Models Approach replaces standardized, formula-based capital charges with risk measures computed by the protocol's own models. It allows decentralized derivative venues to calculate capital requirements based on proprietary sensitivity analysis rather than static, one-size-fits-all coefficients. This mechanism centers on the granular measurement of Delta, Gamma, Vega, and Theta (sensitivity to price, to the rate of change of Delta, to volatility, and to the passage of time, respectively) to determine collateral sufficiency within automated market makers or order book architectures.

The Internal Models Approach uses bespoke risk sensitivities to calibrate collateral requirements against real-time market volatility.

By shifting the burden of proof from generic formulas to evidence-based simulation, protocols optimize capital efficiency for liquidity providers. The approach is only as sound as the underlying stochastic volatility models and the Monte Carlo simulations used to stress-test portfolio exposure. When implemented correctly, this framework aligns individual liquidity provider incentives with the broader solvency requirements of the protocol.

Origin

The lineage of this methodology traces back to the Basel framework. The 1996 Market Risk Amendment first allowed banks to compute market-risk capital from their own Value at Risk models, and the Basel II Accord extended the idea with internal ratings-based systems for credit risk. In the digital asset space, this framework was adapted to address the limitations of simplistic initial margin requirements that failed to account for the non-linear risk profiles inherent in crypto-native options. Early decentralized finance protocols relied on constant product formulas, but the emergence of complex derivatives necessitated a shift toward more sophisticated risk management.

- Basel Accords established the foundational requirement for financial entities to justify their risk capital allocation through quantitative proof.

- Crypto Derivatives adoption created an urgent need to move beyond static leverage limits to dynamic, sensitivity-based assessments.

- Protocol Architecture evolved as developers recognized that generic margin systems hindered capital efficiency and increased the likelihood of cascade liquidations.

Decentralized systems adopt established institutional risk frameworks to replace rigid leverage caps with dynamic, data-driven margin requirements.

Theory

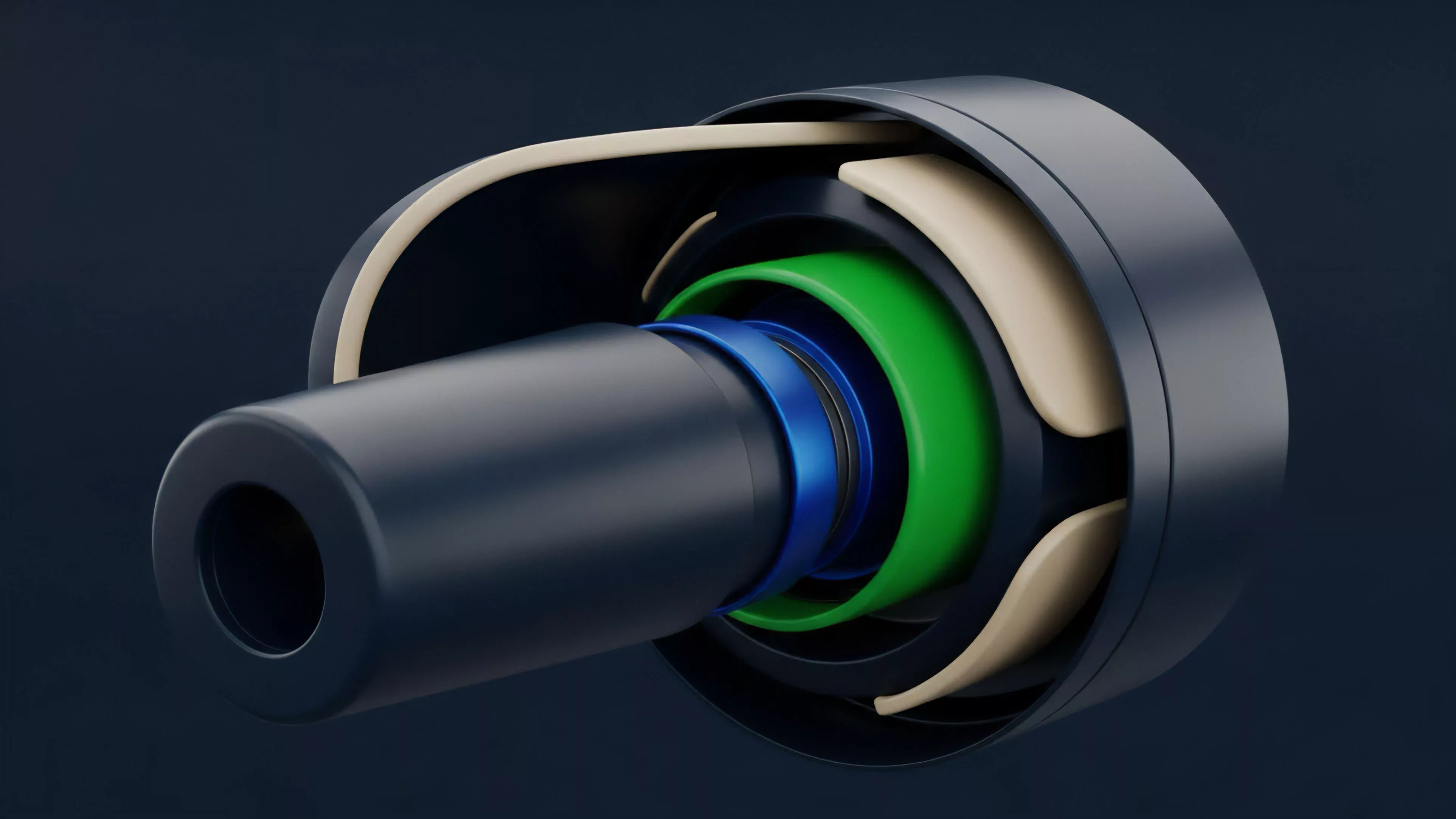

The Internal Models Approach relies on the rigorous application of quantitative finance to model the probability distribution of asset price paths. Protocols construct a Value at Risk framework that estimates the loss a portfolio is not expected to exceed over a specific time horizon at a defined confidence level; it is a quantile of the loss distribution, not a true maximum loss. This involves integrating Black-Scholes-Merton sensitivities into the smart contract logic so that collateral buffers adjust in real time as market conditions shift.
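As an illustration of the sensitivities mentioned above, the following is a minimal sketch of the closed-form Black-Scholes-Merton Greeks for a European call with no dividends. The function name `bsm_call_greeks` and its parameters are assumptions made for this example, not part of any particular protocol, and a production margin engine would likely use fixed-point arithmetic rather than floats.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_greeks(spot: float, strike: float, rate: float,
                    vol: float, t_years: float) -> dict:
    """Greeks of a European call under Black-Scholes-Merton (no dividends).

    vol and rate are annualized; theta is per year, vega is per 1.00
    (100 vol points) change in volatility.
    """
    if min(spot, strike, vol, t_years) <= 0.0:
        raise ValueError("spot, strike, vol and t_years must be positive")
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    pdf_d1 = _norm_pdf(d1)
    return {
        "delta": _norm_cdf(d1),
        "gamma": pdf_d1 / (spot * vol * sqrt_t),
        "vega": spot * pdf_d1 * sqrt_t,
        "theta": (-spot * pdf_d1 * vol / (2.0 * sqrt_t)
                  - rate * strike * math.exp(-rate * t_years) * _norm_cdf(d2)),
    }


if __name__ == "__main__":
    # Hypothetical at-the-money option: 80% annualized vol, three months to expiry.
    print(bsm_call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.8, t_years=0.25))
```

Portfolio-level Greeks are then the position-weighted sums of these per-contract values, which is what a margin engine would compare against collateral.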

The design treats the protocol as an adversarial environment where liquidation thresholds must be robust enough to withstand rapid deleveraging events. The interplay between market microstructure and consensus latency remains the primary challenge in executing these models. If computing risk takes longer than the market takes to discover a new price, margin decisions rest on stale data and the system becomes vulnerable to arbitrage exploits.

| Component | Function | Risk Impact |
|---|---|---|
| Sensitivity Mapping | Quantifies portfolio exposure to price and volatility shifts | Reduces probability of under-collateralization |
| Stochastic Simulation | Generates potential future price scenarios | Addresses tail-risk and black-swan events |
| Liquidation Engine | Triggers automated asset seizure upon threshold breach | Prevents systemic contagion and insolvency |

Mathematical modeling here is rarely static. The models often incorporate Jump-Diffusion processes to account for the discontinuous price movements frequently observed in digital asset markets. This represents a divergence from traditional equity markets where price action is more continuous, necessitating a higher frequency of model re-calibration.
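To make the jump-diffusion idea concrete, here is a minimal sketch of a Monte Carlo Value at Risk estimate under a Merton-style jump-diffusion. All function names and parameter values are illustrative assumptions. The drift is compensated so the expected gross return is 1, and the jump count is drawn with Knuth's Poisson sampler, which is only suitable when the expected number of jumps per horizon is small.

```python
import math
import random


def _poisson(rng: random.Random, lam: float) -> int:
    """Knuth's Poisson sampler; adequate for small lam."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def simulate_returns(n_paths: int, horizon_years: float, vol: float,
                     jump_rate: float, jump_mean: float, jump_std: float,
                     seed: int = 0) -> list:
    """Simple returns over the horizon under a Merton-style jump-diffusion.

    jump_rate is the expected number of jumps per year; jump sizes are
    normally distributed in log space. Drift is set so E[gross return] = 1.
    """
    rng = random.Random(seed)
    kappa = math.exp(jump_mean + 0.5 * jump_std ** 2) - 1.0  # mean relative jump
    drift = (-0.5 * vol ** 2 - jump_rate * kappa) * horizon_years
    returns = []
    for _ in range(n_paths):
        diffusion = vol * math.sqrt(horizon_years) * rng.gauss(0.0, 1.0)
        n_jumps = _poisson(rng, jump_rate * horizon_years)
        jumps = sum(rng.gauss(jump_mean, jump_std) for _ in range(n_jumps))
        returns.append(math.exp(drift + diffusion + jumps) - 1.0)
    return returns


def value_at_risk(pnl: list, confidence: float) -> float:
    """Empirical VaR: the loss quantile at the given confidence level."""
    if not pnl or not 0.0 < confidence < 1.0:
        raise ValueError("need a non-empty sample and 0 < confidence < 1")
    losses = sorted(-x for x in pnl)
    idx = min(len(losses) - 1, math.ceil(confidence * len(losses)) - 1)
    return max(0.0, losses[idx])


if __name__ == "__main__":
    # Hypothetical one-day horizon, 80% annualized diffusion volatility.
    plain = simulate_returns(20_000, 1 / 365, 0.8, 0.0, 0.0, 0.0, seed=1)
    jumpy = simulate_returns(20_000, 1 / 365, 0.8, 50.0, -0.10, 0.10, seed=1)
    print("99% VaR without jumps:", value_at_risk(plain, 0.99))
    print("99% VaR with jumps:   ", value_at_risk(jumpy, 0.99))
```

With downward-biased jumps the tail quantile moves out much further than the diffusion volatility alone would suggest, which is why a pure diffusion model can understate margin for digital assets.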

Approach

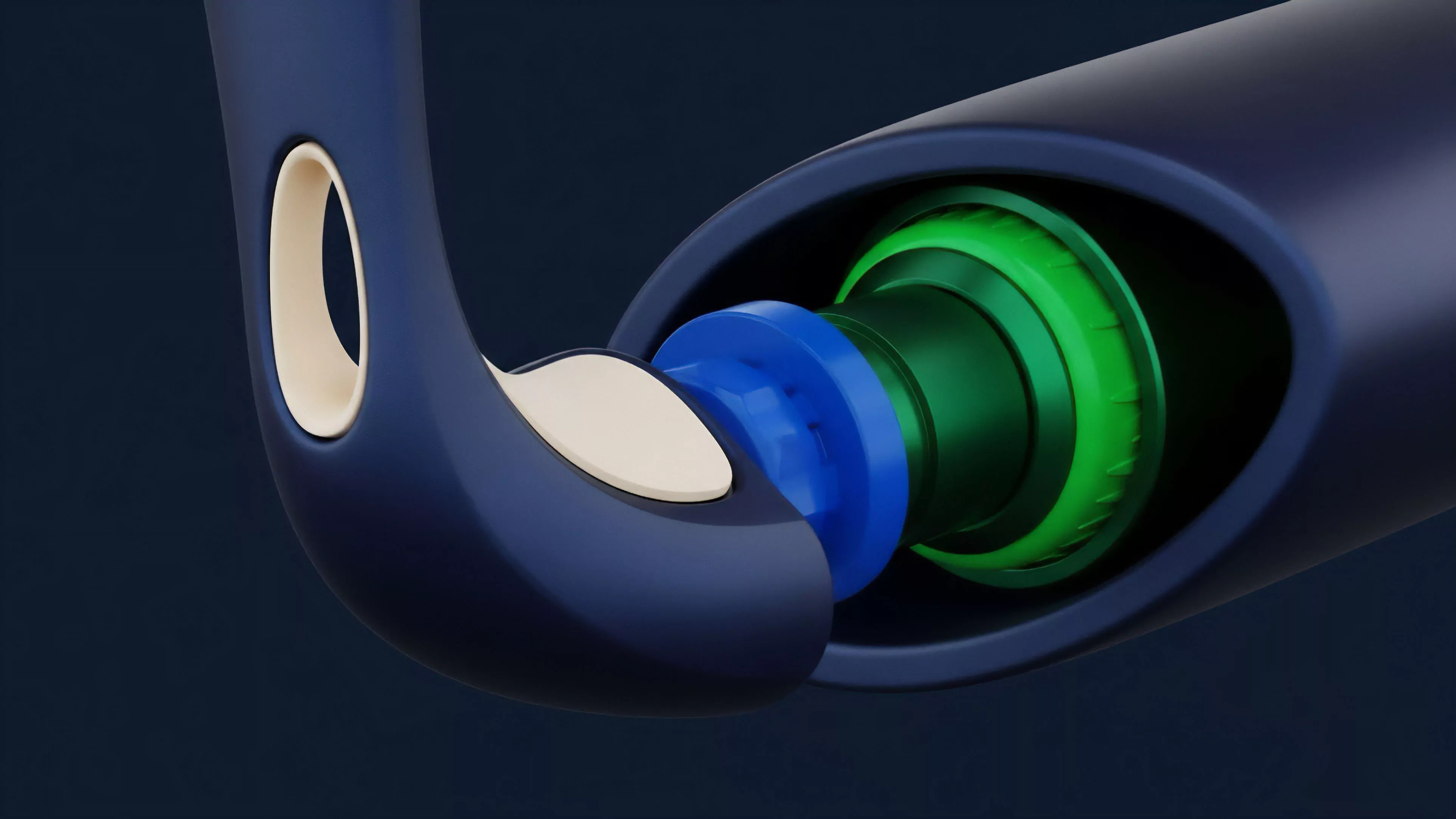

Current implementations prioritize the automation of margin engine calculations within the smart contract execution environment. Developers employ on-chain oracle feeds to provide the high-frequency data necessary for the models to function. The focus remains on maintaining capital efficiency while simultaneously protecting the protocol against insolvency risks during high-volatility regimes.

Real-time risk assessment requires seamless integration between high-frequency oracle data and on-chain margin computation engines.

The operational flow follows a distinct lifecycle within the protocol:

- Data Ingestion: Aggregation of price, volatility, and order book depth via decentralized oracles.

- Sensitivity Calculation: Execution of proprietary algorithms to determine the current portfolio Greeks.

- Margin Verification: Comparison of collateral value against the calculated risk exposure.

- Automated Enforcement: Execution of liquidations if the margin balance falls below the required threshold.
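The lifecycle above can be sketched in a few lines. This is a hedged illustration, not a reference implementation: it assumes the oracle step has already produced a spot price and the portfolio Greeks, approximates scenario losses with a delta-gamma-vega expansion, and uses a hypothetical shock grid. The names `PortfolioGreeks`, `required_margin`, and `should_liquidate` are invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortfolioGreeks:
    delta: float  # change in value per unit move in the underlying
    gamma: float  # change in delta per unit move in the underlying
    vega: float   # change in value per 1.00 change in implied volatility


def scenario_pnl(g: PortfolioGreeks, d_spot: float, d_vol: float) -> float:
    """Second-order (delta-gamma-vega) approximation of the P&L for one shock."""
    return g.delta * d_spot + 0.5 * g.gamma * d_spot ** 2 + g.vega * d_vol


def required_margin(g: PortfolioGreeks, spot: float,
                    spot_shocks: tuple, vol_shocks: tuple) -> float:
    """Margin verification input: the worst loss across the shock grid."""
    worst = min(scenario_pnl(g, spot * ds, dv)
                for ds in spot_shocks for dv in vol_shocks)
    return max(0.0, -worst)


def should_liquidate(collateral: float, g: PortfolioGreeks, spot: float,
                     spot_shocks: tuple = (-0.2, -0.1, 0.0, 0.1, 0.2),
                     vol_shocks: tuple = (-0.2, 0.0, 0.2)) -> bool:
    """Automated enforcement: flag the account when collateral is insufficient."""
    return collateral < required_margin(g, spot, spot_shocks, vol_shocks)


if __name__ == "__main__":
    # Hypothetical short-option book: short delta, short gamma, short vega.
    book = PortfolioGreeks(delta=-0.6, gamma=-0.01, vega=-20.0)
    print(required_margin(book, 100.0, (-0.2, -0.1, 0.0, 0.1, 0.2), (-0.2, 0.0, 0.2)))
    print(should_liquidate(15.0, book, spot=100.0))
```

A scenario grid is one of several ways to turn Greeks into a margin number; a simulation-based quantile could be substituted for `required_margin` without changing the enforcement step.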

This process demands extreme precision. Latency in oracle updates or a discrepancy in the pricing model lets positions be margined against stale or mispriced risk, which informed traders can exploit at the expense of liquidity providers. Systems architects must balance the computational overhead of complex simulations against the necessity for low-latency settlement.

Evolution

The progression of these models reflects the maturation of the digital asset market. Early designs were limited by gas constraints, which forced developers to use simplified linear approximations of risk. As layer-two scaling solutions and off-chain computation became viable, protocols gained the ability to execute more sophisticated, non-linear models that better capture the complexity of option payoffs.

The transition from centralized clearing to trustless margin systems is the defining characteristic of this era. By moving the internal model logic into transparent, auditable code, protocols mitigate the counterparty risks associated with opaque institutional systems. The integration of cross-margining across different derivative products further enhances the ability to net exposures, though it increases the risk of systemic contagion if the models fail to capture correlations during market stress.
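The netting benefit, and its dependence on correlation, can be shown with a small variance calculation. The sketch below assumes a simple parametric model in which portfolio risk is the standard deviation implied by per-product exposures, volatilities, and a correlation matrix; the function name `netted_risk` and all numbers are hypothetical.

```python
import math


def netted_risk(exposures: list, vols: list, corr: list) -> float:
    """Portfolio standard deviation: sqrt(sum_ij e_i s_i e_j s_j rho_ij)."""
    n = len(exposures)
    if len(vols) != n or len(corr) != n or any(len(row) != n for row in corr):
        raise ValueError("exposures, vols and corr must have matching dimensions")
    variance = 0.0
    for i in range(n):
        for j in range(n):
            variance += exposures[i] * vols[i] * exposures[j] * vols[j] * corr[i][j]
    return math.sqrt(max(variance, 0.0))


if __name__ == "__main__":
    # Hypothetical hedged book: long one product, short a correlated one.
    exposures = [100.0, -100.0]
    vols = [0.05, 0.05]  # one-day volatility of each product
    calm = [[1.0, 0.9], [0.9, 1.0]]      # hedge works as modeled
    stressed = [[1.0, 0.0], [0.0, 1.0]]  # correlation breaks down
    print("netted risk, correlation 0.9:", netted_risk(exposures, vols, calm))
    print("netted risk, correlation 0.0:", netted_risk(exposures, vols, stressed))
    print("sum of standalone risks:     ",
          sum(abs(e) * v for e, v in zip(exposures, vols)))
```

If margin is set from the high-correlation case and the correlation then collapses, the required margin in this toy example roughly triples, which is the contagion channel described above.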

| Generation | Model Sophistication | Settlement Speed |
|---|---|---|
| First Generation | Static Leverage Limits | Slow |
| Second Generation | Linear Sensitivity Models | Moderate |
| Third Generation | Stochastic Non-linear Simulation | Fast |

Horizon

Future iterations of the Internal Models Approach will likely integrate zero-knowledge proofs to allow protocols to verify risk calculations without revealing private portfolio data. This preserves user privacy while maintaining the integrity of the protocol’s collateralization ratios. The next logical step involves the development of autonomous risk management agents that adjust model parameters in response to changing macro-crypto correlations without human intervention.

The synthesis of behavioral game theory and quantitative finance will dictate the resilience of these systems. As protocols become more interconnected, the Internal Models Approach must evolve to account for inter-protocol risk, where the failure of one venue propagates through shared collateral or common liquidity providers. Success in this domain requires systems that are not just efficient but resilient to adversarial behavior by design.