Essence

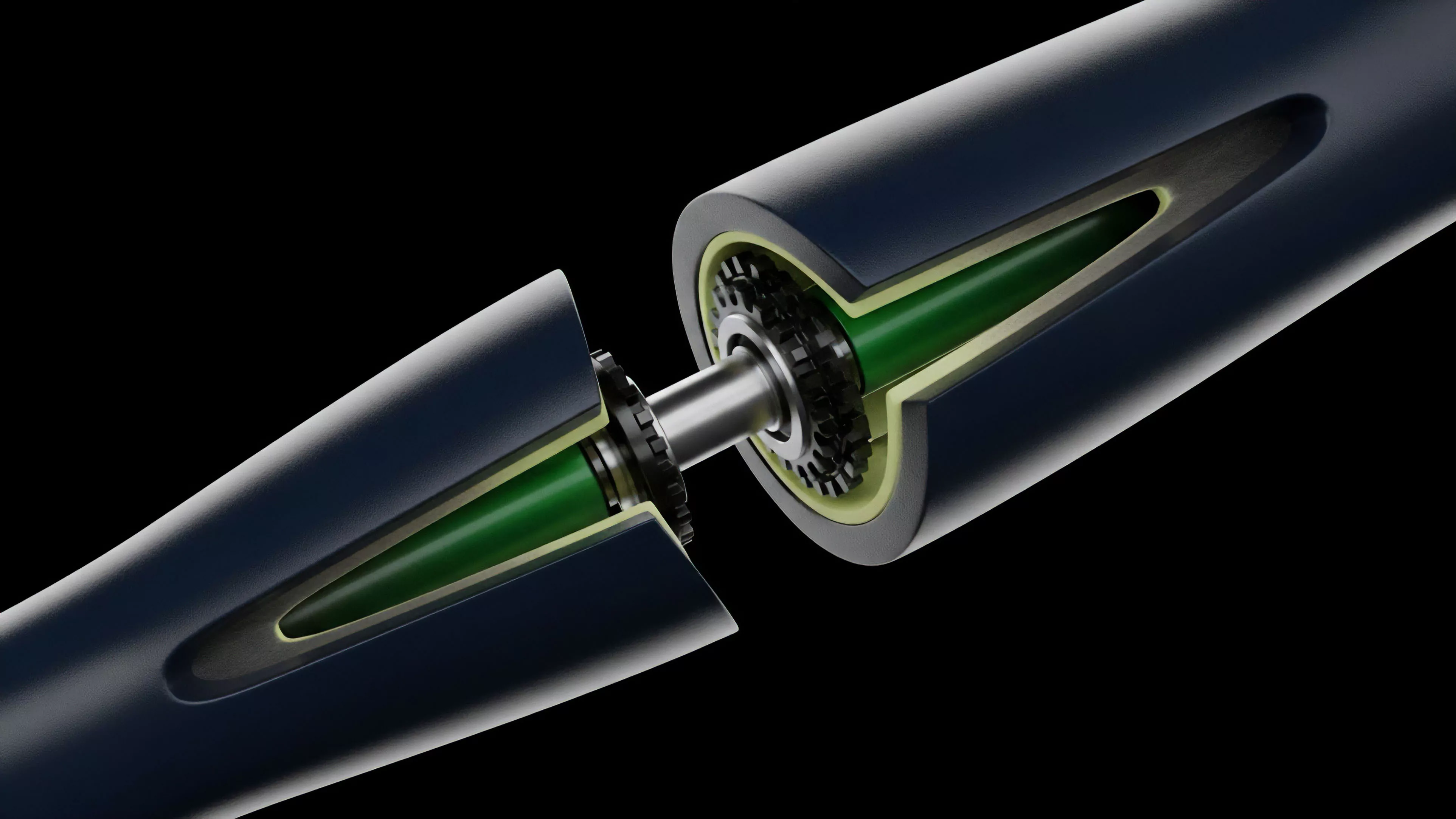

Interchain Data Availability functions as the verifiable data layer for modular blockchain architectures. It ensures that transaction data remains accessible across separate network domains, allowing light clients and rollups to confirm that the data behind each state transition was published without downloading entire blocks. This mechanism eases the fundamental tension between decentralization and scalability by decoupling the consensus process from the storage and distribution of execution data.

Interchain Data Availability provides the cryptographic proof that transaction data is published and retrievable across independent blockchain environments.

By offloading the storage requirement to a dedicated layer, this framework lets throughput grow without forcing every validator to store and serve every block in full. It acts as the anchor for trustless interoperability: execution environments can verify the validity of cross-chain messages by checking them against the data commitments published on a specialized, high-redundancy layer.

Origin

The genesis of this architecture lies in the limitations of monolithic blockchain design, where every node must process and store every transaction. Early research into sharding and data availability sampling revealed that the bottleneck for network performance was often not computation but the sheer volume of data every node had to download and store before it could agree on state validity.

Developers realized that the security of a rollup or sidechain depends on the availability of its transaction inputs. If this data is withheld or lost, users cannot reconstruct the current state or produce the fraud and exit proofs they rely on, so assets can end up frozen or an invalid state transition can go unchallenged. This realization prompted the shift toward dedicated layers designed specifically for data dissemination and availability proofs, moving away from the assumption that the settlement layer must also be the storage layer.

Theory

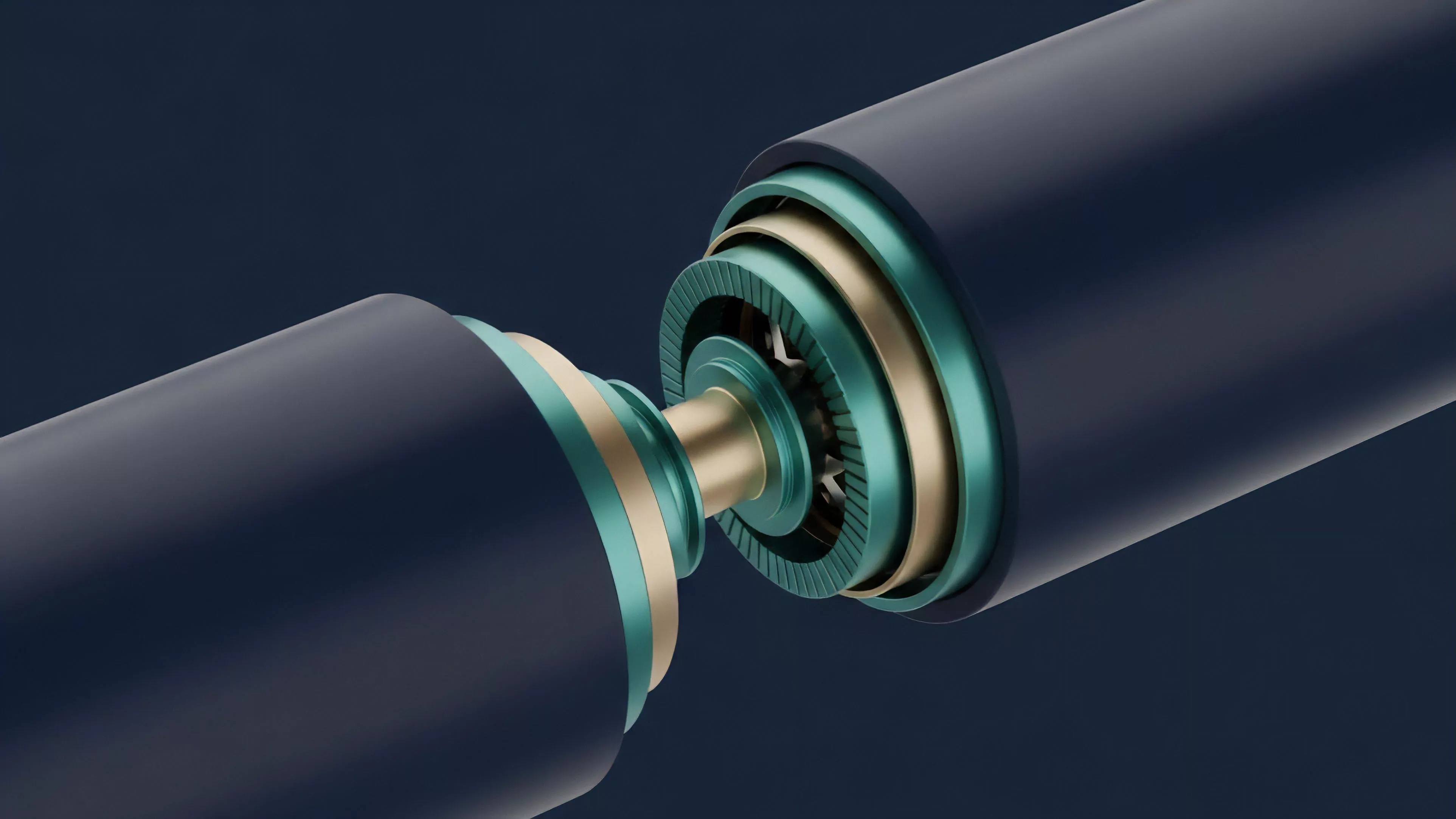

The mechanical integrity of Interchain Data Availability rests upon erasure coding and data availability sampling.

Techniques such as Reed-Solomon encoding expand the data into redundant fragments. With the common 2x extension, any half of the fragments is enough to rebuild the original, so even if a significant portion of the fragments becomes unavailable, the dataset remains reconstructible.
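To make the reconstruction property concrete, here is a minimal sketch of a Reed-Solomon-style code, assuming a small prime field (p = 2**31 - 1, chosen only for illustration) and treating k data values as evaluations of a polynomial of degree below k at x = 0..k-1. The hypothetical `extend` doubles them to 2k shares, and `reconstruct` recovers the original from any k shares by Lagrange interpolation. Production systems use different fields and much faster FFT-based codecs.

```python
# Minimal Reed-Solomon-style erasure code over a prime field (illustrative only).
P = 2**31 - 1  # assumed prime modulus; data values must be smaller than P


def lagrange_eval(points, x, p=P):
    """Evaluate at x the unique polynomial of degree < len(points) through points."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % p
                den = den * (xi - xj) % p
        # Field division = multiplication by the modular inverse (Fermat's little theorem).
        total = (total + yi * num * pow(den, p - 2, p)) % p
    return total


def extend(data, p=P):
    """Treat k values as evaluations at x = 0..k-1 and extend them to 2k shares."""
    points = list(enumerate(data))
    return [lagrange_eval(points, x, p) for x in range(2 * len(data))]


def reconstruct(shares, k, p=P):
    """Recover the original k values from any k distinct (x, y) shares."""
    if len(shares) < k:
        raise ValueError("need at least k shares to reconstruct")
    subset = shares[:k]
    return [lagrange_eval(subset, x, p) for x in range(k)]


data = [17, 42, 7, 99]
encoded = extend(data)                           # 8 shares; the first 4 equal the data
kept = [(x, encoded[x]) for x in (1, 4, 6, 7)]   # half of the shares are lost
print(reconstruct(kept, k=4) == data)            # True
```

Because the first k shares equal the original values, the code is systematic: nodes that hold the unextended data need no decoding.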

Erasure coding allows nodes to verify the availability of large datasets by sampling only a fraction of the total encoded information.

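Nodes participate in this system by requesting randomly chosen pieces of the encoded data. A single successful sample proves little, but because an adversary must withhold a large share of the erasure-coded fragments to prevent reconstruction, every random sample has a substantial chance of hitting a missing piece. If many independent samples all succeed, the probability that the block is unavailable therefore shrinks exponentially with the number of samples. This probabilistic model shifts the verification burden from a few high-bandwidth nodes to a distributed network of light clients, so block sizes can grow without requiring every participant to download every block.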
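The exponential decay can be quantified with a back-of-the-envelope model. The sketch assumes a one-dimensional 2x extension, where blocking reconstruction leaves fewer than half of the shares available, so each uniform, independent sample succeeds with probability below 1/2. The helper names are hypothetical, and two-dimensional schemes used in practice have different thresholds.

```python
def fooling_probability(samples: int, available_fraction: float = 0.5) -> float:
    """Upper bound on the chance that `samples` independent, uniformly random
    samples all succeed although the block cannot be reconstructed.
    `available_fraction` bounds the share of data an adversary can publish
    while still blocking reconstruction (just under 1/2 for a 2x code)."""
    return available_fraction ** samples


def samples_needed(target_failure: float, available_fraction: float = 0.5) -> int:
    """Smallest number of samples whose fooling probability is <= target_failure."""
    if not (0 < target_failure < 1 and 0 < available_fraction < 1):
        raise ValueError("probabilities must lie strictly between 0 and 1")
    samples = 0
    while available_fraction ** samples > target_failure:
        samples += 1
    return samples


print(fooling_probability(20))   # 9.5367431640625e-07
print(samples_needed(1e-9))      # 30
```

Thirty successful samples already push the bound below one in a billion, which is why a light client can gain strong assurance while downloading only a tiny fraction of a block.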

| Mechanism | Function |
| --- | --- |
| Erasure Coding | Ensures data redundancy through mathematical expansion |
| Sampling | Enables probabilistic verification of block availability |
| Commitment Schemes | Links execution state to verifiable data fragments |

Approach

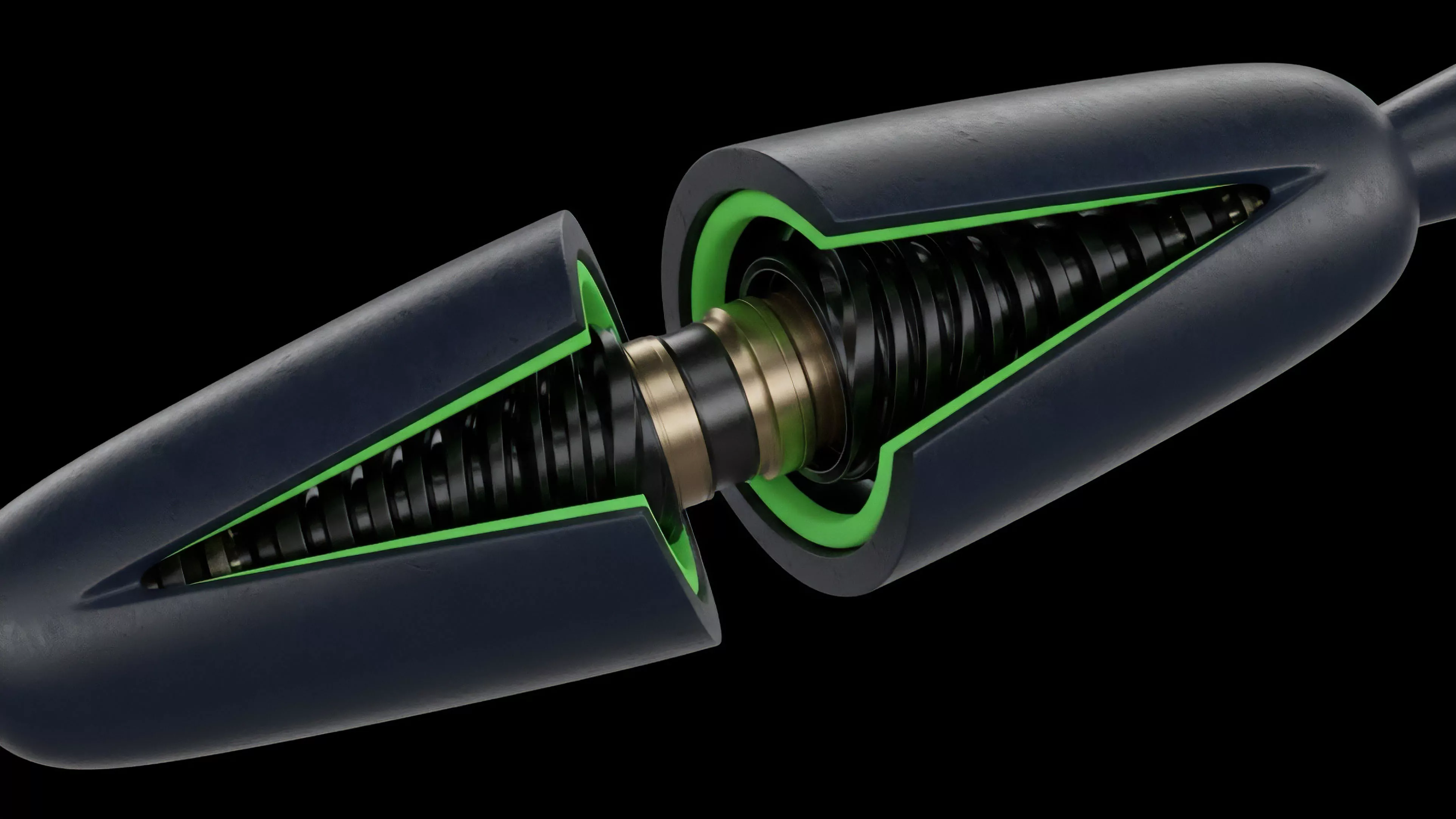

Current implementations use specialized peer-to-peer networks in which data is fragmented and broadcast. Validators or dedicated providers host these fragments, and each block's data is bound to a cryptographic commitment such as a Merkle root or a KZG polynomial commitment. These commitments allow any observer to verify that a specific piece of data belongs to a valid block without needing the entire dataset.

- Data Dissemination ensures that information is broadcast to enough participants that it survives even if some of them go offline.

- Proof Generation creates succinct cryptographic evidence that the data was indeed published.

- State Verification allows external chains to confirm that an action occurred on the source network.
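To illustrate how a commitment lets an observer check one fragment in isolation, here is a minimal binary Merkle tree over SHA-256. It is a simplified sketch: the 0x00/0x01 leaf and node prefixes and the rule of duplicating the last node on odd levels are assumptions, not any particular network's format, and KZG commitments work quite differently.

```python
import hashlib


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(fragment: bytes) -> bytes:
    return _sha256(b"\x00" + fragment)  # 0x00 prefix marks a leaf (assumed convention)


def node_hash(left: bytes, right: bytes) -> bytes:
    return _sha256(b"\x01" + left + right)  # 0x01 prefix marks an inner node


def _pad(level):
    # Assumed rule: duplicate the last node when a level has an odd count.
    return level + [level[-1]] if len(level) % 2 else level


def build_levels(fragments):
    """Return every tree level, leaves first and the one-element root level last."""
    if not fragments:
        raise ValueError("need at least one fragment")
    levels = [[leaf_hash(f) for f in fragments]]
    while len(levels[-1]) > 1:
        level = _pad(levels[-1])
        levels.append([node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def merkle_proof(levels, index):
    """Collect (sibling_hash, sibling_is_left) pairs from the leaf up to the root."""
    proof = []
    for level in levels[:-1]:
        level = _pad(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(root, fragment, proof):
    """Recompute the root from one fragment and its proof; no other data is needed."""
    acc = leaf_hash(fragment)
    for sibling, sibling_is_left in proof:
        acc = node_hash(sibling, acc) if sibling_is_left else node_hash(acc, sibling)
    return acc == root


fragments = [b"frag-0", b"frag-1", b"frag-2", b"frag-3", b"frag-4"]
levels = build_levels(fragments)
root = levels[-1][0]
proof = merkle_proof(levels, 2)
print(verify(root, b"frag-2", proof))    # True
print(verify(root, b"tampered", proof))  # False
```

The proof grows with the logarithm of the number of fragments, so an external chain holding only the 32-byte root can check a fragment without seeing the rest of the block.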

This approach transforms the role of the validator from a bottlenecked processor into a decentralized archivist. It creates a market for storage where the cost of data availability is separated from the cost of compute, allowing for more efficient resource allocation across the interchain.
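Ethereum's EIP-4844 offers one concrete example of pricing data separately from compute: blob data has its own base fee, driven by an `excess_blob_gas` counter rather than by execution gas. The sketch below follows the `fake_exponential` and excess-gas update from that EIP using its originally specified constants; later network upgrades adjusted some of them, so treat the numbers as illustrative. The `simulate` helper is hypothetical.

```python
# Constants as originally specified in EIP-4844 (later upgrades changed some).
MIN_BASE_FEE_PER_BLOB_GAS = 1                   # wei
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB    # 393216
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB       # 786432


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer Taylor-series approximation of factor * e ** (numerator / denominator)."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def next_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Blob gas used above the target accumulates; usage below it drains the excess."""
    return max(parent_excess + parent_blob_gas_used - TARGET_BLOB_GAS_PER_BLOCK, 0)


def blob_base_fee(excess_blob_gas: int) -> int:
    """Price per unit of blob gas, computed independently of the execution base fee."""
    return fake_exponential(MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas,
                            BLOB_BASE_FEE_UPDATE_FRACTION)


def simulate(blob_gas_per_block):
    """Return the blob base fee after each block in a sequence of blob-gas usage."""
    excess, fees = 0, []
    for used in blob_gas_per_block:
        excess = next_excess_blob_gas(excess, used)
        fees.append(blob_base_fee(excess))
    return fees


# 100 full blocks, then 100 empty ones: the data fee climbs while demand exceeds
# the target and falls back once it does not.
fees = simulate([MAX_BLOB_GAS_PER_BLOCK] * 100 + [0] * 100)
print(fees[0], fees[99], fees[-1])
```

Nothing in this calculation touches execution gas, which is the sense in which the cost of data availability is separated from the cost of compute.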

Evolution

The transition from monolithic architectures to modular stacks is one of the most significant shifts in decentralized systems. Initially, chains were self-contained silos, each managing its own security and storage.

This created extreme fragmentation and forced users to pay for redundant storage across dozens of networks.

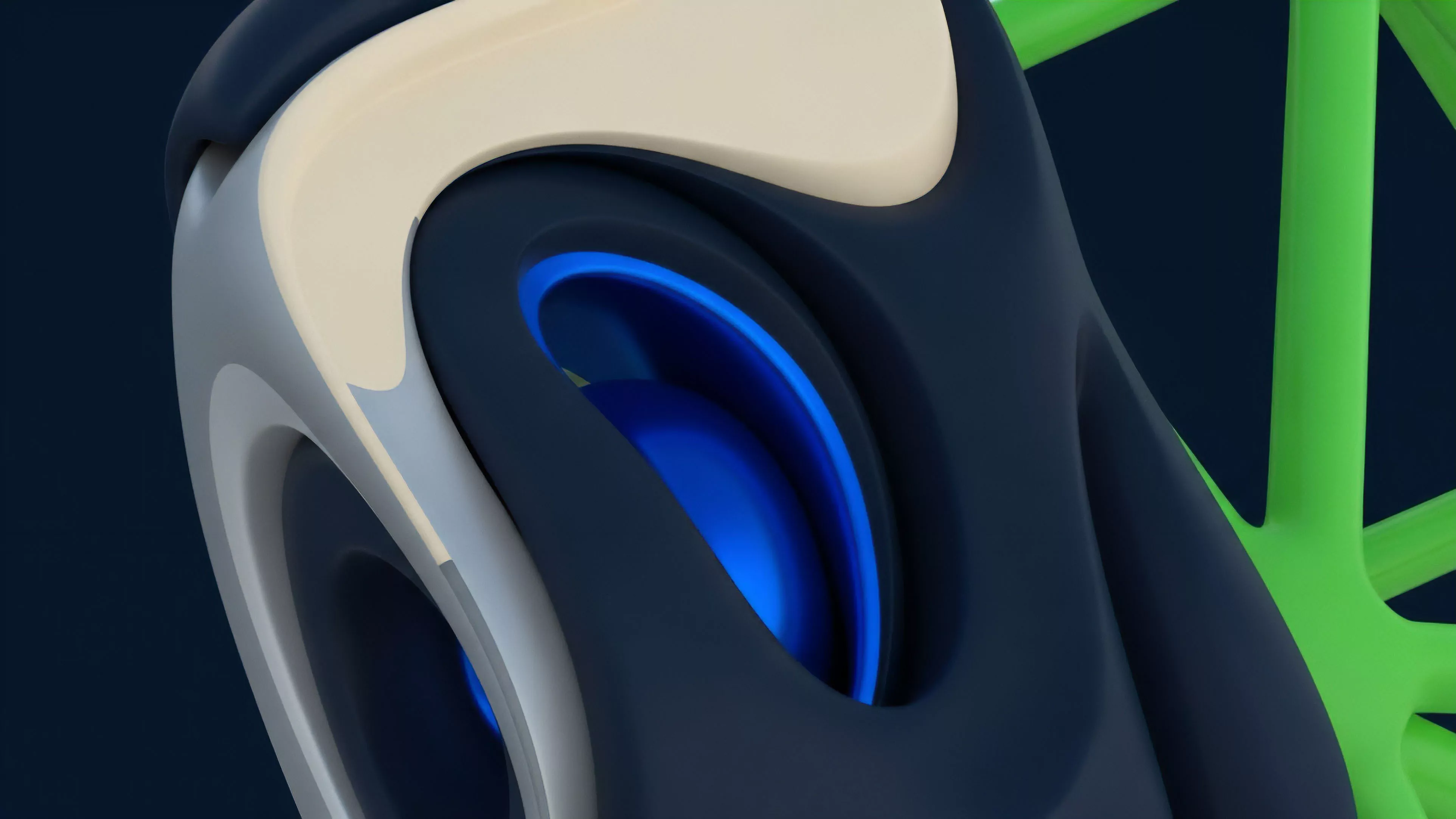

Modular design separates execution, settlement, consensus, and data availability into distinct, specialized protocol layers.

Modern systems now treat Interchain Data Availability as a commodity service. Using specialized protocols, developers can launch new rollups that inherit the security of a primary chain while relying on the cheaper storage of a dedicated data availability network. This change has lowered the barrier to entry for decentralized applications and has brought the throughput of on-chain venues, including high-frequency trading platforms, closer to levels previously possible only in centralized environments.
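A toy model can make this division of labor concrete. The sketch assumes hypothetical `DALayer` and `SettlementLayer` classes: a rollup posts its batch to the data availability layer, which returns a hash commitment; only that 32-byte commitment is recorded on the settlement layer; and any verifier can later fetch the batch and check it against the settled commitment. Real systems add erasure coding, sampling, and proofs on top of this flow.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class DALayer:
    """Hypothetical data availability layer: stores blobs keyed by their commitment."""
    blobs: dict = field(default_factory=dict)

    def submit(self, blob: bytes) -> bytes:
        commitment = hashlib.sha256(blob).digest()
        self.blobs[commitment] = blob
        return commitment

    def retrieve(self, commitment: bytes):
        return self.blobs.get(commitment)  # None models withheld or lost data


@dataclass
class SettlementLayer:
    """Hypothetical settlement layer: records small commitments, never the data itself."""
    commitments: list = field(default_factory=list)

    def post(self, rollup_id: str, commitment: bytes) -> int:
        self.commitments.append((rollup_id, commitment))
        return len(self.commitments) - 1


def verify_batch(da: DALayer, settlement: SettlementLayer, slot: int) -> bool:
    """Fetch the batch from the DA layer and check it against the settled commitment."""
    _, commitment = settlement.commitments[slot]
    blob = da.retrieve(commitment)
    return blob is not None and hashlib.sha256(blob).digest() == commitment


da, settlement = DALayer(), SettlementLayer()
batch = b"tx1;tx2;tx3"
slot = settlement.post("rollup-a", da.submit(batch))
print(verify_batch(da, settlement, slot))       # True
del da.blobs[settlement.commitments[slot][1]]   # simulate the data being withheld
print(verify_batch(da, settlement, slot))       # False
```

The settlement layer stays small because it stores only commitments, while the failing check after deletion shows why the rollup's safety still hinges on the data layer keeping batches retrievable.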

Horizon

The trajectory of this technology points toward a unified interchain state, where data availability is abstracted away from the end user entirely.

Future protocols will likely implement automated sharding and dynamic data pricing, where the network adjusts its availability parameters based on real-time demand.

| Development Stage | Strategic Focus |
| --- | --- |
| Phase One | Establishing basic data sampling mechanisms |
| Phase Two | Optimizing throughput and cost-efficiency |
| Phase Three | Autonomous cross-chain state synchronization |

The ultimate goal is the creation of a seamless financial infrastructure where assets move across chains without requiring bridges that rely on third-party trust. By standardizing the way data is published and verified, these systems will provide the foundation for a truly global, permissionless, and resilient digital market.