Essence

Information Aggregation Mechanisms function as the structural nervous system for decentralized derivatives, converting fragmented market signals into unified price references. These systems synthesize diverse data points, ranging from on-chain liquidity depth to off-chain exchange feeds, to establish a coherent valuation for complex financial instruments. Without these mechanisms, the risk of localized price manipulation and liquidity fragmentation renders large-scale derivative protocols unusable for institutional participants.

Information aggregation mechanisms transform dispersed data into actionable price discovery for decentralized financial markets.

These systems maintain the integrity of the margin engine by providing a single, reliable source of truth for liquidation triggers. They reduce the impact of toxic order flow by filtering outliers, which keeps derivative contracts anchored to the broader market rather than to any one venue.

Origin

The requirement for these mechanisms surfaced as decentralized exchanges transitioned from simple automated market makers to sophisticated order book and margin-based systems. Early protocols relied on single-source oracles, which proved vulnerable to front-running and flash loan attacks.

The evolution toward decentralized Information Aggregation Mechanisms was a direct response to the systemic fragility of these early designs. Developers identified that relying on a single exchange feed invited adversarial exploitation, particularly during periods of high volatility. This realization necessitated the development of decentralized oracle networks and volume-weighted average price engines that could withstand localized manipulation.

Theory

The mathematical structure of these mechanisms relies on weighting algorithms that prioritize data quality over raw quantity.

Protocols employ various statistical models to filter noise and detect anomalies in incoming price streams.

Weighted Data Models

- Volume-Weighted Average Price weights each venue's price by its traded volume, so the aggregate leans toward the exchanges where most trading actually occurs.

- Medianized Price Feeds limit the influence of extreme outliers caused by temporary technical glitches or intentional price suppression, since a minority of bad sources cannot move the median beyond the range of the honest ones.

- Time-Weighted Average Price smooths volatility over specific intervals to reduce the risk of liquidations triggered by flash crashes.

Statistical weighting models ensure that aggregate prices remain resilient against isolated exchange failures and malicious data manipulation.
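The three weighting models listed above can be illustrated with a minimal Python sketch. The function names and input shapes here are assumptions chosen for illustration, not the interface of any particular protocol, and the TWAP variant assumes each sampled price holds until the next sample.

```python
from statistics import median


def vwap(quotes):
    """Volume-weighted average of (price, volume) pairs, one per venue."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


def median_price(prices):
    """Median of the reported prices; a minority of outliers cannot drag it away."""
    return median(prices)


def twap(samples, window_end):
    """Time-weighted average of (timestamp, price) samples sorted by time.

    Assumption: each price is held until the next sample, and the last
    price is held until window_end.
    """
    samples = list(samples)
    if not samples:
        raise ValueError("at least one sample is required")
    duration = window_end - samples[0][0]
    if duration <= 0:
        raise ValueError("window_end must be after the first sample")
    boundaries = [t for t, _ in samples[1:]] + [window_end]
    weighted = sum(price * (end - start)
                   for (start, price), end in zip(samples, boundaries))
    return weighted / duration


print(vwap([(100.0, 1.0), (110.0, 3.0)]))        # 107.5
print(median_price([100.0, 101.0, 250.0]))       # 101.0
print(twap([(0, 100.0), (10, 200.0)], 20))       # 150.0
```

In the median example, the single 250.0 quote has no effect on the result, whereas it would pull a plain average up to roughly 150.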

The design of these protocols involves a constant tension between latency and accuracy. A system that updates too slowly risks liquidating users based on stale data, while one that updates too rapidly may trigger liquidations based on transient price spikes. Optimal design requires a balance that accounts for the specific volatility profile of the underlying asset.
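One common way to manage this trade-off is to publish a new price only when it has moved by more than a deviation threshold or when a maximum interval (a heartbeat) has elapsed, and to have consumers reject prices older than a maximum age. The sketch below illustrates that pattern; the function names and the default thresholds are hypothetical values chosen for the example, not recommendations.

```python
def should_update(last_price, last_update_ts, new_price, now_ts,
                  deviation_threshold=0.005, heartbeat_seconds=3600):
    """Decide whether to publish new_price.

    Publish if the heartbeat interval has elapsed, or if the relative
    move since the last published price reaches the deviation threshold.
    Both defaults are illustrative assumptions.
    """
    if now_ts - last_update_ts >= heartbeat_seconds:
        return True
    relative_move = abs(new_price - last_price) / last_price
    return relative_move >= deviation_threshold


def is_stale(last_update_ts, now_ts, max_age_seconds):
    """Consumer-side guard: refuse to act on a price that is too old."""
    return now_ts - last_update_ts > max_age_seconds


print(should_update(100.0, 0, 100.2, 60))    # False: 0.2% move, heartbeat not reached
print(should_update(100.0, 0, 101.0, 60))    # True: 1% move exceeds the threshold
print(should_update(100.0, 0, 100.0, 3600))  # True: heartbeat elapsed
print(is_stale(0, 120, 60))                  # True: price is older than 60 seconds
```

A tighter deviation threshold lowers the chance of acting on stale data but makes the feed more sensitive to transient spikes, so the values would need tuning per asset.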

| Mechanism Type | Primary Benefit | Risk Factor |
| --- | --- | --- |
| Volume Weighting | Reflects Market Depth | Manipulation via Wash Trading |
| Time Smoothing | Reduces Flash Crashes | Increased Latency |
| Decentralized Consensus | Eliminates Single Failure | Network Coordination Overhead |

Approach

Modern implementations utilize a layered architecture to maintain accuracy while ensuring security. The current standard involves aggregating data from multiple decentralized and centralized venues before processing it through a consensus-based filter.

Execution Layers

- Data ingestion from multiple liquidity providers and public APIs.

- Sanitization of data to remove erroneous or manipulated entries.

- Consensus calculation to determine the final reference price for the margin engine.

- On-chain broadcasting to update contract state.

This approach minimizes the attack surface by ensuring that no single compromised source can dictate the protocol price. Market participants now expect these mechanisms to be fully transparent, with the logic governing price aggregation codified directly into the smart contracts.
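The four execution layers can be sketched end to end in a few lines of Python. Everything named here is hypothetical: `fetchers` stands in for venue API clients, `publish` stands in for the on-chain transaction, and the 5% deviation band and three-source minimum are illustrative assumptions.

```python
from statistics import median


def ingest(fetchers):
    """Layer 1: query every source, skipping any that fail."""
    quotes = {}
    for name, fetch in fetchers.items():
        try:
            quotes[name] = fetch()
        except Exception:
            continue  # an unreachable source must not halt the round
    return quotes


def sanitize(quotes, max_deviation=0.05):
    """Layer 2: drop non-positive quotes and those far from the cross-source median."""
    valid = {s: p for s, p in quotes.items() if p is not None and p > 0}
    if not valid:
        return {}
    mid = median(valid.values())
    return {s: p for s, p in valid.items() if abs(p - mid) / mid <= max_deviation}


def reference_price(clean_quotes, min_sources=3):
    """Layer 3: take the median of the surviving quotes, if enough sources agree."""
    if len(clean_quotes) < min_sources:
        raise ValueError("not enough agreeing sources")
    return median(clean_quotes.values())


def run_round(fetchers, publish):
    """Layer 4: publish the reference price (a stand-in for the on-chain update)."""
    price = reference_price(sanitize(ingest(fetchers)))
    publish(price)
    return price


fetchers = {
    "venue_a": lambda: 100.0,
    "venue_b": lambda: 101.0,
    "venue_c": lambda: 99.5,
    "venue_d": lambda: 250.0,   # manipulated or faulty quote
    "venue_e": lambda: 1 / 0,   # source that errors out
}
published = []
print(run_round(fetchers, published.append))  # 100.0
```

In this run the failing source is skipped at ingestion, the 250.0 quote is discarded during sanitization, and the median of the remaining three quotes becomes the reference price.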

Evolution

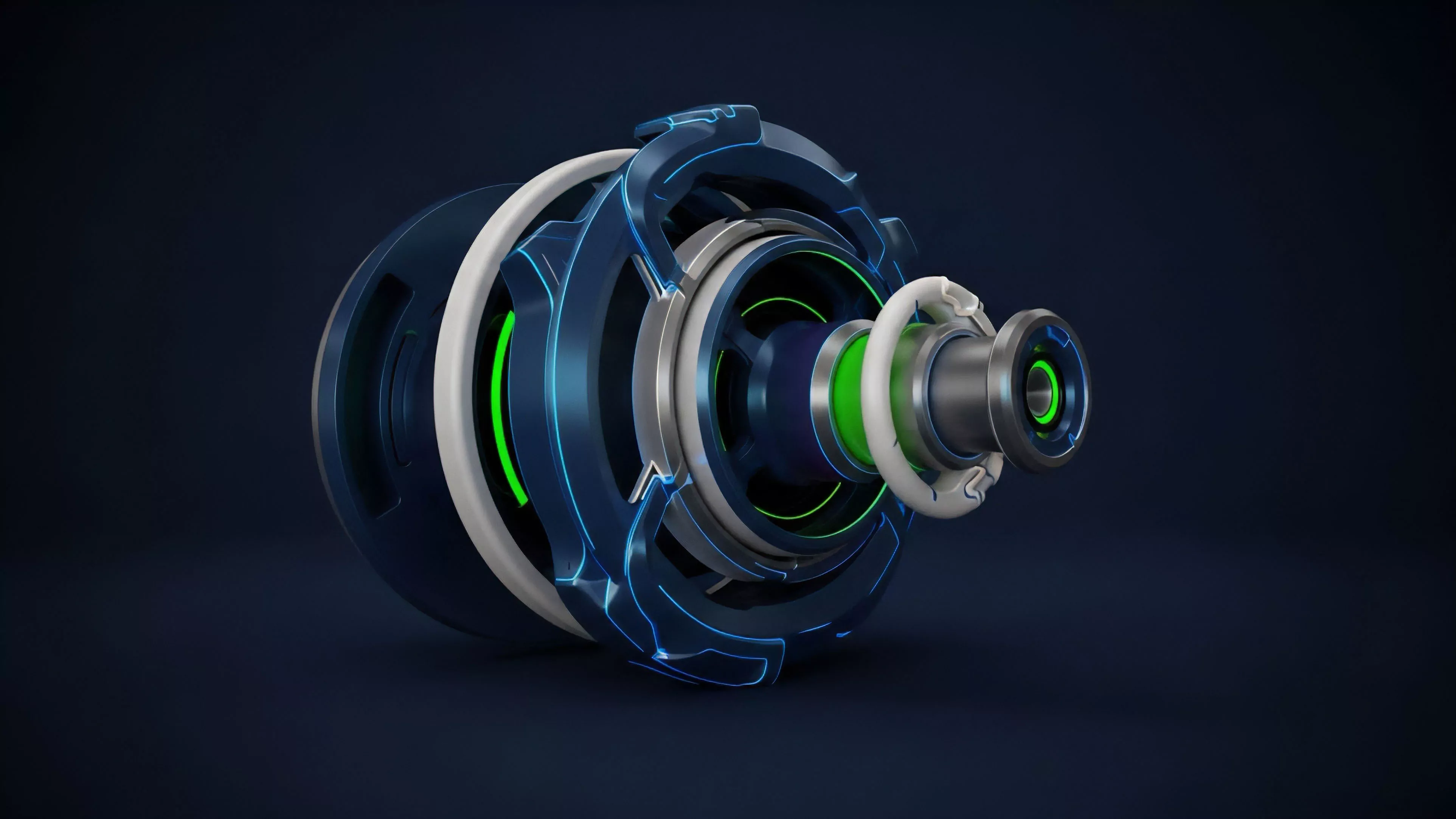

The transition from basic price feeds to sophisticated aggregation engines mirrors the broader maturity of the crypto-derivative landscape. Early iterations functioned as simple mirrors of centralized exchange data.

Contemporary systems incorporate game-theoretic incentive schemes that reward node operators for honest data reporting.

Advanced aggregation protocols now leverage incentive-aligned node networks to secure the integrity of decentralized pricing.

The evolution has moved toward modularity, where protocols can plug into different aggregation services based on their specific risk tolerance. This flexibility allows for the creation of exotic derivatives that require highly specific and verifiable data inputs.

Horizon

Future developments will focus on the integration of zero-knowledge proofs to verify the authenticity of data feeds without exposing the underlying sources. This will allow for the inclusion of private or proprietary data streams, significantly increasing the precision of derivative pricing.

Strategic Developments

- Zero-Knowledge Verification will enable the cryptographic proof of data integrity from external sources.

- Adaptive Latency Models will allow protocols to adjust update frequencies based on real-time market volatility.

- Cross-Chain Aggregation will enable the synthesis of liquidity from multiple blockchain networks into a unified price feed.
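As a rough illustration of the adaptive latency idea, the sketch below shortens the update interval as recent realized volatility rises. This is one possible reading of the concept rather than a description of any deployed protocol; the function names, the inverse-proportional scaling rule, and all default parameters are assumptions.

```python
import math


def realized_volatility(prices):
    """Standard deviation of log returns over the supplied price samples."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    if not returns:
        return 0.0
    mean = sum(returns) / len(returns)
    return math.sqrt(sum((r - mean) ** 2 for r in returns) / len(returns))


def update_interval(prices, base_interval=60.0, min_interval=1.0,
                    reference_vol=0.001):
    """Seconds to wait before the next update.

    At or below reference_vol the base interval is used; above it the
    interval shrinks in inverse proportion to volatility, with a floor
    at min_interval. All defaults are illustrative assumptions.
    """
    vol = realized_volatility(prices)
    if vol <= reference_vol:
        return base_interval
    return max(min_interval, base_interval * reference_vol / vol)


calm = [100.0, 100.0, 100.0, 100.0]
turbulent = [100.0, 105.0, 98.0, 107.0]
print(update_interval(calm))       # 60.0
print(update_interval(turbulent))  # floors at the minimum interval
```

The floor matters in practice because each update has a cost, so a real design would need to bound the frequency even in extreme markets.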

The shift toward predictive aggregation models aims to let protocols anticipate volatility before it manifests in the order book. If it succeeds, this transition from reactive to proactive pricing would change how margin requirements are calculated and managed.