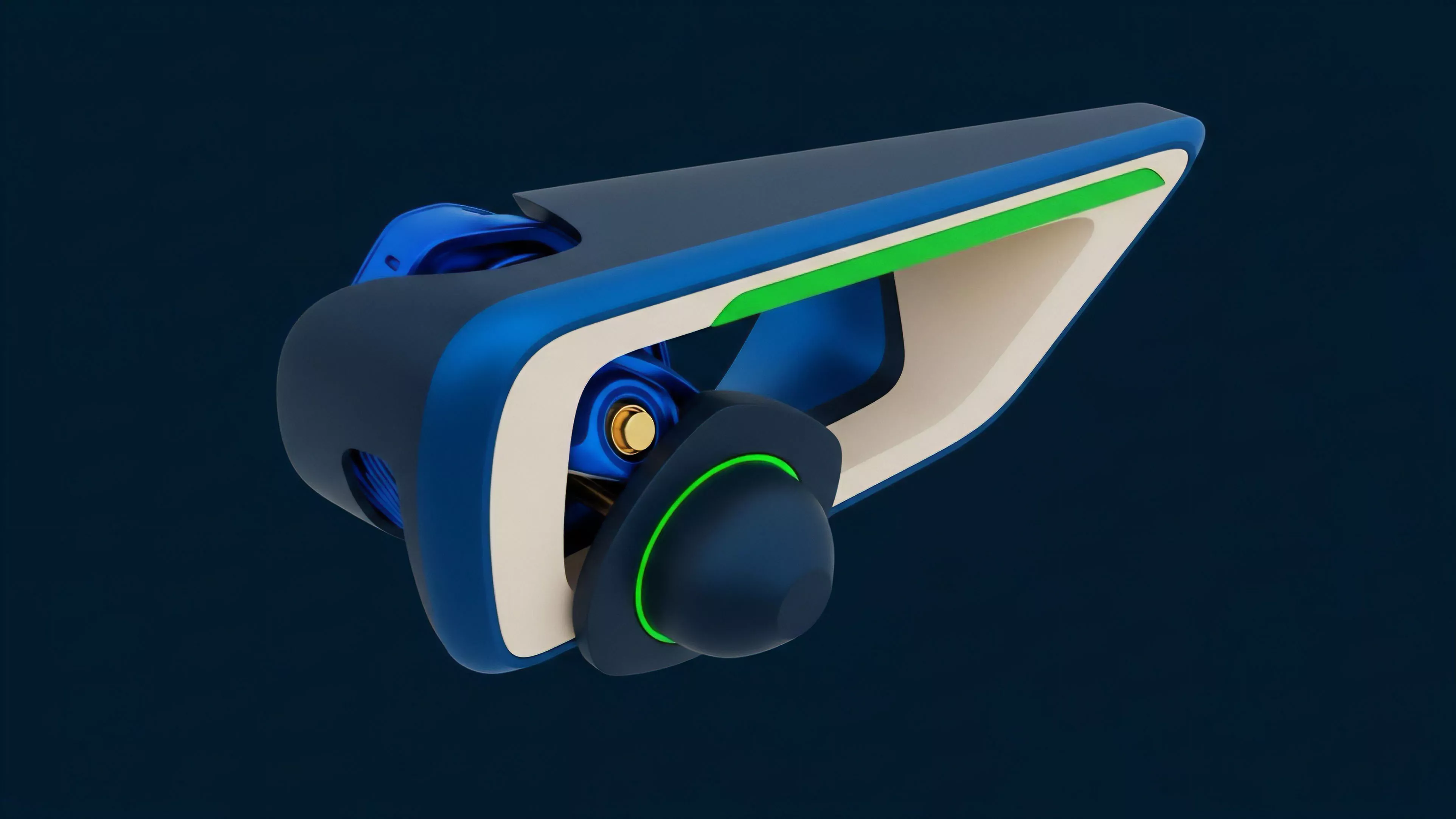

Essence

Hybrid Data Feeds combine deterministic on-chain execution with off-chain retrieval of market data that is noisy and only probabilistically reliable. These mechanisms bridge the informational gap between decentralized protocols and external financial markets, giving smart contracts access to the asset prices, volatility surfaces, and macroeconomic indicators that complex derivative operations depend on.

Hybrid Data Feeds function as the critical translation layer between external market reality and internal protocol logic.

The primary utility lies in mitigating the inherent latency and information asymmetry that plague purely decentralized oracle solutions. By combining the cryptographic verifiability of decentralized networks with the speed and breadth of centralized financial data, these feeds provide a robust foundation for automated margin management, liquidation engines, and algorithmic pricing models within crypto options markets.

Origin

The genesis of Hybrid Data Feeds traces back to the fundamental limitations of early oracle designs. Initially, protocols relied upon simple, single-source price feeds, which proved insufficient for the high-frequency requirements of derivative platforms.

The industry required a solution capable of handling the rapid state changes necessitated by options pricing, where volatility skew and time decay demand continuous, accurate input.

- Information Asymmetry necessitated mechanisms that could ingest data from multiple, high-throughput centralized exchanges without sacrificing the security guarantees of decentralization.

- Latency Requirements forced developers to seek alternatives to synchronous, block-by-block updates, leading to the adoption of off-chain computation modules that push data to the ledger only when specific thresholds or time intervals are triggered.

- Security Constraints drove the move toward consensus-based aggregation, where multiple independent nodes verify off-chain data before committing it to the smart contract state.

This evolution reflects a transition from simplistic, monolithic oracle architectures to layered, modular systems designed for resilience under adversarial conditions.

Theory

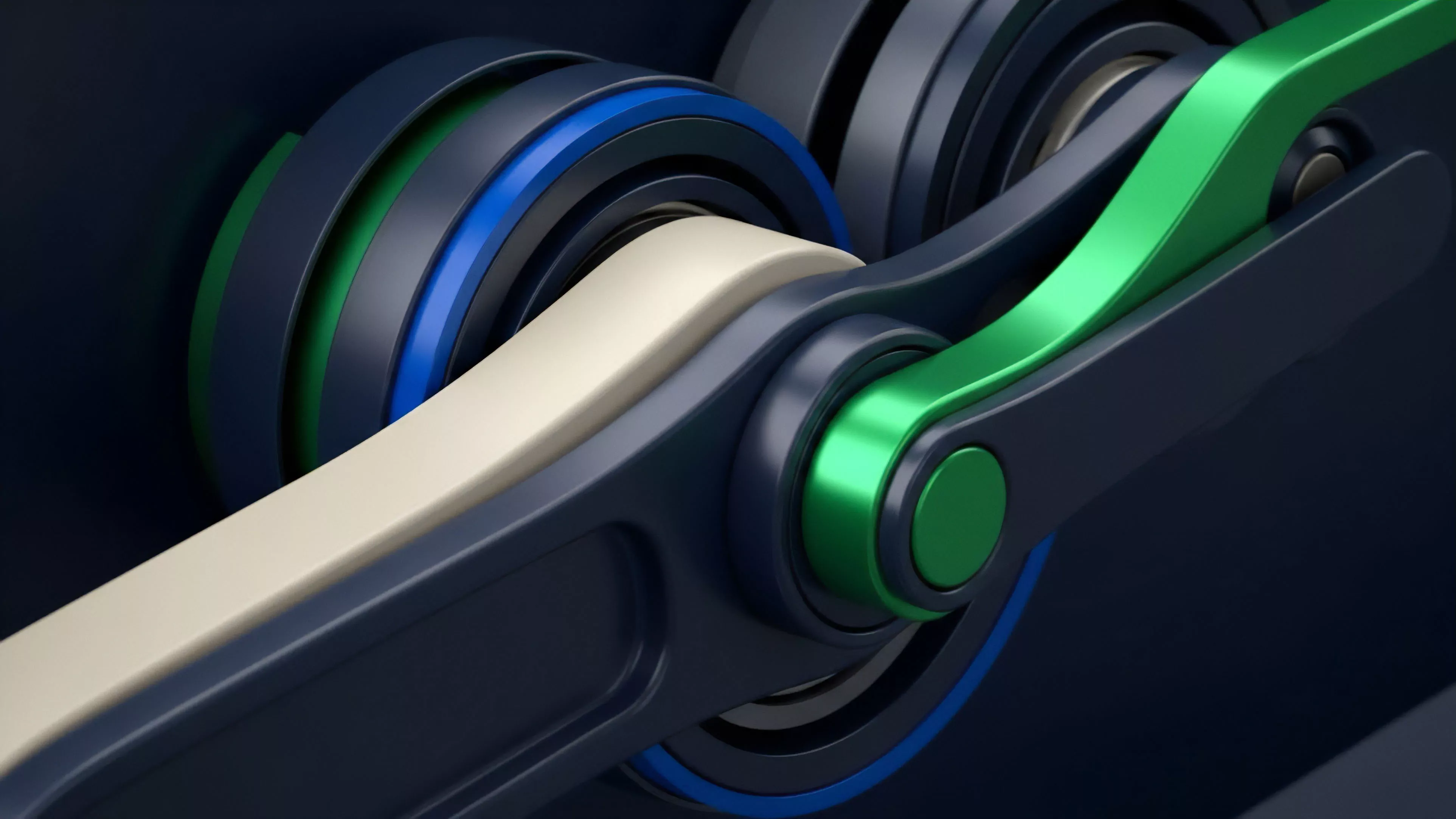

The structural integrity of Hybrid Data Feeds relies on a multi-tiered validation framework. At the base layer, data is aggregated from diverse, high-liquidity sources, including centralized exchanges and decentralized order books. This raw input undergoes rigorous filtering and normalization to account for anomalies, flash crashes, or market manipulation attempts.

Mathematical rigor in feed design requires the active rejection of outlier data points that threaten the stability of automated liquidation engines.

The computation layer applies robust aggregation statistics, such as volume-weighted average prices or median-based aggregators, so that the reported price stays close to the prevailing market price even when individual sources misreport. This process is inherently adversarial: participants in the feed network are incentivized through tokenomic structures to provide accurate data, while penalties for malicious behavior are enforced via stake slashing.
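The filtering and median aggregation described above can be sketched as follows. This is a minimal illustration rather than any specific oracle network's implementation: the function name `aggregate_price`, the `max_mad_multiple` parameter, and the choice of the median absolute deviation (MAD) as the outlier test are all assumptions made for the example.

```python
from statistics import median


def aggregate_price(quotes, max_mad_multiple=5.0):
    """Return the median of the quotes after dropping outliers.

    Hypothetical rule: a quote is an outlier when its distance from the
    median exceeds max_mad_multiple times the median absolute deviation.
    """
    if not quotes:
        raise ValueError("no quotes supplied")
    center = median(quotes)
    mad = median(abs(q - center) for q in quotes)
    if mad == 0:
        # At least half of the quotes equal the median, so there is no
        # spread to scale the cutoff by; return the median unchanged.
        return center
    kept = [q for q in quotes if abs(q - center) <= max_mad_multiple * mad]
    return median(kept)


# Four sources agree closely; one reports a flash-crash print.
quotes = [100.0, 100.2, 99.9, 100.1, 42.0]
print(aggregate_price(quotes))
```

The median already tolerates a minority of bad quotes; the explicit filter matters mostly when the surviving quotes are later reused, for example to compute a spread or a volume-weighted figure.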

| Parameter | Mechanism | Function |
| --- | --- | --- |
| Aggregation | Median-based consensus | Reduces noise and manipulation risk |
| Latency | Off-chain batching | Minimizes transaction costs and block congestion |
| Verification | Cryptographic signatures | Ensures provenance and data integrity |
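To make the verification row concrete, the sketch below checks that each report carries a valid tag from a known reporter and that enough reports verify before any price is accepted. It rests on a loud assumption: production feeds use asymmetric signatures, whereas this example substitutes HMAC-SHA256 from the Python standard library as a stand-in so that it runs without third-party packages. The report fields, `sign_report`, `verified_prices`, and the quorum rule are hypothetical.

```python
import hashlib
import hmac


def sign_report(key, feed_id, price, timestamp):
    """Tag a report. HMAC is a stand-in here for a real signature scheme."""
    message = f"{feed_id}|{price}|{timestamp}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verified_prices(reports, keys, quorum):
    """Return prices whose tags verify; raise if fewer than quorum do."""
    accepted = []
    seen = set()
    for report in reports:
        reporter = report["reporter"]
        key = keys.get(reporter)
        if key is None or reporter in seen:
            continue  # unknown reporter, or a second report from the same one
        expected = sign_report(
            key, report["feed_id"], report["price"], report["timestamp"]
        )
        # Constant-time comparison avoids leaking how many characters matched.
        if hmac.compare_digest(expected, report["tag"]):
            seen.add(reporter)
            accepted.append(report["price"])
    if len(accepted) < quorum:
        raise ValueError("not enough verified reports to reach quorum")
    return accepted
```

Prices are carried as strings in this sketch so that the signed message is byte-for-byte reproducible; a real feed would fix a canonical encoding for the same reason.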

The integration of these feeds into derivative protocols necessitates a deep understanding of Protocol Physics, specifically how update frequency impacts the margin engine’s sensitivity to price volatility. Frequent updates improve accuracy but increase the risk of triggering liquidations during transient market dislocations, creating a persistent trade-off between precision and systemic stability.

Approach

Current implementations of Hybrid Data Feeds emphasize the modularity of data delivery. Developers utilize off-chain relayer networks that monitor external markets and push data updates to the blockchain when specific price deviations occur.

This event-driven architecture reduces the gas overhead associated with constant updates while maintaining the high resolution required for active options trading.
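A relayer's push decision can be sketched as a deviation check combined with a time-based fallback. The example is illustrative only: `UpdatePolicy`, `should_push`, and the default values of 50 basis points and one hour are assumptions, not parameters taken from any particular feed.

```python
from dataclasses import dataclass


@dataclass
class UpdatePolicy:
    deviation_bps: float = 50.0  # hypothetical: push on a move of 0.5% or more
    heartbeat_s: float = 3600.0  # hypothetical: push at least once per hour


def should_push(policy, last_price, last_time, price, now):
    """Decide whether the relayer should write a new price on-chain."""
    if now - last_time >= policy.heartbeat_s:
        return True  # time-based fallback keeps the on-chain value fresh
    move_bps = abs(price - last_price) / last_price * 10_000
    return move_bps >= policy.deviation_bps


policy = UpdatePolicy()
print(should_push(policy, 100.0, 0.0, 100.4, 10.0))    # 40 bps move, recent update
print(should_push(policy, 100.0, 0.0, 100.6, 10.0))    # 60 bps move
print(should_push(policy, 100.0, 0.0, 100.0, 3600.0))  # no move, heartbeat elapsed
```

Tightening `deviation_bps` raises resolution and gas cost together, which is the calibration the section describes.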

Strategic deployment of these feeds involves balancing update frequency against the systemic costs of chain congestion.

The practical application of these feeds involves a constant calibration of risk parameters. Operators must configure the sensitivity of their Liquidation Engines to distinguish between genuine market trends and momentary volatility spikes. Failure to accurately tune these inputs leads to unnecessary liquidations, undermining the trust and utility of the underlying derivative protocol.
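One possible way to separate a sustained move from a momentary spike is to require the margin breach to persist across several consecutive feed updates before liquidating. The sketch below assumes that rule; `LiquidationGuard`, the maintenance ratio of 1.2, and the three-update confirmation window are hypothetical choices for illustration, and real engines may use other smoothing methods.

```python
class LiquidationGuard:
    """Flag a position only after several consecutive margin breaches."""

    def __init__(self, maintenance_ratio, confirmations):
        self.maintenance_ratio = maintenance_ratio
        self.confirmations = confirmations
        self._breaches = 0

    def observe(self, collateral_value, debt_value):
        """Process one feed update; return True when liquidation is due."""
        if debt_value <= 0:
            raise ValueError("debt_value must be positive")
        ratio = collateral_value / debt_value
        if ratio < self.maintenance_ratio:
            self._breaches += 1
        else:
            self._breaches = 0  # a healthy reading resets the streak
        return self._breaches >= self.confirmations


guard = LiquidationGuard(maintenance_ratio=1.2, confirmations=3)
# Collateral values per update against a fixed debt of 100.
for collateral in [130, 110, 130, 115, 110, 105]:
    print(collateral, guard.observe(collateral, 100))
```

In this run the single dip to 110 is ignored because the next update recovers, while the final three readings breach in a row and trigger the flag. A longer confirmation window reduces false liquidations at the cost of slower reaction to a genuine decline.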

- Price Feed Sensitivity must be dynamically adjusted based on the underlying asset’s historical volatility and current market regime.

- Redundancy Protocols ensure that if one primary data source fails or becomes compromised, the feed seamlessly switches to secondary or tertiary sources to maintain operational continuity.

- Auditability Standards demand that every price update be traceable to its origin, allowing for post-mortem analysis of potential systemic failures.
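The redundancy and auditability items above can be illustrated together: the reader walks the sources in priority order, skips any that fail or return stale data, and reports which source supplied the price so the update stays traceable. Everything named here (`read_with_failover`, the `(price, timestamp)` quote shape, the 30-second staleness limit) is an assumption made for the sketch.

```python
def read_with_failover(sources, now, max_age_s=30.0):
    """Return (source_name, price) from the first healthy source.

    sources is a priority-ordered list of (name, fetch) pairs, where fetch
    is a callable returning a (price, unix_timestamp) tuple.
    """
    for name, fetch in sources:
        try:
            price, timestamp = fetch()
        except Exception:
            continue  # source unreachable or errored; try the next one
        if price > 0 and now - timestamp <= max_age_s:
            return name, price  # the name records provenance for audits
    raise RuntimeError("all sources failed or returned stale data")


def offline():
    raise ConnectionError("primary source offline")


sources = [
    ("primary", offline),
    ("secondary", lambda: (100.0, 900.0)),  # quote is 100 seconds old
    ("tertiary", lambda: (101.0, 995.0)),   # quote is 5 seconds old
]
print(read_with_failover(sources, now=1000.0))
```

Raising when every source is unhealthy is deliberate in this sketch: a consumer that halts is usually safer than one that acts on a stale price.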

This approach demands constant vigilance, because a faulty feed is a single point of failure for every automated derivative strategy that depends on it.

Evolution

The trajectory of Hybrid Data Feeds is moving toward increased decentralization and trust-minimized verification. Early iterations relied on centralized aggregators, which created single points of failure. The current state incorporates decentralized oracle networks that distribute the responsibility of data collection and validation across a large set of independent participants, significantly enhancing the security posture of the entire system.

This evolution mirrors the broader development of decentralized finance, where the focus has shifted from basic functionality to robustness under stress. The integration of zero-knowledge proofs is the next frontier: a proof lets a contract verify that a complex off-chain computation was performed correctly without requiring the entire data set to be published on-chain. This advancement would allow more complex derivative instruments, such as path-dependent options, to be traded efficiently and with lower on-chain verification overhead.

Horizon

The future of Hybrid Data Feeds lies in the development of predictive, rather than merely reactive, information streams.

Integrating machine learning models directly into the oracle architecture will allow for the anticipation of market volatility and liquidity shifts, enabling protocols to preemptively adjust margin requirements. This proactive stance is the key to achieving parity with traditional financial markets.

Future feed architectures will transition from passive observers to active participants in the risk management lifecycle of decentralized derivatives.

The systemic implication of this advancement is significant. By reducing the reliance on manual intervention and enhancing the predictive capabilities of smart contracts, these systems could enable a more resilient and efficient marketplace. The ultimate goal is a self-correcting financial infrastructure capable of maintaining stability under extreme stress and of containing the contagion risks that have historically plagued decentralized ecosystems.