Essence

High-Frequency Trading Analysis is the study of automated market interaction in which speed and algorithmic precision determine financial outcomes. The discipline examines how order flow, latency, and execution strategy shape price discovery on decentralized venues. It underpins liquidity provision by turning raw on-chain and order book events into coherent, actionable market data.

High-Frequency Trading Analysis examines the interaction between sub-millisecond execution speeds and the resulting impact on decentralized asset pricing and liquidity stability.

Market participants use this analytical lens to decode the mechanics of order books. By deconstructing every tick and trade, the analyst gains visibility into the adversarial nature of digital asset markets. This is not passive observation; it requires close engagement with the protocol-level events that govern asset movement and value exchange.

Origin

The genesis of this field traces back to the maturation of electronic exchanges where human intermediaries lost their status as primary liquidity providers.

Early developments in equity markets provided the blueprint for digital asset protocols, emphasizing the transition from manual quote submission to machine-driven market making. Decentralized finance adapted these principles, embedding them directly into smart contract architectures.

- Latency Arbitrage emerged as the primary driver for early infrastructure investment, forcing participants to optimize physical server proximity to exchange gateways.

- Algorithmic Execution evolved from basic rule-based systems to complex models capable of anticipating order book imbalances before they manifested in the price.

- Market Microstructure research became the standard for understanding how specific protocol rules influence participant behavior and overall venue efficiency.

This history shows a clear trajectory toward full automation. As exchanges shifted to distributed ledgers, the need for more advanced analysis grew, because models now had to account for the constraints of block times and consensus mechanisms.

Theory

The theoretical framework rests on the study of Order Flow and its impact on the limit order book. Every transaction leaves a trace within the public ledger, allowing for a reconstruction of participant intent.

Quantitative models treat this flow as a stochastic process, identifying patterns that predict short-term price movements or liquidity gaps.
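As a rough illustration of treating order flow as a sequence of signed events, the sketch below computes a rolling signed-volume imbalance over recent trades. The function name `rolling_flow_imbalance`, the `(side, size)` trade format, and the window length are assumptions made for this example; production models are typically far richer.

```python
from collections import deque


def rolling_flow_imbalance(trades, window=3):
    """Return the signed-volume imbalance after each trade.

    trades: iterable of (side, size), where side is the taker side
            ("buy" or "sell"). This input format is an assumption.
    The imbalance is (buy_volume - sell_volume) / total_volume over the
    last `window` trades, so it always lies in [-1, 1].
    """
    recent = deque(maxlen=window)  # keeps only the last `window` signed sizes
    result = []
    for side, size in trades:
        signed = size if side == "buy" else -size
        recent.append(signed)
        total = sum(abs(v) for v in recent)
        net = sum(recent)
        result.append(net / total if total else 0.0)
    return result


# Hypothetical trade tape: two taker buys followed by two taker sells.
trades = [("buy", 2.0), ("buy", 1.0), ("sell", 3.0), ("sell", 1.0)]
imbalances = rolling_flow_imbalance(trades, window=3)
```

A value near +1 means recent taker flow was almost entirely buying, and a value near -1 means it was almost entirely selling. Whether such a signal predicts short-term price movement on a given venue is an empirical question.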

Market Microstructure Dynamics

The interaction between Takers and Makers creates a constant tension. Takers prioritize immediate execution, paying a premium in the form of the spread, while Makers supply liquidity, harvesting that premium as compensation for inventory risk.

| Metric | Function | Impact |
|---|---|---|
| Bid-Ask Spread | Cost of immediacy | Liquidity gauge |
| Order Book Depth | Absorptive capacity | Volatility buffer |
| Latency | Information speed | Arbitrage edge |
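The spread and depth metrics in the table can be computed from a single order book snapshot. The following is a minimal sketch, assuming the book is given as lists of `(price, size)` tuples and that depth is measured within a fractional band around the mid price. The function name `book_metrics` and the `band` parameter are illustrative choices.

```python
def book_metrics(bids, asks, band=0.01):
    """Compute spread and near-touch depth from an order book snapshot.

    bids, asks: lists of (price, size) tuples (assumed format).
    band: fraction of the mid price within which resting size counts
          as depth (an illustrative convention).
    """
    best_bid = max(price for price, _ in bids)
    best_ask = min(price for price, _ in asks)
    mid = (best_bid + best_ask) / 2
    spread = best_ask - best_bid
    lower, upper = mid * (1 - band), mid * (1 + band)
    return {
        "mid": mid,
        "spread": spread,                      # cost of immediacy, in price units
        "spread_bps": spread / mid * 10_000,   # same cost in basis points of mid
        "bid_depth": sum(size for price, size in bids if price >= lower),
        "ask_depth": sum(size for price, size in asks if price <= upper),
    }


# Hypothetical snapshot; prices and sizes are made up for illustration.
bids = [(99.5, 2.0), (99.2, 5.0), (98.0, 10.0)]
asks = [(100.5, 3.0), (100.8, 4.0), (103.0, 8.0)]
metrics = book_metrics(bids, asks, band=0.01)
```

Levels far from the mid (98.0 and 103.0 here) are excluded from depth because they would not absorb a typical marketable order before the price had already moved substantially.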

The integrity of decentralized markets depends on the efficiency of liquidity provision mechanisms that mitigate the risks of information asymmetry.

Mathematical modeling of the Greeks (delta, gamma, theta, vega) provides the risk sensitivities needed for derivative instruments. These variables let the strategist quantify exposure to changes in price, time, and volatility. The complexity of these models often hides systemic vulnerabilities that only become apparent during periods of extreme market stress.
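To make the Greeks concrete, the sketch below evaluates them for a European call under the Black-Scholes model with no dividends. The choice of Black-Scholes is an assumption for illustration, as the text does not name a pricing model, and the function name `call_greeks` is hypothetical. Theta is expressed per year and vega per unit (1.00) of volatility.

```python
import math


def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi)


def _norm_cdf(x):
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def call_greeks(spot, strike, rate, vol, t):
    """Black-Scholes Greeks for a European call (no dividends assumed).

    spot, strike: prices; rate: continuously compounded risk-free rate;
    vol: annualized volatility; t: time to expiry in years (t > 0).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    pdf_d1 = _norm_pdf(d1)
    return {
        "delta": _norm_cdf(d1),                   # sensitivity to spot price
        "gamma": pdf_d1 / (spot * vol * sqrt_t),  # sensitivity of delta to spot
        "vega": spot * pdf_d1 * sqrt_t,           # sensitivity to volatility
        "theta": (-spot * pdf_d1 * vol / (2 * sqrt_t)
                  - rate * strike * math.exp(-rate * t) * _norm_cdf(d2)),  # time decay per year
    }


# Hypothetical at-the-money call: one year to expiry, 20% volatility, zero rate.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.2, t=1.0)
```

These closed-form sensitivities rest on constant volatility and continuous hedging, which is one reason model output can understate risk under the stressed conditions described above.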

Approach

Modern practitioners utilize high-fidelity data streams to map the topography of decentralized venues.

This requires a synthesis of Smart Contract Security and Quantitative Finance. The goal involves identifying structural weaknesses in protocol design that allow for profitable arbitrage or defensive positioning.

- On-chain Monitoring provides real-time visibility into whale movements and liquidity shifts, allowing for proactive adjustments to strategy.

- Adversarial Simulation tests how specific trading algorithms behave under the pressure of malicious actors or sudden network congestion.

- Governance Analysis evaluates how protocol parameter changes might impact long-term liquidity and participant incentives.
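As one small illustration of the on-chain monitoring item above, the sketch below flags transfers that are large relative to a pool's liquidity. The `Transfer` record, the `flag_whale_transfers` function, and the 1% threshold are all hypothetical. A real monitor would read decoded events from a node or indexer, which is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    """Hypothetical decoded transfer event; the field names are assumptions."""
    tx_hash: str
    sender: str
    amount: float


def flag_whale_transfers(transfers, pool_liquidity, threshold=0.01):
    """Return transfers whose amount is at least `threshold` of pool liquidity."""
    cutoff = pool_liquidity * threshold
    return [t for t in transfers if t.amount >= cutoff]


# Made-up events against a pool holding 1,000,000 units of liquidity.
events = [
    Transfer("0xaaa", "0x01", 500.0),
    Transfer("0xbbb", "0x02", 25_000.0),
    Transfer("0xccc", "0x03", 9_000.0),
]
flagged = flag_whale_transfers(events, pool_liquidity=1_000_000.0)
```

Scaling the cutoff by pool liquidity rather than using a fixed amount keeps the filter comparable across pools of very different sizes.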

The application of Behavioral Game Theory remains essential. Markets function as adversarial environments where every participant seeks to optimize their position at the expense of others. Understanding these strategic interactions allows for the development of robust, resilient financial strategies capable of surviving the inherent volatility of digital assets.

Evolution

The transition from centralized to decentralized venues fundamentally altered the landscape.

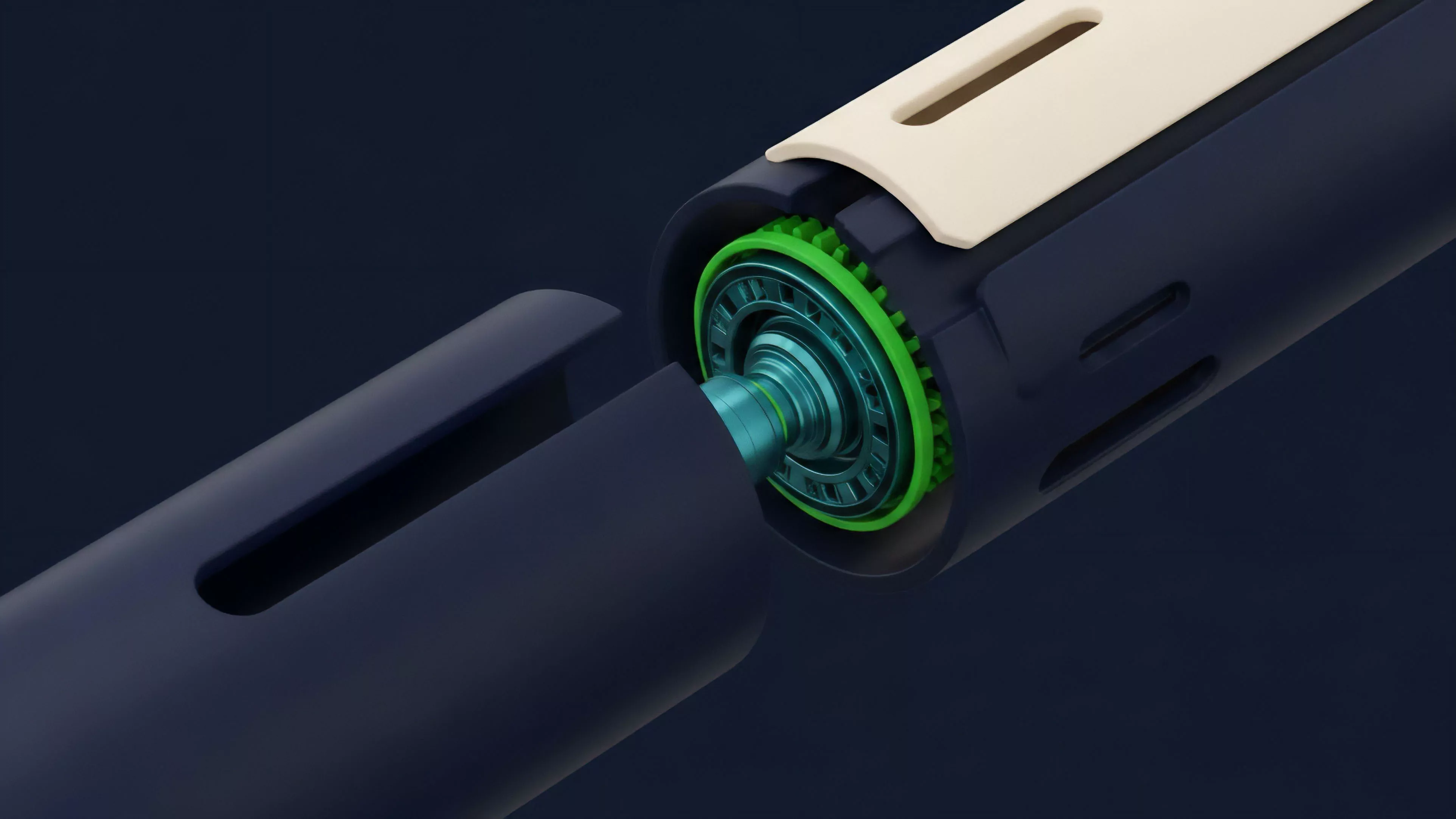

Traditional models relied on trusted gateways, whereas modern systems depend on Consensus Physics and decentralized validation. This shift forces a re-evaluation of how latency and information propagation occur within the system.

Evolutionary shifts in trading venues from centralized servers to distributed networks require a complete re-engineering of risk management and execution logic.

Recent developments highlight the increasing importance of MEV (Maximal Extractable Value) as a component of the trading analysis. Participants now factor in the ability to influence transaction ordering within blocks, turning a technical feature of blockchain architecture into a core financial strategy. This evolution demonstrates how deeply technical constraints define the boundaries of potential profit.
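A toy example can show why transaction ordering carries value. The sketch below assumes a constant-product pool (reserves satisfying x · y = k) with a 0.3% fee and compares a trader's fill when executed alone against the same trade when another party trades immediately before and after it. The pool model, the fee, and all function names are assumptions for illustration. Gas costs and payments to block producers are ignored.

```python
def swap(reserve_in, reserve_out, amount_in, fee=0.003):
    """Swap into a constant-product pool.

    Returns (amount_out, new_reserve_in, new_reserve_out).
    """
    amount_in_after_fee = amount_in * (1 - fee)
    amount_out = reserve_out * amount_in_after_fee / (reserve_in + amount_in_after_fee)
    return amount_out, reserve_in + amount_in, reserve_out - amount_out


def victim_alone(x, y, victim_in):
    """Amount of Y the trader receives with no one ordered ahead of them."""
    out, _, _ = swap(x, y, victim_in)
    return out


def sandwich(x, y, victim_in, attacker_in):
    """Return (victim_out, attacker_profit_in_x) under a sandwich ordering."""
    a_out, x, y = swap(x, y, attacker_in)   # 1. attacker buys Y first
    v_out, x, y = swap(x, y, victim_in)     # 2. victim trades at a worse price
    a_back, y, x = swap(y, x, a_out)        # 3. attacker sells Y back for X
    return v_out, a_back - attacker_in


# Hypothetical pool with 1000 X and 1000 Y; the victim swaps 100 X for Y.
fair_fill = victim_alone(1000.0, 1000.0, 100.0)
sandwiched_fill, attacker_profit = sandwich(1000.0, 1000.0, 100.0, 50.0)
```

Under these assumptions the victim receives fewer units of Y in the sandwiched ordering, and the other party ends with more X than it started with. The only difference between the two scenarios is the position of the transactions within the block.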

| Era | Focus | Primary Tool |
|---|---|---|
| Legacy | Speed | Direct connection |
| Current | Architecture | Smart contract logic |
| Future | Predictive | Machine learning |

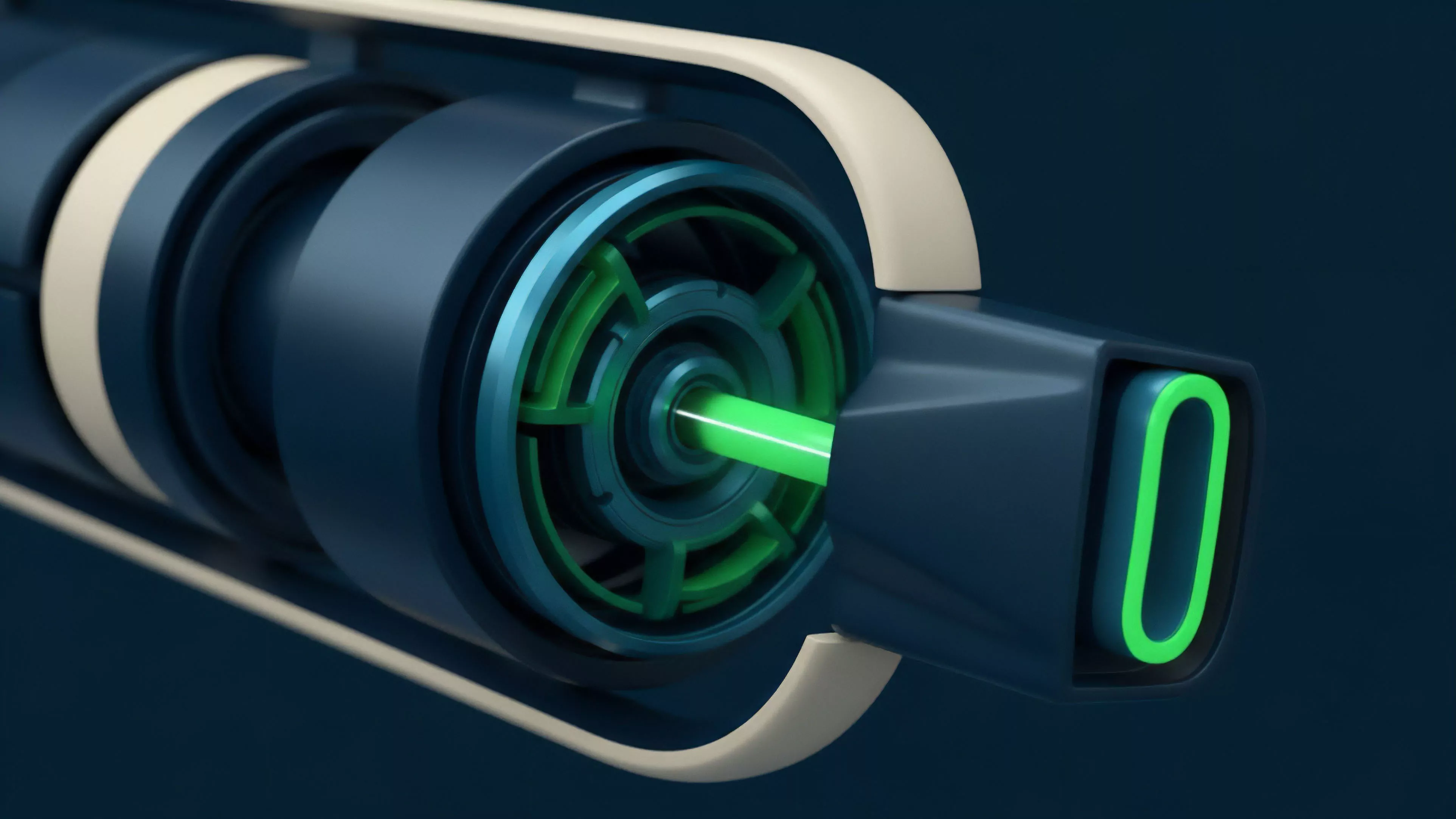

Sometimes, looking at the physics of a system (the way energy or information moves through a medium) reveals more about the financial outcome than any balance sheet analysis. The architecture dictates the reality of the market, and those who understand the protocol’s physical limits gain a significant advantage.

Horizon

The next phase involves the integration of predictive analytics directly into the consensus layer. As protocols become more complex, the ability to anticipate liquidity needs will determine which venues attract institutional volume. Cross-chain Arbitrage and Multi-protocol Liquidity Management will represent the standard for sophisticated participants.

Systemic risk remains the most significant hurdle. The interconnectedness of modern protocols creates paths for contagion that are difficult to model using legacy tools. The focus must shift toward creating self-healing systems that can withstand extreme volatility without collapsing into a state of total liquidity failure. The future belongs to those who view the market as a living, breathing machine that requires constant, rigorous oversight and structural innovation.