Essence

Fundamental Data Integration serves as the connective tissue between raw on-chain telemetry and the sophisticated pricing models required for derivative markets. It normalizes disparate data streams, such as protocol revenue, token velocity, and active address counts, into a structured format compatible with high-frequency financial engines. This process transforms decentralized noise into actionable inputs for volatility estimation and risk management.

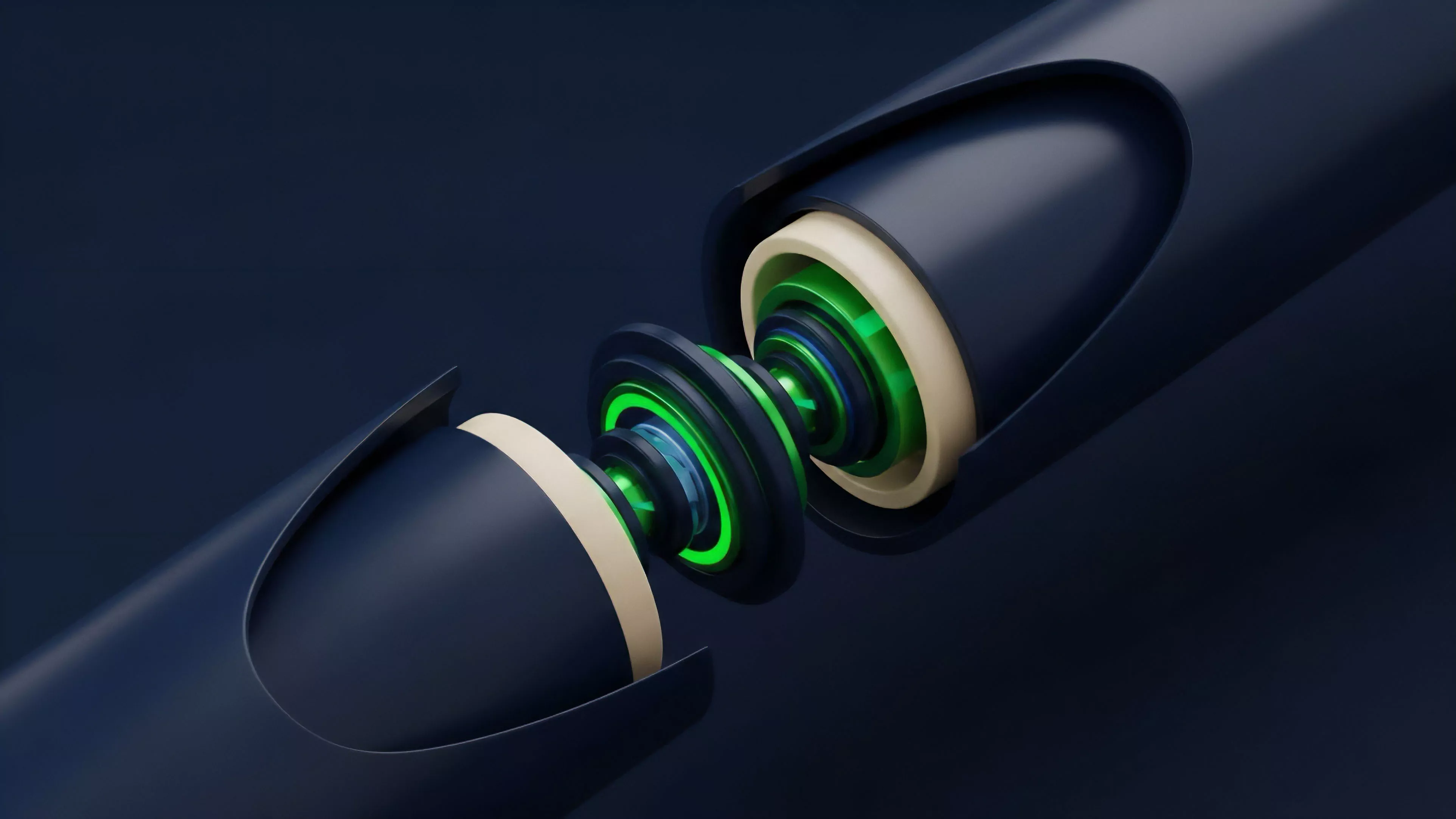

Fundamental Data Integration transforms raw decentralized network activity into structured variables essential for pricing derivative instruments.

The core utility lies in bridging the gap between blockchain-native events and traditional quantitative finance metrics. Without this layer, market participants rely on speculative price action alone, ignoring the underlying economic health of the protocols supporting their positions. By embedding these metrics into the pricing framework, traders gain a clearer view of the intrinsic value driving long-term volatility, moving beyond simple technical analysis.

Origin

The necessity for Fundamental Data Integration surfaced during the early maturity of decentralized finance protocols.

Initial derivative platforms functioned with limited visibility, relying on external price feeds that lacked context regarding the protocol’s internal state. Developers recognized that sustainable leverage requires understanding the collateral’s health, not just its current market price.

- Protocol Analytics provided the first primitive indicators, such as total value locked and transaction throughput.

- Quantitative Research demanded standardized interfaces to ingest these metrics into Black-Scholes or binomial option pricing models.

- Market Maker Requirements drove the transition from static data ingestion to real-time, event-driven pipelines capable of adjusting Greek exposure based on network fundamentals.

This evolution was fueled by the requirement to mitigate systemic risks that arise when derivative liquidity decouples from the economic reality of the underlying asset. The transition from simple price oracles to comprehensive data integration layers represents a significant advancement in the robustness of decentralized financial systems.

Theory

The theoretical framework of Fundamental Data Integration rests upon the assumption that protocol activity and asset value exhibit measurable correlations over specific time horizons. By quantifying network usage as a proxy for economic activity, architects can derive more accurate volatility surfaces for crypto options.

This involves mapping blockchain state transitions to financial variables such as implied volatility, skew, and kurtosis.
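The assumed link between protocol activity and asset value can be checked empirically before it is built into a model. The following is a minimal sketch, assuming two equally spaced series of the same length; the function names and the choice of Pearson correlation at a fixed lag are illustrative assumptions, not a method prescribed by any particular protocol.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)

def lagged_correlation(metric, target, lag):
    """Correlate metric[t] with target[t + lag] for a non-negative lag."""
    if len(metric) != len(target):
        raise ValueError("series must have equal length")
    if lag < 0 or lag >= len(metric) - 1:
        raise ValueError("lag must leave at least two overlapping points")
    if lag == 0:
        return pearson(metric, target)
    # Drop the last `lag` metric points and the first `lag` target points
    # so that index i pairs metric[i] with target[i + lag].
    return pearson(metric[:-lag], target[lag:])
```

Scanning `lag` over a range of horizons shows where, if anywhere, a network metric leads the target series. A high value on a short sample is not evidence of a stable relationship.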

Structural Components

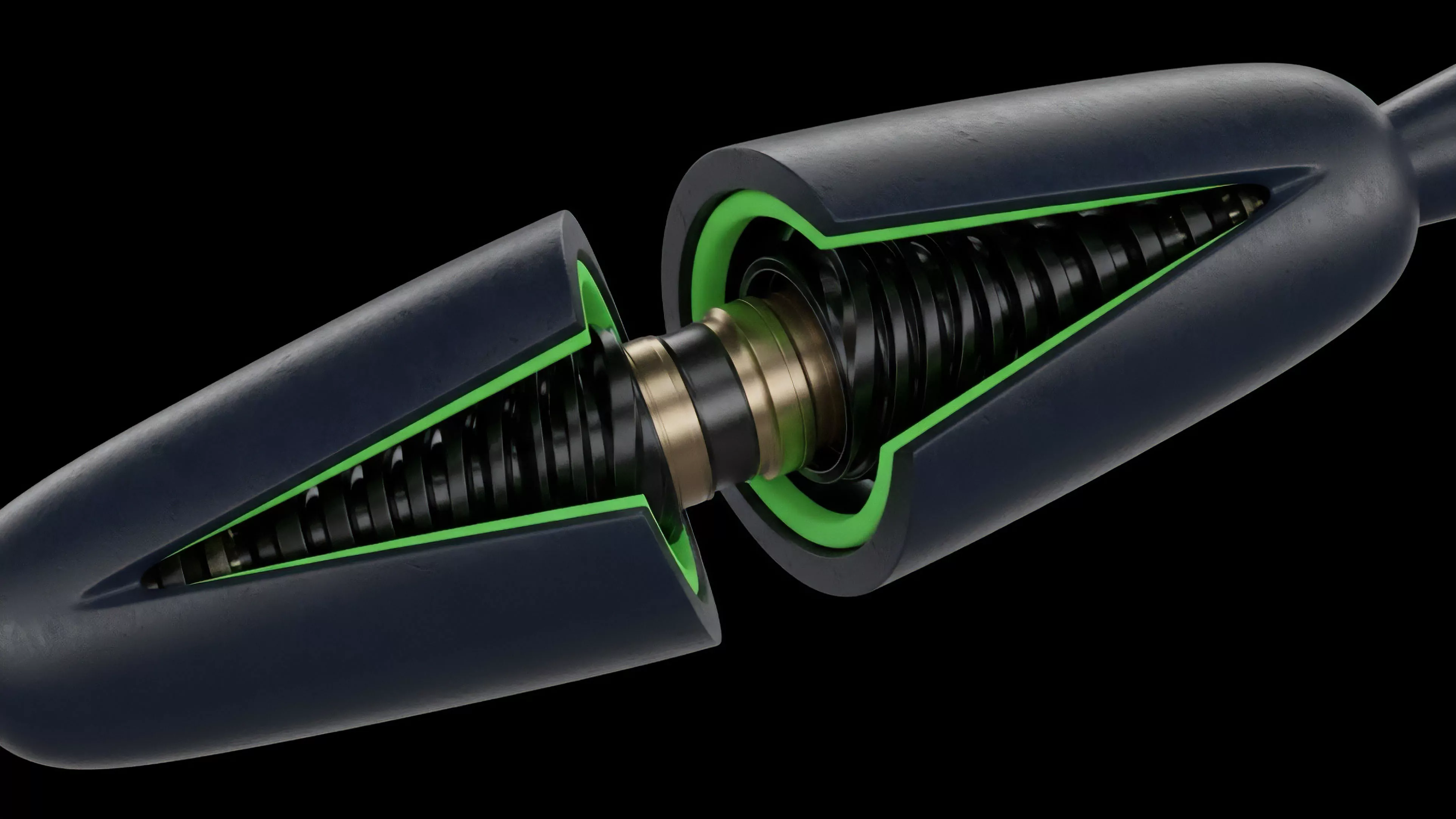

- Telemetry Normalization involves mapping raw byte-level blockchain data to standardized financial schemas.

- Latency Management ensures that data ingestion occurs within thresholds required for real-time derivative margin adjustments.

- Validation Logic filters out anomalous on-chain events that could trigger false signals in risk management engines.
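The three components above can be sketched together in a few lines. This is an illustration under stated assumptions: the raw event layout (`name`, `amount`, `decimals`, `block_time`), the `MetricPoint` schema, the 30-second freshness window, and the median-absolute-deviation filter are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from statistics import median

@dataclass(frozen=True)
class MetricPoint:
    """One normalized observation of a protocol metric (hypothetical schema)."""
    metric: str      # e.g. "protocol_revenue_usd"
    value: float     # value expressed in whole units
    timestamp: int   # Unix time in seconds

def normalize(raw: dict) -> MetricPoint:
    """Telemetry normalization: map a raw event to the standard schema.

    Assumes the raw amount is an integer in the smallest unit, with a
    separate `decimals` field, as is common for token amounts.
    """
    value = int(raw["amount"]) / 10 ** int(raw["decimals"])
    return MetricPoint(raw["name"], value, int(raw["block_time"]))

def is_fresh(point: MetricPoint, now: int, max_age_s: int = 30) -> bool:
    """Latency management: reject points older than the allowed window."""
    return 0 <= now - point.timestamp <= max_age_s

def is_anomalous(value: float, history: list, threshold: float = 5.0) -> bool:
    """Validation logic: flag values far from the recent median.

    Uses the median absolute deviation (MAD), which is less sensitive to
    earlier outliers than a standard deviation.
    """
    if len(history) < 5:
        return False  # too little history to judge
    med = median(history)
    mad = median(abs(x - med) for x in history)
    if mad == 0:
        return value != med
    return abs(value - med) / mad > threshold
```

A pipeline would typically call `normalize`, then discard the point if `is_fresh` is false or `is_anomalous` is true, before anything reaches the risk engine.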

Standardized telemetry normalization allows for the seamless mapping of on-chain activity to complex derivative pricing parameters.

This step demands quantitative rigor. When modeling option prices, including fundamental data as a drift parameter or a volatility modifier changes both the price and the hedge ratios the model produces. If the model ignores protocol revenue growth, the resulting delta hedging strategy may fail during periods of high market stress, because the model remains blind to the fundamental shift in asset demand.
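To make the effect concrete, the sketch below prices a European call with the standard Black-Scholes formula, once with a base volatility and once with a volatility scaled by a fundamental signal. The modifier `adjusted_vol` and its `beta` coefficient are hypothetical; they are one simple way to let revenue growth widen the volatility input. Only the volatility route is shown, because under the risk-neutral measure used by Black-Scholes the real-world drift does not enter the price.

```python
from math import erf, exp, log, sqrt

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))

def bs_call(spot: float, strike: float, rate: float, vol: float, t: float):
    """Black-Scholes price and delta of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    price = spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)
    delta = norm_cdf(d1)
    return price, delta

def adjusted_vol(base_vol: float, revenue_growth: float, beta: float = 0.5) -> float:
    """Hypothetical modifier: large revenue moves in either direction raise vol."""
    return base_vol * (1.0 + beta * abs(revenue_growth))

# At-the-money call, three months to expiry, zero rate.
base_price, base_delta = bs_call(100.0, 100.0, 0.0, 0.60, 0.25)
vol = adjusted_vol(0.60, revenue_growth=0.40)  # 40% revenue growth
adj_price, adj_delta = bs_call(100.0, 100.0, 0.0, vol, 0.25)
```

In this example both the price and the delta are higher under the adjusted volatility, so a hedge sized from the unadjusted model would hold too little of the underlying.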

Keeping the model informed in this way is a constant battle against information asymmetry in an adversarial environment.

Approach

Current methodologies for Fundamental Data Integration prioritize modular architecture and cryptographic verification. Rather than relying on centralized intermediaries, modern protocols utilize decentralized oracle networks and state proofs to verify on-chain data before feeding it into pricing engines. This approach minimizes the attack surface and ensures that the inputs used for margin calculations are tamper-resistant.

| Integration Type | Mechanism | Risk Profile |
| --- | --- | --- |
| Oracle-based | Aggregated off-chain consensus | Medium |
| On-chain native | Smart contract state reads | Low |
| Hybrid | State proofs with relayers | Low |
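As an illustration of the oracle-based row, aggregated consensus is often approximated by taking a median over independent reports. The sketch below is not the algorithm of any specific oracle network; the function name, the quorum of three, and the 5% deviation band are assumptions chosen for the example.

```python
from statistics import median

def aggregate_reports(reports: dict, min_reports: int = 3, max_spread: float = 0.05) -> float:
    """Combine reporter_id -> value reports into a single value.

    Steps: require a quorum, take the median, drop reports that deviate
    from it by more than `max_spread` (relative), then take the median of
    what remains.
    """
    if len(reports) < min_reports:
        raise ValueError("not enough reports to form a quorum")
    values = sorted(reports.values())
    mid = median(values)
    if mid == 0:
        raise ValueError("relative deviation is undefined around zero")
    kept = [v for v in values if abs(v - mid) / abs(mid) <= max_spread]
    if len(kept) < min_reports:
        raise ValueError("too few reports agree within the allowed spread")
    return median(kept)
```

A median tolerates a minority of faulty or manipulated reporters, which is one reason the oracle-based route can be acceptable despite relying on off-chain parties.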

The strategic focus is on creating feedback loops where changes in network metrics automatically adjust the risk parameters of open positions. This automation requires highly precise data pipelines, as errors in the integration layer can lead to incorrect liquidations or suboptimal capital allocation. Maintaining this precision in a permissionless, high-throughput environment remains the primary technical challenge.
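A feedback loop of this kind can be sketched as a function from a network metric to a margin requirement. The linear rule, the `sensitivity` coefficient, and the floor and cap below are hypothetical parameters for illustration; a production system would calibrate and govern them.

```python
def maintenance_margin(
    base_margin: float,
    metric_now: float,
    metric_baseline: float,
    sensitivity: float = 0.5,
    floor: float = 0.05,
    cap: float = 0.50,
) -> float:
    """Raise the margin ratio as a network metric falls below its baseline.

    A metric at or above baseline leaves the margin unchanged; a metric
    that has dropped by a fraction d scales it by (1 + sensitivity * d).
    """
    if metric_baseline <= 0:
        raise ValueError("baseline must be positive")
    drawdown = max(0.0, 1.0 - metric_now / metric_baseline)
    margin = base_margin * (1.0 + sensitivity * drawdown)
    return min(cap, max(floor, margin))

def is_liquidatable(collateral: float, notional: float, margin_ratio: float) -> bool:
    """A position is liquidatable when collateral is below the requirement."""
    return collateral < notional * margin_ratio
```

With a 10% base margin and a 40% drop in the metric, the requirement rises to about 12%, so a position holding 11 units of collateral against 100 of notional moves from safe to liquidatable. This is why an error in the metric feed translates directly into an incorrect liquidation.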

Evolution

The path of Fundamental Data Integration has moved from manual, batch-processed reports to autonomous, low-latency streams.

Early implementations were reactive, providing retrospective insights that offered little value for active trading. The current state represents a shift toward predictive systems where data is processed in real time, allowing derivative protocols to adjust their risk parameters dynamically.

Dynamic risk parameter adjustment based on real-time fundamental data represents the current standard for robust derivative protocols.

This evolution mirrors the broader development of decentralized finance, where the focus has shifted from simple utility to high-performance financial engineering. The integration layer now supports sophisticated instruments like exotic options and volatility-linked tokens, which require constant, reliable streams of fundamental data to remain solvent. The structural shift toward composability has allowed these data layers to be shared across multiple protocols, reducing the cost of infrastructure development.

Horizon

The future of Fundamental Data Integration lies in the intersection of zero-knowledge proofs and high-frequency financial modeling.

Future systems will likely allow for the private, verifiable integration of sensitive protocol data, enabling more complex risk management strategies without compromising user privacy. As these technologies mature, the granularity of data will increase, allowing for the pricing of risk at the individual account level rather than just the protocol level.

- ZK-Proofs will enable trustless verification of complex on-chain metrics, increasing the reliability of derivative pricing.

- Predictive Analytics will move beyond current metrics to incorporate cross-protocol correlation data into pricing engines.

- Autonomous Governance will utilize these integrated data streams to adjust protocol parameters without manual intervention.

The convergence of these advancements suggests a future where decentralized markets operate with efficiency equal to, if not greater than, that of their traditional counterparts. The ability to integrate fundamental data into the core of the derivative stack is a key step toward building a resilient, transparent financial system.