Essence

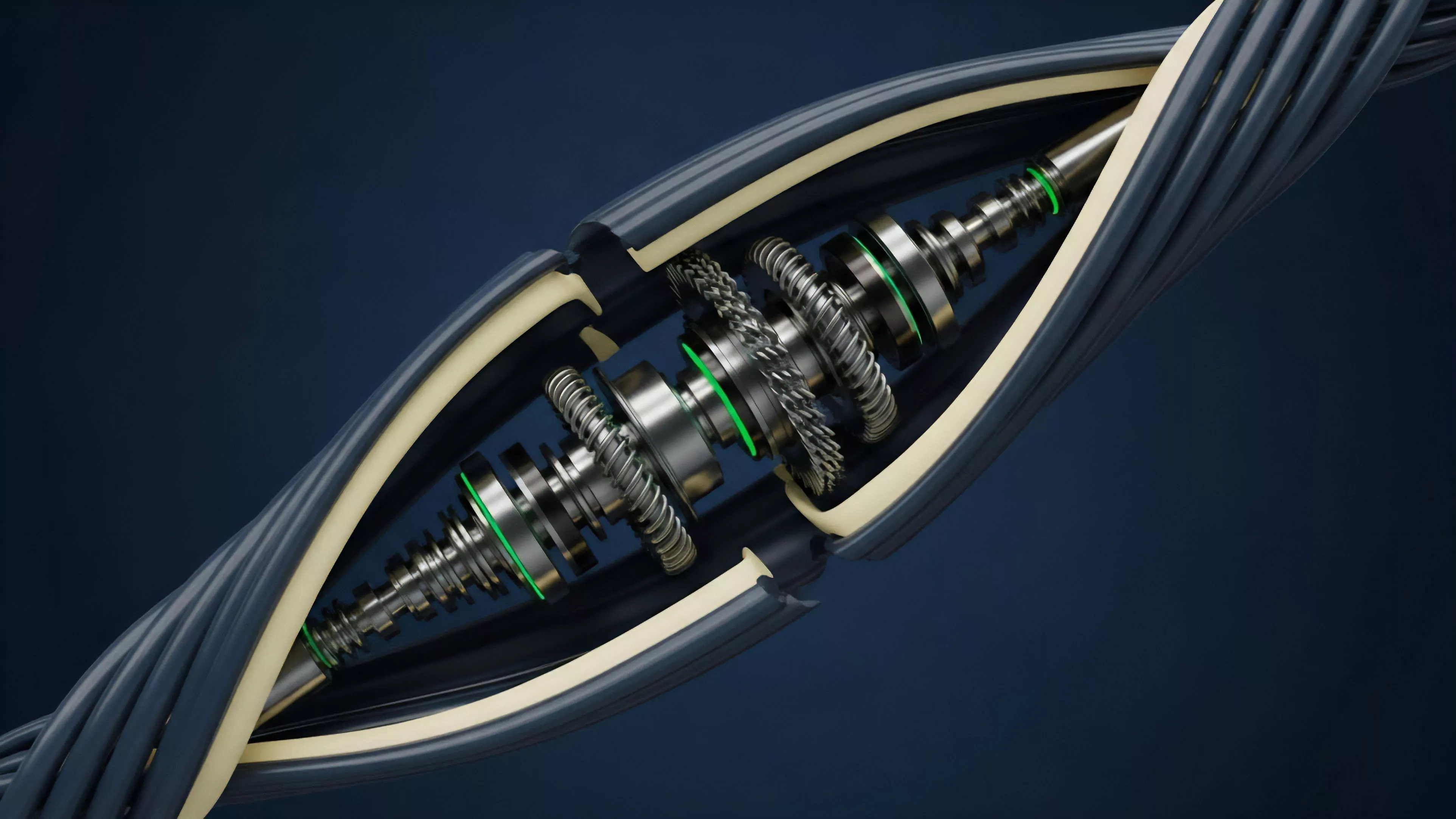

Financial Data Reporting constitutes the systematic aggregation, standardization, and dissemination of trade-related metrics within decentralized derivatives venues. It transforms raw, asynchronous blockchain event logs into coherent streams of market intelligence, providing the visibility required for participants to evaluate risk, liquidity, and price discovery. This infrastructure serves as the connective tissue between opaque smart contract execution and the requirements of professional capital allocation.

Financial Data Reporting functions as the primary bridge between raw decentralized execution logs and actionable market intelligence for participants.

Without these standardized reporting mechanisms, market participants operate in a state of informational asymmetry where the internal state of a protocol remains decoupled from external valuation models. The reporting layer enforces transparency, allowing for the observation of open interest, funding rate decay, and volatility surfaces that define the health of a decentralized exchange.

Origin

The necessity for Financial Data Reporting emerged from the limitations of early decentralized order books, which lacked the low latency and indexing capabilities of traditional financial databases. Initial iterations relied on direct node queries, an inefficient process that could not keep pace during periods of high volatility.

The evolution began when indexers and subgraphs enabled the transformation of event-driven blockchain data into queryable formats.

- Indexer Protocols provide the foundational indexing layers that allow raw transaction logs to be sorted into relational databases.

- Standardized API Frameworks enable third-party analytics providers to consume protocol data without custom integration for every new contract deployment.

- On-chain Oracles supply the external price references necessary to calculate mark-to-market valuations for derivative positions.

This transition moved the market away from fragmented, protocol-specific data silos toward a more unified view of decentralized liquidity. The architecture of these reporting systems now mirrors traditional financial data providers, yet it remains fundamentally tethered to the constraints of block confirmation times and gas costs.

Theory

The architecture of Financial Data Reporting rests on the principle of verifiable transparency. By mapping contract-level events (such as margin updates, liquidations, and trade executions) to a time-series database, the system constructs a granular history of market behavior.

This data allows for the rigorous application of quantitative models, including the calculation of implied volatility and delta sensitivity for complex option structures.
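As a rough illustration of the kind of model this data can feed, the sketch below prices a European call under Black-Scholes, reports its delta, and backs out implied volatility by bisection. Black-Scholes itself, the function names `bs_call` and `implied_vol`, and the sample inputs are assumptions made for the example; the text does not prescribe a pricing model.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, rate: float, vol: float):
    """Return (price, delta) of a European call under Black-Scholes.

    t is time to expiry in years; vol is annualized volatility.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)
    delta = norm_cdf(d1)
    return price, delta


def implied_vol(target_price: float, spot: float, strike: float, t: float,
                rate: float, lo: float = 1e-4, hi: float = 5.0,
                tol: float = 1e-8) -> float:
    """Find the volatility whose model price matches target_price.

    Bisection works because the call price increases monotonically with vol.
    The search bounds lo and hi are illustrative assumptions.
    """
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        price, _delta = bs_call(spot, strike, t, rate, mid)
        if price > target_price:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Hypothetical inputs: spot 100, strike 110, three months to expiry, zero rate.
price, delta = bs_call(100.0, 110.0, 0.25, 0.0, 0.6)
recovered = implied_vol(price, 100.0, 110.0, 0.25, 0.0)
print(f"price={price:.4f} delta={delta:.4f} implied_vol={recovered:.4f}")
```

In practice the `target_price` would come from reported trade or mark prices, which is why the quality of the reporting layer bounds the quality of the resulting volatility estimate.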

| Metric | Systemic Relevance |
|---|---|
| Open Interest | Indicates total capital exposure and market leverage |
| Funding Rates | Reflects cost of carry and directional bias |
| Liquidation Thresholds | Measures systemic risk and collateral health |
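The mapping from contract-level events to a time series can be sketched for one of these metrics, open interest. This is a minimal example under stated assumptions: the `PositionEvent` record, its field names, and the three event kinds are hypothetical and do not correspond to any particular protocol's event schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PositionEvent:
    """Hypothetical decoded contract event (not a real protocol schema)."""
    block_number: int
    timestamp: int   # block timestamp in seconds
    kind: str        # "open", "close", or "liquidation"
    size: float      # contracts affected, always positive


# Opening a position adds to open interest; closing or liquidating removes it.
SIGN_BY_KIND = {"open": 1.0, "close": -1.0, "liquidation": -1.0}


def open_interest_series(events):
    """Return [(block_timestamp, open_interest)] with one point per block."""
    delta_by_block = defaultdict(float)
    timestamp_by_block = {}
    for ev in events:
        if ev.kind not in SIGN_BY_KIND:
            raise ValueError(f"unknown event kind: {ev.kind}")
        delta_by_block[ev.block_number] += SIGN_BY_KIND[ev.kind] * ev.size
        timestamp_by_block[ev.block_number] = ev.timestamp

    series = []
    running = 0.0
    for block in sorted(delta_by_block):
        running += delta_by_block[block]
        series.append((timestamp_by_block[block], running))
    return series


events = [
    PositionEvent(100, 1200, "open", 5.0),
    PositionEvent(100, 1200, "open", 3.0),
    PositionEvent(101, 1212, "close", 2.0),
    PositionEvent(103, 1236, "liquidation", 4.0),
]
print(open_interest_series(events))
# [(1200, 8.0), (1212, 6.0), (1236, 2.0)]
```

Note that block 102 produces no point: the series only changes when a block contains relevant events, so it is irregular in time.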

The mathematical integrity of these reports depends on the synchronization between block timestamps and the actual execution of trade logic. If a reporting layer experiences latency, the resulting data misrepresents the true state of market liquidity, potentially triggering cascading liquidations if automated trading agents rely on stale information.
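One way a data consumer might guard against acting on stale reports is to compare each snapshot against the current chain head before using it. The sketch below is illustrative only: the `Snapshot` record and the thresholds `max_block_lag` and `max_age_seconds` are assumed values, not recommendations drawn from any protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical record emitted by a reporting layer."""
    block_number: int
    block_timestamp: int  # seconds
    mark_price: float


def is_stale(snapshot: Snapshot, head_block: int, now: int,
             max_block_lag: int = 2, max_age_seconds: int = 30) -> bool:
    """Return True if the snapshot lags the chain head by too many blocks
    or is older than the allowed wall-clock age."""
    block_lag = head_block - snapshot.block_number
    age = now - snapshot.block_timestamp
    return block_lag > max_block_lag or age > max_age_seconds


snap = Snapshot(block_number=100, block_timestamp=1000, mark_price=2500.0)
print(is_stale(snap, head_block=101, now=1010))  # False: 1 block and 10 s behind
print(is_stale(snap, head_block=105, now=1010))  # True: 5 blocks behind
```

An automated agent could refuse to trade, or widen its risk limits, whenever this check returns `True`.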

Granular reporting of trade events enables the application of rigorous quantitative models to evaluate volatility surfaces in decentralized markets.

This is where a model becomes dangerous if its limits are ignored: the abstraction of data often masks the underlying protocol physics. A reported price is a historical artifact of a specific consensus state, not a sample from a continuous stream. Understanding the difference between a block-based snapshot and continuous time-series data is the primary hurdle for any quantitative strategist operating in this space.
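The gap between block-based snapshots and a regular time grid can be made explicit in code. The sketch below, using made-up timestamps and prices, resamples snapshots with a last-known-value rule and records how old each sampled value is, so downstream models can see that a "current" price may be many seconds stale. The function name `sample_last_known` is an assumption for the example.

```python
from bisect import bisect_right


def sample_last_known(snapshots, start: int, end: int, step: int):
    """Resample block snapshots onto a regular grid.

    snapshots: list of (block_timestamp, price), sorted by timestamp.
    Returns a list of (grid_time, price, age_seconds). Before the first
    snapshot, price and age are None because no state has been observed.
    """
    times = [t for t, _ in snapshots]
    out = []
    for t in range(start, end + 1, step):
        i = bisect_right(times, t) - 1  # index of latest snapshot at or before t
        if i < 0:
            out.append((t, None, None))
        else:
            ts, price = snapshots[i]
            out.append((t, price, t - ts))
    return out


# Made-up snapshots: note the 24-second gap between the last two blocks.
snapshots = [(1200, 100.0), (1212, 101.5), (1236, 99.0)]
for row in sample_last_known(snapshots, start=1194, end=1236, step=12):
    print(row)
# (1194, None, None)
# (1206, 100.0, 6)
# (1218, 101.5, 6)
# (1230, 101.5, 18)
```

The final row shows a price that is 18 seconds old: the grid looks continuous, but the value is still the consensus state of an earlier block.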

Approach

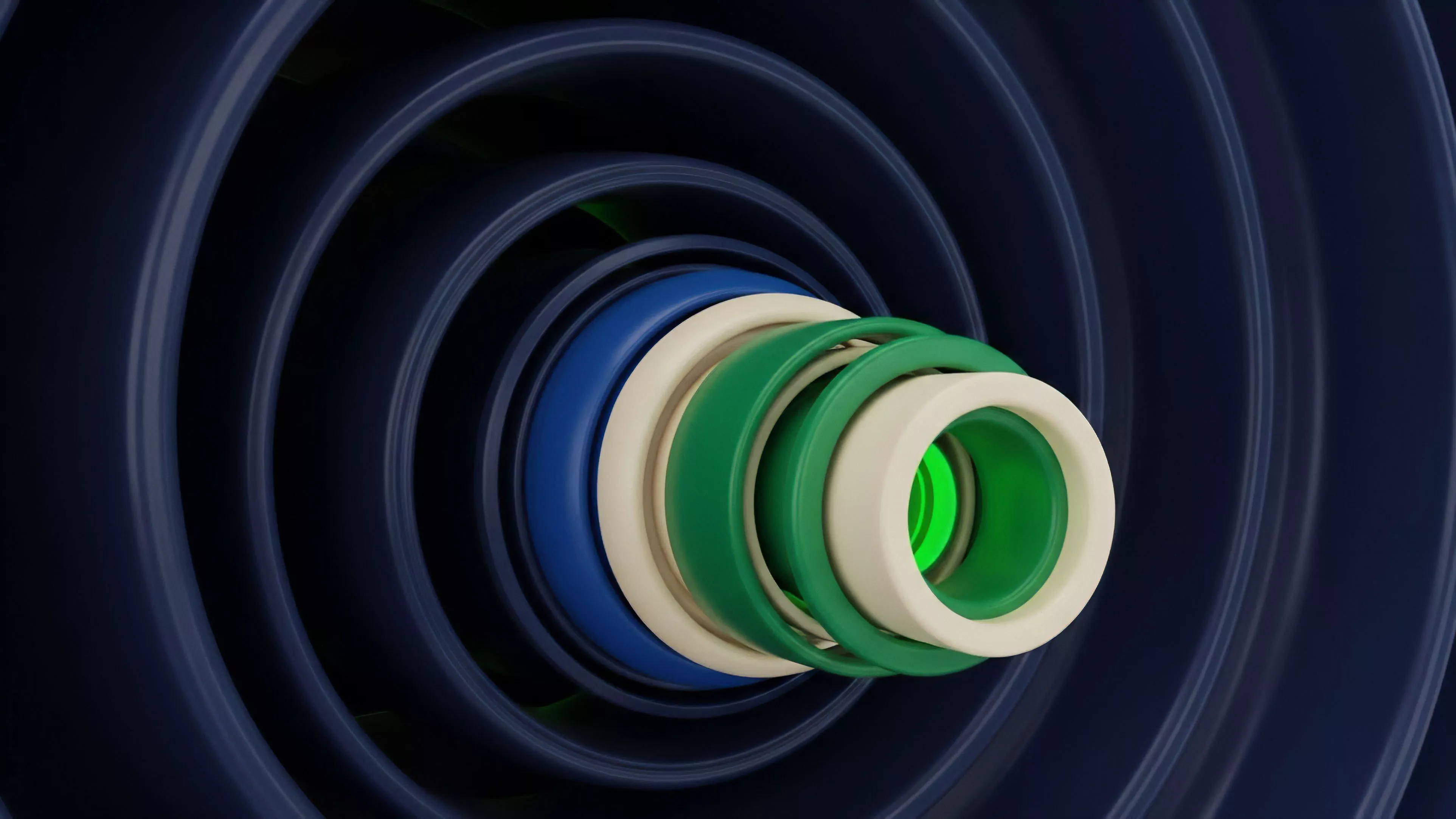

Current methodologies for Financial Data Reporting leverage a combination of off-chain indexers and on-chain verification to maintain data integrity.

Sophisticated market makers now operate proprietary nodes to bypass public API latency, ensuring they receive event updates at the earliest possible block height. This creates a tiered informational hierarchy where speed dictates the accuracy of risk management.

- Direct Node Synchronization offers the lowest latency for high-frequency trading strategies.

- Graph-based Indexing allows for complex historical analysis of liquidity provider behavior.

- WebSocket Streams provide near-real-time updates on order book changes and trade execution.

The shift toward modular, decentralized reporting services is accelerating. These services aggregate data from multiple chains and protocols, offering a unified dashboard for tracking cross-margin exposures. This consolidation is vital for assessing contagion risks, as it allows for the monitoring of collateral concentration across different decentralized lending and derivatives platforms.
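Collateral concentration across platforms can be summarized in many ways; the sketch below uses the Herfindahl-Hirschman index as one plausible choice, which is an assumption of this example and not a method named in the text. The platform names, assets, and USD values are invented for illustration.

```python
from collections import defaultdict


def collateral_shares(exposures):
    """Aggregate collateral by asset across platforms and return each share.

    exposures: iterable of (platform, collateral_asset, usd_value).
    """
    totals = defaultdict(float)
    for _platform, asset, usd_value in exposures:
        totals[asset] += usd_value
    grand_total = sum(totals.values())
    if grand_total <= 0:
        raise ValueError("total collateral must be positive")
    return {asset: value / grand_total for asset, value in totals.items()}


def herfindahl_index(shares) -> float:
    """Sum of squared shares: 1.0 means all collateral is a single asset."""
    return sum(s * s for s in shares.values())


# Hypothetical exposures reported by three different venues.
exposures = [
    ("lending_a", "ETH", 600.0),
    ("perps_b", "ETH", 200.0),
    ("perps_b", "USDC", 200.0),
]
shares = collateral_shares(exposures)
print(shares)
print(round(herfindahl_index(shares), 4))
```

Here ETH backs 80% of the combined collateral, giving an index of roughly 0.68. A per-platform view would hide this, since the ETH exposure is split across two venues.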

Evolution

The trajectory of Financial Data Reporting has moved from manual, post-hoc data scraping toward automated, high-fidelity streaming.

Early participants relied on static CSV exports from block explorers, which were insufficient for the rapid pace of crypto options. The current state involves sophisticated, low-latency pipelines that process terabytes of event data to feed risk engines in real time.

Standardized reporting architectures have evolved from manual data extraction to automated, low-latency streams capable of supporting complex risk engines.

This development reflects a broader transition toward institutional-grade infrastructure. As protocols adopt more complex margin models, the reporting layer must handle increasingly sophisticated inputs, such as cross-collateralization and dynamic risk parameters. The ability to audit these data pipelines is becoming as significant as the smart contracts themselves, as market participants demand verifiable proof of the inputs driving their risk management software.

Horizon

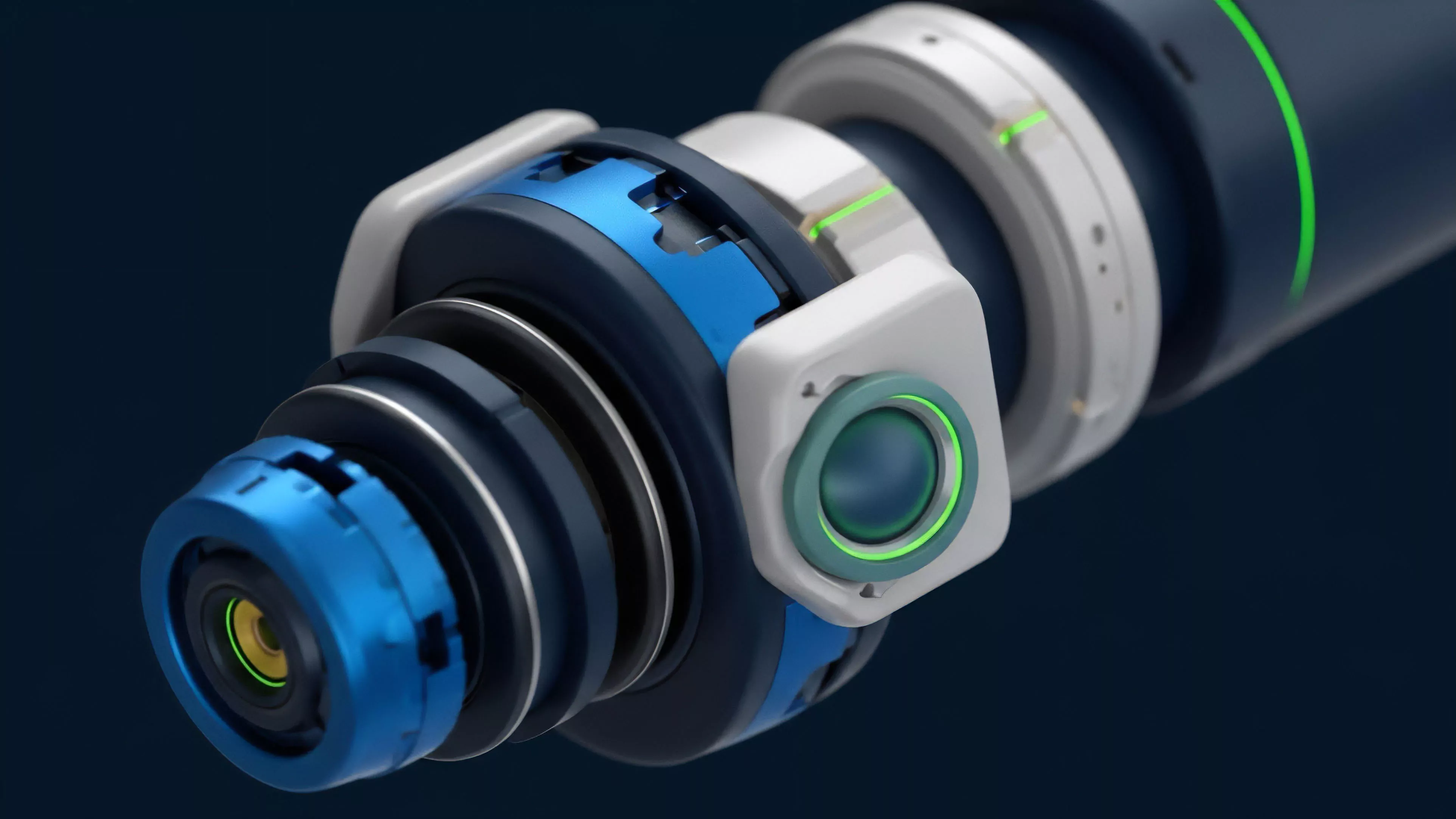

Future developments in Financial Data Reporting will center on the integration of zero-knowledge proofs to verify the accuracy of off-chain data feeds without requiring full node synchronization.

Such proofs would allow lightweight, trustless reporting that maintains the integrity of decentralized markets while reducing the technical burden on participants. The next phase involves the emergence of decentralized data oracles that compete on accuracy and latency.

| Innovation | Expected Impact |
|---|---|
| ZK-Proofs | Verifiable data integrity for off-chain reporting |
| Decentralized Oracles | Reduction of single points of failure in price feeds |
| Predictive Analytics | Automated identification of potential systemic liquidations |

We are witnessing the transformation of data from a passive resource into an active component of protocol security. The ultimate goal is a self-reporting ecosystem where the protocol itself provides cryptographically signed metrics, removing the reliance on centralized indexers entirely. This evolution is the critical path toward achieving true institutional participation in decentralized derivatives markets.