Essence

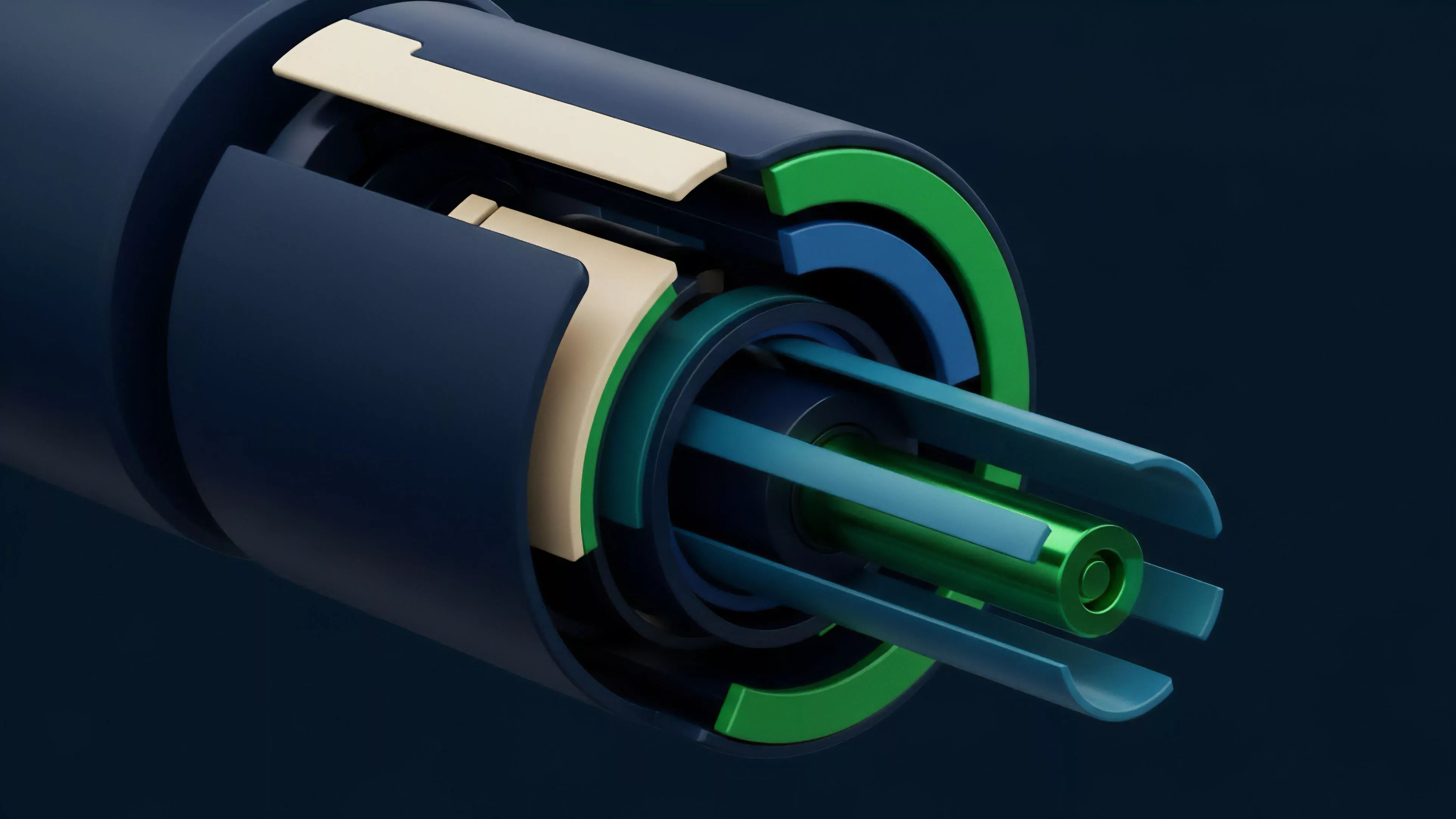

External Data Validation serves as the connective tissue between deterministic on-chain execution and the stochastic nature of off-chain reality. Decentralized derivatives protocols rely on these mechanisms to reconcile internal state updates with global market prices. Without this verification, smart contracts operate in a vacuum, exposed to manipulated inputs and to localized price discrepancies that diverge from broader liquidity pools.

External Data Validation acts as the cryptographic verification layer ensuring that off-chain price feeds align with actual market settlement values.

The fundamental utility of this process lies in enforcing contract integrity. When an option contract triggers a settlement or a liquidation, the protocol must determine the underlying asset price from inputs that are accurate and expensive to manipulate; no oracle design can deliver absolute certainty. By establishing a robust pipeline for data ingestion, the system reduces the risk of front-running and malicious oracle manipulation.

This ensures that the decentralized financial architecture maintains fidelity to the economic reality it purports to track.

Origin

The requirement for External Data Validation emerged from an inherent limitation of blockchain architectures: because every node must re-execute each transaction and arrive at the same result, smart contracts cannot natively query internet-based information, which may return different answers to different nodes or at different times. Early iterations of decentralized finance therefore faced a bottleneck in which price discovery remained trapped on centralized exchanges while contract settlement occurred on-chain. This structural divide created an arbitrage opportunity for actors capable of exploiting the latency between the two environments.

Early developers recognized that relying on a single, centralized data provider created a single point of failure. The subsequent shift toward decentralized oracle networks aimed to distribute trust across multiple nodes. This transition transformed the validation process from a static, vulnerable point of data entry into a dynamic, consensus-based mechanism.

The evolution mirrors the broader development of blockchain technology, moving from trusted intermediaries to trust-minimized, cryptographic verification protocols.

Theory

The mechanics of External Data Validation depend on aggregating diverse data points into a representative price index. This involves statistical filtering to discard outliers that could otherwise trigger erroneous liquidations. The robustness of these filters largely determines the stability of the entire derivatives ecosystem.

Consensus Mechanisms

- Data Aggregation involves polling multiple independent sources to derive a median or volume-weighted average price.

- Deviation Thresholds define how far the price must move before a new update is published on-chain, so that minor noise does not trigger frequent, gas-intensive writes.

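- Cryptographic Proofs, such as digital signatures, verify that the data was produced by an authorized key and was not altered in transit; on their own they cannot prove that the signer's underlying source is uncompromised.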
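The first two mechanisms can be sketched in a few lines of Python. This is a minimal illustration, not any particular oracle network's implementation: the `Quote` type, the 50-basis-point deviation threshold, and the one-hour heartbeat (a maximum interval after which an update is pushed even if the price has not moved) are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Quote:
    source: str    # hypothetical source label, e.g. an exchange name
    price: float   # price reported by that source
    volume: float  # volume traded on that source during the sampling window


def median_price(quotes: list[Quote]) -> float:
    """Median of reported prices; robust to a minority of extreme values."""
    return median([q.price for q in quotes])


def vwap(quotes: list[Quote]) -> float:
    """Volume-weighted average price across all sources."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("no volume to weight by")
    return sum(q.price * q.volume for q in quotes) / total_volume


def should_push_update(last_price: float, new_price: float,
                       seconds_since_last: float,
                       deviation_bps: float = 50.0,
                       heartbeat_s: float = 3600.0) -> bool:
    """Publish on-chain only if the move exceeds the threshold or the heartbeat expired."""
    moved_bps = abs(new_price - last_price) / last_price * 10_000
    return moved_bps >= deviation_bps or seconds_since_last >= heartbeat_s
```

With quotes of 100, 101 and 250 (volumes 10, 30 and 1), the median stays at 101 while the VWAP is about 104.39: volume weighting dampens the thin outlier but does not remove it, which is one reason aggregation is often paired with explicit outlier filtering.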

Mathematical filtering of off-chain data prevents localized price spikes from destabilizing the on-chain margin engine.

Together, these mechanisms form a layered defense. When an adversarial agent manipulates the price on a specific exchange, the aggregation algorithm detects the deviation and excludes the compromised data point. This behavior rests on the assumption that a majority of sources remain honest, or that the cost of corrupting enough of them to move the aggregate exceeds the potential profit from a successful exploit.
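A minimal sketch of the exclusion step, assuming a simple relative band around the cross-source median; `max_rel_dev` and `min_sources` are hypothetical tuning parameters, and production systems may use other statistics (for example, median absolute deviation).

```python
from statistics import median


def robust_price(prices: list[float], max_rel_dev: float = 0.02,
                 min_sources: int = 3) -> float:
    """Aggregate after discarding quotes that sit too far from the cross-source median.

    max_rel_dev and min_sources are illustrative values, not protocol constants.
    """
    if not prices:
        raise ValueError("no quotes")
    center = median(prices)
    kept = [p for p in prices if abs(p - center) <= max_rel_dev * center]
    if len(kept) < min_sources:
        # Too few sources agree: refuse to publish rather than settle on a bad price.
        raise ValueError("insufficient agreeing sources")
    return median(kept)
```

For example, with quotes of 100.0, 100.5, 99.8, 100.2 and 180.0, the 180.0 quote is discarded and the function returns 100.1. If three of five sources instead report 150.0, the median itself moves to 150.0 and the filter discards the honest quotes: the sketch inherits exactly the honest-majority assumption stated above.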

Approach

Current methodologies prioritize high-frequency updates and multi-layered redundancy to mitigate systemic risks.

Developers now implement filtering algorithms that weigh individual data nodes by their reputation and historical accuracy. This helps keep the inputs governing option pricing models consistent with global liquidity trends.
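One way to fold node reputation into aggregation is a weighted median, where each node's weight is the inverse of its mean absolute historical error. The sketch below assumes that scoring rule and the `error_history` format; both are illustrative rather than taken from a specific protocol.

```python
def reputation_weights(error_history: dict[str, list[float]],
                       eps: float = 1e-6) -> dict[str, float]:
    """Weight each node by the inverse of its mean absolute historical error.

    error_history maps a hypothetical node id to past (reported - settled) errors;
    every node is assumed to have at least one recorded error.
    """
    return {
        node: 1.0 / (eps + sum(abs(e) for e in errors) / len(errors))
        for node, errors in error_history.items()
    }


def weighted_median(values: list[float], weights: list[float]) -> float:
    """Smallest value whose cumulative weight reaches half of the total weight."""
    pairs = sorted(zip(values, weights))
    half = sum(weights) / 2
    cumulative = 0.0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= half:
            return value
    raise ValueError("empty input")


def reputation_weighted_price(reports: dict[str, float],
                              error_history: dict[str, list[float]]) -> float:
    """Combine current reports using weights derived from each node's track record."""
    weights = reputation_weights(error_history)
    nodes = list(reports)
    return weighted_median([reports[n] for n in nodes], [weights[n] for n in nodes])
```

With one accurate node reporting 100.0 and two historically inaccurate nodes reporting 120.0 and 121.0, the plain median would be 120.0, while the reputation-weighted median returns 100.0. The trade-off is that a node with a strong track record gains outsized influence, so a long-trusted node that turns malicious becomes correspondingly more dangerous.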

| Mechanism | Function | Risk Profile |
| --- | --- | --- |
| Decentralized Oracles | Multi-node data aggregation | Low |
| Direct Exchange Feeds | Real-time price stream | High |
| ZK Proof Verification | Compressed data integrity | Very Low |

The operational focus centers on latency reduction. In fast-moving markets, even a few seconds of delay in data validation can lead to significant slippage or unfair liquidations. Consequently, architects are deploying off-chain computation layers that process data and provide signed proofs to the smart contract, which then validates these inputs against predefined protocol constraints.
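The sign-then-validate flow can be modeled as follows. This is a sketch under stated assumptions: HMAC-SHA256 from Python's standard library substitutes for the public-key signatures (such as ECDSA) an oracle network would actually use, the contract-side checks are written in Python rather than a contract language, and `AUTHORIZED_KEYS`, `max_age_s` and the price bounds are hypothetical constraints.

```python
import hashlib
import hmac
import json

# Hypothetical registry of authorized reporters. HMAC-SHA256 with shared secrets is a
# stand-in for the public-key signatures (e.g. ECDSA) a real oracle network would use.
AUTHORIZED_KEYS: dict[str, bytes] = {"node-1": b"demo-secret-1"}


def sign_report(node_id: str, key: bytes, price: float, timestamp: float) -> dict:
    """Off-chain side: serialize a price report and attach a MAC over the exact bytes."""
    payload = json.dumps({"node": node_id, "price": price, "ts": timestamp},
                         sort_keys=True).encode()
    return {"payload": payload, "sig": hmac.new(key, payload, hashlib.sha256).hexdigest()}


def verify_report(report: dict, now: float, max_age_s: float = 30.0,
                  min_price: float = 0.0, max_price: float = 1e9) -> float:
    """Contract side (modeled in Python): check origin, integrity, freshness and bounds."""
    data = json.loads(report["payload"])
    key = AUTHORIZED_KEYS.get(data["node"])
    if key is None:
        raise ValueError("unknown reporter")
    expected = hmac.new(key, report["payload"], hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, report["sig"]):
        raise ValueError("signature mismatch")
    if not 0.0 <= now - data["ts"] <= max_age_s:
        raise ValueError("stale or future-dated report")
    if not min_price < data["price"] < max_price:
        raise ValueError("price outside protocol bounds")
    return data["price"]
```

Each check maps to one of the constraints the paragraph mentions: the key registry limits who may report, the MAC rejects any tampered payload, the freshness window bounds how stale an accepted report can be, and the price bounds reject implausible values.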

Evolution

The path from simple, centralized price feeds to sophisticated, multi-source validation systems represents a maturation of the decentralized derivatives sector.

Initial systems often produced erratic or exploitable prices during market stress events, because their validation mechanisms failed to account for sudden liquidity drops. This prompted a pivot toward more resilient, modular architectures that incorporate historical data analysis and circuit breakers.
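A circuit breaker can be sketched as a guard that compares each new price with the average of recently accepted prices and halts acceptance after an outsized jump. The class name, the five-sample window and the 10% jump limit below are assumptions for illustration.

```python
from collections import deque


class CircuitBreaker:
    """Halt price acceptance when a new value jumps too far from recent history.

    window and max_jump are illustrative values, not taken from any specific protocol.
    """

    def __init__(self, window: int = 5, max_jump: float = 0.10):
        self.history: deque = deque(maxlen=window)  # recently accepted prices
        self.max_jump = max_jump
        self.tripped = False

    def submit(self, price: float) -> bool:
        """Return True if the price is accepted; trip the breaker on an outsized jump."""
        if self.tripped:
            return False
        if self.history:
            reference = sum(self.history) / len(self.history)
            if abs(price - reference) / reference > self.max_jump:
                self.tripped = True  # stays halted until an explicit reset
                return False
        self.history.append(price)
        return True

    def reset(self) -> None:
        """Re-enable acceptance, e.g. after governance or keeper review."""
        self.tripped = False
```

A genuine crash trips the breaker just as a manipulated feed does; the design deliberately prefers halting until an explicit `reset()` over settling or liquidating on an unverified move.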

Adaptive validation logic now incorporates real-time liquidity assessment to adjust for market stress and prevent cascading failures.
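A minimal sketch of liquidity-aware aggregation, assuming each source reports a price together with a depth figure (for example, resting order-book size near the mid price): sources below a minimum depth are ignored, the remaining prices are weighted by depth, and a flag signals stress when total liquid depth falls below a floor. The depth inputs and both thresholds are hypothetical.

```python
def liquidity_adjusted_price(quotes: list[tuple[float, float]],
                             min_depth: float = 50_000.0,
                             stress_depth: float = 500_000.0) -> tuple[float, bool]:
    """Depth-weighted price over sufficiently liquid sources, plus a stress flag.

    quotes are (price, depth) pairs; depth is a hypothetical per-source liquidity
    measure, and min_depth / stress_depth are illustrative thresholds.
    """
    liquid = [(p, d) for p, d in quotes if d >= min_depth]
    if not liquid:
        raise ValueError("no source meets the minimum depth")
    total_depth = sum(d for _, d in liquid)
    price = sum(p * d for p, d in liquid) / total_depth
    stressed = total_depth < stress_depth  # thin aggregate liquidity
    return price, stressed
```

How a protocol reacts to the stress flag is a design decision; one option, assumed here purely for illustration, is to pause liquidations or widen margin requirements while it is set.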

We are currently witnessing a shift toward cross-chain data verification. As derivatives protocols expand across multiple networks, the ability to validate data from disparate sources becomes critical, because it allows for a unified price reference that remains consistent regardless of the underlying blockchain environment.
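A cross-chain consistency check can be sketched as follows, assuming prices for the same asset have already been relayed from each chain with a timestamp (the relay mechanism is out of scope and hypothetical here). Stale observations are dropped, and the median is accepted as the unified reference only if every fresh chain agrees with it within `max_spread`.

```python
from statistics import median


def unified_reference(chain_prices: dict[str, tuple[float, float]], now: float,
                      max_spread: float = 0.005, max_age_s: float = 60.0) -> float:
    """Return a cross-chain reference price, or raise if chains disagree.

    chain_prices maps a hypothetical chain name to (price, timestamp) as relayed
    from that chain; max_spread and max_age_s are illustrative limits.
    """
    fresh = {chain: price for chain, (price, ts) in chain_prices.items()
             if 0.0 <= now - ts <= max_age_s}
    if len(fresh) < 2:
        raise ValueError("need fresh prices from at least two chains")
    reference = median(list(fresh.values()))
    for chain, price in fresh.items():
        if abs(price - reference) / reference > max_spread:
            raise ValueError(f"{chain} diverges from the cross-chain reference")
    return reference
```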

The integration of zero-knowledge proofs further strengthens this process: instead of transmitting and processing a large dataset on-chain, a contract can verify a succinct proof of a computation over that data, preserving the efficiency of the settlement engine.
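A full zero-knowledge circuit is beyond a short example. As a deliberately simpler stand-in, and not a zero-knowledge proof, the sketch below shows a related idea: committing to a large dataset with a single 32-byte Merkle root and verifying one record against it with a proof whose size grows only logarithmically with the dataset. Unlike a ZK proof, it reveals the record and proves only inclusion, not the correctness of a computation over the data. The record format is hypothetical.

```python
import hashlib

LEAF, NODE = b"\x00", b"\x01"  # domain-separation prefixes for leaves vs internal nodes


def _hash(prefix: bytes, data: bytes) -> bytes:
    return hashlib.sha256(prefix + data).digest()


def build_root_and_proof(records: list[bytes],
                         index: int) -> tuple[bytes, list[tuple[bytes, bool]]]:
    """Return the Merkle root of all (non-empty) records and the path for records[index].

    Each proof step is (sibling_hash, sibling_is_on_the_right).
    """
    level = [_hash(LEAF, r) for r in records]
    proof: list[tuple[bytes, bool]] = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = [_hash(NODE, level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(record: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one record and O(log n) sibling hashes."""
    node = _hash(LEAF, record)
    for sibling, sibling_is_right in proof:
        pair = node + sibling if sibling_is_right else sibling + node
        node = _hash(NODE, pair)
    return node == root
```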

Horizon

The future of External Data Validation lies in the development of trustless, high-fidelity feeds that eliminate the need for human-managed node sets. We anticipate the rise of protocols that derive their validation logic directly from decentralized exchange order books, effectively creating a feedback loop between trading activity and oracle updates. This will likely reduce the reliance on external data providers and move toward a self-contained, endogenous validation system.
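As an illustration of deriving a reference price from a protocol's own order book, the sketch below computes a size-weighted mid-price from the top of the book and refuses to produce a value when the book is crossed or the spread is too wide. The book format and the 1% spread limit are assumptions, and a production design would likely look deeper than the top level.

```python
def book_reference_price(bids: list[tuple[float, float]],
                         asks: list[tuple[float, float]],
                         max_rel_spread: float = 0.01) -> float:
    """Derive a settlement reference from a protocol-native order book.

    bids/asks are (price, size) levels with the best level first (hypothetical format).
    Returns the size-weighted mid-price of the top of the book.
    """
    if not bids or not asks:
        raise ValueError("one side of the book is empty")
    best_bid, bid_size = bids[0]
    best_ask, ask_size = asks[0]
    if best_bid >= best_ask:
        raise ValueError("crossed or locked book")
    mid = (best_bid + best_ask) / 2
    if (best_ask - best_bid) / mid > max_rel_spread:
        # A wide spread signals thin liquidity, where the book is cheaper to manipulate.
        raise ValueError("spread too wide for a reliable reference")
    # Weight each side's price by the opposite side's size, so the estimate leans
    # toward the ask when resting bid size dominates (and vice versa).
    return (best_bid * ask_size + best_ask * bid_size) / (bid_size + ask_size)
```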

Strategic Developments

- Endogenous Price Discovery utilizes protocol-specific order flow to determine settlement prices without reliance on external exchanges.

- Predictive Validation Models use machine learning to identify anomalous data patterns before they impact contract settlement.

- Hardware-Based Security leverages Trusted Execution Environments to ensure that data ingestion occurs within a secure, tamper-proof environment.
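Predictive models are out of scope for a short example, but the underlying idea of flagging a price before it reaches settlement can be sketched with a simple statistical stand-in: a z-score test on one-step returns. This is explicitly a substitute for the machine-learning models described above, and the 4-sigma threshold is an illustrative assumption.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], candidate: float, z_limit: float = 4.0) -> bool:
    """Flag a candidate price whose one-step return is extreme relative to recent returns.

    A simple z-score test standing in for learned models; z_limit is illustrative.
    Needs at least three historical prices (two returns) to estimate dispersion.
    """
    if len(history) < 3:
        raise ValueError("need at least three historical prices")
    returns = [b / a - 1.0 for a, b in zip(history, history[1:])]
    candidate_return = candidate / history[-1] - 1.0
    mu, sigma = mean(returns), stdev(returns)
    if sigma == 0.0:
        # Perfectly flat history: any deviation from the usual return is unusual.
        return candidate_return != mu
    return abs(candidate_return - mu) / sigma > z_limit
```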

The ultimate goal remains minimizing systemic latency. By architecting systems that treat external data as a verifiable stream of proofs rather than a reactive input, the next generation of decentralized options could approach parity with institutional trading venues. The critical open question is whether the computational cost of these advanced validation techniques will scale alongside the increasing throughput requirements of global decentralized markets.