Essence

Execution Speed Optimization functions as the structural bedrock for competitive participation in decentralized derivatives markets. It covers latency reduction across the entire lifecycle of an order: local generation, network propagation, validation, and final settlement within a smart contract. This capability determines whether market participants can capture fleeting arbitrage opportunities, manage liquidation risk under extreme volatility, and provide liquidity without suffering adverse selection.

Execution Speed Optimization represents the technical capacity to minimize the temporal distance between intent and market settlement.

At its core, this optimization is an adversarial race against the physical constraints of distributed ledgers and the strategic maneuvers of competing agents. Systems must account for the asynchronous nature of decentralized sequencing, where the order of operations directly impacts financial outcomes. Participants who master this domain secure a persistent edge, effectively transforming technical throughput into capital efficiency.

Origin

The necessity for Execution Speed Optimization emerged alongside the transition from simple spot exchanges to complex on-chain derivative protocols.

Early iterations of decentralized finance relied on naive transaction broadcasting, where orders were processed in the order they arrived at a node and were often subject to unpredictable network congestion. This environment created fertile ground for front-running and sandwich attacks, in which sophisticated actors used superior infrastructure to extract value from slower participants.

- Latency Arbitrage served as the historical driver, compelling developers to engineer custom RPC nodes and direct peering connections.

- Mempool Dynamics forced a shift toward private transaction relayers to circumvent public mempool exposure.

- Validator Relationships incentivized the development of direct submission channels to influence block production.

These early challenges necessitated a fundamental rethink of how derivatives interact with underlying protocol physics. Developers moved from treating blockchain settlement as a static event to viewing it as a dynamic, high-stakes game of speed and priority.

Theory

The theoretical framework governing Execution Speed Optimization relies on the interaction between market microstructure and protocol consensus mechanisms. Quantitative models must incorporate the Greeks, specifically Delta and Gamma, as inputs into the execution engine to determine the urgency of order placement.

In high-volatility environments, the cost of delay is not just the loss of a favorable price, but the potential for catastrophic failure in margin maintenance.
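One way to turn Delta and Gamma into an urgency signal is to estimate how much an unhedged position could lose while an order is still in flight. The sketch below is illustrative only: the function names, the second-order Taylor approximation, the `z` stress multiple, and the idea of scaling the result by a margin buffer are assumptions introduced here, not a method described in the text.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def delay_cost(delta: float, gamma: float, spot: float,
               annual_vol: float, delay_s: float, z: float = 2.0) -> float:
    """Approximate worst-case loss over `delay_s` seconds for a position
    with the given Delta and Gamma (hypothetical helper).

    The underlying is assumed to move by z standard deviations in either
    direction, and P&L is approximated to second order:
        pnl ~= delta * dS + 0.5 * gamma * dS**2
    """
    move = z * spot * annual_vol * math.sqrt(delay_s / SECONDS_PER_YEAR)
    pnl_up = delta * move + 0.5 * gamma * move ** 2
    pnl_down = -delta * move + 0.5 * gamma * move ** 2
    # Report the loss as a non-negative number; 0.0 if neither move loses.
    return max(0.0, -min(pnl_up, pnl_down))


def urgency(cost: float, margin_buffer: float) -> float:
    """Share of the remaining margin buffer put at risk by the delay,
    capped at 1.0 (an assumed scaling, not a standard metric)."""
    if margin_buffer <= 0:
        return 1.0
    return min(1.0, cost / margin_buffer)
```

Under these assumptions, a short-Gamma position becomes more urgent as the expected delay grows, because both the linear and the quadratic terms scale with the size of the move.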

| Factor | Impact on Execution |
| --- | --- |
| Network Latency | Determines signal propagation delay |
| Block Time | Sets the frequency of state updates |
| Gas Pricing | Dictates priority in the mempool |
| MEV Extraction | Influences risk of transaction displacement |

The financial viability of a derivatives strategy remains tethered to the technical throughput of the underlying settlement layer.

Mathematical modeling of this space requires a probabilistic approach to transaction inclusion. Because decentralized systems lack a centralized matching engine, participants model their execution as a stochastic process where the probability of success correlates with the speed of submission and the economic weight assigned to the transaction.
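The stochastic view of inclusion can be made concrete with a small model. The sketch below assumes a logistic relationship between the fee premium and the per-block inclusion probability, and treats blocks as independent trials; both are simplifying assumptions chosen for illustration, and the function names and the `sensitivity` parameter are hypothetical.

```python
import math


def per_block_inclusion_prob(fee_bid: float, market_fee: float,
                             sensitivity: float = 4.0) -> float:
    """Assumed logistic model: bidding exactly the prevailing fee gives a
    50% chance of inclusion in the next block; higher bids raise it."""
    if market_fee <= 0:
        raise ValueError("market_fee must be positive")
    premium = fee_bid / market_fee - 1.0
    return 1.0 / (1.0 + math.exp(-sensitivity * premium))


def blocks_before_deadline(deadline_s: float, submit_latency_s: float,
                           block_time_s: float) -> int:
    """Whole blocks still available once submission latency is spent."""
    usable = deadline_s - submit_latency_s
    if usable <= 0:
        return 0
    return int(usable // block_time_s)


def prob_included_by_deadline(p_block: float, n_blocks: int) -> float:
    """Probability of at least one inclusion across n independent blocks."""
    return 1.0 - (1.0 - p_block) ** n_blocks
```

In this toy model, speed and economic weight enter separately: lower submission latency increases the number of blocks available before a deadline, while a higher fee bid raises the chance of inclusion in each of them.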

Approach

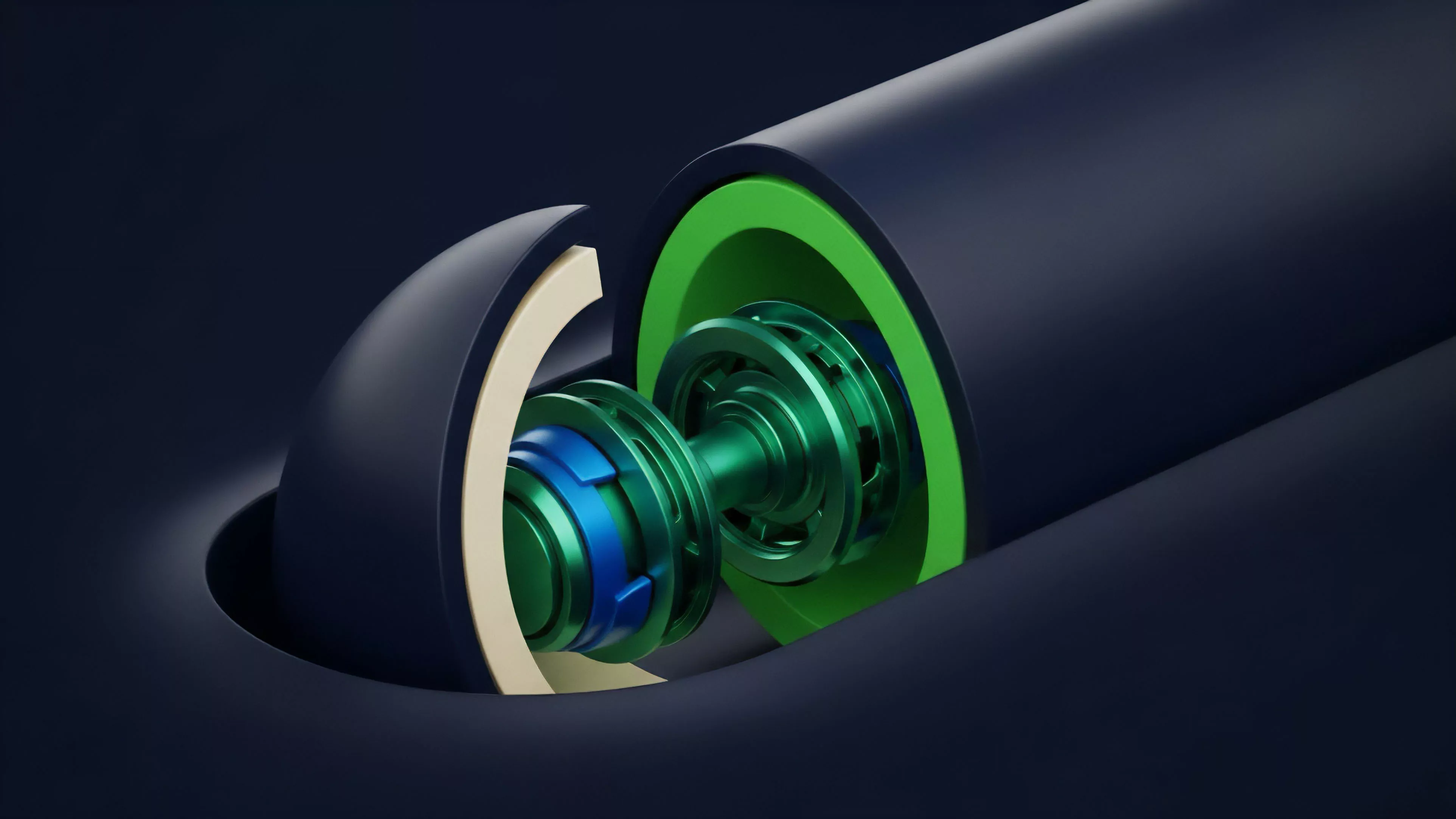

Current strategies for Execution Speed Optimization focus on minimizing the path length between the trading algorithm and the block producer. This involves a multi-layered architectural approach that prioritizes deterministic outcomes over broadcast-based methods.

Sophisticated participants now operate proprietary infrastructure stacks that bypass public network entry points.

- Colocation of execution agents with validator infrastructure significantly reduces physical propagation time.

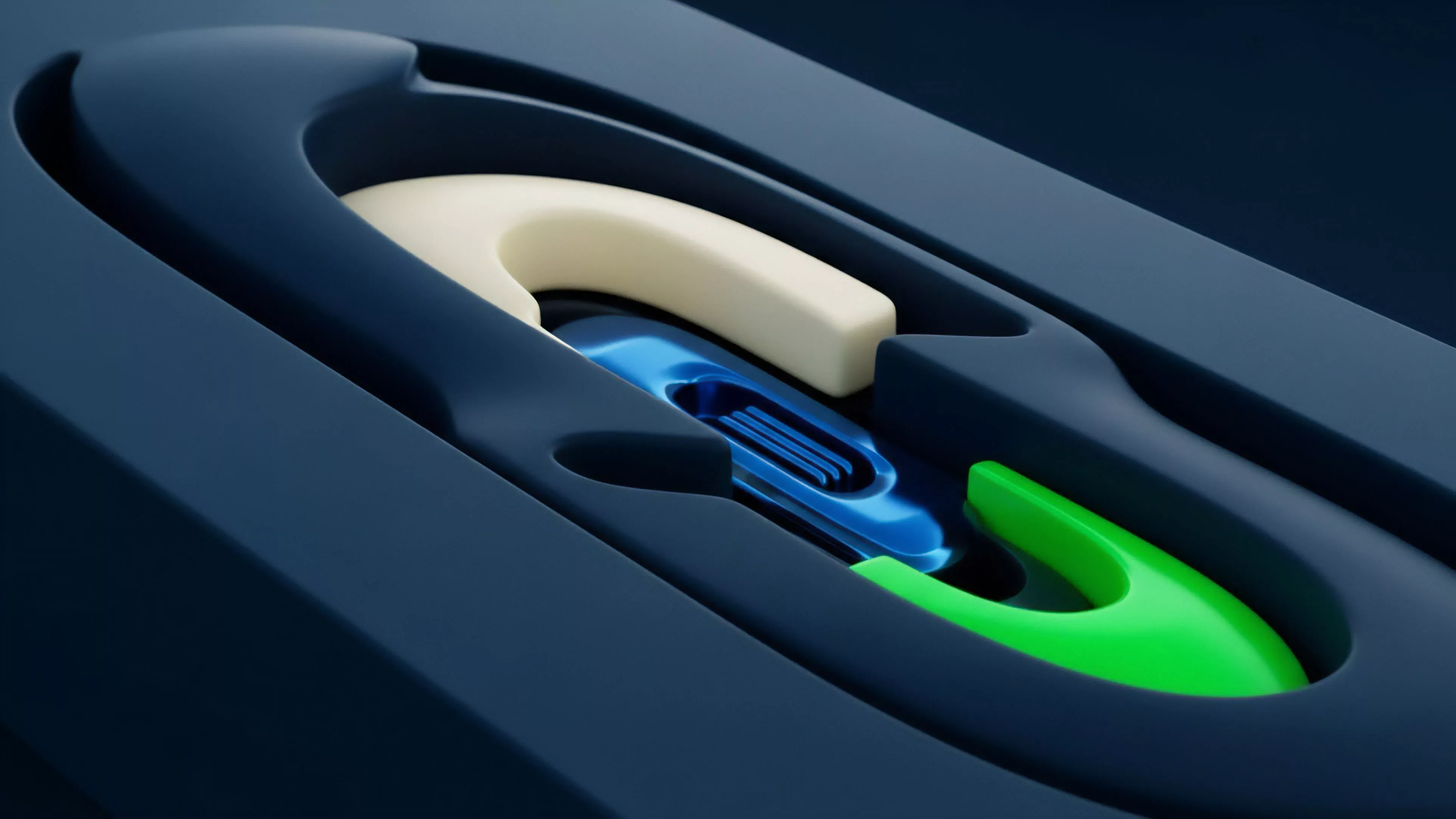

- Private Relayers allow for the submission of sensitive orders directly to block builders, mitigating the risk of mempool monitoring.

- Pre-confirmation Services provide near-instantaneous feedback on transaction success, allowing for rapid strategy adjustment.
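The trade-off between these submission paths can be sketched as a simple expected-cost comparison. Everything in the example is hypothetical: the `Route` type, the latency and exposure figures, and the cost formula are assumptions made for illustration and do not describe any particular relayer or builder.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Route:
    name: str
    latency_s: float      # assumed time for the order to reach a block producer
    exposure_prob: float  # assumed chance the order is observed and displaced


def expected_cost(route: Route, delay_cost_per_s: float,
                  displacement_loss: float) -> float:
    """Cost of waiting plus the expected loss from being displaced."""
    return (route.latency_s * delay_cost_per_s
            + route.exposure_prob * displacement_loss)


def pick_route(routes: Sequence[Route], delay_cost_per_s: float,
               displacement_loss: float) -> Route:
    """Return the route with the lowest expected cost."""
    if not routes:
        raise ValueError("at least one route is required")
    return min(
        routes,
        key=lambda r: expected_cost(r, delay_cost_per_s, displacement_loss),
    )


# Illustrative numbers only.
ROUTES = [
    Route("public_mempool", latency_s=0.10, exposure_prob=0.20),
    Route("private_relay", latency_s=0.15, exposure_prob=0.02),
]
```

With these made-up figures, the private route wins whenever the potential displacement loss is large relative to the cost of the extra latency, and the public route wins when the order carries little extractable value.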

This domain demands an adversarial mindset. Every architectural choice is tested by competing agents seeking to exploit inefficiencies in the protocol. Systems are designed to be resilient against network partitioning and to maximize the probability of transaction inclusion during periods of extreme market stress.

Evolution

The trajectory of Execution Speed Optimization reflects the broader maturation of decentralized finance.

Initially, the focus remained on basic gas optimization and simple transaction rebroadcasting. As protocols evolved, the requirement shifted toward managing complex liquidation cascades, where execution speed became the primary defense against insolvency. The industry is currently moving toward off-chain matching engines that offer performance comparable to centralized venues while maintaining cryptographic verifiability.

This transition acknowledges the limitations of current base-layer throughput. We are witnessing the emergence of specialized hardware and customized consensus rules designed specifically to support high-frequency derivative activity. The shift from monolithic chains to modular architectures complicates execution further, because orders must now navigate liquidity fragmented across chains.

Horizon

Future developments in Execution Speed Optimization will likely center on the integration of Zero-Knowledge Proofs and Threshold Cryptography to achieve private, high-speed execution.

These technologies will decouple order privacy from transaction speed, allowing participants to commit to trades without revealing intent until the moment of settlement.

Advanced execution frameworks will eventually automate the negotiation of block space priority through programmable consensus mechanisms.

Expect to see a greater emphasis on Asynchronous Execution, where complex derivative strategies are decomposed into smaller, atomic operations that settle across different shards or layer-two networks. The ultimate goal is the creation of a seamless, global liquidity fabric where the technical cost of execution approaches zero, leaving only market risk as the primary variable for participants to manage. The success of these systems will hinge on their ability to maintain decentralization while providing the performance metrics demanded by global financial institutions.