Essence

Execution Algorithm Design is the mathematical and structural blueprint governing how orders move from intent to on-chain settlement. It is the logic layer that manages the lifecycle of a trade, balancing the need for rapid fills against the risks of price slippage and adverse selection in fragmented, high-latency decentralized environments. The primary objective is to minimize market impact while maximizing the probability of filling an order at a target price.

Execution algorithm design functions as the systematic translation of trading intent into optimal market outcomes by managing liquidity, latency, and slippage.

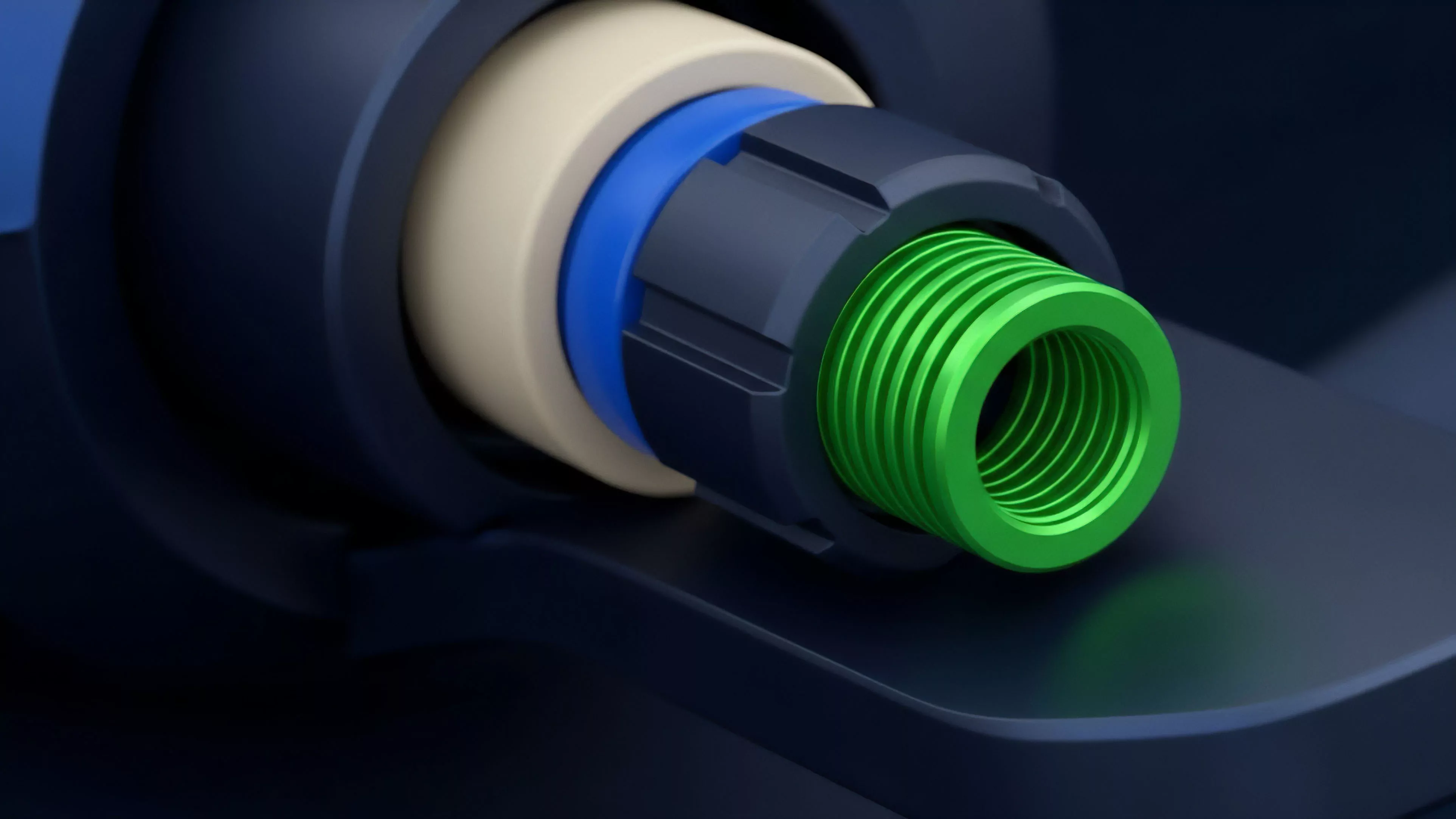

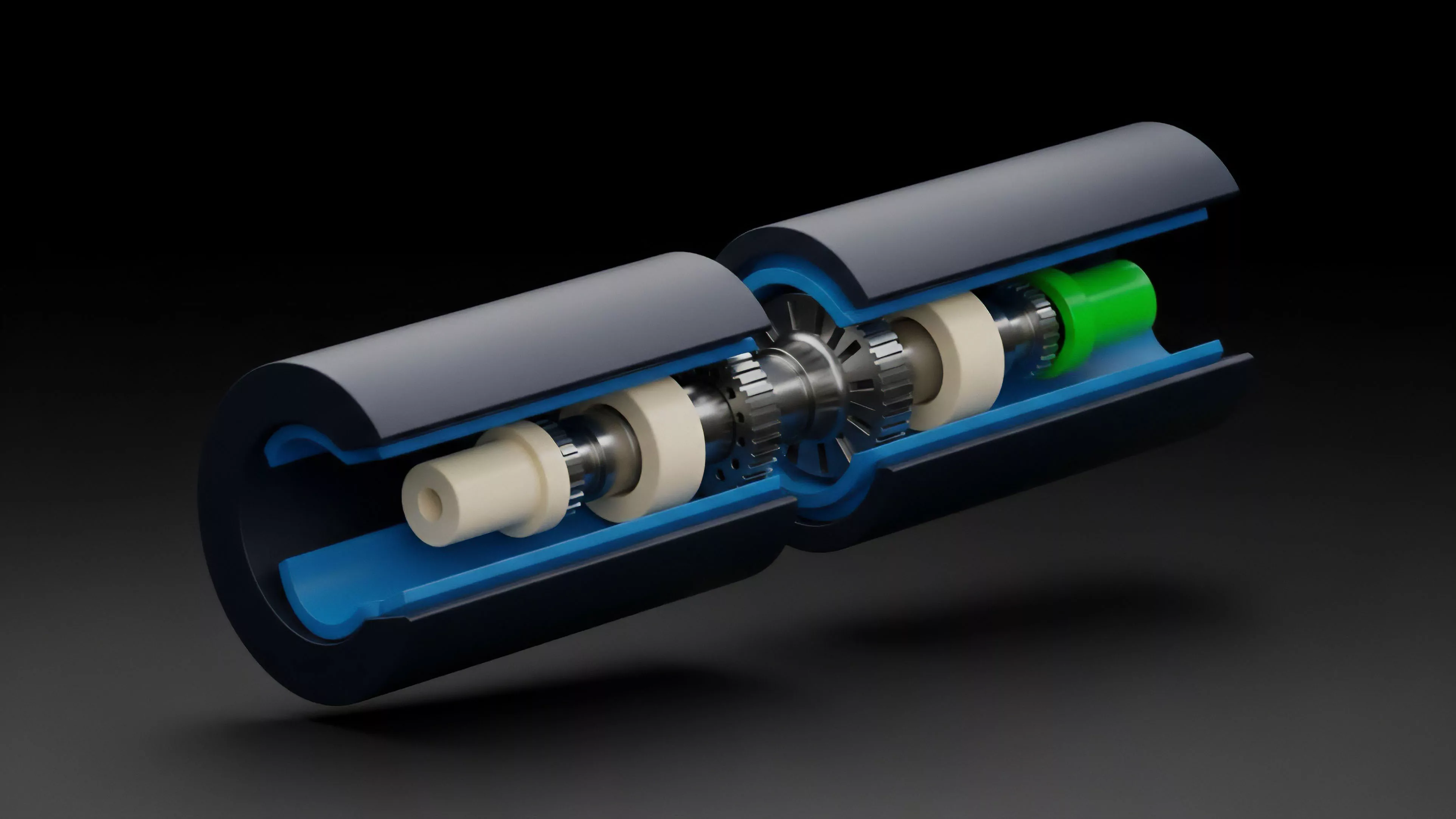

At the architectural level, these designs dictate how an order interacts with the order book or liquidity pool. They determine whether to slice large orders into smaller fragments, how to distribute these fragments across multiple venues, and when to pause execution based on real-time market signals. The design must account for the specific constraints of the underlying blockchain, such as block time latency, gas costs, and the fact that block producers, not submitters, decide how pending mempool transactions are ordered, typically by fee rather than by arrival time.

Origin

The lineage of Execution Algorithm Design traces back to traditional equity markets, specifically the evolution of electronic communication networks and the rise of algorithmic trading desks in the late 1990s.

Early frameworks focused on minimizing transaction costs through simple strategies like Time-Weighted Average Price or Volume-Weighted Average Price. These methods aimed to mask large parent orders from predatory high-frequency participants by breaking them into smaller child orders.
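The two schedules named above can be sketched in a few lines. This is a minimal illustration, not a production scheduler: the function names and the per-bucket `volume_profile` input are assumptions introduced here, and real implementations also handle rounding, minimum order sizes, and intraday forecast errors.

```python
from typing import List


def twap_schedule(parent_qty: float, n_slices: int) -> List[float]:
    """Time-weighted: split the parent order into equal child orders."""
    if n_slices <= 0:
        raise ValueError("n_slices must be positive")
    return [parent_qty / n_slices] * n_slices


def vwap_schedule(parent_qty: float, volume_profile: List[float]) -> List[float]:
    """Volume-weighted: size each child in proportion to expected volume.

    volume_profile is a hypothetical forecast of traded volume per time bucket.
    """
    total = sum(volume_profile)
    if total <= 0:
        raise ValueError("volume_profile must sum to a positive number")
    return [parent_qty * v / total for v in volume_profile]


if __name__ == "__main__":
    print(twap_schedule(1000.0, 4))
    print(vwap_schedule(1000.0, [10.0, 30.0, 40.0, 20.0]))
```

Both functions return child sizes that sum to the parent quantity; the only difference is whether the weights come from the clock or from the expected volume curve.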

Legacy market principles regarding order fragmentation and latency arbitrage provide the foundational logic for modern decentralized execution strategies.

Transitioning these concepts to crypto-native environments required a radical shift in perspective. The move from centralized limit order books to automated market makers introduced new variables, such as impermanent loss, front-running via maximal extractable value, and the absence of a unified global price. Developers began engineering algorithms capable of navigating these decentralized primitives, prioritizing resilience against adversarial actors over simple cost-minimization.

Theory

The theoretical framework rests on the intersection of market microstructure and quantitative finance.

An effective Execution Algorithm Design treats the market as an adversarial system where information leakage and latency are the primary enemies. The model must solve for an execution path that minimizes expected cost, often defined as the gap between the expected execution price and the mid-market price at the time the order is initiated, adjusted for the cost of time and risk.
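One common way to write this objective down is the mean-variance form used in the optimal-execution literature. It is offered here as an assumed formalization, not as a formula taken from this text. For a buy order of total size $X$ split into child orders $x_1, \dots, x_N$ filled at prices $p_1, \dots, p_N$, with $p_0$ the mid-market price when execution starts and $\lambda \ge 0$ a risk-aversion parameter:

$$
C = \sum_{k=1}^{N} x_k \,(p_k - p_0), \qquad \sum_{k=1}^{N} x_k = X,
$$

$$
\min_{x_1, \dots, x_N} \; \mathbb{E}[C] + \lambda \, \mathrm{Var}[C].
$$

Under this reading, maximizing utility is the same as minimizing risk-adjusted cost: $\mathbb{E}[C]$ captures the expected price impact of trading quickly, while $\lambda \, \mathrm{Var}[C]$ penalizes the price risk of trading slowly.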

Mathematical Components

- Order Slicing: Dividing a large position into discrete units to minimize the footprint on the order book.

- Latency Management: Adjusting the timing of order submission to account for block production intervals and mempool congestion.

- Price Impact Modeling: Estimating the slippage caused by the order size relative to the depth of the available liquidity.
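The slicing and price-impact components can be tied together with a small sketch. It assumes a fee-less constant-product pool (x * y = k), where trading `amount_in` against `reserve_in` yields a realized price that falls short of spot by `amount_in / (reserve_in + amount_in)`. It also assumes the pool is rebalanced by arbitrageurs between child orders, which is not guaranteed on-chain. All function names are hypothetical.

```python
import math
from typing import List


def price_impact(reserve_in: float, amount_in: float) -> float:
    """Fractional shortfall versus spot price in a fee-less x*y=k pool."""
    if reserve_in <= 0 or amount_in < 0:
        raise ValueError("reserve_in must be positive and amount_in non-negative")
    return amount_in / (reserve_in + amount_in)


def max_child_size(reserve_in: float, max_impact: float) -> float:
    """Largest input whose price impact stays at or below max_impact.

    Solves amount_in / (reserve_in + amount_in) = max_impact for amount_in.
    """
    if not 0 < max_impact < 1:
        raise ValueError("max_impact must be strictly between 0 and 1")
    return reserve_in * max_impact / (1 - max_impact)


def slice_order(parent_qty: float, child_cap: float) -> List[float]:
    """Split the parent into the fewest equal children that respect child_cap."""
    if parent_qty <= 0 or child_cap <= 0:
        raise ValueError("parent_qty and child_cap must be positive")
    n = math.ceil(parent_qty / child_cap)
    return [parent_qty / n] * n


if __name__ == "__main__":
    cap = max_child_size(reserve_in=1000.0, max_impact=0.01)
    print(slice_order(parent_qty=100.0, child_cap=cap))
```

Without the rebalancing assumption, consecutive slices against the same untouched pool walk the same curve as one large trade, so slicing only helps when liquidity has time to recover between children.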

Mathematical modeling of order execution requires precise estimation of liquidity depth and real-time sensitivity to adverse market signals.

When the traded instrument is an option, the design process also tracks the Greeks so that the delta or gamma exposure built up during the execution window can be hedged. For instance, if an algorithm executes an options spread, it must monitor the volatility skew and adjust its bidding behavior to avoid filling at disadvantageous prices when market sentiment shifts. The interaction between the algorithm and the protocol’s consensus mechanism is critical, as high-frequency submission can lead to transaction reverts or increased gas expenditure.

Approach

Current strategies prioritize robustness in the face of unpredictable on-chain conditions.

Architects utilize sophisticated feedback loops that adjust execution parameters based on real-time volatility data and mempool monitoring. Instead of relying on static models, modern systems employ dynamic strategies that react to sudden liquidity withdrawals or aggressive arbitrage activity.
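A feedback loop of this kind might look like the sketch below. The control rule is an assumption chosen for illustration: the participation rate is scaled inversely to realized volatility, and execution is paused when visible depth falls below a fraction of a reference level. The parameter names and default thresholds are hypothetical.

```python
import statistics
from typing import Sequence


def realized_vol(prices: Sequence[float]) -> float:
    """Population standard deviation of simple returns over the window."""
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    returns = [prices[i] / prices[i - 1] - 1.0 for i in range(1, len(prices))]
    return statistics.pstdev(returns)


def next_participation(
    base_rate: float,
    prices: Sequence[float],
    depth_now: float,
    depth_ref: float,
    target_vol: float = 0.002,
    floor: float = 0.0,
    cap: float = 0.25,
    pause_depth_ratio: float = 0.5,
) -> float:
    """Return the share of market volume to target in the next interval."""
    # Sudden liquidity withdrawal: stop trading until depth recovers.
    if depth_now < pause_depth_ratio * depth_ref:
        return 0.0
    vol = realized_vol(prices)
    # Calm market: keep the base rate. Volatile market: scale it down.
    rate = base_rate if vol == 0 else base_rate * target_vol / vol
    return max(floor, min(cap, rate))


if __name__ == "__main__":
    window = [100.0, 101.0, 100.0, 101.0, 100.0]
    print(next_participation(0.10, window, depth_now=900.0, depth_ref=1000.0))
```

The rule is deliberately simple; the point is that the rate is recomputed from live inputs on every interval rather than fixed when the order is created.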

| Strategy | Primary Objective | Risk Factor |
| --- | --- | --- |
| Volume Participation | Align execution with market flow | Information leakage |
| Liquidity Taker | Prioritize speed of fill | High price impact |
| Mempool Arbitrage | Front-run price shifts | High gas competition |

The deployment of Execution Algorithm Design often involves off-chain computation that submits transactions to the chain only when specific conditions are met. This hybrid architecture mitigates the cost of continuous on-chain polling while maintaining the transparency of decentralized settlement. The strategist must weigh the trade-off between the precision of off-chain models and the necessity of on-chain confirmation.
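The hybrid pattern can be sketched as an off-chain check that gates a single on-chain submission. Everything below is illustrative: `Snapshot`, `Conditions`, and the injected `read_snapshot` and `submit` callables are hypothetical stand-ins for whatever data feed and transaction sender a real system would use.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Snapshot:
    price: float            # current quoted price for the asset being bought
    gas_price_gwei: float   # current gas price


@dataclass(frozen=True)
class Conditions:
    limit_price: float      # buy only at or below this price
    max_gas_gwei: float     # skip submission when gas is above this


def should_submit(snap: Snapshot, cond: Conditions) -> bool:
    """Pure off-chain check; costs nothing to evaluate repeatedly."""
    return snap.price <= cond.limit_price and snap.gas_price_gwei <= cond.max_gas_gwei


def run_once(
    read_snapshot: Callable[[], Snapshot],
    submit: Callable[[Snapshot], str],
    cond: Conditions,
) -> Optional[str]:
    """Evaluate conditions off-chain and submit only when they hold.

    Returns whatever identifier submit() returns, or None if nothing was sent.
    """
    snap = read_snapshot()
    if should_submit(snap, cond):
        return submit(snap)
    return None


if __name__ == "__main__":
    cond = Conditions(limit_price=100.0, max_gas_gwei=30.0)
    result = run_once(lambda: Snapshot(99.5, 22.0), lambda s: "submitted", cond)
    print(result)
```

Because the price can move between the off-chain check and block inclusion, a real deployment would typically also enforce the limit inside the transaction itself, for example through a minimum-output parameter.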

Evolution

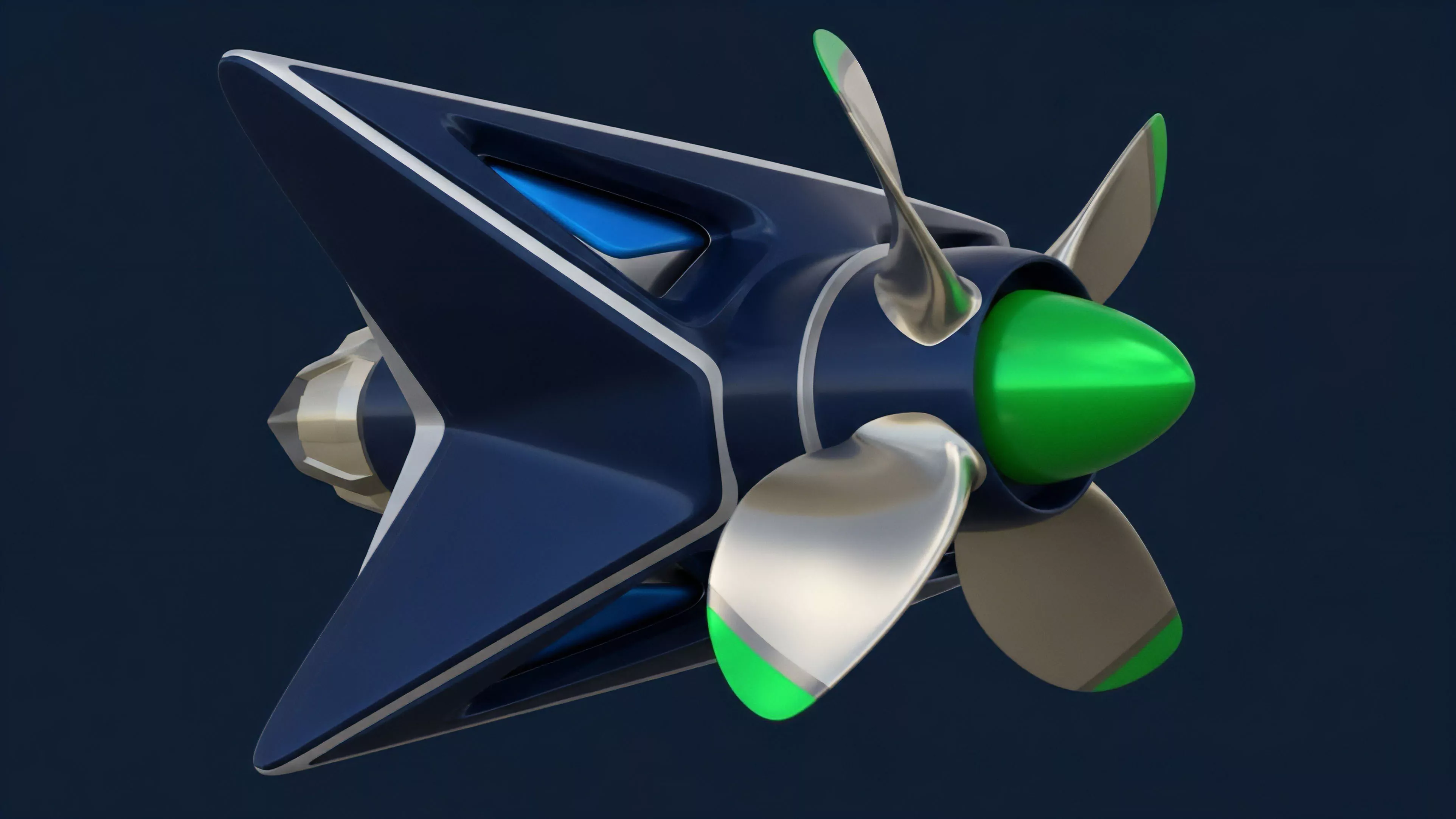

The trajectory of these designs has shifted from basic execution tools to autonomous, agentic systems capable of complex decision-making.

Initially, traders merely used scripts to automate manual clicking. The current environment demands agents that interpret on-chain data to anticipate market moves before they occur. This shift toward predictive execution reflects the increasing maturity of decentralized derivative venues.

Autonomous execution agents now replace static scripts by interpreting real-time on-chain signals to predict liquidity shifts and minimize impact.

The integration of Cross-Protocol Liquidity has further forced these designs to monitor and act on several venues concurrently. An algorithm today might monitor three different decentralized exchanges and a bridge simultaneously, routing orders to the venue with the lowest realized cost. This level of complexity requires a sophisticated understanding of smart contract security, as the algorithm itself becomes a target for exploitation if the execution logic is not hardened against re-entrancy or sandwich attacks.
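Routing by lowest realized cost reduces to comparing net proceeds across venues. The sketch below assumes each venue returns a quote already expressed in the same unit, with gas and any bridge fee converted into that unit; the `Quote` type and venue names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Quote:
    venue: str
    amount_out: float        # tokens received before costs
    gas_cost: float          # gas, converted to the same unit as amount_out
    bridge_fee: float = 0.0  # non-zero only when the route crosses a bridge

    @property
    def net_out(self) -> float:
        """Proceeds after execution costs; higher means lower realized cost."""
        return self.amount_out - self.gas_cost - self.bridge_fee


def best_venue(quotes: Iterable[Quote]) -> Quote:
    """Pick the quote with the highest net proceeds."""
    quotes = list(quotes)
    if not quotes:
        raise ValueError("no quotes available")
    return max(quotes, key=lambda q: q.net_out)


if __name__ == "__main__":
    quotes = [
        Quote("dex_a", amount_out=1000.0, gas_cost=4.0),
        Quote("dex_b", amount_out=1003.0, gas_cost=9.0),
        Quote("bridged_dex_c", amount_out=1010.0, gas_cost=3.0, bridge_fee=12.0),
    ]
    print(best_venue(quotes).venue)
```

The venue with the best headline price is not necessarily chosen; once gas and bridge fees are subtracted, a nominally worse quote can win.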

Horizon

Future developments in Execution Algorithm Design will likely center on the integration of decentralized solvers and intent-based architectures.

The shift toward an intent-centric model means the algorithm will no longer define the “how” of execution, but rather the “what” of the desired outcome, delegating the tactical routing to specialized network participants. This evolution promises to abstract away the technical friction of decentralized trading.

- Intent-Based Routing: Allowing users to define outcomes while solvers handle the execution mechanics.

- Privacy-Preserving Execution: Utilizing zero-knowledge proofs to mask order intent from predatory observers.

- Hardware Acceleration: Deploying specialized nodes to reduce the latency of complex execution logic.
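The intent-based item in the list above can be made concrete with a small data model. This is a sketch under assumed names: `Intent`, `SolverBid`, and `select_bid` are hypothetical, and real intent protocols add signatures, settlement contracts, and solver bonding that are omitted here.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Intent:
    """What the user wants, with no routing instructions."""
    sell_token: str
    buy_token: str
    sell_amount: float
    min_buy_amount: float   # worst outcome the user will accept
    deadline: int           # unix timestamp after which the intent expires


@dataclass(frozen=True)
class SolverBid:
    """A solver's promise of how much buy_token it can deliver."""
    solver: str
    buy_amount: float


def select_bid(intent: Intent, bids: Iterable[SolverBid], now: int) -> Optional[SolverBid]:
    """Return the best bid that satisfies the intent, or None."""
    if now > intent.deadline:
        return None
    valid = [b for b in bids if b.buy_amount >= intent.min_buy_amount]
    return max(valid, key=lambda b: b.buy_amount, default=None)


if __name__ == "__main__":
    intent = Intent("ETH", "USDC", 1.0, min_buy_amount=3000.0, deadline=1_700_000_600)
    bids = [SolverBid("s1", 2990.0), SolverBid("s2", 3012.0), SolverBid("s3", 3005.0)]
    print(select_bid(intent, bids, now=1_700_000_000))
```

The user-facing object carries only the outcome and its constraints; how each solver sources the liquidity behind its bid is invisible to the selection logic.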

The systemic risk remains that these sophisticated algorithms could induce flash-crash scenarios if they all react to the same volatility trigger simultaneously. The next generation of designers must incorporate systemic stability constraints, ensuring that automated execution does not become a catalyst for uncontrollable market contagion.