Essence

Distributed System Scalability represents the capacity of a decentralized network to maintain performance, throughput, and settlement finality as transaction volume increases. In the context of crypto derivatives, this metric dictates the viability of high-frequency trading engines and complex margin management systems that require low-latency state updates across globally distributed nodes.

Distributed System Scalability defines the operational threshold where a decentralized protocol maintains transaction throughput and settlement integrity under increasing network load.

The structural integrity of any derivative venue relies on its ability to handle concurrent state changes without compromising the consensus mechanism. When a system fails to scale with demand, it experiences latency spikes that undermine real-time risk management, which can lead to liquidation failures during periods of extreme market volatility.

Origin

The genesis of this challenge lies in the Blockchain Trilemma, which posits that developers must trade off between security, decentralization, and scalability. Early decentralized exchange architectures attempted to replicate centralized order books directly on-chain, which quickly hit the practical limits of sequential transaction processing.

- Synchronous Consensus: Early protocols required every node to validate every state change, creating a rigid bottleneck that restricted throughput.

- State Bloat: Increasing transaction frequency led to rapid growth in the ledger size, raising hardware requirements to the point where fewer operators could run validators, which centralized the validator set.

- Settlement Latency: The time required for block confirmation introduced significant slippage risks for traders attempting to execute complex delta-neutral strategies.

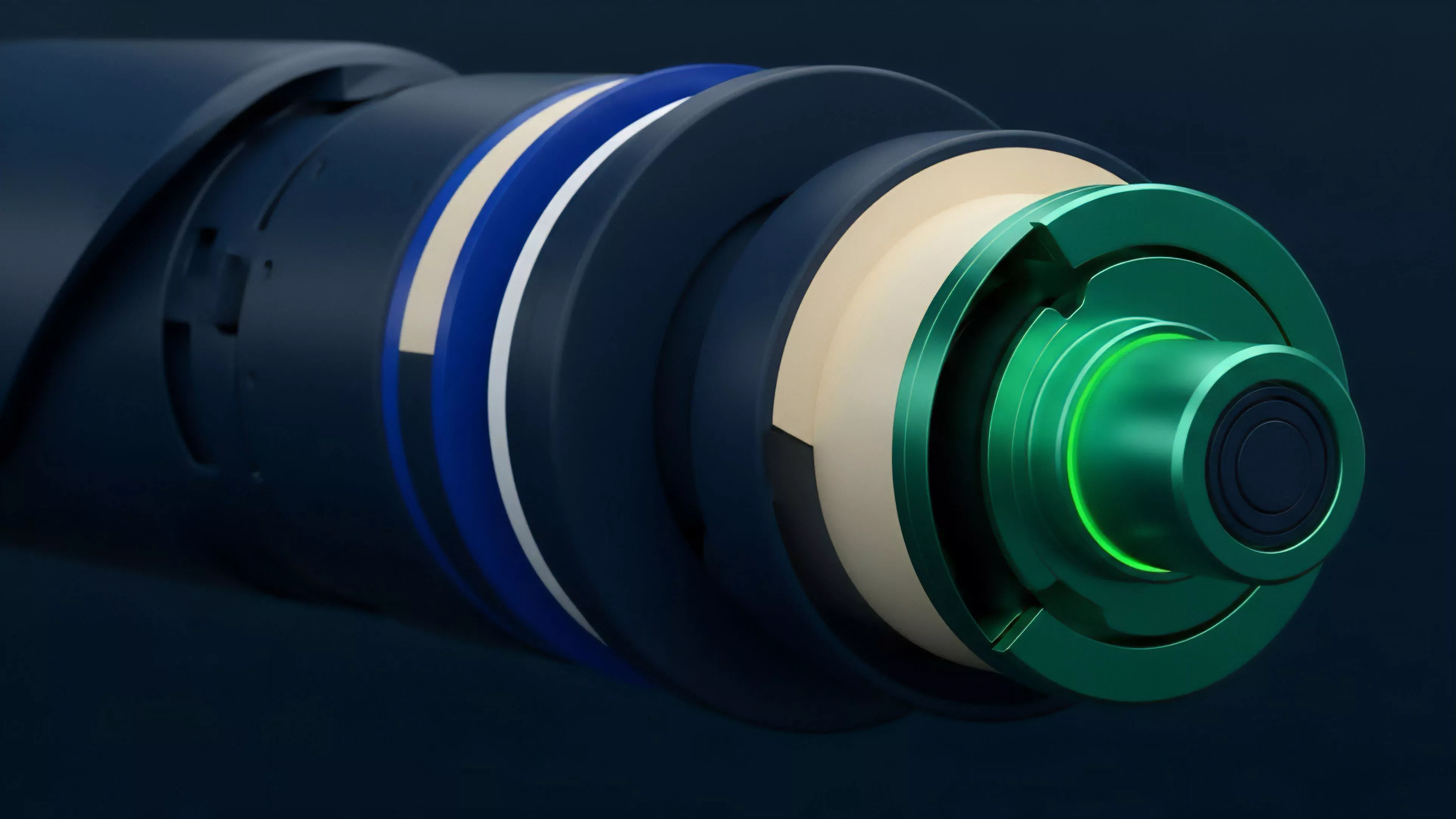

These limitations forced a shift toward modular architectures. By decoupling execution from consensus, developers sought to achieve Horizontal Scalability, allowing protocols to expand capacity by adding computational resources rather than increasing the burden on individual validator nodes.

Theory

Analyzing Distributed System Scalability requires a rigorous focus on the Asynchronous Byzantine Fault Tolerance properties of the underlying network. In a high-stakes derivative environment, the margin engine must operate as a deterministic state machine where the cost of state transitions remains predictable despite network congestion.
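To make the "deterministic state machine" idea concrete, here is a minimal sketch of a margin check that any node could recompute and reach the same answer. It is an illustration under stated assumptions, not any particular protocol's engine: the `Account` fields, the `MAINTENANCE_BPS` value, and the use of integer ticks are all hypothetical choices. Integer arithmetic is used because floating-point results can differ across platforms, which would break determinism.

```python
from dataclasses import dataclass

# Hypothetical maintenance margin: 5% of notional, expressed in basis points.
MAINTENANCE_BPS = 500


@dataclass(frozen=True)
class Account:
    collateral: int   # posted collateral, in smallest currency units
    position: int     # signed contract count (positive = long)
    entry_price: int  # average entry price, in integer ticks


def equity(acct: Account, mark_price: int) -> int:
    """Collateral plus unrealized profit or loss at the given mark price."""
    return acct.collateral + acct.position * (mark_price - acct.entry_price)


def is_liquidatable(acct: Account, mark_price: int) -> bool:
    """True when equity falls below the maintenance requirement."""
    notional = abs(acct.position) * mark_price
    required = notional * MAINTENANCE_BPS // 10_000
    return equity(acct, mark_price) < required


# A long position at roughly 10x leverage (illustrative numbers only).
acct = Account(collateral=100, position=10, entry_price=100)
at_95 = is_liquidatable(acct, 95)
at_94 = is_liquidatable(acct, 94)
```

Because the functions depend only on their inputs, every validator that replays the same price updates in the same order arrives at the same liquidation decision.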

Efficient scaling models rely on off-chain execution environments that settle final state transitions to a secure base layer to minimize latency and gas costs.

Mathematically, system performance is bounded by the Propagation Delay of the network and the Computational Overhead of the consensus algorithm. When modeling these systems, we look at the following performance indicators:

| Metric | Description |
| --- | --- |
| TPS Capacity | Transactions processed per second |
| Finality Time | Duration until state becomes irreversible |
| State Growth | Rate of ledger expansion per epoch |

The interaction between these variables creates a feedback loop. As transaction volume rises, the Propagation Delay increases, which can lead to higher rates of chain forks or orphaned blocks. This is where the model becomes elegant, and dangerous if ignored.

If the network cannot handle the spike, the Liquidation Engine might fail to execute, leaving the protocol exposed to systemic insolvency.
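The link between propagation delay and fork rate can be sketched with a simple model. The assumptions here are mine, not the article's: block production is treated as a Poisson process with a fixed mean interval (a common approximation for proof-of-work style chains), and delay is modeled as a base latency plus transfer time. Protocols with deterministic BFT finality do not fork this way, so treat this as an illustration of the feedback loop rather than a general law.

```python
import math


def propagation_delay(block_bytes: int,
                      bandwidth_bytes_per_s: float,
                      base_latency_s: float) -> float:
    """Assumed delay model: fixed network latency plus transfer time."""
    return base_latency_s + block_bytes / bandwidth_bytes_per_s


def stale_block_probability(delay_s: float, block_interval_s: float) -> float:
    """Chance that a competing block appears while one is still propagating,
    assuming Poisson block arrivals with mean interval block_interval_s."""
    return 1.0 - math.exp(-delay_s / block_interval_s)


# Larger blocks (more transactions) take longer to propagate,
# which raises the stale-block probability.
for size in (100_000, 1_000_000, 10_000_000):
    d = propagation_delay(size, bandwidth_bytes_per_s=1_000_000.0,
                          base_latency_s=0.5)
    print(size, round(d, 2), round(stale_block_probability(d, 10.0), 4))
```

Under this model the probability rises with block size, which is the mechanism behind the feedback loop: more volume means larger blocks, longer propagation, and more wasted work.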

Approach

Current strategies for scaling decentralized derivative venues focus on Rollup Technology and Sharding. By moving the heavy lifting of order matching and margin calculation to Layer 2 environments, protocols can achieve throughput levels comparable to centralized exchanges while maintaining cryptographic proof of state integrity.

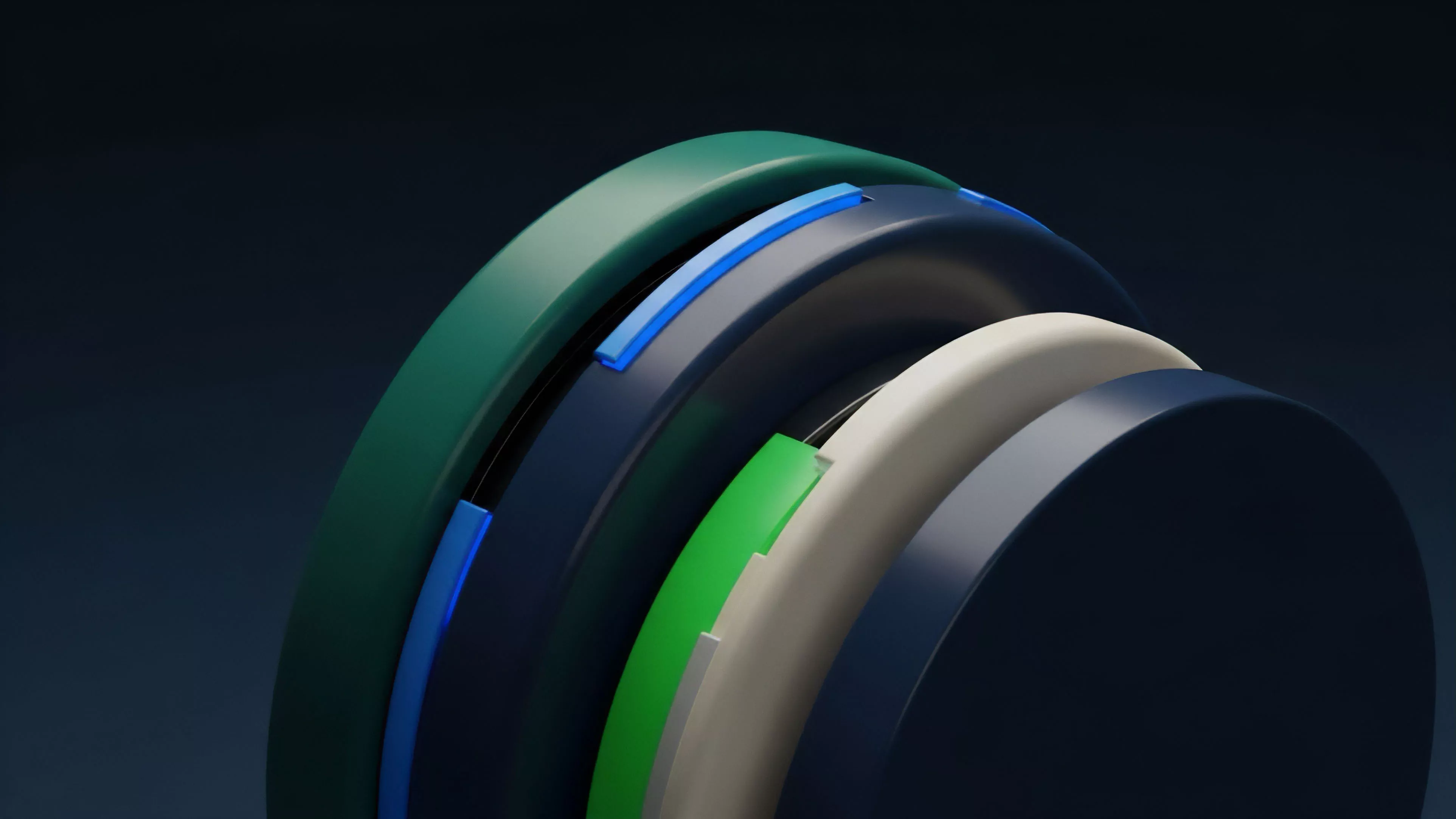

- Validity Proofs: Zero-knowledge constructions allow the network to verify the correctness of thousands of trades without re-executing each individual operation.

- Optimistic Execution: Systems assume validity unless challenged, reducing the immediate computational burden on validators during normal operation.

- Shared Sequencing: Centralized or decentralized sequencers order transactions before submitting them to the base layer, which can mitigate front-running when the ordering rules are transparent and enforced.
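The optimistic pattern in the list above can be sketched in a few lines: a sequencer posts a batch with a claimed post-state commitment, and any verifier can re-execute the batch and challenge if the result differs. This is a deliberately simplified sketch. The balance-transfer state model and the function names are hypothetical, and a SHA-256 hash of the serialized state stands in for the Merkle roots and interactive fraud proofs that production rollups typically use.

```python
import hashlib
import json

State = dict[str, int]
Transfer = tuple[str, str, int]  # (sender, receiver, amount)


def state_root(state: State) -> str:
    """Commitment to the state: hash of a canonical (key-sorted) encoding."""
    encoded = json.dumps(state, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def execute(state: State, batch: list[Transfer]) -> State:
    """Apply transfers in order, skipping any the sender cannot afford."""
    new_state = dict(state)
    for sender, receiver, amount in batch:
        if new_state.get(sender, 0) >= amount:
            new_state[sender] = new_state.get(sender, 0) - amount
            new_state[receiver] = new_state.get(receiver, 0) + amount
    return new_state


def challenge_succeeds(pre_state: State, batch: list[Transfer],
                       claimed_root: str) -> bool:
    """A challenge wins when re-execution disagrees with the claimed root."""
    return state_root(execute(pre_state, batch)) != claimed_root


pre = {"alice": 100, "bob": 0}
batch = [("alice", "bob", 30)]
honest_root = state_root(execute(pre, batch))
# A dishonest claim: bob is credited but alice is never debited.
fraudulent_root = state_root({"alice": 100, "bob": 30})
```

In normal operation nobody re-executes on the base layer; the cost is paid only when a claim is disputed, which is where the reduced validator burden comes from.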

Managing liquidity across these fragmented execution environments remains a significant hurdle. Market makers must now deploy capital across multiple bridges and L2 chains, which introduces Bridge Risk and complicates the unified view of a trader’s margin health. The complexity of managing these fragmented states is the true cost of our current scaling solutions.

Evolution

The trajectory of Distributed System Scalability has moved from monolithic, single-chain designs to sophisticated, multi-layered architectures. Initial efforts focused on simple asset transfers, whereas modern implementations support complex, path-dependent derivatives that require high-fidelity price feeds and frequent margin calls.

The evolution of scaling has transitioned from increasing base-layer block sizes to implementing complex off-chain computation and cryptographic state verification.

We have seen the rise of Application-Specific Blockchains, where a protocol optimizes its consensus mechanism entirely for derivative trading. This approach minimizes the noise from unrelated network traffic, allowing for dedicated throughput that stabilizes the Liquidation Thresholds of the platform.

Horizon

The future of Distributed System Scalability lies in Asynchronous Execution and Parallel Processing. Future protocols will move away from sequential transaction ordering, allowing for concurrent state updates that dramatically increase the ceiling for market activity. This will enable the creation of decentralized, high-frequency market-making strategies that were previously impossible.

- Concurrent State Access: Future architectures will allow multiple trades to be processed simultaneously if they do not share the same state dependencies.

- Hardware-Accelerated Consensus: Integration with specialized hardware will further reduce the latency of cryptographic verification.

- Unified Liquidity Layers: Protocols will develop native interoperability to allow margin to be shared across disparate execution environments.
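Concurrent state access, the first item above, can be illustrated with a small scheduler that groups transactions into batches whose members touch disjoint state keys. This is a sketch under assumptions: each transaction declares the keys it touches up front, and the key names are hypothetical. Each transaction is placed in the batch immediately after the last batch it conflicts with, so conflicting transactions keep their original relative order.

```python
def schedule(txs: list[tuple[str, set[str]]]) -> list[list[str]]:
    """Group transactions into batches that can each run in parallel.

    txs is an ordered list of (tx_id, keys_touched). Transactions within a
    batch share no keys; batches must be executed one after another.
    """
    batches: list[list[str]] = []
    batch_keys: list[set[str]] = []
    for tx_id, keys in txs:
        # Find the latest batch that touches any of this transaction's keys.
        target = 0
        for i, used in enumerate(batch_keys):
            if used & keys:
                target = i + 1
        # Open a new batch when the transaction must run after all others.
        if target == len(batches):
            batches.append([])
            batch_keys.append(set())
        batches[target].append(tx_id)
        batch_keys[target] |= keys
    return batches


txs = [
    ("t1", {"alice", "btc-perp"}),
    ("t2", {"bob", "eth-perp"}),
    ("t3", {"alice", "eth-perp"}),  # conflicts with both t1 and t2
    ("t4", {"carol", "sol-perp"}),
]
plan = schedule(txs)
```

Here `t1`, `t2`, and `t4` land in the first batch and `t3` waits for the second. Note that how state is keyed matters a great deal: if every trade on a market touches one shared order-book key, the scheduler degenerates to sequential execution for that market.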

This path leads toward a financial system where the distinction between centralized and decentralized performance vanishes. However, the reliance on these complex architectures introduces new, opaque vectors for systemic risk. The ultimate question is whether we can maintain this speed without sacrificing the fundamental auditability that makes these systems valuable in the first place.