Essence

Decentralized Sequencer Networks represent the architectural transition from monolithic, single-operator transaction ordering to ordering performed by a distributed set of participants. These systems decouple transaction inclusion and ordering from state execution, replacing single-operator bottlenecks with consensus-driven sequencing layers. By distributing the authority to order transactions, these networks mitigate the risks of censorship, front-running, and centralized control over transaction flow.

Decentralized Sequencer Networks shift transaction ordering authority from single entities to distributed consensus mechanisms to ensure censorship resistance and fair order execution.

The primary function involves the creation of a canonical transaction stream that downstream execution layers consume. This mechanism acts as the heartbeat of a modular blockchain stack, where the integrity of the sequence dictates the finality of the state transition. Participants within these networks, often termed sequencers or validators, compete or cooperate to determine the order of pending transactions, fundamentally altering how value accrues within the block space market.
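To make the separation of ordering from execution concrete, here is a minimal sketch in which an execution function consumes an already-ordered stream. The `Tx` type, the `execute` function, and the account names are hypothetical, invented for illustration, and the balance model is a deliberate simplification of a real execution layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    recipient: str
    amount: int


def execute(stream, balances):
    """Apply an already-ordered stream of transfers to a copy of the state.

    The executor never reorders: it trusts the sequence it is handed and
    skips any transfer the sender cannot afford at that point in the stream.
    """
    state = dict(balances)
    for tx in stream:
        if state.get(tx.sender, 0) >= tx.amount:
            state[tx.sender] -= tx.amount
            state[tx.recipient] = state.get(tx.recipient, 0) + tx.amount
    return state


genesis = {"alice": 10, "bob": 0, "carol": 0}
a = Tx("alice", "bob", 10)
b = Tx("bob", "carol", 10)

# Same two transactions, two different canonical orders.
state_ab = execute([a, b], genesis)  # bob is funded before he pays carol
state_ba = execute([b, a], genesis)  # bob's payment is skipped for lack of funds
```

The two runs end in different states even though they contain the same transactions, which is why whoever fixes the order effectively fixes the outcome.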

Origin

The necessity for Decentralized Sequencer Networks emerged as a direct response to the inherent limitations of early rollups.

Initial implementations relied upon centralized sequencers, creating single points of failure and trust requirements that contradicted the core tenets of decentralized finance. Developers identified that reliance on a single sequencer granted that entity unilateral control over the order of transactions, allowing for the extraction of maximal extractable value at the expense of end-users.

- Transaction Censorship risks surfaced as centralized operators gained the ability to selectively include or exclude specific user transactions based on private incentives.

- MEV Extraction dynamics became increasingly predatory as centralized sequencers optimized for personal gain rather than network utility.

- Systemic Fragility became evident when single sequencer outages resulted in a total halt of state updates across entire layer-two ecosystems.

This realization drove the evolution toward shared and decentralized sequencing models. The shift mirrors historical progressions in financial markets where order matching transitioned from centralized broker-dealers to more transparent, rule-based electronic order books. Researchers recognized that by leveraging consensus algorithms, they could enforce fairness and liveness guarantees that no single entity could subvert.

Theory

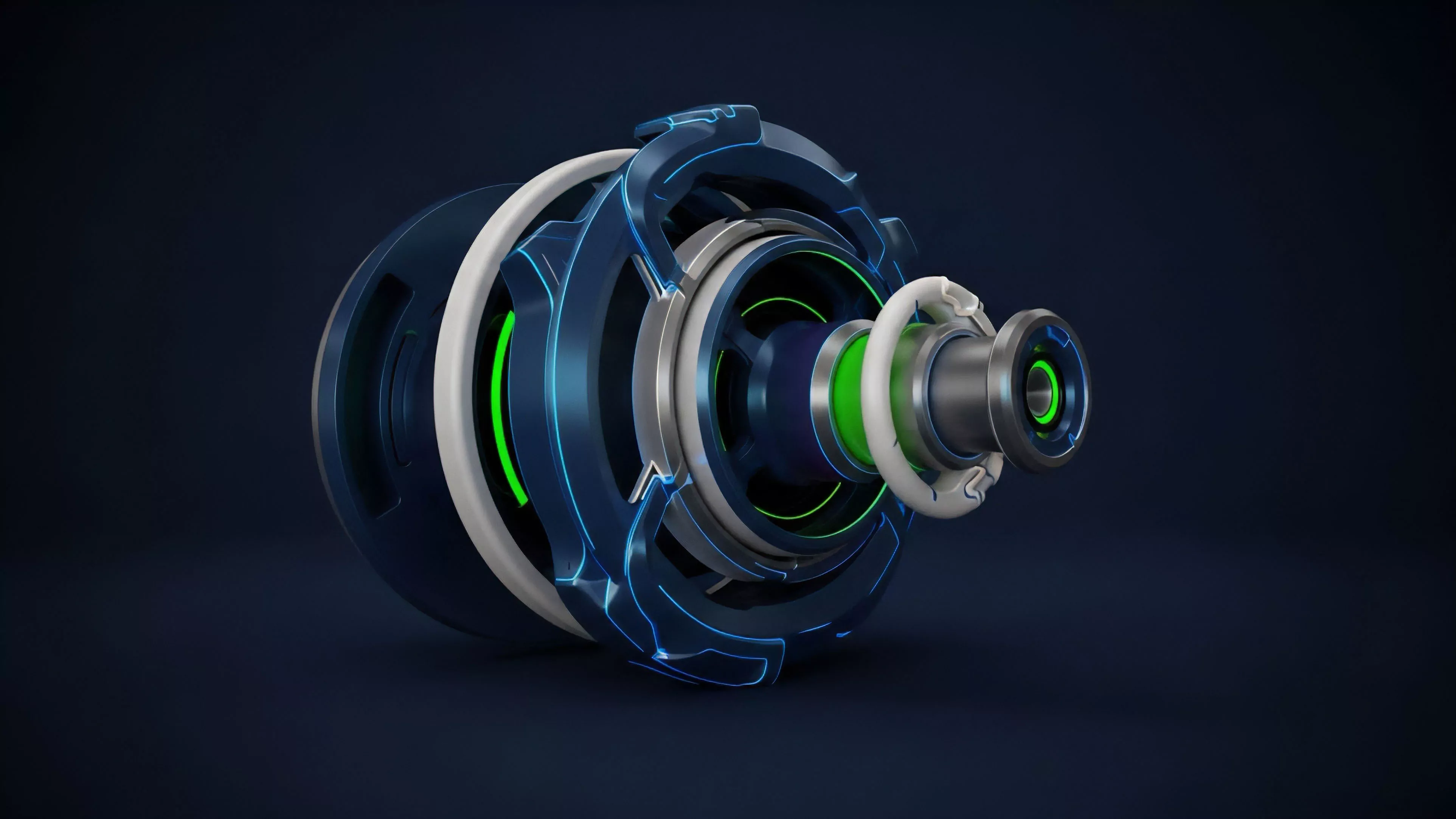

The theoretical framework governing Decentralized Sequencer Networks rests upon the application of threshold cryptography and Byzantine Fault Tolerant consensus.

These protocols require a set of nodes to agree on a sequence of transactions before submitting them to an execution layer. The process involves cryptographic commitment schemes in which sequencers sign off on specific transaction batches, so that any later change to the agreed order is detectable.
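The sign-off step can be sketched as a quorum check over a batch commitment. This is an illustration under loud assumptions: HMAC with per-node secrets stands in for real signatures (an actual deployment would use a threshold or aggregate signature scheme, which the Python standard library does not provide), and the function names, node names, and the rule of tolerating `f = (n - 1) // 3` faulty nodes are chosen for the example rather than taken from any specific protocol.

```python
import hashlib
import hmac


def batch_commitment(txs: list[bytes]) -> bytes:
    """Hash the batch in order; length prefixes keep tx boundaries unambiguous."""
    h = hashlib.sha256()
    for tx in txs:
        h.update(len(tx).to_bytes(4, "big"))
        h.update(tx)
    return h.digest()


def sign(key: bytes, commitment: bytes) -> bytes:
    # ASSUMPTION: HMAC is a stand-in for a real digital signature.
    return hmac.new(key, commitment, hashlib.sha256).digest()


def quorum_size(n: int) -> int:
    """Signatures required when up to f = (n - 1) // 3 nodes may be faulty."""
    f = (n - 1) // 3
    return n - f


def has_quorum(commitment: bytes, signatures: dict, keys: dict) -> bool:
    """Count valid sign-offs from known nodes and compare against the quorum."""
    valid = sum(
        1
        for node, sig in signatures.items()
        if node in keys and hmac.compare_digest(sig, sign(keys[node], commitment))
    )
    return valid >= quorum_size(len(keys))


keys = {f"seq{i}": f"secret-{i}".encode() for i in range(4)}
batch = [b"tx-a", b"tx-b", b"tx-c"]
commitment = batch_commitment(batch)

# Three of four sequencers sign the agreed order.
sigs = {node: sign(keys[node], commitment) for node in ["seq0", "seq1", "seq2"]}
accepted = has_quorum(commitment, sigs, keys)

# The same signatures do not cover a reordered batch.
reordered = batch_commitment([b"tx-b", b"tx-a", b"tx-c"])
reordered_accepted = has_quorum(reordered, sigs, keys)
```

Because the commitment depends on transaction order, signatures gathered for one ordering cannot be reused for another, which is the property the batch sign-off is meant to provide.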

Distributed sequencing protocols leverage threshold cryptography to ensure that transaction ordering reflects consensus rather than the unilateral intent of a single participant.

The economic structure relies on incentive compatibility, where the cost of attacking the sequence must exceed the potential gain from transaction reordering. This environment is adversarial by design, forcing participants to adhere to protocols through economic stakes and slashing conditions. The interaction between these participants is modeled through game theory, where the payoff matrix favors honest inclusion over malicious manipulation.
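The incentive-compatibility condition can be written as a simple inequality: the expected penalty for manipulating the order must exceed the gain from doing so. The sketch below assumes a single-shot model with a fixed probability that misbehavior is detected and slashed. The function and parameter names are hypothetical, and real protocols face repeated games, collusion, and uncertain gains that this ignores.

```python
def attack_is_unprofitable(stake_at_risk: float,
                           detection_probability: float,
                           reordering_gain: float) -> bool:
    """True when the expected slashing loss exceeds the gain from reordering."""
    if not 0 < detection_probability <= 1:
        raise ValueError("detection_probability must be in (0, 1]")
    expected_penalty = stake_at_risk * detection_probability
    return expected_penalty > reordering_gain


def minimum_stake(reordering_gain: float, detection_probability: float) -> float:
    """Break-even stake: any stake strictly above this deters the attack."""
    if not 0 < detection_probability <= 1:
        raise ValueError("detection_probability must be in (0, 1]")
    return reordering_gain / detection_probability


# With reliable detection, a stake of 100 deters a reordering worth 50.
deterred = attack_is_unprofitable(100, 0.9, 50)

# With weak detection, the same stake no longer deters it.
not_deterred = attack_is_unprofitable(100, 0.25, 50)

# Stake needed to break even when half of all attacks are caught.
break_even = minimum_stake(50, 0.5)
```

In this simplified model the required stake scales inversely with the detection probability, so weaker monitoring has to be compensated by larger bonds.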

| Mechanism | Function |
| --- | --- |
| Threshold Signatures | Ensure transaction batch integrity and validity |
| Staking Requirements | Align participant incentives with network security |
| Fair Ordering Policies | Prevent front-running and latency-based extraction |

The physical constraints on these protocols demand low latency to maintain high throughput. As I observe these systems, the tension between absolute decentralization and the limits of network propagation speed creates a unique optimization challenge. It is a balancing act: a delicate calibration of consensus overhead against the need for rapid settlement.

Approach

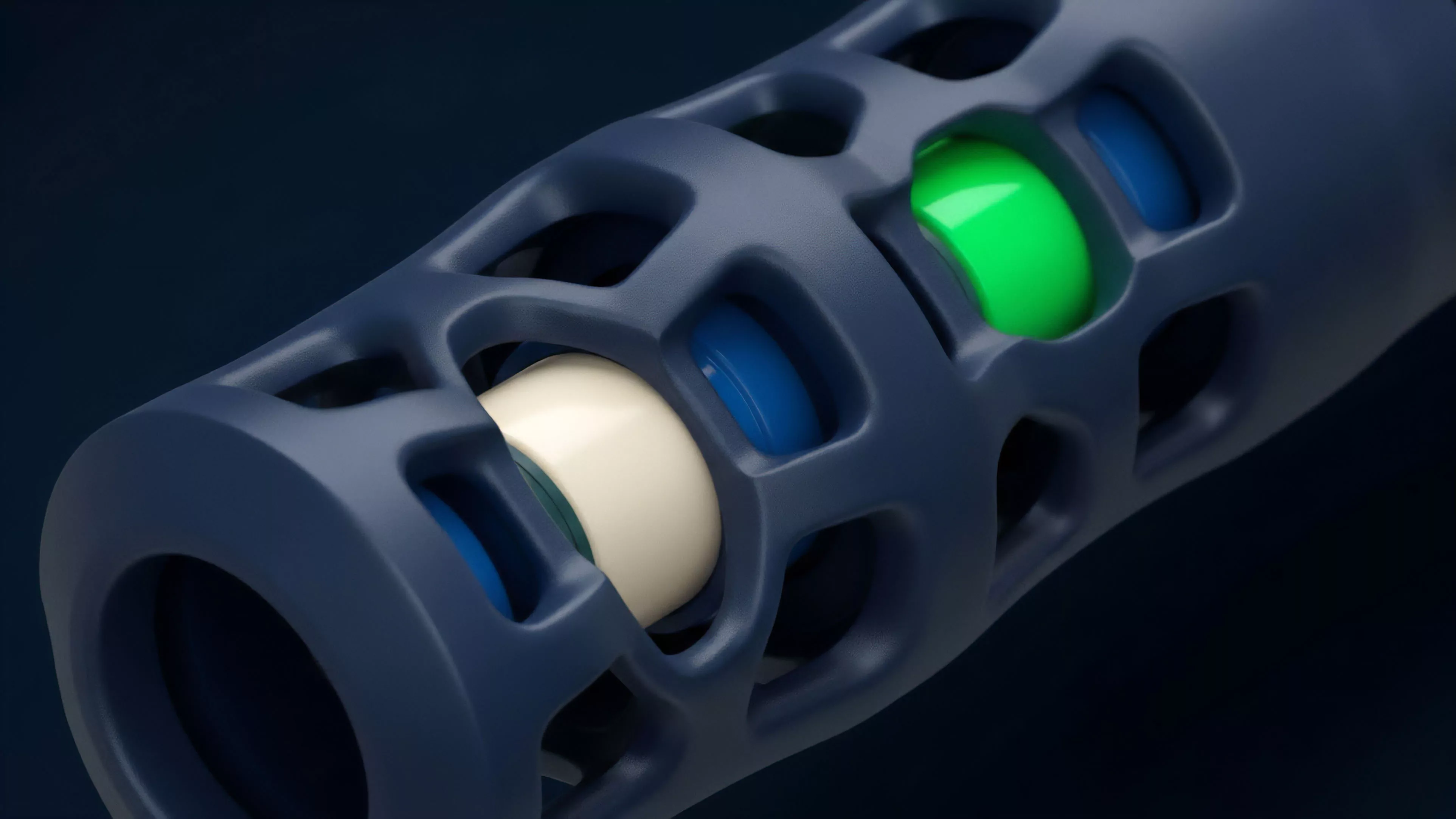

Current implementation strategies focus on shared sequencing layers that serve multiple rollups simultaneously.

This approach allows for atomic cross-rollup transactions, significantly improving capital efficiency and user experience. By aggregating transaction flow from diverse sources, these networks create a more liquid and robust market for block space.

- Shared Sequencing enables multiple execution environments to utilize a single ordering layer, fostering greater interoperability across disparate chains.

- Auction-Based Ordering allows market participants to bid for inclusion, creating a transparent price discovery mechanism for transaction priority.

- Cryptographic Proofs verify that the sequencing process adhered to the defined rules, allowing for trustless verification of transaction order.
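A toy model can combine the first two strategies above: a shared sequencer ranks bundles by bid and includes each bundle whole or not at all, which is what gives cross-rollup bundles their atomicity at the ordering layer. The `Tx` and `Bundle` types, the greedy selection rule, and the slot limit are assumptions made for the example, not a description of any deployed auction design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    rollup: str
    payload: str


@dataclass(frozen=True)
class Bundle:
    bid: int
    txs: tuple  # transactions that must be sequenced together or not at all


def sequence(bundles, slots):
    """Greedy ordering: highest bid first, ties broken by arrival position.

    A bundle is included only if all of its transactions fit in the
    remaining slots, so a cross-rollup bundle is never split.
    """
    ordered = []
    ranked = sorted(enumerate(bundles), key=lambda item: (-item[1].bid, item[0]))
    for _, bundle in ranked:
        if len(ordered) + len(bundle.txs) <= slots:
            ordered.extend(bundle.txs)
    return ordered


def per_rollup(ordered):
    """Project the shared sequence into the stream each rollup executes."""
    streams = {}
    for tx in ordered:
        streams.setdefault(tx.rollup, []).append(tx.payload)
    return streams


bundles = [
    Bundle(5, (Tx("A", "swap"),)),
    Bundle(9, (Tx("A", "lock"), Tx("B", "mint"))),      # cross-rollup bundle
    Bundle(7, (Tx("B", "transfer"), Tx("A", "claim"))),  # does not fit, dropped whole
]

ordered = sequence(bundles, slots=3)
streams = per_rollup(ordered)
```

Here the highest bidder's two-rollup bundle lands intact, the second bundle is excluded entirely rather than half-included, and the remaining slot goes to the lowest bid. A production design would also need proofs that the sequencer followed this rule, which the sketch omits.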

Market participants now view transaction ordering as a distinct asset class. The ability to influence this order, or to provide the infrastructure for it, represents a significant source of revenue and control. This evolution forces us to rethink traditional liquidity provision, as the sequence itself now dictates the execution price of complex derivative strategies.

Evolution

The trajectory of these networks moved from theoretical proposals in academic papers to active deployments within production environments.

Early designs prioritized simple decentralization, whereas current iterations focus on performance and cross-chain composability. This progression reflects a clear maturation in which developers increasingly integrate advanced cryptographic primitives to address the trade-off between latency and security.

The evolution of sequencing architecture reflects a shift from basic decentralization to high-performance systems capable of atomic cross-chain transaction settlement.

The industry has moved beyond merely replacing centralized operators. It now actively designs protocols that facilitate complex financial interactions between distinct blockchain instances. We are witnessing the birth of a unified transaction fabric, where the boundaries between individual rollups become increasingly porous.

This is where the pricing model becomes truly elegant, and dangerous if ignored.

Horizon

Future developments will center on the integration of artificial intelligence for predictive order flow management and the expansion of privacy-preserving sequencing techniques. As these networks scale, the focus will shift toward formal verification of sequencing rules and the hardening of consensus mechanisms against sophisticated MEV-based attacks. The goal remains the creation of a global, permissionless ordering layer that operates with the efficiency of centralized exchanges but the resilience of distributed ledgers.

| Development Phase | Primary Objective |
| --- | --- |
| Privacy Integration | Shielding transaction details from front-running bots |
| AI Optimization | Dynamic latency adjustment and congestion management |
| Cross-Chain Interoperability | Enabling synchronous atomic execution across networks |

The systemic implications of these advancements are profound. We are building the infrastructure for a truly global financial operating system where the sequencer is the neutral arbiter of all value exchange. The success of these networks will determine whether decentralized markets can eventually challenge the dominance of legacy financial clearinghouses. What unanswered paradoxes remain when we successfully remove all human intermediaries from the transaction ordering process?