Essence

Decentralized Margin Engine Resilience Testing (DMERT) is the adversarial process of subjecting an options protocol’s risk infrastructure to extreme, non-linear market conditions to assess whether it stays solvent. It goes beyond unit testing of liquidation logic and examines the load-bearing capacity of the entire capital structure under multiple, correlated stress vectors. The evaluation is not an audit of code correctness but an assessment of how robust the financial engineering is under duress.

The primary objective is to quantify the system’s Maximum Loss Tolerance: the largest single-event loss the protocol can absorb before the insurance fund is depleted and the system is forced into a recapitalization event or, worse, a protocol-wide haircut on positions. Quantifying it requires simulating market movements that exceed historical observations, specifically targeting the fat tails of the return distribution.

Decentralized Margin Engine Resilience Testing measures a protocol’s systemic solvency under extreme, correlated market stress events.

The concept hinges on the fact that margin systems in decentralized finance (DeFi) are both transparent and globally accessible, so a single coordinated attack or an unexpected oracle failure can trigger a cascade across all open positions at once. The speed and efficiency of the liquidation engine, the system’s automatic circuit breaker, is therefore paramount. We measure the latency between a position breaching its maintenance margin and the execution of the liquidation order, and treat this time delta as a direct measure of systemic risk exposure: the longer the delay, the further the price can move against collateral that has not yet been sold.

- Stress Vector Correlation: The simultaneous application of adverse price movement, oracle lag, and gas spike, as these three factors often compound during market panic.

- Liquidation Cascade Modeling: Analyzing the second-order effects where the forced sale of collateral from one liquidation event triggers a price move that pushes other positions below their maintenance margin.

- Insurance Fund Load-Bearing: Calculating the necessary size and composition of the shared risk pool required to absorb the largest plausible loss without triggering protocol insolvency.
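The cascade and insurance-fund items above can be illustrated with a toy model. This is a minimal sketch, not a production risk engine: the `Position` fields, the linear price-impact rule (selling `q` units moves the price down by `q / depth_units`), and all numbers are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float   # units of the collateral asset posted
    debt: float               # liability in quote currency
    maintenance_ratio: float  # minimum collateral value / debt


def is_underwater(p, price):
    return p.collateral_units * price < p.maintenance_ratio * p.debt


def run_cascade(positions, price, depth_units, insurance_fund):
    """Liquidate until no position is below maintenance margin.

    Assumed impact model: selling q units multiplies the price by
    (1 - q / depth_units). Collateral is sold at the post-impact price.
    """
    live = list(positions)
    bad_debt = 0.0
    rounds = 0
    while True:
        underwater = [p for p in live if is_underwater(p, price)]
        if not underwater:
            break
        live = [p for p in live if not is_underwater(p, price)]
        rounds += 1
        for p in underwater:
            price *= max(0.0, 1.0 - p.collateral_units / depth_units)
            bad_debt += max(0.0, p.debt - p.collateral_units * price)
    fund_after = insurance_fund - bad_debt
    return {
        "final_price": price,
        "rounds": rounds,
        "liquidated": len(positions) - len(live),
        "bad_debt": bad_debt,
        "fund_after": fund_after,
        "solvent": fund_after >= 0.0,
    }


# Hypothetical book: only the first position is underwater at the shocked
# price of 85, but its forced sale drags the second one under as well.
book = [
    Position(10.0, 800.0, 1.1),
    Position(10.0, 700.0, 1.1),
    Position(10.0, 400.0, 1.1),
]
result = run_cascade(book, price=85.0, depth_units=100.0, insurance_fund=40.0)
print(result)
```

In this example the initial shock alone liquidates one position, while the second liquidation is purely a second-order effect of the first sale. Rerunning with a larger `insurance_fund` or deeper `depth_units` gives a rough feel for how fund size and market depth trade off.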

Origin

The necessity for dedicated resilience testing in decentralized options systems arose directly from the systemic failures observed in centralized exchanges (CeFi) during periods of acute volatility, notably the crypto market crash of March 2020. These events revealed that margin systems relying on simple, time-delayed liquidation mechanisms and insufficient insurance funds were structurally unsound. When price gaps occurred, with the asset price moving faster than the liquidator could execute, the exchange’s internal risk engine was often left with significant bad debt.

In the move to DeFi, the transparency of the blockchain architecture presented a new set of constraints and opportunities. The opportunity was verifiable collateral and algorithmic enforcement. The constraint was the dependence on external data feeds (oracles) and the non-deterministic nature of transaction inclusion and cost (gas fees).

The traditional financial model of a central counterparty absorbing risk was replaced by a shared pool, typically an insurance fund or a staking mechanism. The foundational principle for DMERT was derived from the Basel Accords’ stress testing mandates for banks, but adapted for the high-velocity, block-by-block settlement environment of programmable money. We had to account for Protocol Physics: a smart contract cannot liquidate a position on its own, and no rational liquidator will submit the transaction when its cost exceeds the profit of the liquidation, a scenario that arises precisely when liquidation is most needed.

This led to the recognition that the economic model of the liquidator (the incentive) is as important as the mathematical model of the margin calculation. The first attempts at resilience testing focused on static scenarios: “What if BTC drops 30%?” The evolution quickly moved to dynamic, path-dependent stress tests: “What if BTC drops 30% over three blocks, while ETH drops 40%, and the oracle updates lag by 60 seconds?” This shift marked the true birth of Decentralized Margin Engine Resilience Testing as a specialized discipline.

Theory

The theoretical framework for resilience testing is rooted in the rigorous application of quantitative finance, specifically the study of high-dimensional risk surfaces and the statistical properties of extreme events. The core challenge is modeling the multi-asset, cross-collateralized environment where the margin requirement is a function of not just price, but the entire portfolio’s sensitivity to underlying market factors (the Greeks).

We cannot simply use the Black-Scholes framework, as it assumes continuous trading and log-normally distributed prices, neither of which holds in the discrete, fat-tailed, and often illiquid world of decentralized derivatives. The true theoretical weight is placed on simulating the impact of second-order Greeks, particularly Vomma (the sensitivity of Vega to changes in volatility) and Vanna (the sensitivity of Vega to changes in the underlying price, or equivalently of Delta to changes in volatility). When volatility spikes, Vomma shifts the portfolio’s Vega exposure and Vanna shifts its Delta, so the amount of re-hedging required can be massive and non-linear.
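To make the second-order Greeks concrete, the sketch below evaluates Vega, Vanna, and Vomma from the standard Black-Scholes closed forms for a European option with no dividends. Black-Scholes is used here only as a familiar reference point, despite the limitations noted above; the function name `bs_vol_greeks` and the sample inputs are assumptions for illustration.

```python
import math


def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_vol_greeks(spot, strike, vol, t, rate=0.0):
    """Return (vega, vanna, vomma) under Black-Scholes, no dividends.

    vega  = dV/dsigma        = S * phi(d1) * sqrt(T)
    vanna = d(vega)/dS       = -phi(d1) * d2 / sigma
    vomma = d(vega)/dsigma   = vega * d1 * d2 / sigma
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    vega = spot * _norm_pdf(d1) * sqrt_t
    vanna = -_norm_pdf(d1) * d2 / vol
    vomma = vega * d1 * d2 / vol
    return vega, vanna, vomma


# Hypothetical option: spot 90, strike 100, three months to expiry.
calm = bs_vol_greeks(90.0, 100.0, 0.60, 0.25)
panic = bs_vol_greeks(90.0, 100.0, 1.20, 0.25)
print("vega, vanna, vomma at 60% vol: ", calm)
print("vega, vanna, vomma at 120% vol:", panic)
```

Comparing the two outputs shows how the sensitivities themselves move when implied volatility doubles, which is the effect a Delta-only margin model does not see.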

The margin engine must hold enough collateral to cover the hedging cost, not just the current loss. This is where many simple systems fail; they only account for first-order Delta risk. The theoretical stress test therefore becomes a high-frequency Monte Carlo simulation where the input variables are not only prices but also the entire term structure of volatility and the instantaneous gas price of the network.

We model the system’s reaction function as a series of non-linear differential equations where the primary variables are the change in asset price, the change in implied volatility, and the change in transaction latency. A successful margin engine design respects the principle of Risk Absorption by Design, where the protocol’s architecture (its choice of collateral types, its liquidation waterfall, and its oracle configuration) is deliberately engineered to dampen systemic shock rather than simply react to it.

The system’s true resilience is measured by its ability to maintain positive net equity across all accounts and the insurance fund, even when the simulated market path combines multiple standard deviations of adverse movement, a complete failure of the primary oracle, and a concurrent 10x spike in gas costs. Together these amount to a full-spectrum denial-of-service on the liquidator network.
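A heavily simplified version of such a simulation is sketched below. It is a one-period toy, not the differential-equation model described above: returns are drawn from a Student-t distribution to get fat tails, a log-normal gas multiplier decides whether liquidators stand down, and the protocol is treated as one aggregate long exposure. Every parameter name and default value is an assumption.

```python
import math
import random


def student_t(rng, df):
    """Draw one Student-t variate as Z / sqrt(chi2 / df)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
    return z / math.sqrt(chi2 / df)


def depletion_probability(fund, n_paths=20_000, df=3, vol=0.05,
                          notional=1_000_000.0, margin=0.10,
                          gas_stand_down=10.0, seed=7):
    """Estimate P(loss beyond posted margin exceeds the insurance fund)."""
    rng = random.Random(seed)
    # Rescale so the t-distributed return has standard deviation `vol` (needs df > 2).
    scale = vol / math.sqrt(df / (df - 2.0))
    depleted = 0
    for _ in range(n_paths):
        ret = scale * student_t(rng, df)
        gas_mult = rng.lognormvariate(0.0, 1.0)
        second_move = scale * student_t(rng, df)
        if gas_mult >= gas_stand_down:
            # Assumption: liquidators are priced out, so the book also
            # absorbs the adverse part of a second move before it is closed.
            ret += min(0.0, second_move)
        ret = max(-1.0, ret)
        shortfall = max(0.0, -ret - margin) * notional
        if shortfall > fund:
            depleted += 1
    return depleted / n_paths


p_small_fund = depletion_probability(10_000.0)
p_large_fund = depletion_probability(200_000.0)
print(p_small_fund, p_large_fund)
```

Because the seed is fixed, both calls see the same simulated paths, so the difference between the two estimates isolates the effect of fund size. A fuller model would replace the single return draw with a multi-step path and a volatility term structure.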

This theoretical lens compels us to look beyond the nominal collateral ratio and analyze the liquidity profile of the collateral itself, understanding that a highly-leveraged system collateralized by a thinly-traded asset is structurally weak, regardless of the initial over-collateralization ratio. The theoretical model must account for the liquidity shock: the reduction in available market depth that occurs precisely when the liquidation engine attempts to sell collateral, further exacerbating the price decline.

The true test of a margin engine is its capacity to cover the hedging cost implied by non-linear Vomma and Vanna risk during volatility spikes.

Approach

The practical execution of DMERT involves a multi-stage process of adversarial simulation, designed to push the protocol to its breaking point without compromising live user funds. This is a continuous process, not a one-time audit.

Adversarial Simulation Framework

The testing framework operates on dedicated shadow forks or testnets, replicating the mainnet state but allowing for the injection of synthetic market data and protocol-level faults.

- Synthetic Market Generation: Creation of high-frequency, path-dependent price feeds that include historically unprecedented movements, such as instantaneous 50% price drops, rapid whipsaws, and extended periods of zero-liquidity.

- Protocol Fault Injection: Deliberate introduction of system failures, including oracle price freezing, delayed block confirmation, and the complete halt of the primary liquidator bot network.

- Liquidation Stress Analysis: Measuring the percentage of liquidations that fail to execute due to slippage, gas costs, or bad debt creation, and calculating the resultant drain on the insurance fund.
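The first two steps above can be sketched in a few lines: generate a synthetic crash path, then inject an oracle freeze and measure how long the protocol stays blind to a breach. The helper names, the geometric shape of the crash, and the numbers are assumptions made for this illustration.

```python
def crash_path(start, drop, n_blocks):
    """Geometric decline of `drop` (e.g. 0.5 for 50%) spread over n_blocks."""
    step = (1.0 - drop) ** (1.0 / n_blocks)
    return [start * step ** i for i in range(n_blocks + 1)]


def frozen_oracle(true_prices, freeze_at, freeze_blocks):
    """Oracle tracks the true price, except it repeats the last
    pre-freeze value for `freeze_blocks` blocks starting at `freeze_at`."""
    stale = true_prices[max(freeze_at - 1, 0)]
    reported = []
    for i, price in enumerate(true_prices):
        if freeze_at <= i < freeze_at + freeze_blocks:
            reported.append(stale)
        else:
            reported.append(price)
    return reported


def first_block_below(prices, threshold):
    """Index of the first block whose price is below threshold, else None."""
    for i, price in enumerate(prices):
        if price < threshold:
            return i
    return None


true_prices = crash_path(start=100.0, drop=0.5, n_blocks=10)
oracle_prices = frozen_oracle(true_prices, freeze_at=2, freeze_blocks=5)

liquidation_price = 80.0  # hypothetical position becomes liquidatable below this
true_hit = first_block_below(true_prices, liquidation_price)
oracle_hit = first_block_below(oracle_prices, liquidation_price)
blind_blocks = oracle_hit - true_hit
unseen_gap = liquidation_price - true_prices[oracle_hit]
print(true_hit, oracle_hit, blind_blocks, round(unseen_gap, 2))
```

Here `blind_blocks` is the number of blocks during which the position should have been liquidated but the oracle did not show it, and `unseen_gap` is how far the true price has fallen below the liquidation price by the time the oracle recovers. Both feed directly into the bad-debt measurement in the third step.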

Comparative Stress Models

We utilize a variety of established financial models, each targeting a different type of systemic vulnerability. The choice of model determines the type of structural weakness revealed.

| Stress Model | Targeted Risk Vector | Metric of Failure |
|---|---|---|
| Historical Event Replication | Known market shocks (e.g. ‘Black Thursday’) | Bad debt generated relative to event size |
| Factor-Based Stress Testing | Sensitivity to macro factors (e.g. USD liquidity, interest rate spike) | Margin requirement coverage ratio |
| Hypothetical Extreme Scenario (HES) | Tail-risk events (e.g. -5 standard deviations) | Time-to-insolvency of the insurance fund |
| Adversarial Game Theory Simulation | Coordinated attacks by large participants | Cost of attack versus profit to attacker |

The most challenging approach involves Adversarial Game Theory Simulation, where we model the behavior of a sophisticated actor who strategically opens positions designed to maximize the protocol’s loss during a predicted market event. This is where the Tokenomics of the liquidator incentive system are tested; if the profit incentive is too low, liquidators will simply stand down during high-gas events, leaving the protocol exposed. The engine’s resilience depends on its ability to maintain a positive expected value for the liquidator, even under maximal network congestion.
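The liquidator’s participation constraint can be written down directly. The sketch below is one possible formulation under stated assumptions: the liquidator pays gas whether or not the attempt wins (a conservative simplification), wins with probability `p_inclusion`, and earns the liquidation bonus net of slippage. All parameter names and values are hypothetical.

```python
def gas_cost_usd(gas_units, gas_price_gwei, eth_price):
    """Transaction cost in USD (1 gwei = 1e-9 ETH)."""
    return gas_units * gas_price_gwei * 1e-9 * eth_price


def liquidator_ev(notional, bonus_rate, slippage_rate, gas_units,
                  gas_price_gwei, eth_price, p_inclusion):
    """Expected value of one liquidation attempt, in USD."""
    gross = notional * (bonus_rate - slippage_rate)
    return p_inclusion * gross - gas_cost_usd(gas_units, gas_price_gwei, eth_price)


def breakeven_gas_gwei(notional, bonus_rate, slippage_rate, gas_units,
                       eth_price, p_inclusion):
    """Gas price at which the expected value is exactly zero."""
    gross = notional * (bonus_rate - slippage_rate)
    return p_inclusion * gross / (gas_units * 1e-9 * eth_price)


params = dict(notional=5_000.0, bonus_rate=0.05, slippage_rate=0.01,
              gas_units=500_000, eth_price=2_000.0, p_inclusion=0.8)

ev_normal = liquidator_ev(gas_price_gwei=30.0, **params)
ev_congested = liquidator_ev(gas_price_gwei=300.0, **params)
breakeven = breakeven_gas_gwei(**params)
print(ev_normal, ev_congested, breakeven)
```

With these made-up numbers the same liquidation is profitable at 30 gwei and loss-making at 300 gwei. A stress test can sweep gas price against position size to find which positions become unliquidatable first under congestion.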

Evolution

The evolution of decentralized margin resilience has tracked the complexity of the derivatives offered.

Early protocols relied on isolated, fully-collateralized margin systems: simple, but capital-inefficient. A user’s collateral for a BTC option could not be used for an ETH option. This created a high degree of local resilience but hindered market growth.

The first major architectural shift was the move to Cross-Margining. This allowed collateral to be shared across multiple positions, drastically improving capital efficiency. This improvement, however, introduced the risk of contagion: a loss in one market could now directly threaten positions in another.

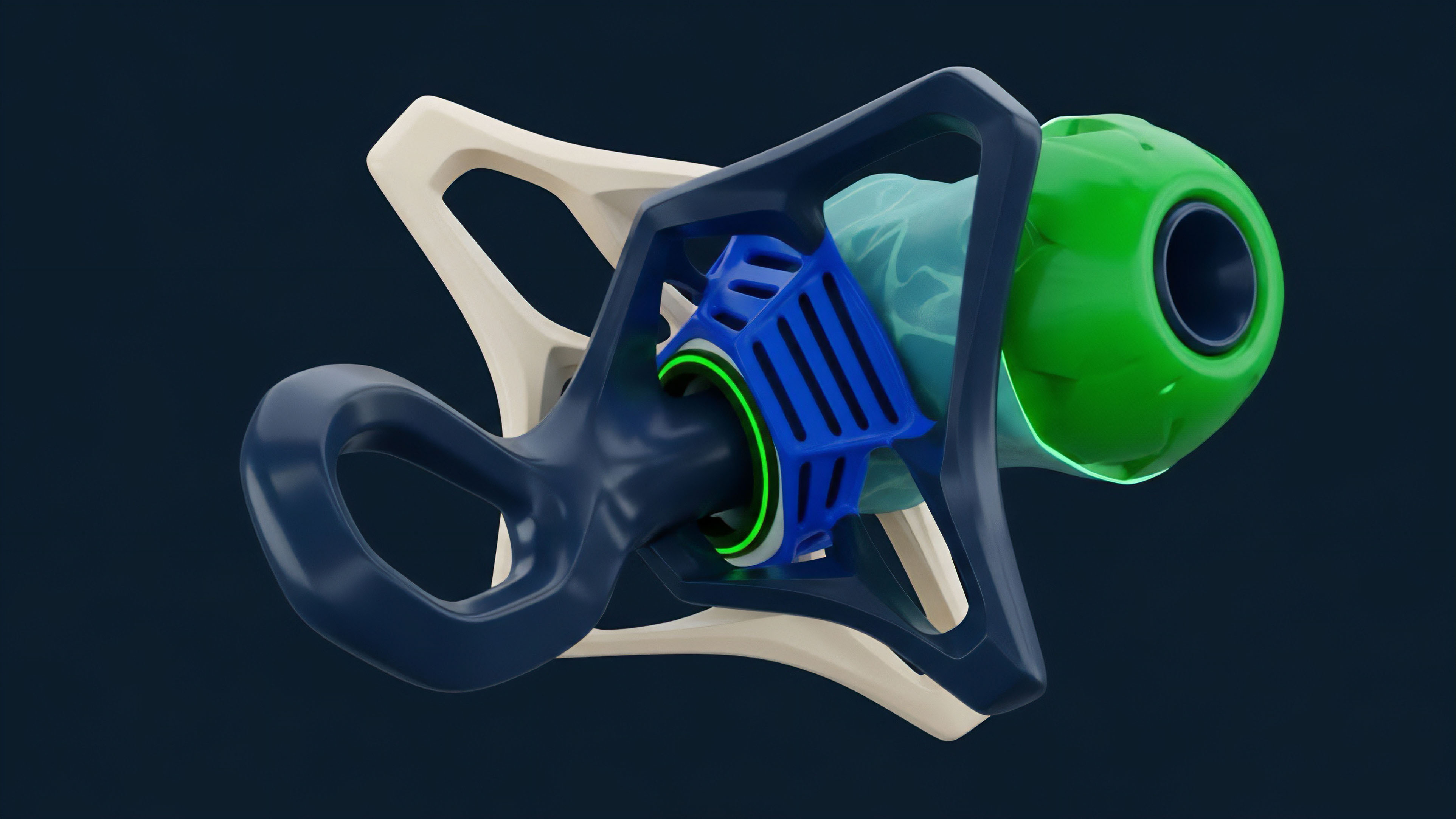

Resilience testing had to evolve from single-asset stress to multi-asset correlation matrices. The current generation of margin engines moves toward Portfolio Margining, a system that calculates margin based on the net risk of the entire portfolio, often using a standardized scenario-based methodology (like SPAN in traditional finance). This requires continuous, real-time calculation of the portfolio’s combined Delta, Vega, and Gamma exposure.
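One way to picture portfolio margining is a scenario grid: shock price and volatility, approximate the portfolio’s profit and loss from its net Greeks, and charge the worst case. The sketch below is in the spirit of scenario-based methods but is not SPAN itself; the second-order Taylor approximation, the shock grid, and the sample Greeks are all assumptions.

```python
from itertools import product


def scenario_margin(delta, gamma, vega, spot, price_shocks, vol_shocks):
    """Worst-case loss over a grid of (relative price shock, absolute vol shock).

    P&L is approximated as delta*dS + 0.5*gamma*dS^2 + vega*dVol,
    with vega quoted per 1.00 change in volatility.
    """
    worst = 0.0
    for dp, dv in product(price_shocks, vol_shocks):
        ds = spot * dp
        pnl = delta * ds + 0.5 * gamma * ds * ds + vega * dv
        worst = min(worst, pnl)
    return -worst


price_shocks = [-0.15, -0.075, 0.0, 0.075, 0.15]
vol_shocks = [-0.20, 0.0, 0.20]
spot = 100.0

# Hypothetical positions: A is long options, B is a partial offset.
a = dict(delta=10.0, gamma=0.2, vega=40.0)
b = dict(delta=-8.0, gamma=-0.1, vega=-25.0)
net = {k: a[k] + b[k] for k in a}

margin_a = scenario_margin(spot=spot, price_shocks=price_shocks, vol_shocks=vol_shocks, **a)
margin_b = scenario_margin(spot=spot, price_shocks=price_shocks, vol_shocks=vol_shocks, **b)
margin_net = scenario_margin(spot=spot, price_shocks=price_shocks, vol_shocks=vol_shocks, **net)
print(margin_a + margin_b, margin_net)
```

The netted requirement can never exceed the sum of the isolated ones under this construction, which is the capital-efficiency gain. The same netting is also why a mis-estimated offset between the two legs becomes a contagion channel.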

- From Isolated to Portfolio Risk: The systemic shift from calculating margin per position to calculating margin on the basis of total risk surface.

- Liquidation Mechanism Maturation: Moving past simple Dutch auctions to sophisticated, fixed-price liquidations (often near the bankruptcy price) with the bad debt absorbed by the insurance fund, prioritizing speed over optimal price execution.

- The Emergence of Shared Risk Pools: The transition from simple, passive insurance funds to actively managed, dynamically capitalized Backstop Liquidity Providers (BLPs) who commit capital in exchange for yield, effectively becoming the system’s first line of defense against insolvency.

This trajectory is not an incremental refinement; it is a fundamental re-architecting of the financial foundation. The systems are becoming more capital-efficient, yet simultaneously more interconnected, demanding a far higher standard of resilience testing. Our ability to model the propagation of failure, that is, systemic risk and contagion, must keep pace with the increasing interconnectedness.

Horizon

The future of Decentralized Margin Engine Resilience Testing points toward two interconnected vectors: predictive solvency modeling and cryptographic verification of risk.

Predictive Solvency Modeling

The current state is reactive: we test known stress paths. The horizon involves moving to proactive risk management through Real-Time Probabilistic Margin (RPM). This system would not rely on static margin requirements but would continuously calculate the probability of a user’s portfolio going bankrupt within the next settlement window, factoring in current market volatility and gas price forecasts.

- Machine Learning-Driven Stress: Using deep learning models trained on historical and synthetic market data to generate novel, non-obvious stress scenarios that human analysts may overlook.

- Autonomous Parameter Adjustment: Protocols will move toward self-healing mechanisms where margin requirements, liquidation thresholds, and even oracle update frequencies are automatically adjusted by the system based on real-time stress test results and predictive solvency scores.
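As a rough illustration of the Real-Time Probabilistic Margin idea, the sketch below computes the probability that a Delta-only portfolio loses more than its equity within one settlement window, and inverts that to get the equity needed for a target probability. It assumes Gaussian returns purely to keep the arithmetic closed-form, which understates the fat tails emphasized throughout; a real system would substitute a heavier-tailed or simulated distribution. Function names and inputs are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def pnl_sigma(delta_notional, annual_vol, window_hours):
    """One-sigma P&L over the window for a delta-only exposure."""
    horizon_years = window_hours / (24.0 * 365.0)
    return abs(delta_notional) * annual_vol * math.sqrt(horizon_years)


def bankruptcy_probability(equity, delta_notional, annual_vol, window_hours):
    """P(loss over the window exceeds equity) under the Gaussian assumption."""
    if equity <= 0.0:
        return 1.0  # already insolvent
    sigma = pnl_sigma(delta_notional, annual_vol, window_hours)
    if sigma == 0.0:
        return 0.0
    return _STD_NORMAL.cdf(-equity / sigma)


def required_equity(delta_notional, annual_vol, window_hours, target_prob):
    """Equity needed so bankruptcy probability equals target_prob (0 < p < 0.5)."""
    sigma = pnl_sigma(delta_notional, annual_vol, window_hours)
    return -_STD_NORMAL.inv_cdf(target_prob) * sigma


# Hypothetical account: 100k delta notional, 80% annualized vol, 8-hour window.
needed = required_equity(100_000.0, 0.80, 8.0, target_prob=0.001)
p_now = bankruptcy_probability(needed, 100_000.0, 0.80, 8.0)
p_vol_doubles = bankruptcy_probability(needed, 100_000.0, 1.60, 8.0)
print(needed, p_now, p_vol_doubles)
```

Holding equity fixed while volatility doubles shows why a static requirement drifts away from its intended safety level, and why the margin would need to be recomputed as the volatility input changes.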

Cryptographic Risk Verification

The ultimate expression of resilience lies in removing the need for trust in the calculation itself. The integration of Zero-Knowledge Proofs (ZKPs) will allow a user to prove that their portfolio is solvent according to the protocol’s margin rules without revealing the details of their underlying positions. This maintains privacy while offering cryptographically verifiable solvency at the moment the proof is generated. The ZKP layer turns the solvency check from something inferred through adversarial simulation into a provable mathematical statement.

The integrity of that check is then limited by the soundness of the ZK-SNARK circuit and the margin rules it encodes, shifting systemic risk from financial engineering toward cryptography. This is the final frontier: building financial systems whose structural integrity is secured by mathematics, not just collateral. The entire system becomes a single, auditable, and provably solvent ledger of risk.
