Essence

Decentralized Data Provenance is the cryptographic assurance of information origin, integrity, and temporal sequence within permissionless environments. It functions as the foundational layer for derivative valuation, ensuring that the inputs governing automated execution engines remain tamper-evident and verifiable by all market participants.

Decentralized data provenance provides the cryptographic audit trail necessary to validate the veracity of information inputs in automated financial systems.

The systemic value lies in the removal of centralized gatekeepers who historically mediated data streams. By anchoring information to distributed ledgers, protocols gain a trust-minimized mechanism to verify the lifecycle of an asset price, an order flow, or a risk parameter. This creates a state where the market participants themselves act as the ultimate auditors of the underlying financial reality.
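
One way to picture such an audit trail is a hash chain, in which every entry commits to the one before it, so altering or reordering history becomes detectable. The following is a minimal sketch under stated assumptions, not any particular protocol's format: the helper names `append_entry` and `verify_chain`, the all-zero genesis value, and the JSON encoding are hypothetical choices made for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder for "no previous entry"

def _entry_hash(seq: int, prev: str, data: dict) -> str:
    # Hash a canonical (sorted-key) JSON encoding of the entry contents.
    body = json.dumps({"seq": seq, "prev": prev, "data": data}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()

def append_entry(chain: list, data: dict) -> None:
    """Append a record that commits to the hash of the previous record."""
    seq = len(chain)
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"seq": seq, "prev": prev, "data": data,
                  "hash": _entry_hash(seq, prev, data)})

def verify_chain(chain: list) -> bool:
    """Recompute every hash and link; any edit to past data breaks the chain."""
    prev = GENESIS
    for seq, entry in enumerate(chain):
        if entry["seq"] != seq or entry["prev"] != prev:
            return False
        if entry["hash"] != _entry_hash(seq, prev, entry["data"]):
            return False
        prev = entry["hash"]
    return True

trail = []
append_entry(trail, {"feed": "ETH/USD", "price": 3000.0})
append_entry(trail, {"feed": "ETH/USD", "price": 3001.5})
print(verify_chain(trail))  # the untouched trail verifies
```

Anyone holding a copy of the trail can rerun `verify_chain`, which is the sense in which participants act as auditors: no trusted intermediary is needed to detect a rewritten entry.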

Origin

The trajectory of Decentralized Data Provenance stems from the limitations inherent in early blockchain oracles.

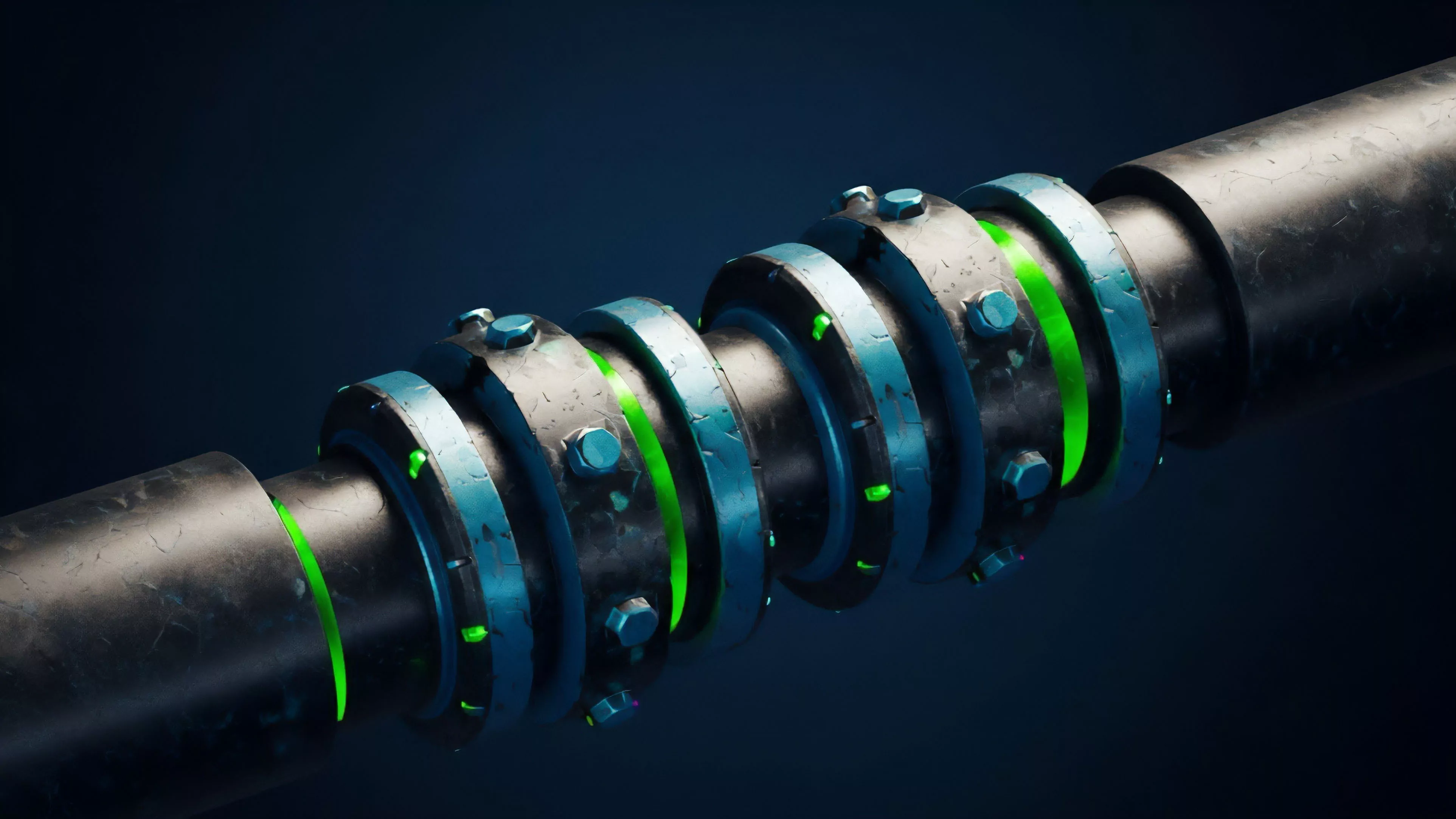

Initial architectures relied upon single-node feeds, creating significant single points of failure. The subsequent shift toward multi-party aggregation and cryptographically signed data streams established the requirement for a transparent history of how information travels from a primary source to a smart contract.

- Cryptographic Hash Functions: These serve as the mathematical backbone, enabling the creation of immutable digital fingerprints for data packets.

- Merkle Proofs: These allow efficient verification of large datasets by showing, with a short chain of hashes, that a specific piece of information belongs to a larger, validated state.

- Threshold Signature Schemes: These mechanisms distribute the power of data validation across a decentralized network, preventing any single actor from manipulating the provenance stream.
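
The first two primitives above can be sketched together: hash each data packet into a fingerprint, fold the fingerprints into a single Merkle root, and prove that one packet belongs to the set using only its sibling hashes. This is an illustrative sketch, not a production tree. The function names, the one-byte leaf and node prefixes, and the choice to duplicate the last node on odd-sized levels are assumptions made for this example, and the leaf list is assumed to be non-empty.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root_and_proof(leaves: list, index: int):
    """Return (root, proof) for leaves[index].

    Each proof step is (sibling_hash, sibling_is_left).
    """
    level = [_h(b"\x00" + leaf) for leaf in leaves]  # prefix separates leaves from nodes
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumed padding rule: duplicate the last node
        sibling = index ^ 1          # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = [_h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify_proof(leaf: bytes, proof: list, root: bytes) -> bool:
    """Rebuild the path from the leaf to the root using only sibling hashes."""
    node = _h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        if sibling_is_left:
            node = _h(b"\x01" + sibling + node)
        else:
            node = _h(b"\x01" + node + sibling)
    return node == root

packets = [b"ETH/USD:3000", b"BTC/USD:60000", b"SOL/USD:150",
           b"LINK/USD:15", b"UNI/USD:8"]
root, proof = merkle_root_and_proof(packets, 2)
print(verify_proof(packets[2], proof, root))  # membership check against the root only
```

The proof grows with the logarithm of the dataset size, which is why a contract can check membership against one stored root rather than the full dataset.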

This evolution reflects a transition from monolithic data reliance toward modular, verifiable architectures. Financial engineers realized that without a clear lineage for every data point, derivative pricing models would remain perpetually vulnerable to manipulation, leading to systemic instability in decentralized venues.

Theory

The theoretical framework governing Decentralized Data Provenance relies on the intersection of game theory and distributed systems. Participants providing data must face economic consequences for malfeasance, typically through staked capital that is slashed upon the detection of inaccurate reporting.

This adversarial design forces rational actors to prioritize the accuracy of the provenance chain over the short-term gains of data manipulation.

| Mechanism | Function |
| --- | --- |
| Staking | Provides economic collateral for truthful data reporting |
| Slashing | Executes punitive measures for verifiable provenance failures |
| Aggregation | Reduces noise by synthesizing multiple independent data streams |
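
The three mechanisms in the table can be combined in a toy settlement round: reports are aggregated with a median, and any provider whose report strays too far from the aggregate loses part of its stake. This is a sketch under assumed parameters only. The function name, the 2% tolerance, and the 10% slash fraction are hypothetical values chosen for illustration, not figures taken from any live protocol.

```python
from statistics import median

def aggregate_and_slash(reports: dict, stakes: dict,
                        tolerance: float = 0.02,
                        slash_fraction: float = 0.10):
    """Median-aggregate the reports and slash outlying providers.

    reports: provider id -> reported value
    stakes:  provider id -> staked collateral
    Returns (aggregate, updated_stakes, slashed_providers).
    """
    agreed = median(reports.values())  # robust to a minority of bad reports
    updated = dict(stakes)             # leave the caller's stakes untouched
    slashed = []
    for provider, value in reports.items():
        if abs(value - agreed) > tolerance * abs(agreed):
            updated[provider] = stakes[provider] * (1 - slash_fraction)
            slashed.append(provider)
    return agreed, updated, slashed

reports = {"a": 100.0, "b": 100.5, "c": 99.8, "d": 150.0}
stakes = {"a": 1000.0, "b": 1000.0, "c": 1000.0, "d": 1000.0}
price, new_stakes, slashed = aggregate_and_slash(reports, stakes)
print(price, slashed)
```

A median is used here because a single extreme report cannot move it, so the dishonest provider bears the slashing cost without shifting the settled value.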

The integrity of a derivative pricing model depends entirely on the verifiable lineage of its underlying data inputs.

Market participants interact within this structure by balancing the cost of participation against the rewards of honest data contribution. This environment creates a natural equilibrium where the most accurate providers gain reputation and influence, while malicious actors face exclusion. The system behaves like an automated laboratory, constantly testing the validity of incoming data against historical benchmarks and cross-protocol comparisons.

Approach

Current methodologies for Decentralized Data Provenance involve the deployment of specialized decentralized oracle networks and state proofs.

These systems ingest data from off-chain sources, sign it with unique cryptographic keys, and commit the state to a chain where it remains permanently accessible. This allows smart contracts to perform complex calculations, such as option pricing or margin adjustments, based on data that possesses a known and verifiable history.

- Timestamping: Every data entry carries a precise, ledger-recorded creation time, which lets consumers reject stale or replayed entries.

- Source Attribution: Protocols maintain a registry of validated data providers, enabling users to choose sources based on reliability metrics.

- Proof of Stake: Data nodes post capital as collateral, which they stand to lose if the information they provide proves inaccurate.
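
The timestamping and source attribution checks above might look like the following on the consuming side. This is a simplified sketch with loud assumptions: HMAC with a shared secret stands in for the asymmetric signatures (such as ECDSA or Ed25519) that real oracle networks would use, and `REGISTRY`, `sign_report`, `accept_report`, and the 60-second freshness window are hypothetical names and values chosen for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical provider registry: provider id -> verification key.
# A shared secret is an assumption that keeps the example stdlib-only.
REGISTRY = {"provider-a": b"demo-secret-a"}

def sign_report(provider: str, key: bytes, feed: str,
                value: float, timestamp: int) -> dict:
    """Serialize a report canonically and attach an authentication tag."""
    payload = json.dumps({"provider": provider, "feed": feed,
                          "value": value, "timestamp": timestamp},
                         sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}

def accept_report(report: dict, now: int, last_seen: dict,
                  max_age: int = 60) -> bool:
    """Accept only registered, authentic, fresh, and non-replayed reports."""
    fields = json.loads(report["payload"])
    key = REGISTRY.get(fields["provider"])
    if key is None:
        return False                      # source attribution: unknown provider
    expected = hmac.new(key, report["payload"], hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, report["tag"]):
        return False                      # payload or tag was altered
    if now - fields["timestamp"] > max_age:
        return False                      # too old to use
    slot = (fields["provider"], fields["feed"])
    if fields["timestamp"] <= last_seen.get(slot, -1):
        return False                      # replay of an already accepted report
    last_seen[slot] = fields["timestamp"]
    return True

seen = {}
report = sign_report("provider-a", REGISTRY["provider-a"], "ETH/USD", 3000.0, 1000)
print(accept_report(report, now=1010, last_seen=seen))  # first delivery
print(accept_report(report, now=1011, last_seen=seen))  # same report again
```

Tracking the last accepted timestamp per provider and feed is what turns a timestamp into replay protection: the timestamp alone only bounds staleness.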

These implementations focus on reducing the latency between data generation and its availability to derivative protocols. The technical challenge remains the minimization of oracle gas costs while maintaining high-frequency updates, which are essential for managing the Greeks of complex options portfolios.

Evolution

The progression of Decentralized Data Provenance has moved from simple price feeds toward full-spectrum data integrity solutions. Early implementations focused on spot price delivery for simple lending protocols.

As derivative markets expanded, the requirement shifted toward high-fidelity data that includes volatility indices, order book depth, and historical liquidity metrics.

Provenance systems are transitioning from passive data delivery to active, cryptographically enforced truth verification for complex financial instruments.

The current landscape involves a sophisticated interplay between zero-knowledge proofs and decentralized computation. By utilizing zero-knowledge technology, protocols can now verify the correctness of a data transformation or calculation without exposing the raw underlying information, providing a new dimension of privacy alongside provenance. This represents a fundamental shift in how decentralized systems handle sensitive financial intelligence, moving away from public exposure toward selective disclosure of validated facts.

Horizon

The future of Decentralized Data Provenance points toward a seamless integration of hardware-level security with decentralized consensus.

Trusted execution environments will likely pair with blockchain-based verification to ensure that data remains secure from the moment of capture within a sensor or server until its final settlement on-chain. This will reduce the reliance on external economic incentives by moving toward hardware-rooted truth.

| Future Focus | Anticipated Impact |
| --- | --- |
| Hardware Integration | Hardens data security at the point of origin |
| Zero Knowledge Proofs | Enables private yet verifiable data provenance |
| Cross Chain Interoperability | Allows provenance chains to function across fragmented networks |

The convergence of these technologies will enable the creation of decentralized derivatives that are not only more efficient but also more resilient to systemic shocks. As the provenance layer matures, the financial industry will likely witness a transition where traditional auditing practices are replaced by continuous, automated cryptographic verification, fundamentally altering the risk profile of global digital markets.