Essence

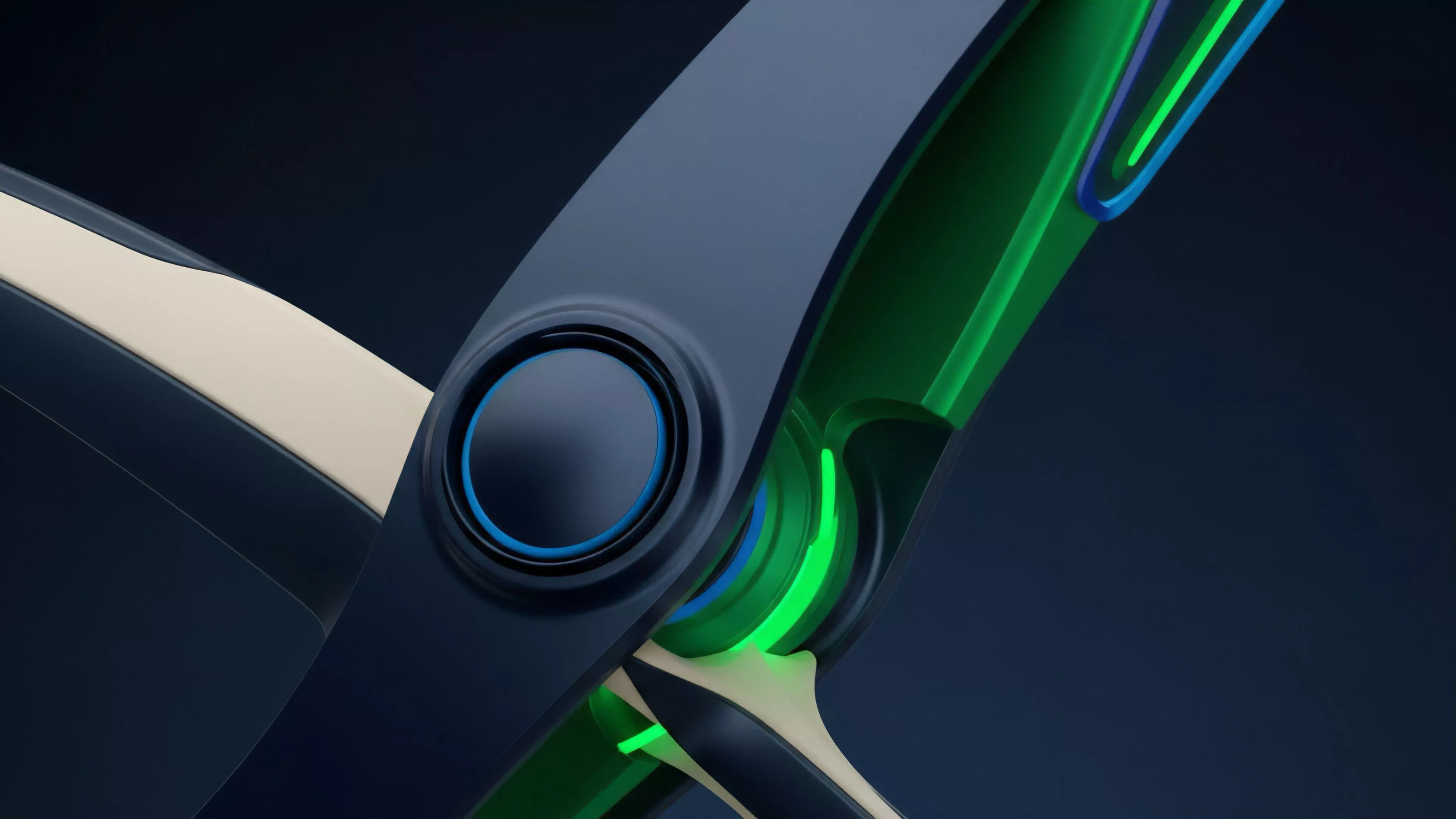

Decentralized Data Integrity functions as the cryptographic guarantee that information inputs, ranging from asset price feeds to complex derivative state variables, remain immutable and tamper-resistant within a permissionless financial architecture. This concept addresses the fundamental vulnerability of off-chain data bridges, ensuring that the inputs governing automated execution engines cannot be manipulated by centralized intermediaries or malicious actors.

Decentralized Data Integrity provides the trustless verification layer necessary for automated financial protocols to operate securely without reliance on centralized data providers.

The architectural necessity for Decentralized Data Integrity arises from the inherent friction between deterministic smart contract logic and the stochastic nature of external market data. When a protocol relies on a single source for price data, that source becomes a single point of failure for the entire derivative ecosystem built on it. By distributing the validation process across a decentralized network of nodes, the system reaches a state where the cost of manipulating the data significantly exceeds the potential gain from such an attack.
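To make the single-source versus distributed contrast concrete, here is a minimal sketch. It assumes each node reports one price and the network aggregates reports with the median, which is a common rule but not the only one. The function name `aggregate_price` and the sample prices are hypothetical.

```python
from statistics import median


def aggregate_price(reports: list[float]) -> float:
    """Return the median of independent node reports.

    Moving the median arbitrarily requires corrupting more than half of
    the reports, so one manipulated source has bounded influence.
    """
    if not reports:
        raise ValueError("no reports to aggregate")
    return median(reports)


honest = [100.0, 100.2, 99.9, 100.1, 100.0]
# The same network, but one node reports an absurd price.
attacked = honest[:-1] + [1_000_000.0]

print(aggregate_price(honest))    # 100.0
print(aggregate_price(attacked))  # 100.1: shifted by one honest rank, not hijacked
print(attacked[-1])               # what a single-source feed would have reported
```

If the protocol had trusted only the compromised node, the settlement engine would have consumed the absurd value directly. Under the median rule, the attacker would instead need to control a majority of reporters.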

Structural Pillars

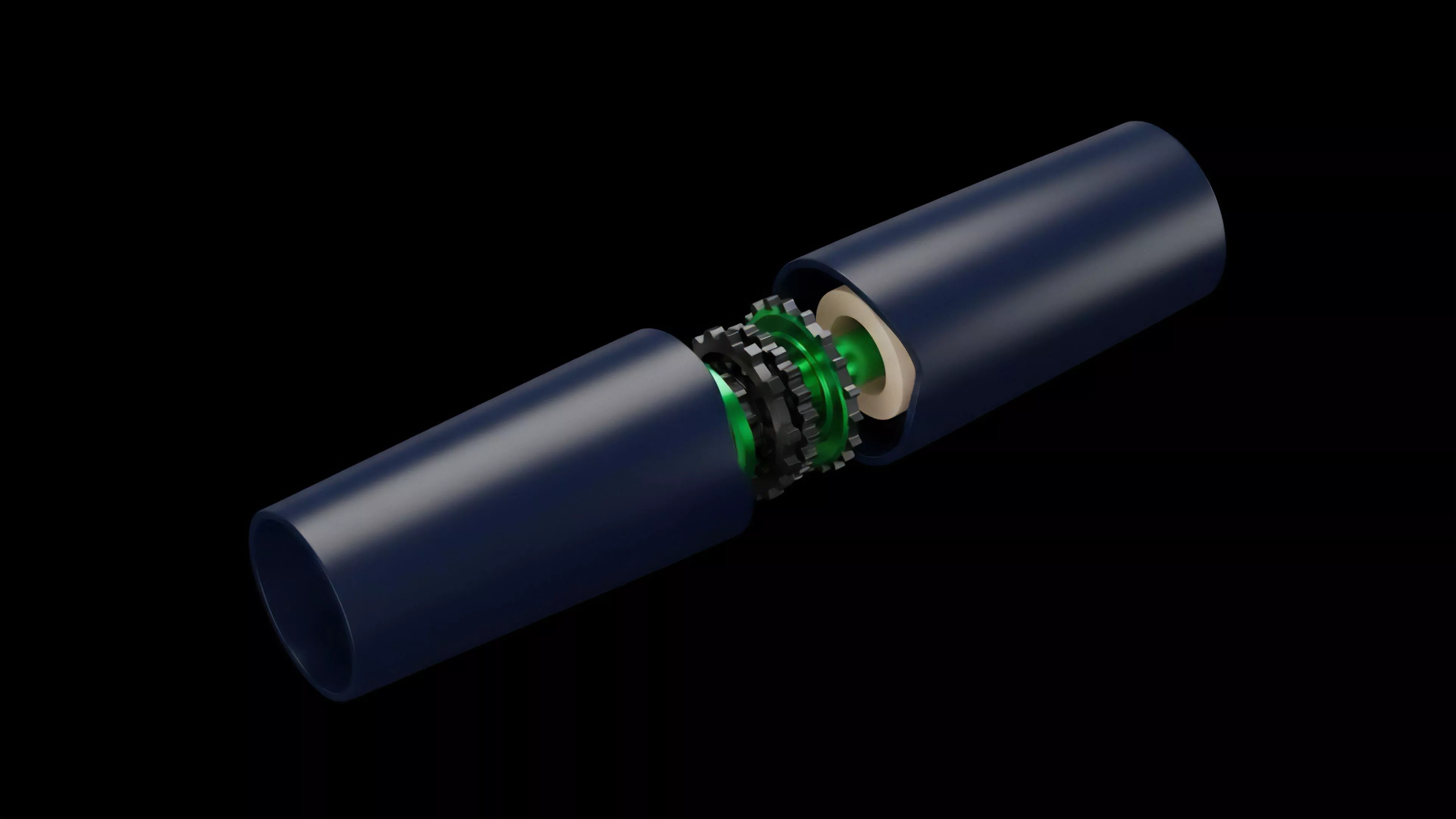

- Cryptographic Proofs establish the authenticity and provenance of data points before they are ingested by the settlement engine.

- Consensus Mechanisms aggregate disparate data sources to filter out anomalous or corrupted inputs.

- Economic Incentives align the behavior of data validators with the long-term stability of the protocol.
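The first two pillars can be sketched as a small ingestion pipeline: authenticate each report, discard anomalies, then aggregate. This is a hedged illustration, not any specific network's design. HMAC-SHA256 from the standard library stands in for the public-key signatures (for example ECDSA or Ed25519) that real oracle nodes would use. The node keys, the 5% deviation threshold, and all function names are hypothetical. Economic incentives are left out here because they act on validators after the fact rather than on the data path.

```python
import hashlib
import hmac
from statistics import median

# Hypothetical per-node keys. HMAC is a stand-in for the public-key
# signatures a real oracle network would use.
NODE_KEYS = {"node-a": b"key-a", "node-b": b"key-b", "node-c": b"key-c", "node-d": b"key-d"}


def sign(node: str, price: float) -> str:
    msg = f"{node}:{price}".encode()
    return hmac.new(NODE_KEYS[node], msg, hashlib.sha256).hexdigest()


def verify(node: str, price: float, tag: str) -> bool:
    """Cryptographic proofs: reject reports whose tag does not check out."""
    if node not in NODE_KEYS:
        return False
    return hmac.compare_digest(sign(node, price), tag)


def filter_outliers(prices: list[float], max_dev: float = 0.05) -> list[float]:
    """Consensus: drop reports more than max_dev (relative) from the median."""
    mid = median(prices)
    return [p for p in prices if abs(p - mid) / mid <= max_dev]


def ingest(reports: list[tuple[str, float, str]]) -> float:
    authentic = [price for node, price, tag in reports if verify(node, price, tag)]
    if not authentic:
        raise ValueError("no authentic reports")
    return median(filter_outliers(authentic))


reports = [
    ("node-a", 100.0, sign("node-a", 100.0)),
    ("node-b", 100.4, sign("node-b", 100.4)),
    ("node-c", 180.0, sign("node-c", 180.0)),  # authentic but anomalous
    ("node-d", 99.8, "forged-tag"),            # fails authentication
]
print(ingest(reports))  # about 100.2: node-d rejected, node-c filtered out
```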

Origin

The genesis of Decentralized Data Integrity tracks the evolution of oracle networks, which first emerged in early DeFi as a response to the oracle problem. Because smart contracts execute deterministically, they cannot natively access off-chain financial data, so developers needed a bridge that would not reintroduce centralized risk. Early approaches used simple multi-signature schemes, which proved insufficient against sophisticated adversarial manipulation.

The transition from centralized oracles to decentralized data validation protocols represents the maturation of DeFi from experimental code to resilient financial infrastructure.

As market complexity increased, the need for robust Decentralized Data Integrity became clear. Financial history shows that centralized points of failure, whether in traditional clearinghouses or early blockchain bridges, repeatedly become channels for systemic collapse. Decentralized networks, which use game-theoretic models to incentivize truthful reporting, reflect a deliberate departure from the fragility of legacy financial systems.

| Generation | Mechanism | Integrity Risk |
|---|---|---|
| First | Centralized Oracles | High Counterparty Risk |
| Second | Multi-signature Aggregation | Collusion Vulnerability |
| Third | Decentralized Proof Aggregation | Protocol-Level Adversarial Stress |

Theory

The mechanical operation of Decentralized Data Integrity relies on the rigorous application of Game Theory to disincentivize data poisoning. Validators must stake collateral, which is subject to slashing if they submit data that deviates significantly from the network aggregate (for example, the median of all reports) or from observed market prices. This mechanism pushes participants to report truthfully, because the protocol is designed so that the economic cost of subverting the data feed exceeds any benefit derived from market manipulation.
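The stake-and-slash rule described above can be sketched in a few lines. This is a simplified model under stated assumptions. The consensus value is the median of the round's reports. Any validator whose report deviates from that median by more than a relative `max_deviation` loses `slash_fraction` of its stake. Both parameters, the `Validator` type, and `settle_round` are hypothetical. Real protocols tune these values and often route slashing through a dispute process rather than applying it automatically.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Validator:
    name: str
    stake: float


def settle_round(
    reports: dict[str, float],
    validators: dict[str, Validator],
    max_deviation: float = 0.02,
    slash_fraction: float = 0.5,
) -> float:
    """Compute the consensus value and slash validators who deviate from it."""
    consensus = median(reports.values())
    for name, value in reports.items():
        if abs(value - consensus) / consensus > max_deviation:
            v = validators[name]
            v.stake -= v.stake * slash_fraction  # punitive loss of collateral
    return consensus


validators = {n: Validator(n, 1000.0) for n in "abcde"}
reports = {"a": 100.0, "b": 100.5, "c": 99.5, "d": 100.2, "e": 130.0}
consensus = settle_round(reports, validators)
print(consensus)                                   # 100.2
print({n: v.stake for n, v in validators.items()})  # only "e" drops to 500.0
```

The design lever is the ratio between stake at risk and achievable manipulation profit. If an attacker must corrupt a majority of reporters and each forfeits half its stake, the attack cost scales with total stake rather than with any single node's.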

Validators maintain data integrity by anchoring their financial incentives to the accuracy of the information they provide to the protocol.

From a quantitative perspective, the integrity of the data feed is modeled as a function of the number of independent nodes and the distribution of their geographic and jurisdictional locations. By maximizing the entropy (diversity) of the validator set, the protocol reduces the probability that enough nodes share a common failure mode or can coordinate an attack. The goal is not a perfect system, but an environment where the cost of failure is contained and predictable.
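Both quantities in this paragraph can be computed directly. The sketch below measures validator diversity as the Shannon entropy of the jurisdiction mix. It also computes the probability that at least a threshold of `n` nodes is compromised, which follows a binomial tail. The binomial model assumes each node fails independently with probability `p`. Geographic and jurisdictional diversity is an attempt to approximate that independence, and correlated failures (for example, many nodes on one cloud provider) break it. The jurisdiction lists, the 10% compromise rate, and the function names are hypothetical.

```python
import math
from collections import Counter


def jurisdiction_entropy(jurisdictions: list[str]) -> float:
    """Shannon entropy, in bits, of the validator set's jurisdiction mix."""
    n = len(jurisdictions)
    return sum((c / n) * math.log2(n / c) for c in Counter(jurisdictions).values())


def prob_compromise(n: int, threshold: int, p: float) -> float:
    """P(at least `threshold` of `n` nodes are compromised), assuming each
    node fails independently with probability p (binomial tail)."""
    return sum(
        math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(threshold, n + 1)
    )


concentrated = ["US"] * 8
spread = ["US", "DE", "SG", "CH", "JP", "BR", "CA", "KR"]
print(jurisdiction_entropy(concentrated))  # 0.0
print(jurisdiction_entropy(spread))        # 3.0 (log2 of 8 equally weighted jurisdictions)

# Majority-corruption probability with a 10% per-node compromise rate.
for n in (7, 21):
    print(n, prob_compromise(n, n // 2 + 1, 0.10))
```

Under the independence assumption, tripling the validator count from 7 to 21 lowers the majority-compromise probability by orders of magnitude. That gain disappears if the added nodes share the same jurisdiction or infrastructure.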

Systemic Dynamics

- Latency Sensitivity requires that data updates arrive quickly enough to remain relevant to high-frequency trading environments.

- Validation Thresholds define the minimum number of nodes required to confirm a data state change.

- Collateral Slashing provides the punitive mechanism for detected malicious activity.
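Latency sensitivity and validation thresholds combine into one gate on each state change. The sketch below assumes that reports older than `max_age_s` are discarded as stale. Each node's newest fresh report counts once, and the feed updates only if at least `min_nodes` distinct nodes remain. When the quorum is not met, the caller keeps the previous state. The `Report` type, `try_update`, and both thresholds are hypothetical. Slashing is covered by the stake-and-slash rule in the Theory section and is omitted here.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Report:
    node: str
    value: float
    timestamp: float  # seconds


def try_update(
    reports: list[Report], now: float, min_nodes: int = 3, max_age_s: float = 5.0
) -> Optional[float]:
    """Return the new feed value, or None if the validation threshold is not met."""
    latest: dict[str, Report] = {}
    for r in reports:
        if now - r.timestamp > max_age_s:
            continue  # latency sensitivity: stale data is ignored
        if r.node not in latest or r.timestamp > latest[r.node].timestamp:
            latest[r.node] = r  # keep only each node's newest fresh report
    if len(latest) < min_nodes:
        return None  # validation threshold not met: keep the previous state
    return median(r.value for r in latest.values())


reports = [
    Report("a", 10.0, 98.0),
    Report("b", 10.2, 99.0),
    Report("c", 9.0, 90.0),   # stale at now=100
    Report("a", 10.1, 99.5),  # supersedes node a's earlier report
]
print(try_update(reports, now=100.0))  # None: only 2 fresh nodes
reports.append(Report("d", 10.05, 97.0))
print(try_update(reports, now=100.0))  # 10.1
```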

Approach

Current implementations of Decentralized Data Integrity prioritize modularity, allowing protocols to select specific data validation methods based on their risk tolerance and liquidity requirements. Some protocols utilize Zero-Knowledge Proofs to verify the provenance of data without revealing sensitive source information, while others employ Time-Weighted Average Price models to smooth out short-term volatility and mitigate the impact of flash-loan-driven price manipulation.
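The smoothing effect of a TWAP is easy to quantify. Here is a minimal, direct calculation, assuming each observed price holds until the next observation. On-chain implementations commonly store a cumulative price accumulator instead (Uniswap v2's oracle design is one example) and derive the average from the difference between two snapshots. The window length, prices, and the `twap` name are illustrative assumptions.

```python
def twap(observations: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average price over [first timestamp, end_time].

    `observations` are (timestamp, price) pairs sorted by time; each price
    is assumed to hold until the next observation (or until end_time).
    """
    if not observations or end_time <= observations[0][0]:
        raise ValueError("need at least one observation before end_time")
    boundaries = [t for t, _ in observations[1:]] + [end_time]
    weighted = sum(p * (t_next - t) for (t, p), t_next in zip(observations, boundaries))
    return weighted / (end_time - observations[0][0])


# A 10-minute window: the price sits at 100 until a flash-loan-style spike
# to 1000 during the last 12 seconds.
window = [(0.0, 100.0), (588.0, 1000.0)]
print(twap(window, end_time=600.0))  # 118.0, versus a spot price of 1000
```

A longer window dampens brief spikes further but makes the feed lag genuine moves. A TWAP also does not defend against manipulation sustained across the whole window; it only raises the capital and time an attacker must commit.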

Modern protocols mitigate data risk by integrating multiple validation layers, combining on-chain consensus with off-chain cryptographic proofs.

The strategic deployment of these systems requires an understanding of the trade-off between speed and security. High-frequency derivative markets demand low-latency data, which can sometimes conflict with the time-intensive process of multi-node consensus. Architects are increasingly utilizing Optimistic Oracles, where data is assumed correct unless challenged within a specific window, effectively balancing efficiency with rigorous verification.
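The optimistic pattern amounts to a small state machine: an asserted value becomes final only after its challenge window closes undisputed. The sketch below is a hedged simplification. The `Assertion` class, field names, and the two-hour window are hypothetical. Production optimistic oracles also require bonds from asserters and disputers, and they route disputes to a separate resolution mechanism, both of which are omitted here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Assertion:
    value: float
    asserted_at: float         # seconds
    challenge_window_s: float
    disputed: bool = False

    def dispute(self, now: float) -> bool:
        """Open a dispute if the window is still open; return whether it succeeded."""
        if not self.disputed and now < self.asserted_at + self.challenge_window_s:
            self.disputed = True
            return True
        return False

    def settled_value(self, now: float) -> Optional[float]:
        """The value is usable only once the window closes without a dispute."""
        if self.disputed:
            return None  # escalated: the slow path ("latency during dispute")
        if now >= self.asserted_at + self.challenge_window_s:
            return self.value
        return None  # window still open


honest = Assertion(value=2500.0, asserted_at=0.0, challenge_window_s=7200.0)
print(honest.settled_value(3600.0))  # None: still inside the window
print(honest.settled_value(7200.0))  # 2500.0

bad = Assertion(value=9999.0, asserted_at=0.0, challenge_window_s=7200.0)
bad.dispute(100.0)
print(bad.settled_value(10_000.0))   # None: awaiting dispute resolution
```

The happy path costs one assertion and a wait, which is where the throughput advantage comes from. The latency cost appears only when someone disputes.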

| Methodology | Primary Benefit | Primary Constraint |
|---|---|---|
| Optimistic Validation | High Throughput | Latency During Dispute |
| Zero-Knowledge Proofs | Privacy and Fast Verification | Proof-Generation Overhead |
| Staked Consensus | Economic Security | Capital Inefficiency |

Evolution

The trajectory of Decentralized Data Integrity has shifted from basic price feeds to complex state verification, encompassing proof of reserves and cross-chain messaging. Initially, the focus was solely on ensuring that a price arrived on-chain correctly. Today, the scope has expanded to include the verification of entire asset balances and the integrity of collateralization ratios across disparate blockchain networks.
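Proof of reserves is often built on a Merkle commitment to account balances. A custodian publishes a single root hash, and each user can check that their balance is included with a short proof. The sketch below shows inclusion proofs only, using SHA-256 from the standard library. The leaf encoding, the rule of duplicating the last node on odd levels, and all names are illustrative assumptions. Comparing the committed liabilities with verifiable reserves is a separate step (Merkle-sum trees, which carry balance totals up the tree, are one approach).

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(account: str, balance: int) -> bytes:
    # Simplified encoding; production trees also domain-separate leaves
    # from internal nodes to prevent second-preimage tricks.
    return h(f"{account}:{balance}".encode())


def _pad(level: list[bytes]) -> list[bytes]:
    # Duplicate the last node when a level has an odd length.
    return level + [level[-1]] if len(level) % 2 else level


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    levels = [leaves]
    while len(levels[-1]) > 1:
        cur = _pad(levels[-1])
        levels.append([h(cur[i] + cur[i + 1]) for i in range(0, len(cur), 2)])
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each tagged with whether it sits on the left."""
    proof = []
    for level in levels[:-1]:
        level = _pad(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf_hash: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    acc = leaf_hash
    for sibling, sibling_is_left in proof:
        acc = h(sibling + acc) if sibling_is_left else h(acc + sibling)
    return acc == root


balances = [("alice", 120), ("bob", 75), ("carol", 300)]
levels = build_levels([leaf(a, b) for a, b in balances])
root = levels[-1][0]  # the single commitment the custodian publishes

proof = prove(levels, 2)
print(verify(leaf("carol", 300), proof, root))  # True
print(verify(leaf("carol", 999), proof, root))  # False: altered balance
```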

This expansion reflects a broader shift toward a multi-chain financial landscape, where value moves constantly between networks and the risks of data fragmentation are significant. The infrastructure now supports sophisticated Derivative Clearing, where the integrity of the data determines margin requirements and liquidation thresholds in real time. The evolution of these systems mirrors the history of auditing in traditional finance, moving from periodic manual checks to continuous, automated verification.
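To see why feed integrity directly controls liquidations, consider a single collateralized position evaluated at the oracle price. The sketch assumes a simple rule: the position is liquidatable when collateral value divided by debt falls below a minimum ratio. The 1.5 ratio, the position sizes, and the function names are hypothetical. Real protocols add liquidation bonuses, multiple collateral types, and price-confidence checks.

```python
def collateral_ratio(collateral_units: float, oracle_price: float, debt: float) -> float:
    """Collateral value (at the oracle price) divided by outstanding debt."""
    return collateral_units * oracle_price / debt


def is_liquidatable(
    collateral_units: float, oracle_price: float, debt: float, min_ratio: float = 1.5
) -> bool:
    return collateral_ratio(collateral_units, oracle_price, debt) < min_ratio


def liquidation_price(collateral_units: float, debt: float, min_ratio: float = 1.5) -> float:
    """Oracle price at which the position crosses the liquidation threshold."""
    return debt * min_ratio / collateral_units


units, debt = 10.0, 1800.0
print(liquidation_price(units, debt))       # 270.0
print(is_liquidatable(units, 300.0, debt))  # False: honest feed
print(is_liquidatable(units, 250.0, debt))  # True: a corrupted print of 250 liquidates
```

Nothing in the liquidation logic itself is wrong in the corrupted case. The engine faithfully executes on a bad input, which is exactly the failure mode that decentralized validation of the feed is meant to prevent.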

The shift is not merely structural but fundamental, changing the nature of how financial trust is established and maintained.

Horizon

The future of Decentralized Data Integrity lies in the integration of Hardware-Level Validation, in which trusted execution environments (TEEs) add a layer of security at the processor level. This will enable the verification of complex, real-world data sets that were previously too computationally expensive to process on-chain. As the boundaries between digital and physical assets blur, high-fidelity data integrity is likely to become a baseline requirement for all institutional-grade decentralized applications.

Future integrity protocols will leverage hardware-based verification to bridge the gap between real-world data and smart contract execution.

We are entering a phase where the protocols that provide the most resilient and transparent data will capture the majority of liquidity. The competition will no longer be about which network is fastest, but about which network provides the most verifiable and tamper-proof data foundation. This shift will force a consolidation of data providers, as only those with robust economic and cryptographic safeguards will remain viable in an adversarial market.