Essence

Decentralized Data Access is the architectural decoupling of information retrieval from centralized intermediaries, establishing a trust-minimized layer for market intelligence. It serves as the infrastructure for verifiable, real-time ingestion of off-chain pricing, volatility surfaces, and historical trade data directly into smart contract environments. By removing reliance on any single data feed, this mechanism helps derivatives protocols maintain integrity during high-volatility events and reduces the risk of systemic failure caused by stale or manipulated information.

Decentralized data access provides the foundational layer for trust-minimized market intelligence within automated derivative protocols.

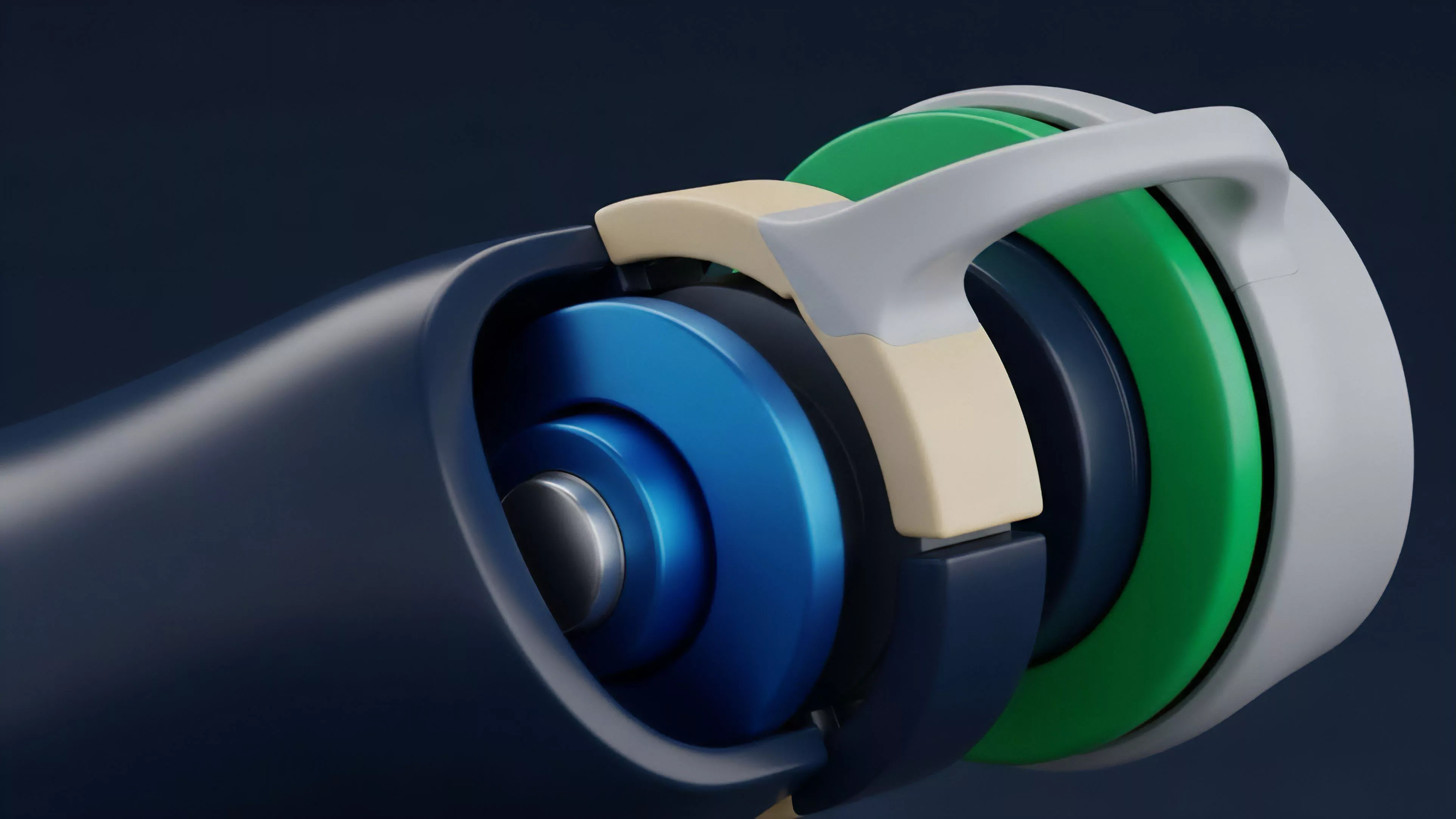

The core utility lies in aggregating reports from independent validator nodes, which reach consensus on specific data points before committing them to the blockchain. This removes single points of failure, effectively creating a decentralized oracle network capable of delivering sub-second updates. The financial significance is profound: it enables the creation of sophisticated options products, such as exotic volatility instruments, that require high-frequency data fidelity to maintain accurate pricing models and efficient collateral management.

Origin

The necessity for Decentralized Data Access grew out of the inherent limitations of early decentralized exchanges.

Initial iterations relied on centralized APIs, creating a paradoxical situation where protocols aiming for decentralization remained tethered to legacy infrastructure. Developers realized that a chain is only as secure as the data it consumes. If the input is compromised, the smart contract logic, no matter how immutable, becomes a vector for financial extraction.

- Information asymmetry: Early market participants faced significant disadvantages due to uneven access to real-time price discovery.

- Oracle manipulation: Malicious actors exploited low-liquidity pools to artificially skew price feeds, leading to cascading liquidations.

- Protocol fragmentation: Diverse ecosystems lacked a standardized method for cross-chain data verification, hindering liquidity depth.

This realization shifted the focus toward building modular, decentralized infrastructure capable of providing cryptographically signed data. The evolution of consensus mechanisms allowed for the development of decentralized oracle networks, which transformed data access from a bottleneck into a competitive advantage for high-performance trading protocols.

Theory

The mathematical framework underpinning Decentralized Data Access relies on the interaction between validator stake-weighting and statistical outlier detection. To maintain data integrity, protocols use a median-aggregation function, which limits the influence of adversarial nodes attempting to inject corrupt pricing information: as long as adversaries hold less than half of the aggregate weight, the median stays within the range of honestly reported values.

This is essentially a game-theoretic approach to truth, in which the system is designed so that the cost of an attack significantly outweighs the potential profit from it.
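
The aggregation step described above can be illustrated with a minimal Python sketch. It is not any specific protocol's implementation: the function names, the `(price, stake)` report format, and the use of a median-absolute-deviation (MAD) filter with cutoff `k` are all assumptions chosen for illustration.

```python
from statistics import median


def filter_outliers(reports, k=3.0):
    """Drop reports whose price is more than k MADs from the plain median.

    reports: list of (price, stake) tuples (hypothetical format).
    k: cutoff multiplier, an assumed tuning parameter.
    """
    prices = [price for price, _ in reports]
    mid = median(prices)
    mad = median([abs(price - mid) for price in prices])
    if mad == 0:
        # At least half the prices are identical; skip filtering and
        # let the weighted median handle any stragglers.
        return list(reports)
    return [(p, s) for p, s in reports if abs(p - mid) <= k * mad]


def weighted_median(reports):
    """Return the price at which cumulative stake first reaches half the total."""
    ordered = sorted(reports)
    total = sum(stake for _, stake in ordered)
    cumulative = 0.0
    for price, stake in ordered:
        cumulative += stake
        if cumulative >= total / 2:
            return price
    raise ValueError("no reports to aggregate")


def aggregate(reports):
    """Outlier filter followed by a stake-weighted median."""
    if not reports:
        raise ValueError("no reports to aggregate")
    return weighted_median(filter_outliers(reports))


# Four honest validators and one adversary reporting a wildly high price.
reports = [(100.0, 10), (100.2, 12), (99.9, 8), (100.1, 9), (250.0, 5)]
print(aggregate(reports))  # 100.1
```

In this example the adversarial report of 250.0 is discarded by the filter, and even without the filter its small stake would not be enough to move the weighted median.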

| Parameter | Centralized Feed | Decentralized Access |
| --- | --- | --- |
| Trust Model | Reputation-based | Cryptographically verified |
| Failure Mode | Single point of failure | Byzantine fault tolerance |
| Latency | Low | Variable based on consensus |

The integrity of decentralized data access is maintained through Byzantine fault-tolerant consensus mechanisms that penalize adversarial reporting.

In the context of options, the system must account for the volatility skew, which requires high-resolution data points to calibrate Black-Scholes or local volatility models effectively. When updates arrive too slowly relative to how fast the market is moving, on-chain prices go stale, and the resulting pricing errors produce misaligned option premiums and arbitrage opportunities that drain protocol liquidity. The system must therefore balance update frequency against gas costs, using off-chain aggregation so that less work has to be done on the settlement layer.
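
To make the cost of stale data concrete, the sketch below prices a European call with the standard Black-Scholes formula at a fresh and a stale spot price, then shows one possible update rule. The update rule (push when the price deviates by a threshold or when a heartbeat interval elapses) is an assumption for illustration, not something the text specifies, and all function names and default values are hypothetical.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot, strike, vol, t, r=0.0):
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (r + 0.5 * vol ** 2) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-r * t) * norm_cdf(d2)


def stale_price_error(fresh_spot, stale_spot, strike, vol, t):
    """Premium difference caused by pricing off a stale spot."""
    return bs_call(fresh_spot, strike, vol, t) - bs_call(stale_spot, strike, vol, t)


def should_push_update(last_price, new_price, seconds_since_update,
                       deviation_threshold=0.005, heartbeat_seconds=3600):
    """Assumed policy: update on a large enough move, or after a maximum age."""
    moved = abs(new_price - last_price) / last_price >= deviation_threshold
    expired = seconds_since_update >= heartbeat_seconds
    return moved or expired


# The market has moved from 100 to 103, but the on-chain feed still says 100.
error = stale_price_error(fresh_spot=103.0, stale_spot=100.0,
                          strike=100.0, vol=0.2, t=30 / 365)
print(f"premium understated by {error:.2f} per option")
print(should_push_update(100.0, 103.0, seconds_since_update=12))
```

Tightening `deviation_threshold` shrinks the mispricing window but triggers more on-chain writes, which is the frequency-versus-gas trade-off described above.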

Approach

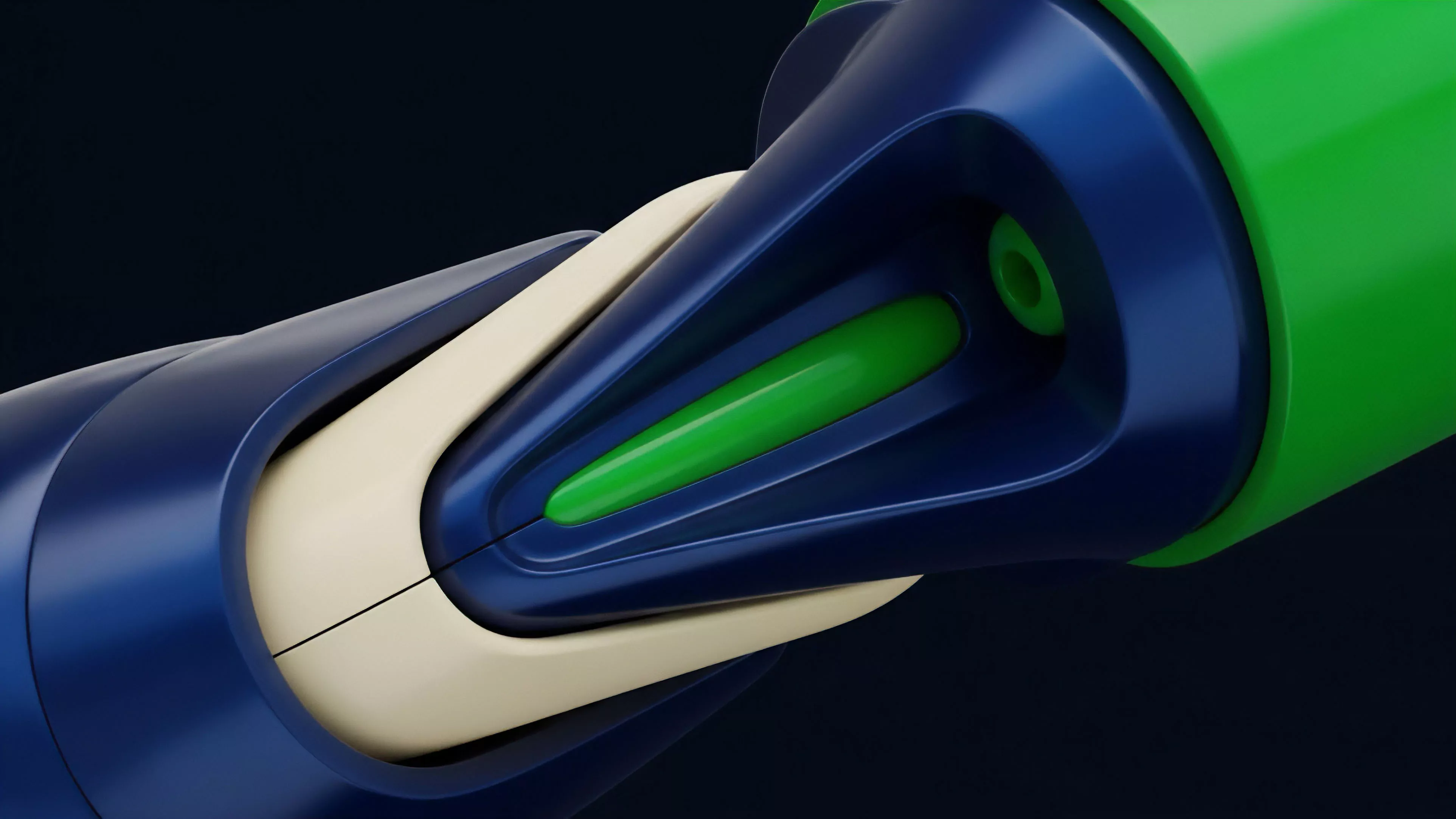

Current implementations of Decentralized Data Access prioritize modularity and interoperability.

Protocols now integrate directly with cross-chain messaging layers, allowing them to pull validated data from any supported network. This architecture allows traders to execute complex strategies across disparate liquidity venues without sacrificing the security of the underlying settlement layer. The focus has moved toward minimizing the latency between the occurrence of a market event and its reflection on-chain.

- Aggregated feeds: Systems pull data from multiple exchanges, weighting them by volume to ensure accurate price discovery.

- Zero-knowledge proofs: Emerging architectures utilize these proofs to verify data validity without revealing the underlying raw inputs.

- Custom oracle designs: Protocols develop specialized feeds tailored to the specific risk parameters of their derivative products.
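
The volume weighting mentioned under aggregated feeds can be sketched as a volume-weighted average price across venues. The `(price, volume)` quote format and the function name are assumptions for illustration; real feeds typically add staleness checks and outlier handling on top.

```python
def volume_weighted_price(quotes):
    """Average venue prices, weighting each by its traded volume.

    quotes: list of (price, volume) tuples, one per exchange (hypothetical format).
    """
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


# A thinly traded venue quoting 101.0 has limited pull on the result.
quotes = [(100.0, 30.0), (101.0, 10.0)]
print(volume_weighted_price(quotes))  # 100.25
```

Weighting by volume means a low-liquidity venue, which is cheaper to manipulate, contributes proportionally less to the reported price.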

This is a stark departure from the static feeds of the past. The system now treats data as a dynamic, evolving asset, constantly recalibrating based on market conditions. One might observe that the complexity of these systems is a direct response to the sophistication of the financial instruments being traded.

The movement of capital across borders, both geographical and protocol-based, is accelerating, necessitating a more robust and responsive infrastructure for data consumption.

Evolution

The transition from primitive, single-source oracles to multi-layered, decentralized data pipelines reflects the broader maturity of the digital asset market. Initially, developers focused on simple spot price retrieval. As the market grew, the requirements shifted toward supporting complex derivative structures.

This necessitated the inclusion of historical data, volume metrics, and implied volatility surfaces. The current landscape is defined by the integration of institutional-grade data providers into decentralized networks, bridging the gap between legacy finance and on-chain execution.

Decentralized data access has evolved from simple spot price delivery into a sophisticated pipeline for institutional-grade market analytics.

This growth has not been linear. We have witnessed periodic cycles of over-engineering followed by sharp corrections, as protocols learned that excessive complexity often introduces new attack vectors. The current focus is on creating lightweight, high-performance solutions that maintain security without compromising the speed required for modern market making.

The industry is currently moving toward specialized, application-specific data layers that prioritize domain expertise over generalized oracle services.

Horizon

The future of Decentralized Data Access lies in the development of predictive, AI-driven data ingestion layers. Instead of merely reflecting historical or current prices, future systems will likely incorporate real-time sentiment analysis and macro-economic indicator feeds to anticipate market volatility before it manifests in price action. This shift will fundamentally change the pricing of options, as models begin to incorporate non-linear, exogenous data inputs directly into the risk-neutral valuation.

| Feature | Current State | Future State |
| --- | --- | --- |
| Data Type | Price and Volume | Predictive Sentiment and Macro |
| Intelligence | Reactive | Proactive |
| Integration | Manual | Autonomous |

As these systems become more autonomous, the role of human governance will likely shift from active management to the setting of high-level risk parameters. The ultimate objective is a self-regulating financial ecosystem where data access is so seamless and secure that the distinction between centralized and decentralized markets becomes irrelevant to the end user. This path leads to a global, permissionless market where sophisticated financial strategies are accessible to all participants with verified, real-time intelligence. What happens to market efficiency when the data feed itself begins to anticipate and price in future systemic shocks before they are realized?