Essence

Data Verification Processes represent the architectural bedrock of decentralized derivative markets. These mechanisms function as the authoritative bridge between off-chain asset price discovery and on-chain settlement execution. Without rigorous verification, the integrity of smart contract margin engines remains vulnerable to external manipulation, rendering automated liquidation protocols ineffective.

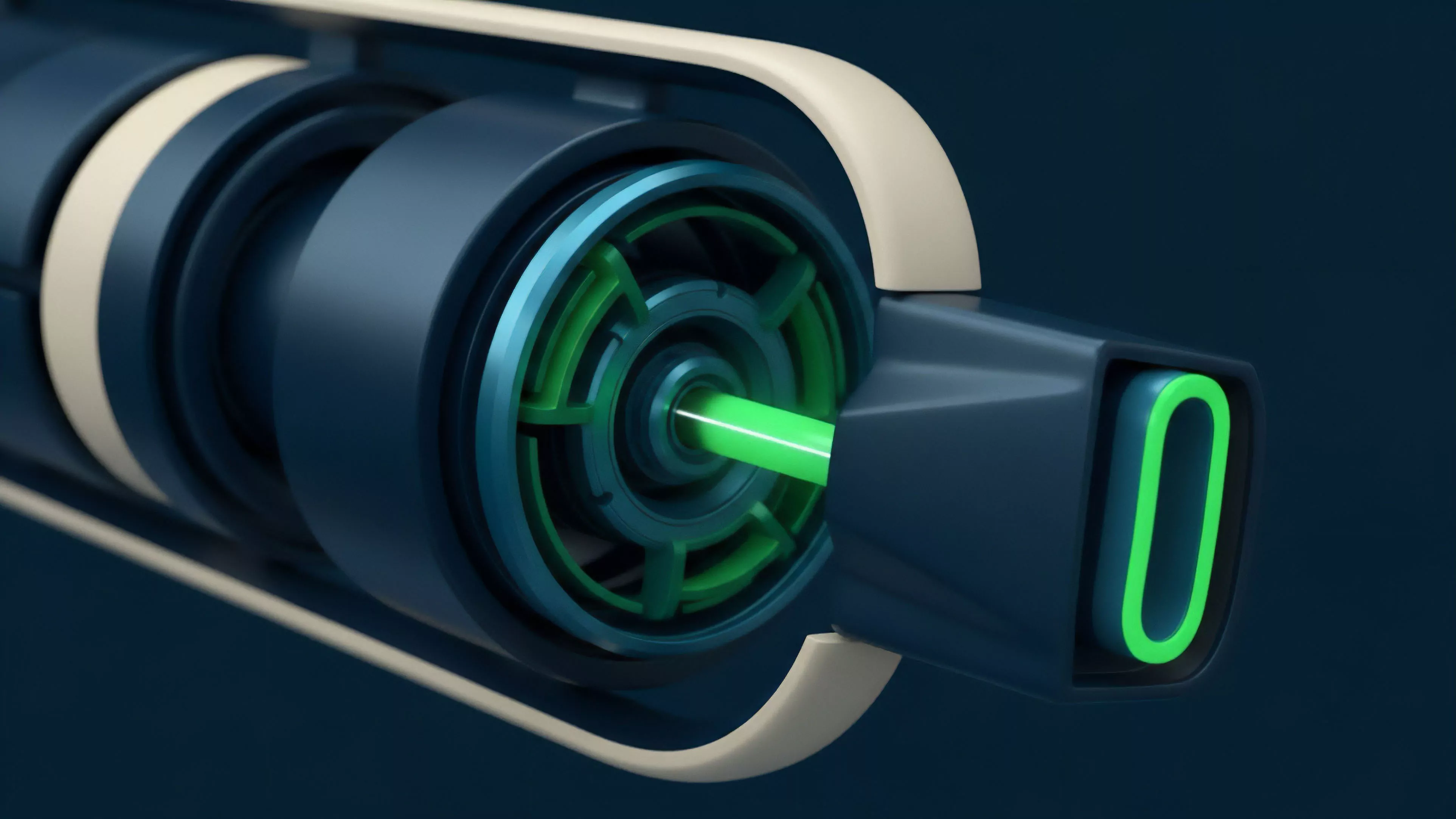

Data verification processes act as the cryptographic bridge ensuring off-chain price discovery translates accurately into on-chain settlement.

The primary objective is to establish a high-fidelity representation of real-world asset states within a permissionless environment. This involves aggregating, filtering, and validating data streams from centralized exchanges, decentralized liquidity pools, and proprietary market makers. The verified data supplies the inputs for mark prices and margin requirements, and for risk sensitivities such as Delta, Gamma, and Vega, which together determine whether open positions remain solvent.

Origin

The necessity for robust Data Verification Processes emerged alongside the first generation of decentralized perpetual swaps and options protocols.

Early iterations relied on single-source price feeds, which exposed platforms to significant oracle manipulation risks. Attackers frequently exploited these simplistic implementations by triggering artificial liquidations through rapid, localized price spikes on thin order books.

Early reliance on single-source price feeds highlighted the systemic fragility inherent in naive data aggregation strategies.

The evolution of these systems traces back to the refinement of decentralized oracle networks. Developers recognized that trust-minimized financial products require a consensus-based approach to data validation. This transition marked a shift from centralized gatekeepers to multi-layered verification architectures designed to withstand adversarial market conditions and protocol-level exploits.

Theory

The theoretical framework governing Data Verification Processes rests upon the principle of adversarial resilience.

A system must assume that every data input is potentially malicious or erroneous. Consequently, the architecture incorporates statistical filters and consensus algorithms to isolate the true market price from noise or coordinated manipulation attempts.

Market Microstructure Integration

- VWAP Aggregation: Systems calculate the Volume Weighted Average Price across multiple venues to mitigate the impact of localized liquidity gaps.

- Deviation Thresholds: Smart contracts enforce strict limits on the rate of change allowed for price updates, preventing rapid volatility spikes from triggering cascading liquidations.

- Latency Arbitrage Protection: Advanced protocols implement randomized delay buffers to neutralize the advantage held by high-frequency actors seeking to front-run oracle updates.
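The first two items in the list above can be illustrated with a minimal sketch. The venue names, volumes, and the 2% limit are hypothetical values chosen for illustration, and the deviation check is written off-chain in Python rather than as contract code; it is not taken from any particular protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VenueQuote:
    """One venue's report: price and traded volume over the sampling window."""
    venue: str
    price: float
    volume: float


def vwap(quotes: list[VenueQuote]) -> float:
    """Volume-weighted average price across venues."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("no volume reported; cannot compute VWAP")
    return sum(q.price * q.volume for q in quotes) / total_volume


def within_deviation(last_price: float, candidate: float, max_move: float) -> bool:
    """True if candidate moves at most max_move (a fraction) away from last_price."""
    return abs(candidate - last_price) / last_price <= max_move


# Hypothetical quotes: venue_c is a thin book with an outlying print.
quotes = [
    VenueQuote("venue_a", 100.0, 50.0),
    VenueQuote("venue_b", 101.0, 30.0),
    VenueQuote("venue_c", 130.0, 1.0),
]
candidate = vwap(quotes)  # about 100.74, versus a simple mean of about 110.33
accepted = within_deviation(last_price=100.0, candidate=candidate, max_move=0.02)
```

Because the outlying print carries almost no volume, it barely moves the aggregate, and the update stays inside the assumed 2% band. A simple mean of the same three prices would not.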

Quantitative Modeling Parameters

| Parameter | Verification Role |
| --- | --- |
| Time-Weighted Average | Smooths volatility for margin calls |
| Median Filtering | Removes extreme outliers from data feeds |
| Confidence Intervals | Determines validity of price updates |
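As a rough illustration of the three parameters in the table, the sketch below implements each one as a small function. The function names, sample values, and the 1% width limit are assumptions made for this example. The confidence check assumes each update arrives with a reported uncertainty half-width, which not every feed provides.

```python
from statistics import median


def median_price(reports: list[float]) -> float:
    """Median of reporter prices; a single extreme report cannot drag it far."""
    if not reports:
        raise ValueError("no reports")
    return median(reports)


def twap(samples: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average of time-ordered (timestamp, price) samples.

    Each price is weighted by how long it was in force: until the next
    sample, or until end_time for the last sample.
    """
    if not samples:
        raise ValueError("no samples")
    duration = end_time - samples[0][0]
    if duration <= 0:
        raise ValueError("window has no duration")
    boundaries = samples[1:] + [(end_time, 0.0)]  # sentinel closes the last interval
    weighted_sum = 0.0
    for (start, price), (stop, _) in zip(samples, boundaries):
        weighted_sum += price * (stop - start)
    return weighted_sum / duration


def confident_enough(price: float, half_width: float, max_relative_width: float) -> bool:
    """Accept an update only if its reported uncertainty band is narrow enough."""
    return half_width / price <= max_relative_width


filtered = median_price([100.1, 99.9, 100.0, 250.0, 100.2])           # 100.1
smoothed = twap([(0.0, 100.0), (10.0, 110.0), (40.0, 100.0)], 60.0)   # 105.0
valid = confident_enough(price=100.0, half_width=0.5, max_relative_width=0.01)
```

The median ignores the 250.0 report entirely, while the time-weighted average gives the 110.0 price weight only for the 30 seconds it was in force.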

The mathematical rigor applied to these processes determines how efficiently liquidation thresholds can be set. If the verification lag exceeds the speed of market movements, the protocol risks insolvency because collateral is valued at outdated prices. Update frequency and filter windows must therefore be calibrated to the volatility of the underlying asset.
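The lag risk described above can be made concrete with a small sketch: a staleness guard that refuses prices older than a maximum age, plus the arithmetic of how much collateral value is overstated when a stale price is used. The function names and numbers are hypothetical, and real margin engines apply further adjustments that are omitted here.

```python
from typing import Optional


def usable_price(price: float, published_at: float, now: float,
                 max_age_seconds: float) -> Optional[float]:
    """Return the price only if it is fresh enough; otherwise None."""
    if now - published_at > max_age_seconds:
        return None
    return price


def collateral_overstatement(units: float, stale_price: float,
                             market_price: float) -> float:
    """Value by which collateral is overstated when priced at a stale quote."""
    return units * (stale_price - market_price)


# A quote published 45 seconds ago fails an assumed 30-second freshness limit.
fresh = usable_price(100.0, published_at=1_000.0, now=1_045.0, max_age_seconds=30.0)

# If the market has fallen to 92 while the feed still shows 100,
# 10 units of collateral are overvalued by 80.
gap = collateral_overstatement(units=10.0, stale_price=100.0, market_price=92.0)
```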

Approach

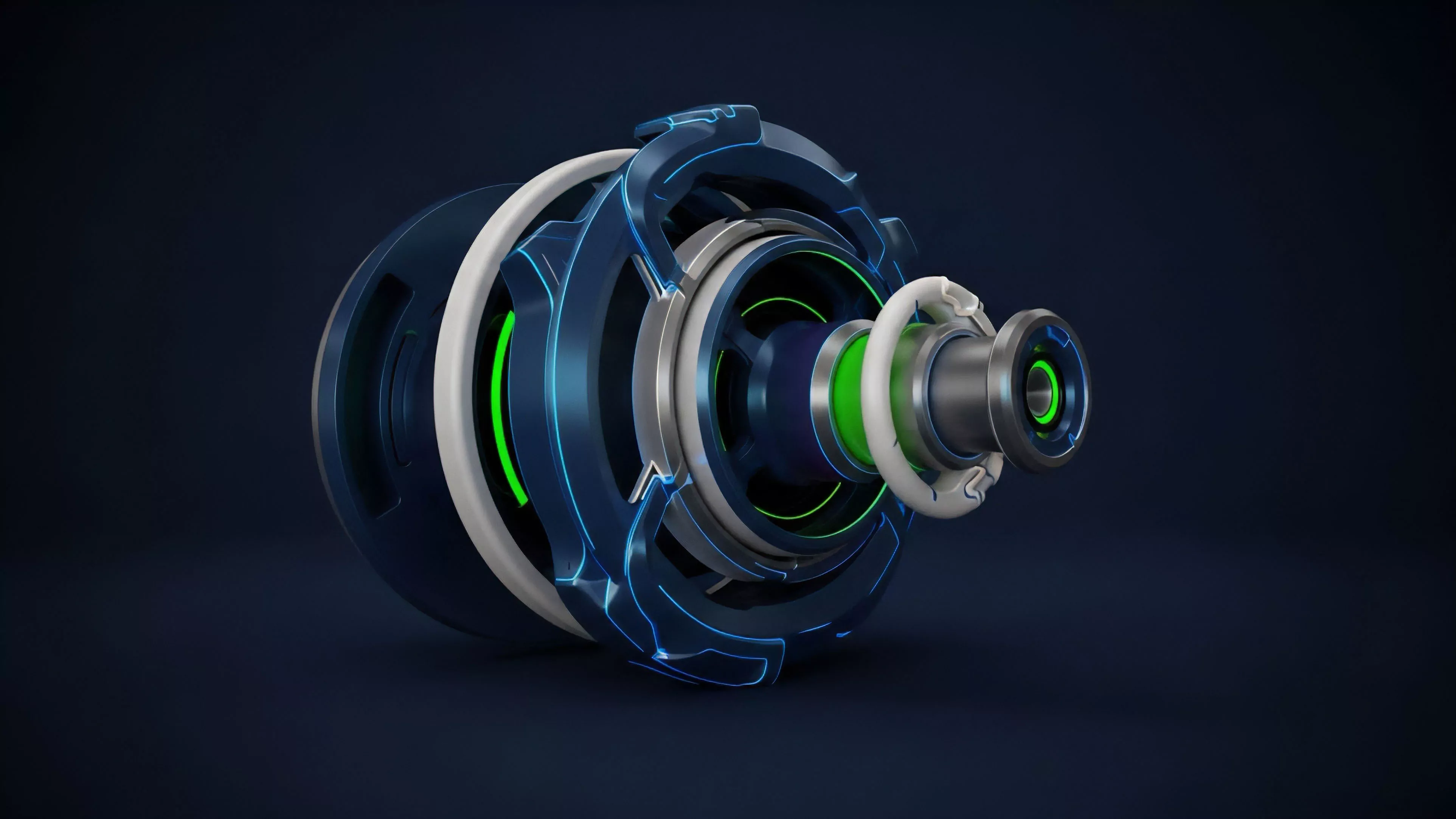

Current strategies prioritize redundancy and cryptographic proofing.

Protocols are moving away from monolithic data feeds toward decentralized networks that incentivize honest reporting through game-theoretic mechanisms. The design goal is that the cost of manipulating the data feed exceeds the potential profit from the derivative position.

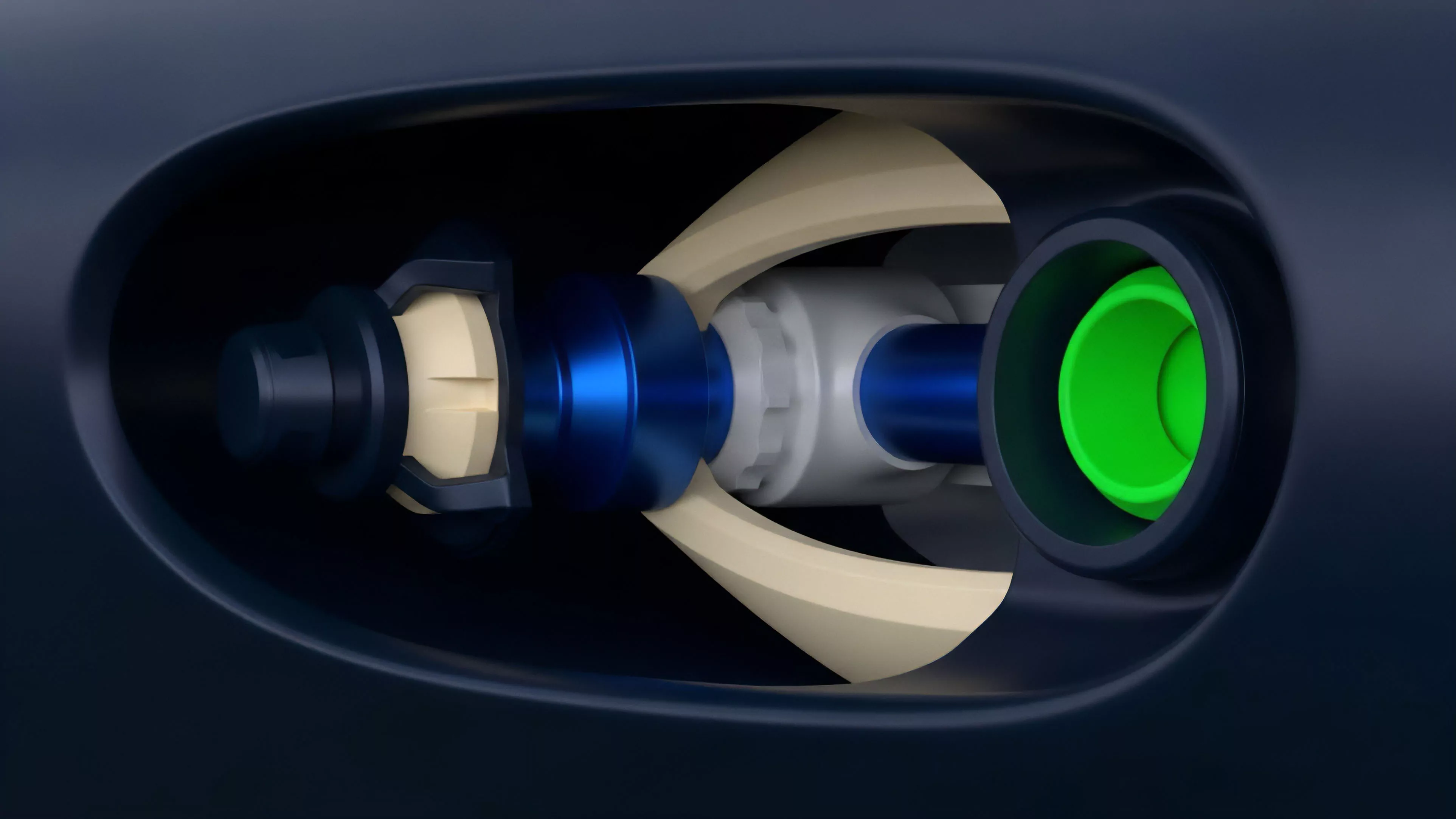

Decentralized verification networks leverage economic incentives to ensure that the cost of manipulation remains prohibitively high for actors.

Operational Validation Framework

- Data Source Diversity: Integrating inputs from Tier-1 exchanges and decentralized order books to broaden the liquidity base.

- Proof of Stake Validation: Requiring nodes to stake collateral, which is subject to slashing if they submit fraudulent or inaccurate price data.

- Zk-Proof Integration: Utilizing zero-knowledge proofs to verify the computational integrity of off-chain price calculations without exposing sensitive source data.

The integration of Zk-proofs can significantly reduce on-chain storage and computation requirements, since only a succinct proof and the resulting price need to be posted rather than the full source data, while maintaining a high level of security. This is a critical development for scaling derivative platforms to handle higher transaction volumes without sacrificing the integrity of the underlying price discovery mechanism.
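The staking and slashing item in the framework above, together with the cost-of-manipulation argument, can be sketched as follows. The tolerance, slash fraction, and stake sizes are hypothetical. Real networks define their own reference price, dispute windows, and penalty rules, so this is one simple possibility rather than any protocol's actual logic.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Reporter:
    name: str
    stake: float
    reported_price: float


def settle_round(reporters: list[Reporter], tolerance: float,
                 slash_fraction: float) -> tuple[float, dict[str, float]]:
    """Slash reporters whose price deviates from the round median by more than tolerance.

    Returns the reference price and a mapping of reporter name to penalty.
    """
    reference = median([r.reported_price for r in reporters])
    penalties: dict[str, float] = {}
    for r in reporters:
        if abs(r.reported_price - reference) / reference > tolerance:
            penalty = r.stake * slash_fraction
            r.stake -= penalty
            penalties[r.name] = penalty
    return reference, penalties


def manipulation_is_unprofitable(stake_at_risk: float, expected_profit: float) -> bool:
    """The design goal: an attacker stands to lose more than they could gain."""
    return stake_at_risk > expected_profit


reporters = [
    Reporter("a", 1_000.0, 100.0),
    Reporter("b", 1_000.0, 100.2),
    Reporter("c", 1_000.0, 120.0),  # far from the median, so it is slashed
]
reference, penalties = settle_round(reporters, tolerance=0.01, slash_fraction=0.5)
```

Under these assumed numbers, reporter `c` loses half its stake, and an attack stays unattractive only while the stake at risk exceeds the profit available from the derivative position.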

Evolution

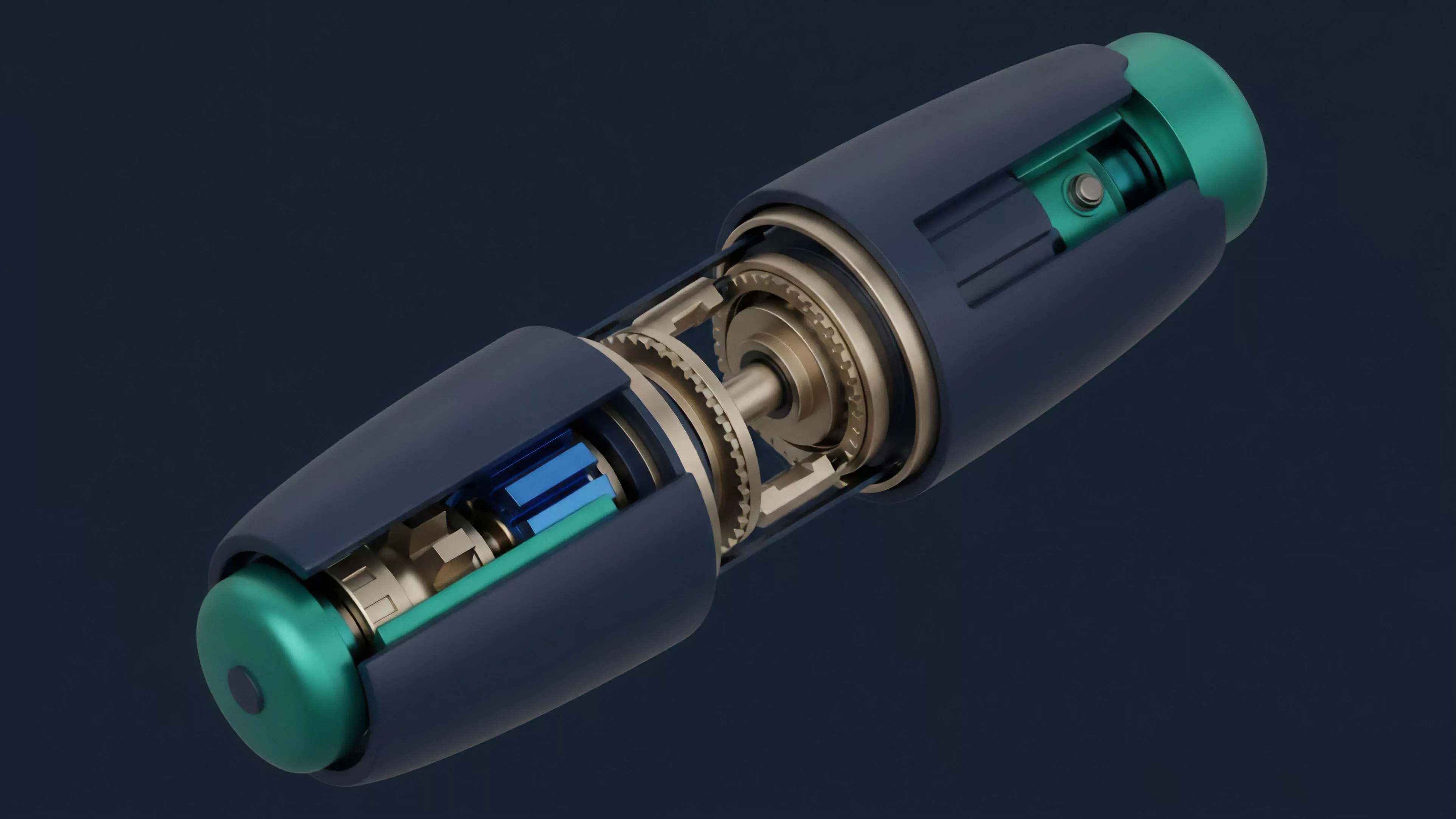

The progression of Data Verification Processes has moved from single-source and basic median-price feeds to sophisticated, multi-factor validation engines. Initially, protocols were limited by the throughput of the underlying blockchain, often resulting in stale data.

Modern architectures now leverage Layer 2 scaling solutions to process data updates with sub-second latency, dramatically improving capital efficiency.

Modern verification architectures leverage layer two scaling to minimize latency and improve the precision of margin engine calculations.

This evolution also encompasses the shift toward cross-chain verification. As liquidity fragments across disparate ecosystems, protocols must now verify prices from multiple chains to provide unified settlement services. This requires a complex orchestration of cross-chain messaging protocols and synchronized validator sets to prevent arbitrageurs from exploiting price discrepancies between chains.

Horizon

The future of Data Verification Processes lies in the development of autonomous, self-correcting oracle systems.

These systems will use machine learning models to detect anomalous price behavior in real time and dynamically adjust the weight given to each data source. This will reduce the reliance on static thresholds and improve the protocol’s ability to survive black-swan events.
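The idea of dynamic source weighting can be sketched without a learned model. The example below substitutes a simple robust statistic, the median absolute deviation (MAD), as a stand-in for the machine learning component, so it illustrates only the reweighting step. The source names and the cutoff of 3 are assumptions.

```python
from statistics import median


def reweight(prices: dict[str, float], cutoff: float = 3.0) -> dict[str, float]:
    """Assign each source a normalized weight that shrinks as it strays from the median.

    The score is the distance from the median in units of the MAD.
    Sources scoring above cutoff get zero weight.
    """
    center = median(prices.values())
    mad = median(abs(p - center) for p in prices.values())
    weights: dict[str, float] = {}
    for source, price in prices.items():
        distance = abs(price - center)
        if mad == 0:
            # Most sources agree exactly; any deviation is treated as anomalous.
            score = 0.0 if distance == 0 else float("inf")
        else:
            score = distance / mad
        weights[source] = 0.0 if score > cutoff else 1.0 / (1.0 + score)
    total = sum(weights.values())
    if total == 0:
        raise ValueError("every source was rejected; cutoff is too strict")
    return {source: w / total for source, w in weights.items()}


def weighted_price(prices: dict[str, float], weights: dict[str, float]) -> float:
    """Aggregate price using the normalized weights."""
    return sum(prices[s] * weights[s] for s in prices)


# Hypothetical sources; thin_venue prints far away from the others.
prices = {"cex_a": 100.0, "cex_b": 100.1, "dex_pool": 99.9, "thin_venue": 112.0}
weights = reweight(prices)
aggregate = weighted_price(prices, weights)
```

Here the outlying venue receives zero weight for this round, but it is not permanently excluded: its weight recovers as soon as its prices fall back in line.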

Strategic Developments

- Real-time Anomaly Detection: Integrating AI-driven filters to identify and isolate flash-crash scenarios before they impact collateral valuation.

- Programmable Oracles: Allowing developers to customize verification logic for specific asset classes, tailoring risk management to the unique volatility profiles of various derivatives.

- Threshold Cryptography: Enhancing the security of data aggregation by requiring a minimum number of nodes to sign off on price updates, ensuring no single point of failure exists.
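The sign-off requirement in the last item can be approximated by a quorum check. This sketch is deliberately simplified: it uses HMAC tags from the Python standard library as a stand-in for node signatures and merely counts valid ones. A real threshold scheme would instead combine partial signatures into a single signature verifiable against one group public key. Node identifiers, keys, and the message format are all hypothetical.

```python
import hashlib
import hmac


def sign(key: bytes, message: bytes) -> bytes:
    """Stand-in for a node signature: an HMAC-SHA256 tag over the message."""
    return hmac.new(key, message, hashlib.sha256).digest()


def quorum_reached(message: bytes, signatures: dict[str, bytes],
                   node_keys: dict[str, bytes], threshold: int) -> bool:
    """True if at least `threshold` known nodes supplied a valid tag.

    Signatures are keyed by node id, so each node counts at most once.
    """
    valid = 0
    for node_id, signature in signatures.items():
        key = node_keys.get(node_id)
        if key is not None and hmac.compare_digest(signature, sign(key, message)):
            valid += 1
    return valid >= threshold


node_keys = {"node_1": b"key-1", "node_2": b"key-2", "node_3": b"key-3"}
update = b"ASSET-USD:2000.50:round=42"  # illustrative message format
signatures = {
    "node_1": sign(node_keys["node_1"], update),
    "node_2": sign(node_keys["node_2"], update),
    "node_3": b"forged",  # invalid tag, not counted
}
two_of_three = quorum_reached(update, signatures, node_keys, threshold=2)
three_of_three = quorum_reached(update, signatures, node_keys, threshold=3)
```

With a 2-of-3 threshold the update is accepted despite one bad signature. Requiring all three would reject it, which shows the trade-off between liveness and the number of nodes an attacker must compromise.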

As decentralized markets mature, the ability to verify data with high assurance will influence which protocols gain market share. The technical struggle will focus on balancing computational overhead against the need for accurate prices at sub-second latency in volatile market conditions.