Essence

The functional architecture of crypto options markets depends on the integrity and timeliness of their underlying data streams. These streams are not simply price feeds; they are the high-frequency information channels that drive risk calculation, settlement logic, and automated collateral management. In a decentralized environment, where a counterparty cannot simply be trusted to meet its obligations, the data itself becomes the primary source of truth for all financial operations.

As a result, data streams move from a secondary resource to a core financial primitive that defines the parameters of every derivative contract. The reliability of these streams directly determines whether complex financial instruments are viable.

Origin

The genesis of data streams in derivatives can be traced back to traditional finance, where centralized data vendors such as Bloomberg and Refinitiv provided proprietary feeds to institutions. These feeds were high-cost, high-latency, and opaque, effectively acting as a barrier to entry for smaller market participants. The crypto derivatives market began by attempting to replicate this model in a decentralized context, but quickly discovered the inherent vulnerabilities of relying on off-chain data for on-chain settlement.

Early attempts to build options protocols were plagued by oracle manipulation risks, where a single, centralized data source could be exploited to liquidate positions unfairly. The evolution from these initial, flawed architectures to today’s more robust systems required a fundamental re-engineering of data verification. This transition involved moving from a simple “push” model, in which a single source pushes data onto the chain, to a more resilient, aggregated “pull” model, in which protocols query multiple independent sources and settle on a consensus value.
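A minimal Python sketch of why aggregation raises the cost of manipulation, assuming a plain median as the consensus rule (real oracle networks define their own aggregation logic). The source names and quotes are hypothetical, and `single_source_price` and `consensus_price` are illustrative helpers, not any protocol's API.

```python
from statistics import median


def single_source_price(reports: dict[str, float], source: str) -> float:
    """Single-source design: trust whatever one designated feed reports."""
    return reports[source]


def consensus_price(reports: dict[str, float]) -> float:
    """Aggregated design: query every independent source and take the median."""
    if not reports:
        raise ValueError("no reports available")
    return median(reports.values())


# Hypothetical quotes for one asset; "dex_a" has been manipulated downward.
reports = {
    "cex_a": 2000.0,
    "cex_b": 2001.0,
    "cex_c": 1999.0,
    "dex_a": 1500.0,
    "cex_d": 2002.0,
}

print(single_source_price(reports, "dex_a"))  # 1500.0: could trigger unfair liquidations
print(consensus_price(reports))               # 2000.0: the outlier is ignored
```

With five sources, an attacker has to corrupt at least three of them to drag the median outside the range of honest quotes, which is what makes manipulation more expensive than attacking a single feed.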

Theory

The theoretical foundation of options pricing, specifically the Black-Scholes-Merton model, places significant weight on the volatility parameter. The model itself assumes volatility is constant, but in crypto markets the implied volatility backed out of traded prices is not static; it is a dynamic surface that must be constantly refreshed by real-time data streams. The core data requirements for a functioning options protocol therefore extend far beyond the spot price of the underlying asset.
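To show how much the model output depends on the volatility input, here is a short Python sketch of the standard Black-Scholes-Merton price of a European call on a non-dividend-paying asset, plus its vega (sensitivity to volatility). Function names and the example inputs (a 30-day, 10% out-of-the-money call at zero rate) are illustrative assumptions.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def d1(spot: float, strike: float, t: float, rate: float, sigma: float) -> float:
    return (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))


def bs_call(spot: float, strike: float, t: float, rate: float, sigma: float) -> float:
    """European call price; t is years to expiry, rate and sigma are annualized."""
    d_1 = d1(spot, strike, t, rate, sigma)
    d_2 = d_1 - sigma * math.sqrt(t)
    return spot * norm_cdf(d_1) - strike * math.exp(-rate * t) * norm_cdf(d_2)


def bs_vega(spot: float, strike: float, t: float, rate: float, sigma: float) -> float:
    """Derivative of the call price with respect to sigma (per 1.00 of volatility)."""
    return spot * norm_pdf(d1(spot, strike, t, rate, sigma)) * math.sqrt(t)


# Same contract, three volatility inputs: only sigma changes between lines.
for sigma in (0.5, 0.6, 0.8):
    print(sigma, round(bs_call(2000.0, 2200.0, 30 / 365, 0.0, sigma), 2))
```

Because every other input is held fixed, any difference between the printed prices comes from the volatility figure alone, which is why a stale volatility feed mispriced contracts even when the spot feed is accurate.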

A robust system must ingest and process a high volume of data to calculate and maintain the implied volatility surface: the set of implied volatilities across different strikes and expirations. This surface is a multi-dimensional dataset that changes constantly with market sentiment and order flow.
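A minimal sketch of how such a surface can be built, assuming call mid prices are available per (expiry, strike) and that implied volatility is found by bisection, which works because the Black-Scholes call price rises monotonically in sigma. Production systems typically use faster root-finders plus smoothing and arbitrage checks; `implied_vol`, `build_surface`, and the synthetic quotes are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, sigma):
    """Black-Scholes-Merton European call price (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-10):
    """Solve bs_call(sigma) == price by bisection on [lo, hi]."""
    if not bs_call(spot, strike, t, rate, lo) <= price <= bs_call(spot, strike, t, rate, hi):
        raise ValueError("price is not bracketed by the [lo, hi] volatility range")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
    return 0.5 * (lo + hi)


def build_surface(quotes, spot, rate):
    """Map each (expiry_years, strike) call quote to its implied volatility."""
    return {
        (t, strike): implied_vol(price, spot, strike, t, rate)
        for (t, strike), price in quotes.items()
    }


# Synthetic quotes generated from known vols, so the inversion can be checked.
spot, rate = 2000.0, 0.0
true_vols = {
    (30 / 365, 1800.0): 0.70,
    (30 / 365, 2000.0): 0.60,
    (30 / 365, 2200.0): 0.65,
    (90 / 365, 2000.0): 0.58,
}
quotes = {key: bs_call(spot, key[1], key[0], rate, vol) for key, vol in true_vols.items()}
surface = build_surface(quotes, spot, rate)
for (t, strike), vol in sorted(surface.items()):
    print(f"T={t:.3f}y K={strike:.0f} IV={vol:.4f}")
```

In a live system the `quotes` dictionary would be refreshed from the order book on every update, and the whole surface recomputed or incrementally patched.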

Approach

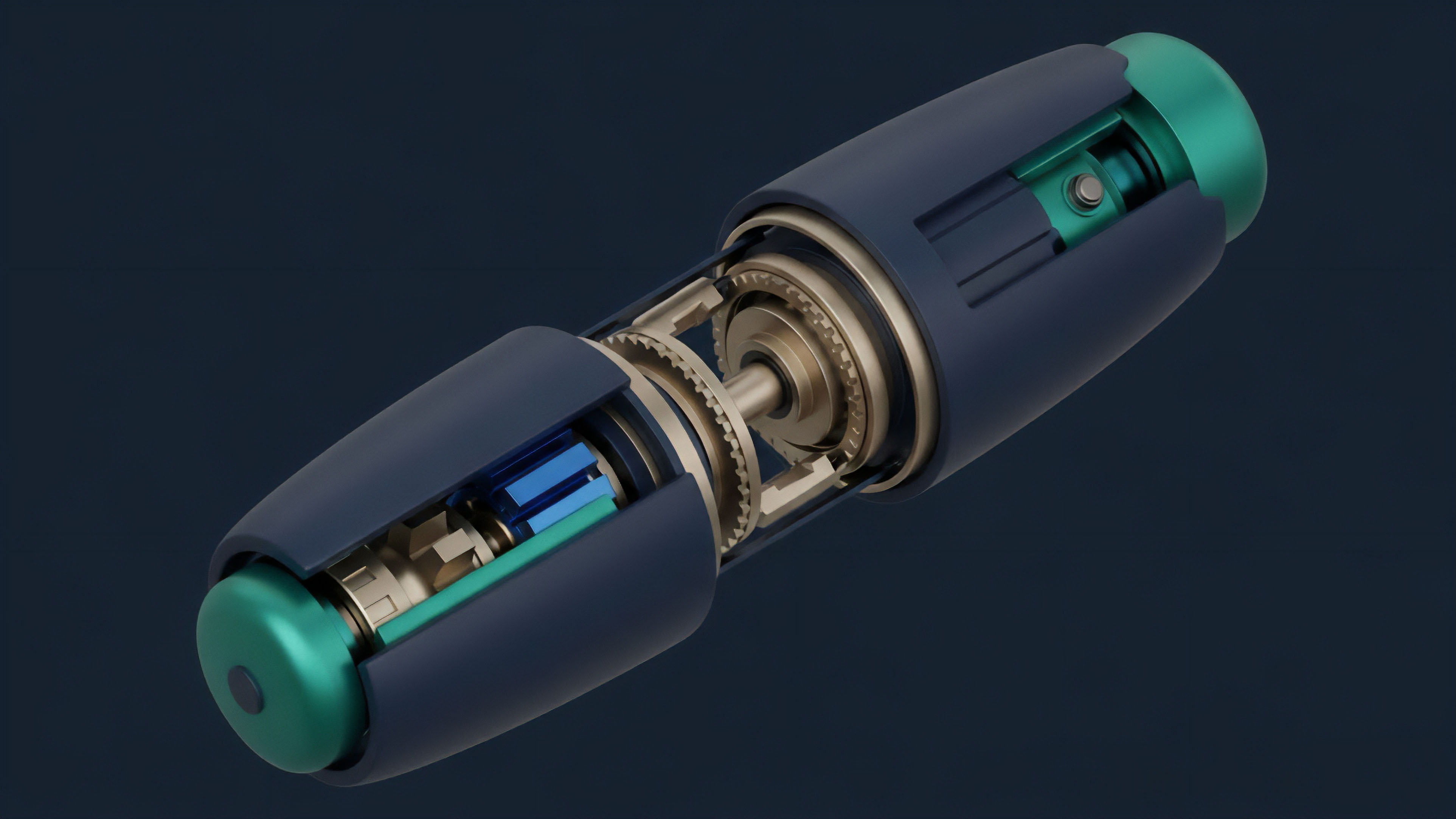

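The current approach to building data streams for crypto options focuses on two primary challenges: data integrity and latency management. Data integrity is addressed through decentralized oracle networks, which aggregate data from multiple off-chain sources and cryptographically sign what they report, so that the origin of each value can be verified on-chain. A signature proves who reported a value, not that the value is correct; aggregation across independent sources is what guards against a bad quote. This combination mitigates single points of failure and makes manipulation significantly more expensive.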
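A sketch of the verification side, under stated assumptions: reports are authenticated with HMAC-SHA256 from the Python standard library as a stand-in for the public-key signatures oracle networks typically use, and a report is accepted only if its tag checks out, it is fresh, and it is the node's first submission. A median is then taken once a quorum is reached. `Report`, `sign`, `aggregate`, `max_age`, `quorum`, and the node keys are all hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass, replace
from statistics import median


@dataclass(frozen=True)
class Report:
    node_id: str
    price: float
    timestamp: int  # Unix seconds when the node observed the price
    tag: bytes      # authentication tag over (node_id, price, timestamp)


def _payload(node_id: str, price: float, timestamp: int) -> bytes:
    return f"{node_id}|{price!r}|{timestamp}".encode()


def sign(key: bytes, node_id: str, price: float, timestamp: int) -> Report:
    tag = hmac.new(key, _payload(node_id, price, timestamp), hashlib.sha256).digest()
    return Report(node_id, price, timestamp, tag)


def aggregate(reports, keys, now, max_age=60, quorum=3):
    """Median of authenticated, fresh, one-per-node reports; fails below quorum."""
    seen, prices = set(), []
    for r in reports:
        key = keys.get(r.node_id)
        if key is None or r.node_id in seen:
            continue  # unknown node or duplicate submission
        expected = hmac.new(key, _payload(r.node_id, r.price, r.timestamp), hashlib.sha256).digest()
        if not hmac.compare_digest(expected, r.tag):
            continue  # altered after signing, or forged
        if now - r.timestamp > max_age:
            continue  # stale report
        seen.add(r.node_id)
        prices.append(r.price)
    if len(prices) < quorum:
        raise RuntimeError(f"only {len(prices)} valid reports, quorum is {quorum}")
    return median(prices)


# Hypothetical node keys and reports.
keys = {f"n{i}": f"secret-{i}".encode() for i in range(1, 6)}
now = 1_700_000_000
reports = [
    sign(keys["n1"], "n1", 2000.0, now - 5),
    sign(keys["n2"], "n2", 2002.0, now - 10),
    sign(keys["n3"], "n3", 1998.0, now - 3),
    replace(sign(keys["n4"], "n4", 2001.0, now - 2), price=1500.0),  # tampered
    sign(keys["n5"], "n5", 2500.0, now - 600),                        # stale
]
print(aggregate(reports, keys, now))  # 2000.0
```

The tampered and stale reports are discarded before aggregation, so only the three valid prices reach the median.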

Latency management is a particular challenge for high-frequency trading in decentralized finance. A price update on an options protocol can be delayed by block times, and that delay creates opportunities for front-running. To counter this, many protocols batch orders and use time-weighted average prices (TWAPs) rather than relying on a single, instantaneous price point.
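A minimal TWAP sketch, assuming each (timestamp, price) observation holds until the next one (a step-function price path). On-chain implementations commonly store a running cumulative price instead, so that a TWAP can be read from two snapshots; this version computes it directly from a list of observations, and the `twap` helper and sample data are hypothetical.

```python
def twap(observations: list[tuple[float, float]], start: float, end: float) -> float:
    """Time-weighted average price over [start, end].

    Each (timestamp, price) observation is treated as the prevailing price
    until the next observation.
    """
    if end <= start:
        raise ValueError("window must have positive length")
    obs = sorted(observations)
    if not obs or obs[0][0] > start:
        raise ValueError("no observation covers the start of the window")
    weighted = 0.0
    for i, (ts, price) in enumerate(obs):
        seg_start = max(ts, start)
        seg_end = min(obs[i + 1][0] if i + 1 < len(obs) else end, end)
        if seg_end > seg_start:
            weighted += price * (seg_end - seg_start)  # price x time it prevailed
    return weighted / (end - start)


# Hypothetical feed: a one-second spike to 500 just before the window closes.
observations = [(0, 100.0), (10, 110.0), (20, 90.0), (39, 500.0)]
print(observations[-1][1])        # instantaneous price: 500.0
print(twap(observations, 0, 40))  # time-weighted price: 107.75
```

The spike dominates the instantaneous reading but carries only one second of weight in the 40-second average, which is why a TWAP is harder to move with a brief, front-run price excursion.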

Data streams are the foundational layer of risk management in decentralized options, enabling the real-time calculation of risk exposures for all participants.
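To make real-time risk calculation concrete, here is a sketch of the recomputation a protocol might run on each data update: mark every position to the latest stream price, sum net delta, and compare equity against a margin requirement. The `Position` type, instrument names, and the margin rule (a fixed rate times absolute net delta times spot) are hypothetical simplifications; actual protocols use their own, often scenario-based, margin models.

```python
from dataclasses import dataclass


@dataclass
class Position:
    instrument: str
    quantity: float    # signed: positive = long, negative = short
    mark_price: float  # per-contract mark from the price/volatility stream
    delta: float       # per-contract delta from the pricing model


def account_risk(collateral: float, positions: list[Position], spot: float,
                 maintenance_rate: float) -> dict:
    """Recompute equity, net delta and a margin check from the latest marks.

    Hypothetical margin rule: requirement = maintenance_rate * |net delta| * spot.
    """
    equity = collateral + sum(p.quantity * p.mark_price for p in positions)
    net_delta = sum(p.quantity * p.delta for p in positions)
    requirement = maintenance_rate * abs(net_delta) * spot
    return {
        "equity": equity,
        "net_delta": net_delta,
        "requirement": requirement,
        "liquidatable": equity < requirement,
    }


book = [
    Position("ETH-30D-2200-C", quantity=-10, mark_price=150.0, delta=0.4),
    Position("ETH-30D-1800-P", quantity=5, mark_price=80.0, delta=-0.3),
]
print(account_risk(10_000.0, book, spot=2000.0, maintenance_rate=0.1))

# A new tick from the data stream: spot jumps and both options reprice.
book = [
    Position("ETH-30D-2200-C", quantity=-10, mark_price=900.0, delta=0.9),
    Position("ETH-30D-1800-P", quantity=5, mark_price=20.0, delta=-0.05),
]
print(account_risk(10_000.0, book, spot=2500.0, maintenance_rate=0.1))
```

The same account flips from healthy to liquidatable purely because the streamed marks and deltas changed, which is why stale or manipulated inputs translate directly into wrong liquidation decisions.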

Evolution

Data streams for crypto options have evolved significantly in complexity. Initially, the requirement was simply for accurate spot prices. The next stage introduced the need for high-frequency implied volatility feeds, as market makers sought to automate their pricing models.

Today, the most advanced data streams are moving beyond simple pricing data to incorporate liquidation and funding rate data from perpetual futures markets. This integration matters because the perpetual futures market acts as a proxy for spot market sentiment and often drives the short-term direction of implied volatility.

Data Stream Evolution in Derivatives

- Phase 1: Spot Price Feeds: Simple, low-frequency data feeds primarily used for basic collateral valuation and settlement.

- Phase 2: Implied Volatility Surfaces: High-frequency feeds providing dynamic volatility data for complex pricing models and automated market maker (AMM) algorithms.

- Phase 3: Cross-Protocol Data Aggregation: Integration of data from multiple derivative markets, including funding rates from perpetual futures, to build a comprehensive view of systemic leverage and risk.
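A small sketch of the Phase 3 idea, assuming funding rates from several perpetual futures venues are combined into one open-interest-weighted figure and then annualized. The 8-hour funding interval is an assumption (intervals vary by venue, hence the `period_hours` parameter), the annualization is simple rather than compounded, and the venue names and numbers are hypothetical.

```python
def oi_weighted_funding(venues: dict[str, tuple[float, float]]) -> float:
    """Open-interest-weighted funding rate per period across venues.

    venues maps a venue name to (funding_rate_per_period, open_interest_usd).
    All rates must refer to the same funding interval.
    """
    total_oi = sum(oi for _, oi in venues.values())
    if total_oi <= 0:
        raise ValueError("total open interest must be positive")
    return sum(rate * oi for rate, oi in venues.values()) / total_oi


def annualize(rate_per_period: float, period_hours: float = 8.0) -> float:
    """Simple (non-compounded) annualization of a per-period funding rate."""
    return rate_per_period * (365 * 24 / period_hours)


# Hypothetical snapshot of three perpetual futures venues, 8-hour funding.
venues = {
    "venue_a": (0.0001, 600e6),
    "venue_b": (0.0003, 300e6),
    "venue_c": (-0.0002, 100e6),
}
rate = oi_weighted_funding(venues)
print(f"{rate:.6f} per 8h, {annualize(rate):.2%} annualized")
```

Weighting by open interest lets the largest venues, where most leverage sits, dominate the aggregate, which matches the goal of a view of systemic leverage rather than a simple average of quotes.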

Horizon

The future of data streams in crypto options points toward stronger cryptographic verification of data and the use of artificial intelligence to predict market dynamics. Zero-knowledge proofs (ZK-proofs) will allow protocols to verify claims about off-chain data, for example that a published value was correctly derived from signed source reports, without revealing the underlying data itself, strengthening both privacy and security. Furthermore, machine learning models will move beyond simply consuming data streams to generating synthetic, predictive volatility surfaces that anticipate future market movements.

These predictive models will not only enhance pricing accuracy but also create new financial primitives where data itself is tradable as an asset.

Data Stream Challenges and Solutions

| Challenge Area | Current Solution | Horizon Solution |
|---|---|---|
| Latency and Front-running | Batch processing, TWAP implementation | Layer 2 data networks, high-frequency oracles |
| Data Integrity | Decentralized oracle aggregation | ZK-proofs for off-chain verification |
| Systemic Risk Analysis | Manual analysis of funding rates | AI-driven correlation and contagion modeling |
