Essence

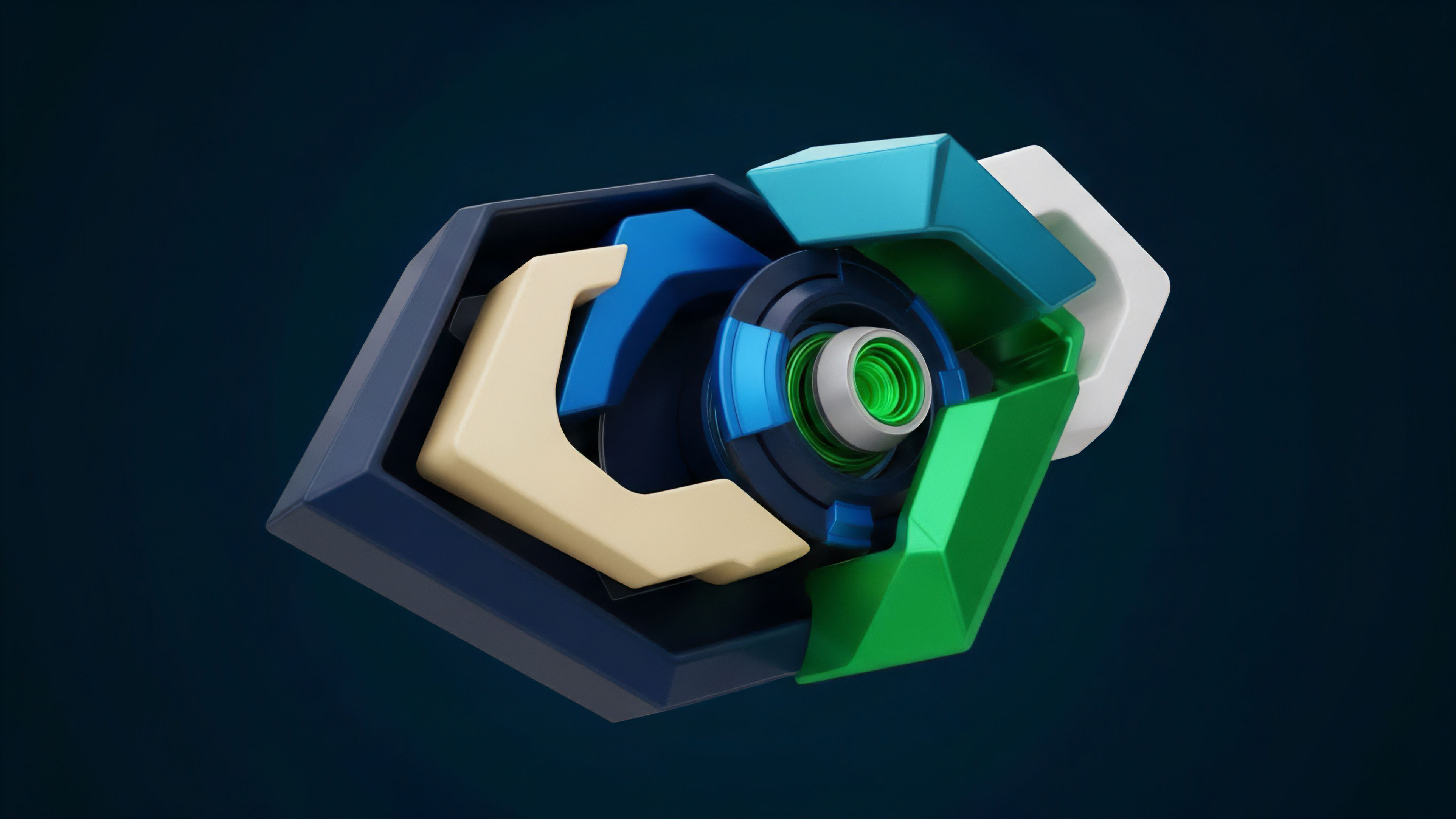

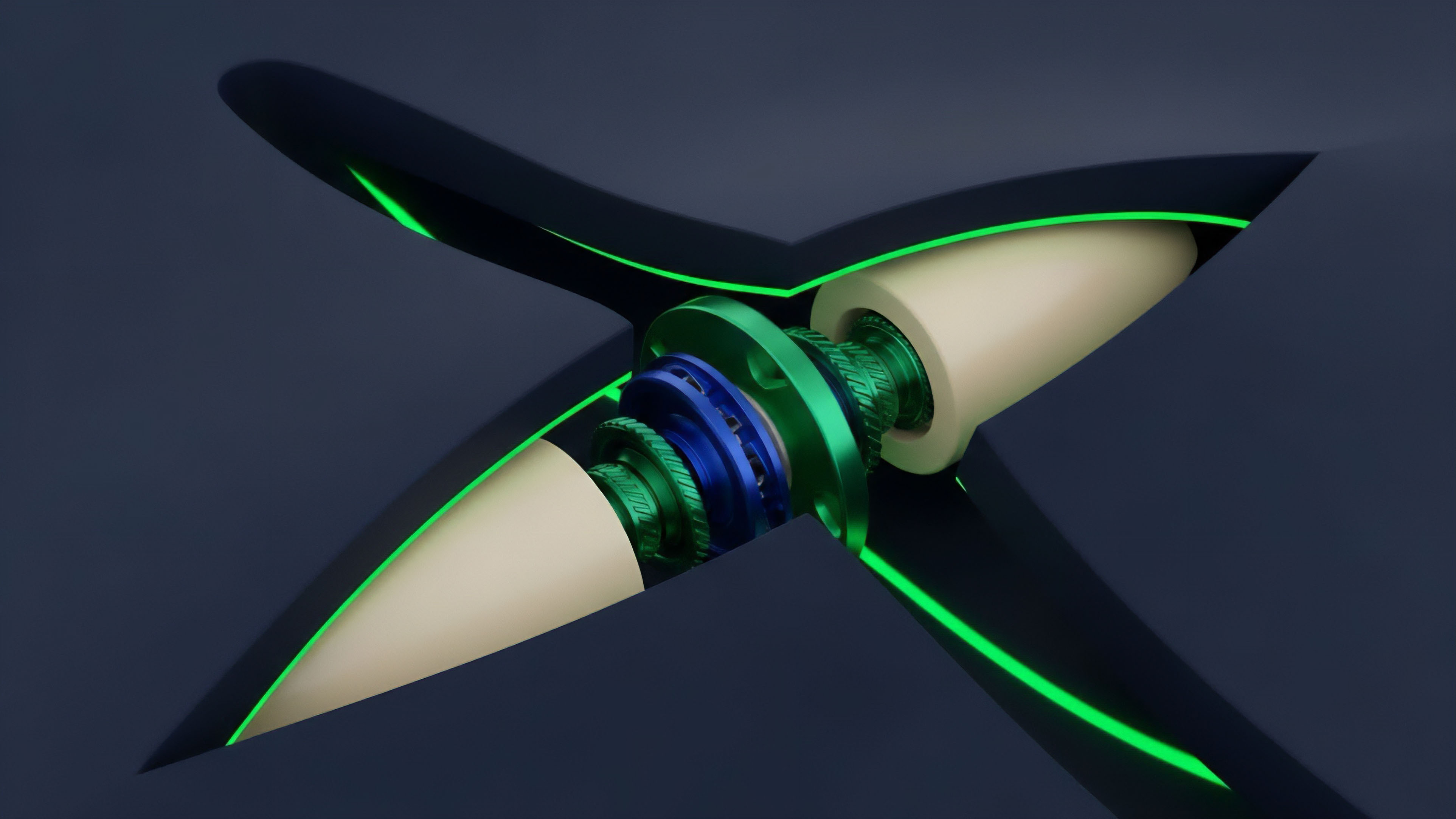

Data Stream Integrity in crypto options refers to the reliability and timeliness of the external information that smart contracts use for pricing, collateral valuation, and settlement. This integrity extends beyond a simple spot price feed to encompass complex inputs like volatility surfaces, interest rate curves, and correlation data. A decentralized options protocol relies on these data streams as its core operational truth, replacing the trusted centralized clearing house of traditional finance.

The core challenge lies in ensuring this data is delivered to the chain in a secure, verifiable, and economically sound manner, especially given the high frequency and low latency required for dynamic options pricing and risk management. Without verifiable data integrity, a decentralized options market cannot function securely; it becomes vulnerable to front-running, manipulation, and cascading liquidations triggered by incorrect inputs. The architecture of a data stream must resist adversarial attacks, where participants attempt to feed false data to profit from arbitrage opportunities or to force liquidations against competitors.

Data Stream Integrity is the fundamental requirement for trustless settlement in decentralized options markets, ensuring that smart contracts operate on verifiable, accurate, and timely external information.

The data feed for an options protocol is significantly more complex than a standard spot exchange rate. Options pricing models, particularly the Black-Scholes-Merton model and its extensions, require multiple inputs: the price of the underlying asset, the strike price, the time to expiration, the risk-free interest rate, and volatility. Of these, volatility is the only input that cannot be observed directly, so the integrity of the volatility input, often represented by a volatility surface or skew, is critical.

A manipulated volatility surface can lead to mispricing options, allowing attackers to buy underpriced options or sell overpriced ones, draining liquidity from the protocol. This highlights the systemic risk inherent in a poorly designed data stream.
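To make the sensitivity concrete, the following is a minimal sketch of a Black-Scholes-Merton call price evaluated with an honest and a manipulated volatility input. The spot, strike, rate, and volatility figures are hypothetical and chosen only for illustration; the formula is the standard one for a European call on a non-dividend-paying asset.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_price(spot: float, strike: float, t_years: float,
                   rate: float, vol: float) -> float:
    """Black-Scholes-Merton price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


# Hypothetical market: 30-day call, 10% out of the money.
spot, strike, t_years, rate = 2000.0, 2200.0, 30 / 365, 0.03

honest_vol = 0.80        # assumed "true" implied volatility
manipulated_vol = 0.60   # assumed value reported by a corrupted feed

fair_price = bsm_call_price(spot, strike, t_years, rate, honest_vol)
quoted_price = bsm_call_price(spot, strike, t_years, rate, manipulated_vol)

# The gap is what an attacker captures per option bought at the stale quote.
print(f"fair: {fair_price:.2f}  quoted: {quoted_price:.2f}  "
      f"underpriced by: {fair_price - quoted_price:.2f}")
```

Because a call price increases with volatility, pushing the reported volatility down lets the attacker buy below fair value, and pushing it up lets them sell above it.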

Origin

The necessity of robust data stream integrity in decentralized finance (DeFi) emerged directly from early systemic failures in oracle design.

The first generation of DeFi protocols often relied on simplistic or single-source price feeds, which proved to be a critical vulnerability. Flash loan attacks highlighted the fragility of these systems. In such an attack, the attacker borrows a large sum of capital, manipulates the price on a decentralized exchange (DEX), uses the manipulated price to execute a profitable trade on a lending or options protocol, and repays the loan, all within a single transaction. This vulnerability is particularly acute for options protocols, which are far more sensitive to price fluctuations and volatility inputs than simple lending platforms.

Early exploits demonstrated that a simple average price feed from a few DEXs was insufficient for robust risk management. The industry recognized that a data feed for derivatives needed to be more than a snapshot; it required a mechanism that aggregated data across multiple sources, applied statistical analysis to detect anomalies, and implemented economic incentives to ensure data providers acted honestly. This shift marked the transition from a naive reliance on single-source data to the development of sophisticated, decentralized oracle networks.

The focus shifted from simply getting data onto the chain to ensuring the economic security and verifiability of that data before it reached the smart contract.

Theory

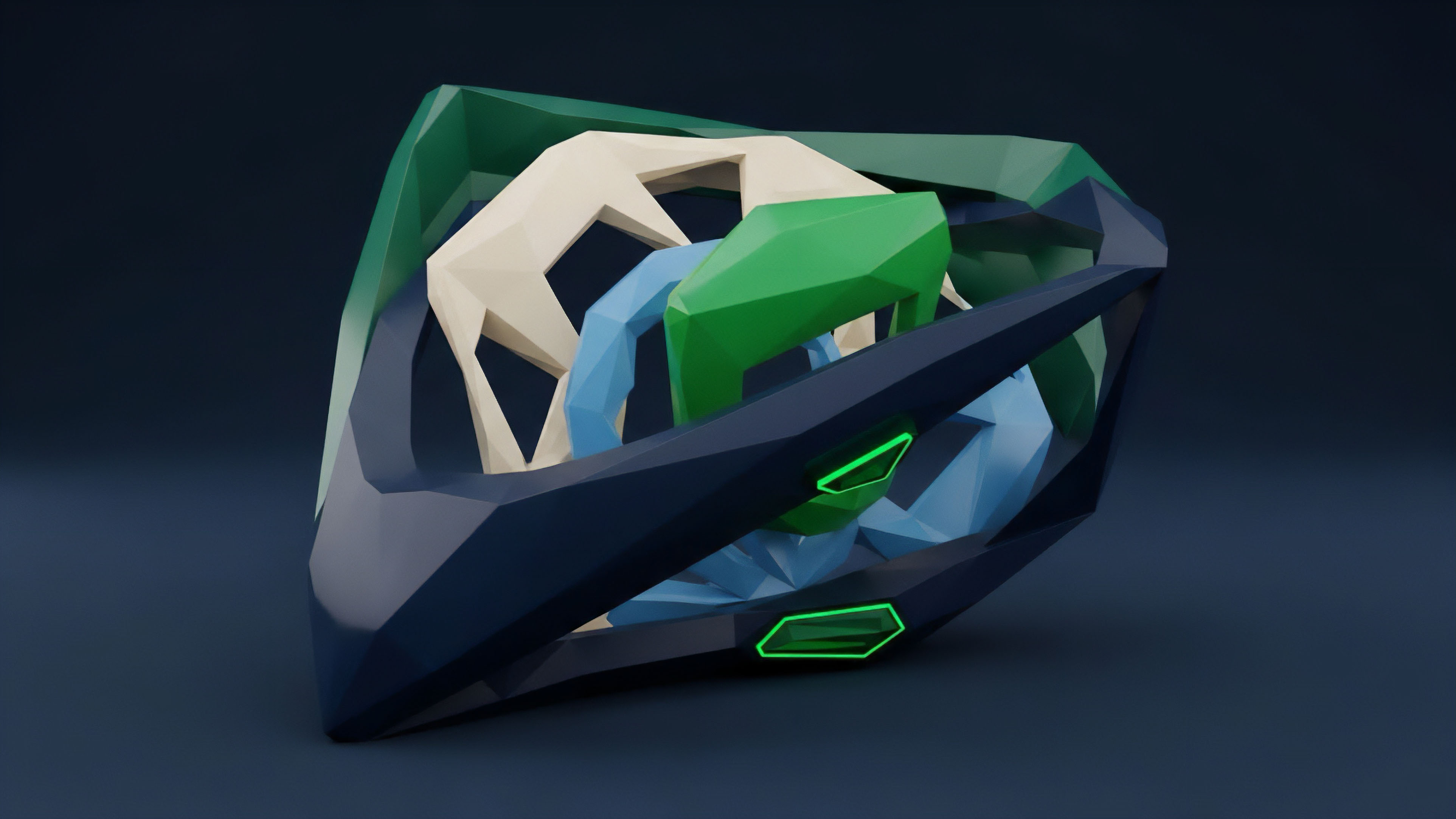

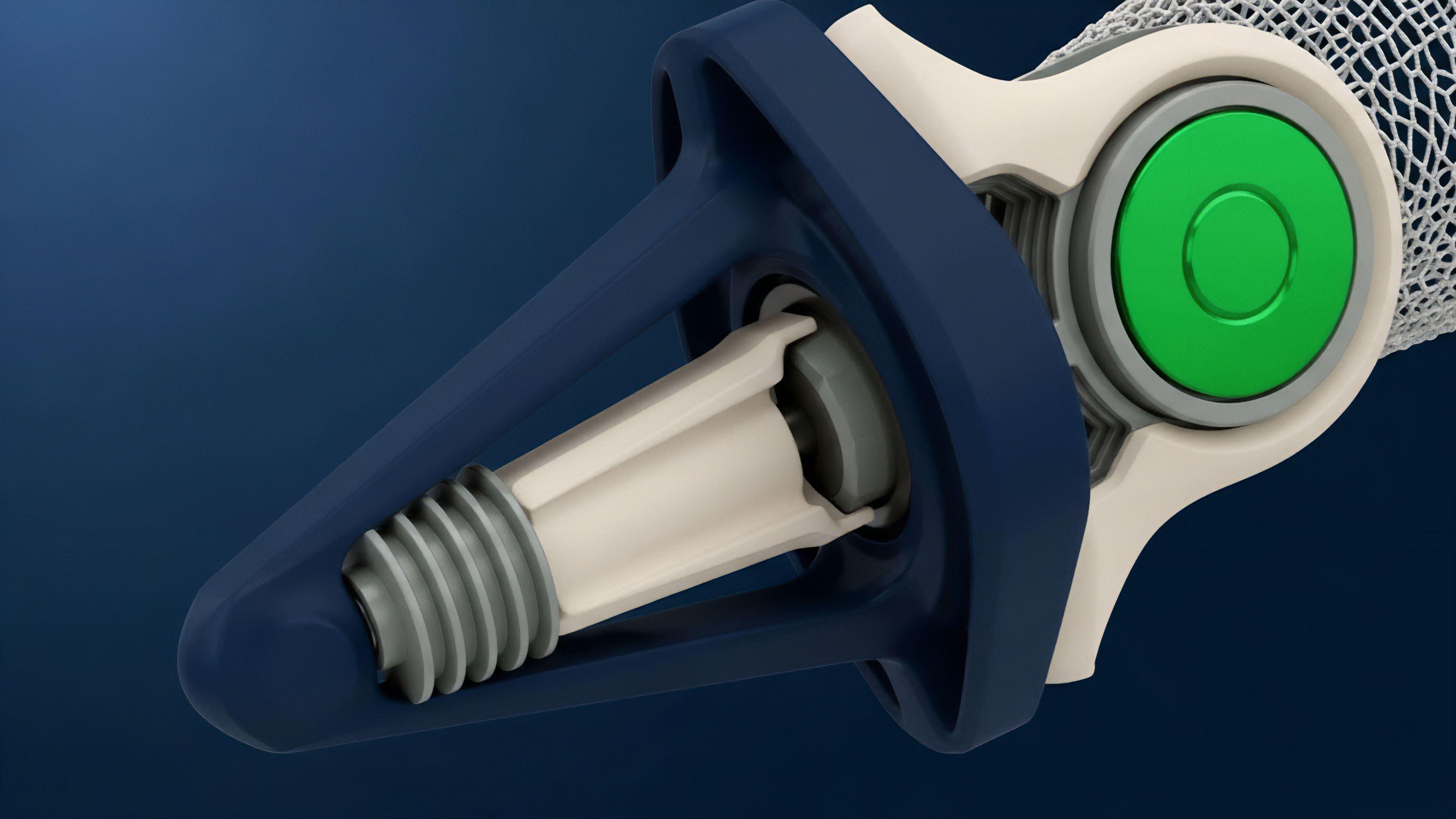

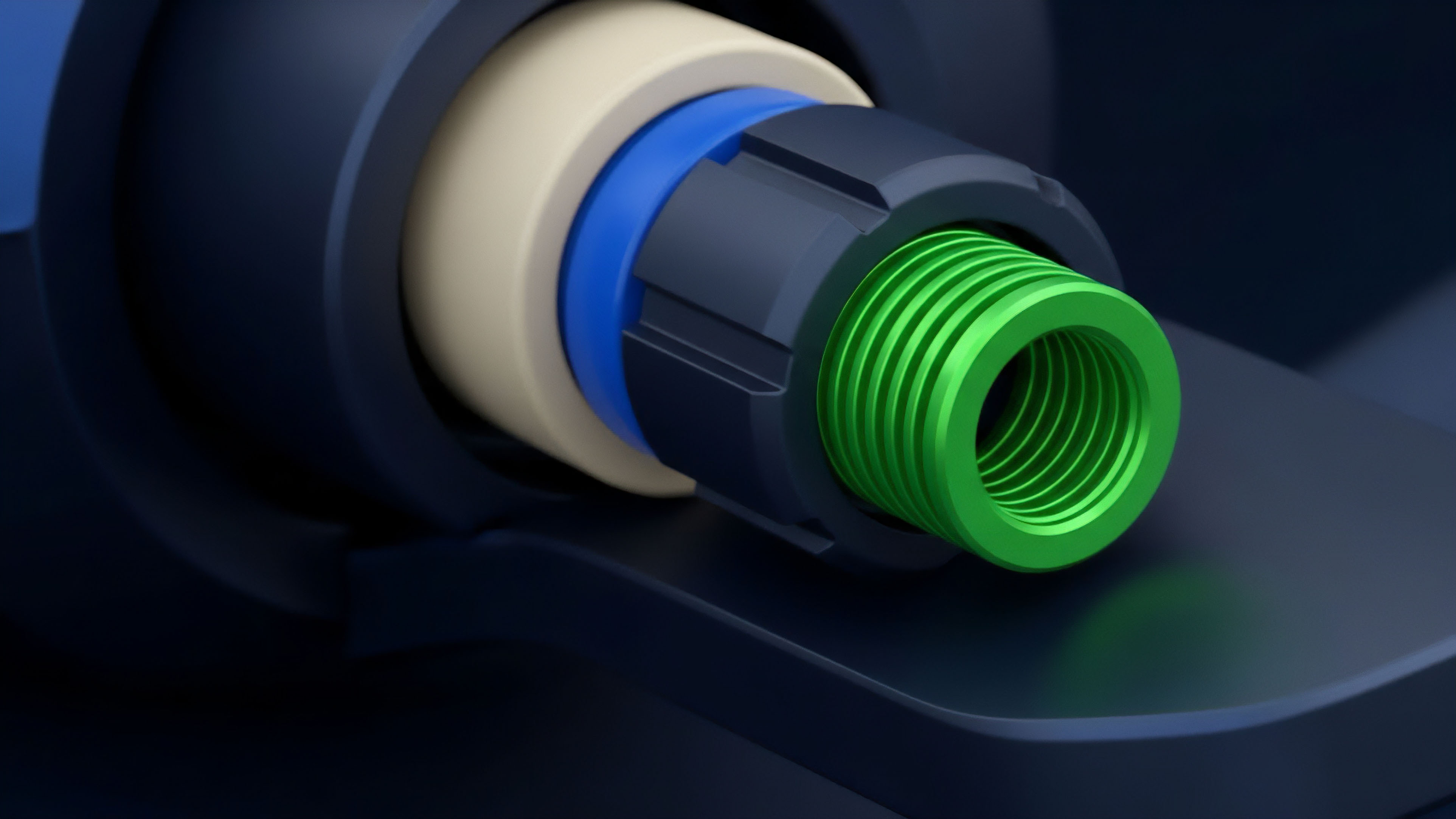

The theoretical foundation of Data Stream Integrity for options protocols rests on two primary pillars: economic game theory and statistical robustness. From a game-theoretic perspective, a decentralized oracle network must be designed to make the cost of providing false data significantly higher than the potential profit from doing so.

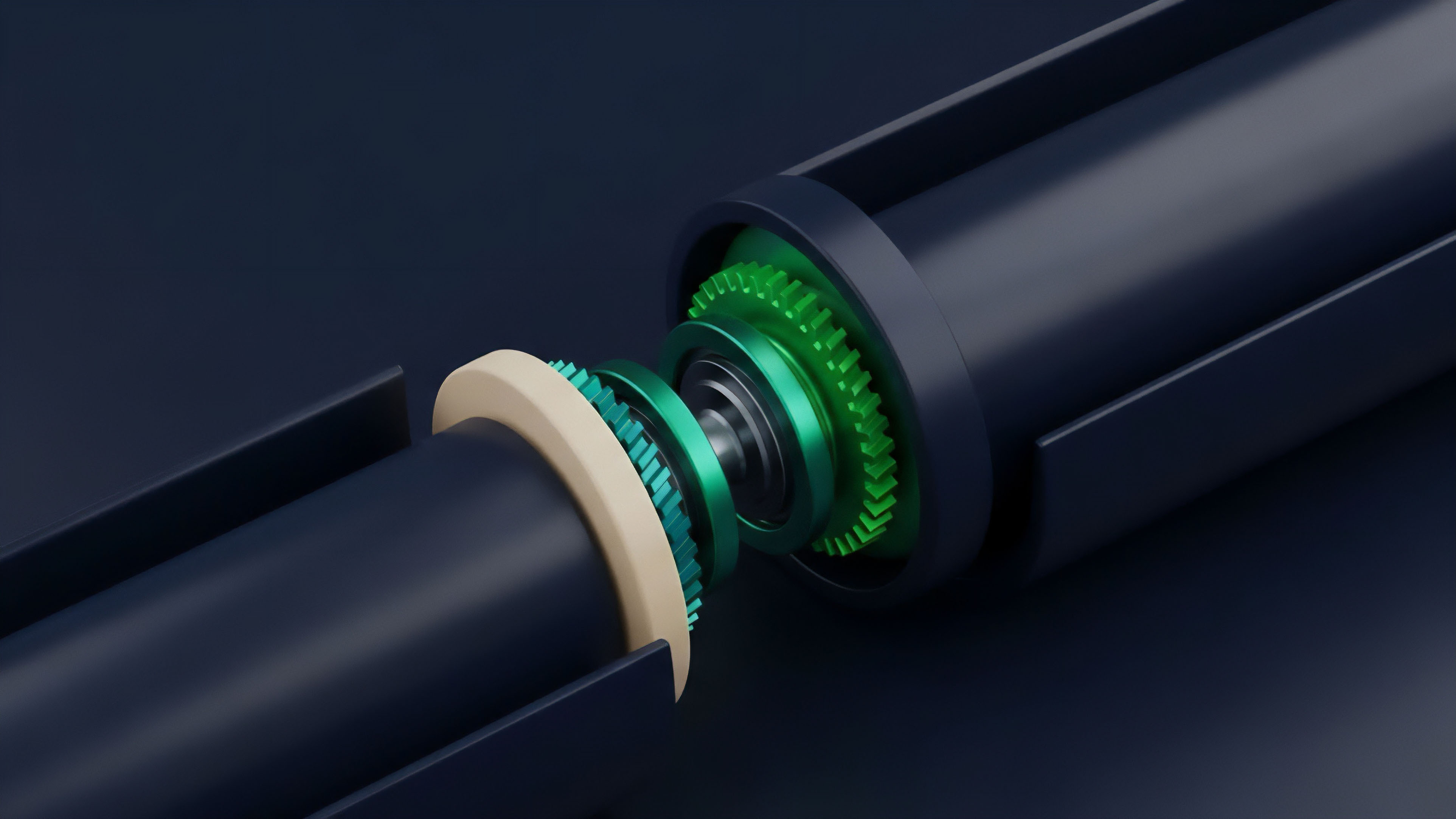

This is achieved through mechanisms like staking and slashing, where data providers must stake collateral that can be taken away if they report incorrect information. The network’s design must ensure that the collective incentive for honesty outweighs individual incentives for manipulation. From a statistical standpoint, the integrity of the data stream is maintained through sophisticated aggregation techniques.
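The staking and slashing condition described above can be written as a simple inequality: an attack is rational only when its expected profit exceeds the stake the attacker expects to lose. The sketch below is a deliberately simplified model, not any specific oracle network's rule. The parameters `slash_fraction` and `detection_probability`, and the idea of a `safety_margin`, are assumptions introduced for illustration.

```python
def attack_is_profitable(expected_profit: float,
                         stake_per_node: float,
                         nodes_to_corrupt: int,
                         slash_fraction: float,
                         detection_probability: float) -> bool:
    """True when the expected gain from false reports exceeds the expected slash."""
    expected_penalty = (stake_per_node * nodes_to_corrupt
                        * slash_fraction * detection_probability)
    return expected_profit > expected_penalty


def min_stake_per_node(expected_profit: float,
                       nodes_to_corrupt: int,
                       slash_fraction: float,
                       detection_probability: float,
                       safety_margin: float = 2.0) -> float:
    """Stake per node that makes the expected penalty a multiple of the profit."""
    return (safety_margin * expected_profit
            / (nodes_to_corrupt * slash_fraction * detection_probability))


# Hypothetical network: 21 nodes, so 11 must collude to move a median.
profit = 1_000_000.0
print(attack_is_profitable(profit, 50_000.0, 11, 1.0, 0.9))   # stake too small
required = min_stake_per_node(profit, 11, 1.0, 0.9)
print(attack_is_profitable(profit, required, 11, 1.0, 0.9))   # stake sufficient
```

The model ignores reputation loss, the cost of acquiring stake, and the market impact of the attack itself, all of which would raise the real cost of corruption.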

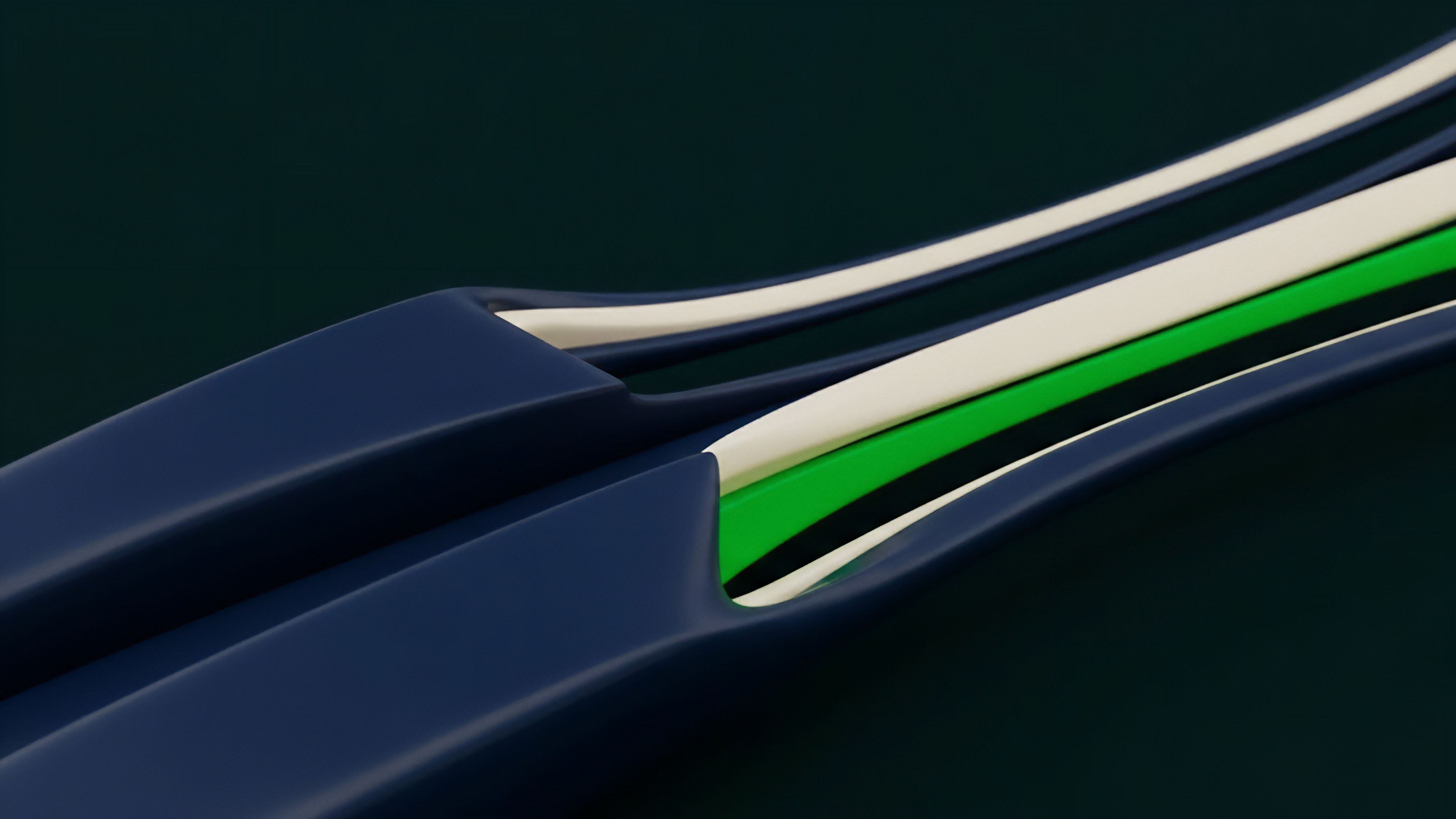

Instead of relying on a single source, protocols use a median or weighted average of data from numerous independent providers. With a median, an attacker must control roughly half of the providers (or half of the reporting weight) to move the result. A plain average offers weaker protection, because a single extreme report shifts it. The challenge is particularly acute for volatility data, which is not directly observable on-chain and must be derived from market data.

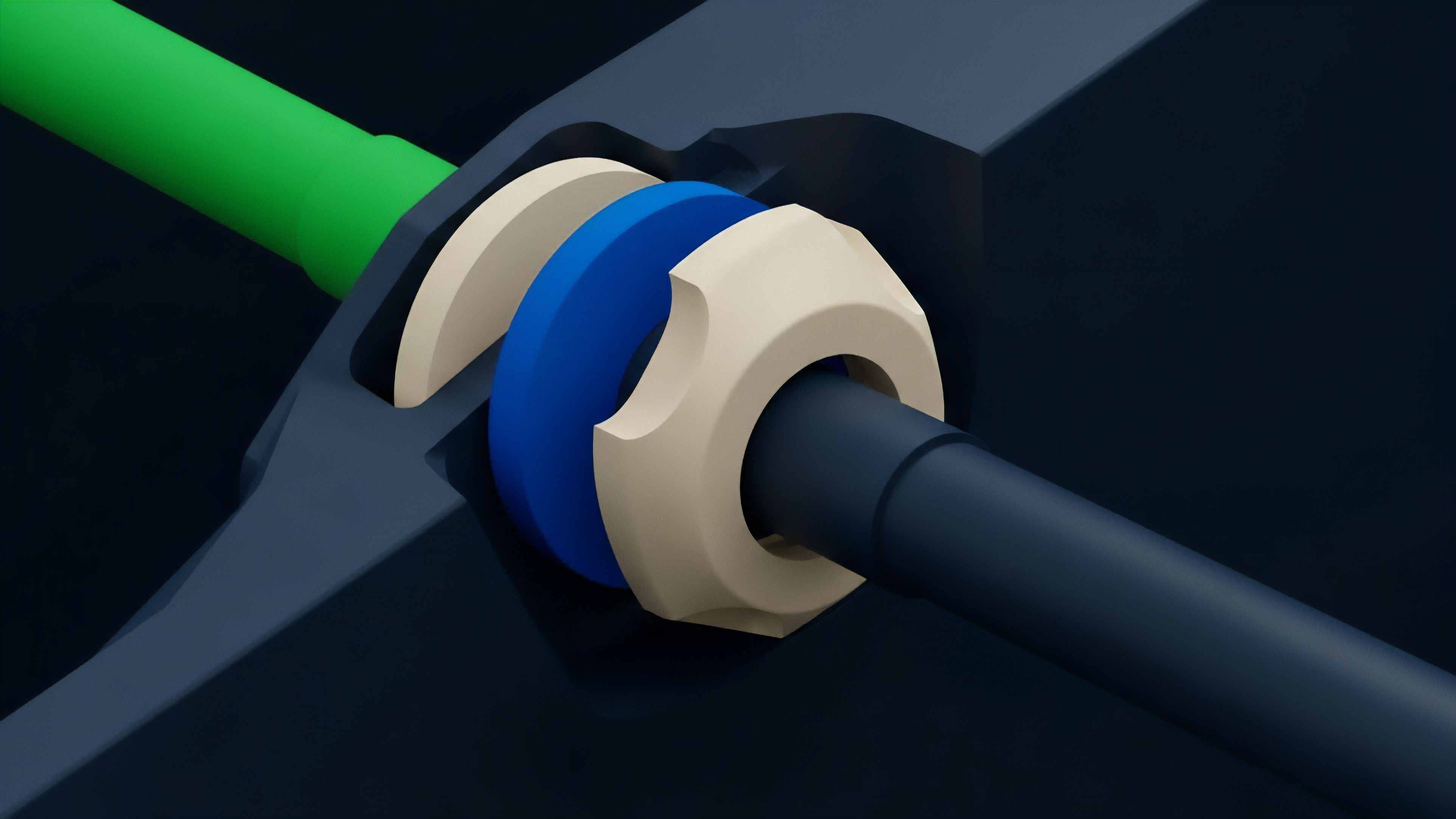

Data Aggregation and Anomaly Detection

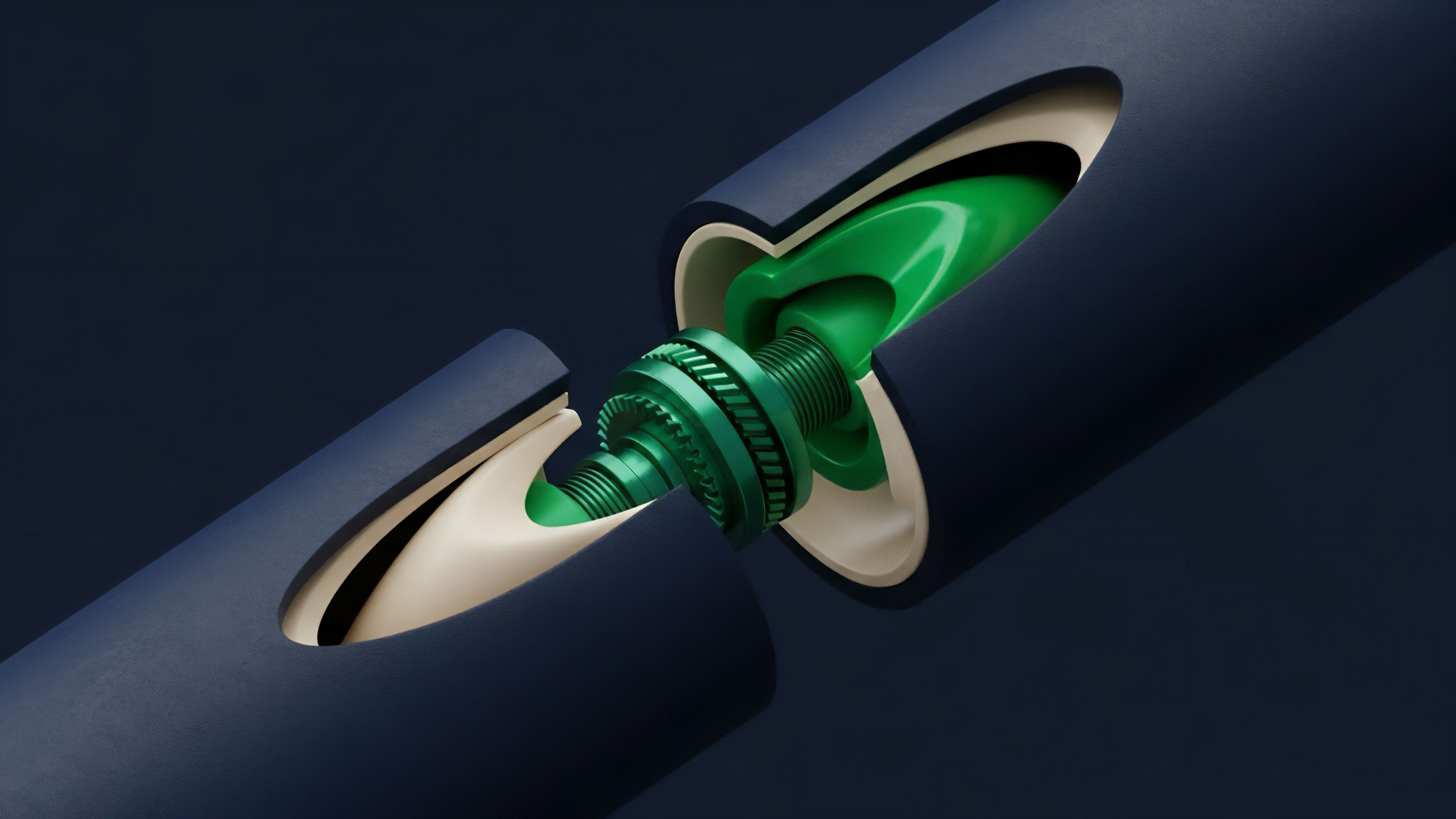

Data aggregation for options protocols requires specific methods to handle the volatility surface. The volatility skew (the way implied volatility varies across options with different strike prices but the same expiration) is a critical input. A robust data stream must accurately reflect this skew across various strikes and expirations.

The theoretical approach often involves:

- Weighted Median Calculation: Aggregating price data from multiple sources by taking a median rather than a mean, which reduces the impact of single outliers or manipulated data points.

- Deviation Thresholds: Implementing automated checks where data points that deviate significantly from the consensus are discarded. This prevents a small number of attackers from influencing the overall feed.

- Statistical Modeling: Using models to calculate implied volatility based on real-time order book data and recent trade history, rather than relying on a static value.
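The first two items in the list above can be sketched together: discard reports that sit too far from the consensus, then take a weighted median of what remains. This is a minimal illustration, not the aggregation rule of any particular oracle network. The 2% `max_deviation` default, the use of stake as the weight, and the sample reports are all assumptions.

```python
from statistics import median


def filter_outliers(reports, max_deviation=0.02):
    """Drop (value, weight) reports that deviate from the plain median
    by more than max_deviation, expressed as a fraction of the median."""
    consensus = median(value for value, _ in reports)
    return [(value, weight) for value, weight in reports
            if abs(value - consensus) / consensus <= max_deviation]


def weighted_median(reports):
    """Smallest reported value at which cumulative weight reaches half the total."""
    ordered = sorted(reports)
    half_weight = sum(weight for _, weight in ordered) / 2.0
    running = 0.0
    for value, weight in ordered:
        running += weight
        if running >= half_weight:
            return value
    raise ValueError("no reports to aggregate")


# Hypothetical reports as (price, stake weight); one provider reports 2450.
reports = [(2001.0, 3.0), (1999.5, 2.0), (2000.2, 2.0),
           (2450.0, 1.0), (2000.8, 2.0)]

clean = filter_outliers(reports)
print(weighted_median(clean))  # 2000.8
```

The outlier is removed by the deviation check before it can contribute any weight, and even if it had survived, a single low-weight report could not move the weighted median on its own.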

Comparative Oracle Models

Different oracle models offer distinct trade-offs between security, latency, and cost. A robust options protocol must choose an oracle architecture that aligns with its specific risk profile. The following table compares common oracle design patterns based on their primary characteristics:

| Oracle Design Pattern | Description | Latency Characteristics | Security Model |
|---|---|---|---|
| Centralized Oracles | Data provided by a single, trusted entity (e.g. a centralized exchange). | Low latency; high-frequency updates. | Trust-based; susceptible to a single point of failure. |
| Decentralized Aggregation Oracles | Data collected from multiple independent nodes and aggregated before being published on-chain (e.g. Chainlink). | Higher latency due to aggregation; updates are batched. | Economic security via staking and slashing; resilient to manipulation of any single source. |
| Decentralized Exchange (DEX) Oracles | Prices read from a decentralized exchange's liquidity pool, either the spot price or a time-weighted average (e.g. Uniswap TWAP). | Spot reads are immediate; a TWAP lags the market by design. | Spot reads are vulnerable to flash loan attacks; TWAPs resist single-transaction manipulation but remain exposed in low-liquidity pools. |

Approach

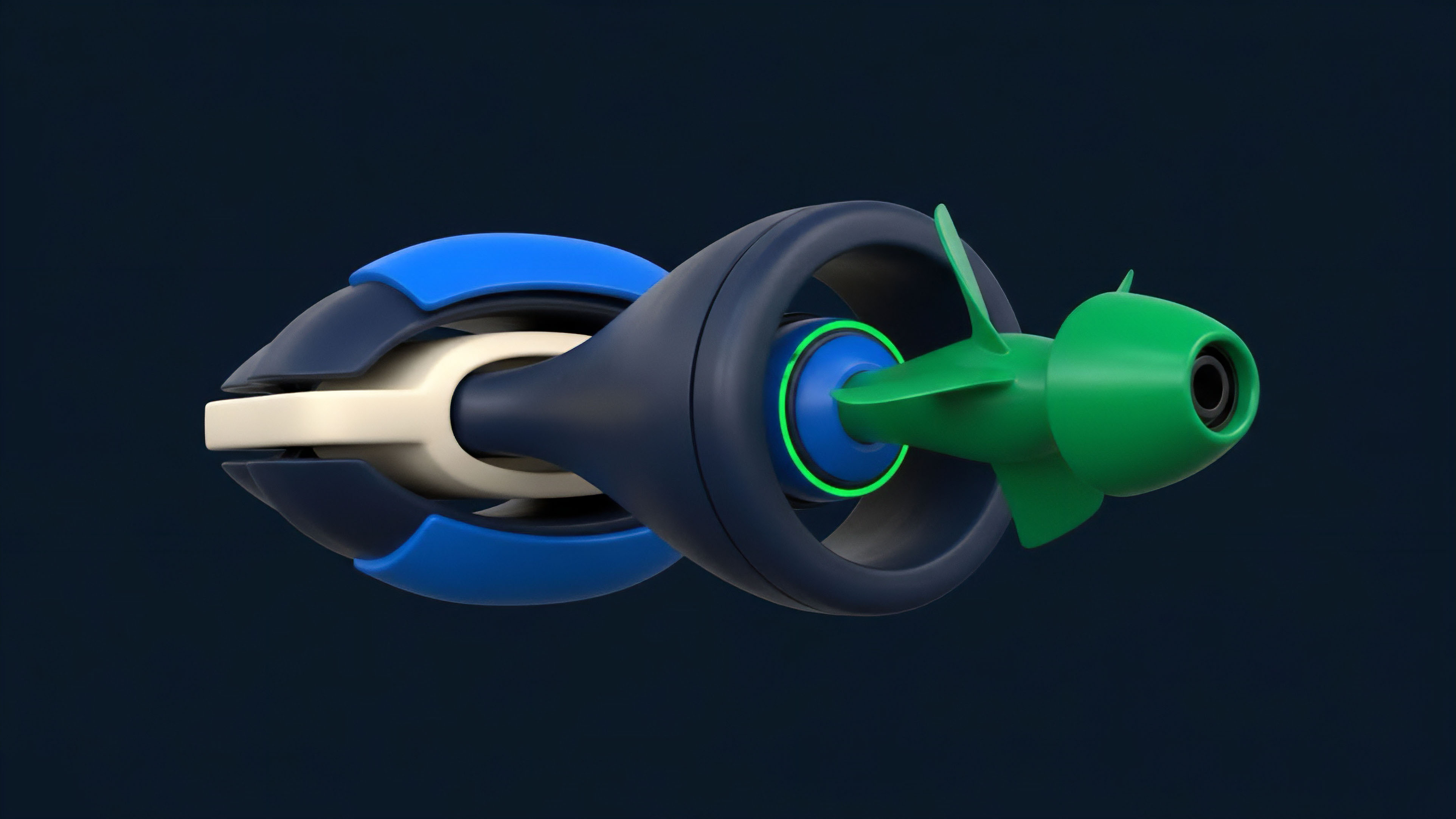

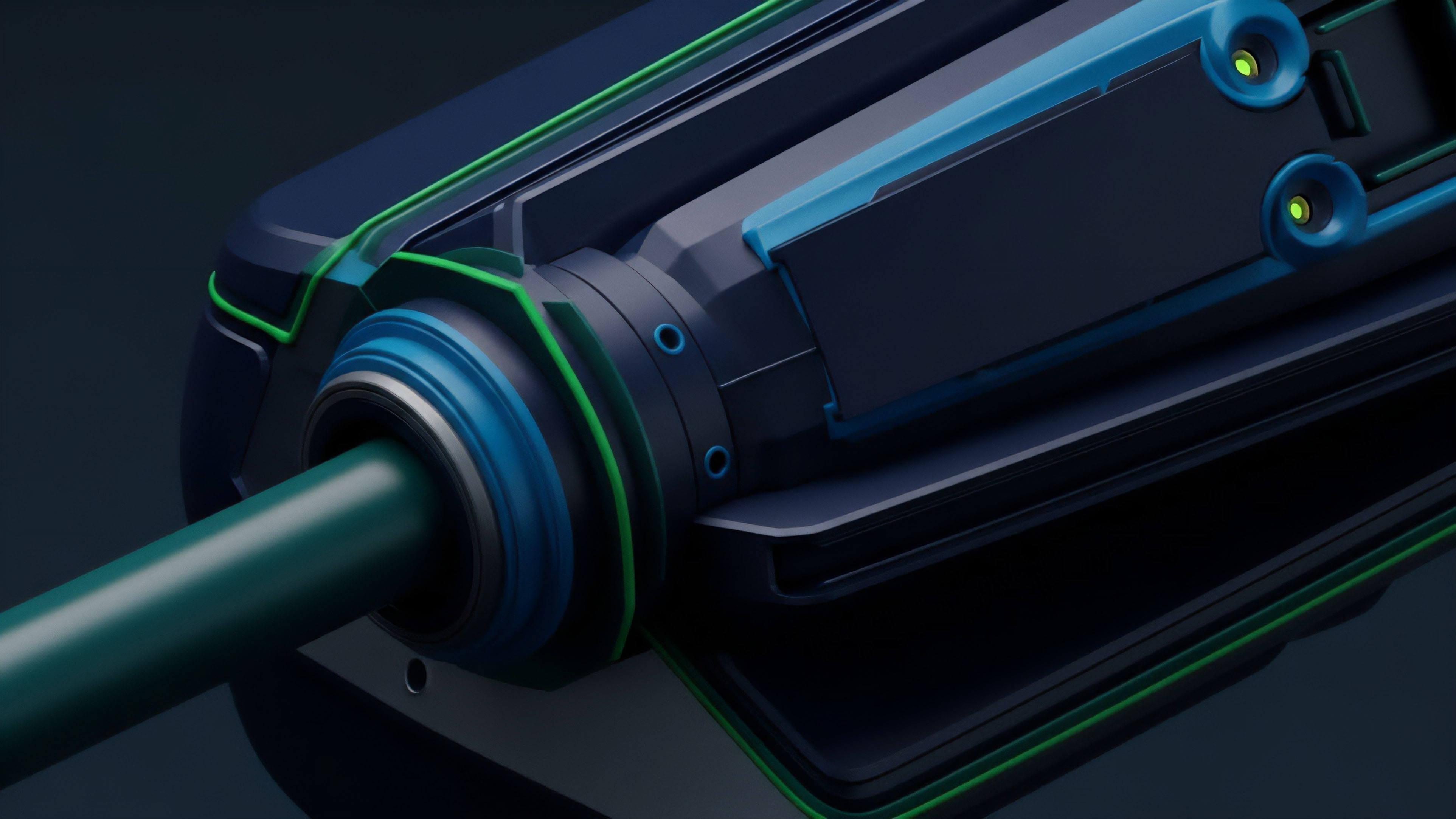

Current implementations of Data Stream Integrity in crypto options protocols focus on mitigating the specific risks associated with options trading. The primary approach involves integrating robust oracle solutions with on-chain risk management systems. The architecture must account for the high leverage and time-sensitive nature of options.

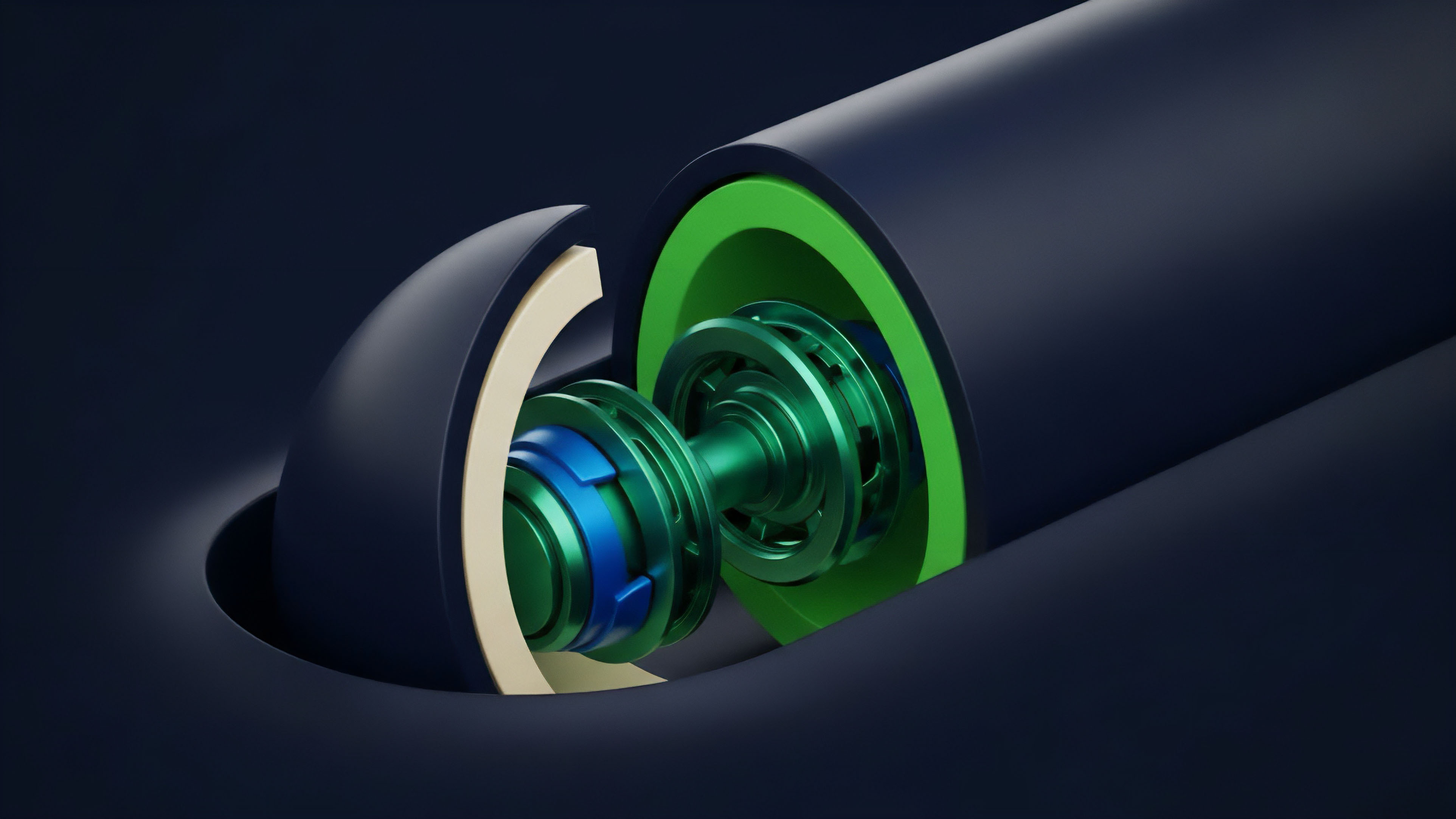

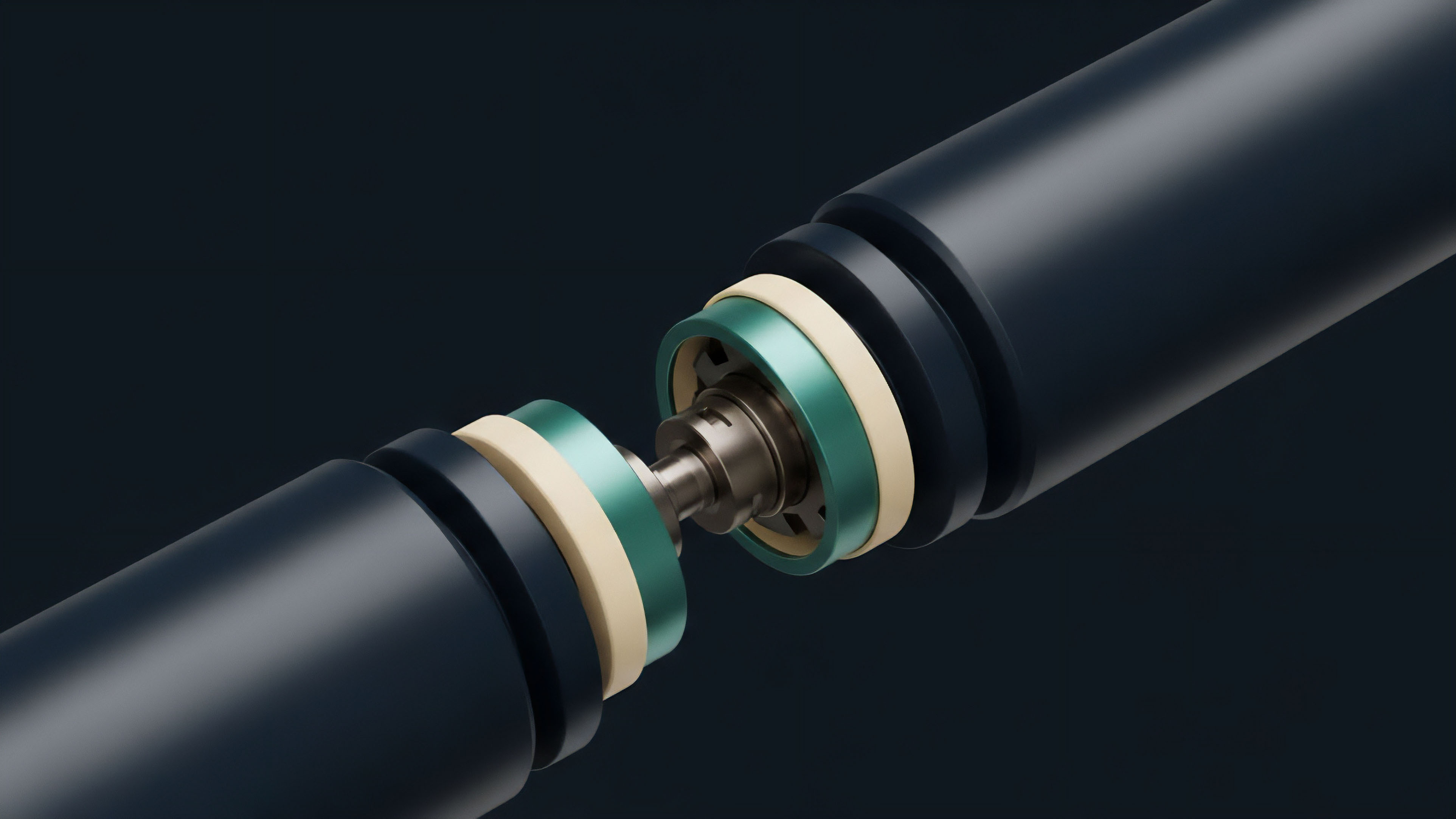

Liquidation Engine and Data Verification Layers

Options protocols utilize a multi-layered approach to protect against data manipulation. The liquidation engine, which automatically closes positions when collateral falls below a certain threshold, relies heavily on accurate data streams. To protect this engine, protocols implement verification layers that check the integrity of the oracle feed before a liquidation event.

These verification layers often employ circuit breakers. A circuit breaker automatically halts liquidations or trading if the data feed reports a price that deviates significantly from a secondary, less frequently updated feed, or if it crosses pre-defined volatility thresholds. This approach provides a necessary buffer against flash crashes or short-term oracle manipulations.

Effective risk management requires protocols to implement “circuit breakers” that automatically pause liquidations or trading when data feeds exhibit abnormal behavior, preventing cascading failures.
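A circuit breaker of this kind reduces to a small predicate evaluated before each liquidation. The sketch below assumes a primary feed, a secondary reference feed, a 5% deviation limit, and a 120-second staleness limit. The staleness check and all thresholds are illustrative assumptions rather than values taken from any protocol.

```python
from dataclasses import dataclass


@dataclass
class FeedReading:
    price: float
    timestamp: float  # seconds since an arbitrary epoch


def should_halt(primary: FeedReading,
                secondary: FeedReading,
                now: float,
                max_deviation: float = 0.05,
                max_age_seconds: float = 120.0) -> bool:
    """Return True when liquidations should pause.

    Halts if the primary feed is stale, or if it deviates from the
    secondary feed by more than max_deviation (as a fraction).
    """
    if now - primary.timestamp > max_age_seconds:
        return True
    deviation = abs(primary.price - secondary.price) / secondary.price
    return deviation > max_deviation


secondary = FeedReading(price=2010.0, timestamp=60.0)

normal = FeedReading(price=2000.0, timestamp=100.0)
crashed = FeedReading(price=1700.0, timestamp=100.0)

print(should_halt(normal, secondary, now=110.0))   # False: within 5%
print(should_halt(crashed, secondary, now=110.0))  # True: deviation above 5%
print(should_halt(normal, secondary, now=500.0))   # True: primary is stale
```

Halting on disagreement trades availability for safety: a genuine crash also trips the breaker, so a production design would need a recovery path, which this sketch omits.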

Data Feed Resiliency Strategies

The practical application of data integrity principles in options protocols involves several strategies to ensure continuous operation and minimize manipulation risk. These strategies include:

- Hybrid Data Sourcing: Combining data from both decentralized oracle networks and centralized exchange APIs to cross-verify prices. The centralized data acts as a secondary check against anomalies in the decentralized feed.

- Time-Weighted Averages (TWAPs): Using TWAPs over a longer period (e.g. 10 minutes) rather than instantaneous spot prices. To move a TWAP, an attacker must hold the manipulated price for a meaningful share of the averaging window, which is significantly more expensive than distorting the price within a single transaction.

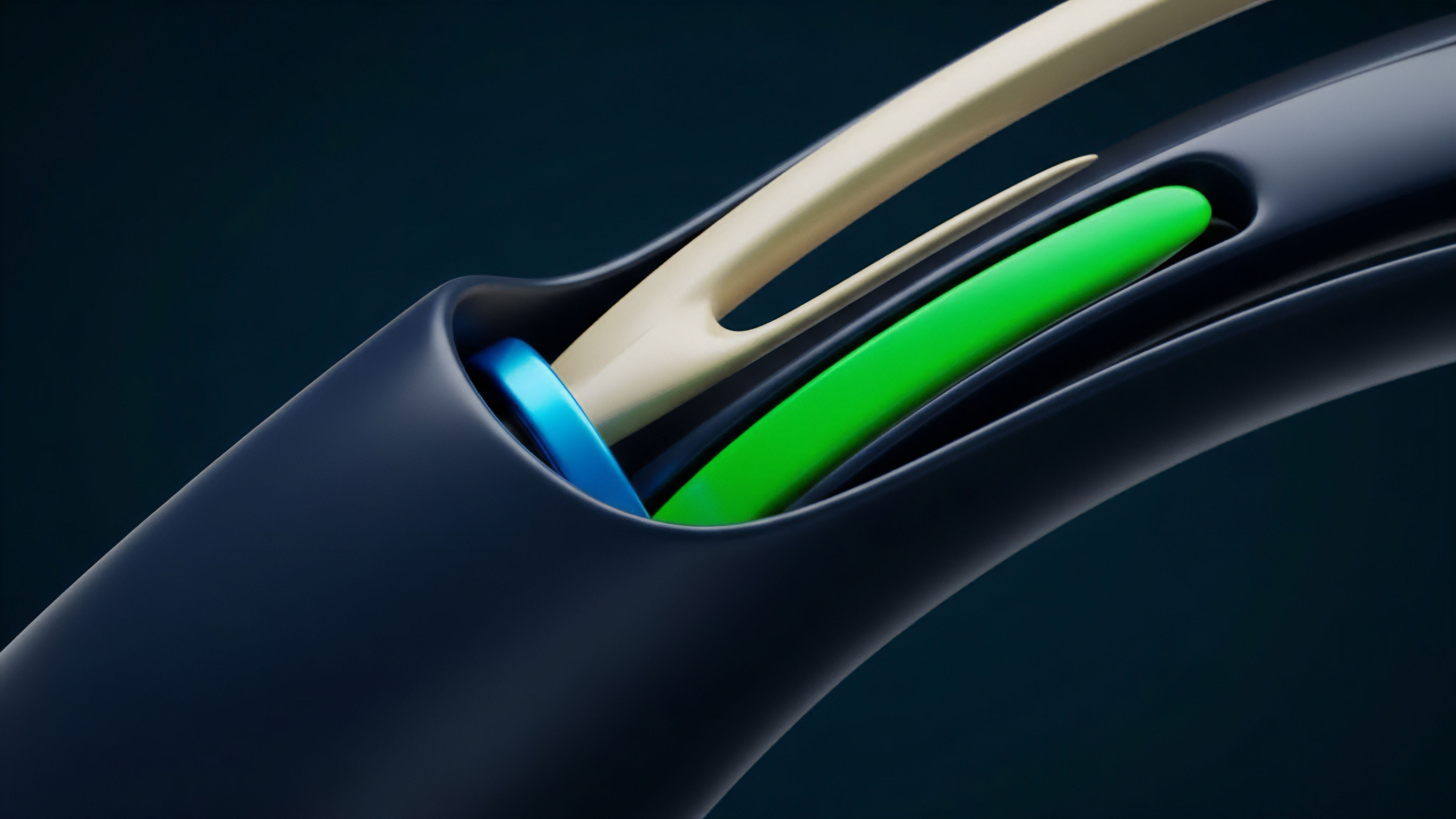

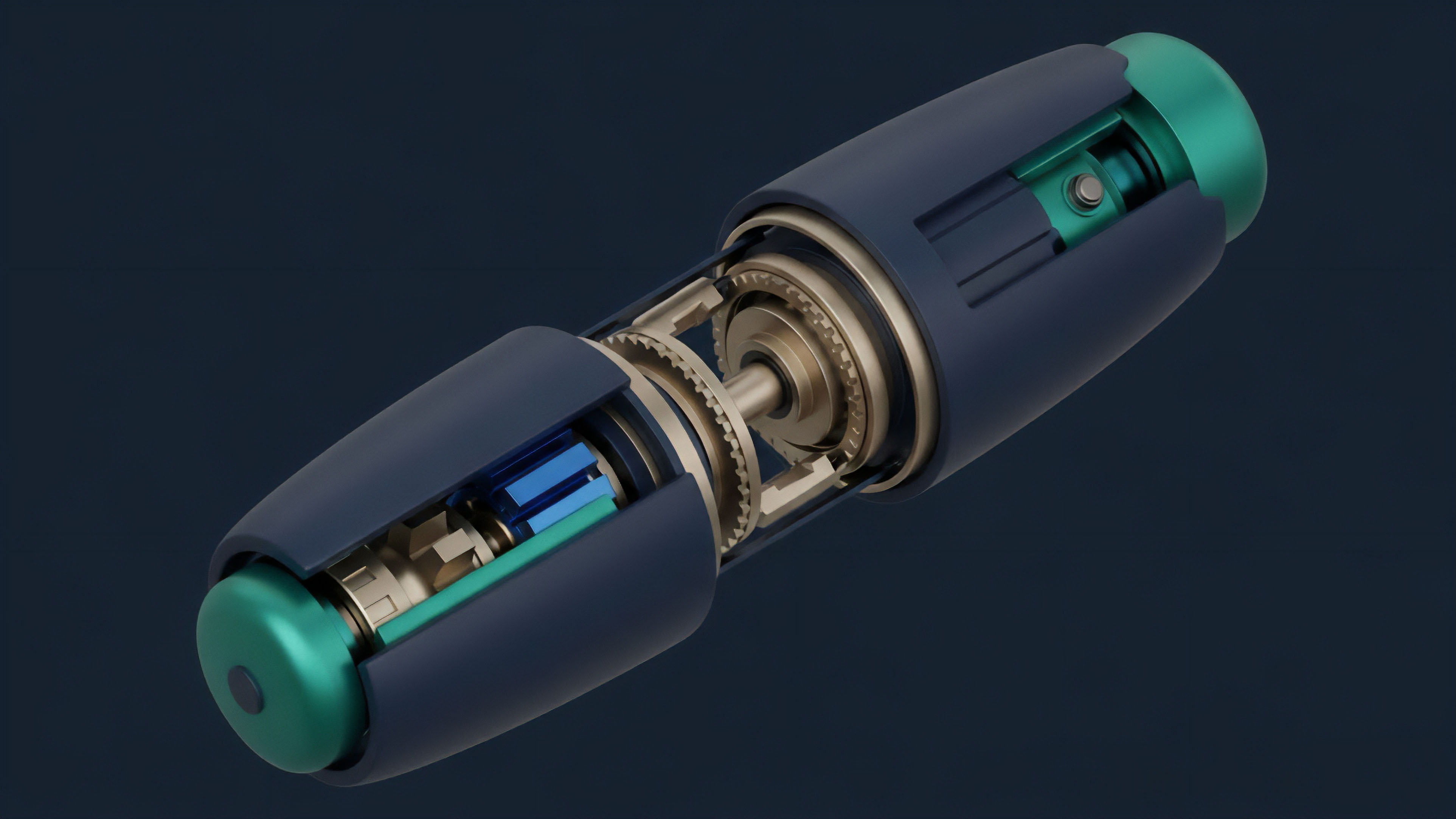

- Off-Chain Computation: Calculating complex values like implied volatility off-chain and then submitting a cryptographic proof to the mainnet. This reduces on-chain gas costs and allows for more complex models, while maintaining verifiability.
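The effect of the time-weighted average from the list above can be shown with a short calculation. This sketch assumes each observed price holds until the next observation, and uses a hypothetical 10-minute window in which the price is pushed 50% higher for 12 seconds. The observation format and the numbers are assumptions for illustration.

```python
def twap(observations, window_start, window_end):
    """Time-weighted average price over [window_start, window_end].

    observations: list of (timestamp, price) sorted by timestamp; each
    price is assumed to hold until the next observation.
    """
    if window_end <= window_start:
        raise ValueError("window must have positive length")
    weighted_sum = 0.0
    covered = 0.0
    for i, (ts, price) in enumerate(observations):
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start
    if covered == 0.0:
        raise ValueError("no observations inside the window")
    return weighted_sum / covered


# Price sits at 2000, is pushed to 3000 for 12 seconds, then returns.
observations = [(0, 2000.0), (588, 3000.0), (600, 2000.0)]

print(twap(observations, 0, 600))  # 2020.0
```

A 50% spike in the spot price moves the 10-minute average by only 1%, so an attacker who wants a large move in the TWAP has to sustain the distorted price, and absorb arbitrage against it, for most of the window.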

Evolution

The evolution of data stream integrity for crypto options reflects a continuous adaptation to new attack vectors and market dynamics. The shift from simple spot price feeds to complex volatility surfaces represents a significant architectural leap. Early protocols struggled with the high cost of calculating and delivering volatility data on-chain, often leading to a reliance on centralized oracles for this specific input.

The next phase involved moving these calculations off-chain and using cryptographic proofs to verify the results. This hybrid approach allows for complex computations without the high gas fees of Layer 1 blockchains. The current stage of evolution is focused on scaling these solutions to Layer 2 networks.

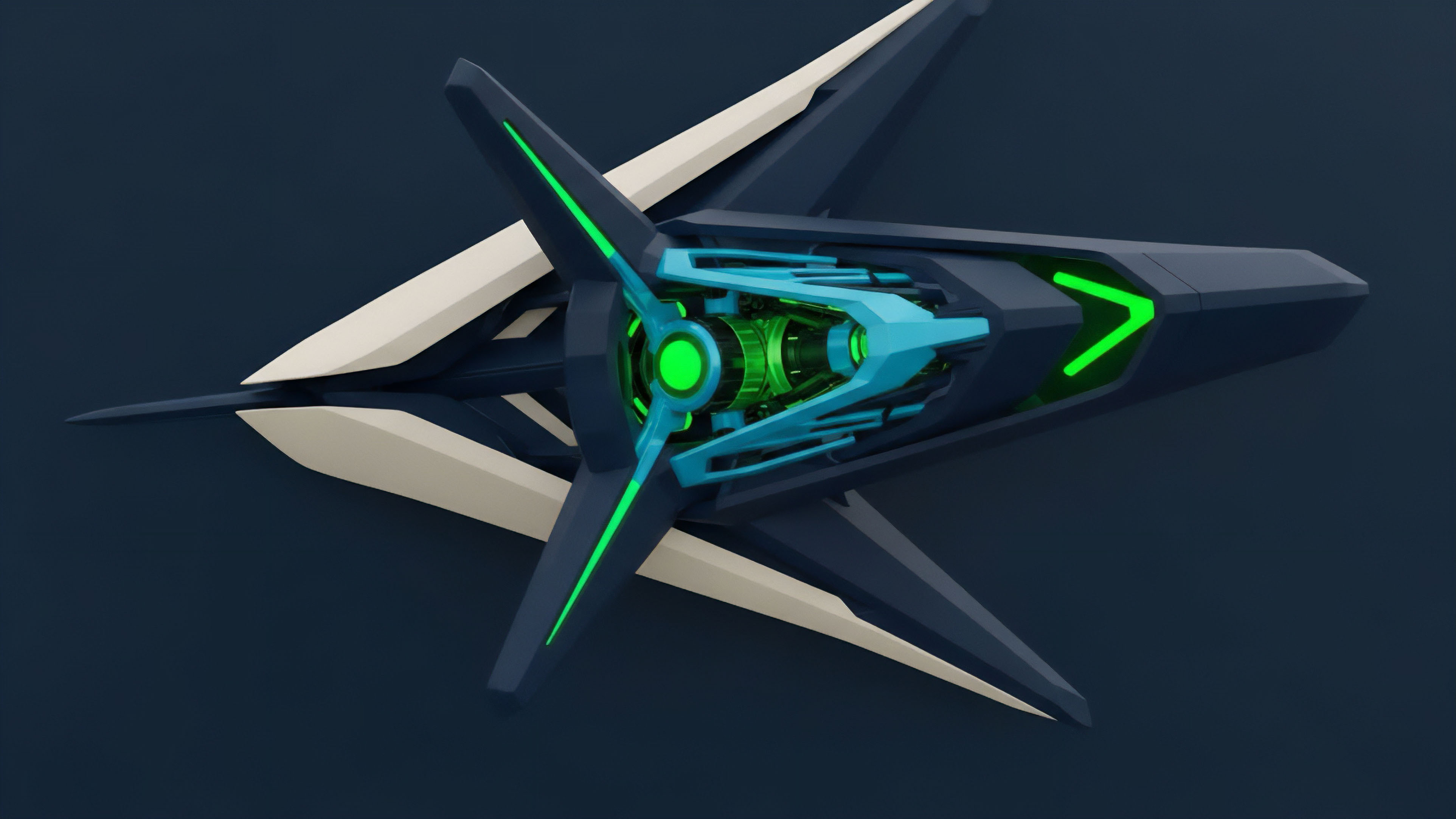

Moving data aggregation to Layer 2 reduces latency and cost, allowing for higher frequency updates. The development of cross-chain options protocols further complicates data integrity. A protocol operating on Layer 2 must source data from multiple Layer 1 and Layer 2 ecosystems.

This requires a new architecture for data routing and verification, where data streams must be secured across different consensus environments. The challenge of maintaining integrity across these disparate systems is a primary focus for current development.

Horizon

Looking ahead, the future of data stream integrity for crypto options points toward fully decentralized volatility surfaces and a data marketplace where integrity itself is a core product.

The next generation of options protocols will move beyond relying on external oracles for pre-calculated volatility inputs. Instead, they will use on-chain mechanisms to dynamically derive implied volatility from real-time options order book data. This approach reduces external dependencies and creates a truly self-contained system where all necessary data is generated within the protocol’s own ecosystem.
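The core step in deriving implied volatility from order book data is inverting the pricing model: find the volatility at which the model price equals the observed mid-price. The sketch below does this by bisection in Python for readability. An on-chain version would need fixed-point arithmetic and a bounded iteration count, and the quote values, the search bounds, and the use of the bid-ask midpoint are all assumptions.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_price(spot, strike, t_years, rate, vol):
    """Black-Scholes-Merton price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def implied_vol(target_price, spot, strike, t_years, rate,
                low=1e-4, high=5.0, tolerance=1e-8, max_iterations=200):
    """Volatility at which the model price matches target_price.

    Bisection works because the call price is increasing in volatility.
    """
    if not (bsm_call_price(spot, strike, t_years, rate, low)
            <= target_price
            <= bsm_call_price(spot, strike, t_years, rate, high)):
        raise ValueError("target price is outside the searchable range")
    for _ in range(max_iterations):
        mid = 0.5 * (low + high)
        if bsm_call_price(spot, strike, t_years, rate, mid) > target_price:
            high = mid
        else:
            low = mid
        if high - low < tolerance:
            break
    return 0.5 * (low + high)


# Hypothetical top-of-book quotes for one strike and expiration.
best_bid, best_ask = 58.0, 62.0
mid_price = 0.5 * (best_bid + best_ask)

vol = implied_vol(mid_price, spot=2000.0, strike=2200.0, t_years=30 / 365, rate=0.03)
print(f"implied volatility: {vol:.4f}")
```

Repeating this inversion across strikes and expirations yields the points of a volatility surface. A thin or spoofed order book would distort those points, so the same aggregation and deviation checks that protect external feeds would still be needed.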

This evolution will lead to a new type of data market where protocols can purchase data integrity services. The data feed itself becomes a financial product, with different tiers of security and latency available. This marketplace will allow protocols to choose between highly secure, low-latency feeds for high-value options and less frequent updates for long-term positions.

The future of data integrity involves the creation of fully decentralized volatility surfaces, where protocols generate necessary pricing data internally from on-chain order books, reducing reliance on external oracles.

A significant challenge on the horizon involves integrating machine learning models for anomaly detection. These models will analyze historical data and current market conditions to identify potential manipulation attempts in real time, going beyond simple deviation checks. The goal is to create a data stream that is not only robust against known attack vectors but also adaptive to novel forms of manipulation. The ultimate objective is to make the data feed as secure as the underlying blockchain itself.
