Essence

Data Stewardship Programs in decentralized financial architectures are protocol-level governance mechanisms that ensure the integrity, availability, and verifiable provenance of the off-chain and on-chain datasets used by automated option pricing models. These programs turn raw market information into structured, immutable inputs for derivative smart contracts, reducing the information asymmetry inherent in decentralized liquidity pools.

Data Stewardship Programs serve as the essential verification layer that transforms raw decentralized data into actionable inputs for derivative pricing engines.

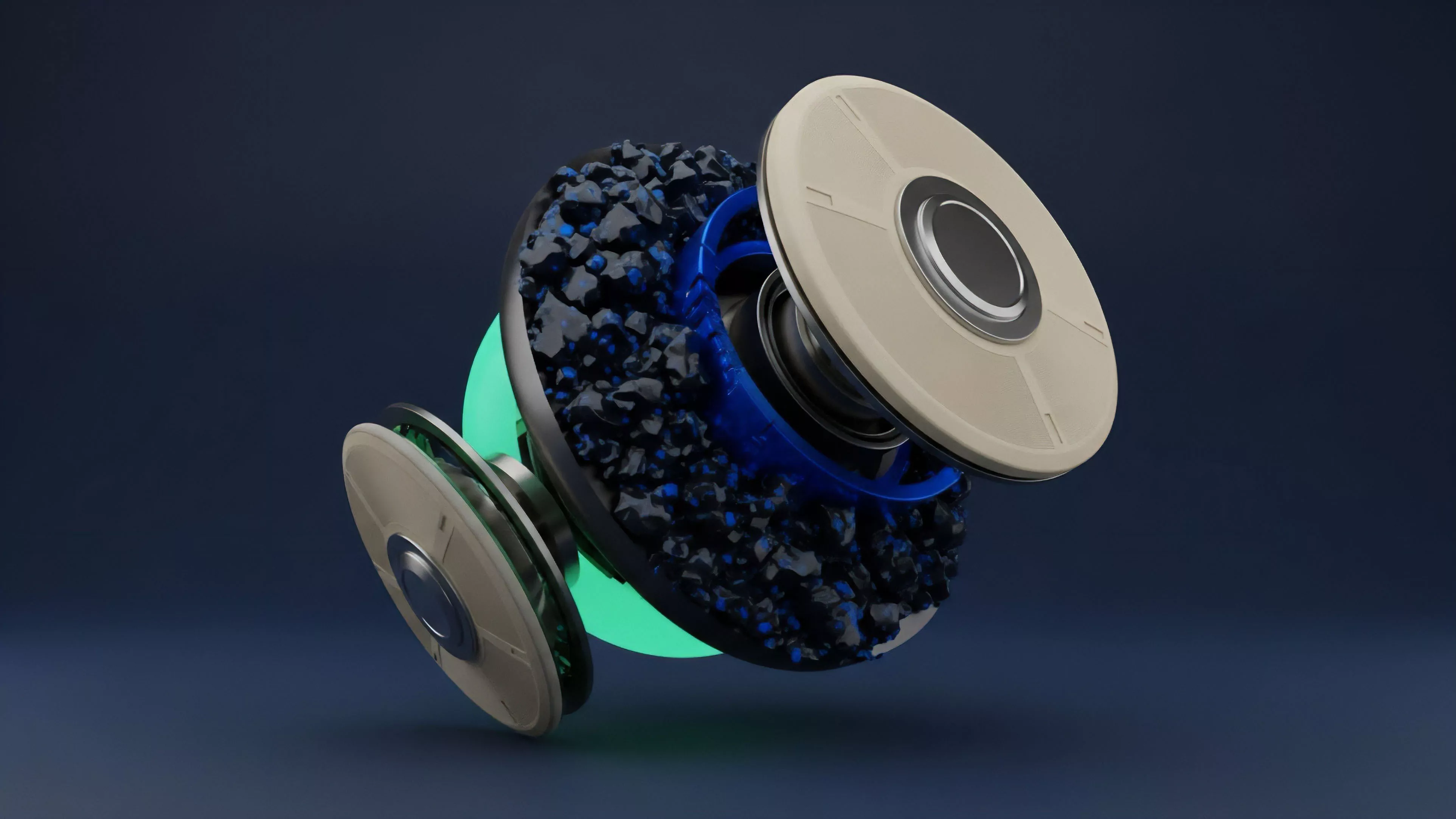

By assigning specific roles to participants, often incentivized through token-based rewards, these systems create a decentralized oracle environment where the validity of underlying asset prices, volatility surfaces, and historical skew data is maintained through cryptographic proof rather than centralized trust. The functional requirement for such stewardship arises from the adversarial nature of crypto markets, where erroneous or manipulated data directly facilitates predatory liquidation of option positions.

Origin

The genesis of Data Stewardship Programs traces back to the fundamental failure of early decentralized exchanges to account for the latency and manipulation risks associated with external price feeds. As derivative complexity grew, moving from simple spot swaps to complex path-dependent options, the reliance on single-source oracles became a systemic liability.

- Oracle Vulnerability: Early protocols faced catastrophic losses due to price manipulation on low-liquidity centralized exchanges.

- Governance Evolution: Initial decentralized governance models lacked the granular technical control required to manage data quality standards.

- Cryptographic Proofs: The shift toward verifiable computation allowed protocols to require proof of data validity from stewardship participants.

This transition reflects the broader movement from trust-based centralized data providers to trust-minimized, incentive-aligned networks. Protocols moved away from singular reliance on centralized entities toward distributed networks of participants tasked with verifying the veracity of inputs before those inputs trigger state changes within the derivative contract.

Theory

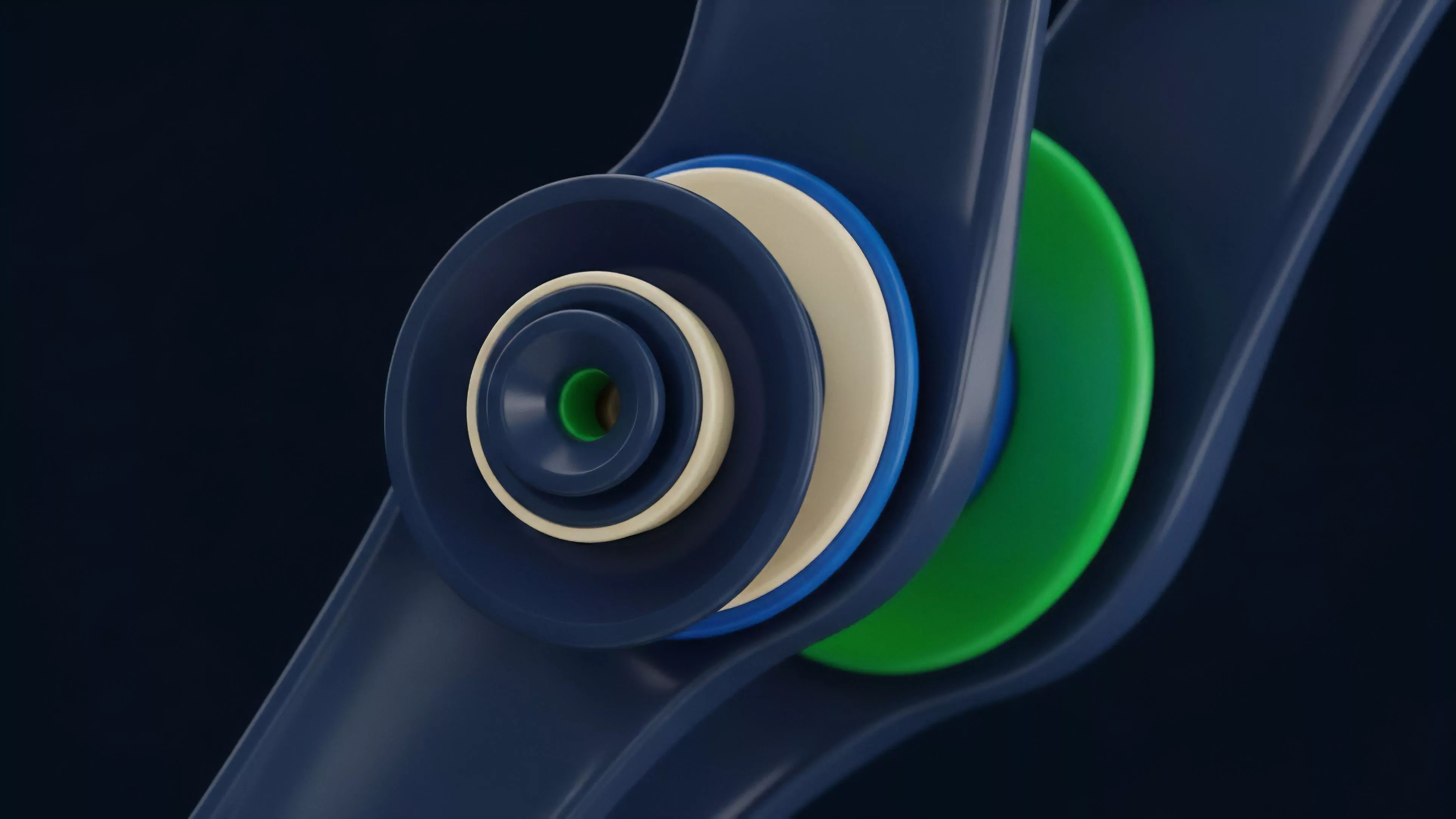

The theoretical framework governing Data Stewardship Programs relies on the application of game theory to ensure honest data reporting. By structuring the interaction between data providers and the protocol as a multi-stage game, designers create conditions where the cost of reporting fraudulent data outweighs the potential profit from market manipulation.
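The deterrence condition described above can be written as a simple expected-value comparison. The sketch below is illustrative only: the function name, the single-round framing, and the parameters `slash_fraction` and `detection_prob` are assumptions introduced here, not parameters of any particular protocol.

```python
def attack_is_deterred(stake: float, slash_fraction: float,
                       detection_prob: float, manipulation_profit: float) -> bool:
    """Return True when the expected slashing loss exceeds the expected profit.

    Assumes (hypothetically) a single reporting round in which a fraudulent
    report is detected with probability `detection_prob`, a detected steward
    loses `slash_fraction` of `stake`, and an undetected one keeps the profit.
    """
    if not 0.0 <= detection_prob <= 1.0:
        raise ValueError("detection_prob must lie in [0, 1]")
    if not 0.0 <= slash_fraction <= 1.0:
        raise ValueError("slash_fraction must lie in [0, 1]")
    expected_loss = detection_prob * slash_fraction * stake
    expected_gain = (1.0 - detection_prob) * manipulation_profit
    return expected_loss > expected_gain
```

Under these assumptions, raising the stake or the detection probability widens the margin by which honest reporting dominates, which is the lever the mechanisms in this section are meant to provide.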

| Mechanism | Function |
| --- | --- |
| Staking Requirements | Ensures capital commitment from data stewards |
| Challenge Windows | Provides time for adversarial verification of reported data |
| Slashing Conditions | Penalizes dishonest participants via capital forfeiture |
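One way the three mechanisms in the table could fit together is sketched below as a small in-memory model. Everything here is hypothetical: the class names, the use of an integer clock for the challenge window, and the rule that a challenge succeeds when a report deviates from a reference value by more than a tolerance are assumptions chosen for illustration, not a description of an actual contract.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Report:
    steward: str
    value: float
    submitted_at: int          # hypothetical clock, e.g. a block height
    slashed: bool = False


@dataclass
class StewardshipRegistry:
    min_stake: float           # staking requirement
    challenge_window: int      # how long a report stays challengeable
    slash_fraction: float      # share of stake forfeited on a valid challenge
    stakes: Dict[str, float] = field(default_factory=dict)

    def deposit(self, steward: str, amount: float) -> None:
        self.stakes[steward] = self.stakes.get(steward, 0.0) + amount

    def submit(self, steward: str, value: float, now: int) -> Report:
        # Only stewards with enough capital at risk may report.
        if self.stakes.get(steward, 0.0) < self.min_stake:
            raise PermissionError("stake below minimum")
        return Report(steward, value, now)

    def challenge(self, report: Report, reference_value: float,
                  tolerance: float, now: int) -> bool:
        """Slash the steward if the challenge is timely and valid."""
        if now > report.submitted_at + self.challenge_window:
            return False       # window closed, report can no longer be disputed
        if abs(report.value - reference_value) <= tolerance:
            return False       # report was accurate enough, challenge fails
        self.stakes[report.steward] *= (1.0 - self.slash_fraction)
        report.slashed = True
        return True

    def is_final(self, report: Report, now: int) -> bool:
        # A report becomes usable only after surviving its challenge window.
        return (now > report.submitted_at + self.challenge_window
                and not report.slashed)
```

In this sketch a report feeds the pricing logic only once `is_final` returns True, so the challenge window trades data freshness for verification time.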

The mathematical modeling of these systems often employs Bayesian inference to aggregate reports from multiple stewards, effectively filtering out noise and intentional inaccuracies. When stewards provide inputs, the protocol evaluates these against historical distributions and consensus among peers.
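As a minimal sketch of this kind of aggregation, the example below uses a Gaussian prior (standing in for the historical distribution) and treats each steward report as a noisy Gaussian observation. The Gaussian model, the function name, and the idea of assigning each steward a report variance are assumptions made for illustration; the source does not specify a particular model.

```python
from typing import Iterable, Tuple


def bayesian_aggregate(prior_mean: float, prior_var: float,
                       reports: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Combine a Gaussian prior with Gaussian reports.

    `reports` yields (value, variance) pairs, where the variance is a
    hypothetical per-steward noise estimate. Returns the posterior
    (mean, variance) from the standard conjugate-normal update.
    """
    if prior_var <= 0:
        raise ValueError("prior_var must be positive")
    precision = 1.0 / prior_var
    weighted_sum = prior_mean / prior_var
    for value, var in reports:
        if var <= 0:
            raise ValueError("report variance must be positive")
        precision += 1.0 / var          # precise reports add more weight
        weighted_sum += value / var
    return weighted_sum / precision, 1.0 / precision


# Usage: two tight reports near 101-102 and one noisy outlier at 150.
mean, var = bayesian_aggregate(100.0, 4.0, [(102.0, 1.0), (101.0, 1.0), (150.0, 100.0)])
```

Because weights are inverse variances, the outlier with a large assumed variance barely moves the posterior mean, which is how the aggregate down-weights unreliable reports in this model.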

Stewardship theory holds that a rational participant reports honestly only when the expected cost of adversarial action exceeds the expected gain from the manipulation it enables.

The system architecture must account for network latency, as the speed of information propagation directly impacts the arbitrage opportunities available to sophisticated traders. A failure to synchronize the steward network with the settlement frequency of the options leads to significant slippage and misalignment between the derivative price and the underlying spot market.
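A simple guard against this synchronization failure is to reject reports that are too old relative to the settlement interval. The sketch below is a hypothetical illustration: the `max_lag_fraction` threshold and both function names are assumptions, and timestamps are taken to be in the same unit as the interval.

```python
from typing import Optional, Sequence, Tuple


def is_fresh(report_ts: float, now: float, settlement_interval: float,
             max_lag_fraction: float = 0.5) -> bool:
    """True if the report's age is within a fraction of the settlement interval."""
    age = now - report_ts
    if age < 0:
        raise ValueError("report timestamp is in the future")
    return age <= max_lag_fraction * settlement_interval


def latest_fresh_price(reports: Sequence[Tuple[float, float]], now: float,
                       settlement_interval: float) -> Optional[float]:
    """Return the price of the newest fresh (timestamp, price) report, or None."""
    fresh = [r for r in reports if is_fresh(r[0], now, settlement_interval)]
    if not fresh:
        return None            # caller should pause settlement rather than use stale data
    return max(fresh, key=lambda r: r[0])[1]
```

Returning `None` instead of the last known price makes staleness explicit, leaving the decision to halt or fall back with the settlement logic.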

Approach

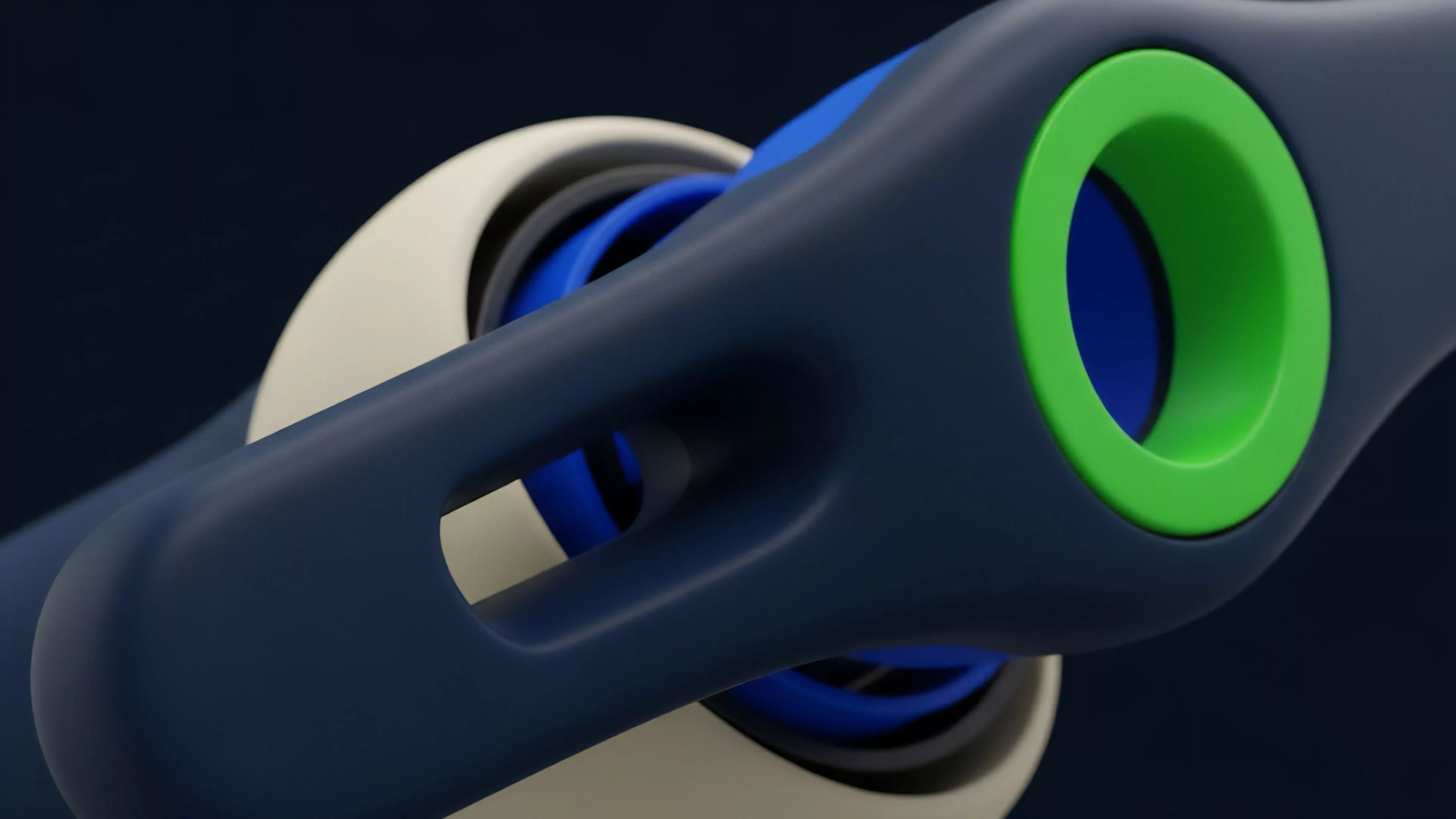

Current implementation strategies for Data Stewardship Programs emphasize modularity and multi-layered validation. Rather than relying on a monolithic data source, protocols now deploy distinct stewardship layers that handle different data types, ranging from real-time spot price feeds to complex implied volatility surface updates.

- Reputation Scoring: Participants accumulate non-transferable scores based on historical data accuracy.

- Latency Mitigation: Use of specialized off-chain computation nodes to process high-frequency market data.

- Incentive Alignment: Protocol revenue streams are directly linked to the performance metrics of the stewardship network.
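Reputation scoring of the kind listed above could, for example, be maintained as an exponentially weighted average of per-report accuracy. This is a sketch under assumed rules: the accuracy definition, the smoothing factor `alpha`, and the error cap `max_rel_error` are hypothetical choices, not values taken from any protocol.

```python
def update_reputation(score: float, reported: float, reference: float,
                      alpha: float = 0.1, max_rel_error: float = 0.01) -> float:
    """Blend the previous score with the accuracy of the latest report.

    Accuracy is 1.0 for an exact match and falls linearly to 0.0 once the
    relative error reaches `max_rel_error` (both hypothetical conventions).
    """
    if reference == 0:
        raise ValueError("reference must be non-zero")
    rel_error = abs(reported - reference) / abs(reference)
    accuracy = max(0.0, 1.0 - rel_error / max_rel_error)
    return (1.0 - alpha) * score + alpha * accuracy
```

Keeping the score in a mapping keyed by steward identity, with no transfer operation, is one way to reflect the non-transferable property; a small `alpha` means a long accurate history cannot be erased or rebuilt quickly.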

This approach forces a shift in how market makers manage their own risk, as they must now monitor the health and responsiveness of the stewardship layer as closely as the volatility of the underlying asset. The technical architecture often involves zero-knowledge proofs to verify that the data processed by a steward matches the raw data sourced from external exchanges without exposing sensitive order flow information.

Evolution

The trajectory of Data Stewardship Programs shows a shift from reactive, manual governance to proactive, automated oversight. Initially, data stewardship existed as a peripheral concern, often delegated to third-party providers with limited accountability.

As market cycles matured, the systemic risks associated with inaccurate data became undeniable, forcing protocols to internalize the stewardship function. The integration of advanced statistical modeling into the stewardship layer has transformed the role of the participant from a simple relay node to an active risk analyst. Modern protocols now require stewards to calculate and verify risk parameters, such as the Greek sensitivities of complex option structures, before these are accepted by the smart contract.
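To make the idea of verifying a reported Greek concrete, the sketch below recomputes a European call delta and compares it with a steward's reported value. The Black-Scholes model is used purely as an assumed example (the text does not name a pricing model), and the function names and tolerance are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, rate: float,
                  vol: float, t: float) -> float:
    """Black-Scholes delta of a European call with no dividends."""
    if min(spot, strike, vol, t) <= 0:
        raise ValueError("spot, strike, vol and t must be positive")
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return norm_cdf(d1)


def verify_reported_delta(reported: float, spot: float, strike: float,
                          rate: float, vol: float, t: float,
                          tol: float = 1e-4) -> bool:
    """Accept the steward's delta only if it matches an independent recomputation."""
    return abs(reported - bs_call_delta(spot, strike, rate, vol, t)) <= tol
```

For an at-the-money call with `spot = strike = 100`, `rate = 0`, `vol = 0.2`, and `t = 1`, `d1` is 0.1, so a reported delta of about 0.5398 would pass while 0.60 would be rejected.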

Systemic resilience requires the continuous evolution of stewardship mechanisms to counter increasingly sophisticated adversarial attacks on price discovery.

The current landscape reflects a transition toward cross-chain interoperability, where stewardship programs must maintain data integrity across disparate blockchain environments. This expansion necessitates standardized protocols for data transmission, ensuring that the integrity of an option’s pricing logic remains consistent even as it moves between liquidity venues.

Horizon

The future of Data Stewardship Programs points toward the complete automation of data validation through decentralized AI-driven agents. These agents will monitor the entire market spectrum, detecting anomalies in price formation and volatility shifts with higher precision than human-managed systems.

- Autonomous Validation: AI agents will replace manual stewardship in high-frequency option markets.

- Cross-Protocol Integration: Unified data standards will allow for seamless option pricing across all decentralized venues.

- Dynamic Slashing: Real-time risk assessment will adjust staking requirements based on current market volatility.
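The volatility-linked staking adjustment in the last item might look like the following. This is a speculative sketch: the linear scaling rule, the floor at the base stake, and all names are assumptions introduced for illustration.

```python
def required_stake(base_stake: float, current_vol: float,
                   reference_vol: float) -> float:
    """Scale the staking requirement with volatility, never below the base.

    Hypothetical rule: the requirement grows linearly once current
    volatility exceeds a reference level.
    """
    if base_stake < 0 or current_vol < 0 or reference_vol <= 0:
        raise ValueError("invalid inputs")
    return base_stake * max(1.0, current_vol / reference_vol)
```

The intuition is that manipulation tends to be more profitable in volatile markets, so the capital at risk would need to rise with it for the deterrent to hold.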

The next iteration of these systems will likely prioritize the reduction of information latency to near-zero, enabling decentralized options to compete directly with centralized derivatives platforms on execution speed and capital efficiency. The ultimate objective is the creation of a self-correcting financial infrastructure where the stewardship layer is indistinguishable from the underlying protocol consensus, rendering external manipulation technically impossible.