Essence

Data sharding techniques partition a distributed ledger's state and transaction history across multiple sub-networks, called shards, that operate in parallel. This departs from the monolithic design of traditional blockchains, where every participant validates every transaction. Partitioning the network into distinct shards gives horizontal scalability: throughput can grow with the number of shards, and because no single node has to process the full load, hardware requirements stay low enough to preserve decentralization.

Data sharding enables horizontal scalability by partitioning ledger state across parallel sub-networks, allowing throughput to increase without requiring every node to process every transaction.

The fundamental utility of this architecture lies in easing the trade-offs of the trilemma of security, decentralization, and scalability. Each shard maintains its own state, creating smaller, more manageable environments for validation. The systemic implication is that consensus changes from a global bottleneck into a localized, efficient operation, permitting the network to process complex financial activity at a scale previously reserved for centralized entities.
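As a minimal sketch of how "each shard maintains its own state" could look, the Python below assigns accounts to shards by hashing the account identifier. The names `shard_for` and `ShardedLedger` and the shard count of 4 are hypothetical, and hash-modulo placement is only one possible assignment rule, not one prescribed by any particular protocol.

```python
import hashlib

NUM_SHARDS = 4  # hypothetical shard count, chosen only for illustration


def shard_for(account: str, num_shards: int = NUM_SHARDS) -> int:
    """Map an account identifier to a shard index by hashing it."""
    digest = hashlib.sha256(account.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an integer, then reduce modulo
    # the shard count so the result is always a valid shard index.
    return int.from_bytes(digest[:8], "big") % num_shards


class ShardedLedger:
    """Toy ledger in which each shard stores only its own accounts."""

    def __init__(self, num_shards: int = NUM_SHARDS) -> None:
        self.num_shards = num_shards
        self.shards = [{} for _ in range(num_shards)]  # one balance map per shard

    def credit(self, account: str, amount: int) -> None:
        shard = self.shards[shard_for(account, self.num_shards)]
        shard[account] = shard.get(account, 0) + amount

    def balance(self, account: str) -> int:
        shard = self.shards[shard_for(account, self.num_shards)]
        return shard.get(account, 0)


if __name__ == "__main__":
    ledger = ShardedLedger()
    ledger.credit("alice", 10)
    ledger.credit("bob", 5)
    print(shard_for("alice"), ledger.balance("alice"))
```

Under this layout, a validator assigned to one shard stores and checks only that shard's balance map, which is the property that lets validation work run in parallel.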

Origin

The concept emerged from the necessity to resolve the throughput limitations inherent in early proof-of-work protocols.

As network activity increased, the requirement for every node to maintain the entire state of the chain led to congestion and prohibitive transaction costs. Researchers sought inspiration from database management systems, specifically the practice of horizontal partitioning, to distribute the load across multiple servers.

- Database Partitioning: The historical practice of splitting large databases into smaller, faster, and more easily managed pieces.

- State Bloat Mitigation: The primary driver for sharding, addressing the unsustainable growth of the global ledger state.

- Parallel Validation: The technical shift toward concurrent processing of transaction blocks rather than sequential execution.

This transition reflects the broader evolution of distributed systems, moving from single-threaded, globally consistent states toward multi-threaded, asynchronously reconciled architectures. The shift acknowledges that global consensus is expensive and often redundant for non-conflicting transactions, leading to the design of protocols that optimize for localized truth.

Theory

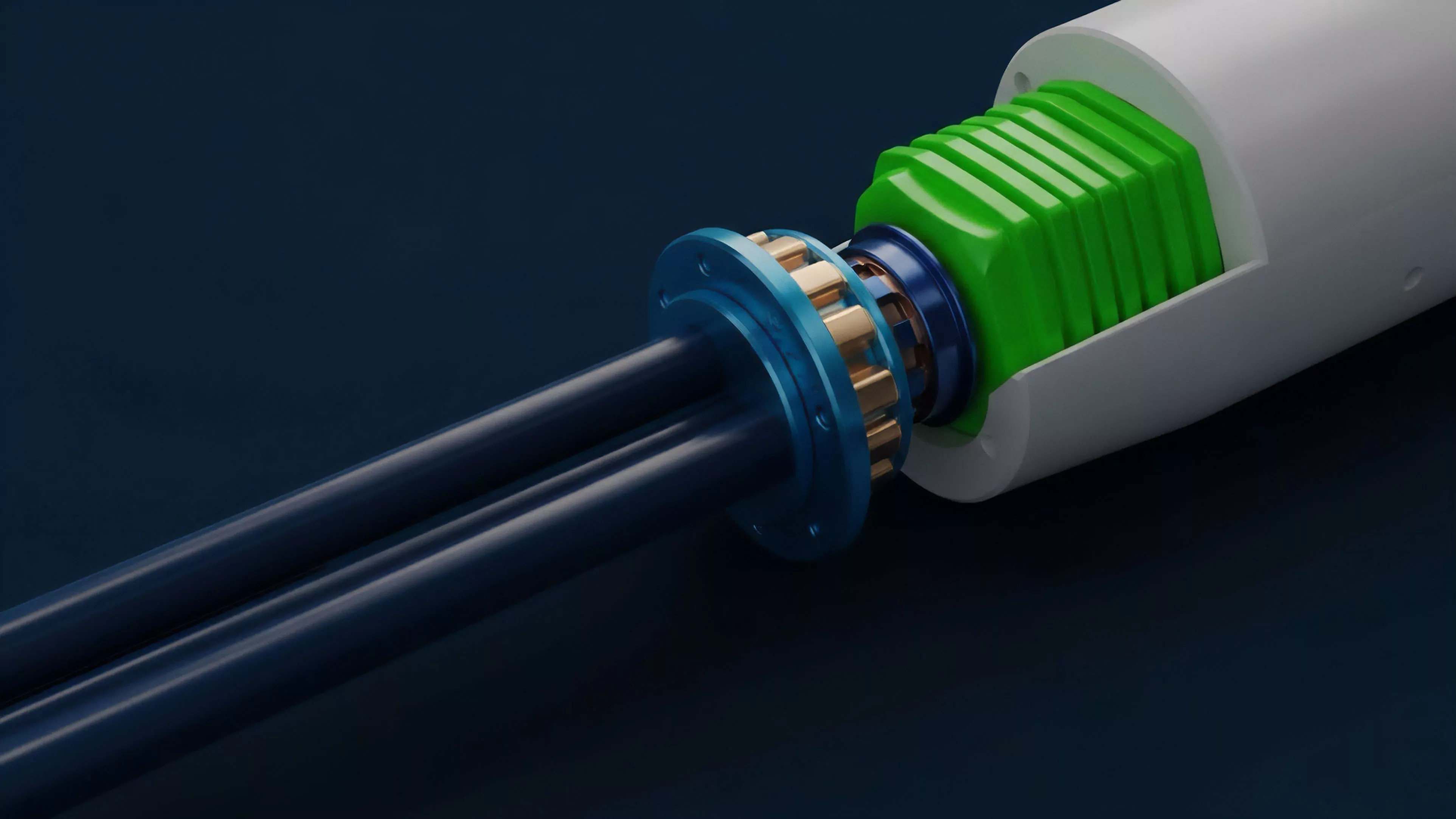

The security argument for data sharding techniques rests on the assumption that the network's total security can be preserved through random sampling and cross-shard communication protocols, even though each shard is validated by only a subset of nodes. The system must ensure that no single shard becomes an isolated, vulnerable environment.
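One common way to reason about the sampling assumption is to ask how likely it is that a randomly drawn shard committee ends up controlled by dishonest validators. The sketch below is an illustration under stated assumptions, not a model taken from any specific protocol: seats are filled independently from a very large validator pool (a binomial approximation), and a shard counts as compromised once at least two thirds of its committee is malicious. The function name and the 30% adversary share are hypothetical.

```python
from math import comb


def committee_takeover_probability(
    committee_size: int,
    malicious_fraction: float,
    threshold_num: int = 2,
    threshold_den: int = 3,
) -> float:
    """Chance that at least threshold_num/threshold_den of a committee is malicious.

    Assumes each seat is filled independently from a very large validator
    pool (binomial model), an approximation of sampling without replacement.
    """
    n, p = committee_size, malicious_fraction
    # Smallest number of malicious seats that reaches the threshold,
    # computed as an integer ceiling to avoid floating-point rounding.
    k_min = (threshold_num * n + threshold_den - 1) // threshold_den
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


if __name__ == "__main__":
    for size in (16, 64, 128):
        print(size, committee_takeover_probability(size, 0.30))
```

The takeover probability falls quickly as the committee grows, which is why sharded designs tend to require a minimum committee size per shard rather than splitting validators arbitrarily thin.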

This requires sophisticated cryptographic primitives, such as data availability proofs, which let participants verify that transaction data has been published and is retrievable without any one of them having to download it in full.
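The intuition behind probabilistic availability checks can be shown with a short calculation. The sketch assumes the block data is erasure-coded so that an attacker must withhold at least half of the chunks to make it unrecoverable, and that a light node samples chunks uniformly at random with replacement. Both the 50% figure and the function names are illustrative assumptions. Under them, every successful sample halves the chance that withheld data goes unnoticed.

```python
import math


def miss_probability(samples: int, withheld_fraction: float = 0.5) -> float:
    """Chance that every one of `samples` random queries hits an available chunk.

    `withheld_fraction` is the share of chunks the attacker hides; 0.5 is an
    assumed minimum for a rate-1/2 erasure code, not a protocol constant.
    """
    return (1.0 - withheld_fraction) ** samples


def samples_needed(target_miss_probability: float, withheld_fraction: float = 0.5) -> int:
    """Smallest sample count that pushes the miss probability below the target."""
    return math.ceil(
        math.log(target_miss_probability) / math.log(1.0 - withheld_fraction)
    )


if __name__ == "__main__":
    k = samples_needed(1e-9)
    print(k, miss_probability(k))
```

The number of samples depends on the target confidence and not on the block size, which is what allows light nodes to check availability cheaply.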

| Component | Functional Mechanism |
| --- | --- |
| State Partitioning | Distributes account balances and contract data across shards |
| Cross-Shard Communication | Enables asset movement between isolated partitions |
| Data Availability | Ensures published transaction data is retrievable, verified via cryptographic sampling |

The integrity of sharded systems relies on cryptographic proofs that verify data accessibility across the network, ensuring security without requiring every node to hold the entire state.

Adversarial game theory plays a critical role here. Participants are incentivized to act honestly within their shard, while randomized validator rotation makes collusion harder to coordinate, because validators cannot know far in advance which shard they will serve. The system must also account for the latency introduced by cross-shard messaging. Funds in flight between shards cannot serve as collateral or margin until the message settles, which can create temporary liquidity traps for derivatives markets if settlement times are not strictly bounded by the protocol architecture.
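Randomized validator rotation can be sketched as a seeded shuffle that is recomputed every epoch. In the example below, `assign_committees`, the `randomness` input, and the epoch numbering are hypothetical. Python's `random.Random` is used only as a deterministic stand-in for a real randomness beacon or verifiable random function and is not cryptographically secure.

```python
import hashlib
import random
from typing import List, Sequence


def assign_committees(
    validators: Sequence[str],
    num_shards: int,
    epoch: int,
    randomness: bytes,
) -> List[List[str]]:
    """Shuffle validators with an epoch-specific seed and deal them into shards.

    `randomness` stands in for the output of a randomness beacon (assumed).
    """
    # Derive a fresh seed per epoch so that assignments change every rotation.
    seed_bytes = hashlib.sha256(randomness + epoch.to_bytes(8, "big")).digest()
    rng = random.Random(int.from_bytes(seed_bytes, "big"))  # NOT secure, demo only

    shuffled = list(validators)
    rng.shuffle(shuffled)

    # Deal validators round-robin so committee sizes differ by at most one.
    return [shuffled[i::num_shards] for i in range(num_shards)]


if __name__ == "__main__":
    vals = [f"validator-{i}" for i in range(12)]
    for epoch in (1, 2):
        print(epoch, assign_committees(vals, 3, epoch, b"beacon-output"))
```

Because every node can recompute the same assignment from the shared randomness, no coordinator is needed, and an attacker has to corrupt validators before knowing which shard they will end up in.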

Approach

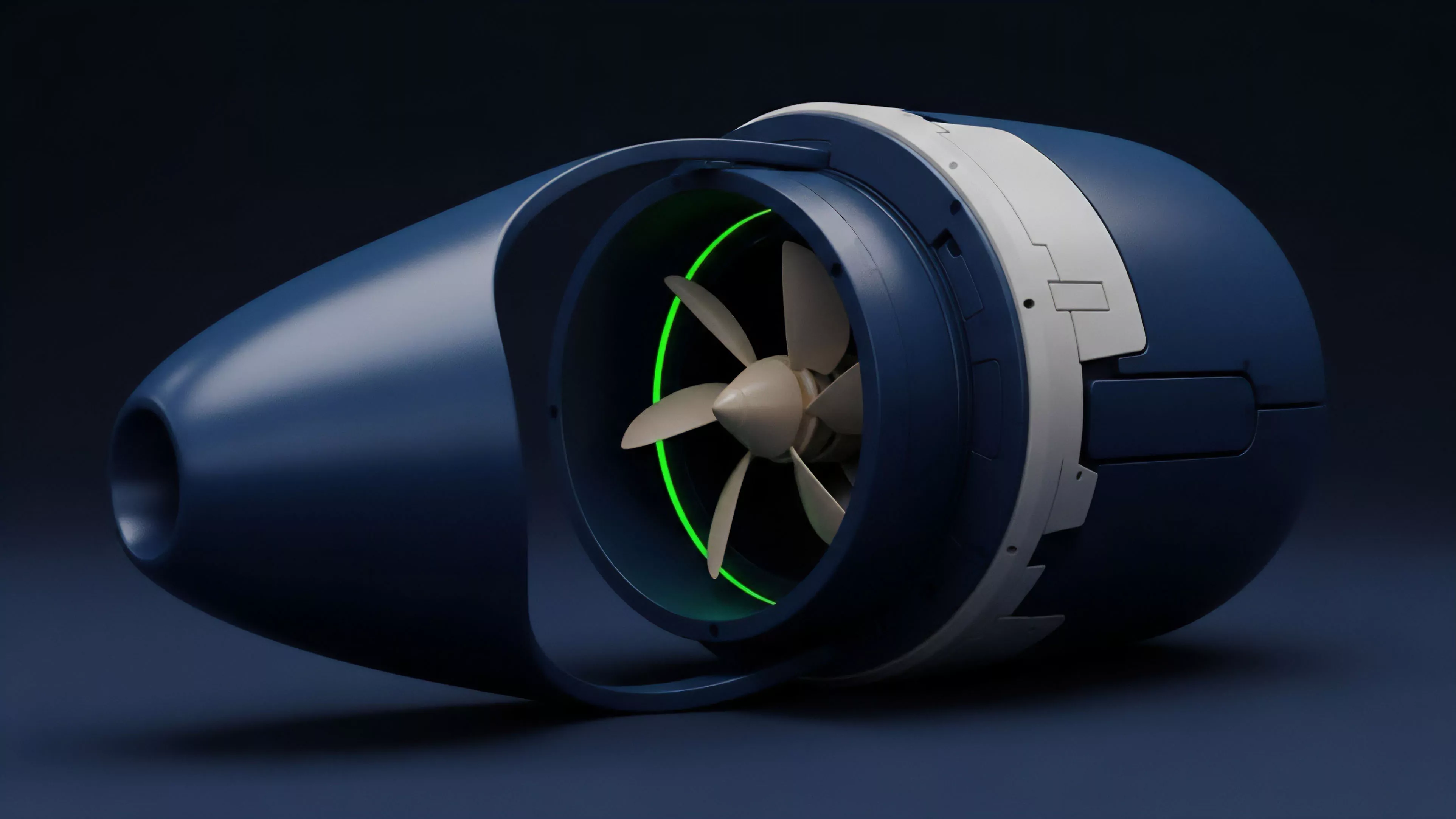

Current implementations focus on modularity, where the execution layer is decoupled from the data availability (DA) layer.

Protocols now use blob storage and specialized DA layers to optimize how data is published and verified. This architecture lets developers build decentralized exchanges and options platforms with low settlement latency, provided the underlying chain supplies enough throughput for high-frequency order book updates.

- Execution Sharding: Processing smart contract logic in parallel across different shards.

- Data Availability Sampling: Allowing light nodes to verify data existence through probabilistic checks.

- Synchronous Composability: Maintaining the ability for assets to interact across shards without excessive latency.

The primary challenge remains the maintenance of synchronous composability. When liquidity is fragmented across shards, capital efficiency suffers. Because strict synchrony across shards is expensive, modern approaches rely on asynchronous messaging protocols that support atomic swaps. This reduces the cost of fragmented liquidity pools while keeping the performance benefits of a sharded environment, at the price of a delay between the two legs of a cross-shard operation.
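A simple way to picture asynchronous cross-shard messaging is a receipt-based transfer: the source shard debits the sender and emits a receipt, and the destination shard later credits the recipient when it consumes that receipt. The sketch below shows this simpler pattern rather than a full atomic swap. The `Shard` and `Receipt` classes are hypothetical, and the proof that a receipt really was included on the source shard (for example, a Merkle proof against a shard header) is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Receipt:
    receipt_id: Tuple[int, int]  # (source shard id, sequence number)
    dest_shard: int
    recipient: str
    amount: int


@dataclass
class Shard:
    shard_id: int
    balances: Dict[str, int] = field(default_factory=dict)
    outbox: List[Receipt] = field(default_factory=list)
    consumed: Set[Tuple[int, int]] = field(default_factory=set)

    def send(self, sender: str, dest_shard: int, recipient: str, amount: int) -> Receipt:
        """Phase 1 (source shard): debit the sender and emit a receipt."""
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            raise ValueError("invalid amount or insufficient funds")
        self.balances[sender] -= amount
        receipt = Receipt((self.shard_id, len(self.outbox)), dest_shard, recipient, amount)
        self.outbox.append(receipt)
        return receipt

    def receive(self, receipt: Receipt) -> bool:
        """Phase 2 (destination shard): credit the recipient at most once."""
        if receipt.dest_shard != self.shard_id:
            raise ValueError("receipt addressed to another shard")
        if receipt.receipt_id in self.consumed:
            return False  # replayed receipt, ignore it
        self.consumed.add(receipt.receipt_id)
        self.balances[receipt.recipient] = (
            self.balances.get(receipt.recipient, 0) + receipt.amount
        )
        return True


if __name__ == "__main__":
    a, b = Shard(0, {"alice": 10}), Shard(1)
    r = a.send("alice", dest_shard=1, recipient="bob", amount=4)
    print(a.balances, b.balances)  # funds are in flight between the two phases
    b.receive(r)
    print(a.balances, b.balances)
```

Between `send` and `receive` the funds exist on neither shard's balance sheet, which is the settlement gap that the composability discussion above is concerned with.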

Evolution

The transition from simple state sharding to complex, multi-layered architectures marks the maturation of the technology.

Early designs were rigid, forcing fixed roles upon nodes. Current iterations are dynamic, allowing for adaptive shard sizing based on real-time network load. This evolution mirrors the development of cloud infrastructure, where resources are allocated based on demand rather than static capacity.

Dynamic sharding allows protocols to adapt resource allocation based on network demand, transforming the ledger into a flexible, responsive financial infrastructure.
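To make demand-driven shard sizing concrete, the sketch below picks a shard count from observed load. It is an illustration only: `target_shard_count`, the per-shard capacity figure, the 80% headroom factor, and the shard limits are all assumed values, and real protocols would also need rules for migrating state when shards split or merge.

```python
import math


def target_shard_count(
    observed_tps: float,
    capacity_per_shard_tps: float,
    min_shards: int = 1,
    max_shards: int = 64,
    headroom: float = 0.8,
) -> int:
    """Return how many shards are needed to serve the observed load.

    Each shard is planned to run at no more than `headroom` of its capacity,
    and the result is clamped to the range [min_shards, max_shards].
    """
    usable_capacity = capacity_per_shard_tps * headroom
    needed = math.ceil(observed_tps / usable_capacity)
    return max(min_shards, min(max_shards, needed))


if __name__ == "__main__":
    for load in (100, 1_000, 1_000_000):
        print(load, target_shard_count(load, capacity_per_shard_tps=500))
```

A production design would likely add hysteresis so that the shard count does not oscillate when load hovers near a boundary.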

There is a parallel with the development of high-frequency trading platforms in traditional finance, where low-latency execution is paramount. The system is moving toward a model in which the user remains unaware of the underlying sharding structure and interacts with a seamless interface while the protocol reconciles state in the background. This shift is essential for attracting institutional capital, which demands both performance and rigorous, verifiable security.

Horizon

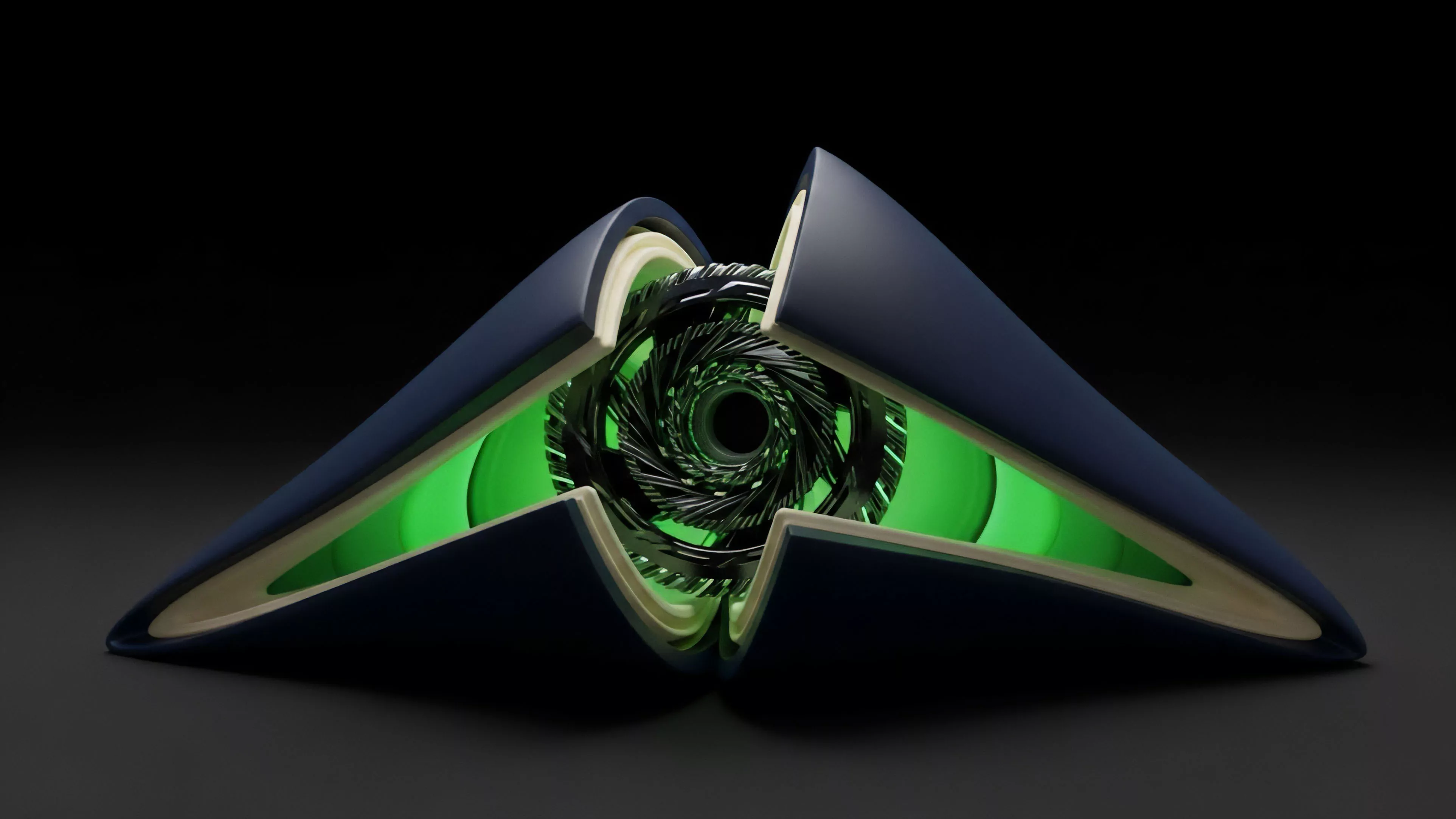

Future development centers on ZK-Sharding, where zero-knowledge proofs replace traditional validation for cross-shard verification.

This advancement could sharply reduce the communication overhead between shards, because a shard only has to verify a succinct proof instead of re-executing or re-downloading another shard's transactions. That would ease the latency issues that currently hinder the adoption of complex derivative instruments. The integration of these techniques is intended to support a global, permissionless market where capital efficiency is bounded by network propagation delay rather than by the speed of global consensus.

| Technological Trend | Financial Impact |
| --- | --- |
| ZK-Proofs | Compressed, trustless cross-shard settlement |
| Adaptive Sharding | Resilient throughput during market volatility |
| Modular DA | Lowered cost of derivative data storage |

The ultimate goal is the creation of a unified, high-performance financial operating system. As these techniques mature, the speed gap between centralized and decentralized exchanges should narrow and may eventually vanish, forcing a reconfiguration of how liquidity is provided and how market risk is priced across the digital asset landscape.