Essence

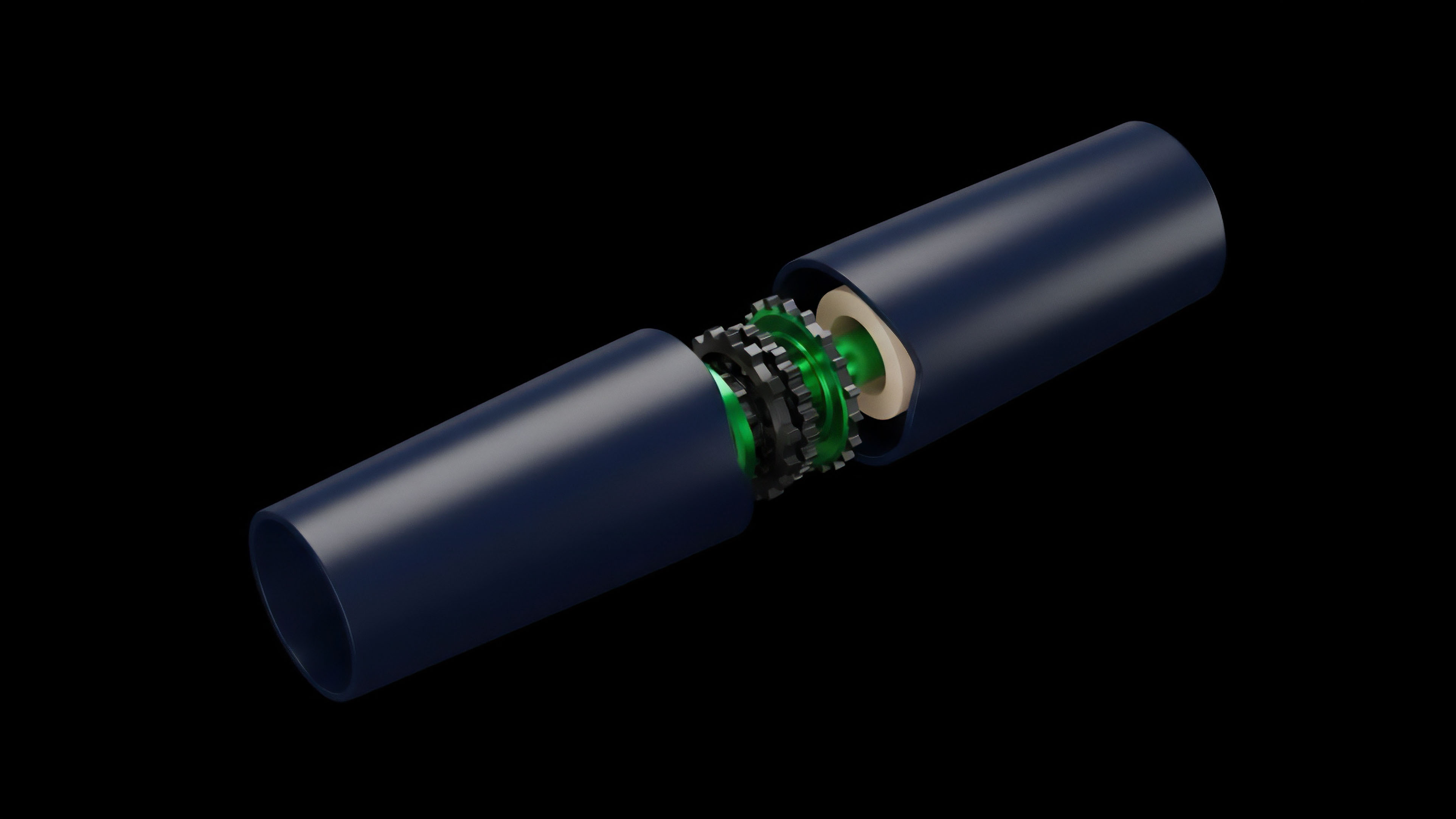

Data Quality Assurance (DQA) within crypto options markets represents the set of processes and protocols required to verify the integrity, accuracy, and timeliness of the underlying data feeds that govern financial logic. In decentralized finance (DeFi), DQA is fundamentally a systems risk management function, directly addressing the critical vulnerabilities introduced by external information sources, namely oracles. The core challenge in options trading, whether centralized or decentralized, is pricing and managing risk based on reliable inputs.

For decentralized protocols, a single bad data point can lead to catastrophic liquidations or incorrect settlements, undermining the entire system’s solvency. DQA is therefore not simply a compliance checkbox; it is the essential mechanism that validates the “truth” of the inputs before they are processed by a smart contract.

The integrity of a derivatives market hinges on the assumption that all participants are operating from the same, verifiable information. When we talk about options, this data includes not only the price of the underlying asset but also the time remaining until expiration and the calculation of implied volatility. If a protocol uses a manipulated price feed, an attacker can profit by triggering liquidations or exercising options at an artificial price.

DQA aims to prevent this by establishing rigorous standards for data collection, aggregation, and validation before the data is accepted by the options protocol’s risk engine.

Data Quality Assurance is the foundational layer that validates the “truth” of inputs for financial logic in decentralized options protocols.

Origin

The necessity for robust DQA in crypto derivatives protocols stems from a specific history of financial systems failures, both in traditional finance and in the early days of DeFi. In traditional markets, data quality issues often arose from latency arbitrage, where high-frequency traders exploited microsecond delays in price feed updates across different venues. However, the origin story in DeFi is distinct and far more adversarial.

The “oracle problem” became a central concern during the initial rise of DeFi, as early protocols were repeatedly exploited through price manipulation attacks. These attacks often involved flash loans, where an attacker borrowed a large amount of capital to temporarily manipulate the price of an asset on a low-liquidity exchange. If a derivatives protocol relied on this single, manipulated price feed, the attacker could trigger liquidations or profit from arbitrage against the protocol’s treasury.

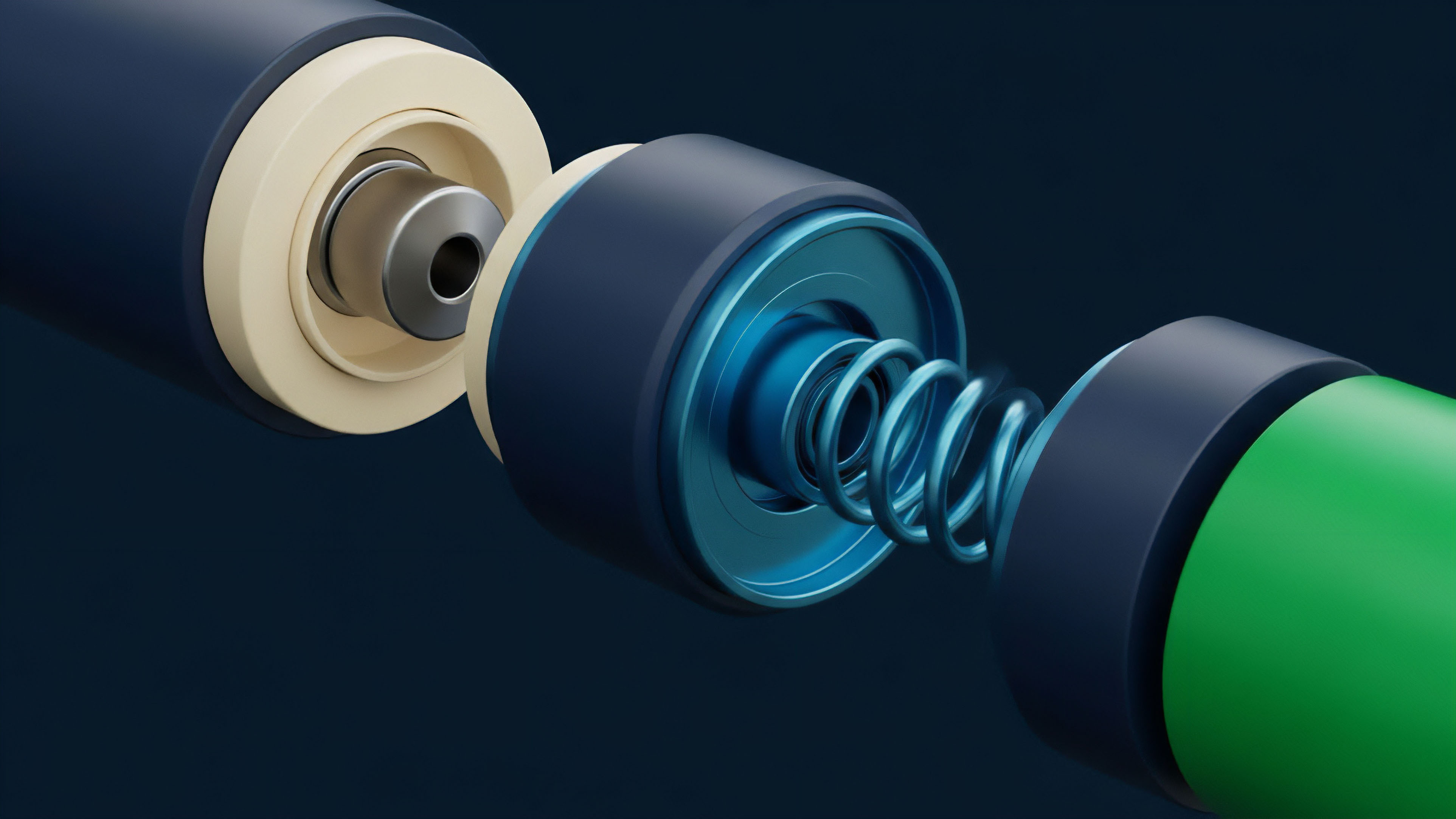

The early failures of protocols relying on single-source oracles, often resulting in millions of dollars lost, forced a rapid evolution in DQA practices. The industry quickly recognized that data integrity requires a decentralized, multi-layered approach. The solution was to move beyond single-point feeds and towards aggregated data sources.

This evolution began with the implementation of Time-Weighted Average Prices (TWAPs) and Medianizers. TWAPs average prices over time, while medianizers take the median across multiple sources; both dampen short-lived spikes and mitigate manipulation. The focus shifted from simply acquiring data to actively verifying its provenance and resistance to manipulation. This transition from a single data point to a verifiable data stream is the origin point for modern DQA in decentralized derivatives.

Theory

The theoretical foundation of DQA in crypto options is a synthesis of market microstructure analysis and quantitative finance principles. From a quantitative perspective, options pricing models like Black-Scholes require five primary inputs: the underlying asset price (S), strike price (K), time to expiration (T), risk-free rate (r), and implied volatility (sigma). DQA specifically targets the integrity of S, T, and sigma, as K and r are generally fixed parameters within the smart contract logic.
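To make the dependence on these inputs concrete, here is a minimal sketch of the Black-Scholes price of a European call, assuming no dividends. The function names (`norm_cdf`, `bs_call`) and the sample numbers are illustrative assumptions, not values from any particular protocol. It shows how a 1% error in the reported underlying price S changes the option value.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(S: float, K: float, T: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call (no dividends).

    S: underlying price, K: strike, T: time to expiration in years,
    r: risk-free rate, sigma: annualized volatility.
    """
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


# Hypothetical inputs: a 30-day at-the-money call on an asset priced at 2000.
clean = bs_call(S=2000.0, K=2000.0, T=30 / 365, r=0.03, sigma=0.8)
# The same option priced from a feed that reports S 1% too high.
skewed = bs_call(S=2020.0, K=2000.0, T=30 / 365, r=0.03, sigma=0.8)

print(f"clean feed:  {clean:.2f}")
print(f"skewed feed: {skewed:.2f}")
print(f"relative pricing error: {(skewed - clean) / clean:.2%}")
```

Because an option is a leveraged claim on the underlying, the relative error in the option price is larger than the relative error in S, which is why the price feed is the first target of DQA.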

The theoretical challenge lies in maintaining data integrity across a system where every participant is incentivized to exploit data discrepancies. This requires a shift from simple data validation to a game theory approach. DQA protocols must be designed to make the cost of manipulation significantly higher than the potential profit.

This is achieved through a combination of economic incentives and statistical methods. The primary theoretical mechanisms for achieving this are:

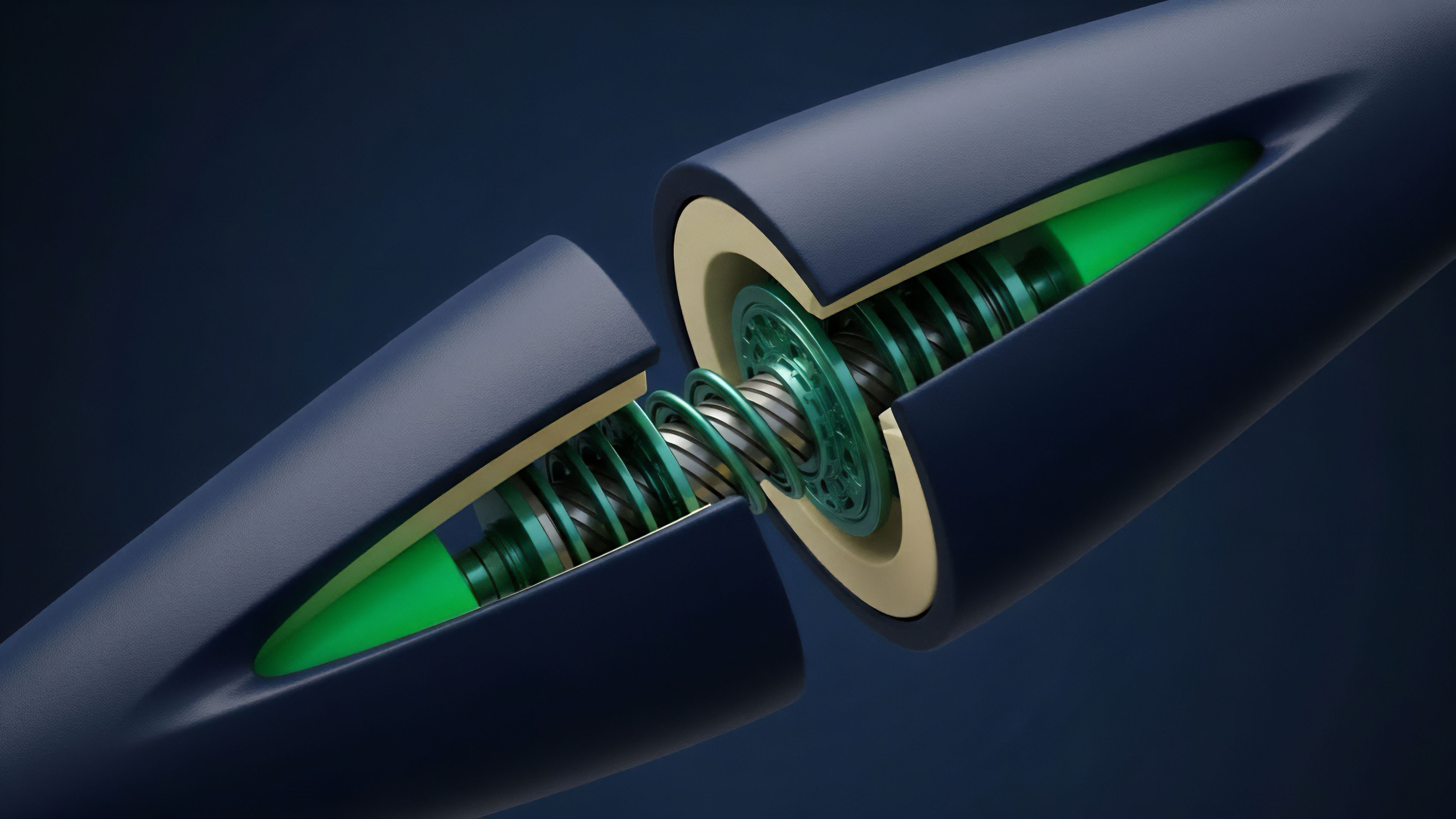

- Data Source Aggregation: The principle that aggregating prices from multiple, independent sources (e.g. centralized exchanges, decentralized exchanges) reduces the impact of manipulation on any single source. The DQA system must apply specific weighting mechanisms to these sources based on liquidity and historical reliability.

- Time-Weighted Average Price (TWAP): A method for calculating a price feed by averaging the price over a specified time interval. The TWAP reduces the effectiveness of flash loan attacks because a flash loan must be repaid within a single transaction, whereas moving the average requires sustaining a manipulated price over a prolonged period, which is financially expensive.

- Outlier Detection and Data Validation: Statistical models are used to identify price points that deviate significantly from the norm. This involves calculating a moving average and standard deviation to flag and discard extreme outliers. A robust DQA system must also validate the time input (T) by ensuring accurate block time synchronization across the network.
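The TWAP mechanism in the list above can be sketched as follows. This is a minimal off-chain illustration, assuming observations arrive as `(timestamp, price)` pairs sorted by time and that each price holds until the next observation; the function name `twap` and the sample data are hypothetical.

```python
from typing import Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]], window_end: float) -> float:
    """Time-weighted average of (timestamp, price) observations.

    Each price is weighted by how long it remained the latest observation.
    The window runs from the first timestamp to window_end.
    """
    if not observations:
        raise ValueError("at least one observation is required")
    duration = window_end - observations[0][0]
    if duration <= 0:
        raise ValueError("window_end must be after the first observation")

    weighted_sum = 0.0
    for i, (ts, price) in enumerate(observations):
        # A price holds until the next observation, or until the window ends.
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        weighted_sum += price * (next_ts - ts)
    return weighted_sum / duration


# Hypothetical 30-minute window with a 12-second spike from 100 to 150.
obs = [(0.0, 100.0), (588.0, 150.0), (600.0, 100.0)]
print(twap(obs, window_end=1800.0))
```

In this example the spot price jumps by 50% for 12 seconds, but the 30-minute average moves by well under 1%, which illustrates why a short-lived manipulation has little effect on a TWAP feed.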

The challenge of implied volatility (IV) calculation presents a unique DQA problem. Unlike the underlying asset price, IV is not a directly observable market variable; it is derived from option prices. DQA for options protocols must ensure that the IV used in pricing models accurately reflects the market’s expectation of future volatility, rather than being skewed by low-liquidity option pools or malicious actors attempting to influence the IV surface.
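Since IV is backed out of an observed option price rather than read from a feed, any error in that price flows straight into the volatility estimate. The sketch below inverts the Black-Scholes call formula by bisection; it assumes a European call with no dividends, and the names `bs_call` and `implied_vol` as well as the volatility bracket are illustrative choices.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(S: float, K: float, T: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


def implied_vol(price: float, S: float, K: float, T: float, r: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Find sigma such that bs_call(...) matches the observed price.

    Bisection works because the call price increases monotonically in sigma.
    Raises ValueError if the price cannot be produced inside [lo, hi],
    which is itself a useful data quality signal.
    """
    if not bs_call(S, K, T, r, lo) <= price <= bs_call(S, K, T, r, hi):
        raise ValueError("option price is outside the attainable range")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(S, K, T, r, mid) < price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Round trip on hypothetical inputs: price an option at 60% volatility,
# then recover that volatility from the price alone.
quote = bs_call(S=100.0, K=100.0, T=0.25, r=0.02, sigma=0.6)
print(implied_vol(quote, S=100.0, K=100.0, T=0.25, r=0.02))
```

A quote from a thin option pool that sits far from fair value will produce a distorted IV here, or no solution at all, so the quality checks have to be applied to option quotes as well as to the underlying price.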

| Metric | Definition | Relevance to Options DQA |
|---|---|---|
| Latency | Time delay between data generation and protocol consumption. | High latency can lead to stale prices, creating arbitrage opportunities and incorrect liquidations. |
| Freshness | The recency of the data point used by the smart contract. | Ensures the protocol reacts to current market conditions, vital for short-term options and margin calls. |
| Deviation Threshold | The maximum allowable difference between a new price point and the previous price. | Prevents large price spikes from manipulation or fat-finger errors from affecting protocol state. |
| Source Count | The number of independent sources contributing to the aggregated price feed. | Reduces single-point-of-failure risk and increases the cost of manipulation. |
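The metrics in the table translate naturally into pre-acceptance checks. The following is a minimal sketch under assumed limits; the class names, field names, and default thresholds (60 seconds, 10%, three sources) are hypothetical and would be tuned per protocol.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FeedUpdate:
    price: float
    observed_at: float   # Unix time at which the sources produced the price
    source_count: int    # number of independent sources behind the price


@dataclass(frozen=True)
class QualityLimits:
    # Illustrative defaults only.
    max_age_seconds: float = 60.0
    max_deviation: float = 0.10   # 10% move versus the previous accepted price
    min_sources: int = 3


def check_update(update: FeedUpdate, previous_price: float, now: float,
                 limits: QualityLimits = QualityLimits()) -> List[str]:
    """Return the names of the failed checks; an empty list means accept."""
    failures = []
    # Latency / freshness: reject data that is too old when it is consumed.
    if now - update.observed_at > limits.max_age_seconds:
        failures.append("stale")
    # Deviation threshold: reject implausibly large jumps.
    if previous_price > 0:
        move = abs(update.price - previous_price) / previous_price
        if move > limits.max_deviation:
            failures.append("deviation")
    # Source count: reject feeds backed by too few independent sources.
    if update.source_count < limits.min_sources:
        failures.append("source_count")
    return failures


good = FeedUpdate(price=101.0, observed_at=1_000.0, source_count=5)
bad = FeedUpdate(price=130.0, observed_at=900.0, source_count=2)
print(check_update(good, previous_price=100.0, now=1_010.0))
print(check_update(bad, previous_price=100.0, now=1_010.0))
```

Returning the list of failed checks rather than a single boolean keeps the reason for a rejection available for later audit.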

Approach

Current DQA approaches for crypto options protocols prioritize resilience against manipulation over speed. The primary methodology involves creating a multi-layered defense system where data is first aggregated, then validated, and finally verified by a decentralized network before being accepted by the options vault.

Oracle Aggregation and Filtering

The most common approach is to source price data from multiple independent oracle networks and centralized exchanges. This creates redundancy. The DQA pipeline then filters this data using statistical methods.

This process typically involves:

- Source Selection: Protocols select sources based on liquidity and historical reliability. Sources with high trading volume and deep order books are weighted more heavily in the calculation.

- Medianization: The protocol calculates the median price from all sources, rather than the average. The median is less susceptible to outliers caused by a single malicious source.

- Outlier Rejection: Any price point that deviates significantly from the median (e.g. beyond two standard deviations) is discarded. This statistical approach prevents single data feeds from disproportionately influencing the final price.
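The medianization and outlier rejection steps above can be sketched as follows. This is a simplified, unweighted version (it ignores the liquidity weighting described under source selection), and the function name `aggregate_price` and the two-sigma default are assumptions for illustration.

```python
import statistics
from typing import Sequence


def aggregate_price(prices: Sequence[float], max_sigmas: float = 2.0) -> float:
    """Median of the source prices after discarding outliers.

    A price is discarded when it lies more than max_sigmas sample standard
    deviations away from the median of all reported prices.
    """
    if len(prices) < 3:
        raise ValueError("need at least three sources to reject outliers")
    center = statistics.median(prices)
    spread = statistics.stdev(prices)
    kept = [p for p in prices
            if spread == 0 or abs(p - center) <= max_sigmas * spread]
    return statistics.median(kept)


# Four sources agree near 100; one (possibly manipulated) source reports 150.
print(aggregate_price([100.0, 101.0, 99.0, 100.0, 150.0]))
```

One design caveat: the standard deviation is itself inflated by the outlier, so with very few sources a bad price may survive the filter. The final median still limits its influence, which is why the two steps are usually combined.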

The challenge here lies in balancing security with responsiveness. Aggregating data across many sources and over time introduces latency. While a longer TWAP period offers greater security against flash loans, it can also cause liquidations to occur at prices that are no longer representative of current market conditions, creating a different type of risk for users.

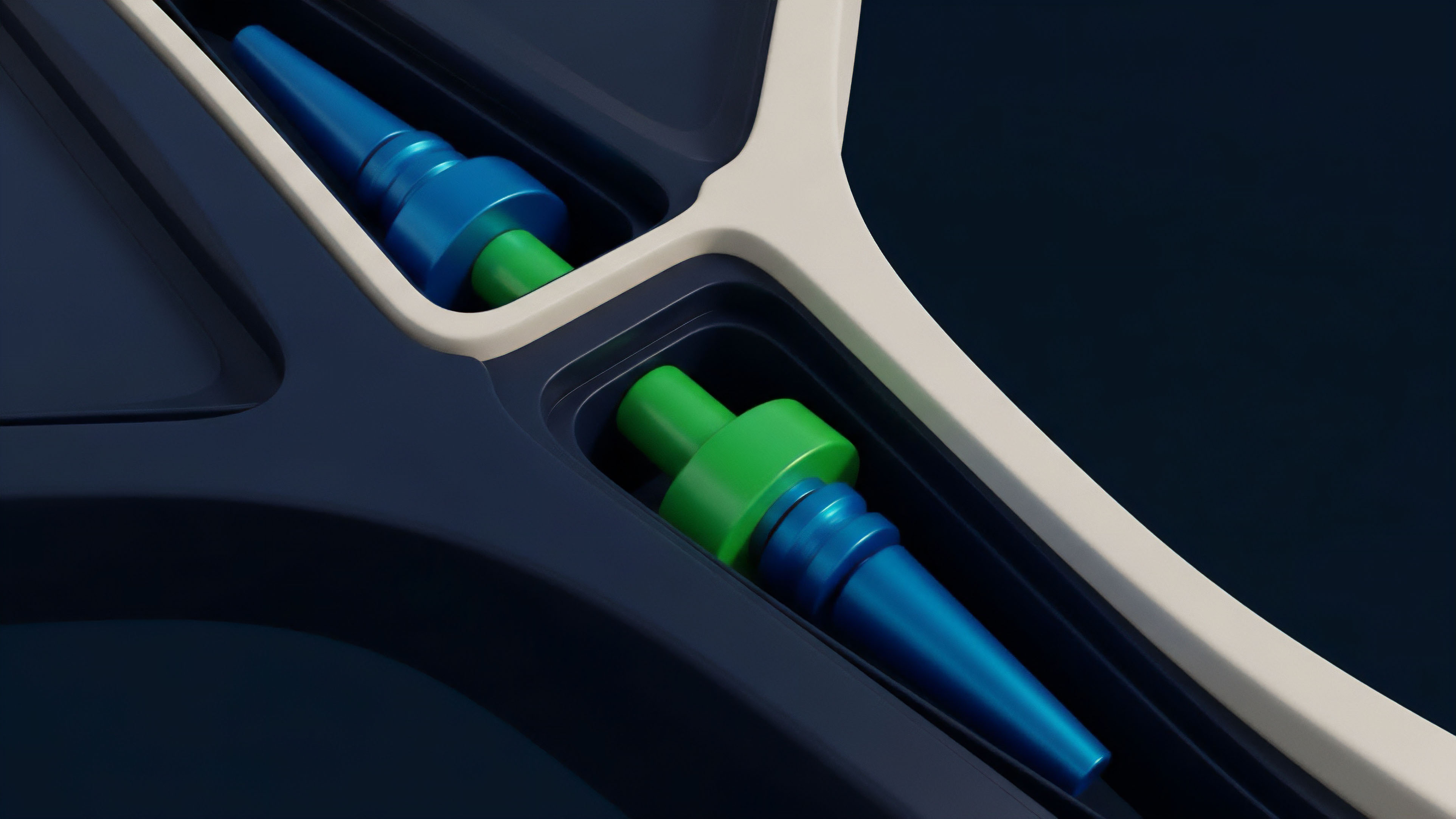

Data Provenance and Attestation

Advanced DQA systems are moving towards verifiable data provenance. This involves tracking the origin and journey of every data point from its source to its use in the smart contract. The goal is to provide a clear audit trail for all inputs.

For options, this is critical for post-mortem analysis of liquidations and for ensuring that the implied volatility calculations are based on verifiable inputs. Protocols are implementing cryptographic proofs to attest to the validity of data before it is submitted on-chain. This ensures that the data being used by the protocol has not been tampered with and meets the predefined quality standards.
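As a rough illustration of attestation, the sketch below attaches an authentication tag to a price report and rejects any report whose contents no longer match the tag. It uses an HMAC with a shared key purely to stay within the Python standard library; this is a stand-in, since production oracle networks typically rely on asymmetric signatures so that verifiers never hold the signing key. The report fields and key are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(report: dict) -> bytes:
    """Serialize a report deterministically so equal reports hash equally."""
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode("utf-8")


def attest(report: dict, key: bytes) -> str:
    """Produce an authentication tag over the report contents."""
    return hmac.new(key, canonical_bytes(report), hashlib.sha256).hexdigest()


def verify(report: dict, tag: str, key: bytes) -> bool:
    """Check the tag in constant time; False means the report was altered."""
    return hmac.compare_digest(attest(report, key), tag)


key = b"demo-key-not-for-production"   # hypothetical shared secret
report = {"source": "exchange-a", "pair": "ETH/USD",
          "price": 2000.0, "observed_at": 1700000000}
tag = attest(report, key)

print(verify(report, tag, key))                # untouched report
tampered = dict(report, price=1500.0)
print(verify(tampered, tag, key))              # price changed after attestation
```

Storing the source identifier and timestamp inside the attested payload is what gives the audit trail its value: the tag covers where the data came from as well as the price.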

Evolution

The evolution of DQA in crypto options reflects a continuous arms race between protocol designers and adversarial actors. Initially, DQA was a reactive measure, implemented after an exploit to patch a vulnerability. Today, it is a proactive design principle.

The shift from simple TWAPs to complex, multi-layered oracle networks represents a significant step forward.

From Single Feeds to Multi-Chain Networks

Early derivatives protocols often relied on single oracle feeds, which were easily compromised. The evolution has seen a move toward sophisticated, multi-chain data networks that provide redundant feeds. These networks not only aggregate data from multiple sources but also ensure that data is verified by independent validators across different blockchains.

This cross-chain verification introduces a new layer of security, making it substantially more expensive for an attacker to manipulate the data across all sources simultaneously. The complexity of options pricing requires a higher standard of data integrity than spot trading, necessitating a shift toward highly redundant data delivery mechanisms.

The evolution of DQA reflects a shift from reactive patching to proactive, multi-layered system design, where data integrity is treated as a core security feature rather than an afterthought.

Data-Driven Governance and Insurance

As DQA systems mature, they are being integrated directly into protocol governance. This allows for data integrity metrics to trigger automated responses, such as pausing liquidations if data quality falls below a certain threshold. Furthermore, the concept of data integrity insurance is gaining traction.

These insurance mechanisms are designed to compensate users for losses incurred due to oracle failures or data manipulation. This financial layer of protection ensures that even if a DQA system fails, the protocol has a mechanism to mitigate the systemic risk to users.
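The automated response described above, pausing liquidations when data quality degrades, can be sketched as a small circuit breaker. The class name, the 0-to-1 quality score, and the recovery rule (three consecutive healthy updates) are illustrative assumptions rather than a description of any specific protocol.

```python
class LiquidationGuard:
    """Pauses liquidations when a data quality score drops below a floor.

    Liquidations resume only after a run of consecutive healthy updates,
    so a single good reading cannot immediately re-enable them.
    """

    def __init__(self, min_score: float = 0.8, required_healthy_updates: int = 3):
        self.min_score = min_score
        self.required_healthy_updates = required_healthy_updates
        self.paused = False
        self._healthy_streak = 0

    def record(self, quality_score: float) -> bool:
        """Record a new score; return True if liquidations are allowed."""
        if quality_score < self.min_score:
            self.paused = True
            self._healthy_streak = 0
        elif self.paused:
            self._healthy_streak += 1
            if self._healthy_streak >= self.required_healthy_updates:
                self.paused = False
                self._healthy_streak = 0
        return not self.paused


guard = LiquidationGuard()
for score in [0.95, 0.50, 0.90, 0.90, 0.90]:
    allowed = guard.record(score)
    print(f"score={score:.2f} liquidations_allowed={allowed}")
```

The asymmetry is deliberate: pausing is immediate, while resuming requires sustained evidence that the feed has recovered.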

Horizon

Looking ahead, the future of DQA in crypto options will be defined by two key areas: the use of advanced machine learning for anomaly detection and the development of verifiable computation for data integrity. The current generation of DQA relies on predefined statistical thresholds for outlier detection. However, these static thresholds are often too rigid to catch sophisticated, rapidly evolving manipulation tactics.

Real-Time Anomaly Detection

The next iteration of DQA will involve machine learning models trained on historical data and real-time order flow. These models will identify subtle patterns in market data that signal manipulation attempts before they trigger a full-scale exploit. This allows protocols to proactively halt liquidations or adjust pricing models in real-time.

This approach requires protocols to move beyond simple data aggregation and into predictive analytics. This is where the integration of market microstructure analysis becomes critical; the DQA system must understand the nuances of order book dynamics and liquidity shifts to differentiate between genuine market movements and manipulative actions.
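A trained model is beyond the scope of a short example, so the sketch below substitutes a much simpler adaptive baseline: an exponentially weighted z-score detector whose mean and variance follow the market instead of being fixed in advance. It is not a machine learning model, only an illustration of the move from static to adaptive thresholds. The class name and parameters are hypothetical.

```python
import math


class EwmaDetector:
    """Flags values that sit far from an exponentially weighted baseline."""

    def __init__(self, alpha: float = 0.1, z_limit: float = 4.0, warmup: int = 10):
        self.alpha = alpha        # weight given to each new observation
        self.z_limit = z_limit    # how many standard deviations count as anomalous
        self.warmup = warmup      # observations required before flagging
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def update(self, x: float) -> bool:
        """Return True if x is anomalous relative to the running baseline."""
        if self.mean is None:
            self.mean = x
            self.seen = 1
            return False
        diff = x - self.mean
        std = math.sqrt(self.var)
        anomalous = (self.seen >= self.warmup and std > 0
                     and abs(diff) > self.z_limit * std)
        if not anomalous:
            # Learn only from accepted points so a spike cannot shift the baseline.
            self.mean += self.alpha * diff
            self.var = (1 - self.alpha) * (self.var + self.alpha * diff * diff)
            self.seen += 1
        return anomalous


# Hypothetical per-update returns: calm market, then a sudden 5% jump.
returns = [0.001, -0.001] * 25 + [0.05]
detector = EwmaDetector()
flags = [detector.update(r) for r in returns]
print(flags[-1], any(flags[:-1]))
```

Because the baseline adapts, the same detector tolerates larger moves in a volatile regime and smaller ones in a calm regime, which a fixed deviation threshold cannot do.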

Zero-Knowledge Proofs for Data Validity

A longer-term horizon involves using zero-knowledge proofs (ZKPs) to verify data validity. ZKPs allow a protocol to prove that a data point meets specific criteria without revealing the data itself. For options protocols, this means a protocol could verify that an implied volatility calculation was performed correctly on a set of market data, without exposing the full dataset to the public.

This approach significantly enhances data privacy while maintaining verifiable integrity. The implementation of ZKPs for DQA will enable more complex derivatives products to operate on-chain with a higher degree of confidence, reducing counterparty risk and allowing for more efficient capital deployment.

| Methodology | Mechanism | Key Advantage | Current Challenges |
|---|---|---|---|
| AI/ML Anomaly Detection | Machine learning models identify manipulation patterns in real-time order flow data. | Proactive defense against novel manipulation tactics; real-time risk mitigation. | Model training data requirements; high computational cost; potential for false positives. |
| Verifiable Computation (ZKPs) | Cryptographic proofs attest to data integrity without revealing underlying data. | Enhanced data privacy; verifiable data provenance; increased trust in complex calculations. | High computational overhead; complexity of implementation; nascent technology. |
