Essence

Data Normalization Processes represent the architectural foundation for ensuring cross-venue consistency in decentralized derivatives markets. These protocols translate heterogeneous raw data from disparate decentralized exchanges, automated market makers, and order books into a unified, actionable format. Without this homogenization, quantitative models fail, because they receive contradictory price signals and liquidity metrics for the same underlying asset.

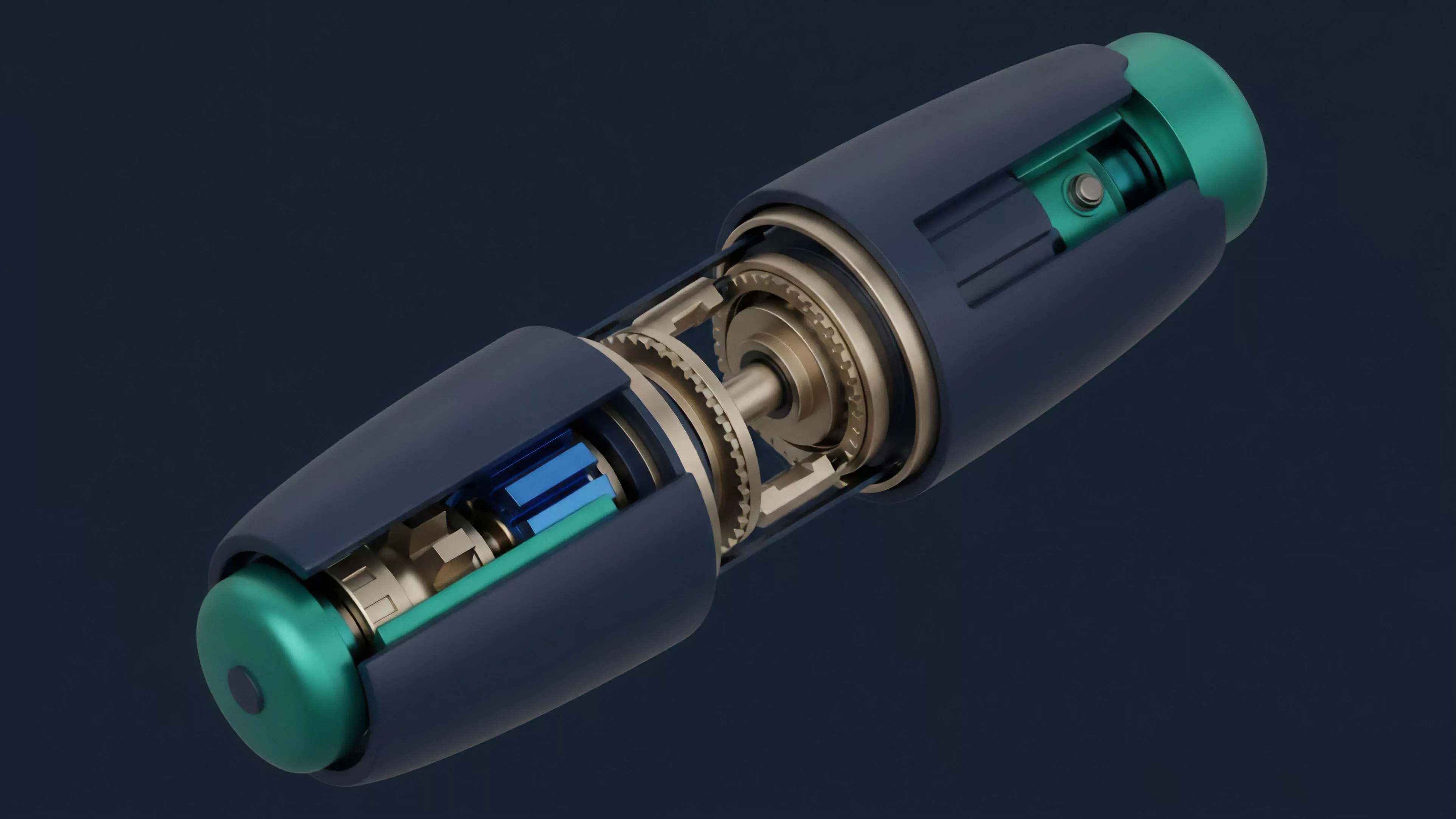

Data normalization transforms fragmented market information into a coherent standard required for reliable pricing and risk management.

The core objective remains the elimination of technical noise inherent in blockchain data structures. By aligning timestamp granularity, tick size, and asset identifiers, these systems allow derivative engines to execute margin calls, delta hedging, and settlement against a single, consistent view of the market. The system functions as a translator between the chaotic reality of on-chain activity and the rigid requirements of derivative financial instruments.
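The alignment step described above can be sketched in a few lines. This is a minimal illustration rather than any protocol's actual implementation: the alias table, the 0.01 tick size, the millisecond target resolution, and every function name are assumptions chosen for the example.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_EVEN

# Hypothetical alias table: venue-specific symbols mapped to one canonical identifier.
ASSET_ALIASES = {"WETH": "ETH", "ETH": "ETH", "WBTC": "BTC", "BTC": "BTC"}

# Assumed unified price increment for the normalized feed.
TICK = Decimal("0.01")


@dataclass(frozen=True)
class NormalizedTrade:
    asset: str
    timestamp_ms: int
    price: Decimal


def to_millis(timestamp: int, unit: str) -> int:
    """Convert a venue timestamp (seconds, milliseconds, or microseconds) to milliseconds."""
    if unit == "s":
        return timestamp * 1000
    if unit == "ms":
        return timestamp
    if unit == "us":
        return timestamp // 1000
    raise ValueError(f"unsupported timestamp unit: {unit}")


def snap_to_tick(price: str, tick: Decimal = TICK) -> Decimal:
    """Round a price to the nearest multiple of the unified tick size."""
    steps = (Decimal(price) / tick).quantize(Decimal("1"), rounding=ROUND_HALF_EVEN)
    return steps * tick


def normalize_trade(symbol: str, timestamp: int, unit: str, price: str) -> NormalizedTrade:
    """Map one raw venue trade onto the unified record; unknown symbols raise KeyError."""
    return NormalizedTrade(
        asset=ASSET_ALIASES[symbol.upper()],
        timestamp_ms=to_millis(timestamp, unit),
        price=snap_to_tick(price),
    )
```

Under these assumptions, `normalize_trade("weth", 1700000000, "s", "2001.237")` and `normalize_trade("ETH", 1700000000000123, "us", "2001.2449")` produce the same record, which is the property downstream pricing code relies on. Prices are passed as strings and held as `Decimal` to avoid binary floating-point rounding.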

Origin

The necessity for these processes originated from the rapid fragmentation of liquidity across multiple automated market makers and order book protocols.

Early decentralized finance iterations relied on single-source oracles, which proved vulnerable to manipulation and lacked the depth required for complex options trading. As traders sought more sophisticated instruments, the requirement for aggregating data from diverse venues became unavoidable.

- Oracle Decentralization: Early attempts to pull data directly from single sources created single points of failure that necessitated robust aggregation layers.

- Liquidity Fragmentation: The emergence of competing liquidity pools forced developers to create systems that synthesize price feeds across multiple protocols.

- Arbitrage Efficiency: Market participants required unified data to identify price discrepancies across venues, driving the demand for standardized feeds.

Developers observed that raw blockchain logs lacked the context necessary for financial analysis, such as implied volatility or open interest calculations. Consequently, off-chain computation and specialized indexer protocols evolved to pre-process this data, creating the current landscape where normalized feeds serve as the bedrock for all derivative operations.

Theory

The theory rests on the assumption that market efficiency requires a singular, authoritative representation of state. In the context of options, this involves reconstructing the order book from event logs, calculating Greeks in real time, and filtering out synthetic wash trading.
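Reconstructing an order book from event logs amounts to replaying events in log order and tracking what remains resting. The sketch below assumes a simplified, hypothetical event shape with `type`, `order_id`, `side`, `price`, and `size` fields; real protocol logs differ and would first need decoding into something like this.

```python
from collections import defaultdict
from decimal import Decimal


def rebuild_book(events):
    """Replay order events in log order and return resting size per price level."""
    orders = {}  # order_id -> [side, price, remaining size]
    for ev in events:
        kind = ev["type"]
        if kind == "place":
            orders[ev["order_id"]] = [ev["side"], Decimal(ev["price"]), Decimal(ev["size"])]
        elif kind == "fill":
            order = orders.get(ev["order_id"])
            if order is None:
                continue  # fill for an order we never saw; skipped in this sketch
            order[2] -= Decimal(ev["size"])
            if order[2] <= 0:
                del orders[ev["order_id"]]
        elif kind == "cancel":
            orders.pop(ev["order_id"], None)

    # Collapse the surviving orders into aggregate size per price level.
    book = {"bid": defaultdict(Decimal), "ask": defaultdict(Decimal)}
    for side, price, remaining in orders.values():
        book[side][price] += remaining
    return {side: dict(levels) for side, levels in book.items()}
```

A fully filled or cancelled order disappears from the result, so only live liquidity contributes to each level. A production indexer would also need to handle chain reorganizations, which this sketch ignores.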

The transformation process follows a strict hierarchy of data refinement, moving from raw transaction logs to highly structured financial primitives.

| Layer | Function |
| --- | --- |
| Ingestion | Raw event collection from blockchain nodes |
| Cleaning | Filtering anomalies and non-financial transactions |
| Normalization | Mapping disparate schemas to a unified standard |
| Aggregation | Calculating global metrics like open interest |
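The four layers can be read as successive functions over a stream of events. The following is a rough sketch under stated assumptions: the venue names, the field mappings, and the `position_update` event kind are all hypothetical, and ingestion is represented simply by the list of raw dictionaries passed in.

```python
from collections import defaultdict

# Hypothetical per-venue field mappings: unified field name -> venue-specific name.
FIELD_MAPS = {
    "venue_a": {"instrument": "sym", "open_interest": "oi"},
    "venue_b": {"instrument": "market", "open_interest": "openInterest"},
}


def clean(raw_events):
    """Cleaning: keep only position updates from venues we know how to parse."""
    return [
        e for e in raw_events
        if e.get("venue") in FIELD_MAPS and e.get("kind") == "position_update"
    ]


def normalize(event):
    """Normalization: rename venue-specific fields and unify units and casing."""
    mapping = FIELD_MAPS[event["venue"]]
    return {
        "venue": event["venue"],
        "instrument": str(event[mapping["instrument"]]).upper(),
        "open_interest": float(event[mapping["open_interest"]]),
    }


def aggregate_open_interest(records):
    """Aggregation: sum the latest open interest per venue into a global figure."""
    latest = {}
    for r in records:
        latest[(r["venue"], r["instrument"])] = r["open_interest"]
    totals = defaultdict(float)
    for (_venue, instrument), oi in latest.items():
        totals[instrument] += oi
    return dict(totals)


def run_pipeline(raw_events):
    """Run cleaning, normalization, and aggregation over already-ingested events."""
    return aggregate_open_interest(normalize(e) for e in clean(raw_events))
```

Keeping each layer a separate function means a schema change at one venue touches only `FIELD_MAPS`, not the aggregation logic.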

Mathematical consistency across derivative models depends entirely on the accuracy of the underlying data standardization layer.

Systems must handle high-frequency updates while maintaining consensus-level integrity. A deviation in the normalization logic produces incorrect margin requirements, which can trigger cascading liquidations during high-volatility regimes. The architecture mimics traditional high-frequency trading infrastructure but must adapt to the inherent latency and deterministic nature of decentralized execution environments.

Approach

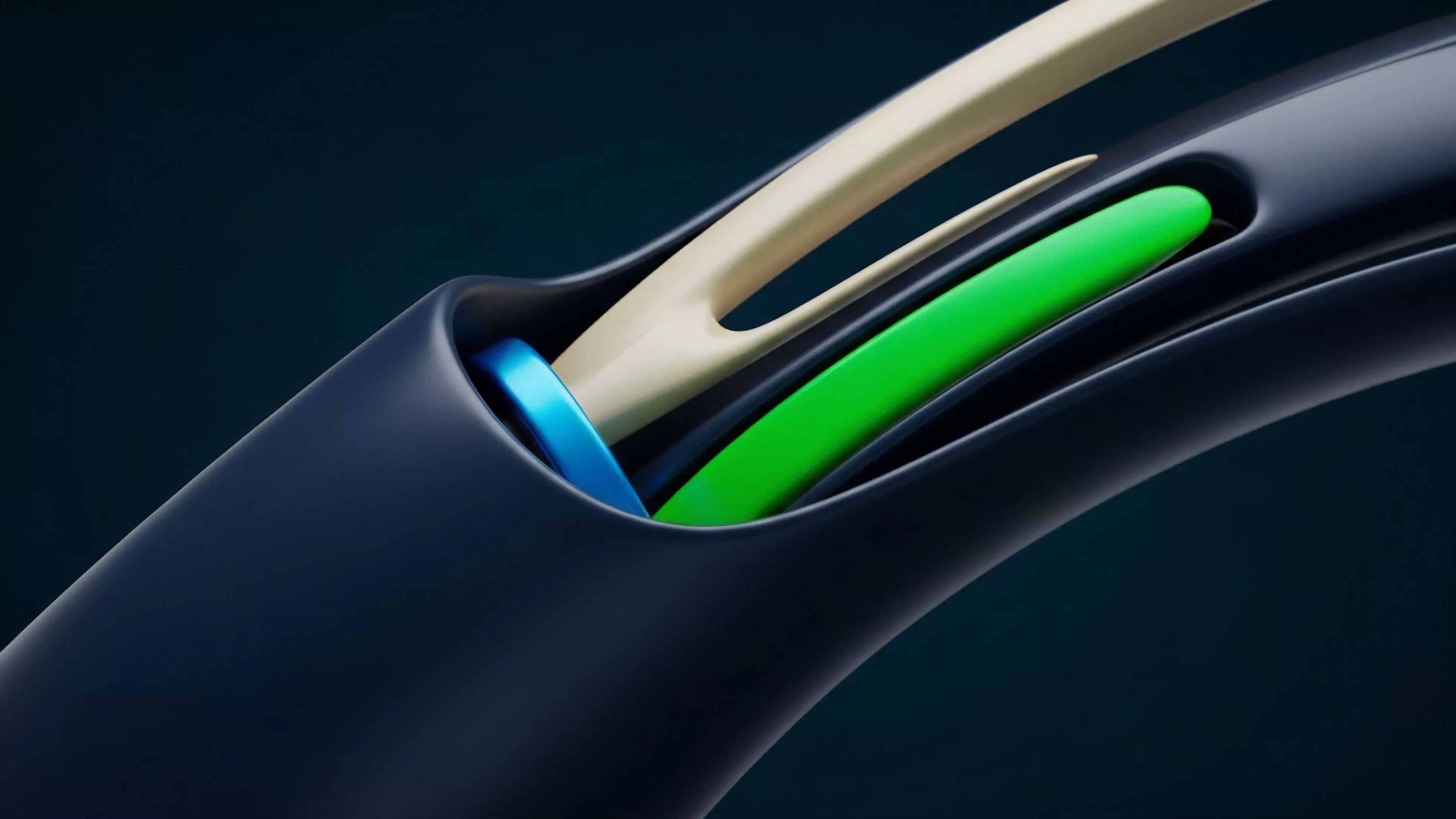

Current strategies utilize distributed indexing nodes to perform real-time computation on incoming blocks.

These nodes maintain local state machines that track the lifecycle of every option contract, from minting to expiration. By decoupling the data ingestion from the settlement layer, protocols achieve a degree of horizontal scalability that would be impossible within a single smart contract.
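A local state machine of this kind can be sketched as a table of allowed transitions. The three states and the event names here are assumptions made for illustration; actual protocols define their own lifecycle, often with more stages.

```python
from enum import Enum


class OptionState(Enum):
    MINTED = "minted"
    EXERCISED = "exercised"
    EXPIRED = "expired"


# Assumed lifecycle: a minted contract ends by being exercised or by expiring.
ALLOWED = {
    OptionState.MINTED: {OptionState.EXERCISED, OptionState.EXPIRED},
    OptionState.EXERCISED: set(),
    OptionState.EXPIRED: set(),
}


class OptionTracker:
    """Tracks the current lifecycle state of each option contract by id."""

    def __init__(self):
        self.states = {}

    def apply(self, contract_id, event):
        """Apply one lifecycle event, rejecting transitions the table does not allow."""
        new_state = OptionState(event)
        current = self.states.get(contract_id)
        if current is None:
            if new_state is not OptionState.MINTED:
                raise ValueError(f"{contract_id}: first event must be 'minted'")
        elif new_state not in ALLOWED[current]:
            raise ValueError(f"{contract_id}: {current.value} -> {new_state.value} not allowed")
        self.states[contract_id] = new_state
        return new_state
```

Rejecting invalid transitions at the indexer surfaces decoding errors early, for example an exercise event arriving for a contract that has already expired, instead of letting them propagate into risk metrics.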

- Schema Alignment: Developers enforce strict data structures to ensure that options from different protocols share identical field names and units.

- Latency Mitigation: Indexers employ caching layers to provide sub-second access to normalized data, vital for automated market maker operations.

- Anomaly Detection: Statistical filters identify and exclude outlier trades that would otherwise skew volatility surfaces and risk metrics.
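One common way to build such a statistical filter is a modified z-score based on the median absolute deviation (MAD), which is less sensitive to the outliers it is trying to catch than a mean and standard deviation would be. The sketch below is one possible choice, not a method the protocols discussed here are known to use; the 3.5 cutoff is a frequently used default and should be treated as a tunable assumption.

```python
from statistics import median


def filter_outliers(prices, threshold=3.5):
    """Return the prices whose modified z-score is at or below the threshold."""
    prices = list(prices)
    if len(prices) < 3:
        return prices  # too few points to estimate spread
    center = median(prices)
    mad = median([abs(p - center) for p in prices])
    if mad == 0:
        return prices  # spread cannot be estimated; keep everything in this sketch
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score
    # when the data are roughly normally distributed.
    return [p for p in prices if 0.6745 * abs(p - center) / mad <= threshold]
```

For example, `filter_outliers([100.0, 101.0, 99.5, 100.5, 250.0])` returns `[100.0, 101.0, 99.5, 100.5]`, dropping the single trade printed far from the rest before it can distort a volatility surface.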

The focus is now shifting toward verification. Newer systems employ cryptographic proofs, such as zero-knowledge circuits, to confirm that the normalization process occurred correctly without requiring the consumer to trust the indexer. This keeps the derived financial metrics trustless, aligning with the core ethos of decentralized finance.

Evolution

Development moved from rudimentary price scraping to sophisticated, multi-layered data pipelines.

Early efforts focused on basic price parity, whereas contemporary systems manage complex derivative structures including perpetual options and exotic instruments. The transition occurred as protocols recognized that inaccurate data can lead directly to systemic insolvency. This evolution tracks closely with the maturation of blockchain infrastructure.

Initial iterations struggled with block time limitations, leading to stale pricing. Current architectures leverage layer-two solutions and specialized data availability layers to maintain throughput. This transition marks the move from experimental finance to robust, institutional-grade infrastructure.

Horizon

Future developments prioritize the integration of real-time, cross-chain data normalization.

As liquidity moves between chains, the systems must normalize data across different consensus mechanisms without sacrificing speed. This expansion involves creating universal standards for option definitions, allowing for seamless interoperability between protocols on disparate networks.

Standardized data interfaces across chains will enable a truly global and unified market for decentralized derivative instruments.

The next phase involves the automation of the normalization logic itself. Adaptive algorithms will detect changes in protocol schemas and update their parsing logic without human intervention. This self-healing architecture is required to sustain the growth of decentralized markets, where constant innovation leads to rapidly shifting data structures.
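The first building block of such adaptive parsing is detecting that a schema has changed at all. As a minimal sketch, assuming events arrive as dictionaries and that the expected field set for a venue is known, drift can be reported as the difference between expected and observed field names. The function and field names are hypothetical, and automatically repairing the parser is a much harder problem that this sketch does not attempt.

```python
def detect_schema_drift(expected_fields, event):
    """Compare an event's field names with the expected set and report differences."""
    expected = set(expected_fields)
    observed = set(event)
    return {
        "missing": sorted(expected - observed),
        "unexpected": sorted(observed - expected),
    }


def has_drifted(expected_fields, event):
    """True when the event is missing expected fields or carries unknown ones."""
    report = detect_schema_drift(expected_fields, event)
    return bool(report["missing"] or report["unexpected"])
```

A renamed field shows up as one entry in `missing` and one in `unexpected`, which gives a later remapping step a candidate pair to evaluate.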