Essence

Data fragmentation is the dispersion of critical market information across disparate, non-interoperable venues within the decentralized finance ecosystem. In crypto options, it manifests as a fractured view of liquidity, pricing, and implied volatility surfaces. Unlike traditional finance, where centralized exchanges provide a consolidated feed of order book depth and trade history, decentralized options protocols largely operate in isolation.

A single underlying asset may have options trading on multiple AMM-based platforms, order book exchanges, and different blockchain layers (L1s and L2s). This creates significant information asymmetry for market participants. The consequence of this dispersion is that no single entity possesses a complete picture of the market’s risk profile or true liquidity. This makes accurate pricing and efficient risk management for options strategies exceptionally difficult.

Data fragmentation in crypto options markets prevents the formation of a unified implied volatility surface, making accurate risk calculation and pricing models unreliable.

The core issue extends beyond a simple lack of data aggregation. It touches upon the fundamental challenge of trust and data integrity in a permissionless environment. When a market maker or a quantitative strategy attempts to calculate the theoretical value of an option, it requires real-time, high-fidelity data on the underlying asset’s price, historical volatility, and the existing order book depth across all venues.

When this data is fragmented, strategies are forced to make decisions based on incomplete or stale information. This increases the cost of capital for market makers, widens spreads, and ultimately reduces the efficiency of the options market as a whole. The result is a less robust market structure where liquidity is shallow and vulnerable to exploitation.

Origin

The genesis of data fragmentation in crypto options is deeply rooted in the architectural decisions made during the initial phases of decentralized finance.

The design choice to prioritize permissionless access and censorship resistance over centralized efficiency led to a proliferation of competing protocols. Each protocol, whether it used an order book or an AMM-style liquidity pool design such as Lyra or Hegic, built its own data infrastructure, liquidity pools, and risk engines. This initial fragmentation was compounded by the rise of the multi-chain ecosystem.

As capital moved from Ethereum to alternative Layer 1s like Solana and Layer 2s like Arbitrum, liquidity became siloed. The options market, being a derivative of the underlying asset market, inherited this structural dispersion. This challenge is a direct consequence of the “protocol physics” inherent in a multi-chain environment.

In traditional finance, data flows through established, high-speed networks and exchanges. In DeFi, data must be bridged between chains, a process that introduces latency, cost, and additional security risks. The very nature of decentralized consensus mechanisms, which prioritize state integrity over real-time data flow, makes data aggregation a non-trivial technical problem.

The market’s inability to reconcile disparate data sources in real-time creates a systemic inefficiency. The problem is further exacerbated by the varying data reporting standards of different protocols. Some protocols may rely on internal oracles, while others use external data feeds, each with different update frequencies and aggregation methodologies.

This lack of standardization makes it nearly impossible for a single market participant to construct a coherent picture of the global implied volatility surface.

| Data Source Type | Impact on Options Pricing | Latency & Reliability Profile |
|---|---|---|
| Centralized Exchange Order Books | High-fidelity underlying price data. Limited options data. | Low latency, high reliability. Single point of failure risk. |
| Decentralized AMM Pools | Liquidity depth data for specific strikes. High slippage risk. | Variable latency, susceptible to sandwich attacks. |
| On-Chain Oracle Feeds | Underlying asset price for settlement. Not options specific. | High latency (block time dependent), high security cost. |
| Off-Chain Data Aggregators | Attempts to unify fragmented data. | Medium latency, dependent on aggregator methodology. |

Theory

The theoretical impact of data fragmentation on options pricing models can be analyzed through the lens of quantitative finance and behavioral game theory. From a quantitative perspective, data fragmentation directly corrupts the inputs required for models like Black-Scholes-Merton. The most critical input, implied volatility (IV), is derived from market prices.

When prices are fragmented across multiple venues, there is no single, accurate implied volatility surface. Instead, market makers are left with a collection of fragmented surfaces, each reflecting only a fraction of the total market liquidity. This phenomenon introduces a significant “arbitrage opportunity” for those with superior data aggregation capabilities, but creates a systemic risk for those without.
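The effect of fragmented prices on implied volatility can be illustrated with a minimal sketch. It assumes European calls priced with Black-Scholes-Merton, a zero interest rate, and three hypothetical venues (`venue_a`, `venue_b`, `venue_c`) quoting different premiums for the same strike and expiry. All numbers are illustrative, not market data.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes-Merton price of a European call (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-8):
    """Invert bs_call for vol by bisection (the call price rises with vol)."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) > price:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Hypothetical premiums for the same 2100-strike, 30-day call on three venues.
venue_quotes = {"venue_a": 152.0, "venue_b": 165.0, "venue_c": 140.0}
spot, strike, t, rate = 2000.0, 2100.0, 30 / 365, 0.0

ivs = {venue: implied_vol(p, spot, strike, t, rate) for venue, p in venue_quotes.items()}
iv_spread = max(ivs.values()) - min(ivs.values())

for venue, iv in ivs.items():
    print(f"{venue}: implied vol {iv:.4f}")
print(f"cross-venue IV spread: {iv_spread:.4f}")
```

Each venue yields its own point on its own surface. A participant who sees only one venue has no way to tell whether that point is representative of the wider market.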

The fragmentation of liquidity means that large orders cannot be filled efficiently. A market maker attempting to hedge an option position by buying or selling the underlying asset across fragmented venues will experience higher slippage. This increased cost of execution must be priced into the option premium, resulting in wider spreads and less efficient pricing.
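The slippage cost described above can be sketched by walking an order book. The example assumes two hypothetical venues with small ask ladders for the underlying and ignores fees, gas, and bridging costs, which in practice would reduce the benefit of combining venues.

```python
def average_fill_price(asks, quantity):
    """Average price paid to buy `quantity` by walking asks in price order.

    `asks` is a list of (price, size) tuples. Raises ValueError if the
    book is too shallow to fill the whole order.
    """
    remaining = quantity
    cost = 0.0
    for price, size in sorted(asks):
        take = min(remaining, size)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 1e-12:
        raise ValueError("insufficient depth to fill order")
    return cost / quantity


# Hypothetical ask ladders (price, size) for the same underlying on two venues.
venue_a = [(2000.0, 5.0), (2004.0, 5.0), (2012.0, 10.0)]
venue_b = [(2001.0, 4.0), (2003.0, 6.0), (2010.0, 10.0)]

order_size = 15.0
single_venue_price = average_fill_price(venue_a, order_size)
combined_price = average_fill_price(venue_a + venue_b, order_size)

print(f"venue_a only:  {single_venue_price:.2f}")
print(f"both venues:   {combined_price:.2f}")
print(f"extra cost per unit from fragmentation: {single_venue_price - combined_price:.2f}")
```

The gap between the two averages is the execution cost a hedger pays when they can reach only one venue, and it is this cost that ends up priced into the option premium.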

From a behavioral game theory standpoint, data fragmentation creates an adversarial environment where information asymmetry is exploited. Liquidity providers operating on different chains or protocols often act as “siloed competitors,” unaware of each other’s full positions. This lack of information sharing prevents the market from reaching a stable equilibrium.

The result is a system where participants are incentivized to engage in “information arbitrage” rather than genuine value creation through risk transfer.

- Implied Volatility Surface Distortion: Fragmentation makes it impossible to accurately calculate the implied volatility surface across all strikes and expirations. Market makers must approximate IV based on limited data, leading to mispricing and inefficient capital allocation.

- Liquidity Silos and Phantom Liquidity: Liquidity on any single protocol may be shallow even when significant liquidity exists elsewhere. Summed across venues, depth can look sufficient, but this is “phantom liquidity”: a large order appears viable yet cannot be executed without splitting it across multiple venues and incurring significant slippage.

- Cross-Chain Basis Risk: The underlying asset price itself can vary across different chains due to bridging latency and differing oracle feeds. This creates basis risk between the option’s settlement chain and the underlying asset’s price discovery chain.

- Greeks Calculation Inaccuracy: Fragmentation introduces noise into the calculation of options Greeks (Delta, Gamma, Vega, Theta). When the underlying price feed is inconsistent or delayed, the calculation of Delta and Gamma becomes unreliable, making hedging strategies prone to significant errors.
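The Greeks point in the list above can be made concrete with a small sketch. It assumes a Black-Scholes-Merton call delta, a zero interest rate, and a hypothetical 1% gap between a fresh price and a stale price feed. The position size and all inputs are illustrative.

```python
import math


def call_delta(spot, strike, t, rate, vol):
    """Black-Scholes-Merton delta of a European call: N(d1)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    return 0.5 * (1.0 + math.erf(d1 / math.sqrt(2.0)))


strike, t, rate, vol = 2000.0, 7 / 365, 0.0, 0.7
fresh_spot = 2000.0   # price on the venue where price discovery happens
stale_spot = 1980.0   # hypothetical delayed feed on the settlement chain

delta_fresh = call_delta(fresh_spot, strike, t, rate, vol)
delta_stale = call_delta(stale_spot, strike, t, rate, vol)

contracts = 100  # short calls being delta-hedged, one unit of underlying each
hedge_error_units = contracts * (delta_fresh - delta_stale)

print(f"delta from fresh price: {delta_fresh:.4f}")
print(f"delta from stale price: {delta_stale:.4f}")
print(f"under-hedge in units of underlying: {hedge_error_units:.2f}")
```

For short-dated, near-the-money options, Gamma is large, so even a small price discrepancy between feeds shifts Delta noticeably and leaves the hedge mis-sized.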

Approach

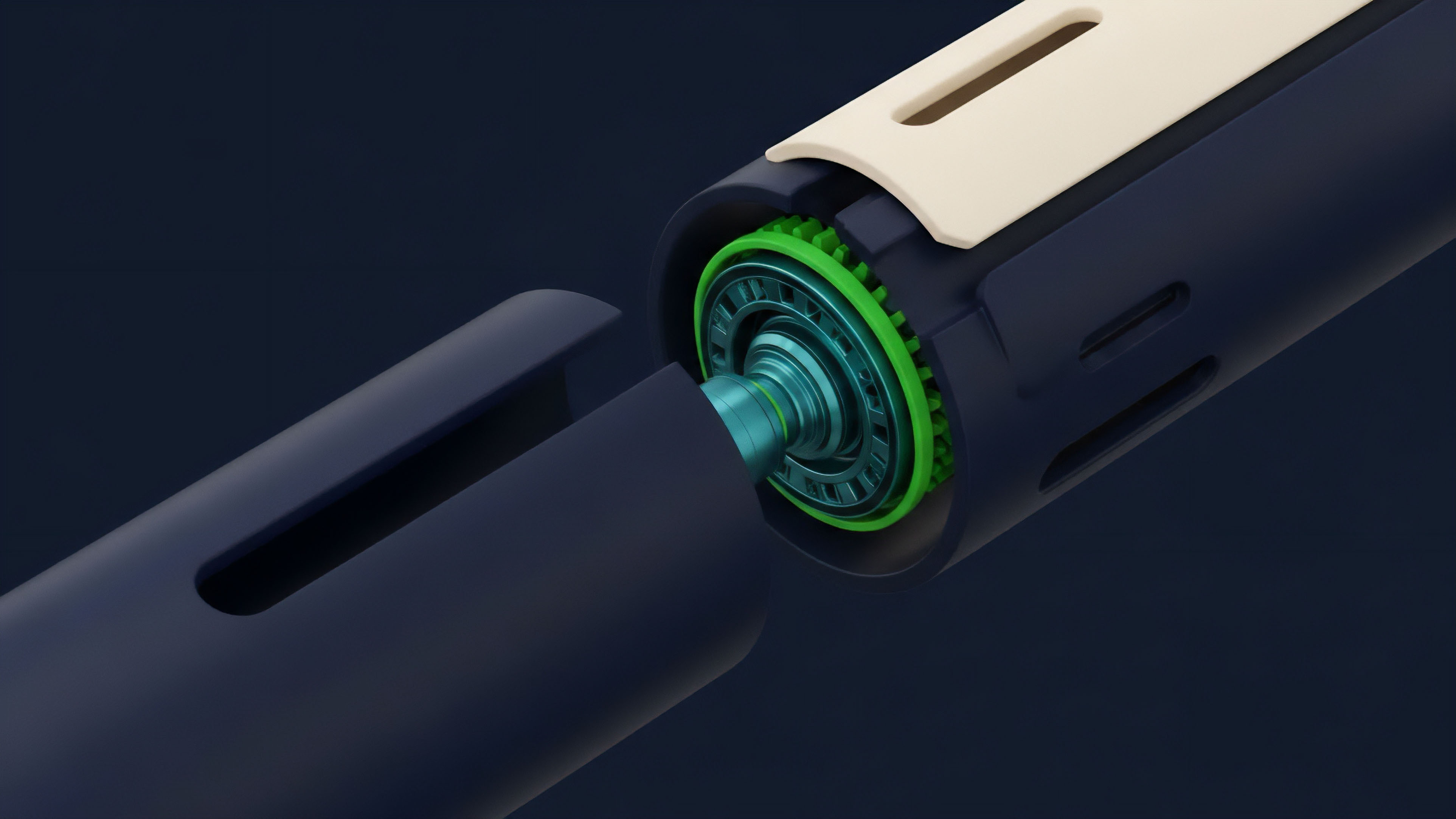

Addressing data fragmentation requires a multi-layered approach that combines technical infrastructure improvements with strategic market-making practices. The current approach focuses on two main areas: data aggregation layers and enhanced oracle design. Data aggregation layers, often implemented as middleware or off-chain services, attempt to consolidate information from multiple decentralized exchanges (DEXs) and options protocols.

These services provide a unified API feed that combines order book depth and trade history from various sources. The challenge here lies in verifying the integrity of the data and ensuring real-time updates. If the aggregation layer itself introduces latency, the data remains stale, defeating the purpose.
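A minimal sketch of such an aggregation step, including the staleness problem, might look like the following. The `Quote` type, the venue names, and the two-second freshness threshold are assumptions made for illustration, not any specific aggregator's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    venue: str
    bid: float
    ask: float
    timestamp: float  # seconds, on a clock shared by the aggregator


def consolidate(quotes, now, max_age_s=2.0):
    """Best bid and ask across venues, ignoring quotes older than max_age_s.

    Returns None when no quote is fresh enough to be trusted.
    """
    fresh = [q for q in quotes if now - q.timestamp <= max_age_s]
    if not fresh:
        return None
    best_bid = max(fresh, key=lambda q: q.bid)
    best_ask = min(fresh, key=lambda q: q.ask)
    return {
        "bid": best_bid.bid,
        "bid_venue": best_bid.venue,
        "ask": best_ask.ask,
        "ask_venue": best_ask.venue,
        "dropped_stale": len(quotes) - len(fresh),
    }


# Hypothetical option quotes; venue_c last updated about ten seconds ago.
quotes = [
    Quote("venue_a", bid=148.0, ask=153.0, timestamp=100.0),
    Quote("venue_b", bid=150.0, ask=156.0, timestamp=99.5),
    Quote("venue_c", bid=151.0, ask=152.0, timestamp=90.0),
]

view = consolidate(quotes, now=100.5)
print(view)
```

Without the freshness filter, the stale `venue_c` quote would set both sides of the consolidated market and advertise a tighter spread than anyone can actually trade.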

A more robust solution involves designing specialized oracle networks for derivatives. Unlike simple price feeds, these advanced oracles must aggregate a complex data set that includes not just the underlying asset price, but also a representation of the implied volatility surface from multiple sources. Oracle networks like Pyth Network take a step in this direction for price data by having a network of data providers (market makers, exchanges) push real-time pricing data to a single network. This gives protocols access to a high-fidelity, aggregated feed for options pricing and settlement.

Market makers must also adapt their strategies to operate within a fragmented landscape. This involves a shift from passive quoting to active arbitrage and liquidity provision across multiple venues simultaneously.

| Strategy | Description | Risk Profile |
|---|---|---|
| Cross-Venue Arbitrage | Identifying and exploiting pricing discrepancies between fragmented options protocols and underlying asset markets. | High technical skill, high capital requirements, execution risk. |
| Liquidity Aggregation Bots | Automated systems that place orders across multiple protocols to create a deeper virtual order book for users. | High latency risk, potential for front-running. |
| Dynamic Hedging | Adjusting hedge positions in real-time based on fragmented data feeds, often requiring high-frequency trading infrastructure. | Significant slippage risk during volatile market conditions. |

Effective mitigation of data fragmentation requires a combination of robust off-chain data aggregation and new oracle designs capable of providing a unified implied volatility surface.
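One way an oracle might combine implied volatility reports from several publishers is a median with outlier rejection, sketched below. This is an illustrative assumption, not the aggregation method of Pyth Network or any other specific oracle. The publisher names, the 25% deviation threshold, and the minimum publisher count are hypothetical.

```python
from statistics import median


def aggregate_iv(reports, max_rel_dev=0.25, min_publishers=3):
    """Robust IV for one (strike, expiry) point from publisher reports.

    `reports` maps publisher name to reported implied volatility.
    Reports further than max_rel_dev (relative) from the first-pass
    median are discarded. Returns None if too few reports remain.
    """
    if len(reports) < min_publishers:
        return None
    first_pass = median(reports.values())
    kept = [iv for iv in reports.values() if abs(iv - first_pass) <= max_rel_dev * first_pass]
    if len(kept) < min_publishers:
        return None
    return median(kept)


# Hypothetical reports for a single strike and expiry; pub_d is an outlier.
reports = {"pub_a": 0.62, "pub_b": 0.65, "pub_c": 0.63, "pub_d": 1.40}

aggregated = aggregate_iv(reports)
print(f"aggregated implied vol: {aggregated}")
```

Repeating this per strike and expiry would produce a grid of aggregated points. Turning that grid into an arbitrage-free surface is a separate interpolation problem that the sketch does not address.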

Evolution

The evolution of data fragmentation has followed the growth trajectory of the multi-chain ecosystem. Initially, fragmentation was primarily contained within Ethereum, where different protocols competed for liquidity. The introduction of Layer 2 solutions and sidechains was intended to solve scaling issues, but it inadvertently exacerbated data fragmentation by creating more distinct liquidity pools.

The rise of cross-chain bridges introduced new complexities, making it difficult to reconcile data across different environments.

The market’s response to this challenge has progressed through several stages. Early solutions involved simple data scraping and manual aggregation by market makers. This proved unsustainable as the number of protocols grew. The next phase saw the development of specialized data aggregators and dashboards that provide a unified view of liquidity. However, these tools often suffer from latency issues and are unable to provide a complete picture of the implied volatility surface, which requires complex calculations based on fragmented order book data.

A key development has been the emergence of “intent-based architectures.” Instead of users interacting directly with a specific fragmented protocol, they express an “intent” (e.g. “I want to buy a call option at this strike”). A network of solvers then finds the best execution path across all available liquidity pools, effectively abstracting away the fragmentation from the end user.

This approach aims to solve fragmentation at the user interface level, rather than by trying to unify the underlying data infrastructure.
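The solver step can be sketched as a simple routing function. The `Intent` and `Offer` types, the venue names, and the greedy cheapest-first rule are assumptions for illustration. Real solver networks also account for gas, bridging cost, and competition between solvers, none of which is modelled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    venue: str
    premium: float  # premium per contract
    size: float     # contracts available at that premium


@dataclass(frozen=True)
class Intent:
    quantity: float     # contracts the user wants to buy
    max_premium: float  # highest premium the user will accept


def solve(intent, offers):
    """Greedy routing: fill from the cheapest offers within the user's limit.

    Returns (plan, unfilled), where plan is a list of
    (venue, contracts, premium) tuples.
    """
    plan = []
    remaining = intent.quantity
    for offer in sorted(offers, key=lambda o: o.premium):
        if remaining <= 0 or offer.premium > intent.max_premium:
            break
        take = min(remaining, offer.size)
        plan.append((offer.venue, take, offer.premium))
        remaining -= take
    return plan, remaining


# Hypothetical offers for the same call option on three venues.
offers = [
    Offer("amm_on_l2", premium=152.0, size=4.0),
    Offer("orderbook_on_l1", premium=150.0, size=3.0),
    Offer("amm_on_alt_l1", premium=158.0, size=10.0),
]

plan, unfilled = solve(Intent(quantity=10.0, max_premium=155.0), offers)
print(plan)
print(f"unfilled contracts: {unfilled}")
```

The user states only a quantity and a limit. The routing across venues, and any part of the order that cannot be filled within the limit, is the solver's concern.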

- Siloed Protocol Competition: Initial phase where protocols on the same chain competed for liquidity, leading to isolated data environments.

- Multi-Chain Dispersion: Expansion to L1s and L2s, where liquidity sits on separate chains connected only by bridges, creating significant data latency and basis risk.

- Aggregator Layer Development: Introduction of middleware services to consolidate data feeds from multiple protocols, addressing a symptom rather than the root cause.

- Intent-Based Abstraction: The most recent development, where user requests are routed by solvers across fragmented venues, creating a seamless user experience by abstracting away the underlying complexity.

Horizon

Looking forward, the future of data fragmentation will be defined by the successful implementation of shared data layers and the development of more sophisticated “DeFi-native” pricing standards. The current approach of aggregating fragmented data is inherently inefficient; the next generation of solutions will focus on preventing fragmentation at the source.

One potential pathway involves a unified data standard where protocols commit to a shared oracle or data layer. This would allow for the creation of a truly global implied volatility surface that can be accessed by all participants. The challenge here is convincing protocols to adopt a single standard, which requires overcoming competitive incentives and establishing a trusted, decentralized governance model for the data layer itself.

Another potential solution lies in the evolution of Layer 0 protocols and interoperability standards. As cross-chain communication becomes more efficient and secure, the distinction between liquidity on different chains may blur. If capital and data can flow seamlessly between chains, the fragmentation problem could be mitigated by creating a single, virtual order book that spans multiple networks.

The ultimate goal is to move beyond simply coping with fragmentation toward a state where market structure is inherently unified. This requires a shift in design philosophy, moving away from siloed protocol development toward a collaborative ecosystem where data sharing is a fundamental design principle. This shift would allow for the creation of more robust risk engines, more efficient capital allocation, and ultimately, a more mature and resilient crypto options market.

The future of decentralized options requires a shift from coping with fragmented data to establishing a unified data standard, allowing for efficient risk engines and true price discovery across all venues.
