Essence

Data Feed Transparency is the degree to which the origin, aggregation, and computational processing of price inputs powering decentralized derivative protocols can be independently verified. In the architecture of crypto options, the price feed serves as the single source of truth for mark-to-market calculations, liquidation triggers, and volatility surface construction. When this mechanism is opaque, it introduces counterparty risk indistinguishable from that of a traditional centralized, black-box clearinghouse.

Data Feed Transparency establishes the verifiable chain of custody for market price inputs that dictate derivative settlement and risk management outcomes.

Systemic integrity hinges on the ability of market participants to audit the specific liquidity venues, filtering algorithms, and consensus mechanisms employed by an oracle or internal feed. Without this clarity, traders cannot accurately price the probability of automated liquidations, which distorts risk premiums and leads to inefficient capital allocation. The Derivative Systems Architect views this not as a mere feature but as the foundational layer of protocol trust: the more visible the input data, the more resilient the derivative instrument is against adversarial market conditions.

Origin

The genesis of Data Feed Transparency lies in the fundamental mismatch between the high-frequency nature of centralized order books and the latency-constrained, trustless execution environment of decentralized ledgers. Early protocols relied on rudimentary on-chain aggregators that suffered from manipulation risks and poor temporal resolution. As derivative markets expanded, the necessity for robust, manipulation-resistant pricing became the primary driver for innovation in decentralized oracle networks.

- Centralized Exchange Dependency created initial vulnerabilities where protocol solvency depended on the opaque API data of a single, often unregulated, venue.

- Manipulation Resistance requirements forced the adoption of volume-weighted average price (VWAP) and medianized inputs to dampen the impact of anomalous trade spikes.

- Adversarial Evolution occurred as market participants identified that price feeds could be gamed via wash trading or thin-order-book manipulation, prompting a shift toward multi-source aggregation.
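The two dampening techniques named in the list above can be illustrated with a minimal sketch. The trade and venue figures are hypothetical, and the helper name `vwap` is an assumption made for this example rather than any protocol's API.

```python
from statistics import median


def vwap(trades):
    """Volume-weighted average price over (price, volume) pairs."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in trades) / total_volume


# Hypothetical trades on one venue: the anomalous print at 150 carries
# almost no volume, so it barely moves the VWAP.
trades = [(100.0, 10.0), (101.0, 10.0), (150.0, 0.1)]
venue_vwap = vwap(trades)

# Hypothetical per-venue prices: the median ignores the one outlying venue.
venue_prices = [100.4, 100.6, 100.5, 131.0, 100.5]
median_price = median(venue_prices)
```

An unweighted mean of the three trade prices would be 117.0, whereas the VWAP stays below 101; the median of the venue prices is unaffected by the 131.0 outlier.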

Historical market cycles have consistently demonstrated that derivative protocols failing to secure their price inputs against exogenous shocks face catastrophic liquidation cascades. This painful reality forced a transition from implicit trust in provider-reported data toward explicit, cryptographic proof of input integrity.

Theory

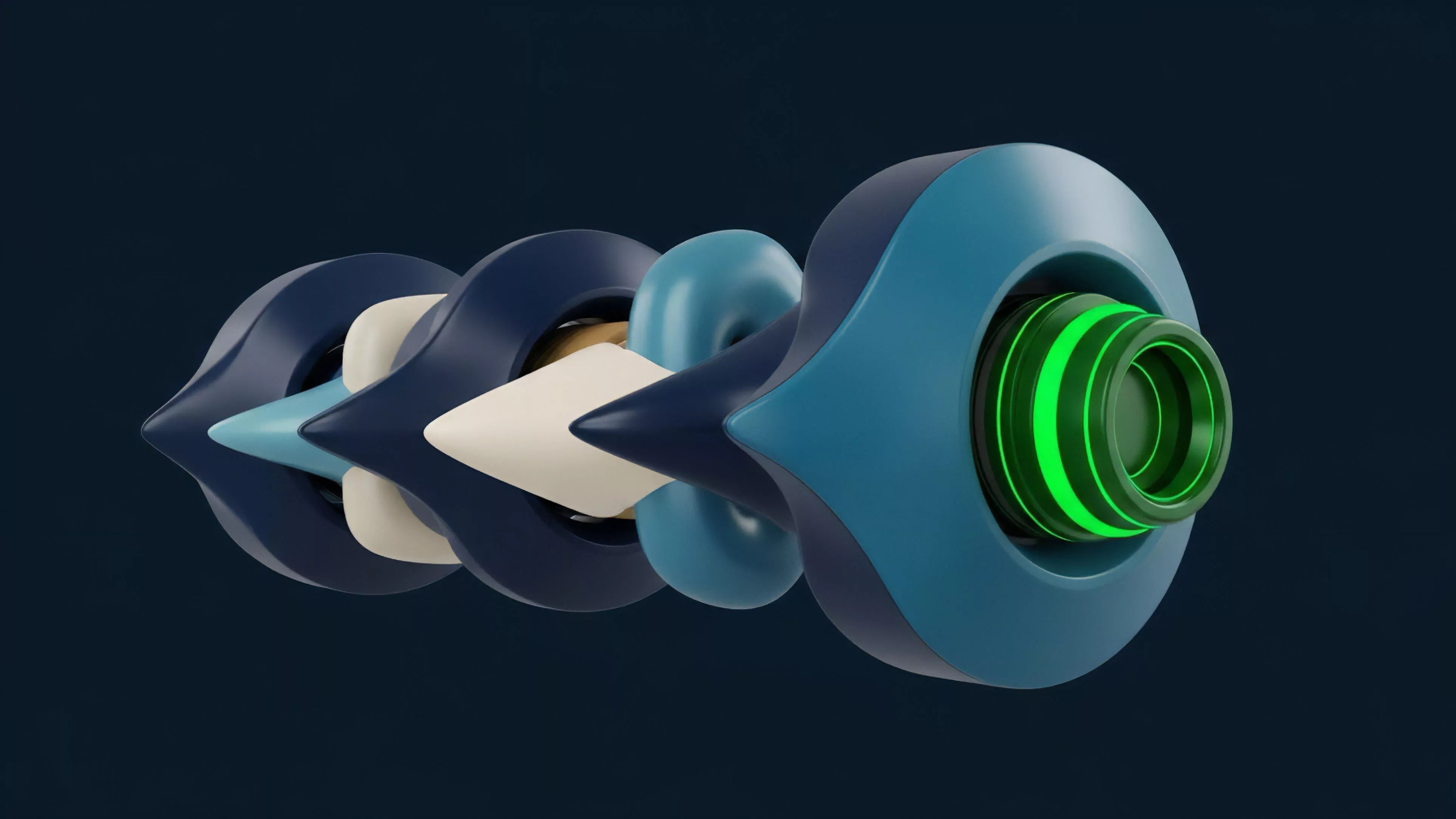

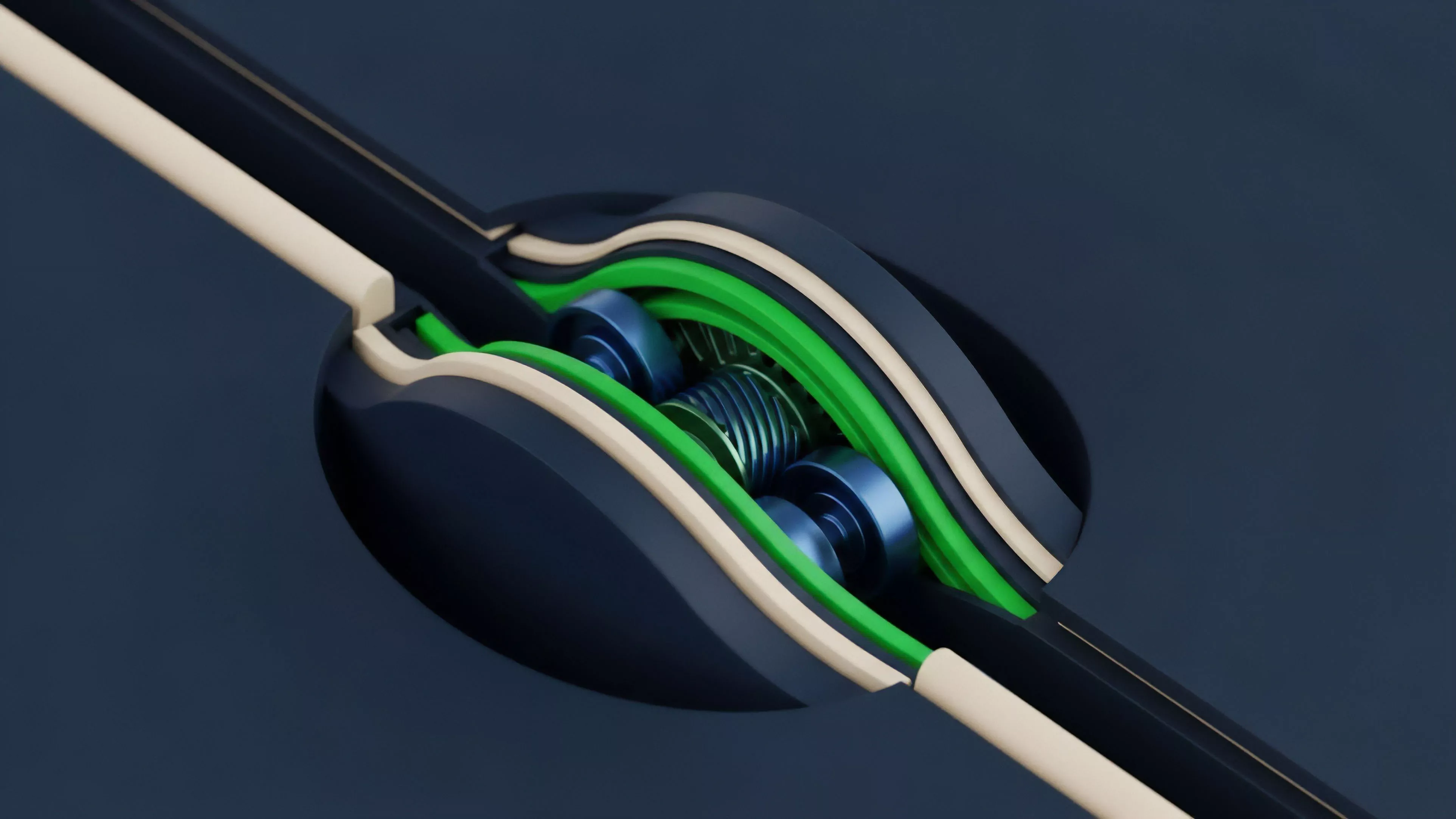

The technical architecture of Data Feed Transparency involves a rigorous multi-stage pipeline designed to minimize the deviation between on-chain representation and global market reality. The process begins at the ingestion layer, where raw tick data is pulled from diverse liquidity venues. The subsequent aggregation layer employs statistical filters to identify and discard outliers, typically utilizing robust estimators like the interquartile mean or weighted medians to mitigate the influence of localized price anomalies.

| Layer | Function | Risk Mitigation |
|---|---|---|
| Ingestion | Data retrieval from venues | API latency and downtime |
| Aggregation | Statistical normalization | Price manipulation and flash crashes |
| Consensus | Validator agreement | Byzantine node behavior |
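As a rough sketch of the aggregation layer, the two robust estimators mentioned above might look like the following. This is an illustration under stated assumptions, not any oracle network's implementation: the function names are hypothetical, and the interquartile mean is simplified to drop a whole number of observations from each tail rather than applying fractional weights at the quartile boundaries.

```python
def interquartile_mean(prices):
    """Mean of the middle half of the sorted prices.

    Simplification: drops floor(n / 4) observations from each tail
    instead of weighting the boundary observations fractionally.
    """
    if not prices:
        raise ValueError("no prices supplied")
    ordered = sorted(prices)
    k = len(ordered) // 4
    core = ordered[k:len(ordered) - k]
    return sum(core) / len(core)


def weighted_median(prices, weights):
    """Smallest price at which cumulative weight reaches half the total."""
    pairs = sorted(zip(prices, weights))
    total = sum(weight for _, weight in pairs)
    if total <= 0:
        raise ValueError("total weight must be positive")
    cumulative = 0.0
    for price, weight in pairs:
        cumulative += weight
        if cumulative >= total / 2:
            return price


# Hypothetical venue quotes with one manipulated print at 250.0.
quotes = [100.0, 100.2, 99.9, 100.1, 250.0, 100.0, 99.8, 100.3]
iqm = interquartile_mean(quotes)

# Hypothetical volume weights: the thinly traded outlier has little influence.
wmed = weighted_median([100.0, 101.0, 250.0], [5.0, 4.0, 0.5])
```

Both estimators discard the 250.0 print entirely, which is the property that makes them attractive for filtering localized anomalies.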

Quantitative finance models require precise inputs for calculating the Greeks (delta, gamma, vega), which are highly sensitive to feed jitter. A transparent feed exposes the variance and refresh rate of these inputs, allowing sophisticated traders to adjust their hedging strategies accordingly. If the price of the underlying asset can move materially between two feed updates, the system creates a latency arbitrage opportunity for informed participants at the expense of liquidity providers.
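To make the relationship between refresh rate and volatility concrete, here is a back-of-the-envelope sketch. It assumes price changes scale with the square root of time (a standard diffusion simplification that is not stated in the text), and the function name and parameter values are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600  # crypto markets trade continuously


def stale_move_bps(annual_vol, refresh_seconds):
    """One-standard-deviation price move, in basis points, that can
    accumulate between two consecutive feed updates."""
    return annual_vol * math.sqrt(refresh_seconds / SECONDS_PER_YEAR) * 1e4


# Hypothetical inputs: 80% annualized volatility, 60-second refresh.
# This works out to roughly 11 basis points of typical drift per update.
drift = stale_move_bps(0.80, 60.0)
```

Under these assumptions, a trader who observes the true price moving by more than the protocol's fee within one refresh window can trade against the stale mark, which is the latency arbitrage described above. Because of the square-root scaling, quadrupling the refresh interval doubles the typical drift.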

Protocol stability depends on the statistical rigor and auditability of price aggregation methods that define the boundaries of automated liquidation.

Approach

Current implementations prioritize decentralization of the data source to prevent single points of failure. The most advanced systems now utilize decentralized oracle networks where independent node operators fetch, sign, and submit price data. This creates an auditable trail of individual contributions.

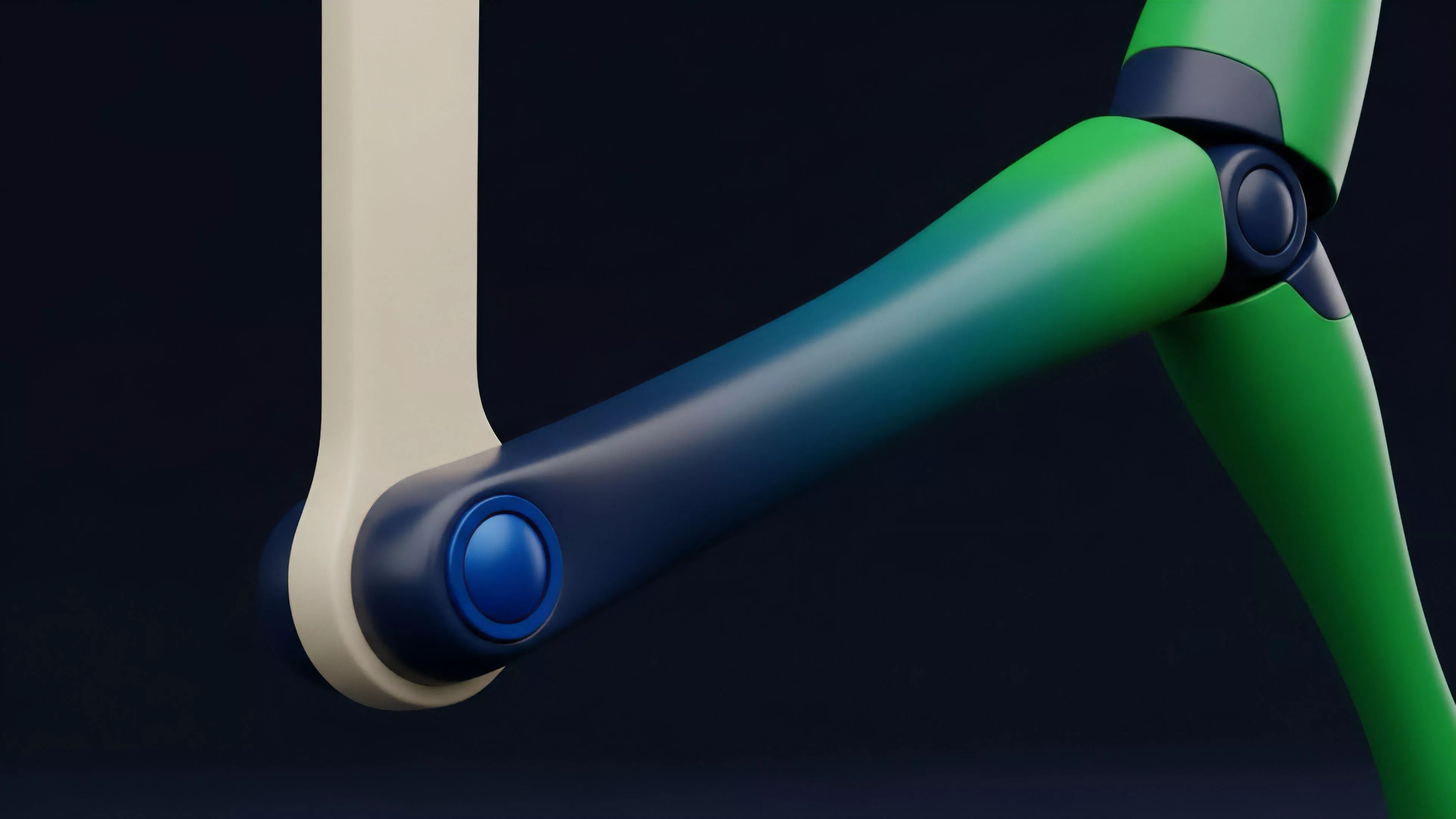

Protocols increasingly require node operators to stake collateral, creating economic penalties for submitting inaccurate or stale data, effectively aligning the incentives of the data providers with the health of the derivative system.

- Source Diversification ensures that price data is pulled from a broad array of high-volume exchanges to reduce the impact of venue-specific technical failure.

- Cryptographic Signing allows any participant to verify that the submitted price point originated from an authorized node operator, preventing unauthorized data injection.

- Staking Incentives provide a mechanism where the economic cost of submitting malicious data exceeds the potential gain from manipulating the derivative settlement price.
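The staking condition in the last item can be expressed as a simple inequality. The sketch below assumes median aggregation over an odd number of equally staked nodes, so that an attacker must corrupt a strict majority to set the reported price; the function names, the `slash_fraction` parameter, and all figures are hypothetical.

```python
def cost_of_corruption(node_count, stake_per_node, slash_fraction=1.0):
    """Stake forfeited by an attacker who corrupts a strict majority of
    nodes (assumed requirement for moving a median-aggregated price)."""
    majority = node_count // 2 + 1
    return majority * stake_per_node * slash_fraction


def is_economically_secure(node_count, stake_per_node, attack_profit,
                           slash_fraction=1.0):
    """True when the slashed stake exceeds the profit from manipulation."""
    cost = cost_of_corruption(node_count, stake_per_node, slash_fraction)
    return cost > attack_profit


# Hypothetical network: 21 nodes, each staking 50,000 units.
cost = cost_of_corruption(21, 50_000.0)
secure_small_attack = is_economically_secure(21, 50_000.0, 400_000.0)
secure_large_attack = is_economically_secure(21, 50_000.0, 600_000.0)
```

The comparison makes explicit that the security margin depends on the value that can be extracted from the derivative settlement price, so it has to be re-evaluated as open interest grows.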

The shift toward modular data architectures allows protocols to swap feed providers without re-engineering the entire smart contract suite. This agility is vital for maintaining resilience against evolving market microstructure threats. The Derivative Systems Architect treats the price feed as a high-stakes financial instrument, subjecting it to the same stress tests as the clearing engine itself.
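One way to picture such a modular design is an interface that the rest of the system depends on, with providers plugged in behind it. The sketch below is written off-chain in Python for illustration only; `PriceFeed`, `StaticFeed`, and `MarginEngine` are hypothetical names, not part of any real protocol.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class PricePoint:
    price: float
    timestamp: float  # Unix seconds


class PriceFeed(Protocol):
    """Anything exposing latest() can serve as a feed provider."""

    def latest(self, symbol: str) -> PricePoint: ...


class StaticFeed:
    """Hypothetical provider returning fixed prices, useful for tests."""

    def __init__(self, prices):
        self._prices = dict(prices)

    def latest(self, symbol: str) -> PricePoint:
        return self._prices[symbol]


class MarginEngine:
    """Depends only on the PriceFeed interface, never on a concrete provider."""

    def __init__(self, feed: PriceFeed):
        self._feed = feed

    def set_feed(self, feed: PriceFeed) -> None:
        # Swapping the provider requires no change to the valuation logic.
        self._feed = feed

    def mark_value(self, symbol: str, quantity: float) -> float:
        return self._feed.latest(symbol).price * quantity


feed_a = StaticFeed({"ETH": PricePoint(100.0, 0.0)})
feed_b = StaticFeed({"ETH": PricePoint(110.0, 0.0)})
engine = MarginEngine(feed_a)
value_before = engine.mark_value("ETH", 2.0)
engine.set_feed(feed_b)
value_after = engine.mark_value("ETH", 2.0)
```

Because the engine only knows the interface, a replacement provider can be stress-tested in isolation before it is switched in.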

Evolution

The trajectory of price discovery has moved from simple, monolithic feeds toward sophisticated, verifiable computation. Initially, protocols accepted any provided data, assuming honest behavior. The subsequent era introduced basic sanity checks.
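A basic sanity check of the kind introduced in that second era might resemble the sketch below. The function name, the thresholds, and the specific rules (positivity, staleness, and a bounded jump from the last accepted price) are assumptions chosen for illustration.

```python
def passes_sanity_checks(new_price, new_timestamp, last_price, now,
                         max_age_seconds=60.0, max_deviation=0.10):
    """Reject non-positive, stale, or implausibly jumpy price updates."""
    if new_price <= 0:
        return False
    if now - new_timestamp > max_age_seconds:
        return False  # update is too old to act on
    if last_price > 0:
        jump = abs(new_price - last_price) / last_price
        if jump > max_deviation:
            return False  # moved too far from the last accepted price
    return True


# Hypothetical updates evaluated at time now = 1000.0 seconds.
fresh_ok = passes_sanity_checks(101.0, 990.0, 100.0, 1000.0)
too_stale = passes_sanity_checks(101.0, 900.0, 100.0, 1000.0)
too_jumpy = passes_sanity_checks(125.0, 990.0, 100.0, 1000.0)
```

Checks like these catch gross errors but say nothing about how the price was produced, which is the gap that pipeline-level verification aims to close.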

We are now in the era of proof-of-data, where protocols demand verifiable evidence of the entire pipeline, including the specific code versions running on oracle nodes. This is where the pricing model becomes elegant, and dangerous if ignored. The market has matured enough to recognize that transparency is the only viable defense against systemic contagion.

Verifiable price data transforms opaque market inputs into public, audit-ready components of the decentralized financial stack.

The integration of Zero-Knowledge proofs represents the next step in this evolution. These proofs allow oracle nodes to demonstrate that they have correctly processed data according to a pre-defined algorithm without revealing the raw, proprietary source data in its entirety. This addresses the tension between commercial data sensitivity and the requirement for public auditability.

Horizon

Future iterations will likely move toward real-time, on-chain volatility surface construction, where Data Feed Transparency extends to the entire implied volatility curve. This will allow for dynamic margin requirements that adjust instantaneously to market conditions, rather than relying on static, lagged parameters. The convergence of off-chain computation and on-chain settlement will enable more complex derivative structures, such as exotic options, to be priced and cleared with the same rigor as standard instruments.
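As a hedged illustration of what a volatility-responsive margin could look like, the sketch below scales the margin rate with implied volatility over a chosen risk horizon. The formula, the function name, and the default multiplier and floor are all hypothetical choices for this example, not a description of any existing protocol.

```python
import math


def dynamic_margin_rate(implied_vol, horizon_days,
                        confidence_multiplier=3.0, floor=0.02):
    """Margin as a fraction of notional: a multiple of the expected
    one-standard-deviation move over the horizon, never below the floor."""
    horizon_move = implied_vol * math.sqrt(horizon_days / 365.0)
    return max(floor, confidence_multiplier * horizon_move)


# Hypothetical inputs: margin reacts as implied volatility doubles.
calm_rate = dynamic_margin_rate(0.60, 1.0)
stressed_rate = dynamic_margin_rate(1.20, 1.0)
```

With a live, transparent volatility input, the rate would be recomputed on each feed update rather than set periodically by governance.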

| Metric | Legacy Feed | Next Generation Feed |
|---|---|---|
| Latency | Seconds | Milliseconds |
| Verification | Post-hoc | Real-time ZK-proof |
| Scope | Spot price | Full volatility surface |

The ultimate goal is the complete elimination of reliance on external trust, where the feed is an emergent property of the market’s own liquidity rather than an imported metric. This evolution necessitates a deep integration between order flow analysis and oracle design. One might wonder if the ultimate price feed is simply the market’s own decentralized order book, where settlement is derived directly from the limit order state rather than external price inputs.