Essence

Data Feed Accuracy represents the fidelity of information transmission between exchange venues and the smart contract settlement layers. It measures the temporal and numerical alignment between off-chain market reality and on-chain state execution. In crypto derivatives, it functions as the primary arbiter of solvency for margin-based positions.

Data Feed Accuracy determines the integrity of automated liquidation engines by ensuring contract settlement reflects precise market valuations.

Financial systems rely on this synchronization to maintain equilibrium. When latency or divergence arises between the source of truth and the execution layer, the discrepancy creates structural vulnerabilities. These gaps often manifest as toxic arbitrage opportunities: participants exploit the delay to extract value from the protocol, degrading the collateralization ratios that protect it from systemic failure.

Origin

The genesis of Data Feed Accuracy resides in the technical limitations of early oracle architectures.

Initial designs struggled to reconcile the high-frequency nature of centralized order books with the deterministic, block-based execution environment of blockchain networks. Developers sought to replicate traditional financial infrastructure, yet the absence of a unified, high-speed connectivity layer necessitated the creation of decentralized data verification protocols.

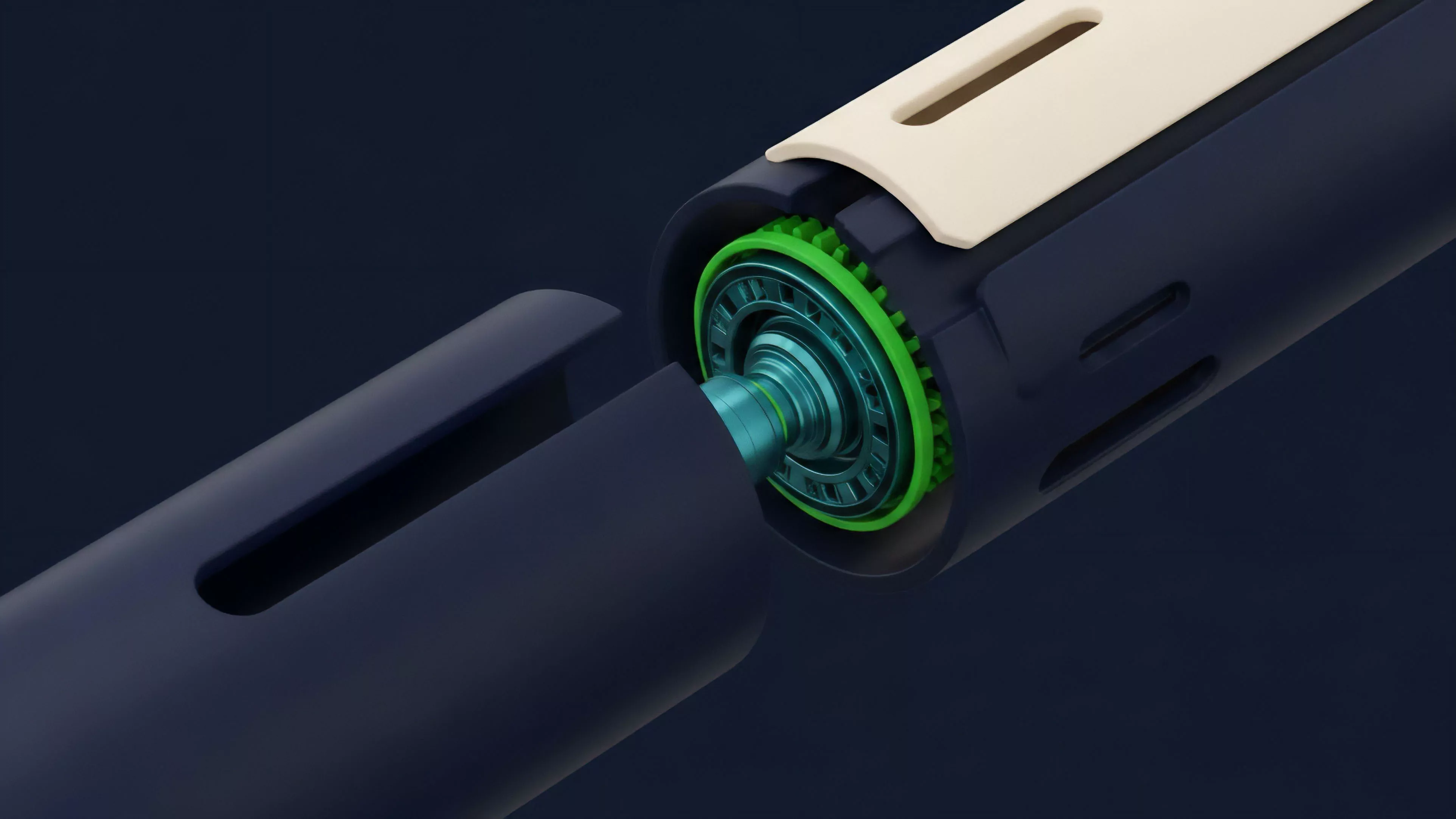

- Oracle Decentralization: Shifted trust from singular endpoints to distributed node networks.

- Latency Minimization: Developed push-based models to update prices upon significant market movement.

- Security Hardening: Introduced cryptographic proofs to validate data origin and prevent tampering.

This evolution was driven by the urgent requirement to prevent oracle manipulation attacks. As derivatives protocols expanded, the cost of inaccurate data grew, forcing a transition from simple request-response models to complex, multi-source aggregation systems designed to withstand adversarial market conditions.

Theory

The theoretical framework for Data Feed Accuracy centers on the relationship between update frequency, source diversity, and the tolerance for deviation. Quantitatively, this involves modeling the probability of oracle error against the liquidation threshold of a position.

If the error in the price feed exceeds the buffer that the maintenance margin provides, the protocol risks triggering incorrect liquidations or failing to execute necessary ones during periods of extreme volatility.
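To make the relationship between feed error and the liquidation threshold concrete, here is a minimal sketch. The function names, the 5% maintenance margin, and the position figures are illustrative assumptions, not parameters of any particular protocol.

```python
def margin_ratio(collateral: float, size: float, entry: float, price: float) -> float:
    """Margin ratio of a long position: equity divided by notional at `price`."""
    equity = collateral + size * (price - entry)
    return equity / (size * price)


def should_liquidate(collateral: float, size: float, entry: float,
                     price: float, maintenance: float = 0.05) -> bool:
    """Hypothetical rule: liquidate once the margin ratio falls below maintenance."""
    return margin_ratio(collateral, size, entry, price) < maintenance


# Assumed position: a 10x long of 1 unit entered at 100 with 10 of collateral.
true_price = 95.0  # where the market actually trades
feed_price = 94.0  # what the oracle reports, roughly 1% too low

# The position is healthy at the true price but liquidated at the feed price.
wrongly_liquidated = (
    should_liquidate(10.0, 1.0, 100.0, feed_price)
    and not should_liquidate(10.0, 1.0, 100.0, true_price)
)
```

In this example a feed error of about one percent is enough to flip the liquidation decision, because the position sits close to its maintenance margin. The further a position is from that threshold, the larger the feed error it can absorb.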

| Mechanism | Function | Risk Factor |
| --- | --- | --- |
| Medianization | Aggregates multiple sources to filter outliers | Low sensitivity to rapid flash crashes |
| Deviation Threshold | Updates only when price shifts by a set percentage | Latency during high-velocity market moves |
| Time-Weighted Average | Smooths price inputs over specific intervals | Susceptibility to stale data pricing |
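The three mechanisms in the table can be sketched in a few lines each. This is a simplified illustration under stated assumptions: the function names, the 0.5% threshold, and the sample prices are hypothetical, and production oracles add signature checks, staleness limits, and other safeguards omitted here.

```python
from statistics import median


def medianize(prices):
    """Medianization: a single outlier cannot drag the aggregate far."""
    return median(prices)


def needs_update(last_published, current, threshold=0.005):
    """Deviation threshold: publish only if the price moved by >= threshold."""
    return abs(current - last_published) / last_published >= threshold


def twap(samples):
    """Time-weighted average over (timestamp, price) pairs sorted by time.

    Each price is assumed to hold until the next sample; at least two
    samples with distinct timestamps are required.
    """
    weighted_sum = 0.0
    for (t0, p0), (t1, _) in zip(samples, samples[1:]):
        weighted_sum += p0 * (t1 - t0)
    return weighted_sum / (samples[-1][0] - samples[0][0])


aggregate = medianize([100.0, 100.2, 99.9, 250.0])      # the 250.0 outlier is filtered
small_move = needs_update(100.0, 100.4)                 # 0.4% move: no update
large_move = needs_update(100.0, 100.6)                 # 0.6% move: update
smoothed = twap([(0, 100.0), (10, 110.0), (30, 100.0)])
```

Each sketch also exposes the risk listed in its row: the median ignores a genuine crash until most sources report it, the threshold check leaves the published price unchanged for moves below the threshold, and the time-weighted average keeps reflecting older prices after the market has moved.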

The robustness of a derivatives protocol declines as the delay between market price discovery and the corresponding oracle update grows.

In game-theoretic terms, the environment is adversarial: participants actively search for discrepancies between feeds. A protocol with low Data Feed Accuracy becomes a target for automated agents that monitor these gaps and extract liquidity before the system can correct its internal state. This interaction defines the protocol physics: the speed of consensus directly impacts the efficiency of the margin engine.

Approach

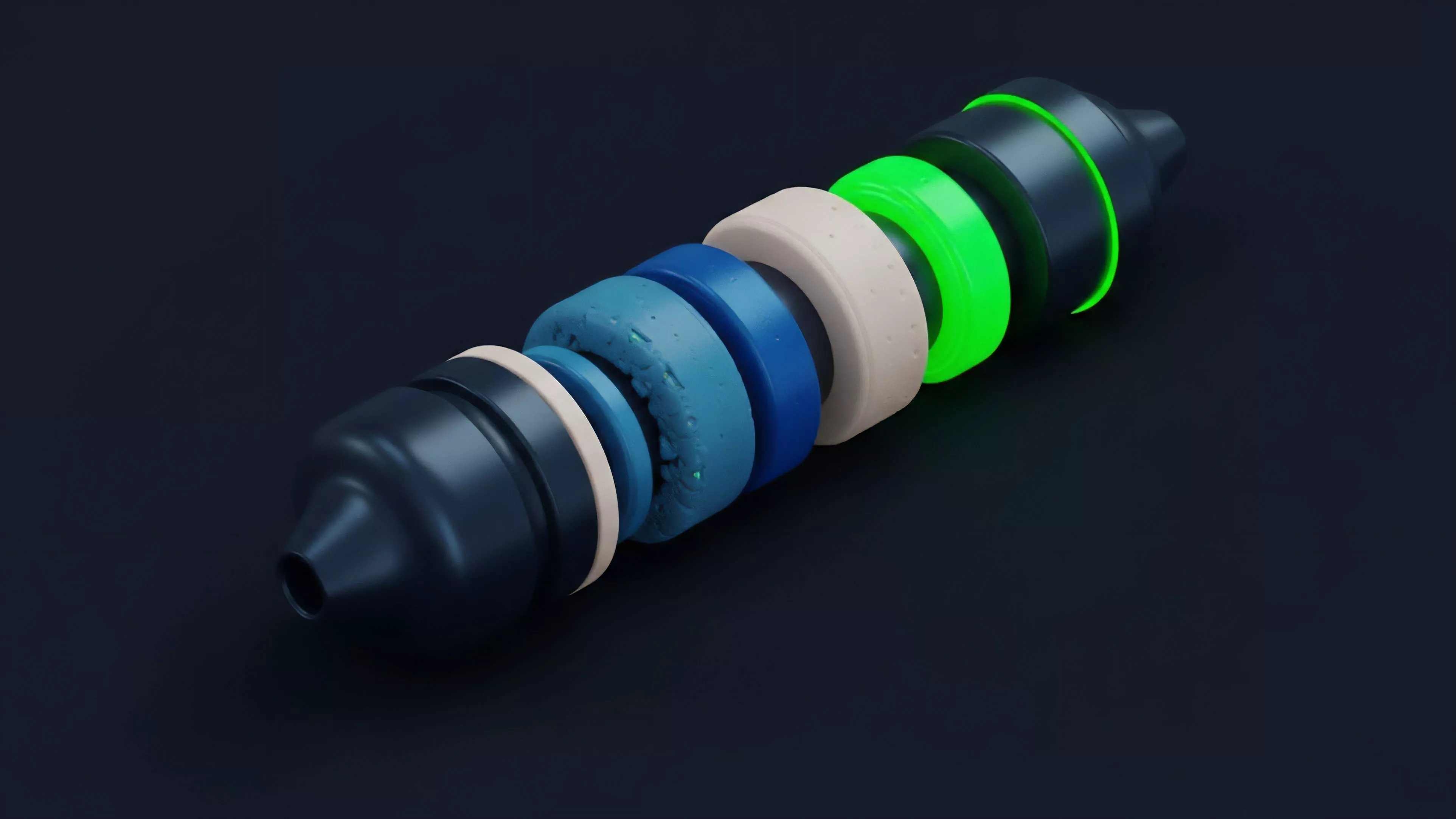

Current methodologies emphasize the integration of hybrid oracle solutions that combine off-chain computation with on-chain verification.

Modern protocols utilize advanced filtering algorithms to discard erroneous inputs from compromised or malfunctioning nodes. By assigning weights to data providers based on historical reliability, these systems attempt to insulate themselves from the inherent instability of decentralized networks.
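One way to picture reliability weighting is a weighted median, where each provider's report counts in proportion to its weight. The sketch below is an assumption about how such weighting could work, not a description of any specific oracle network; the function name and the weights are hypothetical.

```python
def weighted_median(reports):
    """Return the price at which cumulative weight first reaches half the total.

    `reports` is a list of (price, weight) pairs with positive weights,
    where the weight stands in for a provider's historical reliability.
    """
    ordered = sorted(reports)
    half = sum(weight for _, weight in ordered) / 2
    running = 0.0
    for price, weight in ordered:
        running += weight
        if running >= half:
            return price


# Assumed reports: two reliable providers and one low-weight outlier.
reports = [(99.8, 2), (100.0, 5), (180.0, 3)]
price = weighted_median(reports)
```

Here the provider reporting 180.0 holds 30% of the total weight, which is not enough to move the result away from 100.0. A provider would need to control a majority of the weight to dictate the aggregate on its own.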

- Aggregated Feeds: Combining data from centralized exchanges, decentralized pools, and futures markets.

- Staking Incentives: Penalizing nodes that provide data diverging from the calculated global median.

- Circuit Breakers: Pausing liquidations when data volatility surpasses predefined safety parameters.

This approach shifts the focus toward proactive risk mitigation. Instead of assuming data integrity, the system treats all incoming signals as potentially adversarial, continuously validating inputs against secondary sources before allowing them to impact the contract state.
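The validation and circuit-breaker ideas above can be combined in a small gatekeeper. This is a minimal sketch: `FeedGuard`, its thresholds, and the manual `resume` step are hypothetical names and design choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedGuard:
    """Hypothetical gatekeeper between raw oracle reports and contract state."""
    max_divergence: float = 0.01  # tolerated gap between primary and secondary source
    max_move: float = 0.10        # tolerated jump versus the last accepted price
    last_accepted: Optional[float] = None
    paused: bool = False          # True while the circuit breaker is tripped

    def submit(self, primary: float, secondary: float) -> bool:
        """Return True if `primary` is accepted as the new reference price."""
        if self.paused:
            return False
        # Cross-check: distrust the primary report unless a second source agrees.
        if abs(primary - secondary) / secondary > self.max_divergence:
            return False
        # Circuit breaker: an abnormally large move pauses further updates.
        if self.last_accepted is not None:
            move = abs(primary - self.last_accepted) / self.last_accepted
            if move > self.max_move:
                self.paused = True
                return False
        self.last_accepted = primary
        return True

    def resume(self) -> None:
        """Manual reset, for example after operator or governance review."""
        self.paused = False


guard = FeedGuard()
first = guard.submit(100.0, 100.2)   # sources agree: accepted
second = guard.submit(100.0, 105.0)  # sources disagree by about 4.8%: rejected
third = guard.submit(120.0, 120.1)   # sources agree, but a 20% jump trips the breaker
```

After the third call the guard stays paused and keeps 100.0 as its last accepted price until `resume` is called. Requiring an explicit reset is one possible design choice; an automatic timeout would be another.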

Evolution

The trajectory of Data Feed Accuracy has moved from simple, centralized API queries toward complex, cryptographically secured streams. Early iterations suffered from single points of failure, where a compromised endpoint could dictate the pricing of an entire protocol.

The shift toward decentralized oracle networks significantly reduced this vulnerability, though it introduced new challenges regarding latency and network congestion.

Advancements in zero-knowledge proofs and off-chain scaling now enable higher update frequencies without compromising the underlying security of the data.

The industry must now scale these solutions to accommodate institutional-grade derivatives. The evolution reflects a broader transition toward high-fidelity market data, where sub-second updates are no longer a luxury but a requirement for maintaining parity with traditional high-frequency trading venues. Market participants now demand transparency in how these feeds are constructed, leading to the rise of verifiable, audit-ready data structures.

Horizon

The future of Data Feed Accuracy lies in the development of real-time, permissionless, and censorship-resistant data pipelines.

Future architectures will likely leverage native blockchain throughput to move price discovery entirely on-chain, eliminating the need for external intermediaries. This will necessitate a fundamental redesign of how margin engines handle data, moving toward asynchronous settlement models that can process price updates in parallel with trading activity.

- Native Protocol Oracles: Decentralized price discovery mechanisms built directly into the base layer.

- Predictive Data Aggregation: Machine learning models that anticipate price volatility to adjust update frequency dynamically.

- Cross-Chain Data Interoperability: Standardized formats that allow liquidity to move seamlessly across ecosystems while maintaining price parity.

These developments will likely redefine the competitive landscape: protocols that offer the highest degree of Data Feed Accuracy will attract the most sophisticated market makers. The focus will remain on minimizing the cost of trust, so that decentralized derivatives can operate with the same efficiency and reliability as their legacy counterparts.