Essence

Data Driven Risk Assessment functions as the computational backbone for navigating decentralized derivative markets. It replaces subjective intuition with systematic quantification, using high-frequency telemetry from order books, chain-native margin engines, and volatility surfaces to map exposure. The result is a measurable basis for pricing risk in an environment where smart contract execution replaces traditional clearinghouse guarantees.

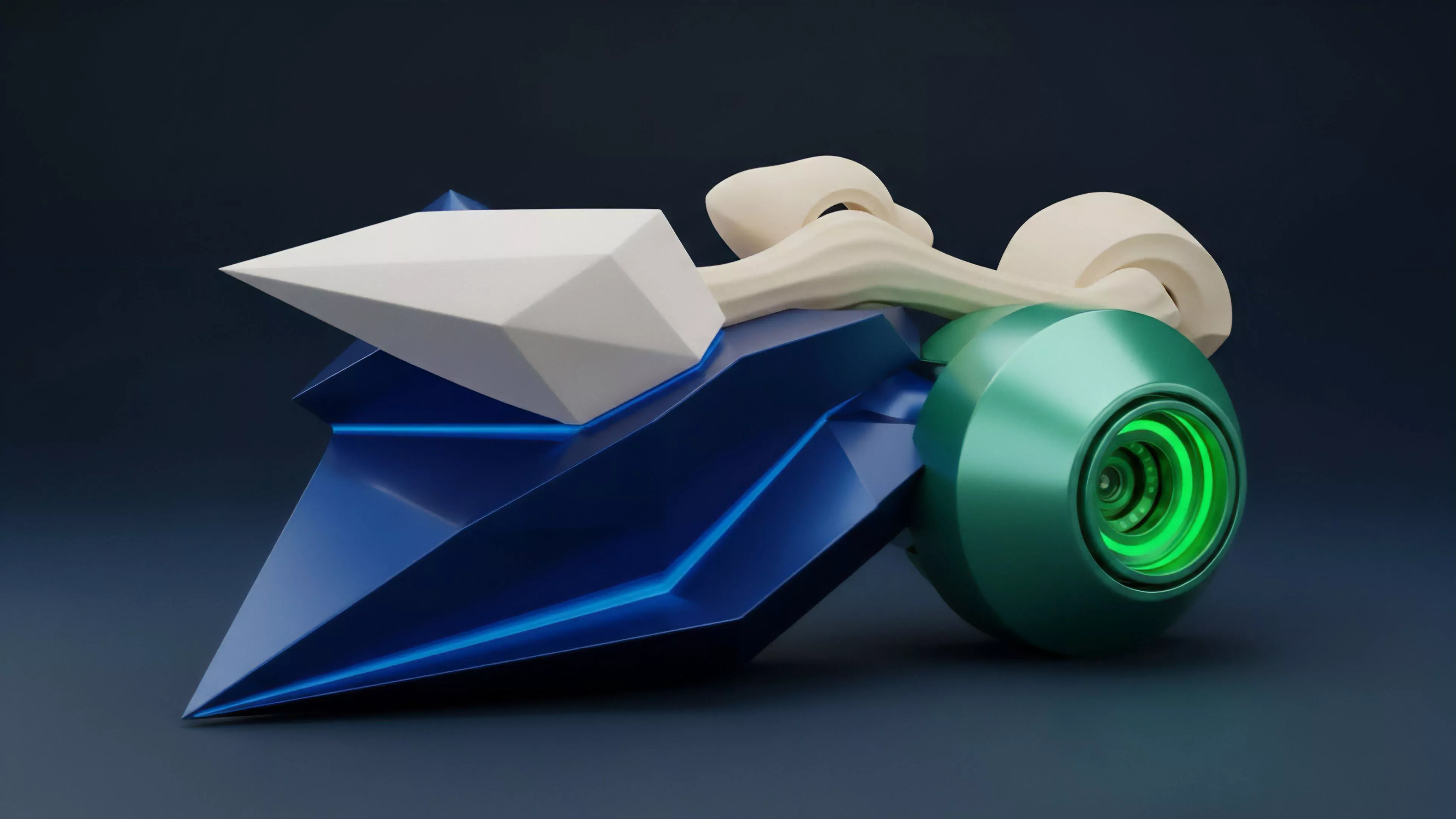

Data Driven Risk Assessment transforms raw market telemetry into actionable risk metrics for decentralized derivative protocols.

At its core, this approach treats market participants as agents within a complex, adversarial system. By continuously analyzing liquidity depth, liquidation thresholds, and basis volatility, the mechanism identifies structural weaknesses before they propagate into systemic failures. It shifts the burden of proof from historical precedent to real-time verification of on-chain state, so that capital efficiency stays balanced against the risk of insolvency.

Origin

The necessity for Data Driven Risk Assessment emerged from the inherent limitations of early decentralized finance protocols that relied on simplistic, static collateralization ratios.

Market participants observed that during high-volatility regimes, these legacy models failed to account for rapid price cascades and the subsequent breakdown of liquidity provision. Early developers recognized that decentralized exchanges required dynamic, automated systems capable of adjusting margin requirements in response to evolving market conditions.

- Systemic Fragility: Initial protocols lacked automated mechanisms to adjust for rapid changes in underlying asset liquidity.

- Algorithmic Oversight: The move toward programmatic risk management allowed for the integration of real-time volatility data into margin calculations.

- Adversarial Design: Protocols began incorporating game-theoretic incentives so that participants are rewarded for maintaining system stability.

This evolution drew heavily from traditional quantitative finance models, adapting Black-Scholes pricing and Value at Risk (VaR) frameworks to the 24/7, high-velocity environment of crypto assets. By synthesizing these established financial theories with the transparency of public ledgers, architects developed the first iteration of truly responsive, data-reliant risk frameworks.
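
As a rough illustration of how a VaR-style measure carries over to round-the-clock markets, the sketch below computes a historical-simulation VaR from hourly returns. The function name `historical_var`, the sample returns, and the position size are all hypothetical and chosen only for illustration; a production system would use far longer return histories.

```python
import math


def historical_var(returns, confidence=0.95):
    """Empirical loss quantile, returned as a non-negative fraction of notional."""
    if not returns:
        raise ValueError("returns must not be empty")
    losses = sorted(-r for r in returns)  # losses in ascending order
    index = math.ceil(confidence * len(losses)) - 1
    index = min(max(index, 0), len(losses) - 1)
    # A negative quantile means no loss at this confidence level; floor at zero.
    return max(losses[index], 0.0)


# Hypothetical hourly returns; crypto markets trade 24/7, so there are no
# overnight or weekend gaps to adjust for.
hourly_returns = [-0.10, -0.05, -0.02, -0.01, 0.0, 0.01, 0.02, 0.03, 0.04, 0.05]
position_usd = 250_000  # assumed position size
var_90 = historical_var(hourly_returns, confidence=0.90)
print(f"1-hour 90% VaR: {var_90:.2%} of notional ({position_usd * var_90:,.0f} USD)")
```

Historical simulation is used here only because it needs no distributional assumption; parametric or Monte Carlo VaR would be equally valid choices.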

Theory

The theoretical framework rests on the continuous evaluation of exposure to the option Greeks and of liquidity dynamics. Mathematical modeling estimates the probability of insolvency by stress-testing portfolios against extreme price movements, while protocol physics dictate how these assessments feed into liquidation engine triggers.

| Metric | Function | Impact |
|---|---|---|
| Delta | Directional exposure | Adjusts hedge requirements |
| Gamma | Convexity risk | Calibrates rebalancing frequency |
| Vega | Volatility sensitivity | Updates margin buffer sizing |
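
To make the three sensitivities in the table concrete, here is a minimal sketch that computes them for a European call under Black-Scholes assumptions. The helper names and the example inputs (an at-the-money option with 80% annualized volatility and three months to expiry) are illustrative assumptions, not parameters taken from any particular protocol.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, vol: float, years: float, rate: float = 0.0) -> dict:
    """Delta, gamma, and vega of a European call under Black-Scholes."""
    if min(spot, strike, vol, years) <= 0:
        raise ValueError("spot, strike, vol, and years must be positive")
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt_t)
    return {
        "delta": norm_cdf(d1),                          # directional exposure
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),  # convexity of delta
        "vega": spot * norm_pdf(d1) * sqrt_t,           # per 1.00 change in vol
    }


greeks = call_greeks(spot=100.0, strike=100.0, vol=0.8, years=0.25)
for name, value in greeks.items():
    print(f"{name}: {value:.4f}")
```

A margin engine would typically aggregate these per-position values across an account before deciding how much buffer to require.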

Effective risk management requires constant calibration of portfolio sensitivities against shifting market volatility and liquidity depth.

Systems must account for the non-linear relationship between collateral value and liquidation pressure. In moments of extreme stress, liquidity vanishes, and models that assume constant depth and linear price impact break down. Consequently, advanced frameworks use stochastic processes to simulate tail-risk events, with the aim of keeping the protocol solvent even when oracle latency or gas spikes impede standard settlement processes.
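
One way to picture the stochastic tail-risk simulation described above is a small Monte Carlo run over a jump-diffusion price process. The sketch below is an assumption-laden illustration: the function names, the jump parameters, and the fixed seed are all hypothetical. It treats a long position as insolvent when the worst drawdown on a path reaches the posted margin, which corresponds to the case where liquidation could not execute in time.

```python
import math
import random


def simulate_worst_returns(n_paths, n_steps, annual_vol, horizon_years,
                           jump_prob_per_step=0.01, jump_mean=-0.08,
                           jump_std=0.05, seed=7):
    """Worst return from entry on each simulated path (values in (-1, 0])."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    dt = horizon_years / n_steps
    drift = -0.5 * annual_vol ** 2 * dt  # Ito correction only, no trend assumed
    diffusion = annual_vol * math.sqrt(dt)
    worst = []
    for _ in range(n_paths):
        log_price, path_min = 0.0, 0.0
        for _ in range(n_steps):
            log_price += drift + diffusion * rng.gauss(0.0, 1.0)
            if rng.random() < jump_prob_per_step:  # occasional downward jump
                log_price += rng.gauss(jump_mean, jump_std)
            path_min = min(path_min, log_price)
        worst.append(math.exp(path_min) - 1.0)
    return worst


def insolvency_probability(worst_returns, leverage):
    """Share of paths where a long position loses at least its posted margin."""
    margin = 1.0 / leverage
    breaches = sum(1 for r in worst_returns if r <= -margin)
    return breaches / len(worst_returns)


# One day, hourly steps, 90% annualized volatility (all assumed values).
worst = simulate_worst_returns(n_paths=2000, n_steps=24,
                               annual_vol=0.9, horizon_years=1 / 365)
for lev in (2, 5, 10, 20):
    print(f"{lev}x leverage: P(insolvent) = {insolvency_probability(worst, lev):.4f}")
```

Because the same simulated paths are reused for every leverage level, the estimated probability can only rise as leverage increases, which makes comparisons across leverage tiers stable.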

The interplay between these variables creates a feedback loop where risk assessment directly influences the cost of leverage.

Approach

Modern implementation of Data Driven Risk Assessment focuses on integrating multi-dimensional data feeds into a unified margin engine. Architects deploy sophisticated pipelines that ingest order flow, funding rates, and open interest to calculate the real-time health of individual accounts.

- Telemetry Ingestion: Aggregating off-chain and on-chain data points to maintain a high-fidelity view of market conditions.

- Parameter Optimization: Adjusting liquidation thresholds dynamically based on the current volatility surface and available liquidity.

- Adversarial Testing: Running continuous simulations to identify potential exploits in the margin engine logic.
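
The parameter-optimization step listed above can be sketched as a maintenance margin rate that widens with volatility and thins with order book depth. Everything in this example is a hypothetical illustration: the `MarketSnapshot` type, the linear impact term, and the default coefficients are assumptions rather than the formula of any specific protocol.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketSnapshot:
    annualized_vol: float  # e.g. 0.40 for 40%
    bid_depth_usd: float   # resting bids near the mark price


def maintenance_margin_rate(snapshot, position_usd, base_rate=0.02,
                            vol_multiplier=2.0, horizon_hours=1.0,
                            impact_coeff=0.10, cap=0.50):
    """Margin rate = base + volatility buffer + liquidity impact buffer, capped."""
    horizon_years = horizon_hours / (24 * 365)
    vol_buffer = vol_multiplier * snapshot.annualized_vol * math.sqrt(horizon_years)
    if snapshot.bid_depth_usd <= 0:
        depth_share = 1.0  # empty book: assume the worst case
    else:
        depth_share = min(1.0, position_usd / snapshot.bid_depth_usd)
    return min(cap, base_rate + vol_buffer + impact_coeff * depth_share)


def account_health(equity_usd, position_usd, margin_rate):
    """Equity over required margin; below 1.0 the account is liquidatable."""
    return equity_usd / (position_usd * margin_rate)


calm = MarketSnapshot(annualized_vol=0.40, bid_depth_usd=5_000_000)
stressed = MarketSnapshot(annualized_vol=1.50, bid_depth_usd=500_000)
for label, snap in (("calm", calm), ("stressed", stressed)):
    rate = maintenance_margin_rate(snap, position_usd=100_000)
    health = account_health(equity_usd=5_000, position_usd=100_000, margin_rate=rate)
    print(f"{label}: margin rate {rate:.4f}, health {health:.2f}")
```

With these assumed inputs, the same account is healthy in the calm snapshot and falls below the liquidation line in the stressed one, which is the behavior a dynamic threshold is meant to produce.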

This methodology emphasizes the role of the Liquidation Engine as the ultimate arbiter of system health. By monitoring the speed at which collateral can be offloaded during market downturns, the system modulates user leverage to prevent cascading liquidations. The objective is to maintain a state of equilibrium where the protocol is sufficiently capitalized to absorb sudden shifts without relying on centralized intervention.
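
The link between offload speed and permitted leverage can be sketched as follows. This is a simplified, hypothetical rule: it assumes a liquidator can take a fixed share of hourly volume, estimates how long a full unwind would take, and sizes leverage so that a multi-sigma move over that window is covered by margin. The function name and all default parameters are assumptions.

```python
import math


def max_leverage(position_usd, hourly_volume_usd, annual_vol,
                 participation=0.10, z_score=3.0,
                 maintenance_rate=0.02, leverage_cap=20.0):
    """Leverage ceiling implied by the time needed to offload the position."""
    if position_usd <= 0 or hourly_volume_usd <= 0:
        raise ValueError("position and volume must be positive")
    # Hours needed if the liquidator trades `participation` of hourly volume.
    hours_to_unwind = position_usd / (participation * hourly_volume_usd)
    # Adverse move the margin must absorb while the unwind is in progress.
    adverse_move = z_score * annual_vol * math.sqrt(hours_to_unwind / (24 * 365))
    return min(leverage_cap, 1.0 / (adverse_move + maintenance_rate))


small = max_leverage(position_usd=50_000, hourly_volume_usd=10_000_000, annual_vol=0.8)
large = max_leverage(position_usd=20_000_000, hourly_volume_usd=10_000_000, annual_vol=0.8)
print(f"small position: {small:.1f}x, large position: {large:.1f}x")
```

Under these assumptions a small position hits the protocol-wide cap, while a position that would take many hours to unwind is held to a much lower ceiling.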

Evolution

The transition from simple, static models to highly complex, predictive systems marks a significant shift in decentralized market infrastructure.

Early systems operated in isolation, blind to broader macro-crypto correlations and cross-venue liquidity fragmentation. As protocols matured, they integrated cross-chain data and advanced machine learning models to anticipate volatility spikes before they manifested in order flow.

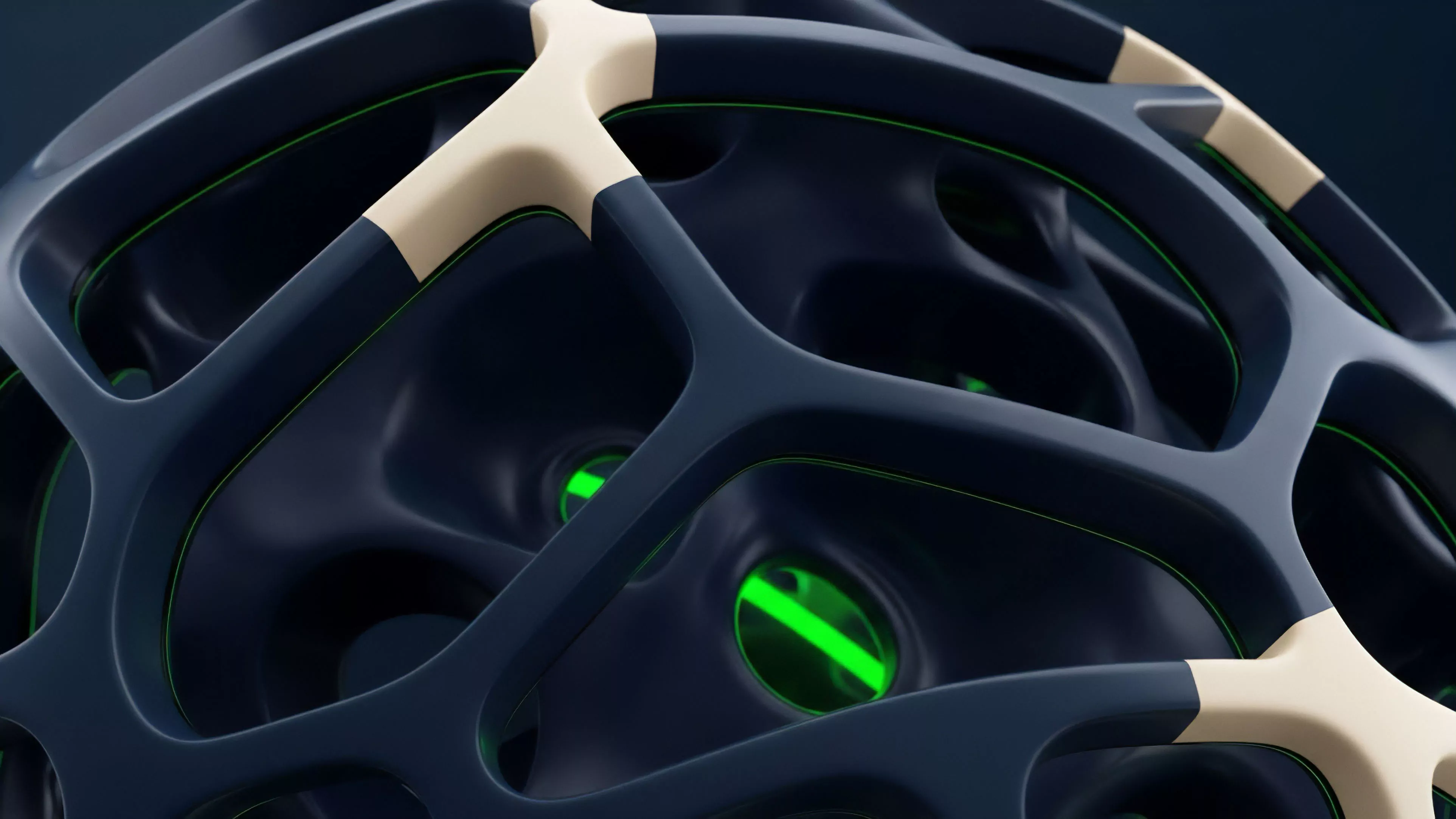

Systemic resilience depends on the ability of decentralized protocols to ingest and react to fragmented global liquidity data in real time.

This evolution also mirrors the increasing sophistication of market participants, who now use automated arbitrage agents to exploit any latency in risk adjustment. My own observation is that we are witnessing a permanent shift in which the margin engine itself becomes the most important piece of code in any decentralized exchange. Reliance on human intervention is giving way to immutable code that executes risk management based on predefined, data-driven parameters.

Horizon

Future developments in Data Driven Risk Assessment will likely prioritize privacy-preserving computation, allowing protocols to verify solvency without exposing sensitive account data.

We are moving toward a state where risk parameters are set autonomously by decentralized governance models that ingest vast quantities of historical and real-time data, optimizing for both capital efficiency and system stability.

| Future Development | Objective | Primary Benefit |
|---|---|---|
| ZK-Proofs | Solvency verification | Privacy-preserving risk assessment |
| Cross-Chain Oracles | Data aggregation | Mitigation of oracle manipulation |
| AI-Driven Modeling | Predictive analytics | Proactive margin adjustment |

The ultimate goal involves creating a self-healing financial system that maintains its integrity regardless of external market shocks. As these systems become more autonomous, the focus will shift toward the robustness of the underlying consensus mechanisms and the security of the smart contracts governing the risk assessment logic itself. The future of decentralized derivatives depends on our ability to build systems that remain functional under extreme adversarial conditions.