Essence

Data Compression Techniques within decentralized derivative markets represent the mathematical reduction of redundant information across order books, state updates, and clearing mechanisms. These methods ensure that high-frequency financial signals remain transmissible despite the inherent bandwidth constraints of distributed ledger technology. By stripping away non-essential data headers and utilizing state-diff encoding, protocols achieve greater throughput for complex option strategies.

Data compression optimizes the transmission of derivative state changes to maintain low-latency price discovery in resource-constrained environments.

These mechanisms are not merely about storage efficiency; they fundamentally alter the economic feasibility of on-chain market making. When order flow data is compressed, the cost of updating margin requirements and volatility surfaces decreases, allowing for more granular risk management. This efficiency directly impacts the capital requirements for liquidity providers, as lower operational overhead translates into tighter spreads and increased market depth.

Origin

The architectural roots of these techniques lie in the convergence of classical information theory and the specific limitations of early smart contract platforms.

Developers faced a binary choice: either sacrifice the complexity of derivative instruments or invent ways to pack more logic into limited gas budgets. Early attempts involved simple off-chain batching, but the requirement for trustless verification necessitated on-chain compression algorithms.

- State Diff Encoding: Protocols evolved to store only the changes in account balances rather than full state snapshots.

- Merkle Mountain Ranges: Efficient structures were adopted to verify large datasets with minimal proof size.

- Zero Knowledge Succinct Proofs: Advanced cryptographic primitives emerged to compress the validation of entire execution histories into short proofs whose size is constant or grows only slowly with the computation being proven.
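The first item in the list, state diff encoding, can be illustrated with a minimal sketch. The names `state_diff` and `apply_diff` are hypothetical, and the convention that a zero balance means "account removed" is an assumption made for this example, not something a specific protocol prescribes.

```python
from typing import Dict

Balances = Dict[str, int]


def state_diff(prev: Balances, curr: Balances) -> Balances:
    """Return only the accounts whose balance differs between two snapshots.

    Assumption: a missing account is equivalent to a zero balance, so a
    removed account is encoded as balance 0.
    """
    diff: Balances = {}
    for account in prev.keys() | curr.keys():
        new_value = curr.get(account, 0)
        if prev.get(account, 0) != new_value:
            diff[account] = new_value
    return diff


def apply_diff(prev: Balances, diff: Balances) -> Balances:
    """Rebuild the next snapshot from the previous snapshot plus a diff."""
    nxt = dict(prev)
    for account, value in diff.items():
        if value == 0:
            nxt.pop(account, None)  # zero balance: drop the account entirely
        else:
            nxt[account] = value
    return nxt
```

With a previous snapshot of three accounts where only one balance changes, one account closes, and one opens, the diff carries three entries and the unchanged account is never retransmitted.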

This trajectory mirrors the history of traditional high-frequency trading, where the speed of data serialization determined competitive advantage. In decentralized systems, the constraint shifted from hardware latency to network throughput and computational gas limits. The industry adopted techniques from distributed systems to ensure that complex options, which require constant re-evaluation of Greeks, could function without overwhelming the underlying consensus layer.

Theory

The quantitative framework for Data Compression Techniques relies on the entropy of order flow.

In a perfectly efficient market, every price update carries close to maximum information, which leaves little for lossless compression to remove. Crypto derivative markets, however, exhibit significant redundancy both across instruments (neighbouring strikes and expiries tend to move together) and over time (most parameters change little between updates). By applying delta encoding to option Greeks and volatility parameters, systems avoid retransmitting static values and send only the stochastic shifts that drive PnL.
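A small sketch can make the entropy argument concrete. It assumes values such as implied volatility are first quantized to integer ticks (the tick size and all function names here are hypothetical), then compares the empirical Shannon entropy of the raw ticks with that of their deltas.

```python
import math
from collections import Counter
from typing import List


def quantize(values: List[float], tick: float) -> List[int]:
    """Map floats to integer ticks (assumed fixed tick size)."""
    return [round(v / tick) for v in values]


def delta_encode(ticks: List[int]) -> List[int]:
    """Keep the first value, then only the change from the previous value."""
    if not ticks:
        return []
    return [ticks[0]] + [b - a for a, b in zip(ticks, ticks[1:])]


def delta_decode(deltas: List[int]) -> List[int]:
    """Invert delta_encode with a running sum."""
    out: List[int] = []
    total = 0
    for d in deltas:
        total += d
        out.append(total)
    return out


def shannon_entropy(symbols: List[int]) -> float:
    """Empirical entropy in bits per symbol."""
    counts = Counter(symbols)
    n = len(symbols)
    return -sum(c / n * math.log2(c / n) for c in counts.values())
```

For a slowly drifting series, the deltas cluster around a few small values, so their empirical entropy is lower than that of the raw ticks, and an entropy coder applied afterwards needs fewer bits per update. The encoding is lossless with respect to the quantized ticks; the quantization step itself is where precision is given up.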

| Method | Mechanism | Application |
| --- | --- | --- |
| Delta Encoding | Transmits changes from previous state | Order book updates |
| Huffman Coding | Assigns shorter codes to frequent data | Protocol event logs |
| Recursive Merkle Trees | Compresses multiple proofs into one | Settlement verification |
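To illustrate the Huffman coding row, the following sketch builds a prefix code over a list of event names. The event names and the helper names `huffman_codes` and `encode` are hypothetical, and the code emits a bit string for readability rather than packed bytes.

```python
import heapq
from collections import Counter
from typing import Dict, List


def huffman_codes(symbols: List[str]) -> Dict[str, str]:
    """Build a Huffman code: frequent symbols receive shorter bit strings."""
    counts = Counter(symbols)
    if not counts:
        return {}
    if len(counts) == 1:
        return {next(iter(counts)): "0"}
    # Heap entries: (frequency, unique tie-breaker, {symbol: code so far}).
    heap = [(freq, i, {sym: ""}) for i, (sym, freq) in enumerate(counts.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        # Merging two subtrees prepends one bit to every code inside them.
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (f1 + f2, next_id, merged))
        next_id += 1
    return heap[0][2]


def encode(symbols: List[str], codes: Dict[str, str]) -> str:
    """Concatenate the code of each symbol into one bit string."""
    return "".join(codes[s] for s in symbols)
```

In a log dominated by one event type, that type ends up with a one-bit code, while rare events such as liquidations pay for longer codes. The saving over a fixed-width encoding depends entirely on how skewed the event distribution is.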

The mathematical elegance here is found in the trade-off between computational overhead for compression and the savings in network bandwidth. As market participants increase their reliance on automated hedging agents, the demand for compressed, high-fidelity data feeds grows. These agents must process thousands of updates per second; thus, the protocol design must treat compression as a first-class citizen of the execution engine.
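The trade-off between compression effort and bandwidth can be measured directly. The sketch below uses Python's standard `zlib` module purely as a stand-in for whatever codec a protocol actually uses; the synthetic update payload and the `measure` helper are assumptions for illustration.

```python
import json
import time
import zlib
from typing import Tuple


def measure(payload: bytes, level: int) -> Tuple[int, float]:
    """Return (compressed size in bytes, seconds spent) for one zlib level."""
    start = time.perf_counter()
    compressed = zlib.compress(payload, level)
    elapsed = time.perf_counter() - start
    return len(compressed), elapsed


def sample_payload(n: int = 2000) -> bytes:
    """Synthetic, highly repetitive option updates (illustrative only)."""
    updates = [
        {"strike": 3000 + 50 * (i % 10), "iv": 0.65, "delta": 0.5, "seq": i}
        for i in range(n)
    ]
    return json.dumps(updates).encode()


if __name__ == "__main__":
    payload = sample_payload()
    for level in (1, 6, 9):
        size, seconds = measure(payload, level)
        print(f"level={level} bytes={size} of {len(payload)} time={seconds:.6f}s")
```

Running the script on representative data shows how much extra CPU time each level costs against the bytes it saves. The right operating point depends on whether the bottleneck is the sender's compute budget or the cost per transmitted byte.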

Sometimes, I wonder if we are merely building increasingly complex layers to mask the fundamental slowness of decentralized consensus. The obsession with throughput often distracts from the reality that financial risk remains concentrated regardless of how efficiently we pack our data packets.

Approach

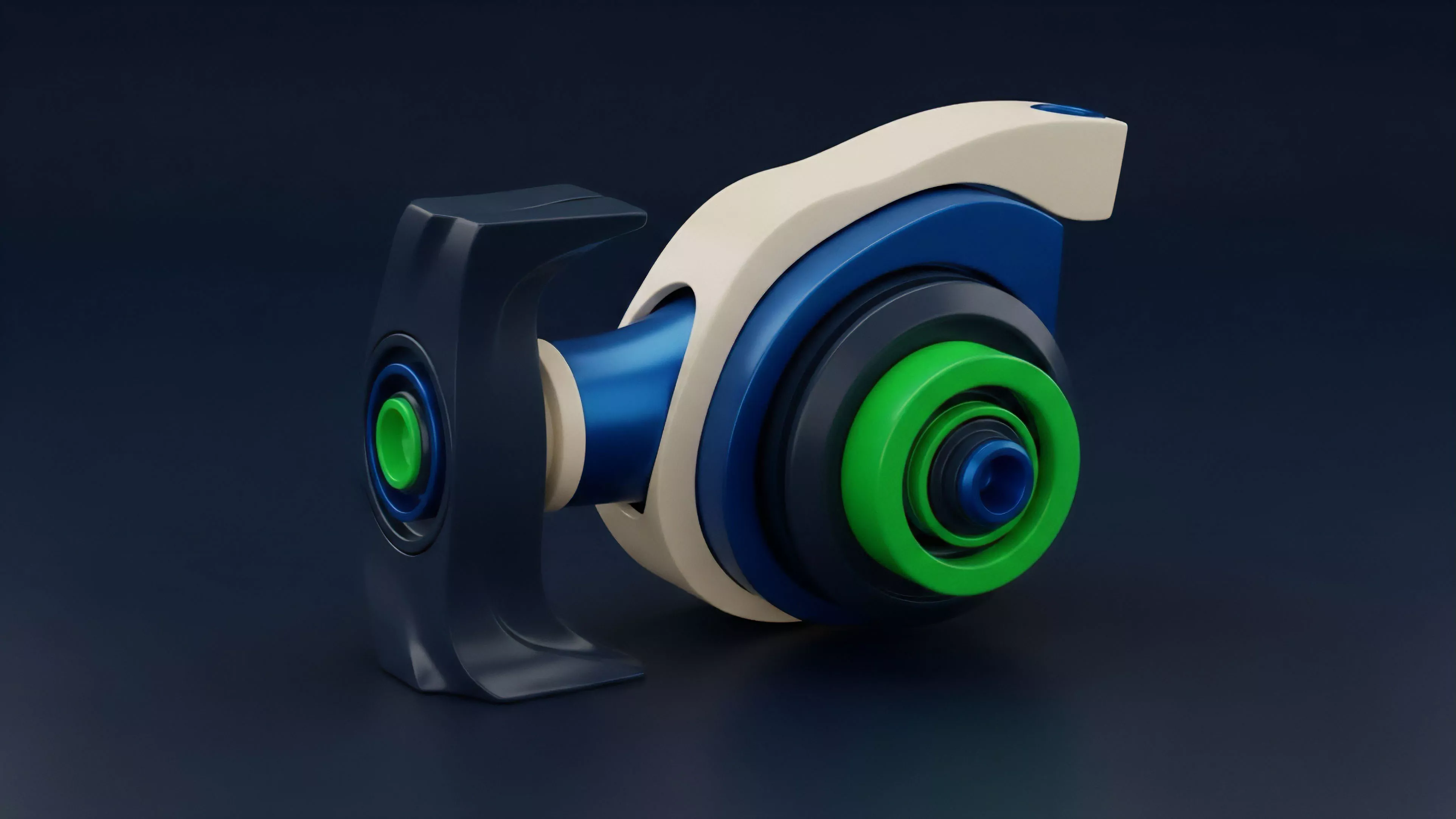

Modern implementation focuses on integrating Data Compression Techniques directly into the settlement layer. Instead of treating compression as a post-processing step, contemporary protocols embed the decoding and validation logic within the smart contracts themselves.

This approach allows for real-time validation of compressed data, ensuring that participants cannot inject malicious, malformed updates into the order book.

Protocol efficiency is achieved when compression algorithms allow the system to scale without compromising the integrity of the underlying state.

Strategists currently utilize several distinct architectures to manage this data load:

- Aggregated State Updates: Bundling multiple option exercises into a single transaction to reduce overhead.

- Sparse Merkle Trees: Maintaining large datasets where only active derivative positions occupy storage space.

- Off-chain Data Availability: Moving non-critical metadata to decentralized storage layers while keeping state roots on-chain.
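The sparse Merkle tree item in the list above can be sketched as follows. This is a toy version under stated assumptions: a depth of 8, SHA-256 as the hash, no domain separation between leaves and internal nodes, and hypothetical names throughout. It stores only the nodes that differ from the precomputed "empty subtree" hashes.

```python
import hashlib
from typing import Dict, List, Tuple

DEPTH = 8  # assumption: 2**8 leaf slots, kept tiny for illustration


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


# DEFAULTS[d] is the hash of a completely empty subtree of height d.
DEFAULTS: List[bytes] = [h(b"")]
for _ in range(DEPTH):
    DEFAULTS.append(h(DEFAULTS[-1] + DEFAULTS[-1]))


class SparseMerkleTree:
    def __init__(self) -> None:
        # (height, index) -> hash; empty subtrees are never stored.
        self.nodes: Dict[Tuple[int, int], bytes] = {}

    def _get(self, height: int, index: int) -> bytes:
        return self.nodes.get((height, index), DEFAULTS[height])

    def update(self, key: int, value: bytes) -> None:
        """Set the leaf at `key` and recompute the path up to the root."""
        if not 0 <= key < 2 ** DEPTH:
            raise ValueError("key out of range")
        node, index = h(value), key
        self.nodes[(0, index)] = node
        for height in range(DEPTH):
            sibling = self._get(height, index ^ 1)
            node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
            index //= 2
            self.nodes[(height + 1, index)] = node

    def root(self) -> bytes:
        return self._get(DEPTH, 0)

    def prove(self, key: int) -> List[bytes]:
        """Sibling hashes from the leaf level up to just below the root."""
        return [self._get(height, (key >> height) ^ 1) for height in range(DEPTH)]


def verify(root: bytes, key: int, value: bytes, proof: List[bytes]) -> bool:
    """Recompute the root from a leaf value and its sibling path."""
    node, index = h(value), key
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root
```

Each stored position costs at most `DEPTH + 1` entries regardless of how many leaf slots exist, which is the property the list item describes: only active positions occupy storage. Only the 32-byte root would need to live on-chain in such a design, with proofs supplied by whoever wants to act on a position.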

This methodology requires a deep understanding of the cost-benefit analysis between gas consumption and data accessibility. A system that compresses too aggressively risks making the data unreadable to independent auditors, thereby increasing the trust requirements of the protocol. Striking this balance is the primary challenge for any architect building for long-term systemic stability.

Evolution

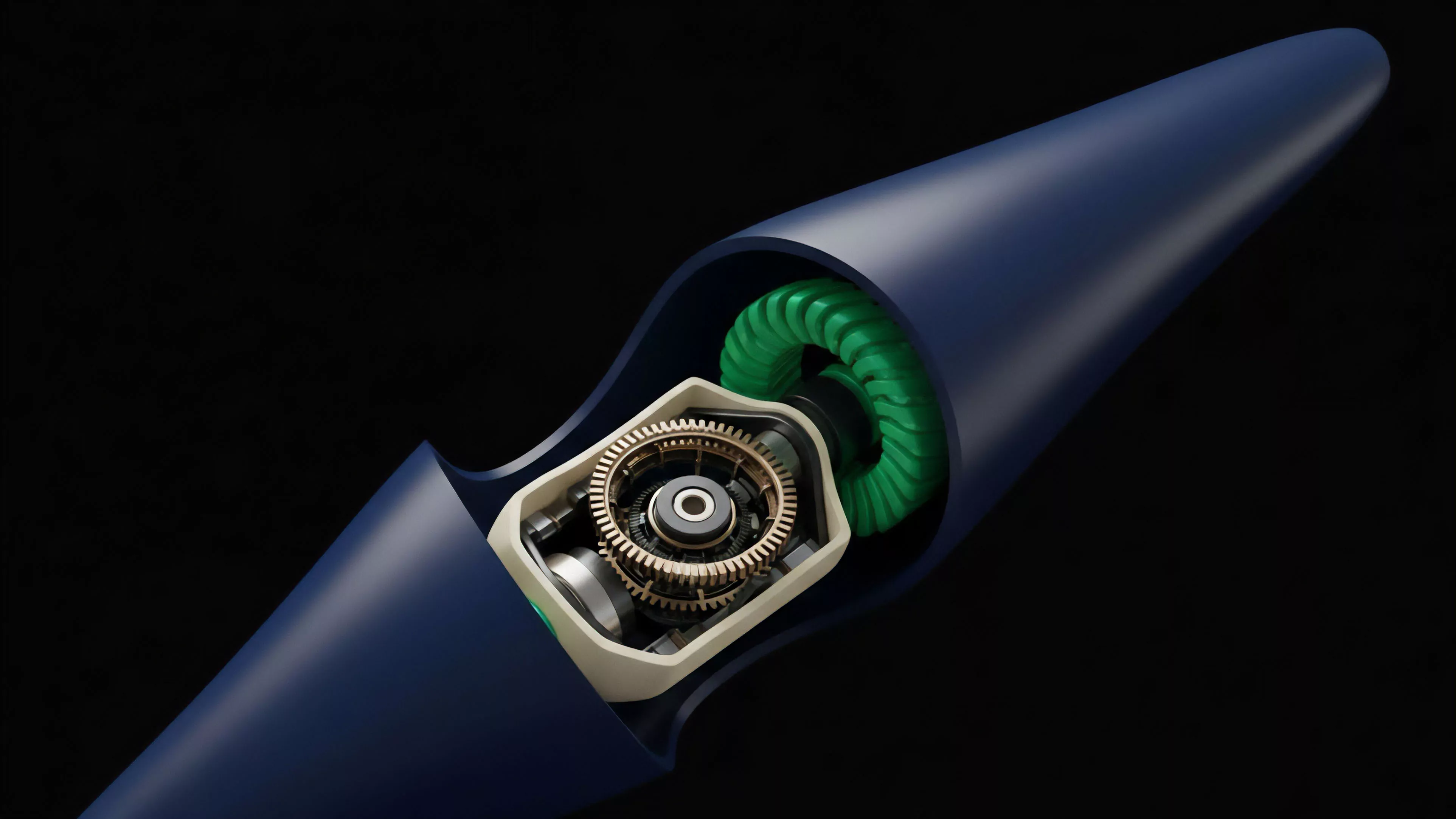

The transition from simple, monolithic smart contracts to modular, rollup-centric architectures has forced a radical change in how derivative data is handled.

Early iterations relied on inefficient, brute-force state updates that frequently congested the network during high-volatility events. Today, the focus is on Zero Knowledge Compression, where the computational burden moves to off-chain provers, leaving the main chain to verify only the final, highly condensed state.

| Era | Primary Focus | Constraint |
| --- | --- | --- |
| Early DeFi | Functionality | Gas costs |
| Scaling Phase | Throughput | Network latency |
| Modular Era | Proof size | Computational verification |

This evolution is driven by the necessity of survival in a highly competitive, adversarial environment. Protocols that fail to implement efficient data management quickly find themselves priced out of the market by rising transaction costs. The future of decentralized options is predicated on the ability to handle massive order flows without requiring a centralized sequencer, necessitating continued innovation in compression and data availability.

Horizon

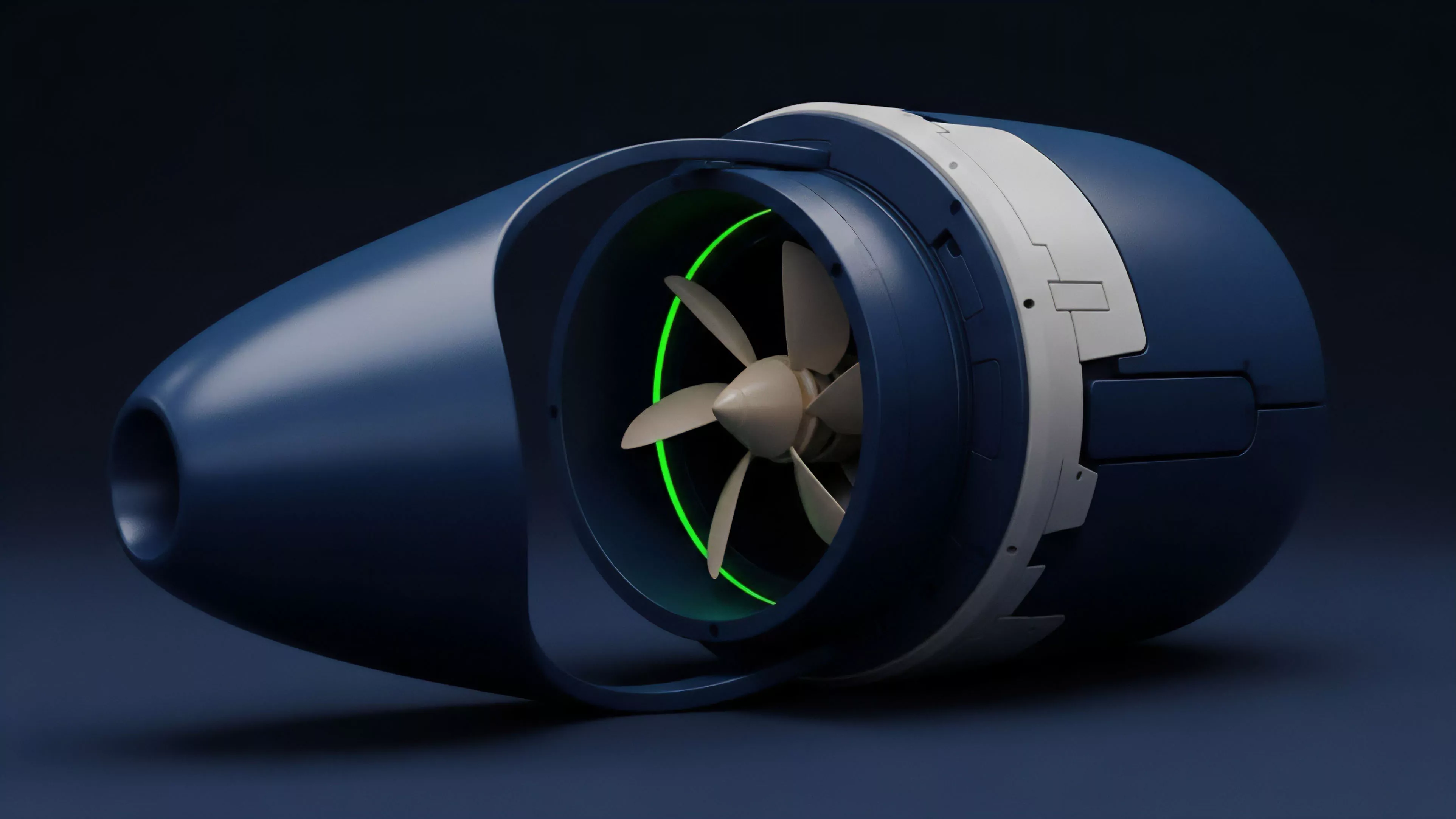

The next stage involves the deployment of Hardware-Accelerated Compression directly into the validator stack.

By utilizing field-programmable gate arrays to perform real-time data reduction, protocols will achieve throughput levels comparable to centralized exchanges. This transition will allow for the introduction of more complex exotic options that are currently too data-intensive for existing infrastructure.

The future of decentralized finance depends on our ability to verify massive, compressed datasets with latency low enough for real-time markets.

As these systems mature, the focus will move from internal protocol efficiency to cross-protocol data standardization. We will likely see the emergence of shared compression standards, allowing derivative data to move seamlessly between different layers of the modular stack. This will reduce liquidity fragmentation and enable a more unified global market for crypto derivatives, provided that the security of these compression proofs remains unassailable.