Essence

Data Backup Procedures in the context of crypto derivatives represent the operational protocols designed to ensure the persistence and recoverability of cryptographic keys, order book states, and margin account balances. These procedures function as the final defense against systemic collapse when smart contract logic or infrastructure layers experience catastrophic failure.

Data backup procedures serve as the ultimate insurance mechanism for maintaining financial continuity during infrastructure failure.

At their core, these procedures mandate the secure, geographically distributed replication of state data and access credentials. The objective is to mitigate the risk of permanent capital loss resulting from node corruption, validator downtime, or unforeseen vulnerabilities in protocol execution.

Origin

The genesis of robust Data Backup Procedures traces back to the inherent fragility of early decentralized exchanges and the recurring loss of private keys by retail participants. Early iterations focused on manual mnemonic phrase storage, a primitive but foundational method for seed recovery.

As derivative markets matured, the shift toward complex, non-custodial liquidity pools necessitated more sophisticated approaches. Market participants recognized that relying solely on on-chain state was insufficient during periods of extreme volatility, when rapid re-synchronization of off-chain order books became a survival requirement for market makers.

- Mnemonic recovery phrases provided the initial standard for individual asset security.

- Hardware security modules emerged to protect institutional-grade signing infrastructure.

- State snapshotting evolved to facilitate rapid restoration of decentralized derivative exchange states.

Theory

The theoretical framework for Data Backup Procedures relies on the principle of redundancy and the minimization of single points of failure. From a quantitative perspective, the value of a backup can be expressed as the reduction in expected capital loss it delivers during a black-swan event.
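The expected-loss framing can be illustrated with a short calculation. This is a minimal sketch that assumes a single failure scenario occurring with probability `p_failure` and known point estimates for the loss with and without a working backup; the function name, parameters, and example figures are hypothetical.

```python
def backup_value(p_failure: float, loss_without: float, loss_with: float,
                 annual_cost: float = 0.0) -> float:
    """Expected capital loss avoided by a backup, net of its cost.

    Hypothetical model: one failure scenario with probability p_failure,
    and point estimates of the loss with and without a working backup.
    """
    if not 0.0 <= p_failure <= 1.0:
        raise ValueError("p_failure must be a probability")
    expected_without = p_failure * loss_without
    expected_with = p_failure * loss_with
    return expected_without - expected_with - annual_cost


# Illustrative inputs: 2% chance of a catastrophic outage, 10M at risk
# without a backup, 0.5M residual loss with one, 50k annual backup cost.
print(backup_value(0.02, 10_000_000, 500_000, 50_000))
```

A positive result suggests the backup pays for itself under these assumptions; real estimates would use a distribution of failure scenarios rather than a single point.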

| Metric | Description | Systemic Relevance |
|---|---|---|
| RPO | Recovery Point Objective | Determines the maximum acceptable data loss. |
| RTO | Recovery Time Objective | Defines the duration allowed for service restoration. |
| Integrity | State Consistency | Ensures recovered data matches the canonical chain. |

The effectiveness of a backup protocol is inversely related to its recovery time: the longer a venue remains offline, the greater the potential for losses to spread to connected markets.
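To make RPO and RTO concrete, the sketch below checks a hypothetical snapshot schedule against both targets. It assumes worst-case data loss equals the snapshot interval plus replication lag, and that recovery time is dominated by downloading the latest snapshot and replaying events recorded after it; the `BackupPlan` fields and all figures are illustrative assumptions, not measured values.

```python
from dataclasses import dataclass


@dataclass
class BackupPlan:
    snapshot_interval_s: float  # time between full state snapshots
    replication_lag_s: float    # delay before a snapshot reaches off-site storage
    snapshot_size_gb: float     # size of one snapshot
    restore_gbps: float         # restore throughput, gigabytes per second
    replay_events: int          # events to replay after loading the snapshot
    replay_rate: float          # events replayed per second


def worst_case_data_loss_s(plan: BackupPlan) -> float:
    # Failing just before the newest snapshot finishes replicating falls back
    # to the previous one: a full interval plus the replication lag is lost.
    return plan.snapshot_interval_s + plan.replication_lag_s


def estimated_restore_s(plan: BackupPlan) -> float:
    download = plan.snapshot_size_gb / plan.restore_gbps
    replay = plan.replay_events / plan.replay_rate
    return download + replay


def meets_objectives(plan: BackupPlan, rpo_s: float, rto_s: float) -> bool:
    return worst_case_data_loss_s(plan) <= rpo_s and estimated_restore_s(plan) <= rto_s


plan = BackupPlan(snapshot_interval_s=60, replication_lag_s=5, snapshot_size_gb=20,
                  restore_gbps=0.5, replay_events=120_000, replay_rate=10_000)
print(worst_case_data_loss_s(plan), estimated_restore_s(plan))
print(meets_objectives(plan, rpo_s=120, rto_s=300))
```

Tightening the RPO here means snapshotting more often, which in turn raises storage and replication load; the model makes that trade-off explicit.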

Systems must account for the asynchronous nature of blockchain consensus. When the network partitions, the backup must reconcile local order flow with the global ledger to prevent double-spending or erroneous margin liquidations. This requires cryptographic verification of snapshots, so that a restored node can confirm its state matches what the canonical chain attests to.
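The verify-then-reconcile step can be sketched as follows. The sketch assumes, hypothetically, that each snapshot is serialized as canonical JSON and that its SHA-256 digest was committed somewhere trusted (for example, on-chain). A snapshot is accepted only if its digest matches that commitment; locally recorded fills that the ledger has already settled are then dropped so they are not applied twice. The data layout and function names are illustrative, not any protocol's actual format.

```python
import hashlib
import json


def snapshot_digest(snapshot: dict) -> str:
    # Canonical serialization (sorted keys, no whitespace) so identical
    # state always produces the same digest.
    encoded = json.dumps(snapshot, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).hexdigest()


def verify_snapshot(snapshot: dict, committed_digest: str) -> bool:
    return snapshot_digest(snapshot) == committed_digest


def reconcile(local_fills: list[dict], settled_ids: set[str]) -> list[dict]:
    # Keep only fills the global ledger has not already settled, so a
    # restored node never re-applies a trade.
    return [fill for fill in local_fills if fill["id"] not in settled_ids]


def restore(snapshot: dict, committed_digest: str,
            local_fills: list[dict], settled_ids: set[str]) -> list[dict]:
    if not verify_snapshot(snapshot, committed_digest):
        raise ValueError("snapshot digest does not match commitment; refusing to restore")
    return reconcile(local_fills, settled_ids)
```

Refusing to restore on a digest mismatch is deliberate: recovering from a corrupted or tampered snapshot could trigger exactly the erroneous liquidations the backup is meant to prevent.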

Approach

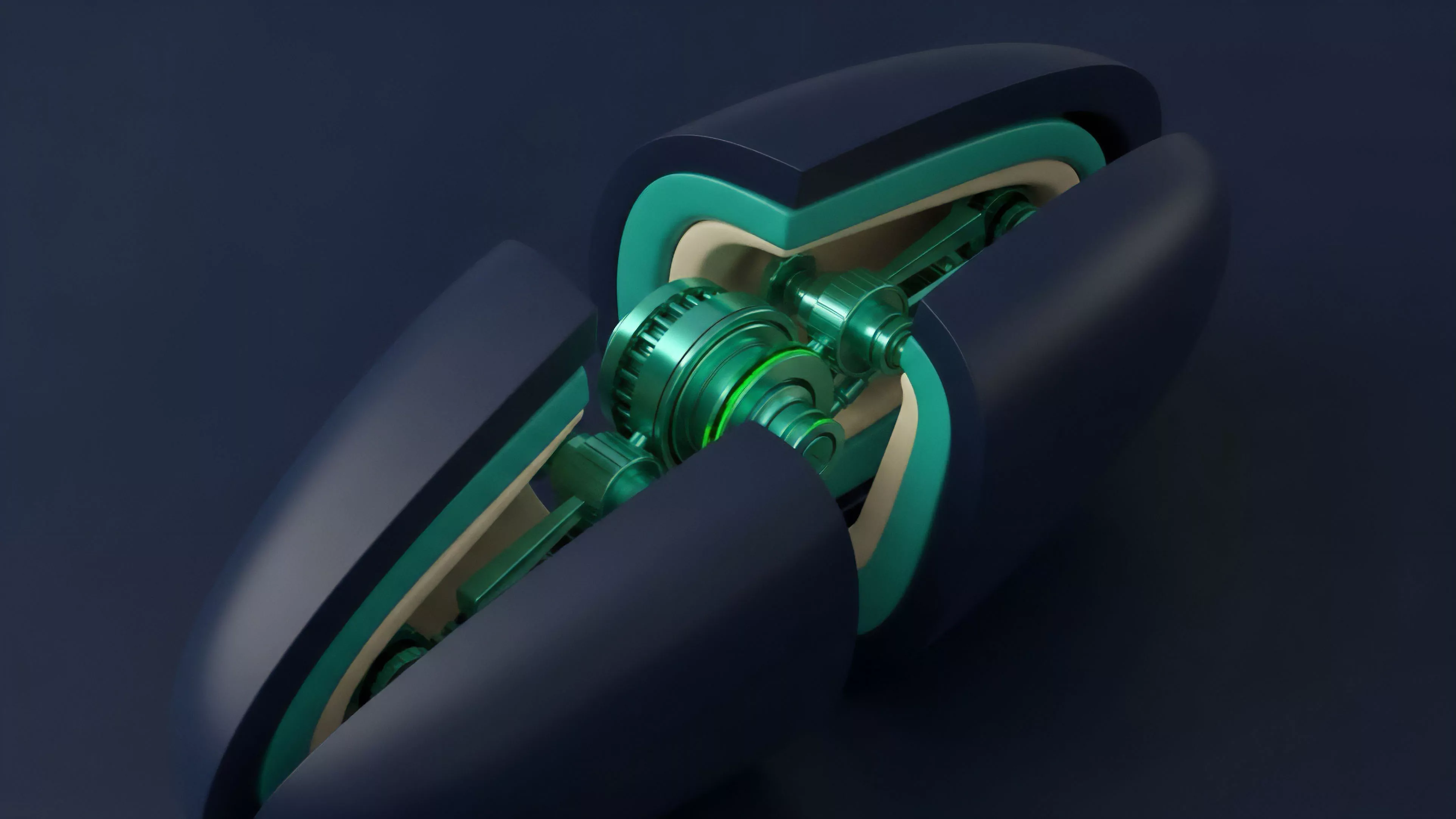

Current implementation strategies for Data Backup Procedures emphasize automation and zero-trust architectures.

Market makers and protocol operators now deploy multi-layered solutions that decouple data storage from execution logic, ensuring that a compromise in one layer does not propagate to the recovery environment.

- Automated snapshotting captures the full state of derivative order books at high frequency.

- Sharded storage distributes backup fragments across disparate geographical jurisdictions.

- Threshold cryptography allows for the reconstruction of access credentials without exposing a single master key.
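Threshold reconstruction of credentials is often illustrated with Shamir's secret sharing, sketched below: an integer secret is split into `n` shares over a prime field so that any `k` of them recover it, while fewer than `k` reveal nothing about it. This is a teaching example under stated assumptions: the prime, the function names, and the use of Python's `secrets` module are choices made here. Note that Shamir reconstruction does assemble the key in one place; threshold-signature schemes avoid even that, and production systems would rely on audited libraries.

```python
import secrets

# Mersenne prime 2^521 - 1, comfortably larger than a 256-bit key.
PRIME = 2**521 - 1


def split_secret(secret: int, k: int, n: int) -> list[tuple[int, int]]:
    """Split secret into n shares; any k of them reconstruct it."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret out of range")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    # Random polynomial of degree k-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(k - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule evaluation at x
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares: list[tuple[int, int]]) -> int:
    """Lagrange interpolation of the polynomial at x = 0 over GF(PRIME)."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


key = secrets.randbits(256)
shares = split_secret(key, k=3, n=5)
assert recover_secret([shares[0], shares[2], shares[4]]) == key
```

In a 3-of-5 arrangement, the five shares could be held in different jurisdictions so that losing or compromising up to two of them neither destroys nor leaks the credential.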

This approach mitigates the risks associated with centralized cloud storage by utilizing decentralized storage protocols. By distributing the data across independent providers, operators ensure that the restoration process remains resilient even under adversarial network conditions or targeted denial-of-service attacks.
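The resilience argument can be checked with a small placement model. The sketch assumes, hypothetically, that a snapshot is cut into chunks and each chunk is copied to `replicas` distinct storage regions chosen round-robin; restoration succeeds as long as every chunk keeps at least one reachable replica. Region names and the placement rule are illustrative, and real decentralized storage networks use their own placement logic, often with erasure coding rather than plain replication.

```python
def place_chunks(num_chunks: int, regions: list[str],
                 replicas: int) -> dict[int, list[str]]:
    """Assign each chunk to `replicas` distinct regions, rotating the start region."""
    if replicas > len(regions):
        raise ValueError("cannot place more replicas than there are regions")
    placement = {}
    for chunk in range(num_chunks):
        placement[chunk] = [regions[(chunk + i) % len(regions)] for i in range(replicas)]
    return placement


def restorable(placement: dict[int, list[str]], offline: set[str]) -> bool:
    """True if every chunk has at least one replica outside the offline regions."""
    return all(any(region not in offline for region in hosts)
               for hosts in placement.values())


regions = ["eu-west", "us-east", "ap-south"]
placement = place_chunks(6, regions, replicas=2)
print(restorable(placement, offline={"us-east"}))
print(restorable(placement, offline={"us-east", "eu-west"}))
```

With three regions and two replicas, any single region can go offline without blocking restoration, but two simultaneous outages can strand chunks; raising the replica count or switching to erasure coding trades storage cost for tolerance of more failures.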

Evolution

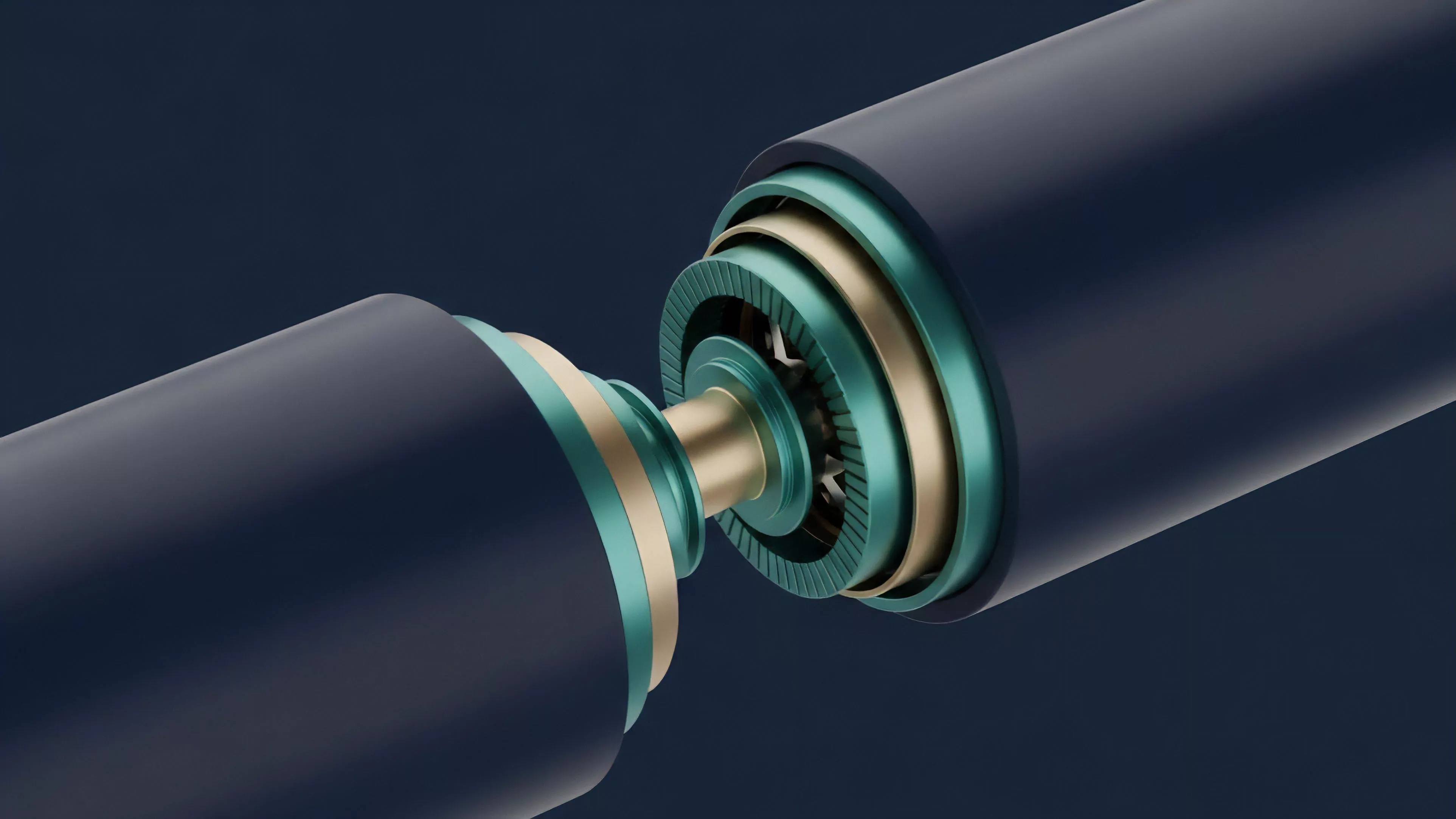

The trajectory of Data Backup Procedures has moved from simple, static storage to dynamic, protocol-integrated resilience. Early methods treated backups as a secondary, offline concern.

Today, the integration of state-syncing mechanisms directly into consensus layers represents a major shift in architecture.

Evolution in backup design centers on the transition from static offline archives to live, protocol-aware state synchronization.

Modern derivative protocols now utilize verifiable state proofs, allowing clients to reconstruct the order book independently of the primary interface. This advancement effectively removes the dependency on a single frontend provider, enhancing the overall censorship resistance of the financial system.
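Verifiable state proofs commonly take the form of Merkle inclusion proofs. The sketch below builds a SHA-256 Merkle tree over serialized order-book entries and lets a client check that one entry belongs to a published root without trusting the frontend that served it. The leaf and node prefixes, the rule of pairing the last node with itself on odd levels, and the function names are assumptions for illustration, not any specific protocol's proof format.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Return every tree level, from hashed leaves up to the single root."""
    level = [h(b"\x00" + leaf) for leaf in leaves]  # 0x00 prefix marks leaves
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]  # odd level: pair last node with itself
        level = [h(b"\x01" + level[i] + level[i + 1])  # 0x01 prefix marks nodes
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True if the sibling sits on the right."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # node was paired with itself
        proof.append((level[sibling], index % 2 == 0))
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_right in proof:
        if sibling_is_right:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
    return node == root
```

A client holding only the published root can check any entry against a proof of logarithmic size, which is what allows the order book to be reconstructed without relying on a single frontend provider.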

| Era | Focus | Constraint |
|---|---|---|
| Legacy | Manual Key Storage | High user error risk |
| Intermediate | Centralized Cloud Snapshots | Single point of failure |
| Advanced | On-chain State Proofs | High computational overhead |

Horizon

Future developments in Data Backup Procedures will likely incorporate zero-knowledge proofs to enable privacy-preserving state verification. This would allow market participants to prove the validity of their margin positions and account history without revealing the underlying transaction data to unauthorized parties.

Furthermore, autonomous, AI-driven recovery agents may reduce the human intervention required during system failures. Such agents would monitor network health and trigger automatic re-synchronization, helping derivative markets maintain liquidity even when individual infrastructure nodes falter. This raises an open question: what happens when automated recovery acts faster than humans can intervene in decentralized margin liquidation events?