Essence

Data Availability constitutes the structural guarantee that the transaction data required to verify a block is accessible to all network participants. In the context of decentralized derivatives, this is the bedrock of trustless settlement. Without verifiable access to the underlying state, participants cannot confirm the validity of price feeds, liquidation thresholds, or collateralization ratios.

The system relies on the assumption that any node can reconstruct the global state to audit the integrity of the ledger.

Data availability ensures that all network participants can verify transaction legitimacy by accessing the underlying data necessary for state reconstruction.

When this property is compromised, the protocol faces a catastrophic failure of transparency. Derivatives, which derive their value from underlying assets, depend on precise state synchronization. If a sequencer or validator withholds data, the settlement of options or futures contracts becomes unverifiable.

Market participants then cannot determine their actual risk exposure or take the defensive actions it calls for.

Origin

The concept emerged from the scalability constraints inherent in monolithic blockchain architectures. Early distributed ledger designs mandated that every node process every transaction, which created a bottleneck as network activity increased. To resolve this, researchers proposed modular architectures where the execution, settlement, and data storage layers operate independently.

- Sharding: Initial proposals to split the network into smaller segments necessitated a mechanism to ensure data remained available across partitions.

- Fraud Proofs: These mechanisms allow light clients to detect invalid state transitions without downloading the entire chain, provided the data is available for challenge.

- Data Availability Sampling: This technique enables nodes to verify that data exists by checking small, random portions of the total dataset, drastically reducing bandwidth requirements.

These developments shifted the focus from processing every transaction to verifying that the data behind those transactions is accessible. This transition marks the evolution of blockchain design from simple payment networks to complex financial settlement layers capable of supporting high-frequency derivative trading.

Theory

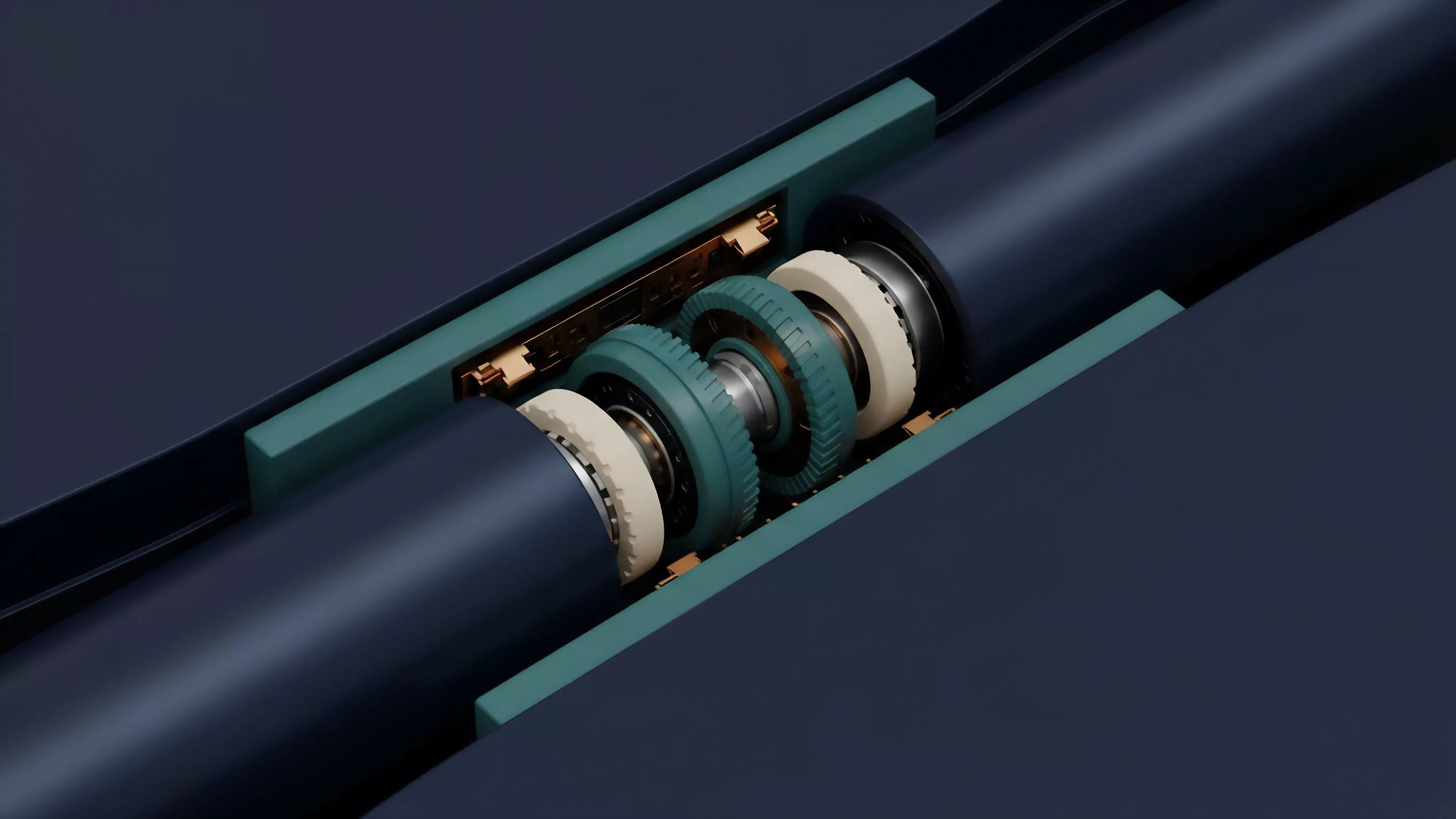

Data availability guarantees are analyzed under the assumption that some participants are adversarial. Protocols employ erasure coding to expand the original data into a larger set of redundant fragments, so that the entire dataset can be reconstructed even if a significant portion of the fragments is missing. With a rate-1/2 Reed-Solomon code, for example, any half of the fragments is enough to recover the whole.
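
The reconstruction property can be illustrated with a minimal sketch, assuming a toy Reed-Solomon-style code over a prime field. The modulus `P` and the helper names `encode` and `decode` are illustrative choices, not taken from any particular protocol; production systems use optimized codes and field sizes. The data symbols are treated as values of a polynomial, and extra fragments are further evaluations of that same polynomial:

```python
P = 2**31 - 1  # prime modulus of the toy field (an assumption for this sketch)


def _lagrange_eval(points, x):
    """Evaluate at x the unique polynomial through `points`, working mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+).
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n):
    """Extend k data symbols (ints in [0, P)) to n fragments (x, y).

    Fragments 0..k-1 carry the original symbols; the rest are redundancy.
    """
    k = len(data)
    points = list(enumerate(data))
    return [(x, data[x] if x < k else _lagrange_eval(points, x)) for x in range(n)]


def decode(fragments, k):
    """Recover the k original symbols from any k surviving fragments."""
    if len(fragments) < k:
        raise ValueError("not enough fragments to reconstruct")
    points = fragments[:k]
    return [_lagrange_eval(points, x) for x in range(k)]


data = [5, 17, 42, 99]
fragments = encode(data, 8)          # rate 1/2: 4 symbols become 8 fragments
survivors = fragments[4:]            # all four original fragments are lost
recovered = decode(survivors, len(data))
```

Here `recovered` equals `data` even though only the redundant half of the fragments survived, which is the property that forces an adversary to withhold a large share of the fragments rather than a single one.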

Quantitatively, the probability that withheld data goes undetected decreases exponentially with the number of independent random samples drawn, and therefore with the number of sampling nodes.
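
The exponential decay can be made concrete with a short sketch under stated assumptions: a rate-1/2 code, so an adversary must withhold more than half of the fragments to block reconstruction, and uniform independent samples, so each one succeeds with probability at most 0.5. The function names and the default `available_fraction` are hypothetical:

```python
import math


def undetected_probability(samples: int, available_fraction: float = 0.5) -> float:
    """Upper bound on the chance that every sample lands on an available fragment.

    Assumes independent, uniformly random samples (sampling with replacement).
    """
    if samples < 0 or not 0.0 < available_fraction < 1.0:
        raise ValueError("need samples >= 0 and 0 < available_fraction < 1")
    return available_fraction ** samples


def samples_needed(target: float, available_fraction: float = 0.5) -> int:
    """Smallest sample count that pushes the bound to `target` or below."""
    if not 0.0 < target < 1.0 or not 0.0 < available_fraction < 1.0:
        raise ValueError("target and available_fraction must lie in (0, 1)")
    return math.ceil(math.log(target) / math.log(available_fraction))
```

Under these assumptions, `samples_needed(1e-9)` returns 30: thirty samples per client already bound the chance of being fooled below one in a billion, which is why sampling needs so little bandwidth.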

| Mechanism | Function | Risk Factor |
| --- | --- | --- |
| Erasure Coding | Redundancy generation | Data reconstruction cost |
| Sampling | Verification probability | Node network latency |
| Commitments | Integrity verification | Cryptographic overhead |
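
To illustrate the Commitments row, the following is a minimal sketch of checking one sampled fragment against a published commitment. It assumes a plain SHA-256 Merkle tree; real data availability layers may use other schemes, such as polynomial commitments. The helper names `merkle_root_and_proof` and `verify_sample` are hypothetical:

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves, index):
    """Return (root, proof) for leaves[index]; `leaves` must be non-empty.

    The proof is the list of sibling hashes from the leaf level upward.
    """
    level = [_h(b"\x00" + leaf) for leaf in leaves]  # domain-separated leaf hashes
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        proof.append(level[index ^ 1])
        level = [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_sample(root, leaf, index, proof):
    """Check that `leaf` sits at position `index` under the committed `root`."""
    node = _h(b"\x00" + leaf)
    for sibling in proof:
        if index % 2 == 0:
            node = _h(b"\x01" + node + sibling)
        else:
            node = _h(b"\x01" + sibling + node)
        index //= 2
    return node == root


fragments = [b"fragment-%d" % i for i in range(5)]
root, proof = merkle_root_and_proof(fragments, 3)
ok = verify_sample(root, fragments[3], 3, proof)
```

A sampler that holds only `root` can accept or reject a returned fragment without seeing the rest of the data; the cryptographic overhead noted in the table is the hashing and the proof bytes carried with each sample.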

The interaction between these components dictates the security bound of the protocol. If the cost of withholding data exceeds the potential gain from malicious activity, the system achieves an equilibrium of availability. However, market participants often ignore the tail risks associated with sequencer failure or malicious data withholding, assuming that the protocol remains perpetually accessible.
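
The equilibrium condition can be written as a simple inequality. The sketch below assumes a hypothetical model in which a withholding party risks losing a slashable stake with some detection probability; none of these parameters comes from a specific protocol, and the model ignores reputational and legal costs:

```python
def withholding_is_deterred(expected_gain: float,
                            slashable_stake: float,
                            detection_probability: float) -> bool:
    """True when the expected penalty for withholding exceeds the expected gain."""
    if not 0.0 <= detection_probability <= 1.0:
        raise ValueError("detection_probability must lie in [0, 1]")
    expected_penalty = detection_probability * slashable_stake
    return expected_penalty > expected_gain
```

In this toy model, raising the detection probability through more sampling lowers the stake required to deter an attack of a given size, which is one way the components in the table interact.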

The most stable systems tend to be those in which the cost of verification is distributed across the entire participant set, so that no single entity can monopolize the truth.

The security of decentralized derivatives relies on erasure coding and sampling to ensure that the cost of withholding data remains prohibitive for adversarial actors.

Approach

Modern protocols utilize specialized layers to handle the broadcast and storage of transaction data. These layers decouple the consensus on data existence from the execution of derivative smart contracts. By offloading the data storage burden, execution environments maintain higher throughput without sacrificing the ability of independent auditors to verify the state.

- Sequencer Decentralization: Distributing the responsibility for transaction ordering to prevent data withholding by a single entity.

- Proof of Custody: Implementing cryptographic proofs that demonstrate specific nodes are storing the required data fragments.

- Verifiable Delay Functions: Utilizing functions that take a minimum amount of sequential time to compute, so that a publication window for the data can be enforced before the corresponding state transition is finalized.
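
The custody idea in the list above can be sketched as a challenge-response exchange. This is a deliberately simplified stand-in and not an actual proof-of-custody construction: it assumes the checker can obtain the fragment to recompute the answer, and the function names are hypothetical. A fresh random challenge prevents a node from answering with a value it computed before discarding the data:

```python
import hashlib
import secrets


def new_challenge() -> bytes:
    """Fresh random nonce issued by the challenger for each audit round."""
    return secrets.token_bytes(32)


def custody_response(fragment: bytes, challenge: bytes) -> bytes:
    """Answer that can only be computed by a party holding the fragment now."""
    return hashlib.sha256(challenge + fragment).digest()


def check_response(fragment: bytes, challenge: bytes, response: bytes) -> bool:
    """Recompute the expected answer and compare in constant time."""
    expected = custody_response(fragment, challenge)
    return secrets.compare_digest(expected, response)


fragment = b"erasure-coded fragment bytes"
challenge = new_challenge()
honest = check_response(fragment, challenge, custody_response(fragment, challenge))
```

Because the challenge changes every round, a response replayed from an earlier round fails the check, so a node has to keep the fragment to keep passing audits.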

The current landscape is shifting toward dedicated data availability committees and specialized infrastructure providers. These entities keep the state auditable, but relying on them introduces a dependency on their continued operation. Participants must evaluate the robustness of these layers as rigorously as they analyze the underlying option pricing models.

Evolution

The trajectory of this domain has moved from simple on-chain storage to sophisticated, multi-layered consensus protocols. Initially, the focus remained on maximizing block space efficiency, but the rise of complex derivative protocols necessitated a higher standard of state integrity. The industry now recognizes that data availability is not an optional feature but a core requirement for the long-term survival of decentralized finance.

Data availability infrastructure has evolved from simple on-chain storage into specialized, decentralized layers that underpin the integrity of complex financial settlement.

As trading venues move toward higher leverage and more complex instruments, the requirements for data accessibility grow more stringent. The integration of zero-knowledge proofs has further refined the approach, allowing succinct verification of computations over large datasets. This progression points to a future in which the performance gap between centralized and decentralized venues continues to shrink, provided the underlying data remains accessible to all.

Horizon

The next phase involves the development of self-healing networks that automatically redistribute data fragments when nodes go offline. This will likely involve advanced game-theoretic incentive structures that reward nodes for long-term data preservation. As these systems mature, the reliance on centralized sequencers will diminish, paving the way for truly permissionless derivative markets.

| Development Phase | Technical Focus | Financial Impact |
| --- | --- | --- |
| Phase One | Sampling efficiency | Reduced settlement latency |
| Phase Two | Incentivized storage | Increased collateral reliability |
| Phase Three | Automated self-healing | Systemic resilience enhancement |

Ultimately, the objective is to create a financial environment where the risk of state unavailability is eliminated by design. This will allow for the proliferation of sophisticated derivatives that currently remain constrained by the limitations of existing consensus mechanisms. The success of these systems depends on the ability to align the incentives of validators with the necessity of maintaining a transparent, verifiable record of all market activity.