Essence

Data Availability Costs (DAC) represent the systemic friction incurred by decentralized applications when accessing and verifying external data sources necessary for contract execution. For derivatives protocols, this cost is not simply a fee; it is a fundamental constraint that dictates capital efficiency, liquidation thresholds, and overall systemic risk. The core challenge lies in the inherent isolation of smart contracts from the outside world.

To settle an option contract, calculate margin requirements, or execute a liquidation, the protocol requires a real-time, tamper-proof price feed. The expense associated with obtaining this feed, verifying its integrity on-chain, and ensuring its timeliness constitutes the primary component of the Data Availability Cost. This cost structure forces protocols to make critical design trade-offs.

If data updates are too expensive, the protocol must either increase collateral requirements to buffer against stale data risk or reduce the frequency of updates, which introduces significant latency. Latency in data feeds directly impacts the accuracy of option pricing models and creates windows of opportunity for front-running or malicious arbitrage. The Data Availability Cost therefore functions as a direct tax on financial precision within a decentralized system.

It is a cost that must be paid in one of three forms: direct gas fees, reduced capital efficiency through overcollateralization, or increased systemic risk.


Origin

The concept of Data Availability Costs emerged from the “oracle problem” in early decentralized finance. When protocols first attempted to build derivatives on low-throughput, high-cost Layer 1 blockchains, particularly Ethereum during periods of high network congestion, the expense of updating price feeds became prohibitive. Early solutions relied on centralized or highly consolidated oracle networks, which reduced costs but introduced single points of failure.

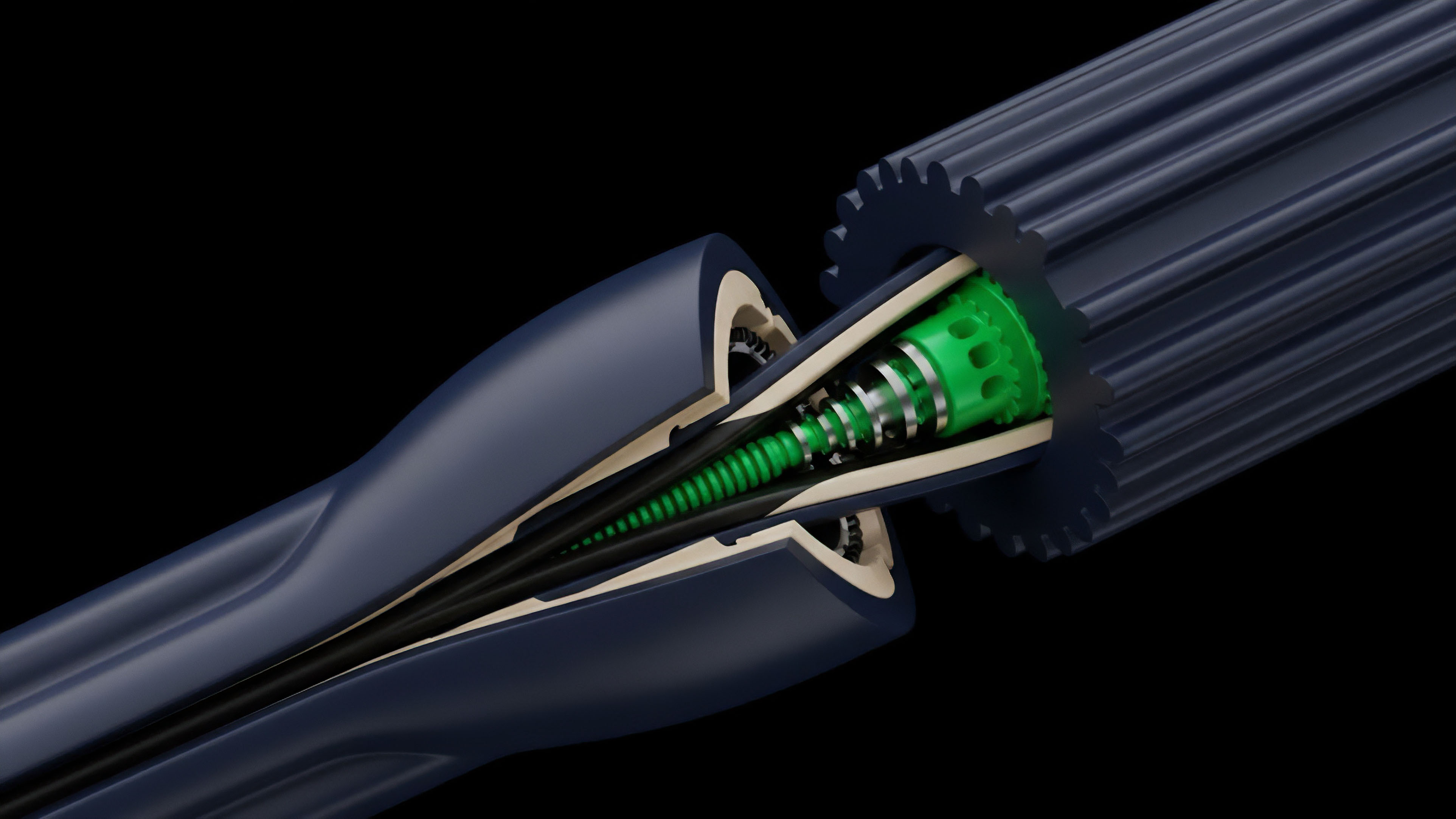

The initial design of decentralized oracle networks, while enhancing security through redundancy, significantly increased the cost of data updates. Each data point required multiple validators to submit transactions on-chain, escalating gas fees. The origin of DAC as a specific financial constraint can be traced to the development of complex derivatives, such as perpetual futures and options, where near-instantaneous price updates are essential for risk management.

The high cost of these updates directly impacted the viability of low-collateral products. Protocols designed to handle options faced a dilemma: either pay high costs to maintain tight collateral ratios or accept higher latency and force users to overcollateralize. This led to the architectural separation of data availability from execution logic, a necessary step for scaling derivatives.

Four factors determine the size of DAC for a given protocol:

- Oracle Network Architecture: The design choice of how many nodes aggregate data, how often they update, and whether they operate on-chain or off-chain.

- Transaction Cost Dynamics: The underlying gas costs of the base layer blockchain, which directly determine the price of on-chain data verification.

- Data Latency Requirements: The specific needs of the financial instrument. Options with short expirations require high-frequency updates, increasing DAC.

- Security Model: The economic cost of ensuring data integrity, which involves staking and slashing mechanisms that impose indirect costs on data providers.
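As a rough illustration of how the first two factors combine, the sketch below multiplies reporter count, gas per submission, gas price, and update frequency into a direct on-chain cost. The class name, field names, and every example number are hypothetical and are not taken from any specific oracle network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OracleCostModel:
    """Hypothetical model of the direct on-chain cost of a push-style price feed."""
    nodes: int                # reporters that each submit one transaction per update
    gas_per_submission: int   # gas consumed by a single submission (assumed constant)
    gas_price_gwei: float     # base-layer gas price in gwei
    updates_per_day: int      # how often the feed is refreshed

    def cost_per_update_eth(self) -> float:
        # 1 gwei = 1e-9 ETH
        return self.nodes * self.gas_per_submission * self.gas_price_gwei * 1e-9

    def daily_cost_eth(self) -> float:
        return self.cost_per_update_eth() * self.updates_per_day


if __name__ == "__main__":
    hourly_feed = OracleCostModel(
        nodes=10, gas_per_submission=50_000, gas_price_gwei=100.0, updates_per_day=24
    )
    print(f"per update: {hourly_feed.cost_per_update_eth():.4f} ETH")
    print(f"per day:    {hourly_feed.daily_cost_eth():.4f} ETH")
```

The model ignores the indirect costs of the security model (staking and slashing), which would need a separate term.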

Theory

The impact of Data Availability Costs on derivatives pricing can be modeled as a data risk premium. In traditional quantitative finance, risk models account for market volatility, interest rate fluctuations, and counterparty risk. In decentralized finance, an additional variable must be introduced: the risk associated with data latency and availability.

This risk premium rises with the Data Availability Cost. When updates are expensive, protocols update less often, and the resulting latency leads to wider bid-ask spreads and less efficient pricing. From a quantitative perspective, high-DAC environments distort the implied volatility surface.

When data updates are infrequent, a protocol’s liquidation engine operates with stale information. This creates a risk profile where the protocol itself is exposed to sudden market movements between updates. To compensate, options market makers demand higher premiums for options with strikes near the current price (high gamma risk), effectively steepening the volatility skew.

The Black-Scholes model, which assumes continuous price observation, fails in a high-DAC environment. Protocols must instead adopt models that account for discrete data updates, often requiring higher capital buffers to mitigate the risk of price slippage between data points. The resulting cost is passed on to the end user through higher option premiums or higher capital requirements for selling options.
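One simple way to size the capital buffer for discrete updates is to scale volatility by the square root of the update interval. The sketch below assumes price moves between updates follow a driftless diffusion, so the typical move over an interval is the annualized volatility times the square root of the elapsed fraction of a year. The function name, the `z` multiplier, and the example inputs are illustrative assumptions, not a model taken from any particular protocol.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def staleness_buffer(annual_vol: float, update_interval_s: float, z: float = 3.0) -> float:
    """Extra collateral, as a fraction of notional, to cover price moves between updates.

    Assumes square-root-of-time scaling of volatility; `z` is how many standard
    deviations of movement the buffer should absorb (a hypothetical risk setting).
    """
    if annual_vol < 0 or update_interval_s < 0 or z < 0:
        raise ValueError("inputs must be non-negative")
    return z * annual_vol * math.sqrt(update_interval_s / SECONDS_PER_YEAR)


if __name__ == "__main__":
    for interval in (60, 3_600, 14_400):
        buffer = staleness_buffer(annual_vol=0.8, update_interval_s=interval)
        print(f"interval {interval:>6}s -> buffer {buffer:.2%}")
```

Under these assumptions, an asset with 80% annualized volatility and hourly updates needs a buffer of roughly 2.6% of notional, and quadrupling the interval doubles the buffer. Real price series have fat tails and jumps, so a production risk engine would likely need a more conservative rule.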

| Oracle Architecture | Data Availability Cost Impact | Latency Implications | Capital Efficiency Trade-off |
|---|---|---|---|
| Centralized Oracle | Low (Single submission) | High (Single point of failure) | High (Risk of manipulation) |
| Decentralized Oracle Network (L1) | High (Multiple on-chain submissions) | Low (High frequency possible) | Low (High cost, high security) |
| Off-chain Computation/L2 Rollup | Low (Data verification off-chain) | Medium (Data propagation delay) | High (Low cost, complex architecture) |

Approach

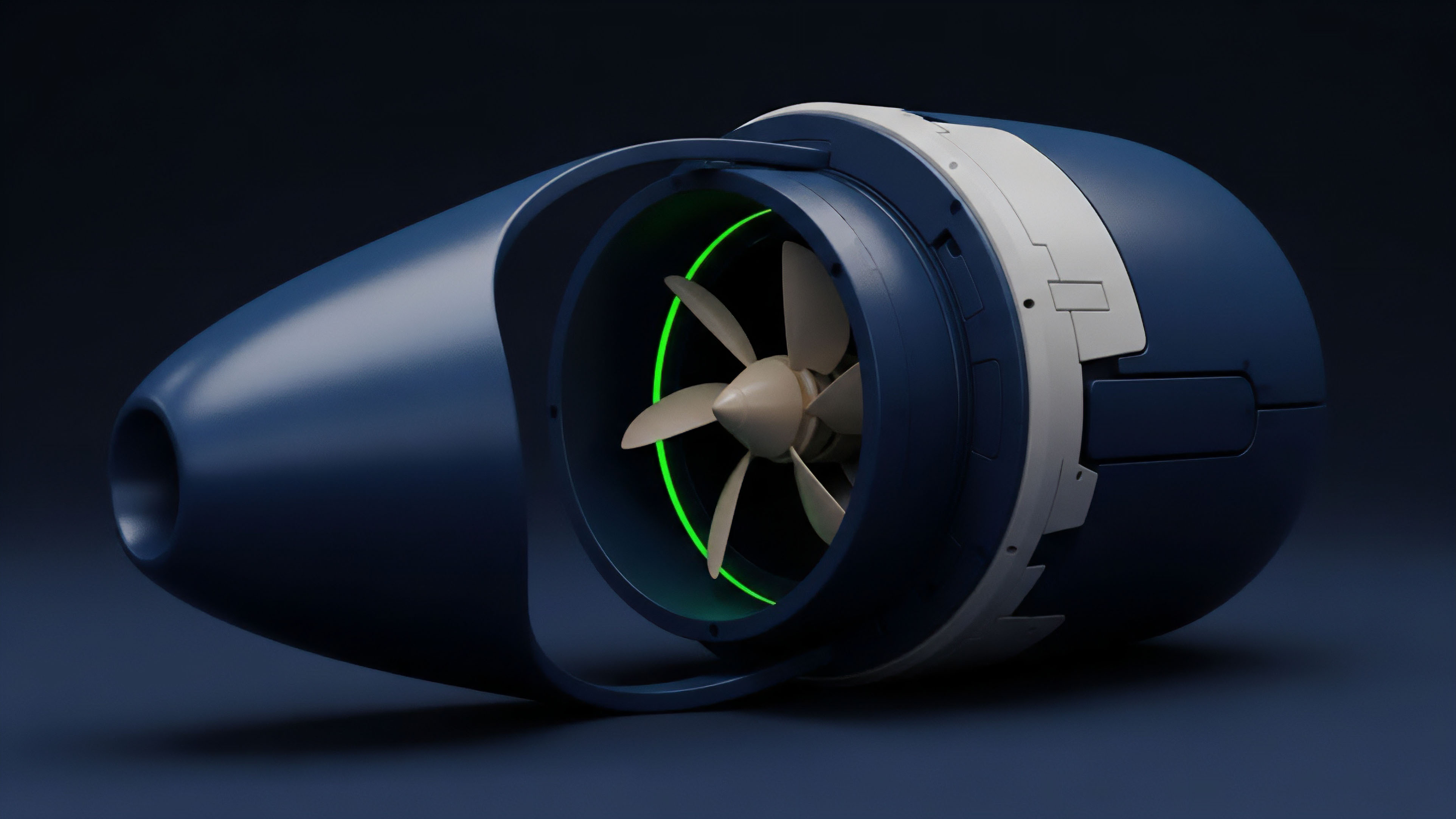

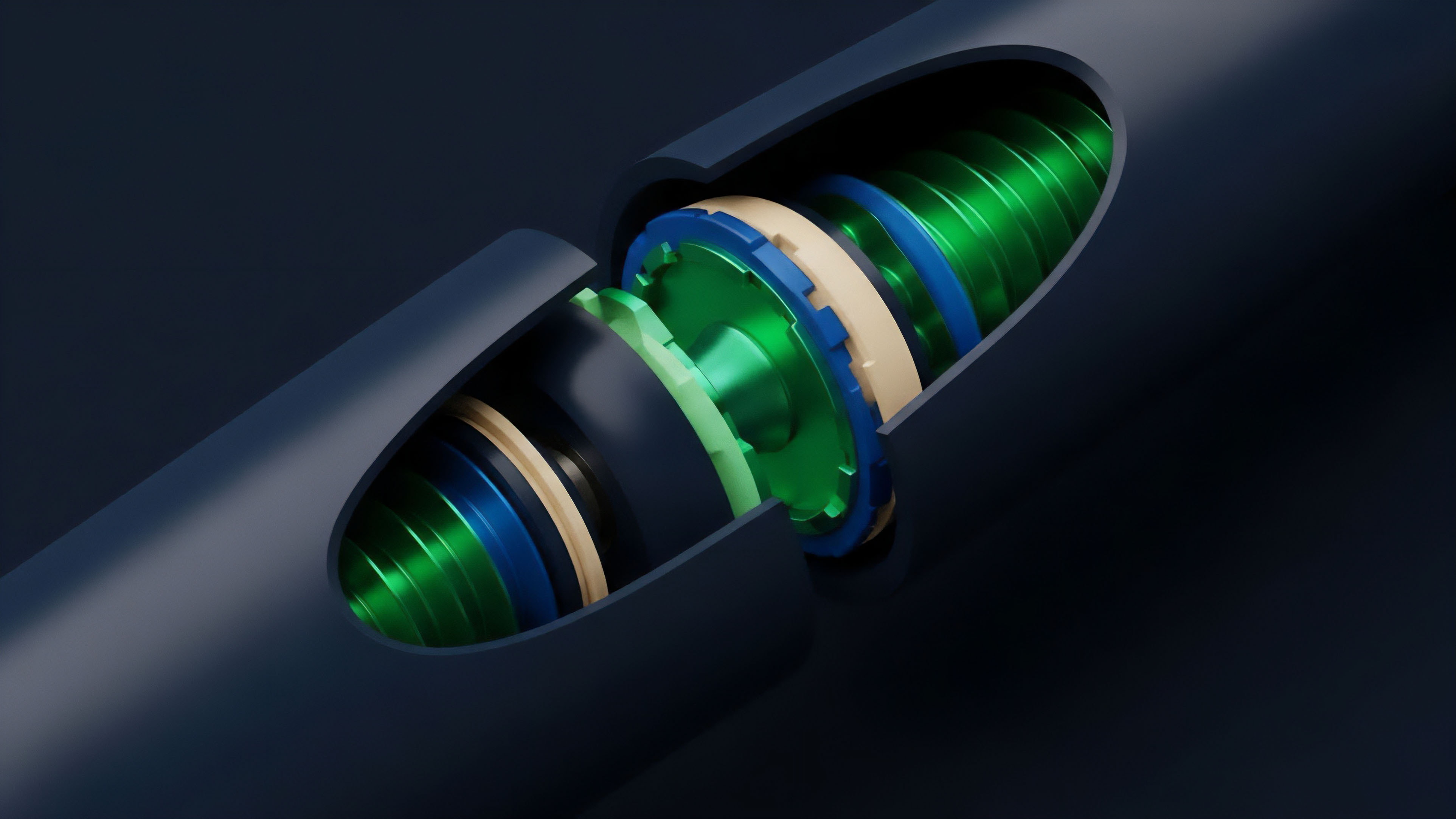

Protocols currently manage Data Availability Costs by separating the data verification process from the main execution layer. This approach involves utilizing Layer 2 scaling solutions, data compression techniques, and specialized data availability layers. The core strategy is to minimize the amount of data that must be posted on the high-cost base layer blockchain.

This allows for more frequent data updates while keeping costs manageable. A common approach involves a “pull-based” oracle model. Instead of having the oracle network continuously push data to the blockchain, the protocol allows users or liquidators to pull the data when needed, paying the gas cost at that specific time.

This shifts the cost from a continuous operational expense to an event-driven cost, improving capital efficiency for the protocol. However, this model introduces a new challenge: a potential race condition where multiple participants attempt to pull data and execute liquidations simultaneously, leading to high gas costs during periods of volatility.
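The pull-based flow can be sketched as a consumer that accepts a signed report from whoever needs a fresh price, checks it, and stores it. Everything here is a simplified assumption: the class and function names are hypothetical, an HMAC stands in for the signature scheme a real oracle would use, and `now` stands in for the block timestamp.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PriceReport:
    price: float
    timestamp: int      # seconds since epoch, set by the oracle off-chain
    signature: bytes


def sign_report(key: bytes, price: float, timestamp: int) -> bytes:
    """Hypothetical oracle-side signing; HMAC is a stand-in for a real signature."""
    message = f"{price!r}:{timestamp}".encode()
    return hmac.new(key, message, hashlib.sha256).digest()


class PullOracleConsumer:
    """Accepts reports pulled by users or liquidators instead of pushed by the oracle."""

    def __init__(self, oracle_key: bytes, max_age_s: int) -> None:
        self._key = oracle_key
        self._max_age_s = max_age_s
        self.last_price: Optional[float] = None
        self.last_timestamp: int = 0

    def submit(self, report: PriceReport, now: int) -> float:
        expected = sign_report(self._key, report.price, report.timestamp)
        if not hmac.compare_digest(expected, report.signature):
            raise ValueError("invalid signature")
        if report.timestamp > now:
            raise ValueError("report is dated in the future")
        if now - report.timestamp > self._max_age_s:
            raise ValueError("report is stale")
        # If another caller already delivered this report or a newer one,
        # keep the stored price rather than moving backwards in time.
        if self.last_price is not None and report.timestamp <= self.last_timestamp:
            return self.last_price
        self.last_price = report.price
        self.last_timestamp = report.timestamp
        return report.price
```

The timestamp comparison keeps the stored price from regressing when several callers submit at once, but each of them still pays for verification, which is the gas spike described above.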

- Optimistic Rollups: Protocols utilize optimistic rollups to post compressed data batches to the L1. The cost of data availability is amortized across many transactions, significantly reducing the cost per update. The trade-off is a delay in finality due to the challenge period.

- ZK-Rollups: Zero-knowledge proofs verify off-chain computations, ensuring their integrity without requiring every transaction to be re-executed on-chain. This reduces the data footprint on the L1, but the computational cost of generating the proofs is high.

- Data Availability Sampling (DAS): New data availability layers like Celestia allow protocols to post data to a dedicated chain instead of the L1 while still enabling light nodes to verify that the data is available by sampling small portions of each block. This promises to reduce DAC significantly by separating data availability from execution.
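The amortization effect of batching can be made concrete with a small calculation. The sketch below assumes a batch is posted as L1 calldata priced at 16 gas per non-zero byte (the EIP-2028 calldata price) plus the 21,000 gas base transaction cost. The function name, the `compression_ratio` parameter, and the example sizes are hypothetical, and blob-based posting would follow a different price schedule.

```python
def amortized_gas_per_update(
    n_updates: int,
    bytes_per_update: int,
    compression_ratio: float = 1.0,   # compressed size / raw size (assumed)
    gas_per_byte: int = 16,           # calldata price for a non-zero byte
    fixed_batch_gas: int = 21_000,    # base cost of the single L1 transaction
) -> float:
    """L1 gas attributable to one update when n_updates share one batch."""
    if n_updates <= 0:
        raise ValueError("n_updates must be positive")
    payload_bytes = n_updates * bytes_per_update * compression_ratio
    return (fixed_batch_gas + payload_bytes * gas_per_byte) / n_updates


if __name__ == "__main__":
    for n in (1, 10, 100):
        gas = amortized_gas_per_update(n_updates=n, bytes_per_update=100)
        print(f"{n:>3} updates per batch -> {gas:,.0f} gas each")
```

With these defaults, a 100-byte update posted alone costs 22,600 gas, while the same update in a batch of 100 costs 1,810 gas. The fixed cost shrinks toward zero per update, so compression of the payload becomes the dominant lever at large batch sizes.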

Data availability sampling, as implemented in data-centric architectures, aims to separate data verification from execution, drastically reducing the cost of posting data while maintaining security.

Evolution

The evolution of Data Availability Costs reflects a shift from a simple on-chain transaction cost to a complex architectural constraint. Initially, DAC was a direct function of L1 gas prices. Protocols reacted by overcollateralizing and limiting the complexity of their financial instruments.

The second phase of evolution involved the creation of specialized oracle networks and Layer 2 solutions, which reduced the cost per update but introduced new challenges related to data latency and liquidity fragmentation. The current stage of evolution focuses on data availability layers. The core idea is that a blockchain’s primary function does not need to be execution.

Instead, a blockchain can serve as a highly secure data availability layer, while execution occurs on separate, specialized rollups. This architectural shift, championed by Ethereum's rollup-centric roadmap and by projects like Celestia, fundamentally redefines the cost structure of data. By moving from a high-cost execution model to a low-cost data availability model, protocols can support more complex derivatives with higher capital efficiency.

This transition creates a new set of market dynamics. Arbitrage opportunities now exist not only between exchanges but also between data availability layers and execution environments. A high-speed, low-cost data layer allows for more frequent rebalancing of automated market maker (AMM) option pools, tightening spreads and increasing liquidity.

Conversely, a high-cost data environment forces AMMs to update prices less frequently, leading to higher impermanent loss for liquidity providers and less efficient pricing for traders.

Horizon

Looking ahead, the future of Data Availability Costs will be defined by two key developments: data availability sampling and the rise of data-centric architectures. The transition to a modular blockchain design where execution layers are decoupled from data availability layers will fundamentally alter the cost function for derivatives protocols.

This new paradigm will allow for extremely low-cost data updates, enabling new classes of options and derivatives that are currently economically unviable. The challenge shifts from simply paying for data to managing the integrity of data across different layers. In a modular world, protocols must ensure that data posted to the availability layer is correctly interpreted by the execution layer.

This introduces new risks related to data propagation delay and cross-chain communication. However, a significant reduction in DAC will allow protocols to reduce overcollateralization requirements, freeing up capital currently locked in buffers against data risk. This will significantly increase the capital efficiency of the decentralized derivatives market.

| Model Parameter | Current L1 Data Availability (EVM) | Future DAS Data Availability (Modular) |
|---|---|---|
| Data Cost per Byte | High (Proportional to gas price) | Low (Proportional to data layer cost) |
| Verification Method | Full execution by all nodes | Data sampling by light nodes |
| Liquidity Impact | Fragmentation, high spreads | Consolidation, low spreads |
| Capital Efficiency | Low (High overcollateralization) | High (Low overcollateralization) |

The transition to data availability sampling will fundamentally change the cost structure of data, enabling new derivatives and reducing capital requirements for market makers.
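The reason sampling by light nodes is cheap can be shown with a short probability calculation. The sketch below assumes each sample is an independent, uniformly random draw and that an attacker must withhold at least some fixed fraction of a block to make it unrecoverable. The function names and the 25% threshold in the example are illustrative assumptions; the actual threshold depends on the erasure-coding scheme a given layer uses.

```python
import math


def detection_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that at least one of `samples` random draws hits a withheld piece."""
    if not 0.0 < withheld_fraction < 1.0:
        raise ValueError("withheld_fraction must be strictly between 0 and 1")
    if samples < 0:
        raise ValueError("samples must be non-negative")
    return 1.0 - (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target_confidence: float) -> int:
    """Smallest number of samples whose detection probability meets the target."""
    if not 0.0 < withheld_fraction < 1.0:
        raise ValueError("withheld_fraction must be strictly between 0 and 1")
    if not 0.0 < target_confidence < 1.0:
        raise ValueError("target_confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - target_confidence) / math.log(1.0 - withheld_fraction))


if __name__ == "__main__":
    for confidence in (0.99, 0.999999):
        k = samples_needed(withheld_fraction=0.25, target_confidence=confidence)
        print(f"confidence {confidence}: {k} samples")
```

Under the 25% assumption, 17 samples are enough for 99% confidence, and the required count grows only logarithmically as the target tightens. That is why a light node can check availability without downloading the full block.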
