Essence

Data Availability functions as the structural bedrock for decentralized financial systems, ensuring that transaction records remain accessible and verifiable by all network participants. Without guaranteed access to this data, participants cannot independently reconstruct state or challenge invalid transitions, which leaves financial settlements insecure and open to censorship. Cost Optimization Strategies are the deliberate architectural choices that minimize the overhead of publishing this data to the primary settlement layer.

Data availability ensures verifiable transaction history while cost optimization minimizes the capital burden of network settlement.

The tension between these two forces dictates the scalability limits of decentralized exchanges and derivative platforms. Financial protocols must balance the requirement for absolute data transparency with the economic reality of block space scarcity. This trade-off is the primary determinant of transaction throughput and the ultimate feasibility of high-frequency decentralized trading.

Origin

The necessity for these strategies arose from the fundamental limitations of monolithic blockchain architectures, where every node validates every transaction.

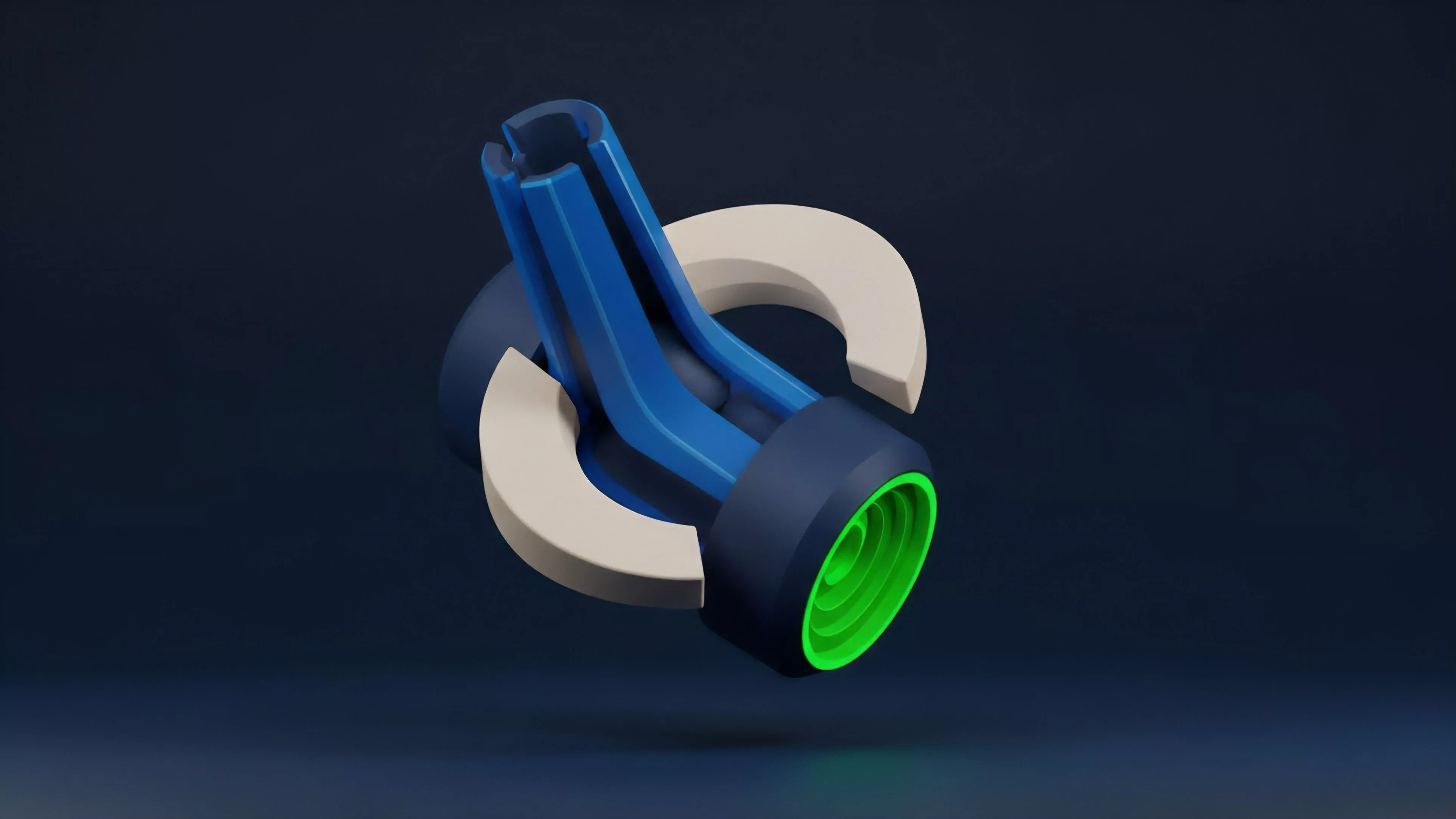

This design choice, while secure, creates a bottleneck that inflates transaction costs during periods of high market activity. Early decentralized finance participants encountered these friction points when simple swap operations became prohibitively expensive, leading to the development of modular blockchain stacks. The shift toward modularity separates execution, settlement, and data availability into distinct layers.

This separation allows specialized protocols to handle data publication more efficiently than general-purpose blockchains can. Developers found that decoupling data availability from execution could substantially reduce gas expenditure while preserving the underlying network's security guarantees, provided the data remains verifiably available to anyone who needs to reconstruct state.

| Architecture Type | Data Handling | Cost Impact |
| --- | --- | --- |
| Monolithic | Integrated | High |
| Modular | Decoupled | Low |

Theory

Protocol Physics dictates that the cost of data availability rises with the security of the layer that guarantees it. When a derivative protocol posts its state to a mainnet, it inherits that network's security but pays the full market rate for block space. Theoretical models suggest that optimizing this process requires moving data, or the work of verifying it, off the main execution path and relying on cryptographic proofs to maintain integrity.
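To make "the full market rate for block space" concrete, the sketch below prices raw bytes posted as Ethereum calldata. It assumes EIP-2028 rates (16 gas per nonzero byte, 4 gas per zero byte) and ignores later repricing such as EIP-7623's calldata floor; the function names, the 100 kB batch, and the 20 gwei base fee are illustrative assumptions, not figures from any particular protocol.

```python
# Minimal sketch of Ethereum calldata pricing, assuming EIP-2028 rates.
# Later repricing (e.g. EIP-7623's calldata floor) is deliberately ignored.
NONZERO_BYTE_GAS = 16
ZERO_BYTE_GAS = 4


def calldata_gas(data: bytes) -> int:
    """Gas charged for the data bytes alone (excludes the 21,000 base cost)."""
    zero_bytes = data.count(0)
    return zero_bytes * ZERO_BYTE_GAS + (len(data) - zero_bytes) * NONZERO_BYTE_GAS


def data_cost_wei(data: bytes, base_fee_wei: int) -> int:
    """Cost of publishing `data` at a given base fee, ignoring priority tips."""
    return calldata_gas(data) * base_fee_wei


# Hypothetical 100 kB batch in which half the bytes are zero.
batch = bytes([0x00, 0x01]) * 50_000
print(calldata_gas(batch))
print(data_cost_wei(batch, 20 * 10**9))  # at an assumed 20 gwei base fee
```

For this batch the data alone costs 1,000,000 gas, or 0.02 ETH at the assumed 20 gwei, which is the overhead the techniques below aim to shrink.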

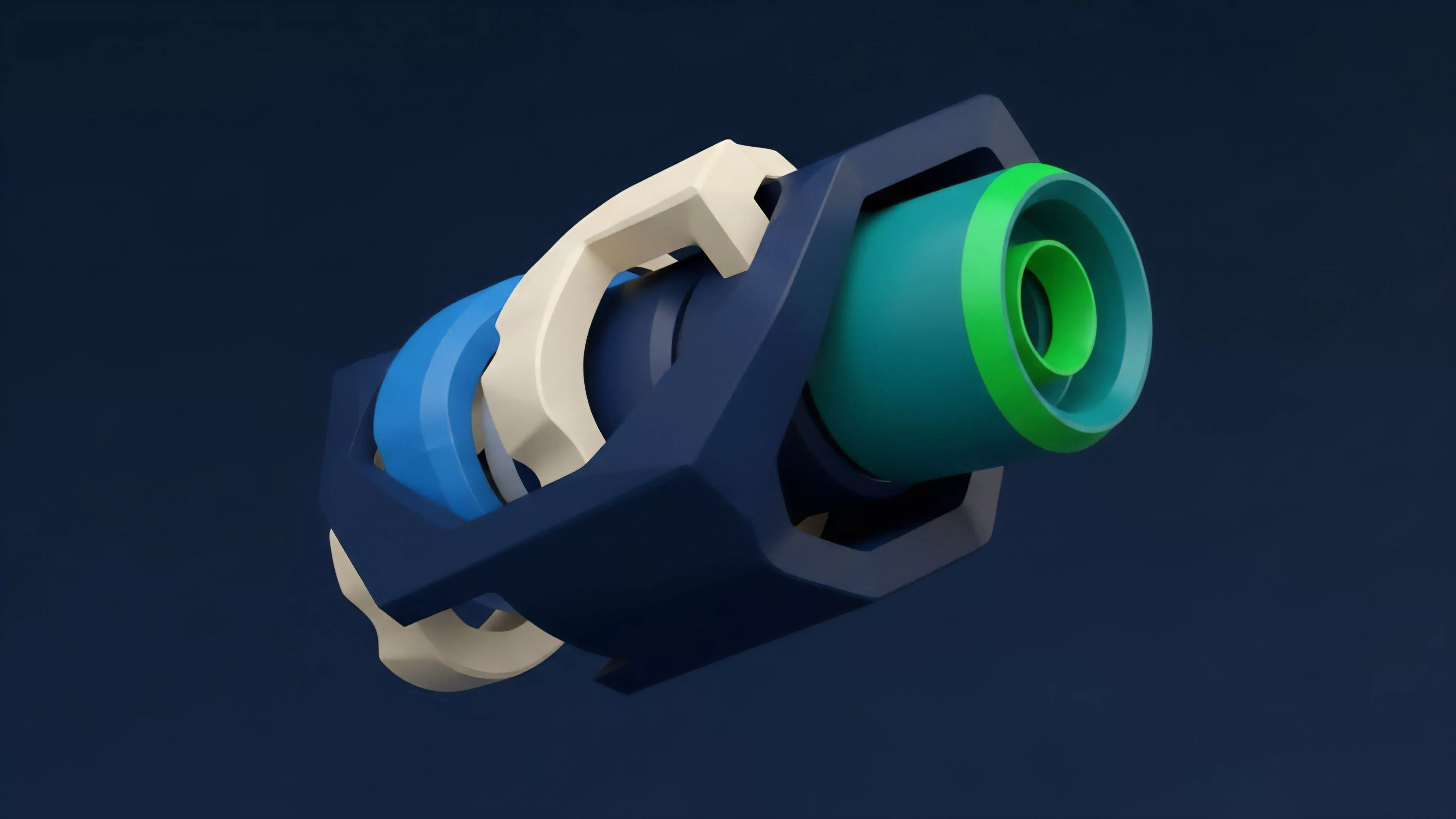

- Validity Proofs compress the verification of a large batch of state transitions into a small proof that the settlement layer can check cheaply, without re-executing every transaction.

- Data Availability Sampling enables nodes to confirm, with high probability, that data is present by downloading a few random pieces of an erasure-coded dataset rather than the whole thing, drastically lowering hardware requirements.

- Blob Storage provides a dedicated, temporary space for transaction data, priced in its own fee market and separated from the primary execution path to reduce congestion.
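The sampling argument can be made quantitative with a short sketch. It assumes samples are drawn uniformly at random with replacement and that the erasure code forces an adversary to withhold a fixed fraction of chunks before the data becomes unrecoverable. Roughly a quarter is a commonly cited figure for two-dimensional Reed-Solomon designs and is used here only as an example input; the function names are illustrative.

```python
import math


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Probability that all `samples` random queries hit available chunks,
    i.e. that this node fails to notice the withholding."""
    if not 0.0 <= withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in [0, 1]")
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, max_miss: float) -> int:
    """Smallest number of samples whose miss probability is at most `max_miss`."""
    if not (0.0 < withheld_fraction < 1.0 and 0.0 < max_miss < 1.0):
        raise ValueError("both arguments must lie strictly between 0 and 1")
    # Solve (1 - f)^k <= max_miss for k; both logarithms are negative.
    return math.ceil(math.log(max_miss) / math.log(1.0 - withheld_fraction))


# Assumed input: an adversary must withhold about 25% of the extended data
# to block reconstruction (typical of 2D Reed-Solomon designs).
k = samples_needed(0.25, 1e-6)
print(k, miss_probability(0.25, k))
```

Under these assumptions a single light node needs 49 samples to push its chance of being fooled below one in a million, and that number does not grow with the size of the block, which is why sampling scales where full downloads do not.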

This structural approach relies on Game Theory to incentivize nodes to store and serve data. Participants are compensated for maintaining availability, creating a competitive market for storage that drives costs downward. The security of this model depends on fraud proofs or validity proofs that reject invalid state transitions, together with sampling and penalty mechanisms that make withholding data detectable and costly.

Cryptographic proofs enable state verification at a fraction of the cost required for full on-chain data replication.

The interplay between these variables creates a feedback loop where lower costs attract more volume, which in turn demands more efficient data handling. One might view this as a digital equivalent to the transition from physical ledger entries to high-speed electronic clearinghouses, where the goal remains the reduction of settlement latency.

Approach

Modern decentralized derivative venues employ several tactical frameworks to stay profitable while ensuring platform solvency. These venues often use Layer 2 Rollups to bundle thousands of trades into one batch, committing its data together with a single state commitment (or validity proof) to the settlement layer.

This batching mechanism amortizes the fixed cost of each settlement transaction across every trade in the batch, effectively lowering the per-trade overhead. Another prevalent technique combines Off-Chain Order Books with on-chain settlement. This architecture permits high-frequency updates to pricing and margin status without requiring a blockchain transaction for every minor movement.

Only the final clearing and settlement events are broadcast to the network, significantly reducing the data footprint.
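Both ideas, settling only final outcomes and spreading fixed costs over a batch, can be sketched together. This assumes a hypothetical venue that settles each account's net position change rather than every individual fill; `Fill`, `net_positions`, `cost_per_trade`, and all cost figures are illustrative assumptions, and real venues differ in what they publish per batch.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Fill:
    """One off-chain match between a buyer and a seller (hypothetical schema)."""
    buyer: str
    seller: str
    size: int  # number of contracts


def net_positions(fills: list[Fill]) -> dict[str, int]:
    """Collapse many off-chain fills into one net position change per account."""
    deltas = defaultdict(int)
    for fill in fills:
        deltas[fill.buyer] += fill.size
        deltas[fill.seller] -= fill.size
    # Accounts that traded back to flat need no settlement record at all.
    return {account: delta for account, delta in deltas.items() if delta != 0}


def cost_per_trade(fixed_cost: float, cost_per_record: float,
                   records: int, trades: int) -> float:
    """Spread a batch's fixed overhead plus its per-record data cost over all trades."""
    return (fixed_cost + cost_per_record * records) / trades


# 300 hypothetical fills cycling among three accounts.
fills = [Fill("alice", "bob", 5), Fill("bob", "carol", 5), Fill("carol", "alice", 2)] * 100
settled = net_positions(fills)
per_trade = cost_per_trade(500_000, 2_000, records=len(settled), trades=len(fills))
print(settled, per_trade)
```

Here 300 off-chain fills collapse into two settlement records (bob ends flat), and an assumed fixed overhead of 500,000 gas plus 2,000 gas per record works out to 1,680 gas per trade.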

| Strategy | Mechanism | Primary Benefit |
| --- | --- | --- |
| Rollup Batching | Transaction Aggregation | Lower Gas Fees per Trade |
| State Channels | Direct Peer Updates, Settled On-Chain at Close | Near-Instant Off-Chain Updates |
| Data Compression | Compact Encoding of Batch Data | Storage Efficiency |
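Compression matters because published data is paid for by the byte. A minimal sketch using Python's standard `zlib` on a hypothetical batch of repetitive trade records shows the effect; zlib and the JSON record layout are stand-ins chosen for illustration, not the encoding any particular rollup uses.

```python
import json
import zlib


def encode_batch(trades: list[dict]) -> bytes:
    """Serialize trades as compact UTF-8 JSON (no whitespace, stable key order)."""
    return json.dumps(trades, separators=(",", ":"), sort_keys=True).encode("utf-8")


# Hypothetical batch of 1,000 similar perpetual-swap trades.
trades = [
    {"market": "ETH-PERP", "side": "buy" if i % 2 else "sell",
     "size": 1 + i % 3, "price": 3000 + i % 7}
    for i in range(1000)
]
raw = encode_batch(trades)
compressed = zlib.compress(raw, level=9)  # zlib stands in for a real codec
print(f"raw={len(raw)} bytes, compressed={len(compressed)} bytes")
print(f"ratio={len(compressed) / len(raw):.2%}")
```

Because trade records repeat the same field names and market identifiers, the compressed batch is a small fraction of the raw size; a compact binary schema would shrink it further before any general-purpose compressor runs.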

The implementation of these strategies demands rigorous attention to Smart Contract Security. Each layer of abstraction introduces potential exploit vectors, so auditors must verify that the proof-verification logic is sound and that the protocol's consensus rules actually enforce its data availability guarantees.

Evolution

The path toward current efficiency has been marked by a transition from basic on-chain logging to sophisticated, proof-based architectures. Early decentralized exchanges functioned as simple automated market makers that recorded every action directly on the ledger.

This was inherently unsustainable for complex financial instruments like options, whose Greeks and margin requirements must be recalculated continuously. The introduction of specialized Data Availability Layers transformed this landscape. These protocols provide a dedicated, decentralized marketplace for data, allowing execution layers to publish information independently of the settlement chain.

This development mirrors the evolution of traditional finance, where trading venues, clearing houses, and custodians operate as distinct, interconnected entities.

Decentralized data layers decouple execution from settlement, mirroring the modularity found in mature financial market structures.

We now observe a movement toward application-specific blockchains that optimize their entire stack for derivative trading. These environments integrate data availability and cost management at the protocol level, removing the need for external rollups and further streamlining the path from order execution to final settlement.

Horizon

Future developments will focus on the convergence of Zero-Knowledge Cryptography and decentralized storage to create near-zero-cost data availability. The next iteration of derivative platforms will likely use recursive proofs to aggregate proofs from multiple chains, creating a unified liquidity environment free of the current limitations of cross-chain bridges.

- Recursive SNARKs will permit verification of an entire chain's history of state transitions through a single, succinct cryptographic proof.

- Distributed Hash Tables will evolve to become the standard for persistent, censorship-resistant data retrieval in financial protocols.

- Automated Cost Arbitrage will allow protocols to dynamically route data to the cheapest available storage layer in real time.
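At its simplest, automated cost arbitrage is a constrained minimum over candidate layers. The sketch below is entirely hypothetical: the `DALayer` fields, layer names, prices, and limits are invented for illustration, and a production router would also have to weigh each layer's security assumptions, latency, and price volatility.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DALayer:
    """A candidate data availability layer (all names and numbers hypothetical)."""
    name: str
    price_per_kb: float   # quoted cost to publish one kilobyte
    retention_days: int   # how long the layer keeps data retrievable
    max_batch_kb: int     # largest batch accepted in one submission


def route_batch(layers: list[DALayer], batch_kb: int, min_retention_days: int) -> DALayer:
    """Pick the cheapest layer that can accept the batch and retain it long enough."""
    eligible = [
        layer for layer in layers
        if layer.max_batch_kb >= batch_kb and layer.retention_days >= min_retention_days
    ]
    if not eligible:
        raise ValueError("no layer satisfies the batch's constraints")
    return min(eligible, key=lambda layer: layer.price_per_kb * batch_kb)


layers = [
    DALayer("layer-a", price_per_kb=0.9, retention_days=18, max_batch_kb=768),
    DALayer("layer-b", price_per_kb=0.2, retention_days=30, max_batch_kb=2048),
    DALayer("layer-c", price_per_kb=0.05, retention_days=7, max_batch_kb=4096),
]
print(route_batch(layers, batch_kb=500, min_retention_days=14).name)
print(route_batch(layers, batch_kb=500, min_retention_days=7).name)
```

With a 14-day retention requirement the router skips the cheapest option and picks `layer-b`; relaxing the requirement to 7 days lets it choose `layer-c`. Treating retention and batch size as hard constraints, rather than folding them into the price, keeps the cost comparison from silently trading away availability.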

The systemic risk of these advancements lies in the increasing complexity of the stack. As we abstract away the underlying data layer, the burden of security shifts to the cryptographic primitives themselves. The successful protocols will be those that prioritize verifiable simplicity over raw, unoptimized throughput, ensuring that the market remains resilient against both technical failure and adversarial manipulation.