Essence

Data Availability is the foundational guarantee that transaction records remain accessible and verifiable by all network participants, so that no single party can censor transactions or withhold the data behind a state update. In decentralized derivatives, this guarantee ensures that the state required to calculate option payoffs, collateralization ratios, and liquidation thresholds is published in a publicly auditable form. When data is withheld, participants can no longer recompute positions or challenge an invalid state transition. Settlement then degrades into trust in whoever holds the data, which is a systemic vulnerability.

Data availability serves as the immutable anchor for verifying state transitions and enforcing contractual obligations in decentralized financial systems.

Cost Optimization addresses the economic friction inherent in achieving this availability. As decentralized systems scale, the overhead of broadcasting and storing every state change on every node becomes prohibitive. For options traders this friction surfaces as high gas fees. Multi-leg strategies such as butterfly spreads, and volatility hedges that must be rebalanced frequently, pay those fees on every leg and every adjustment, which can make them economically non-viable.
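To make the fee problem concrete, here is a minimal sketch of a long call butterfly (long one low-strike call, short two middle-strike calls, long one high-strike call) under a flat per-transaction fee. The strikes, premium, fee levels, and the assumption of one transaction per strike to open plus one per strike to settle are all hypothetical and do not describe any particular protocol.

```python
def call_payoff(spot: float, strike: float) -> float:
    """Intrinsic value of a European call at expiry."""
    return max(spot - strike, 0.0)


def butterfly_payoff(spot: float, k_low: float, k_mid: float, k_high: float) -> float:
    """Long 1 k_low call, short 2 k_mid calls, long 1 k_high call."""
    return (
        call_payoff(spot, k_low)
        - 2 * call_payoff(spot, k_mid)
        + call_payoff(spot, k_high)
    )


def best_case_net_profit(
    k_low: float,
    k_mid: float,
    k_high: float,
    premium_paid: float,
    fee_per_tx: float,
    txs_per_leg: int = 2,  # hypothetical: one tx to open, one to settle
) -> float:
    """Profit if expiry lands exactly on k_mid, after premium and fees."""
    max_payoff = butterfly_payoff(k_mid, k_low, k_mid, k_high)
    distinct_legs = 3  # one order per strike
    fees = fee_per_tx * txs_per_leg * distinct_legs
    return max_payoff - premium_paid - fees


if __name__ == "__main__":
    # Hypothetical 90/100/110 butterfly bought for a premium of 3.0.
    for fee in (0.05, 2.0):
        print(fee, best_case_net_profit(90, 100, 110, premium_paid=3.0, fee_per_tx=fee))
```

With these made-up numbers, a fee of 0.05 per transaction leaves a best-case profit of about 6.7, while a fee of 2.0 turns even the best possible outcome into a loss of 5.0, because six transactions consume more than the spread's maximum payoff net of premium.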

Future systems must balance the imperative of universal verifiability with the practical constraints of throughput and transaction costs.

Origin

The architectural challenge of Data Availability traces back to the blockchain trilemma, which posits a trade-off among security, decentralization, and scalability. Early decentralized exchanges ran on monolithic chains where every full node processed every transaction, a bottleneck that severely limited liquidity for derivative instruments. Because all applications competed for the same scarce block space, even simple operations commanded exorbitant fees.

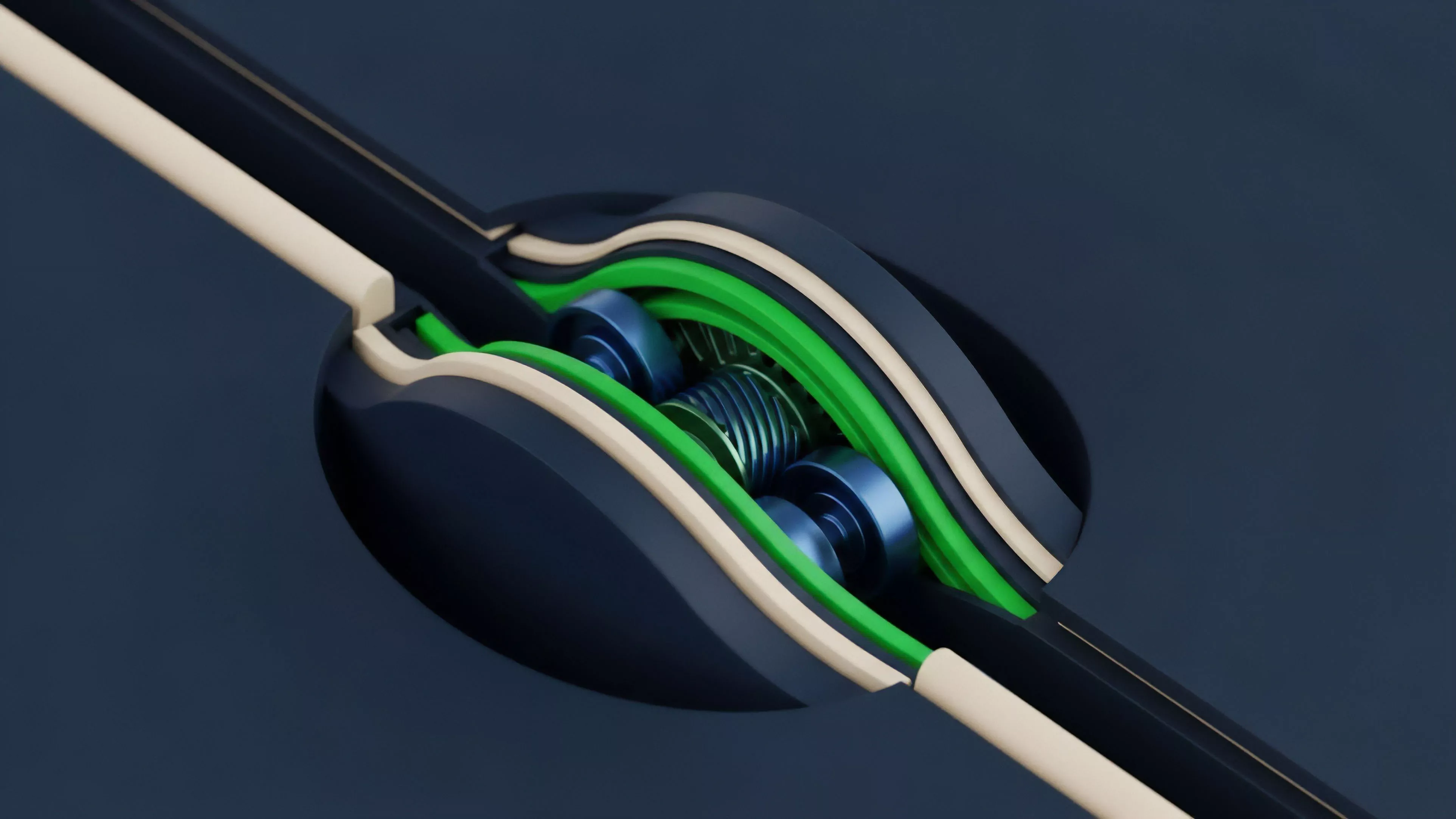

- Modular Architecture emerged as the primary response, decoupling execution from data publication to bypass throughput constraints.

- Sampling Techniques provide the statistical foundation for verifying availability without requiring full node participation, lowering the barrier for light clients.

- Rollup Technologies aggregate many derivative trades into a single batch, backed by one validity proof or one fraud-proof window, drastically reducing the cost per individual position update.
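The amortization behind rollups can be sketched in a few lines. This is a simplified cost model, not any specific rollup's fee formula: it assumes a fixed per-batch overhead (proof verification or state-root update) that is shared across the batch, plus a per-trade cost for publishing that trade's data. All gas figures below are hypothetical; the 1,600-gas data figure assumes roughly 100 bytes of calldata at 16 gas per non-zero byte.

```python
def direct_cost_per_trade(gas_per_trade: int, gas_price: float) -> float:
    """Cost when each trade is its own base-layer transaction."""
    return gas_per_trade * gas_price


def rollup_cost_per_trade(
    batch_overhead_gas: int,
    data_gas_per_trade: int,
    batch_size: int,
    gas_price: float,
) -> float:
    """Cost per trade when `batch_size` trades share one batch submission.

    The fixed overhead is split across the batch, but every trade still
    pays to publish its own data, so that term never amortizes away.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return (batch_overhead_gas / batch_size + data_gas_per_trade) * gas_price


if __name__ == "__main__":
    # Hypothetical: 150k gas per direct trade vs. a 200k-gas batch overhead.
    print("direct", direct_cost_per_trade(150_000, 1.0))
    for n in (1, 10, 500):
        print(n, rollup_cost_per_trade(200_000, 1_600, n, 1.0))
```

As the batch grows, the per-trade cost falls toward the data-publication floor (1,600 gas here), which is why the cost of data availability, rather than execution, ends up dominating.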

These developments shifted the focus from brute-force replication to cryptographic verification. Zero-knowledge proofs refined this further: a validity proof lets anyone confirm that a state change is correct without re-executing the underlying transactions. The transaction data must still be published somewhere, however, so that participants can reconstruct the state and exit their positions if the operator disappears.

Theory

The mechanics of Data Availability and Cost Optimization rely on cryptographic proofs that keep the system trustless even when data is sharded or offloaded. Verification must be distributed so that no single entity can misrepresent the state of the derivative market, for example by publishing a state commitment while withholding the data behind it.

Probabilistic Verification

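The system uses Data Availability Sampling (DAS): nodes randomly query small portions of a block and, if every query is answered, conclude with high statistical confidence that the full dataset was published. Erasure coding is what makes this work. An attacker who wants to make a block unrecoverable must withhold a large fraction of the encoded chunks, so each random query has a substantial chance of hitting a missing one. Provided enough honest clients sample, light clients gain availability assurances approaching those of full nodes without downloading whole blocks, which allows transaction volume to scale. The probability that withholding goes undetected falls exponentially with the number of samples and quickly becomes negligible.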
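The statistics can be checked with a short sketch. It assumes a one-dimensional erasure code with a 2x extension, where any half of the extended chunks suffices to rebuild the block, so an attacker must withhold more than half of them. It also assumes that samples are drawn uniformly and independently with replacement. Two-dimensional schemes lower the minimum withheld fraction to roughly a quarter, which needs more samples for the same confidence.

```python
import math
import random


def undetected_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that `samples` independent uniform queries all hit available
    chunks, so this client does not notice the withholding."""
    if not 0.0 <= withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in [0, 1]")
    return (1.0 - withheld_fraction) ** samples


def samples_for_confidence(withheld_fraction: float, max_miss: float) -> int:
    """Smallest sample count whose miss probability is at most `max_miss`."""
    if not (0.0 < withheld_fraction < 1.0 and 0.0 < max_miss < 1.0):
        raise ValueError("both arguments must lie strictly between 0 and 1")
    return math.ceil(math.log(max_miss) / math.log(1.0 - withheld_fraction))


def simulate_miss_rate(
    total_chunks: int, withheld: int, samples: int, trials: int, seed: int = 0
) -> float:
    """Monte Carlo check: fraction of trials in which no sample hits a gap."""
    rng = random.Random(seed)
    missing = set(rng.sample(range(total_chunks), withheld))
    misses = 0
    for _ in range(trials):
        if all(rng.randrange(total_chunks) not in missing for _ in range(samples)):
            misses += 1
    return misses / trials


if __name__ == "__main__":
    # With at least half the chunks withheld, 30 samples push the miss rate below 1e-9.
    print(samples_for_confidence(0.5, 1e-9))
    # Empirical miss rate should sit close to 0.5 ** 5 = 0.03125.
    print(simulate_miss_rate(256, 128, 5, 20_000))
```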

Probabilistic sampling transforms the verification process from a resource-intensive obligation into a scalable, lightweight cryptographic check.

Economic Efficiency

Cost Optimization means minimizing the on-chain footprint required to finalize a trade. By moving the heavy computation of options pricing and Greeks to off-chain environments, protocols substantially reduce settlement costs. The following table illustrates the comparative trade-offs between different data handling frameworks.

| Framework | Data Integrity | Cost Efficiency | Scalability |
| --- | --- | --- | --- |
| Monolithic Settlement | Absolute | Low | Restricted |
| Optimistic Rollups | High | Moderate | High |
| Zero Knowledge Proofs | Maximum | High | Extreme |
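The off-chain pricing step described in this section can be sketched as follows. Black-Scholes price and delta for a European call (no dividends) are computed off-chain, and only a 32-byte SHA-256 commitment to the results would be posted on-chain. The commitment format (canonical JSON, rounded values) is a hypothetical convention chosen for illustration, not something the protocols discussed here are known to use. In practice the full results would also need to be published to a data availability layer so that anyone can check them against the digest.

```python
import hashlib
import json
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot: float, strike: float, rate: float, vol: float, years: float):
    """Black-Scholes price and delta of a European call (no dividends)."""
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * years) * norm_cdf(d2)
    return price, norm_cdf(d1)


def commit(results: dict) -> bytes:
    """32-byte SHA-256 digest of a canonical JSON encoding of `results`.

    Hypothetical scheme: only this digest goes on-chain; the full results
    are published elsewhere and can be re-hashed by anyone to verify it.
    """
    encoded = json.dumps(results, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(encoded).digest()


if __name__ == "__main__":
    price, delta = black_scholes_call(100.0, 100.0, 0.0, 0.2, 1.0)
    digest = commit({"price": round(price, 6), "delta": round(delta, 6)})
    print(round(price, 4), round(delta, 4), digest.hex())
```

Whatever the pricing model produces, the on-chain footprint stays at a fixed 32 bytes per commitment. That is the saving the paragraph above describes, and it is paid for by relying on the data being available off-chain.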

The intersection of these techniques forces a departure from legacy centralized models, in which the market maker dictates price and order flow. In a decentralized environment, the cost of a trade is set by protocol efficiency rather than by the rent-seeking of a central intermediary. Market microstructure therefore follows from protocol mechanics, and the cost of publishing data becomes a primary driver of liquidity, since every quote update and hedge adjustment has to pay for it.

Approach

Current implementations of Data Availability focus on creating specialized layers that serve as a source of truth for execution environments.

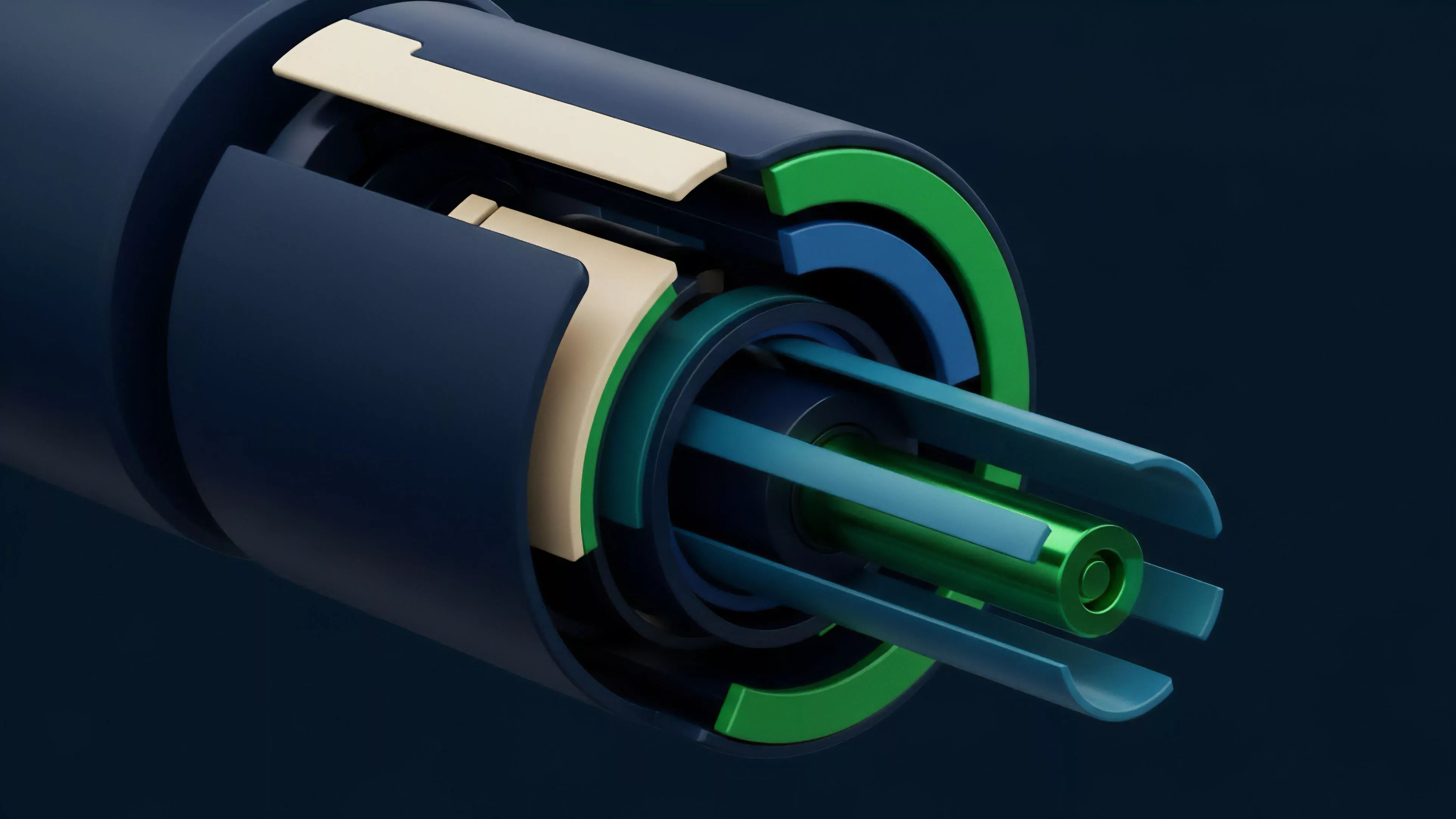

These layers use Erasure Coding, which extends the data with redundant chunks so that the original can be reconstructed from any sufficiently large subset of them, even if a significant portion of the network fails or acts maliciously. As a result, the data underpinning a derivative contract stays recoverable even in an adversarial environment.
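The reconstruction property can be demonstrated with a toy Reed-Solomon-style code. This minimal sketch treats k data values as evaluations of a degree < k polynomial over the prime field modulo 2^61 - 1 (a field choice made here purely for illustration), extends them to 2k evaluations, and recovers the original from any k survivors by Lagrange interpolation. Production data availability layers use optimized Reed-Solomon implementations, often two-dimensional and paired with polynomial commitments, none of which this sketch attempts.

```python
P = 2**61 - 1  # Mersenne prime used as the field modulus (illustrative choice)


def _lagrange_eval(points: list, x: int) -> int:
    """Evaluate at `x` the unique degree < len(points) polynomial through `points`, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def extend(data: list) -> list:
    """Treat data[i] as f(i) and return f(0), ..., f(2k - 1): 2x redundancy."""
    k = len(data)
    points = list(enumerate(data))
    return list(data) + [_lagrange_eval(points, x) for x in range(k, 2 * k)]


def reconstruct(shares: list, k: int) -> list:
    """Recover the original k values from any k surviving (index, value) shares."""
    if len(shares) < k:
        raise ValueError("need at least k shares to reconstruct")
    points = shares[:k]
    return [_lagrange_eval(points, x) for x in range(k)]


if __name__ == "__main__":
    data = [5, 17, 42, 99]  # field elements (< P)
    extended = extend(data)  # 8 chunks
    survivors = [(i, extended[i]) for i in (1, 4, 6, 7)]  # half were lost
    print(reconstruct(survivors, len(data)))
```

Because any k of the 2k chunks determine the polynomial, an attacker has to suppress more than half of the chunks to prevent reconstruction. That requirement is what makes random sampling effective at catching withholding.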

- State Commitment requires that every option trade be recorded against a verified state root, such as a Merkle root, so that any individual position can be proven consistent with the entire ledger.

- Blob Storage uses temporary, low-cost data space, such as the blobs introduced by Ethereum's EIP-4844, which consensus nodes prune after roughly 18 days, for transaction data that does not need permanent on-chain retention for settlement purposes.

- Client Diversity maintains the robustness of the system by preventing any single software implementation from dominating the validation process.
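A state commitment of the kind described in the list above can be sketched as a binary SHA-256 Merkle tree. This is a simplified illustration, not any protocol's actual tree: the leaf/node prefix bytes and the rule of pairing an odd trailing node with itself are assumed conventions, and the position encodings are hypothetical. A trader can prove that one position is part of the committed state using only a logarithmic number of sibling hashes.

```python
from __future__ import annotations

import hashlib

LEAF_PREFIX = b"\x00"  # assumed domain separation between leaves...
NODE_PREFIX = b"\x01"  # ...and internal nodes


def _leaf_hash(leaf: bytes) -> bytes:
    return hashlib.sha256(LEAF_PREFIX + leaf).digest()


def _node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(NODE_PREFIX + left + right).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs; an odd trailing node is paired with itself."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [_node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [_leaf_hash(leaf) for leaf in leaves]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the right."""
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [_leaf_hash(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        sibling = index ^ 1
        proof.append((padded[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = _leaf_hash(leaf)
    for sibling, sibling_is_right in proof:
        node = _node_hash(node, sibling) if sibling_is_right else _node_hash(sibling, node)
    return node == root


if __name__ == "__main__":
    positions = [b"pos:alice:ETH-C-2500:+3", b"pos:bob:ETH-C-2500:-3", b"pos:carol:ETH-P-2000:+1"]
    root = merkle_root(positions)
    print(verify(positions[1], merkle_proof(positions, 1), root))
```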

Optimized data pathways allow for the efficient execution of complex derivative strategies by reducing the cost of proving state validity.

My assessment of current architectures reveals a distinct trend toward off-chain computation combined with on-chain settlement. This split lets traders approach the execution speed of centralized venues while retaining the self-custody and transparency of blockchain-native systems. The primary hurdle remains the latency of proof generation: until a proof is produced, the settled state lags the live market, so Greeks computed against it can be stale in volatile conditions.
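How stale can a Greek get? To first order, a call's delta drifts by gamma times the price move that occurs while the proof is being generated. The sketch below estimates that drift under Black-Scholes assumptions and takes a one-standard-deviation move over the latency window. The 365-day, around-the-clock year, the 80% volatility, and the 600-second latency used in the example are all hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600  # assumption: the market trades around the clock


def _d1(spot, strike, rate, vol, years):
    return (math.log(spot / strike) + (rate + 0.5 * vol**2) * years) / (vol * math.sqrt(years))


def call_delta(spot, strike, rate, vol, years):
    """Black-Scholes delta of a European call."""
    return 0.5 * (1.0 + math.erf(_d1(spot, strike, rate, vol, years) / math.sqrt(2.0)))


def call_gamma(spot, strike, rate, vol, years):
    """Black-Scholes gamma (identical for calls and puts)."""
    d1 = _d1(spot, strike, rate, vol, years)
    pdf = math.exp(-0.5 * d1 * d1) / math.sqrt(2.0 * math.pi)
    return pdf / (spot * vol * math.sqrt(years))


def one_sigma_move(spot, vol, latency_seconds):
    """Typical (one standard deviation) price move over the latency window."""
    return spot * vol * math.sqrt(latency_seconds / SECONDS_PER_YEAR)


def delta_staleness(spot, strike, rate, vol, years, latency_seconds):
    """First-order delta error when hedging on state `latency_seconds` old."""
    return call_gamma(spot, strike, rate, vol, years) * one_sigma_move(spot, vol, latency_seconds)


if __name__ == "__main__":
    # Hypothetical: at-the-money weekly call, 80% vol, 10-minute proof latency.
    print(delta_staleness(100.0, 100.0, 0.0, 0.8, 7 / 365, 600))
```

Because gamma is largest for short-dated, at-the-money options, this staleness is worst for exactly the contracts that active traders hedge most often.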

Evolution

The transition from simple token transfers to complex, high-frequency derivative markets has forced a radical redesign of Data Availability.

Early protocols suffered from severe congestion, where the cost of submitting an option expiration event could exceed the value of the contract itself. This structural limitation prompted the development of Layer 2 solutions that prioritize transaction throughput without compromising the security of the underlying assets. The shift toward modularity represents the most significant change in the last few years.

By separating execution from consensus and data availability, developers have isolated the cost of security from the cost of execution. This enables a more granular approach to pricing, in which users pay only for the security guarantees their particular derivative strategies require. History suggests that financial revolutions often begin with a bottleneck that eventually forces a new technological paradigm.

Just as the invention of the telegraph transformed the speed of information transfer in commodity markets, these new protocols are fundamentally altering the speed of settlement for digital assets. We are currently witnessing the migration from inefficient, monolithic chains to highly optimized, multi-layered infrastructures that can support institutional-grade derivative trading volumes.

Horizon

Future systems will prioritize Data Availability through decentralized committees and hardware-accelerated proof verification. The next generation of protocols will likely integrate Dynamic Sharding, where the data requirements of a specific derivative instrument are automatically scaled based on the volatility and open interest of that market.

This adaptive approach will ensure that cost optimization is not a static configuration but a fluid response to market demand.
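What "scaling data requirements with volatility and open interest" might look like can be illustrated with a deliberately simple, hypothetical allocation rule. No existing protocol is implied. Each market receives a minimum floor of data capacity units, and the remainder is split in proportion to open interest times volatility, using largest-remainder rounding so that the allocation always sums to the available capacity.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Market:
    name: str
    open_interest: float  # notional, in any consistent unit
    volatility: float  # annualized implied volatility, e.g. 0.8 for 80%


def allocate_capacity(markets: list[Market], total_units: int, floor: int = 1) -> dict[str, int]:
    """Hypothetical rule: floor units per market, remainder weighted by OI * vol."""
    if not markets:
        raise ValueError("need at least one market")
    spare = total_units - floor * len(markets)
    if spare < 0:
        raise ValueError("total_units too small for the per-market floor")
    weights = [m.open_interest * m.volatility for m in markets]
    total_weight = sum(weights)
    if total_weight == 0:
        shares = [spare / len(markets)] * len(markets)
    else:
        shares = [spare * w / total_weight for w in weights]
    alloc = [int(s) for s in shares]  # round each share down first
    leftover = spare - sum(alloc)
    # Hand the remaining units to the largest fractional remainders.
    order = sorted(range(len(markets)), key=lambda i: shares[i] - alloc[i], reverse=True)
    for i in order[:leftover]:
        alloc[i] += 1
    return {m.name: floor + a for m, a in zip(markets, alloc)}


if __name__ == "__main__":
    markets = [Market("ETH", 1600, 0.5), Market("BTC", 4800, 0.25), Market("SOL", 100, 1.0)]
    print(allocate_capacity(markets, total_units=10))
```

Re-running such a rule each epoch with fresh open-interest and volatility figures is one way the allocation could become the "fluid response to market demand" described above rather than a static configuration.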

Future scaling will rely on adaptive data architectures that dynamically adjust resource allocation based on real-time market volatility.

The ultimate objective is the creation of a global, permissionless derivative market that matches the liquidity and efficiency of traditional exchanges while eliminating the systemic risks associated with central clearinghouses. This vision depends on our ability to solve the remaining latency issues inherent in cryptographic proof generation. As we refine these systems, the distinction between on-chain and off-chain finance will continue to erode, resulting in a more resilient and transparent financial infrastructure.