Essence

Data Access Restrictions function as the architectural boundaries governing the visibility and retrieval of trade-related information within decentralized derivatives protocols. These constraints dictate the granularity, latency, and permissioning of order flow data, liquidity depth, and historical trade execution logs. By controlling who perceives specific market telemetry, protocols exert influence over the efficiency of price discovery and the distribution of information asymmetry among participants.

Data access restrictions define the boundaries of information visibility within decentralized derivative markets.

These mechanisms often serve as a defense against predatory automated strategies, such as high-frequency front-running or sandwich attacks. When a protocol limits access to the mempool or obscures order book snapshots, it alters the competitive landscape. Participants must then adapt their trading strategies to account for reduced transparency, shifting from reactive execution based on real-time order flow to predictive models grounded in aggregate network state.

Origin

The necessity for Data Access Restrictions stems from the inherent transparency of public distributed ledgers.

In traditional finance, dark pools and private order books provide venues where institutional participants execute large trades without alerting the broader market to their intentions. Early decentralized exchanges lacked these features, rendering all order intent visible to any observer monitoring the network.

- Public Ledger Transparency: The open nature of blockchain transaction propagation creates an environment where every pending trade is broadcast, inviting adversarial actors to capture value from that visibility.

- MEV Extraction: The rise of Maximal Extractable Value highlighted the vulnerability of public order flow, where searchers and validators reorder transactions to their benefit.

- Protocol Privacy Design: Architects recognized that to replicate institutional-grade execution, protocols required technical methods to mask trade details until final settlement occurs.

This evolution marks a shift from total transparency to selective disclosure. By embedding restrictions directly into smart contracts, developers attempt to balance the ethos of open finance with the requirement for competitive execution environments.

Theory

The theoretical framework surrounding Data Access Restrictions involves the tension between market efficiency and participant protection. From a quantitative perspective, restricted access to order flow data creates a non-linear information distribution.

Participants with direct access to private RPC nodes or validator-level information possess a distinct advantage over those relying on public indexing services.

Restricted access to order flow data creates a non-linear distribution of information across market participants.

Adversarial agents leverage these asymmetries to optimize their own execution while imposing costs on others. The following table illustrates the trade-offs inherent in different access models:

| Access Model | Transparency Level | Adversarial Exposure | Execution Latency |
|---|---|---|---|
| Public Mempool | Maximum | High | Variable |
| Private Relayer | Restricted | Low | Low |
| Encrypted Order Flow | Zero | Negligible | High |

The mathematical modeling of these systems often utilizes game theory to predict how traders respond to information constraints. If the cost of accessing data exceeds the expected value derived from that data, participants migrate toward protocols with superior privacy-preserving architectures. This creates a feedback loop where liquidity follows the most secure and least exploitable infrastructure.
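The migration argument above can be made concrete with a toy decision rule. This is a minimal sketch, not a calibrated model: the `Venue` fields, the `net_value` formula, and every number are illustrative assumptions chosen only to show the direction of the incentive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Venue:
    name: str
    data_cost: float        # hypothetical cost of acquiring order flow data, per trade
    data_value: float       # hypothetical expected value gained from that data, per trade
    extraction_loss: float  # hypothetical expected loss to adversarial extraction, per trade


def net_value(venue: Venue) -> float:
    """Expected per-trade value of using a venue (assumed toy model).

    A trader only buys data when it pays for itself, so the data edge is
    floored at zero; the expected extraction loss is always paid.
    """
    data_edge = max(venue.data_value - venue.data_cost, 0.0)
    return data_edge - venue.extraction_loss


def preferred_venue(venues: list[Venue]) -> Venue:
    """Pick the venue with the highest expected net value."""
    return max(venues, key=net_value)


# Illustrative numbers only, loosely mirroring the three access models in the table.
venues = [
    Venue("public_mempool", data_cost=2.0, data_value=5.0, extraction_loss=6.0),
    Venue("private_relayer", data_cost=4.0, data_value=3.0, extraction_loss=1.0),
    Venue("encrypted_flow", data_cost=0.0, data_value=0.0, extraction_loss=0.1),
]

best = preferred_venue(venues)
print(best.name, net_value(best))
```

Under these assumed numbers the trader prefers the venue with the lowest extraction loss, even though it offers no data edge at all. Different inputs would change the ranking, which is the point of modeling the choice explicitly.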

Sometimes I think the entire structure of modern finance is a fragile stack of assumptions regarding who sees what, and the blockchain is just the first time we have been forced to write these rules in permanent, immutable code. This reality dictates that every line of smart contract logic must anticipate the most sophisticated actor attempting to bypass its visibility constraints.

Approach

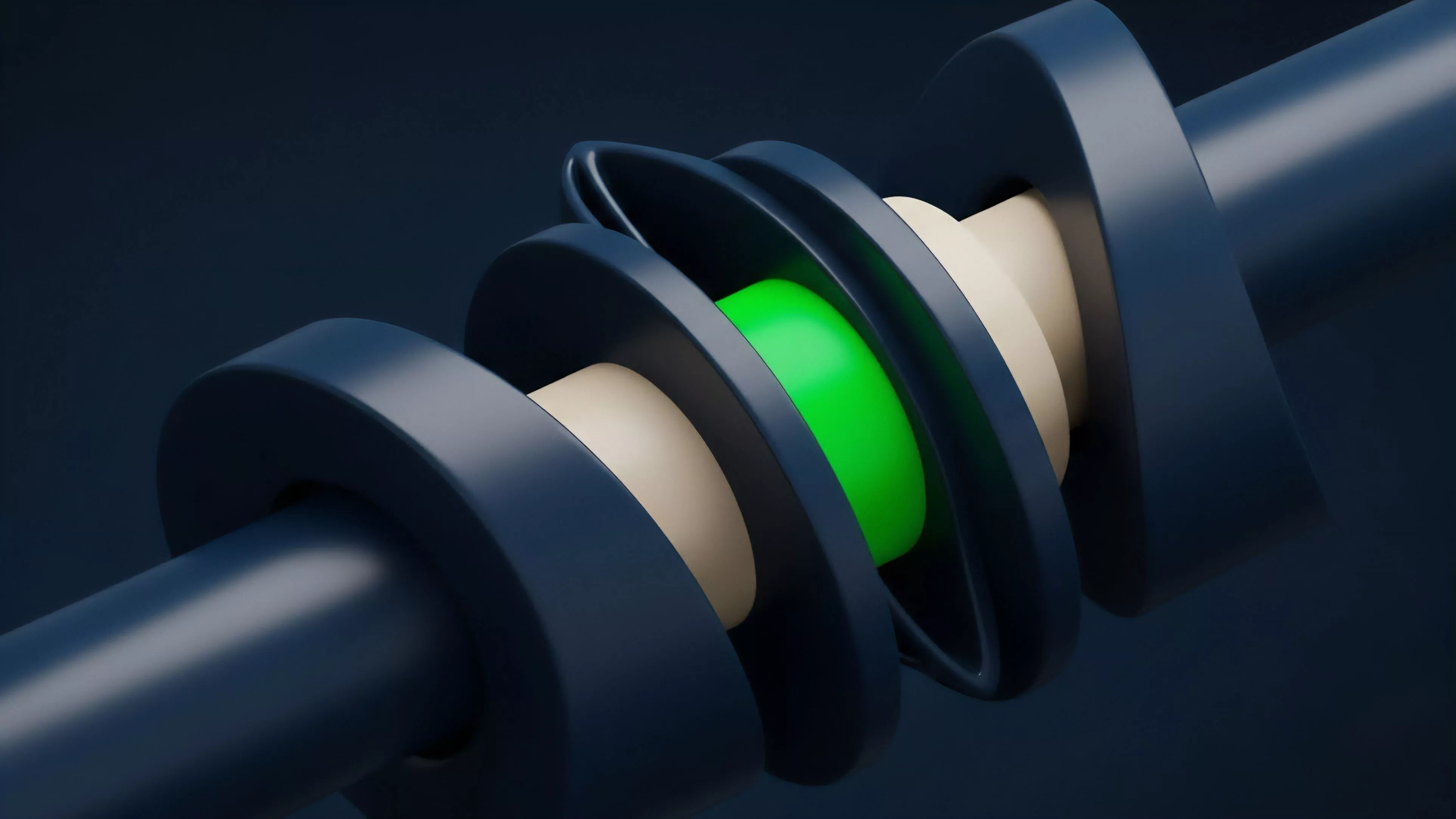

Current implementations of Data Access Restrictions use several cryptographic and architectural strategies. Protocols now frequently employ batch auctions rather than continuous order books to neutralize the advantages of low-latency data access.

By aggregating orders over a short time window and executing them at a single clearing price, the protocol hides the sequence of arrival within each batch, which removes the ordering advantage that front-running depends on.

Batch auctions neutralize the advantage of rapid data access by aggregating orders and executing them at a single clearing price.
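The batch mechanism described above can be sketched as a uniform-price auction. This is a simplified illustration under stated assumptions: the `Order` type, the rule of choosing the candidate limit price that maximizes matched volume, and the lowest-price tie-break are all choices made for this example, not the rules of any particular protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    side: str     # "buy" or "sell"
    price: float  # limit price
    qty: int      # quantity


def clearing_price(orders: list[Order]):
    """Return (price, matched_volume) for one batch, or None if nothing crosses.

    Every limit price in the batch is tried as a candidate. The winner is the
    price that maximizes matched volume; ties go to the lowest such price.
    """
    best = None
    for p in sorted({o.price for o in orders}):
        demand = sum(o.qty for o in orders if o.side == "buy" and o.price >= p)
        supply = sum(o.qty for o in orders if o.side == "sell" and o.price <= p)
        volume = min(demand, supply)
        if volume > 0 and (best is None or volume > best[1]):
            best = (p, volume)
    return best


batch = [
    Order("buy", 101.0, 5),
    Order("sell", 98.0, 4),
    Order("buy", 100.0, 3),
    Order("sell", 100.0, 4),
    Order("buy", 99.0, 4),
    Order("sell", 102.0, 6),
]

# The result depends only on the set of orders, not on their arrival order.
print(clearing_price(batch))
print(clearing_price(list(reversed(batch))))
```

Both calls yield the same clearing price and volume, because the computation only aggregates over the batch. Seeing an order arrive a few milliseconds earlier gives no priority inside the window, although information about the batch contents could still matter if it leaks before the window closes.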

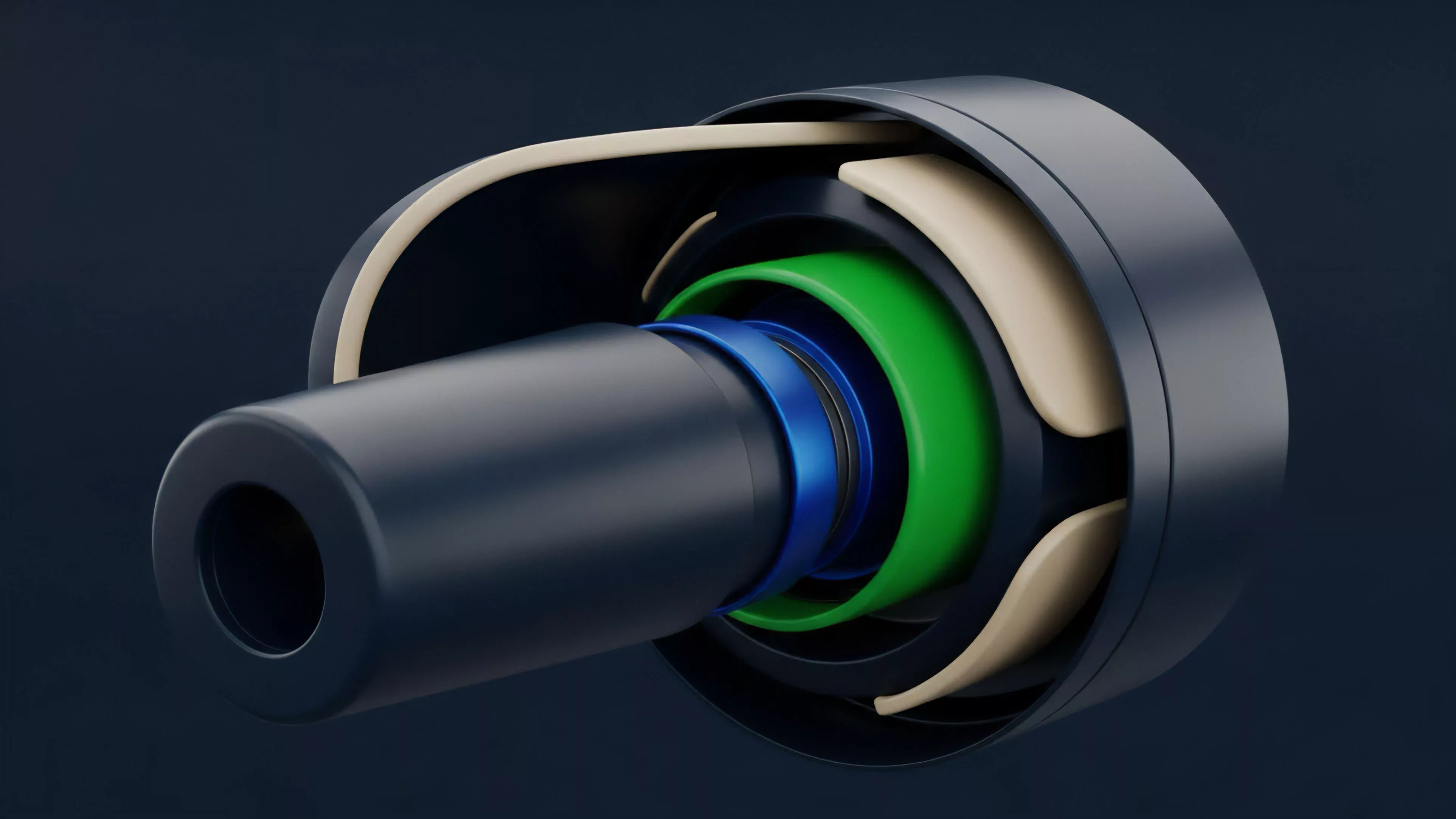

Another approach involves the use of threshold cryptography to encrypt order details. In this architecture, the order content remains hidden from validators and observers until a threshold of decentralized nodes performs a decryption process. This ensures that the order flow remains confidential during the critical period between submission and inclusion in a block.

- Threshold Encryption: Ensuring that sensitive order information is cryptographically protected until the point of settlement.

- Batching Mechanisms: Reducing the granularity of trade visibility by grouping transactions into discrete time-bound windows.

- Off-Chain Matching: Moving the order matching process to trusted or decentralized off-chain environments where data access is strictly governed by protocol rules rather than public network visibility.
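The threshold idea can be illustrated with Shamir secret sharing, used here as a stand-in for a full threshold encryption scheme. This is a minimal sketch under stated assumptions: a hypothetical order decryption key is split among five nodes so that any three can rebuild it. Production threshold schemes typically have nodes publish decryption shares without ever reassembling the key in one place; the function names, the field modulus, and the 3-of-5 parameters are all illustrative.

```python
import secrets

PRIME = 2**127 - 1  # Mersenne prime used as the field modulus (illustrative choice)


def split_secret(secret: int, threshold: int, n_shares: int):
    """Split `secret` into n_shares points on a random polynomial of degree threshold - 1."""
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, n_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner evaluation of the polynomial at x
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares):
    """Recover the secret by Lagrange interpolation at x = 0."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+)
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


# Hypothetical key protecting an encrypted order; must be smaller than PRIME.
order_key = int.from_bytes(b"order-key-demo", "big")
shares = split_secret(order_key, threshold=3, n_shares=5)

# Any three of the five nodes can reconstruct the key.
print(recover_secret(shares[:3]) == order_key)
print(recover_secret(shares[2:5]) == order_key)
```

Any subset of fewer than three shares is consistent with every possible key, which is the property that keeps order content hidden from a minority of colluding nodes during the window between submission and inclusion.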

Evolution

The trajectory of Data Access Restrictions shows a clear transition from reactive patching to proactive architectural design. Early iterations relied on simple front-end obfuscation, which proved insufficient against actors monitoring the underlying smart contract events. As the stakes increased, the industry moved toward sophisticated privacy-preserving primitives.

| Development Phase | Primary Focus | Systemic Implication |
|---|---|---|
| Early Phase | Frontend Hiding | Ineffective against chain-level monitoring |
| Intermediate Phase | Flashbots and Relayers | Centralized trust requirements for privacy |
| Advanced Phase | Threshold Cryptography | Trust-minimized, protocol-native privacy |

This progression highlights the increasing technical sophistication required to maintain order flow integrity. The reliance on centralized relayers as a temporary solution has necessitated a move toward decentralized, protocol-native solutions that do not require trusting a single intermediary with access to sensitive data.

Horizon

The future of Data Access Restrictions lies in the development of fully homomorphic encryption and zero-knowledge proof systems that allow for trade execution without revealing underlying data to any party, including the validator. These technologies would enable dark pools whose privacy rests on cryptographic guarantees rather than on trust in an operator, even in the presence of malicious actors.

Future protocols will leverage zero-knowledge proofs to enable private execution without compromising trust-minimized settlement.

The ultimate objective is to decouple market participation from data visibility. As these protocols mature, we expect to see a fragmentation of liquidity based on the level of privacy offered. Institutional capital will gravitate toward venues that provide the highest degree of protection against adversarial data extraction, while retail participants will continue to utilize protocols that balance accessibility with sufficient security. The systemic risk will shift from simple front-running to more complex, protocol-level exploits targeting the cryptographic assumptions themselves.