Essence

Crypto Market Efficiency describes the speed and accuracy with which asset prices incorporate all available information within decentralized environments. It functions as a measure of how effectively arbitrageurs, automated market makers, and liquidity providers minimize discrepancies between spot, perpetual, and options pricing. The state of this efficiency determines the reliability of price discovery, impacting the cost of hedging and the precision of risk management across decentralized finance.

Crypto Market Efficiency defines the velocity at which public and private information is reflected in the price of digital assets across decentralized venues.

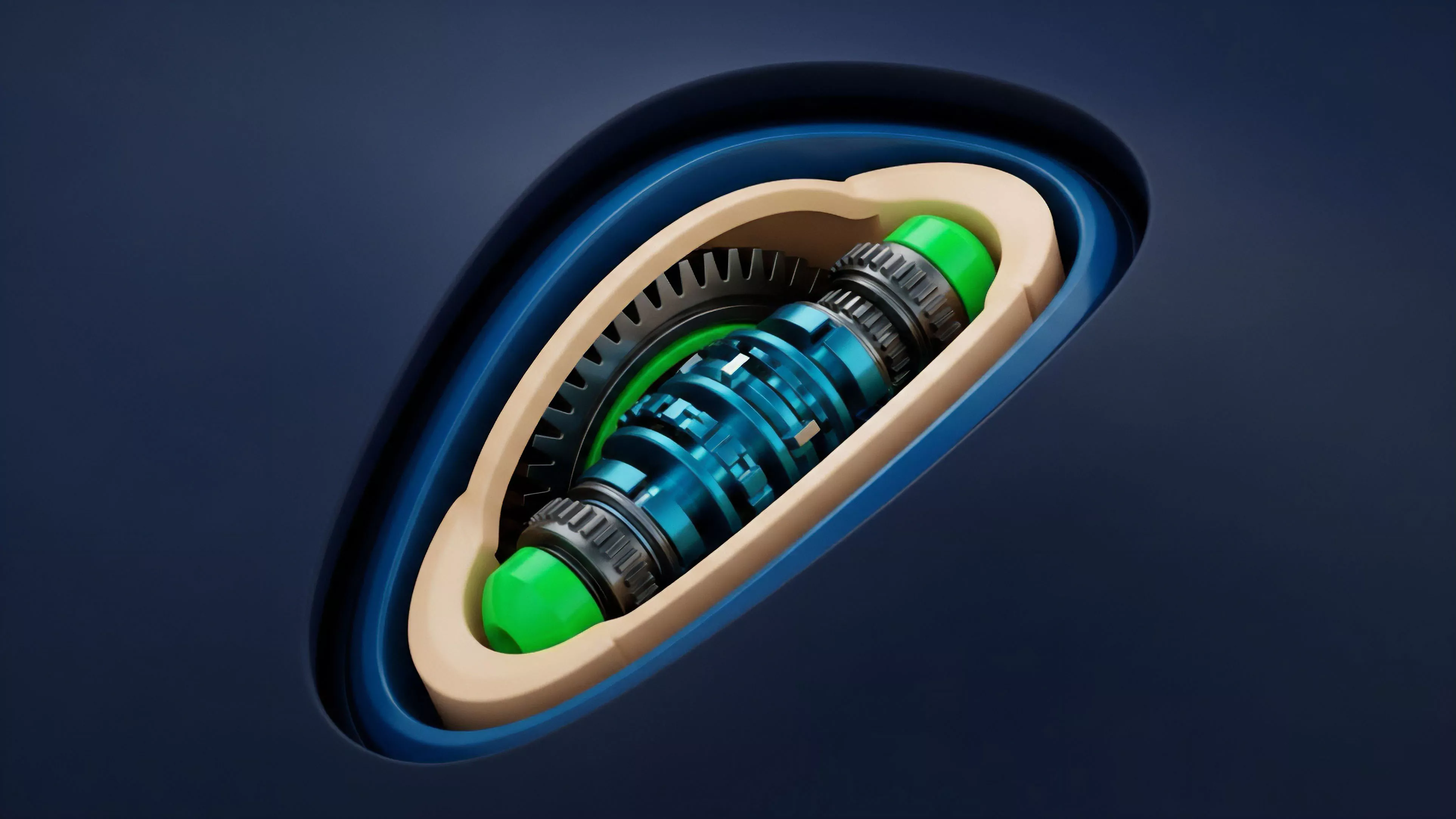

The concept relies on the assumption that market participants act rationally to exploit price gaps, thereby driving the system toward equilibrium. In crypto, this process involves the interplay of on-chain data transparency and the latency inherent in consensus mechanisms. When information flows without friction, prices track intrinsic value closely; when structural barriers like gas costs, oracle delays, or liquidity fragmentation persist, the market deviates from an efficient state, creating opportunities for sophisticated actors.

Origin

The intellectual roots of this concept lie in traditional finance literature, specifically the efficient market hypothesis, which posits that prices fully reflect all available information. Early proponents argued that in competitive markets, asset prices move randomly because new information arrives unpredictably. Translating this to the digital asset space required accounting for the permissionless architecture and 24/7 trading cycles that characterize decentralized protocols.

The origin of market efficiency in decentralized systems traces back to the application of arbitrage mechanics within permissionless liquidity pools.

Historically, the development of decentralized exchanges and automated market makers necessitated a new framework for understanding price discovery. Without centralized order books, the reliance shifted toward constant product formulas and decentralized oracles. These mechanisms introduced a different set of constraints, forcing researchers to reconcile traditional quantitative models with the realities of smart contract execution, block times, and the adversarial nature of mempool dynamics.

Theory

Theoretical modeling of this concept requires looking at how liquidity depth and execution latency interact. At its core, the theory treats efficiency as a function of the cost to trade versus the potential gain from correcting a mispricing. If the cost to rebalance a pool (trading fees, gas, and slippage) exceeds the profit available from the price deviation, no rational arbitrageur acts and the price remains stale, which is where the efficiency mechanism breaks down.

| Metric | Impact on Efficiency |
| --- | --- |
| Oracle Latency | Increases risk of stale pricing |
| Gas Throughput | Limits arbitrage frequency |
| Pool Depth | Reduces slippage during rebalancing |
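The cost-versus-gain condition described above can be illustrated with a minimal sketch. It assumes a constant-product pool with a proportional fee charged on the input amount, a single external reference price, and a flat gas cost expressed in the quote asset. The function names, the 0.3% default fee, and the example numbers are hypothetical and are not taken from any specific protocol.

```python
from math import sqrt


def best_arbitrage_profit(base_reserve, quote_reserve, external_price, fee=0.003):
    """Gross profit (in quote units) of the optimal single trade against a
    constant-product pool, or 0.0 when the pool price sits inside the
    no-arbitrage band created by the fee. Assumes the fee is taken from
    the input amount and the external venue has no price impact."""
    g = 1.0 - fee  # fraction of the input that actually reaches the pool
    pool_price = quote_reserve / base_reserve

    if external_price * g > pool_price:
        # Pool is cheap: pay quote in, take base out, sell base externally.
        quote_in = (sqrt(external_price * base_reserve * quote_reserve * g)
                    - quote_reserve) / g
        base_out = base_reserve * g * quote_in / (quote_reserve + g * quote_in)
        return base_out * external_price - quote_in

    if pool_price * g > external_price:
        # Pool is expensive: buy base externally, sell it into the pool.
        base_in = (sqrt(base_reserve * quote_reserve * g / external_price)
                   - base_reserve) / g
        quote_out = quote_reserve * g * base_in / (base_reserve + g * base_in)
        return quote_out - base_in * external_price

    return 0.0  # deviation is smaller than the fee; nothing to capture


def price_stays_stale(base_reserve, quote_reserve, external_price,
                      gas_cost_quote, fee=0.003):
    """True when the best arbitrage does not cover the gas cost, so no
    rational arbitrageur would correct the pool price."""
    profit = best_arbitrage_profit(base_reserve, quote_reserve,
                                   external_price, fee)
    return profit <= gas_cost_quote


if __name__ == "__main__":
    # Hypothetical pool: 1,000 base units against 2,000,000 quote units.
    for ext in (2004.0, 2100.0):
        stale = price_stays_stale(1000.0, 2_000_000.0, ext, gas_cost_quote=50.0)
        print(ext, "stale" if stale else "arbitraged")
```

In this sketch a deeper pool lets a larger trade go through at a given price gap, so the same deviation yields more gross profit, while a higher gas cost widens the range of deviations that go uncorrected.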

Behavioral game theory also plays a critical role here. Participants do not just respond to price signals; they anticipate the actions of MEV searchers and liquidators. This creates a feedback loop where the pursuit of individual profit dictates the aggregate efficiency of the market.

The threat of liquidation disciplines leveraged participants, so that even in a decentralized setting individual incentives tend to stay aligned with the solvency of the protocol.

Approach

Current analysis of this efficiency uses quantitative finance to measure how closely prices on different trading venues track one another. Practitioners monitor the basis trade, tracking the spread between spot and derivatives, to gauge how well the market is pricing future expectations. By analyzing option Greeks and implied volatility surfaces, traders assess whether option prices adequately reflect the risk of extreme price movements.

Modern market analysis focuses on measuring the basis spread and volatility skew as primary indicators of decentralized price alignment.
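As a rough illustration of the basis measurement mentioned above, the sketch below computes the raw and annualized basis between a spot price and a dated futures price. The simple (non-compounded) 365-day annualization is an assumption chosen for readability, and the function names are hypothetical; desks use a variety of conventions.

```python
def basis(spot_price, futures_price):
    """Raw basis: futures price minus spot price, in quote units."""
    return futures_price - spot_price


def annualized_basis(spot_price, futures_price, days_to_expiry):
    """Basis as a simple annualized rate, assuming a 365-day year and no
    compounding (an illustrative convention, not a standard)."""
    if days_to_expiry <= 0:
        raise ValueError("days_to_expiry must be positive")
    return (futures_price / spot_price - 1.0) * 365.0 / days_to_expiry


if __name__ == "__main__":
    # Hypothetical quotes: spot at 100, a future expiring in 30 days at 101.
    print(basis(100.0, 101.0))
    print(annualized_basis(100.0, 101.0, 30))
```

A persistently wide annualized basis, relative to the cost of funding the trade, suggests that arbitrage capital is not closing the gap between venues.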

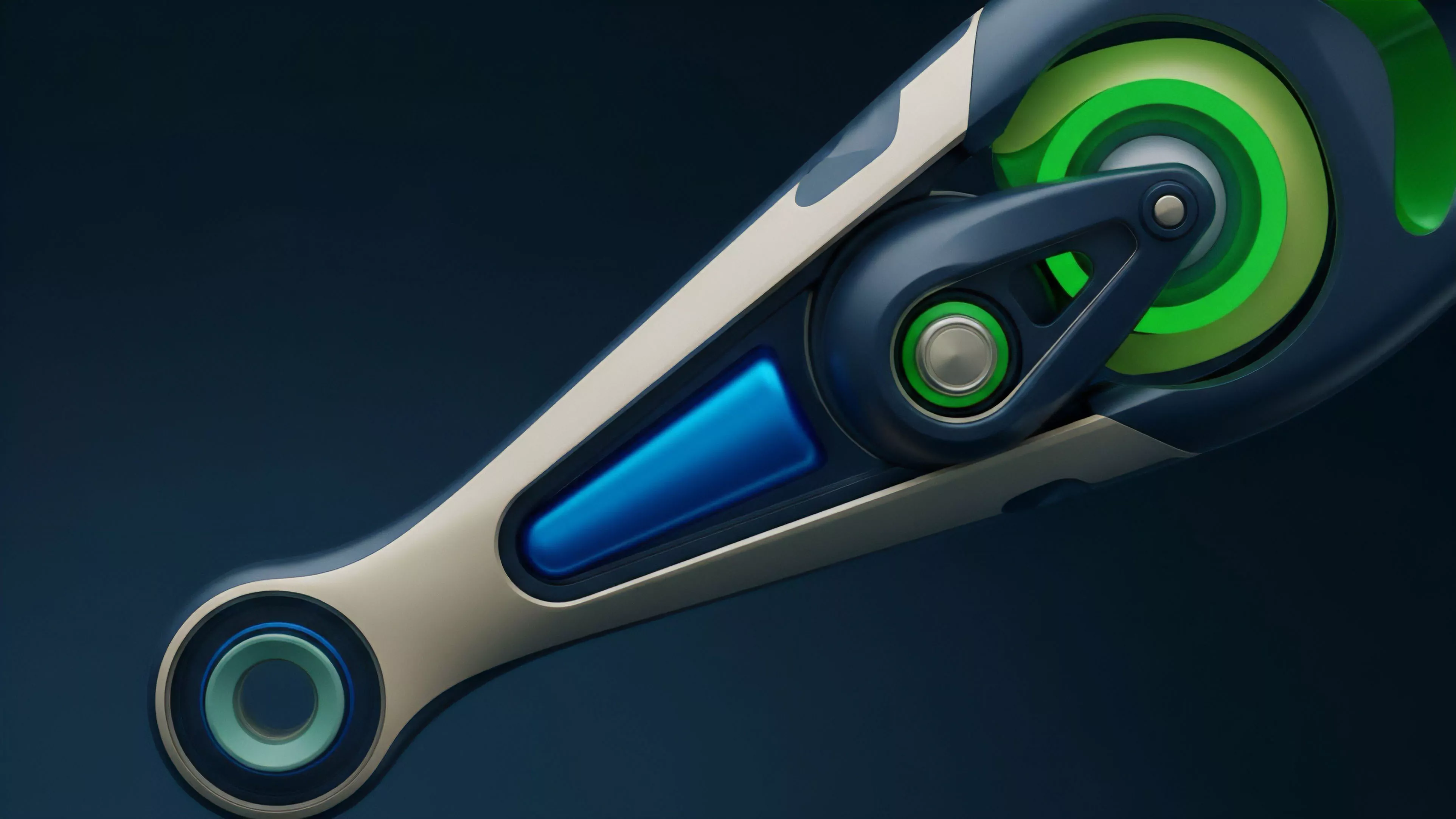

Systems architects now prioritize the following components to enhance the efficiency of their platforms:

- Liquidity aggregation across multiple protocols reduces the impact of isolated order books.

- Cross-chain messaging protocols enable faster arbitrage between disparate networks.

- Optimistic oracles provide more accurate price feeds by incentivizing truthful reporting through game-theoretic mechanisms.

Sometimes the most effective way to understand these dynamics is to observe the failure points, where the price disconnects from the underlying asset. A sudden spike in funding rates often indicates that the market is struggling to maintain equilibrium, forcing participants to adjust their strategies rapidly to avoid catastrophic losses. This constant state of flux represents the true nature of digital markets, where stability is not a given, but a result of relentless competition.
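One hedged way to make "a sudden spike in funding rates" concrete is to compare the latest funding rate with its recent history. The sketch below uses a plain z-score over a trailing window; the threshold of 3, the function names, and the sample rates are illustrative assumptions rather than an established rule.

```python
from statistics import mean, pstdev


def funding_zscore(history, latest):
    """Number of standard deviations by which `latest` differs from the
    mean of `history`. Returns None when the history is too short or has
    no dispersion, since the z-score is undefined in those cases."""
    if len(history) < 2:
        return None
    sigma = pstdev(history)
    if sigma == 0:
        return None
    return (latest - mean(history)) / sigma


def is_funding_spike(history, latest, threshold=3.0):
    """Flag the latest funding rate when its absolute z-score exceeds the
    (assumed) threshold."""
    z = funding_zscore(history, latest)
    return z is not None and abs(z) > threshold


if __name__ == "__main__":
    # Hypothetical per-interval funding rates.
    recent = [0.0001, 0.0001, 0.0002, 0.0, 0.0001]
    print(is_funding_spike(recent, 0.001))
```

A flag from a check like this is only a prompt to look closer; it does not by itself show that spot and perpetual prices have decoupled.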

Evolution

The progression from simple decentralized exchanges to complex derivative platforms marks a significant shift in how efficiency is achieved. Early models relied on basic automated market makers, which were susceptible to significant slippage. As the space matured, the introduction of order book protocols and synthetic assets allowed for more granular price discovery, moving closer to the standards set by institutional finance.

| Stage | Primary Driver |
| --- | --- |
| Foundational | Simple AMM liquidity |
| Intermediate | Derivative instruments |
| Advanced | Institutional-grade cross-chain arbitrage |

This evolution also reflects the changing regulatory and technical landscape. As protocols implement more robust governance models, the ability to adapt to market stress increases. The transition toward modular architectures allows for specialized execution layers, which directly address the bottlenecks that previously hindered the speed of price discovery.

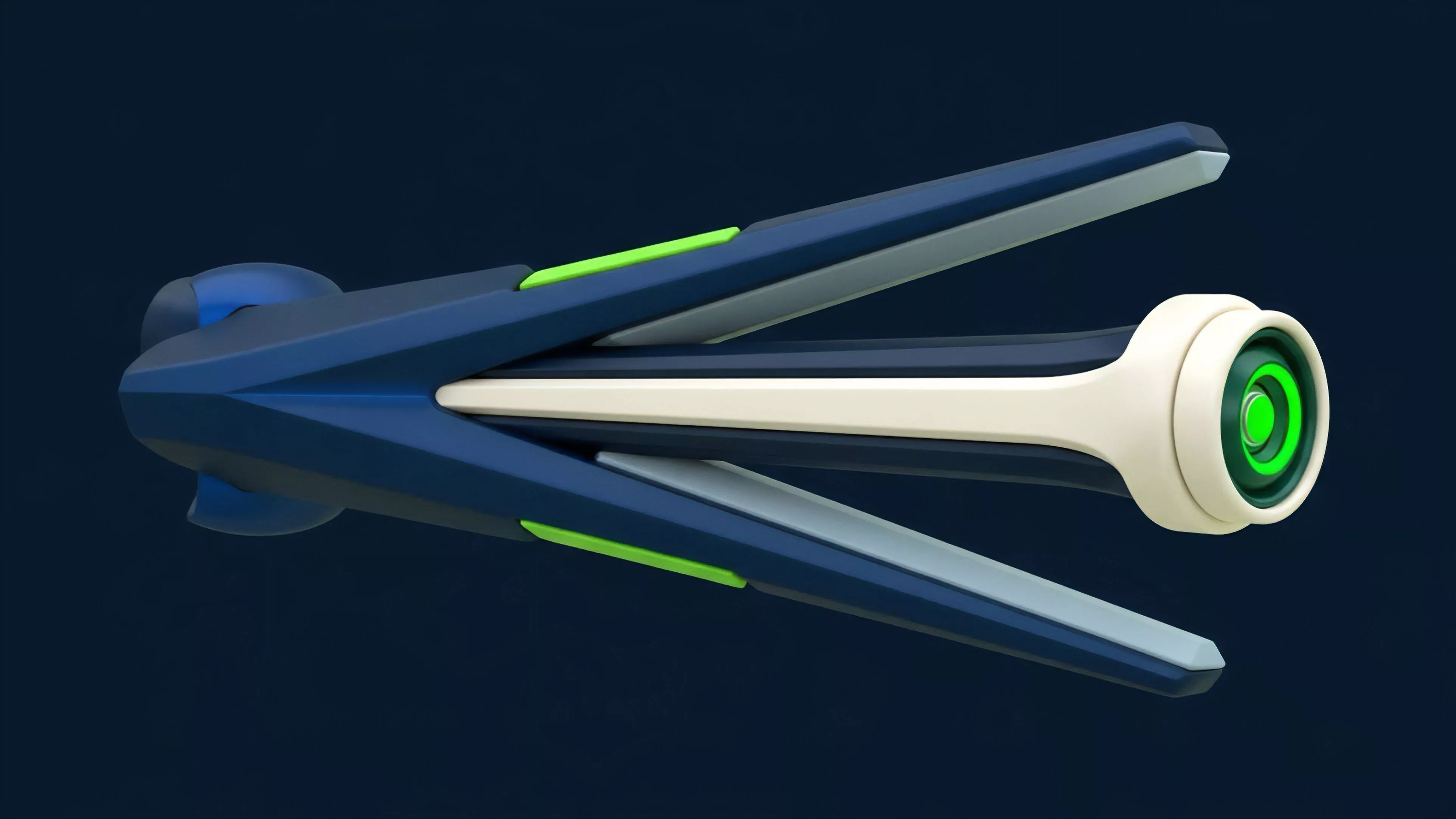

The industry is moving away from monolithic designs toward interconnected, high-performance systems.

Horizon

Future developments are likely to center on the integration of zero-knowledge proofs to enhance privacy without sacrificing the transparency required for market efficiency. This could allow institutional participants to enter the space while maintaining confidentiality, which would increase the volume and liquidity of decentralized venues. Furthermore, the rise of autonomous agents will likely accelerate the speed of arbitrage, pushing the market toward a near-instantaneous state of price alignment.

The future of market efficiency lies in the synergy between privacy-preserving computation and high-speed autonomous arbitrage agents.

The ultimate goal is to create a financial infrastructure where capital moves with minimal friction across global borders. As protocols continue to refine their margin engines and risk parameters, the reliance on centralized intermediaries will decrease, fostering a more resilient financial system. The path forward is not linear; it is a series of iterative improvements that slowly replace outdated legacy systems with transparent, mathematically verifiable alternatives.