Essence

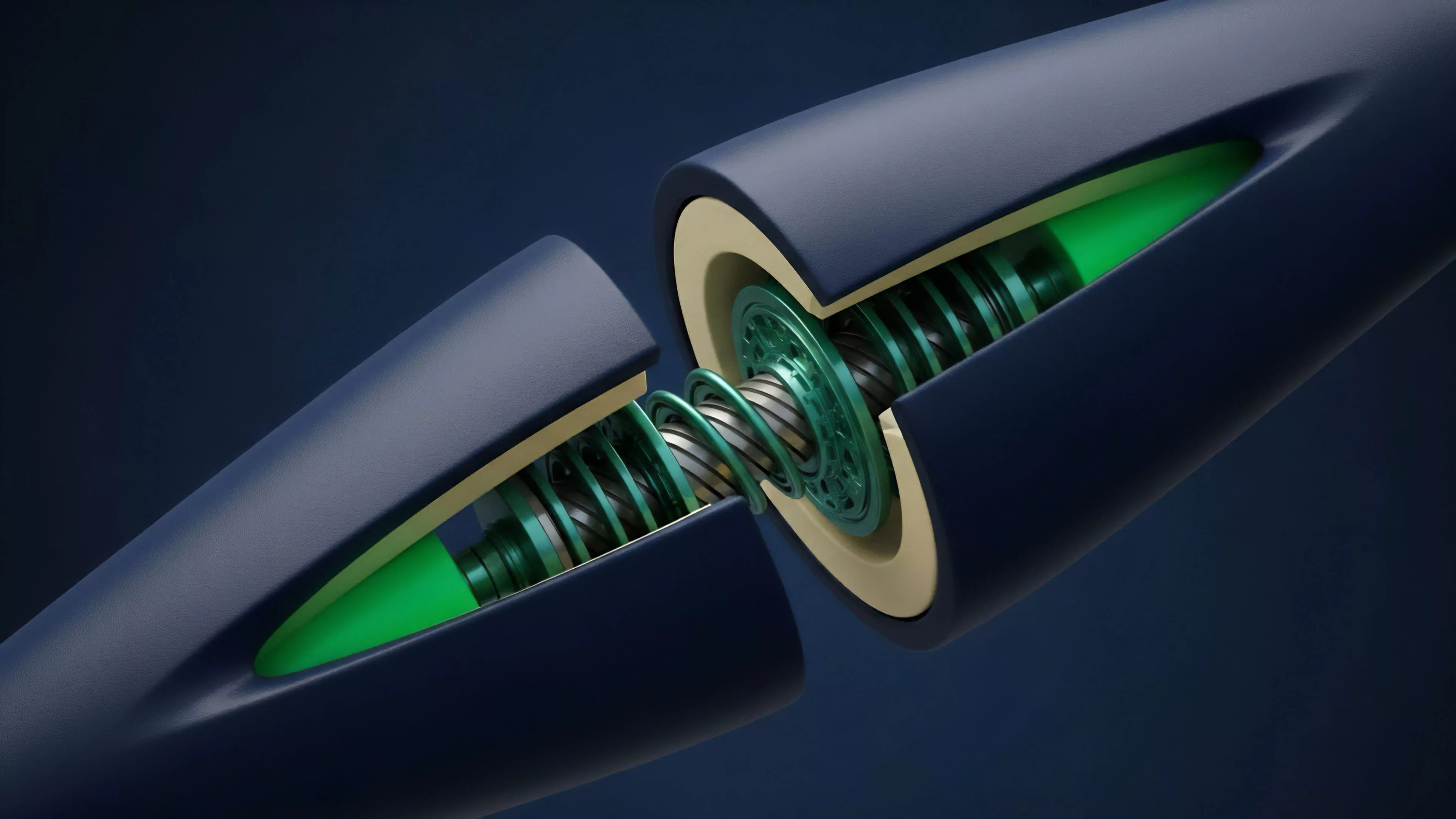

Cross-Chain Data Availability is the foundational mechanism that keeps state transitions on one decentralized ledger verifiable by participants on another. In modular blockchain systems, where the execution layer is decoupled from the settlement layer, it requires a robust, decentralized protocol that publishes and stores transaction data so that nodes can confirm validity without downloading the entire chain history.

Cross-Chain Data Availability serves as the verifiable ledger of record that allows distinct execution environments to prove the integrity of their state transitions to external settlement layers.

The systemic relevance of this concept resides in its ability to reduce the trust assumptions inherent in multi-chain environments. When an asset moves across a bridge or a rollup settles to a base layer, the security of that movement depends on whether the underlying data is accessible and immutable. Without guaranteed access to this data, participants cannot challenge fraudulent state transitions, which leaves the security model of decentralized finance exposed to censorship and to interference by malicious actors.

Origin

The genesis of Cross-Chain Data Availability lies in the scaling trilemma, specifically the conflict between decentralization, security, and throughput.

Early monolithic architectures required every node to process every transaction, creating a bottleneck that limited network capacity. Researchers identified that by separating data storage from transaction execution, networks could achieve higher throughput while maintaining security.

- Data Availability Sampling allows light clients to verify that data exists without downloading full blocks.

- Fraud Proofs provide a mechanism for participants to contest invalid state transitions if the data remains accessible.

- Validity Proofs use constructions such as zk-SNARKs to give cryptographic assurance that a state transition is correct, though they still depend on underlying data availability to prevent data withholding attacks.

This shift toward modularity mirrors the evolution of traditional financial clearinghouses, where the separation of trade execution, clearing, and settlement created specialized, high-performance infrastructures. The industry moved away from monolithic designs toward specialized layers, creating the necessity for a standardized way to ensure data remains persistent across these fragmented environments.

Theory

The theoretical framework of Cross-Chain Data Availability relies on adversarial game theory and cryptographic verification. At its core, the protocol must ensure that even if a majority of validators attempt to withhold data, the honest minority retains the ability to reconstruct the state or force a halt to invalid operations.

| Mechanism | Function | Risk |
| --- | --- | --- |
| Data Availability Sampling | Probabilistic verification | Network latency |
| Data Availability Committees | Trusted quorum validation | Centralization of authority |
| Proof of Custody | Cryptographic proof of storage | Collusion among nodes |

The mathematical rigor involves balancing the sampling rate against the network propagation delay. If the sampling rate is too low, the probability of missing a data withholding attack increases. If the propagation delay is too high, the system suffers from degraded performance.

This is the classic trade-off between liveness and safety, where the protocol designer must choose parameters that align with the specific security requirements of the connected networks.
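The effect of the sampling rate can be illustrated with a deliberately simplified model. The sketch below assumes each light-client sample is an independent, uniform draw over the encoded chunks (with replacement) and that an attacker withholds a fixed fraction of them. The function names and the model itself are illustrative assumptions, not the parameters of any specific protocol.

```python
import math


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that every one of `samples` independent uniform queries
    lands on an available chunk, so the withholding goes unnoticed."""
    if not 0.0 <= withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in [0, 1]")
    if samples < 0:
        raise ValueError("samples must be non-negative")
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target_miss: float) -> int:
    """Smallest sample count whose miss probability is at most `target_miss`."""
    if not 0.0 < withheld_fraction < 1.0:
        raise ValueError("withheld_fraction must be strictly between 0 and 1")
    if not 0.0 < target_miss < 1.0:
        raise ValueError("target_miss must be strictly between 0 and 1")
    # Solve (1 - f)^k <= target for the smallest integer k.
    return math.ceil(math.log(target_miss) / math.log(1.0 - withheld_fraction))


if __name__ == "__main__":
    for k in (5, 10, 20, 30):
        print(k, miss_probability(0.5, k))
    print(samples_needed(0.5, 1e-9))
```

Under these assumptions, if an attacker has to withhold at least half of the chunks to block reconstruction, `samples_needed(0.5, 1e-9)` returns 30. Each extra sample is another network request, which is where the propagation delay side of the trade-off enters.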

The integrity of cross-chain financial systems rests upon the probabilistic guarantee that transaction data is accessible to all relevant validation agents.

This domain also intersects with information theory: erasure coding adds redundancy to the data so that the original can be reconstructed even when a large share of the encoded pieces, or of the nodes holding them, is unavailable. The physics of these protocols is essentially an exercise in maintaining a consistent global state in an environment characterized by asynchronous message passing and potentially malicious participants.
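To make the reconstruction property concrete, here is a minimal sketch of Reed-Solomon style erasure coding using Lagrange interpolation over a prime field. The modulus `P`, the function names, and the choice to place data symbols at evaluation points 0..k-1 are assumptions made for illustration. Production systems use optimized codes and attach commitments to each share, neither of which is shown here.

```python
P = 2**31 - 1  # assumed field modulus (a Mersenne prime); symbols must be < P


def _lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    that passes through `points`, with arithmetic modulo P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+).
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n):
    """Extend k data symbols into n shares (x, y).
    The first k shares carry the data itself; the rest are parity."""
    k = len(data)
    if k == 0 or n < k:
        raise ValueError("need at least one symbol and n >= k")
    base = list(enumerate(data))  # points (0, d0), ..., (k-1, d_{k-1})
    parity = [(x, _lagrange_eval(base, x)) for x in range(k, n)]
    return base + parity


def decode(shares, k):
    """Recover the k data symbols from any k shares with distinct x values."""
    if len(shares) < k:
        raise ValueError("not enough shares to reconstruct")
    subset = shares[:k]
    return [_lagrange_eval(subset, x) for x in range(k)]


if __name__ == "__main__":
    data = [10, 20, 30, 40]
    shares = encode(data, 8)          # 2x extension: 4 data + 4 parity shares
    print(decode(shares[4:], 4))      # reconstruct from parity shares only
```

With a 2x extension as in the example, any half of the shares is enough to rebuild the data, so an attacker has to withhold more than half of them to prevent reconstruction.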

Approach

Current implementations of Cross-Chain Data Availability utilize specialized protocols designed to offload the burden from primary settlement layers. These approaches prioritize high-throughput data storage, often utilizing erasure coding to ensure redundancy.

- Rollup-Centric Design delegates the responsibility of data publication to a specific layer, which then provides a hash (or similar commitment) of the data to the settlement layer.

- External Data Availability Layers function as independent, decentralized networks optimized for data storage, providing proofs that data is accessible to any interested party.

- Bridge-Integrated Validation embeds availability checks directly into the cross-chain messaging protocol, requiring a cryptographic confirmation before assets are released on the destination chain.
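The "hash of the data" that these designs hand to the settlement layer is often a Merkle root, which lets anyone check that a given chunk belongs to the committed data. The following is a minimal sketch using SHA-256; the function names, the domain-separation prefixes, and the rule of duplicating the last node on odd levels are simplifying assumptions rather than any particular protocol's format. An inclusion proof shows that a chunk matches the commitment; it does not by itself show that every chunk is available.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaves(chunks):
    if not chunks:
        raise ValueError("at least one chunk is required")
    # Prefix 0x00 for leaves and 0x01 for inner nodes keeps the two distinct.
    return [_h(b"\x00" + c) for c in chunks]


def _parent_level(level):
    return [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(chunks) -> bytes:
    """Commitment that would be posted to the settlement layer."""
    level = _leaves(chunks)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # simplification: duplicate the last node
        level = _parent_level(level)
    return level[0]


def merkle_proof(chunks, index: int):
    """Sibling hashes from the leaf at `index` up to the root."""
    level = _leaves(chunks)
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        proof.append(level[index ^ 1])  # sibling sits next to the current node
        level = _parent_level(level)
        index //= 2
    return proof


def verify(root: bytes, chunk: bytes, index: int, proof) -> bool:
    """Recompute the root from one chunk and its proof; compare to the commitment."""
    node = _h(b"\x00" + chunk)
    for sibling in proof:
        if index % 2 == 0:
            node = _h(b"\x01" + node + sibling)
        else:
            node = _h(b"\x01" + sibling + node)
        index //= 2
    return node == root


if __name__ == "__main__":
    chunks = [b"tx0", b"tx1", b"tx2", b"tx3", b"tx4"]
    root = merkle_root(chunks)
    proof = merkle_proof(chunks, 4)
    print(verify(root, b"tx4", 4, proof))
```

A bridge that embeds availability checks could refuse to release assets until a check like `verify` passes for the data it depends on, although real designs combine this with sampling or committee attestations.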

The current market microstructure reflects a high degree of fragmentation. Liquidity providers must navigate varying security guarantees depending on which data availability solution a specific bridge or rollup employs. This introduces a hidden risk premium, because the cost of capital in a highly secure environment differs significantly from the cost in an environment that relies on a smaller, less robust committee of validators.

Evolution

The transition from simple bridge-based messaging to sophisticated, modular data availability layers marks a critical maturity phase for digital assets.

Early iterations relied on centralized relayers, which introduced significant counterparty risk. The market recognized that if the relayer controlled the data, they effectively controlled the asset. The industry moved toward decentralized light-client protocols, which reduced reliance on trusted intermediaries.

These advancements allow for trust-minimized interoperability, where the security of the cross-chain transfer is tied to the security of the underlying blockchain rather than the honesty of a bridge operator. This evolution is driven by the necessity for capital efficiency; as decentralized finance grows, the ability to move assets securely without locking them in high-risk bridges becomes a competitive advantage. Sometimes I wonder if our obsession with speed blinds us to the fragility we introduce by cutting corners on data verification.

The trajectory is clear: we are moving toward a standardized, protocol-level approach to availability that treats data as a public good rather than a private cost.

Horizon

The future of Cross-Chain Data Availability lies in the convergence of cryptographic proofs and decentralized storage incentives. We expect to see the emergence of unified standards that allow any blockchain to plug into a global data availability network, effectively commoditizing the storage and verification of transaction history.

Unified data availability protocols will standardize security guarantees across disparate networks, reducing the complexity of cross-chain risk management.

Future architectures will likely leverage advanced zero-knowledge proofs to minimize the amount of data that needs to be transmitted, further reducing the latency of cross-chain state updates. This will enable near-instantaneous settlement across heterogeneous chains, creating a truly global, unified liquidity pool. The ultimate goal is a system where the physical location of the data is irrelevant to the security of the transaction, allowing for seamless value transfer across the entire decentralized landscape.