Essence

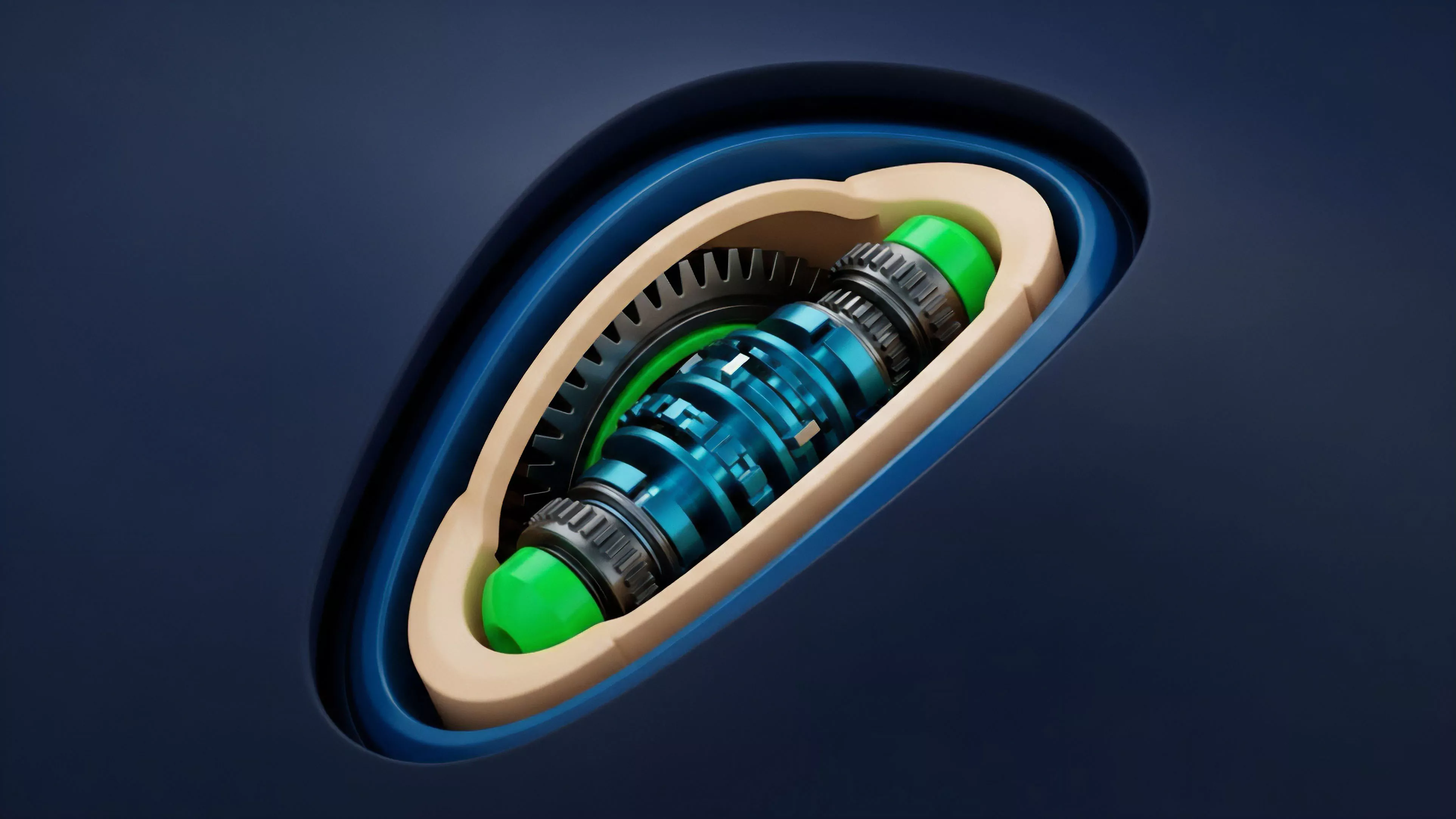

Continuous Integration Pipelines represent the automated orchestration of financial logic, risk parameters, and smart contract deployment within decentralized derivative protocols. These systems function as the circulatory mechanism for digital asset markets, ensuring that pricing models, collateral requirements, and settlement instructions remain synchronized with real-time volatility data.

Automated pipelines translate high-frequency market data into executable contract states, ensuring protocol resilience through continuous validation of financial variables.

The core utility lies in eliminating human latency. By removing manual intervention from the lifecycle of a crypto option, from premium calculation to margin adjustment, these pipelines establish a deterministic environment in which code enforces risk policy. This shift demands rigorous alignment between off-chain oracle updates and on-chain execution logic, because any divergence creates an immediate arbitrage opportunity: a participant who observes the true market price before the contract does can trade against its stale state.
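
A minimal sketch of the guard this alignment implies, assuming a pipeline that refuses to act when its off-chain reference price is stale or has drifted too far from the on-chain mark. The names `OracleUpdate` and `is_safe_to_execute`, and the 60-second and 0.5% thresholds, are hypothetical illustrations rather than any protocol's actual parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OracleUpdate:
    """Hypothetical off-chain price report."""
    price: float    # reference price from the oracle
    timestamp: int  # Unix seconds at which the price was observed


def is_safe_to_execute(oracle: OracleUpdate, onchain_mark: float, now: int,
                       max_age_s: int = 60, max_deviation: float = 0.005) -> bool:
    """Allow execution only if the oracle is fresh and agrees with the on-chain mark.

    max_age_s and max_deviation are illustrative assumptions, not recommended values.
    """
    if oracle.price <= 0:
        return False  # a non-positive reference price is treated as invalid data
    if now - oracle.timestamp > max_age_s:
        return False  # stale data: acting on it hands arbitrageurs a known mispricing
    deviation = abs(onchain_mark - oracle.price) / oracle.price
    return deviation <= max_deviation


update = OracleUpdate(price=100.0, timestamp=1_000)
print(is_safe_to_execute(update, onchain_mark=100.2, now=1_030))  # True: fresh, 0.2% apart
print(is_safe_to_execute(update, onchain_mark=101.0, now=1_030))  # False: 1% apart
```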

Origin

The architectural roots of these systems stem from traditional high-frequency trading infrastructure, adapted for the constraints of distributed ledgers.

Early iterations relied on manual execution scripts, but the shift toward decentralized finance necessitated a transition to trustless, automated workflows. This evolution mirrors the development of software engineering practices, where continuous integration was designed to catch defects early in the development cycle.

- Deterministic Execution: The requirement for predictable outcomes in a trustless environment drove the shift from human-in-the-loop systems to fully automated pipelines.

- Oracle Integration: The necessity to ingest external volatility data without introducing centralized points of failure forced the creation of robust, decentralized data feeds.

- Smart Contract Composability: The ability to link distinct financial instruments necessitated a unified pipeline structure to manage collateral across interconnected protocols.
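
The oracle-integration point can be illustrated with median aggregation, a common way to combine independent reports so that one faulty or manipulated feed cannot move the result on its own. This is a sketch under stated assumptions: the function name `aggregate_reports` and the three-report quorum are hypothetical, and real oracle networks layer further safeguards on top that are not modeled here.

```python
import statistics


def aggregate_reports(reports: list[float], min_reports: int = 3) -> float:
    """Combine independent oracle reports into one price by taking the median.

    min_reports is a hypothetical quorum: rounds with fewer reports are rejected
    rather than trusted, since a median of one or two feeds offers little protection.
    """
    if len(reports) < min_reports:
        raise ValueError(f"need at least {min_reports} reports, got {len(reports)}")
    return statistics.median(reports)


# One feed reporting 250.0 (faulty or manipulated) does not move the median.
print(aggregate_reports([100.0, 100.5, 99.8, 250.0, 100.1]))  # → 100.1
```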

These pipelines emerged in response to the fragility of manual margin calls and static pricing models in highly volatile crypto markets. The transition from monolithic, centralized clearinghouses to modular, code-based pipelines is arguably one of the most significant shifts in market infrastructure since the advent of electronic trading.

Theory

Financial stability within decentralized derivatives relies on the tight coupling of volatility surfaces and liquidation engines. The theoretical framework for these pipelines rests on the assumption that market participants will exploit any pricing discrepancy, which forces protocols to update their state faster than any human operator could react.

| Component | Function | Primary Risk |
|---|---|---|
| Pricing Oracle | Updates volatility surface | Data latency |
| Margin Engine | Validates collateral ratios | Under-collateralization |
| Settlement Layer | Executes contract expiry | Execution slippage |

Rigorous mathematical modeling of option Greeks allows these pipelines to adjust risk thresholds dynamically, helping to preserve protocol solvency under extreme stress.
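
As an illustration of Greek-driven risk thresholds, the sketch below computes Black-Scholes delta and vega for a European call and turns them into a first-order stress margin for a short call. The Black-Scholes model, the function names, and the 15% spot shock and 10-vol-point volatility shock are assumptions chosen for the example; the text does not specify which pricing model or shocks any protocol uses.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call_greeks(spot: float, strike: float, vol: float, t: float,
                   rate: float = 0.0) -> tuple[float, float]:
    """Black-Scholes delta and vega of a European call.

    vol and rate are annualized; t is time to expiry in years.
    Vega is per 1.00 (i.e. 100 vol points) change in volatility.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    delta = norm_cdf(d1)
    vega = spot * norm_pdf(d1) * sqrt_t
    return delta, vega


def stress_margin(spot: float, strike: float, vol: float, t: float,
                  spot_shock: float = 0.15, vol_shock: float = 0.10) -> float:
    """Hypothetical first-order margin for a short call: delta and vega losses under fixed shocks."""
    delta, vega = bs_call_greeks(spot, strike, vol, t)
    return abs(delta) * spot * spot_shock + vega * vol_shock


delta, vega = bs_call_greeks(spot=100.0, strike=100.0, vol=0.8, t=0.25)
print(round(delta, 3), round(vega, 2))  # 0.579 19.55
```

The linear approximation ignores gamma, so a more careful engine would revalue the option under each shocked scenario rather than summing Greek-weighted moves.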

The interaction between these components is non-linear. As market volatility spikes, the pipeline must simultaneously update the implied volatility parameters and increase the frequency of margin checks, so computational demand peaks exactly when timely execution matters most. If that load exceeds the throughput of the underlying blockchain, margin checks fall behind, undercollateralized positions remain open, and the eventual forced liquidations can cascade into systemic collapse.

This underscores the need for optimized code that minimizes gas consumption while maximizing execution precision.
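
The failure mode described above can be made concrete with a toy capacity check: shrink the margin-check interval as volatility rises, then ask whether one full sweep of accounts still fits in that interval given a per-block gas budget. Every function name, gas figure, and scaling rule here is a hypothetical assumption for illustration; real chains and protocols differ.

```python
import math


def check_interval_blocks(realized_vol: float, base_interval: int = 10,
                          calm_vol: float = 0.5, min_interval: int = 1) -> int:
    """Blocks allowed between full margin sweeps; shrinks as volatility rises above calm_vol."""
    if realized_vol <= calm_vol:
        return base_interval
    return max(min_interval, math.floor(base_interval * calm_vol / realized_vol))


def blocks_per_sweep(accounts: int, gas_per_check: int, block_gas_budget: int) -> int:
    """Blocks needed to check every account once when each block can spend
    only block_gas_budget gas on margin checks."""
    checks_per_block = block_gas_budget // gas_per_check
    if checks_per_block == 0:
        raise ValueError("a single check exceeds the per-block gas budget")
    return math.ceil(accounts / checks_per_block)


def pipeline_keeps_up(accounts: int, gas_per_check: int, block_gas_budget: int,
                      realized_vol: float) -> bool:
    """True if one full sweep fits inside the volatility-adjusted check interval."""
    return (blocks_per_sweep(accounts, gas_per_check, block_gas_budget)
            <= check_interval_blocks(realized_vol))


print(pipeline_keeps_up(1_000, 50_000, 5_000_000, realized_vol=0.4))  # True: 10 <= 10
print(pipeline_keeps_up(1_000, 50_000, 5_000_000, realized_vol=1.0))  # False: 10 > 5
print(pipeline_keeps_up(1_000, 25_000, 5_000_000, realized_vol=1.0))  # True: 5 <= 5
```

The last line shows why gas optimization matters in this toy model: halving the cost per check doubles checks per block and lets the sweep fit the tighter interval again.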

Approach

Current methodologies emphasize the modularity of Continuous Integration Pipelines to ensure upgradeability without disrupting existing positions. Architects now employ a layered approach, separating the data ingestion layer from the execution layer to isolate potential failures.
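
A minimal sketch of that separation, assuming an ingestion layer that validates raw feed data and an execution layer that only ever receives validated snapshots. The names `MarketSnapshot`, `ingest`, `run_cycle`, and `margin_step`, and the sanity bounds on price and volatility, are hypothetical; the point is that a malformed feed pauses the cycle instead of propagating into position logic.

```python
import math
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class MarketSnapshot:
    """Validated output of the ingestion layer (hypothetical schema)."""
    price: float
    implied_vol: float


def ingest(raw: dict) -> Optional[MarketSnapshot]:
    """Data ingestion layer: parse and sanity-check a raw feed message.

    Returns None on any problem so that failures stay isolated in this layer.
    """
    try:
        price = float(raw["price"])
        vol = float(raw["implied_vol"])
    except (KeyError, TypeError, ValueError):
        return None
    if not (math.isfinite(price) and math.isfinite(vol)):
        return None
    if price <= 0 or not 0 < vol < 10:  # illustrative sanity bounds
        return None
    return MarketSnapshot(price, vol)


def run_cycle(raw: dict, execute: Callable[[MarketSnapshot], str]) -> str:
    """One pipeline cycle: execution runs only on a validated snapshot."""
    snapshot = ingest(raw)
    if snapshot is None:
        return "paused"  # keep the last valid state; existing positions are untouched
    return execute(snapshot)


def margin_step(s: MarketSnapshot) -> str:
    """Stand-in for the execution layer (hypothetical)."""
    return f"margin updated at {s.price}"


print(run_cycle({"price": "100", "implied_vol": "0.8"}, margin_step))  # margin updated at 100.0
print(run_cycle({"price": "NaN", "implied_vol": "0.8"}, margin_step))  # paused
```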

- State Machine Verification: Protocols now utilize formal verification to ensure that every transition within the pipeline adheres to predefined financial invariants.

- Asynchronous Processing: Off-chain computation engines are increasingly used to calculate complex risk metrics before submitting final states to the blockchain for verification.

- Adaptive Margin Models: Pipelines now incorporate dynamic collateral requirements that adjust based on the historical volatility and liquidity profile of the underlying asset.
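
The adaptive-margin idea can be sketched as a margin rate that scales with realized (historical) volatility plus a liquidity add-on. The formula, the 99% one-sided normal quantile (z ≈ 2.33), the one-day horizon, the 5% floor, and all names are hypothetical assumptions, not a documented protocol model.

```python
import math
import statistics


def realized_vol(prices: list[float], periods_per_year: int = 365) -> float:
    """Annualized sample standard deviation of log returns (needs at least 3 prices)."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.stdev(returns) * math.sqrt(periods_per_year)


def initial_margin_rate(prices: list[float], horizon_days: float = 1.0, z: float = 2.33,
                        liquidity_addon: float = 0.0, floor: float = 0.05) -> float:
    """Hypothetical rule: z * vol scaled to the liquidation horizon, plus a liquidity add-on.

    liquidity_addon would be larger for thinly traded assets, whose positions take
    longer to unwind; floor keeps requirements from collapsing in calm periods.
    """
    vol = realized_vol(prices)
    return max(floor, z * vol * math.sqrt(horizon_days / 365) + liquidity_addon)


calm = [100.0, 100.1, 100.0, 100.1, 100.0]
wild = [100.0, 110.0, 95.0, 112.0, 90.0]
print(initial_margin_rate(calm))  # the 5% floor applies
print(initial_margin_rate(wild))  # far above the floor
```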

This approach shifts the focus from simple execution to systemic risk management. It requires constant adversarial testing of the pipeline to identify edge cases in which rapid price movements might bypass safety checks. The sophistication of these systems is such that they effectively act as autonomous market makers, balancing protocol health against the needs of liquidity providers.
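
One way to sketch such adversarial testing is a randomized (fuzz) search over price paths with occasional gap moves, checking the property that a liquidation never realizes bad debt. This is randomized testing, not the formal verification mentioned earlier, and the position model, 10x leverage, 5% maintenance ratio, and function names are all hypothetical. The toy model exposes exactly the edge case in question: a check that runs once per step cannot stop a single gap larger than the position's buffer.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LongPosition:
    """Hypothetical leveraged long: size units bought at entry, backed by collateral."""
    size: float
    entry: float
    collateral: float

    def equity(self, price: float) -> float:
        return self.collateral + self.size * (price - self.entry)


def liquidation_loss(pos: LongPosition, path: list[float], maintenance: float) -> float:
    """Check margin once per price step; return bad debt realized at liquidation (0.0 if none)."""
    for price in path:
        if pos.equity(price) < maintenance * pos.size * price:
            return max(0.0, -pos.equity(price))  # liquidated at this price
    return 0.0


def fuzz(seeds: int, jump_size: float, sigma: float = 0.01,
         jump_prob: float = 0.05, maintenance: float = 0.05) -> Optional[int]:
    """Return the first seed whose random path produces bad debt, or None if none does."""
    for seed in range(seeds):
        rng = random.Random(seed)  # seeded so any counterexample is reproducible
        price, path = 100.0, []
        for _ in range(50):
            shock = rng.gauss(0.0, sigma)
            if rng.random() < jump_prob:
                shock -= jump_size  # occasional downward gap move
            price *= max(0.01, 1.0 + shock)
            path.append(price)
        pos = LongPosition(size=10.0, entry=100.0, collateral=100.0)  # 10x leverage
        if liquidation_loss(pos, path, maintenance) > 0:
            return seed
    return None


print(fuzz(200, jump_size=0.20))  # prints the first seed that exposes bad debt
```

With these parameters the position is liquidated once the price falls below about 94.7 and is insolvent below 90, so any single-step drop of roughly 5% or more from just above the trigger slips past the check.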

Evolution

The transition from static, period-based updates to real-time, event-driven pipelines defines the current state of market infrastructure.
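
A common event-driven pattern in oracle designs is to publish a new price whenever it moves beyond a deviation threshold, with a heartbeat that forces an update after a maximum interval even in quiet markets. The sketch below replays a tick stream under that rule; the 0.5% threshold, one-hour heartbeat, and function names are illustrative assumptions.

```python
def should_publish(last_price: float, last_time: int, price: float, now: int,
                   deviation_threshold: float = 0.005, heartbeat_s: int = 3_600) -> bool:
    """Publish on a large enough move, or once the heartbeat interval has elapsed."""
    moved = abs(price - last_price) / last_price >= deviation_threshold
    expired = now - last_time >= heartbeat_s
    return moved or expired


def publish_times(ticks: list[tuple[int, float]], **params) -> list[int]:
    """Replay (unix_seconds, price) ticks and return the times an update would be pushed."""
    last_time, last_price = ticks[0]
    published = [last_time]  # the first observation is always published
    for now, price in ticks[1:]:
        if should_publish(last_price, last_time, price, now, **params):
            published.append(now)
            last_time, last_price = now, price
    return published


ticks = [(0, 100.0), (10, 100.1), (20, 100.6), (30, 100.7), (4_000, 100.7)]
print(publish_times(ticks))  # → [0, 20, 4000]
```

Compared with a fixed schedule, this spends updates (and gas) when prices are actually moving, which is when stale data is most dangerous.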

Early designs struggled with the inherent limitations of blockchain throughput, often resulting in stale pricing data and delayed liquidations. The industry moved toward Layer 2 scaling solutions and dedicated execution environments to resolve these bottlenecks. Sometimes I wonder whether we are merely building faster engines for an inevitable market crash, or whether this speed is the only thing preventing total systemic entropy.

Anyway, the shift toward decentralized sequencers and improved oracle technology has significantly reduced the latency gap, allowing these pipelines to operate with greater reliability. This evolution has also seen the introduction of multi-chain compatibility, where a single pipeline manages positions across multiple decentralized environments.

Horizon

The future of these systems involves the integration of predictive analytics and machine learning to anticipate volatility shifts before they manifest in price action. By incorporating real-time sentiment analysis and on-chain flow data, Continuous Integration Pipelines will evolve from reactive systems to proactive risk managers.
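
As a deliberately simple stand-in for the predictive models described above (it is a statistical filter, not machine learning), an exponentially weighted moving average of squared returns, in the style of RiskMetrics with λ = 0.94, reacts to a volatility shift within a single observation and could drive a pre-emptive margin increase. The multiplier rule and function names are hypothetical.

```python
import math


def ewma_variance(returns: list[float], lam: float = 0.94) -> float:
    """Per-period variance forecast: var_t = lam * var_{t-1} + (1 - lam) * r_t**2.

    Seeded with the first squared return; lam = 0.94 follows the RiskMetrics convention.
    """
    if not returns:
        raise ValueError("need at least one return")
    var = returns[0] ** 2
    for r in returns[1:]:
        var = lam * var + (1.0 - lam) * r * r
    return var


def margin_multiplier(returns: list[float], baseline_vol: float, lam: float = 0.94) -> float:
    """Hypothetical rule: scale margin by forecast vol relative to a baseline, never below 1."""
    forecast_vol = math.sqrt(ewma_variance(returns, lam))
    return max(1.0, forecast_vol / baseline_vol)


calm = [0.01, -0.01] * 10   # steady 1% moves per period
shocked = calm + [-0.05]    # one 5% move at the end
print(margin_multiplier(calm, baseline_vol=0.01))     # ≈ 1.0
print(margin_multiplier(shocked, baseline_vol=0.01))  # ≈ 1.56
```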

Future pipeline architectures will likely leverage zero-knowledge proofs to perform complex financial computations off-chain while proving their correctness on-chain.

This trajectory suggests a world where derivative protocols become increasingly self-governing, with pipelines capable of adjusting their own risk parameters in response to changing macro conditions. The ultimate goal is a truly autonomous financial infrastructure that operates with higher efficiency and lower systemic risk than traditional, human-managed institutions. The next phase of development will focus on cross-protocol interoperability, enabling seamless collateral movement and risk aggregation across the entire decentralized financial landscape.