Essence

Consensus Protocol Validation functions as the definitive mechanism for establishing state finality within decentralized ledger environments. It represents the algorithmic verification process wherein network participants confirm the legitimacy of proposed transactions and block data, ensuring the integrity of the underlying shared database. By enforcing rigorous cryptographic rules, this validation process prevents double-spending and unauthorized state transitions, acting as the bedrock for all derivative financial activity built upon the network.

Consensus Protocol Validation ensures transaction integrity and state finality through algorithmic verification of distributed network data.

The systemic relevance of this process extends to the reliability of automated financial engines. When smart contracts execute options or collateralized lending agreements, they rely entirely on the premise that the validated state of the blockchain is accurate and immutable. If the validation process falters, the entire stack of derivative products, from decentralized perpetuals to exotic options, faces immediate exposure to settlement risk and potentially catastrophic liquidations.

Origin

The genesis of Consensus Protocol Validation traces back to the technical challenge of achieving Byzantine Fault Tolerance in distributed systems without a central authority.

Early implementations, such as Proof of Work, established the foundational requirement for resource expenditure to secure the network, creating a physical constraint on the ability to propose and validate blocks. This design choice necessitated that the economic cost of subverting the validation process exceed the potential gains from doing so.
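The cost asymmetry behind Proof of Work can be sketched with a toy hash puzzle. Finding a valid nonce takes many hash attempts, while checking one takes a single hash. This is a simplified illustration, not Bitcoin's actual rules. It assumes a single SHA-256 over a hypothetical `header` byte string, with difficulty expressed as a number of required leading zero bits. Bitcoin itself double-hashes an 80-byte header against a compact target.

```python
import hashlib
from typing import Optional

def meets_target(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """True if SHA-256(header || nonce) starts with `difficulty_bits` zero bits (toy rule)."""
    digest = hashlib.sha256(header + nonce.to_bytes(8, "big")).digest()
    # Keep only the top `difficulty_bits` bits of the 256-bit digest; all must be zero.
    return int.from_bytes(digest, "big") >> (256 - difficulty_bits) == 0

def mine(header: bytes, difficulty_bits: int, max_nonce: int = 2**32) -> Optional[int]:
    """Brute-force nonce search; expected work is about 2**difficulty_bits hashes."""
    for nonce in range(max_nonce):
        if meets_target(header, nonce, difficulty_bits):
            return nonce
    return None
```

Calling `mine(b"hypothetical header", 16)` performs on the order of 65,000 hashes. Verifying the returned nonce with `meets_target` costs one. Each extra difficulty bit doubles the expected cost of producing a block, and therefore the cost of rewriting one.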

- Proof of Work: Established initial security through computational resource commitment.

- Proof of Stake: Shifted the validation burden to capital commitment and economic bonding.

- Byzantine Fault Tolerance: Addressed the problem of reaching agreement when some participants may be faulty or malicious; classical BFT protocols tolerate fewer than one-third faulty nodes.
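The classical Byzantine bound can be made concrete. A system of n validators tolerates at most f faulty ones when n ≥ 3f + 1, and a decision is commonly accepted once more than two-thirds of validators sign it. The following is a minimal sketch that assumes equal voting weight per validator; real Proof of Stake protocols weight votes by stake. The function name is illustrative.

```python
from typing import Tuple

def bft_thresholds(n: int) -> Tuple[int, int]:
    """For n equally weighted validators, return (max_faulty, quorum).

    max_faulty is the largest f with n >= 3f + 1; quorum is the smallest
    vote count strictly greater than two-thirds of n.
    """
    if n < 1:
        raise ValueError("need at least one validator")
    max_faulty = (n - 1) // 3
    quorum = (2 * n) // 3 + 1
    # Safety: any two quorums overlap in more than f validators, so they share an honest one.
    # Liveness: the honest validators alone are still enough to form a quorum.
    assert 2 * quorum - n > max_faulty and quorum <= n - max_faulty
    return max_faulty, quorum
```

For example, 4 validators tolerate 1 fault with a quorum of 3, and 100 validators tolerate 33 faults with a quorum of 67.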

As decentralized finance matured, the requirements for validation evolved beyond security to include throughput and finality speed. The transition from probabilistic finality, common in early systems, to deterministic finality has become a central focus of modern protocol design. This shift allows derivative markets to operate with higher leverage and lower latency, because participants can rely on fast, irreversible confirmation of trade settlement.
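Probabilistic finality can be quantified. Consider an attacker with a share q of hash power who is z blocks behind the honest chain. Under the model in the Bitcoin whitepaper, the attacker's chance of ever catching up shrinks exponentially in z but never reaches zero. The following sketch reproduces that calculation; the function name is our own.

```python
import math

def attacker_success_probability(q: float, z: int) -> float:
    """Chance that an attacker with hash-power share q ever overtakes an honest
    chain that is z blocks ahead (the calculation from the Bitcoin whitepaper)."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker eventually catches up with certainty
    lam = z * (q / p)  # expected attacker blocks while the honest chain mines z
    prob_fail = 0.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        # Attacker has mined k blocks and never closes the remaining (z - k) gap.
        prob_fail += poisson * (1.0 - (q / p) ** (z - k))
    return 1.0 - prob_fail
```

With q = 0.1, the probability is 1.0 at zero confirmations, about 0.2046 after one, and below 0.001 after five. Settlement confidence grows with waiting time, which is exactly the latency that deterministic finality removes.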

Theory

The architecture of Consensus Protocol Validation rests on the interaction between incentive design and cryptographic verification.

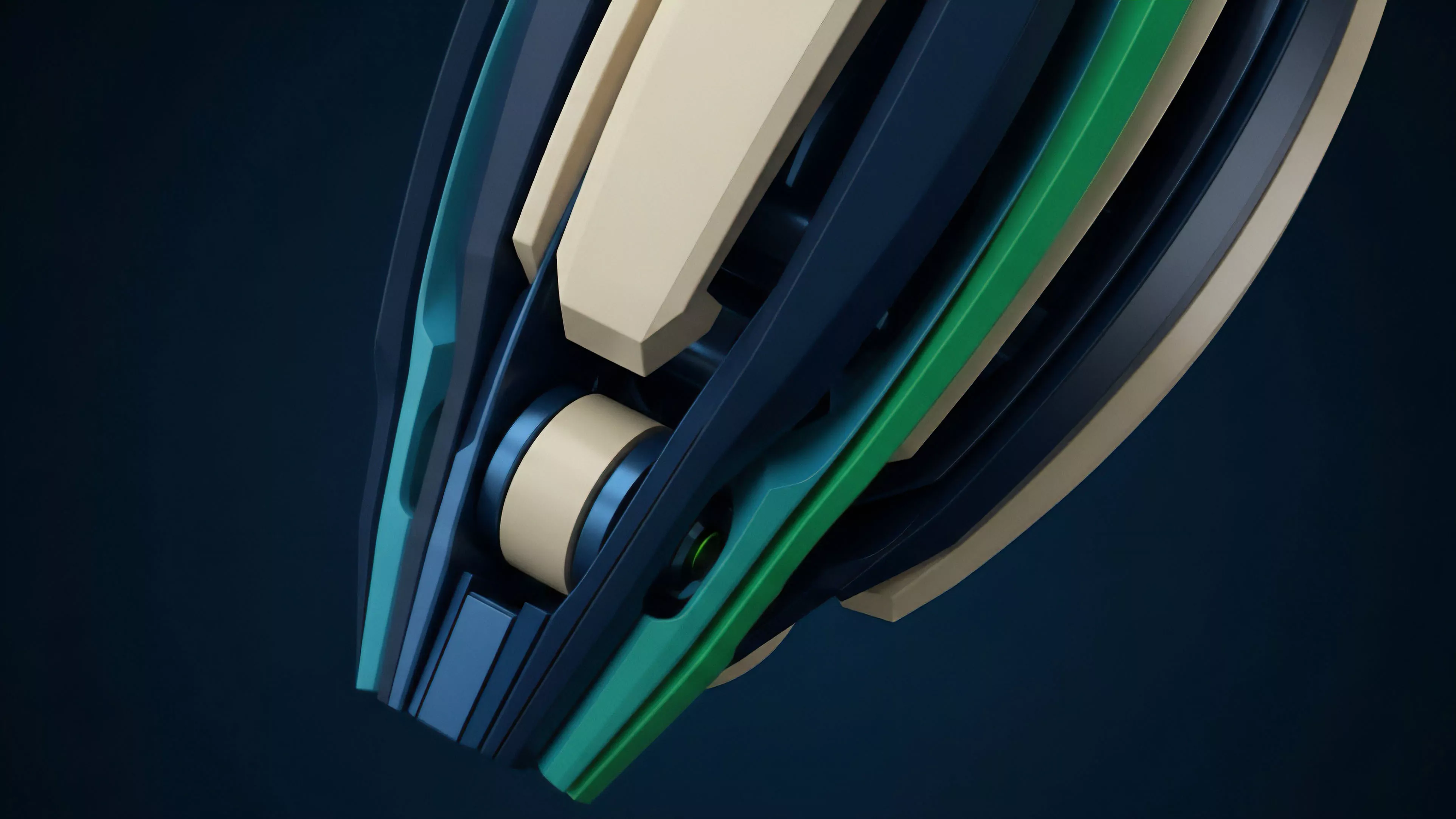

Validators operate under a strict game-theoretic framework in which protocol-compliant behavior is rewarded and malicious actions are penalized through slashing. Because their own capital is bonded to the performance of the validation process, participants have a direct financial incentive to prioritize network health.
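The incentive argument reduces to a comparison of expected payoffs. This minimal sketch assumes a hypothetical risk-neutral validator that forfeits `slash_fraction` of its bond when misbehavior is detected with probability `detection_prob`. All names and numbers are illustrative and not taken from any specific protocol.

```python
from dataclasses import dataclass

@dataclass
class ValidatorPosition:
    bonded_stake: float   # collateral locked with the protocol (hypothetical units)
    honest_reward: float  # rewards expected over the period for correct behavior

def attack_is_rational(pos: ValidatorPosition, attack_gain: float,
                       slash_fraction: float, detection_prob: float) -> bool:
    """Attack only if the expected attack payoff beats the honest reward."""
    expected_penalty = detection_prob * slash_fraction * pos.bonded_stake
    attack_payoff = attack_gain - expected_penalty
    return attack_payoff > pos.honest_reward

pos = ValidatorPosition(bonded_stake=1_000.0, honest_reward=5.0)
print(attack_is_rational(pos, attack_gain=100.0, slash_fraction=1.0, detection_prob=0.9))
print(attack_is_rational(pos, attack_gain=2_000.0, slash_fraction=1.0, detection_prob=0.9))
```

The first call is unprofitable, while the second is profitable. Bonded stake only deters attacks while it stays large relative to the value a validator can influence.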

| Mechanism | Primary Constraint | Finality Characteristic |
| --- | --- | --- |
| PoW | Hashrate | Probabilistic |
| PoS | Staked Capital | Deterministic (with a BFT-style finality mechanism) |

Quantitative models for protocol risk assessment focus on the cost of corruption. This is the capital an attacker would need to control enough validation power to subvert consensus: a hashrate majority in Proof of Work, or as little as one-third of the stake to stall finality in BFT-style Proof of Stake. In options pricing, this cost of corruption acts as a tail-risk variable. If the validation layer shows signs of centralization or reduced validator diversity, systemic risk increases, which warrants higher risk premiums for derivative contracts that depend on the underlying protocol state.
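The cost of corruption can be estimated with simple arithmetic. This sketch assumes an attacker who adds fresh stake, rather than bribing existing validators, until it holds a target share of the total. It ignores the price impact of buying the tokens, so the result is a lower bound. Function names and inputs are hypothetical.

```python
def stake_to_reach(total_stake: float, threshold: float) -> float:
    """Fresh stake s an attacker must add so that s / (total_stake + s) == threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must be strictly between 0 and 1")
    return threshold * total_stake / (1.0 - threshold)

def cost_of_corruption(total_stake: float, token_price: float, threshold: float) -> float:
    """Lower bound on attack capital (no slippage or price impact modeled)."""
    return stake_to_reach(total_stake, threshold) * token_price

def security_margin(total_stake: float, token_price: float,
                    threshold: float, value_at_risk: float) -> float:
    """Ratio above 1 means corruption costs more than the value an attacker could extract."""
    return cost_of_corruption(total_stake, token_price, threshold) / value_at_risk

# Hypothetical network: 1,000,000 tokens staked at a price of 2.0; one-third stalls finality.
print(cost_of_corruption(1_000_000, 2.0, 1 / 3))
```

A falling margin, whether from a drop in token price, concentrated stake, or growth in value secured, is one way to express the tail risk the paragraph describes.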

Validators maintain state integrity through bonded economic incentives that penalize malicious activity while rewarding accurate block production.

Consider the interplay between validator latency and option delta hedging. High-frequency delta adjustments require immediate, accurate state information. Any delay or instability in the validation process creates a mismatch between the theoretical price of an option and the actual market state, leading to slippage that disproportionately impacts automated market makers and systematic traders.
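The effect of stale state on hedging can be illustrated with a Black-Scholes call delta. It is used here purely as a stand-in pricing model, since the text does not commit to one. A hedger who computes delta from a stale validated price instead of the live market price holds the wrong hedge ratio. That difference, multiplied by position size, is the unhedged exposure. Function names and inputs are hypothetical.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call_delta(spot: float, strike: float, t_years: float, rate: float, vol: float) -> float:
    """Black-Scholes delta of a European call, N(d1)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t_years) / (vol * math.sqrt(t_years))
    return norm_cdf(d1)

def stale_hedge_error(stale_spot: float, live_spot: float, strike: float, t_years: float,
                      rate: float, vol: float, contracts: float) -> float:
    """Units of underlying by which a hedge built on stale_spot is off.

    For a short-call book hedged with long underlying, positive means under-hedged.
    """
    live = bs_call_delta(live_spot, strike, t_years, rate, vol)
    stale = bs_call_delta(stale_spot, strike, t_years, rate, vol)
    return (live - stale) * contracts

# Hypothetical: validated state lags a 3% move in an 80%-vol asset; 100 short calls.
print(stale_hedge_error(stale_spot=100.0, live_spot=103.0, strike=100.0,
                        t_years=0.25, rate=0.0, vol=0.8, contracts=100))
```

The error scales with both the size of the move during the lag and gamma, which is why short-dated, at-the-money positions are the most exposed.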

Approach

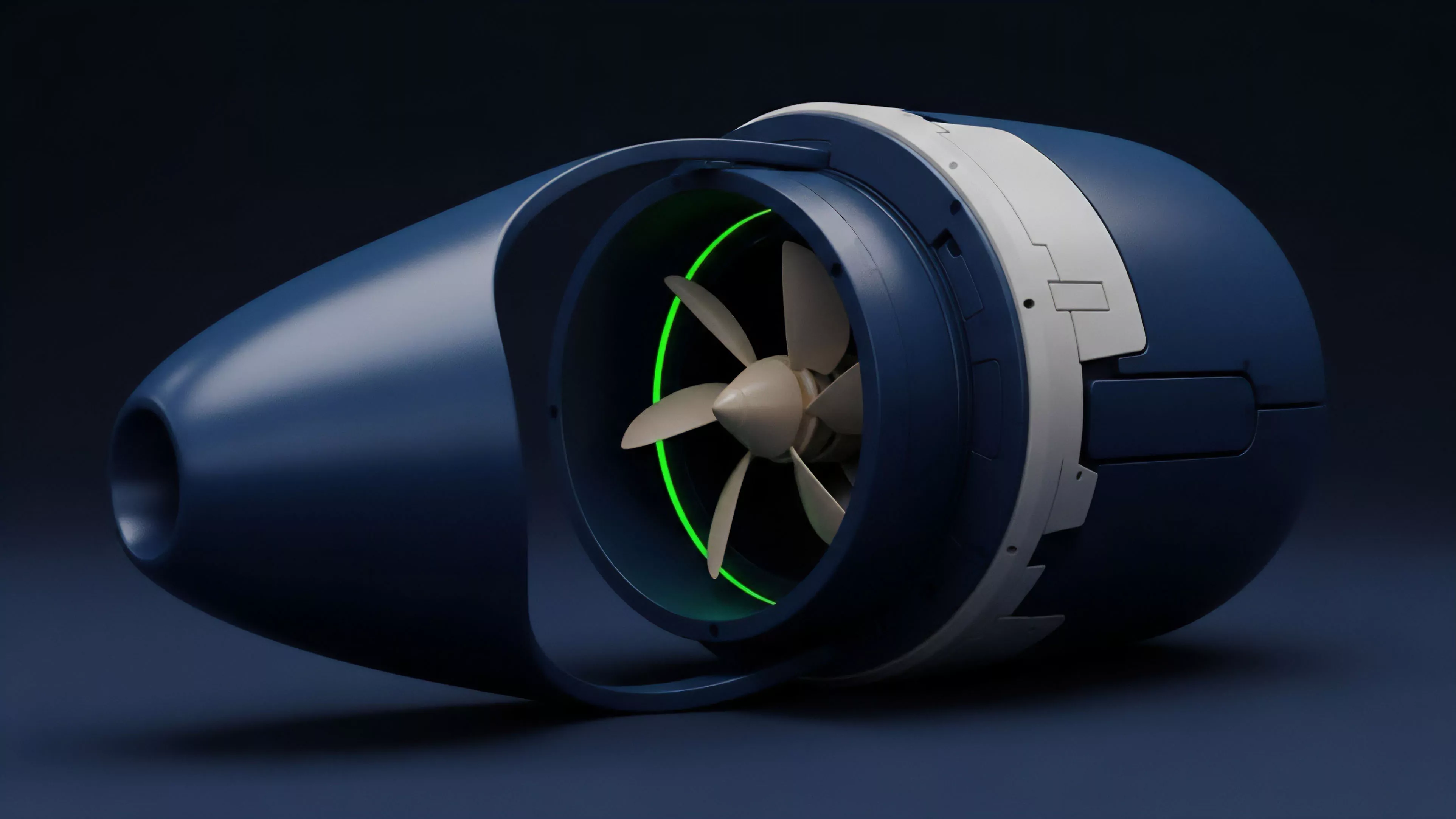

Current validation strategies utilize multi-layered consensus architectures to optimize for speed and security.

Modern protocols often decouple the production of blocks from the finalization of transactions, employing separate committees to handle verification tasks. This structural refinement allows for a higher volume of derivative trades to settle without compromising the security of the broader network.

- Validator Sets: Rotating groups of participants tasked with verifying specific transaction batches.

- Slashing Conditions: Automated penalties triggered by double-signing or extended downtime.

- Finality Gadgets: Specialized sub-protocols that provide cryptographic guarantees of block irreversibility.
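Two of these components can be sketched in a few lines. The first detects the double-signing that triggers slashing. The second checks whether a block has gathered a stake-weighted supermajority of more than two-thirds, which finality gadgets typically require. This is a single-round simplification using hypothetical types. Production gadgets such as Ethereum's Casper FFG finalize through a justify-then-finalize sequence of checkpoint votes and verify signatures; both steps are omitted here.

```python
from typing import Dict, Iterable, NamedTuple, Set, Tuple

class Vote(NamedTuple):
    validator: str
    height: int
    block_hash: str

def find_double_signers(votes: Iterable[Vote]) -> Set[str]:
    """Validators that signed two different blocks at the same height (a slashable offense)."""
    first_seen: Dict[Tuple[str, int], str] = {}
    offenders: Set[str] = set()
    for v in votes:
        prev = first_seen.setdefault((v.validator, v.height), v.block_hash)
        if prev != v.block_hash:
            offenders.add(v.validator)
    return offenders

def has_supermajority(votes: Iterable[Vote], height: int, block_hash: str,
                      stake: Dict[str, float]) -> bool:
    """True if validators holding more than 2/3 of total stake voted for block_hash at height."""
    signers = {v.validator for v in votes if v.height == height and v.block_hash == block_hash}
    signed_stake = sum(stake[s] for s in signers)
    return 3 * signed_stake > 2 * sum(stake.values())

stake = {"a": 10.0, "b": 10.0, "c": 10.0, "d": 10.0}
votes = [Vote("a", 1, "0xaa"), Vote("b", 1, "0xaa"), Vote("c", 1, "0xaa"),
         Vote("d", 1, "0xbb"), Vote("d", 1, "0xaa")]
print(find_double_signers(votes), has_supermajority(votes[:3], 1, "0xaa", stake))
```

Comparing `3 * signed` against `2 * total` keeps the strict two-thirds test free of division.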

The professional approach to risk management now treats validator health as a primary data input. Institutional market makers track on-chain metrics, such as validator participation rates, proposer timing, and consensus churn, to adjust their liquidity provision models in real time. This level of technical oversight is essential for managing systemic risk in a permissionless environment where code execution remains the final arbiter of financial outcomes.
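A monitoring input of this kind might feed quoting logic as follows. The policy is entirely hypothetical. It widens a market maker's quoted spread as the share of stake actively voting approaches the two-thirds level below which BFT-style finality stalls. The function names, the linear schedule, and the 4x cap are illustrative assumptions.

```python
def participation_rate(attesting_stake: float, total_stake: float) -> float:
    """Share of total stake that voted in the latest round."""
    return attesting_stake / total_stake

def adjusted_spread_bps(base_spread_bps: float, participation: float,
                        finality_threshold: float = 2 / 3, max_multiplier: float = 4.0) -> float:
    """Widen quotes linearly as participation falls toward the finality threshold."""
    if participation <= finality_threshold:
        return base_spread_bps * max_multiplier  # finality at risk: quote at the widest level
    # slack is 1.0 at full participation and approaches 0.0 at the threshold
    slack = (participation - finality_threshold) / (1.0 - finality_threshold)
    return base_spread_bps * (max_multiplier - (max_multiplier - 1.0) * slack)

rate = participation_rate(attesting_stake=8_500.0, total_stake=10_000.0)
print(rate, adjusted_spread_bps(base_spread_bps=10.0, participation=rate))
```

A real desk would also weigh proposer timing and churn. This sketch isolates the single metric with a well-defined protocol threshold.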

Evolution

The progression of Consensus Protocol Validation has moved from simple, monolithic designs toward highly modular and specialized frameworks.

Initially, validation was a single process performed by every network node. Today, many protocols use sharding and modular execution layers that partition validation responsibilities to improve scalability.
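Partitioning validation responsibilities usually starts with assigning validators to committees in a way every node can reproduce. This minimal sketch assumes a shared integer seed and uses Python's `random.Random` purely for illustration. Production protocols derive the seed from on-chain randomness and use purpose-built, consensus-specified shuffles rather than a general-purpose PRNG.

```python
import random
from typing import Dict, List, Sequence

def assign_committees(validators: Sequence[str], num_shards: int, seed: int) -> Dict[int, List[str]]:
    """Shuffle validators with a shared seed, then deal them round-robin to shards."""
    if num_shards < 1:
        raise ValueError("num_shards must be positive")
    rng = random.Random(seed)  # same seed on every node -> same assignment
    shuffled = list(validators)
    rng.shuffle(shuffled)
    return {shard: shuffled[shard::num_shards] for shard in range(num_shards)}

validators = [f"validator-{i}" for i in range(10)]
committees = assign_committees(validators, num_shards=3, seed=2024)
for shard, members in committees.items():
    print(shard, members)
```

Determinism is the key property: any node holding the seed and the validator list arrives at the same committees without extra communication.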

Modern validation frameworks prioritize modularity and deterministic finality to support high-throughput decentralized derivative markets.

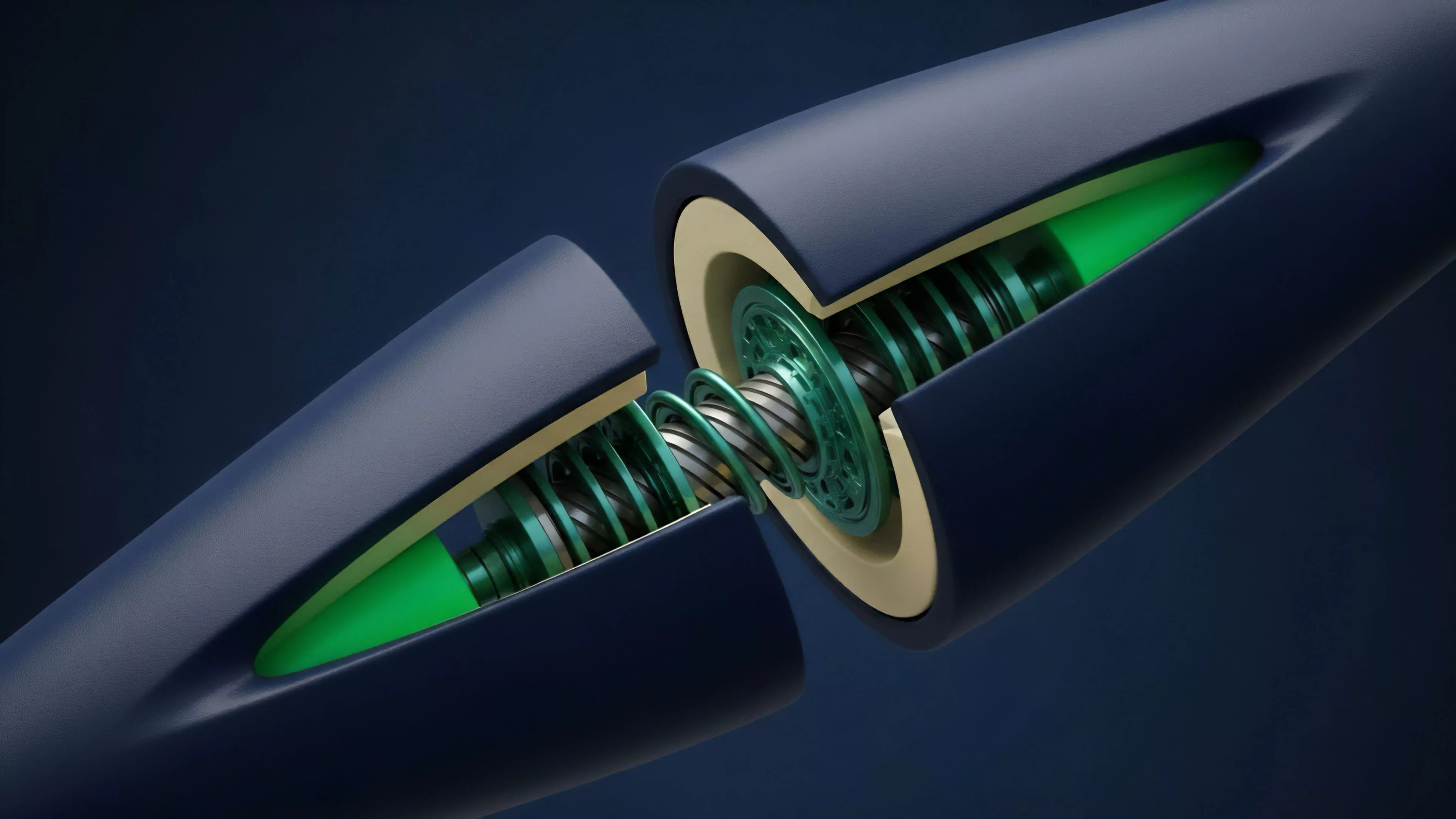

This evolution mirrors the history of financial exchanges, moving from localized, manual settlement to global, automated clearing systems. The transition toward zero-knowledge proofs for validation represents the current frontier, where the validity of state transitions is proven mathematically rather than through repetitive re-execution. This reduces the computational load on validators, allowing for more efficient resource allocation and deeper liquidity pools.

Horizon

Future developments in Consensus Protocol Validation will focus on reducing the latency of cross-chain settlement.

As liquidity becomes increasingly fragmented across multiple protocols, the ability to validate and bridge state information securely will define the next generation of derivative instruments. The emergence of shared security models, where multiple chains utilize the same validator set, suggests a move toward a more interconnected and robust validation landscape.
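One consequence of a shared validator set is that the same slashable stake backs every chain using it. An attacker who corrupts the set can therefore target all of those chains at once. Under that assumption, a back-of-the-envelope check compares the stake an attacker would need to control (and would forfeit) against the combined value at risk across chains. The function, the one-third threshold, and the figures are hypothetical.

```python
from typing import Dict

def shared_security_covers(total_slashable_value: float,
                           value_at_risk_by_chain: Dict[str, float],
                           corruption_threshold: float = 1 / 3) -> bool:
    """True if corrupting the shared set costs more than everything it could extract at once."""
    # Assumes the attacker must control (and stands to lose) this share of the existing set.
    attack_cost = corruption_threshold * total_slashable_value
    combined_risk = sum(value_at_risk_by_chain.values())
    return attack_cost > combined_risk

print(shared_security_covers(3_000_000.0, {"chain-a": 400_000.0, "chain-b": 500_000.0}))
print(shared_security_covers(3_000_000.0, {"chain-a": 400_000.0, "chain-b": 500_000.0,
                                           "chain-c": 300_000.0}))
```

Each chain checked in isolation can look well covered while the sum is not. This is the main caveat when pooling security.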

| Development Trend | Financial Impact |
| --- | --- |
| Zero Knowledge Verification | Lower Settlement Latency |
| Shared Security Layers | Reduced Cross-Chain Risk |
| Optimistic Finality | Higher Capital Efficiency |

The ultimate goal remains the creation of a trustless, global clearing house that operates without institutional intermediaries. By minimizing the human element in validation, the system moves toward a state where financial risk is strictly limited to the underlying code and market dynamics. Success in this domain will allow for the proliferation of complex derivative products that are currently confined to traditional, centralized exchanges.